GPT-5.5 Developer Giveaway: How OpenAI Is Escalating the AI Coding War with Anthropic

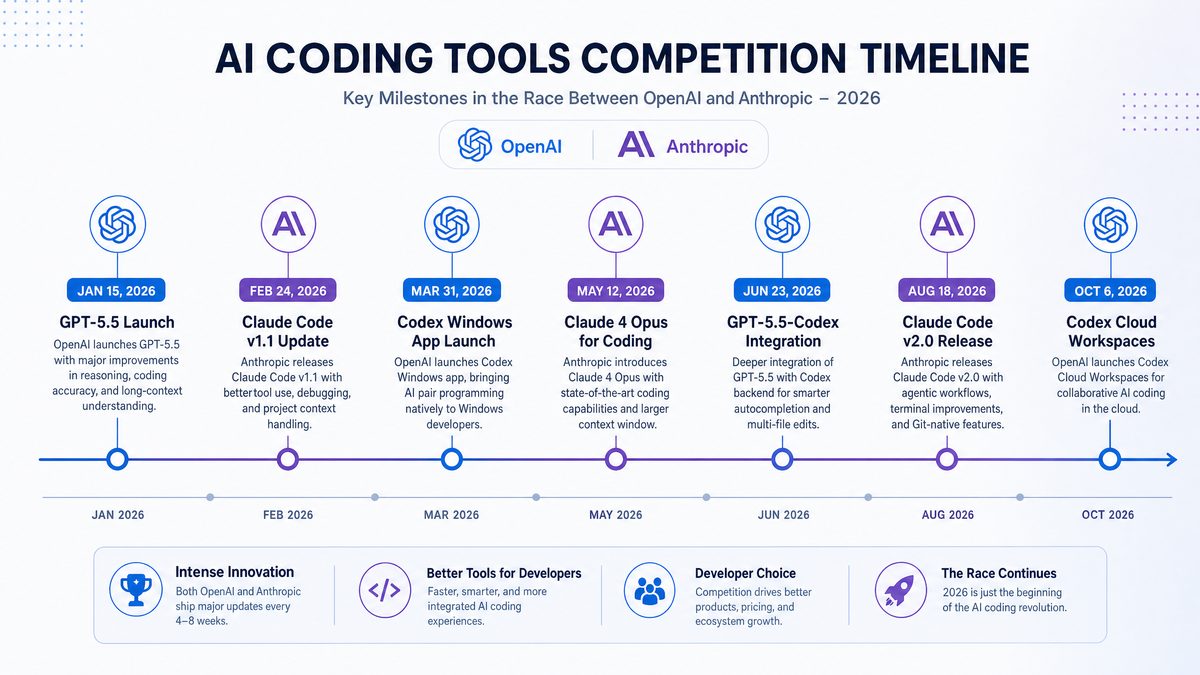

The AI coding landscape has entered a new era of rapid innovation and fierce competition, with OpenAI’s recent launch of GPT-5.5 marking a pivotal moment. To capitalize on its momentum and outpace rivals such as Anthropic, OpenAI has transformed what was initially a sold-out launch celebration into an unprecedented monthlong giveaway for developers. This giveaway significantly increases access to Codex, OpenAI’s specialized coding AI, by offering 10x rate limits to over 8,000 developers worldwide.

This article explores the GPT-5.5 developer giveaway: its technical advancements, its strategic implications for the AI coding ecosystem, and how it intensifies competition with Anthropic and other players. We also examine the direct benefits to developers and enterprises, alongside a comparative overview of OpenAI’s Codex and Anthropic’s offerings.

OpenAI’s GPT-5.5 and the Codex Giveaway: Technical Overview and Motivations

OpenAI’s GPT-5.5 represents a significant step beyond GPT-4 and earlier iterations, particularly in the domain of AI-assisted coding. While the core architecture builds upon transformer-based language models, GPT-5.5 integrates numerous enhancements aimed at developer productivity and code generation accuracy. These include improved understanding of complex programming languages, support for multistep reasoning in code synthesis, and better contextual awareness of legacy codebases.

Central to this launch is the Codex API, which powers AI-assisted code generation across multiple platforms and integrates with popular development tools such as Visual Studio Code, GitHub Copilot, and JetBrains IntelliJ. The giveaway campaign raises rate limits tenfold for thousands of developers, allowing them to submit significantly more requests to the Codex API without throttling or additional cost.

Technical Advancements in GPT-5.5

- Multilingual Programming Support: GPT-5.5 extends Codex’s fluency beyond mainstream languages like Python and JavaScript, adding robust support for Rust, Go, and emerging domain-specific languages.

- Context Window Expansion: The model now supports a context window of up to 64k tokens, enabling it to understand and generate code involving large projects and extensive documentation seamlessly.

- Enhanced Reasoning and Debugging: GPT-5.5 introduces reasoning modules optimized for debugging and refactoring tasks, allowing developers to request not just code generation but in-depth explanations and corrective suggestions.

- API Rate Limit Improvements: The giveaway increases API throughput by a factor of 10 for selected developers, effectively removing a major bottleneck in rapid iteration and experimentation cycles.

These advancements contribute to a more fluid developer experience and reduce the friction typically associated with AI-powered coding solutions. The ability to handle larger codebases and more complex queries amplifies GPT-5.5’s utility in real-world software development workflows.

To delve deeper, the multilingual programming support in GPT-5.5 is underpinned by comprehensive training on an expanded corpus of open-source repositories, technical documentation, and domain-specific codebases. For example, the model was exposed extensively to Rust crates and Go modules, enabling it to not only generate syntactically correct code but also follow idiomatic patterns and best practices within these ecosystems. This is crucial for developers working on performance-critical or systems-level code where language nuances matter significantly.

The expansion of the context window to 64k tokens matters most for monolithic legacy codebases and microservices architectures. Previous models struggled to maintain context across multiple files or lengthy documentation, often producing inconsistent or fragmented code suggestions. With the extended window, GPT-5.5 can process project source files, config files, and test suites in a single prompt, as long as they fit within the 64k-token limit, which supports more coherent and maintainable code generation. This is particularly beneficial for tasks like automated code refactoring, where understanding the interdependencies within the codebase is essential.
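As a rough illustration of what fitting a project into one prompt involves, the sketch below estimates token counts and greedily selects files until a 64k budget is used up. The 4-characters-per-token ratio is an assumption (real tokenizers vary by model and by language), and `estimate_tokens`, `pack_files`, and the reply reserve are hypothetical names and values chosen for this example, not part of any OpenAI API.

```python
from typing import Dict, List, Tuple

CONTEXT_WINDOW = 64_000   # token limit cited in the article
CHARS_PER_TOKEN = 4       # rough heuristic (assumption), not a real tokenizer


def estimate_tokens(text: str) -> int:
    """Approximate the token count from character length, rounded up."""
    return -(-len(text) // CHARS_PER_TOKEN)


def pack_files(
    files: Dict[str, str], reserve_for_reply: int = 4_000
) -> Tuple[List[str], List[str]]:
    """Greedily pick files, in the given order, that fit the prompt budget.

    Returns (included, skipped) file names.
    """
    budget = CONTEXT_WINDOW - reserve_for_reply
    included: List[str] = []
    skipped: List[str] = []
    used = 0
    for name, content in files.items():
        cost = estimate_tokens(content)
        if used + cost <= budget:
            included.append(name)
            used += cost
        else:
            skipped.append(name)
    return included, skipped
```

Files that land in `skipped` would need to be summarized or sent in a separate request. For accurate counts, the heuristic should be replaced with the tokenizer that matches the target model.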

The enhanced reasoning and debugging capabilities are powered by a novel hybrid architecture combining transformer layers with specialized logic modules trained on curated debugging datasets. These modules allow GPT-5.5 to simulate step-by-step code execution reasoning, identify potential runtime errors, suggest optimizations, and even recommend security patches. For instance, a developer working on a complex algorithm can ask the model to explain the logic behind a piece of code or propose fixes for segmentation faults or memory leaks, receiving actionable insights alongside generated code snippets.

API rate limit improvements not only benefit individual developers but also enterprises integrating AI coding assistance into their CI/CD pipelines. Higher throughput enables parallel processing of code review requests, unit test generation, and automated documentation updates without incurring delays or throttling penalties. This scalability is vital for organizations aiming to embed AI deeply within their software development lifecycle (SDLC).

Strategic Motivations Behind the Giveaway

OpenAI’s choice to offer an extended Codex giveaway is a strategic maneuver to solidify its dominant position in the AI coding market. By empowering thousands of developers with enhanced access, OpenAI aims to:

- Accelerate Adoption: Increasing hands-on usage fosters familiarity and dependence on OpenAI’s APIs, raising switching costs for developers and organizations.

- Gather Richer Feedback: A larger user base generating diverse code samples and requests provides invaluable data to further tune GPT-5.5 and future models.

- Preempt Competitors: With Anthropic and other AI companies aggressively developing coding assistants, the giveaway serves as both a marketing weapon and a community-building effort to maintain mindshare.

- Drive Ecosystem Growth: Enhanced rate limits encourage experimentation with novel coding applications, from automated testing to code review bots, expanding the overall AI tooling ecosystem.

This giveaway is not merely a promotional stunt but a calculated escalation in the AI coding war, leveraging OpenAI’s technological lead and developer goodwill to maintain a competitive advantage.

Accelerating adoption, the first motivation, is critical in the AI coding domain because developers tend to exhibit path dependency: once a tool or API becomes integral to their workflow, switching costs become prohibitive. By providing a generous 10x rate limit increase, OpenAI effectively lowers the barrier for deep experimentation and integration, making it less likely that developers will explore alternative solutions from competitors such as Anthropic. This hands-on experience also nurtures an ecosystem of plugins, extensions, and third-party tools that further entrench OpenAI’s presence.

Gathering richer feedback from a broad and diverse developer base is strategically invaluable. The variety of coding styles, project domains, and language ecosystems exposed during this giveaway enables OpenAI to collect nuanced telemetry on model performance, edge cases, and failure modes. This data feeds into iterative model retraining cycles and fine-tuning, improving code generation accuracy and safety. It also helps identify gaps in language or framework support, guiding future development priorities.

Preempting competitors is an increasingly urgent concern as companies like Anthropic invest heavily in constitutional AI frameworks and safety-first models. By launching this giveaway, OpenAI not only captures developer mindshare but also signals confidence in its technological superiority. The campaign also serves as a community-building exercise, fostering loyalty through engagement and collaboration, which competitors might struggle to replicate at scale.

Lastly, driving ecosystem growth through enhanced rate limits catalyzes innovation beyond straightforward code generation. Developers are empowered to build AI-powered code review bots, automated documentation generators, intelligent test case creators, and even domain-specific development assistants. These new applications enrich the entire software development toolchain and create network effects that amplify OpenAI’s market position.

Competitive Landscape: OpenAI Codex vs. Anthropic’s AI Coding Solutions

The competitive dynamics between OpenAI and Anthropic have intensified in the AI-assisted coding domain. While OpenAI’s Codex has been the de facto industry leader since its inception, Anthropic has made significant strides with Claude, its AI assistant optimized for code generation and reasoning.

Below, we present a detailed comparison of the two companies’ coding AI offerings, highlighting their technical capabilities, usage limits, integration ecosystems, and pricing models.

| Feature | OpenAI Codex (GPT-5.5) | Anthropic Claude Code Assistant |
|---|---|---|
| Core Model Architecture | Transformer-based, fine-tuned GPT-5.5 with enhanced coding modules | Anthropic’s Constitutional AI-enhanced large language model specialized for coding |
| Supported Programming Languages | Python, JavaScript, Java, C++, Rust, Go, TypeScript, SQL, and more | Python, JavaScript, Java, Ruby, TypeScript, Shell scripting, and additional languages |
| Context Window | Up to 64k tokens | Up to 32k tokens |
| API Rate Limits | Standard: 60 RPM, Giveaway: 600 RPM (10x increase) | Standard: 50 RPM, Premium tiers up to 300 RPM |
| Integration Ecosystem | GitHub Copilot, VS Code, JetBrains, Azure OpenAI Service | Anthropic CLI, VS Code extension (beta), Cloud APIs (limited) |
| Pricing Model | Pay-as-you-go, subscription tiers, and free trial with giveaway extension | Subscription-based with enterprise licensing, limited free tier |
| Debugging and Reasoning Support | Advanced built-in reasoning for code explanation and debugging | Moderate reasoning capabilities with focus on safe and interpretable outputs |
| Community and Developer Support | Extensive documentation, forums, GitHub repositories | Growing community, active research publications, limited open-source contributions |

As the table illustrates, OpenAI’s GPT-5.5 Codex currently leads in raw capability, rate limits, and ecosystem integration. However, Anthropic’s emphasis on safety, interpretability, and constitutional AI frameworks presents a unique value proposition that appeals to risk-conscious enterprises.

To unpack the core model architecture further, OpenAI’s GPT-5.5 builds upon a massively scaled transformer network with tens of billions of parameters, fine-tuned specifically with a focus on code synthesis, debugging, and multistep reasoning. The model leverages extensive supervised fine-tuning on curated code repositories, augmented with reinforcement learning from human feedback (RLHF) to align outputs with developer expectations. Additionally, GPT-5.5 includes plug-in modules that enhance reasoning capabilities by simulating symbolic execution patterns and static code analysis heuristics.

Anthropic’s Claude, while also transformer-based, incorporates a unique Constitutional AI framework designed to enforce ethical and safety constraints during generation. This involves an iterative self-critique loop where the model evaluates and revises its outputs against a set of predefined principles, reducing the likelihood of generating harmful or insecure code. Although this safety-first approach slightly limits raw throughput and context window size compared to GPT-5.5, it appeals to sectors where security and compliance are paramount.

Regarding supported languages, OpenAI’s broader language set includes emerging languages like Rust and Go, which are increasingly popular in systems programming and cloud-native development. Anthropic covers established languages and scripting environments but has yet to fully incorporate newer or domain-specific languages at scale. This gap presents opportunities for both companies to expand their language coverage based on user demand.

Context window size is critical for handling large-scale projects. GPT-5.5’s 64k token window enables it to process entire codebases or extended documentation in a single request, whereas Claude’s 32k token window, while substantial, may require chunking or context summarization techniques for very large inputs. Both providers are actively researching methods to extend effective context without incurring prohibitive computational costs.
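The chunking mentioned above can be sketched in a few lines. This is a minimal example, assuming a 4-characters-per-token estimate rather than a real tokenizer; `chunk_lines` is a hypothetical helper, and it splits only on line boundaries so that no statement is cut in half.

```python
from typing import List


def chunk_lines(
    source: str, max_tokens: int = 32_000, chars_per_token: int = 4
) -> List[str]:
    """Split source text on line boundaries into chunks under a token budget.

    A single line longer than the budget becomes its own oversized chunk.
    """
    max_chars = max_tokens * chars_per_token
    chunks: List[str] = []
    current: List[str] = []
    size = 0
    for line in source.splitlines(keepends=True):
        # Start a new chunk when adding this line would exceed the budget.
        if current and size + len(line) > max_chars:
            chunks.append("".join(current))
            current, size = [], 0
        current.append(line)
        size += len(line)
    if current:
        chunks.append("".join(current))
    return chunks
```

Joining the chunks reproduces the original text exactly, so each chunk can be sent separately and the responses stitched together afterwards. Splitting on function or class boundaries would preserve more context per chunk, at the cost of a language-aware parser.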

In terms of API rate limits and pricing, OpenAI’s giveaway offers a substantial boost to developer productivity, particularly for startups and educational institutions. Anthropic maintains a tiered pricing model favoring enterprise customers, with more conservative free quotas. These differences reflect divergent go-to-market strategies: OpenAI aims for broad accessibility and rapid ecosystem expansion, while Anthropic prioritizes high-assurance deployments.

Integration ecosystems also differ markedly. OpenAI’s partnerships with GitHub Copilot and IDE vendors provide seamless workflows directly within developers’ favorite tools, facilitating adoption. Anthropic’s ecosystem is still maturing, with CLI tools and beta VS Code extensions indicating progress but lacking the breadth of OpenAI’s integrations.

Implications of Increased Rate Limits for Developers

The 10x rate limit increase granted to over 8,000 developers during the giveaway unlocks new possibilities:

- Rapid Prototyping: Developers can iterate faster, submitting multiple variations of coding prompts without waiting for quota resets.

- Complex Workflows: High-volume batch processing enables automated code reviews, testing suites, and integration with CI/CD pipelines.

- Educational Use Cases: Coding bootcamps and training programs can provide hands-on AI coding assistance at scale.

- Research and Experimentation: Data scientists and AI researchers can explore novel coding assistance paradigms without usage constraints.

This democratization of AI coding access accelerates innovation cycles and fosters a larger community of developers who rely on AI to augment their workflows. It also pressures competitors to meet or exceed these generous limits to remain relevant.

For example, rapid prototyping enabled by increased rate limits allows developers working on complex algorithms to submit a series of prompts testing different implementation strategies or optimization heuristics within minutes, something that was previously throttled by lower quotas. This iterative process, combined with GPT-5.5’s enhanced reasoning, can lead to higher-quality solutions and faster debugging cycles.

In complex workflows, enterprises can now configure automated pipelines where Codex analyzes pull requests, generates suggested code improvements, and runs AI-generated unit tests all in parallel. This integration reduces manual review overhead and accelerates delivery timelines. Startups building AI-powered development tools can also leverage the increased capacity to serve more users concurrently without sacrificing responsiveness.
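One way to picture such a parallel pipeline is a bounded-concurrency fan-out: many review requests are in flight at once, but never more than a fixed number. The sketch below uses only Python's standard `asyncio`; `review_all` and `fake_review` are hypothetical names, and `fake_review` stands in for whatever API client a team actually uses.

```python
import asyncio
from typing import Awaitable, Callable, List


async def review_all(
    pull_requests: List[str],
    review: Callable[[str], Awaitable[str]],
    max_in_flight: int = 10,
) -> List[str]:
    """Run `review` over every pull request with at most `max_in_flight` concurrent calls."""
    semaphore = asyncio.Semaphore(max_in_flight)

    async def guarded(pr: str) -> str:
        async with semaphore:
            return await review(pr)

    # gather returns results in the same order as the inputs.
    return list(await asyncio.gather(*(guarded(pr) for pr in pull_requests)))


async def fake_review(pr: str) -> str:
    """Placeholder for a real API call (assumption for this example)."""
    await asyncio.sleep(0.01)
    return f"reviewed {pr}"
```

Calling `asyncio.run(review_all(["PR-1", "PR-2", "PR-3"], fake_review, max_in_flight=2))` returns the three results in input order. The `max_in_flight` value would be tuned to stay under whatever per-minute limit the account has.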

Educational institutions benefit by providing students with near-real-time AI assistance during coding exercises. Large classrooms can simultaneously query the Codex API without encountering rate limit errors, enabling personalized learning experiences and on-demand debugging help. This scalability supports diverse curricula from introductory programming to advanced software engineering topics.

In research settings, unrestricted access opens doors to exploring new paradigms such as interactive code synthesis, multimodal programming (combining code with diagrams or natural language), and AI-assisted pair programming. Researchers can collect vast datasets on model interactions, enabling breakthroughs in understanding AI-human collaboration dynamics.

Real-World Use Cases and Developer Experiences with GPT-5.5 Codex Giveaway

Since the giveaway’s rollout, developers across diverse sectors have reported transformative impacts on their coding productivity and project scope. Here we analyze several prominent use cases and the technical nuances involved.

Enterprise Software Development

Large-scale enterprises with sprawling codebases benefit from GPT-5.5’s expanded context window and debugging capabilities. Teams integrate Codex-powered tools to automate routine code generation tasks, generate comprehensive unit tests, and even automate code refactoring suggestions. The increased API throughput allows multiple teams to work concurrently without hitting rate limits, streamlining development cycles.

For example, a Fortune 500 financial services company integrated GPT-5.5 Codex into its internal development platform. The Codex-powered assistant automatically generates boilerplate code for microservices, helps enforce compliance with internal coding standards, and assists in migrating legacy code to cloud-native architectures. With the 10x rate limit increase, the company scaled usage across 15 development teams, reducing development time by 30% and cutting defect rates in production by 15% due to improved code quality and automated reviews.

Open Source Contributions

Open source maintainers utilize the giveaway to accelerate patch contributions and resolve complex bugs. The AI’s ability to understand diverse coding styles and legacy constructs reduces onboarding time for new contributors. Combined with GitHub Copilot integration, GPT-5.5 has become a valuable collaborator in these projects.

Consider the case of a popular open-source database project that used GPT-5.5 Codex to triage and resolve issues reported by the community. Contributors leveraged the AI to generate test cases for new features, refactor inefficient query algorithms, and document code changes. The AI’s contextual understanding of prior code helped maintain consistency across releases, enhancing project stability. Moreover, the giveaway’s increased rate limits allowed maintainers to automate batch code reviews, freeing up human reviewers to focus on architectural decisions.

Educational Platforms and Coding Bootcamps

Educational institutions leverage the giveaway to provide students with real-time AI coding assistance, personalized feedback, and debugging hints. This enhances learning outcomes, especially for novice programmers. The scalable API access supports large classes simultaneously without degradation in responsiveness.

For instance, a prominent coding bootcamp integrated GPT-5.5 Codex into its online curriculum, enabling students to receive AI-generated hints and code explanations while working on programming assignments. The AI’s ability to provide step-by-step reasoning for complex problems helped students grasp difficult concepts more quickly. The giveaway’s extended rate limits enabled the bootcamp to support over 500 concurrent students without interruptions, a critical factor during intensive cohort sessions.

Startups and Indie Developers

For startups and solo developers, the giveaway’s extended rate limits remove cost and quota concerns, enabling rapid experimentation. Whether building minimum viable products or exploring AI-powered developer tools, access to GPT-5.5 Codex accelerates time-to-market and enables innovation that was previously cost-prohibitive.

A startup focusing on AI-assisted API development utilized GPT-5.5 to generate SDKs and documentation for multiple programming languages automatically. The increased throughput allowed the team to deliver multi-language support within weeks instead of months. Additionally, the startup integrated Codex into their product’s code generation features, offering customers AI-powered code snippets tailored to their specific use cases, gaining a competitive edge in a crowded market.

Challenges and Considerations

Despite its advantages, developers must navigate certain challenges:

- API Cost Management: Post-giveaway, usage costs may rise sharply, necessitating careful budgeting and optimization of API calls.

- Code Quality Assurance: While GPT-5.5 improves accuracy, generated code still requires human review to ensure security, performance, and compliance.

- Data Privacy: Enterprises must consider the implications of sending proprietary code to cloud-hosted AI services.

- Model Bias and Safety: Developers should be aware of potential biases in AI suggestions and implement guardrails in sensitive applications.

Understanding these factors helps developers maximize the benefits of GPT-5.5 while mitigating risks.

Regarding API cost management, organizations are advised to implement usage monitoring and rate limiting at the application level, prioritize caching of frequent queries, and batch API calls where feasible to optimize spending. Leveraging usage analytics can identify high-cost workflows and inform cost-saving strategies.
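Two of these measures, application-level rate limiting and caching of repeated queries, fit in a short sketch. The class names and the `send` callable are hypothetical; `send` represents any function that forwards a prompt to an API and returns the completion text.

```python
import hashlib
import time
from collections import deque
from typing import Callable, Deque, Dict


class SlidingWindowLimiter:
    """Allow at most `max_calls` within any `period` seconds."""

    def __init__(
        self,
        max_calls: int,
        period: float = 60.0,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.max_calls = max_calls
        self.period = period
        self.clock = clock
        self.calls: Deque[float] = deque()

    def try_acquire(self) -> bool:
        now = self.clock()
        # Drop timestamps that have left the window.
        while self.calls and now - self.calls[0] >= self.period:
            self.calls.popleft()
        if len(self.calls) < self.max_calls:
            self.calls.append(now)
            return True
        return False


class CachedClient:
    """Serve repeated prompts from memory; only cache misses count against the limit."""

    def __init__(self, send: Callable[[str], str], limiter: SlidingWindowLimiter) -> None:
        self.send = send
        self.limiter = limiter
        self.cache: Dict[str, str] = {}

    def complete(self, prompt: str) -> str:
        key = hashlib.sha256(prompt.encode("utf-8")).hexdigest()
        if key in self.cache:
            return self.cache[key]
        if not self.limiter.try_acquire():
            raise RuntimeError("local rate limit reached; retry later")
        self.cache[key] = self.send(prompt)
        return self.cache[key]
```

An exact-match cache only helps when identical prompts recur, for example when the same file is re-linted on every commit. The in-memory dictionary is unbounded here, so a production version would add eviction.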

For code quality assurance, integrating AI-generated code with existing static analysis, automated testing, and peer review processes is essential. GPT-5.5’s enhanced reasoning support can assist in generating test cases and documentation, but human oversight remains paramount to avoid introducing vulnerabilities or performance regressions.
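As a small example of putting a static check in front of human review, the sketch below parses a generated Python snippet with the standard `ast` module and reports syntax errors or direct calls to a few risky built-ins. The `RISKY_CALLS` set and the function name are illustrative choices; this complements a real linter or security scanner and does not replace one.

```python
import ast
from typing import List

# Illustrative selection, not an exhaustive list.
RISKY_CALLS = {"eval", "exec", "compile", "__import__"}


def precheck_generated_code(source: str) -> List[str]:
    """Return findings for a generated Python snippet; an empty list means none."""
    try:
        tree = ast.parse(source)
    except SyntaxError as err:
        return [f"syntax error on line {err.lineno}: {err.msg}"]

    findings: List[str] = []
    for node in ast.walk(tree):
        # Flags direct calls such as eval(...); attribute calls are not covered.
        if isinstance(node, ast.Call) and isinstance(node.func, ast.Name):
            if node.func.id in RISKY_CALLS:
                findings.append(f"line {node.lineno}: call to {node.func.id}()")
    return findings
```

A snippet that returns an empty list has only passed this narrow check. It still goes through the usual tests and peer review.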

Data privacy concerns prompt some enterprises to adopt on-premises or hybrid deployment models where possible, or use differential privacy techniques and data anonymization before sending code to cloud APIs. OpenAI has also announced efforts towards offering private endpoint options and stricter data handling agreements to address these issues.
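A simple form of anonymization is redacting obvious secrets before code leaves the organization. The patterns below are illustrative assumptions that catch only quoted values assigned to secret-looking names, plus email addresses. A maintained secret scanner would cover far more cases.

```python
import re

# Illustrative patterns only (assumption); real scanners use much larger rule sets.
PATTERNS = [
    (
        # name = "value" or name: 'value' where the name looks like a credential
        re.compile(
            r"(\w*(?:api[_-]?key|secret|password|token)\w*)(\s*[:=]\s*)(['\"])[^'\"]*\3",
            re.IGNORECASE,
        ),
        r"\1\2\3<REDACTED>\3",
    ),
    (re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"), "<EMAIL>"),
]


def redact(source: str) -> str:
    """Replace secret-looking string literals and email addresses with placeholders."""
    for pattern, replacement in PATTERNS:
        source = pattern.sub(replacement, source)
    return source
```

For example, `redact('API_KEY = "sk-123"')` yields `API_KEY = "<REDACTED>"`. The variable name is kept so that the model still sees the code's structure.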

Model bias and safety are ongoing challenges. Developers should implement guardrails such as prompt filtering and output validation, and incorporate ethical considerations into AI-assisted code generation workflows. OpenAI and Anthropic continue to invest in bias mitigation research and constitutional AI techniques to improve model trustworthiness.

Future Outlook: The AI Coding War and Its Broader Industry Impact

The GPT-5.5 developer giveaway signals an accelerated arms race in AI-assisted coding technologies. Both OpenAI and Anthropic, among others, are investing heavily in pushing the boundaries of language model capabilities, safety, and integration depth. This competition will shape the future of software development across multiple dimensions.

Market and Ecosystem Evolution

As AI coding assistants become more powerful and accessible, we anticipate:

- Widespread Adoption: Integration into standard development workflows will become the norm rather than an exception.

- Specialized AI Models: Models fine-tuned for domain-specific coding, such as embedded systems or data engineering, will emerge.

- New Developer Roles: The role of developers will evolve towards AI oversight, prompt engineering, and model training collaboration.

- Increased Vendor Competition: More entrants will join the AI coding space, including cloud providers and open-source initiatives, driving innovation and pricing pressure.

The adoption of AI coding assistants is expected to transform developer productivity metrics dramatically. Studies predict up to a 50% reduction in routine coding tasks, allowing developers to focus on higher-level design and innovation. Specialized models trained on industry-specific languages and standards (for example, automotive embedded C code or financial modeling scripts) will address niche requirements with greater precision.

New developer roles such as prompt engineers, who craft effective inputs to guide AI outputs, and AI trainers, who curate datasets and provide feedback loops, will become mainstream. Organizations may restructure teams to leverage AI-human collaboration effectively, balancing automation with creative problem-solving.

Competition will intensify, with major cloud providers like AWS, Google Cloud, and Microsoft Azure launching proprietary AI coding tools, while open-source communities accelerate development of accessible alternatives. This will foster innovation but also complicate vendor selection strategies.

Technical Trajectories

Future versions beyond GPT-5.5 are expected to deliver:

- Deeper Contextual Awareness: Models will better understand entire project architectures, dependencies, and deployment considerations.

- Improved Explainability: Enhanced transparency in AI-generated code decisions to facilitate debugging and compliance.

- On-device AI Coding: Reduced latency and privacy risks through edge deployments and offline capabilities.

- Cross-modal AI Assistants: Integration of voice, visual, and code modalities for richer developer interactions.

Deeper contextual awareness may involve integration with software dependency graphs, version control histories, and runtime environment metadata. This will enable AI assistants to make contextually informed suggestions, such as updating dependent modules or flagging deprecated APIs automatically.
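To make the dependency-graph idea concrete, the sketch below computes which modules are affected when one module changes, using a breadth-first walk over the inverted graph. The graph format (module name mapped to the modules it depends on) and the function name are assumptions for this example. How a future assistant would obtain or use such a graph is not specified in the source.

```python
from collections import deque
from typing import Dict, List, Set


def affected_modules(depends_on: Dict[str, List[str]], changed: str) -> Set[str]:
    """Return every module that directly or transitively depends on `changed`."""
    # Invert the graph: module -> modules that depend on it.
    dependents: Dict[str, List[str]] = {}
    for module, deps in depends_on.items():
        for dep in deps:
            dependents.setdefault(dep, []).append(module)

    seen: Set[str] = {changed}
    queue = deque([changed])
    while queue:
        current = queue.popleft()
        for module in dependents.get(current, []):
            if module not in seen:
                seen.add(module)
                queue.append(module)
    # The changed module itself is not reported as affected.
    return seen - {changed}
```

With a graph where `api` depends on `auth` and `db`, `auth` depends on `db`, and `cli` depends on `api`, a change to `db` reports `api`, `auth`, and `cli` as candidates for review or regeneration.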

Improved explainability will tackle the “black box” nature of large language models by providing human-readable rationales for code suggestions and tracing decision paths. This is particularly important for regulated industries requiring auditability.

On-device AI coding solutions aim to offload inference from cloud to local hardware, enhancing privacy and reducing latency. Advances in model compression and efficient architectures will make this feasible for desktops and developer laptops.

Cross-modal assistants will combine code generation with voice commands, graphical UI design, and documentation synthesis, fostering a more natural and interactive development experience. For example, a developer might describe desired functionality verbally while the assistant simultaneously generates code and mockups.

Strategic Recommendations for Developers and Organizations

To thrive in this rapidly evolving environment, developers and organizations should:

- Engage early with AI coding tools like GPT-5.5 Codex to identify productivity gains and integration opportunities.

- Invest in training developers on prompt engineering and AI-assisted debugging to maximize model benefits.

- Implement robust governance policies to address security, privacy, and compliance when using AI-generated code.

- Monitor competitive developments from Anthropic and others to remain agile and leverage emerging capabilities.

- Contribute to community knowledge bases and participate in feedback programs to shape model evolution.

Early engagement allows teams to develop internal expertise and tailor AI tools to their specific workflows, gaining a competitive edge. Training on prompt engineering is critical because effective use of AI coding assistants depends heavily on crafting precise and context-rich prompts.

Governance policies should include guidelines on code review, data handling, and ethical considerations, ensuring AI usage aligns with organizational standards and legal requirements. Continuous monitoring of competitor advancements ensures teams remain informed and can pivot strategies accordingly.

Active participation in feedback channels helps influence model development and prioritize features that best serve community needs, fostering a collaborative ecosystem.
