⚡ The Brief

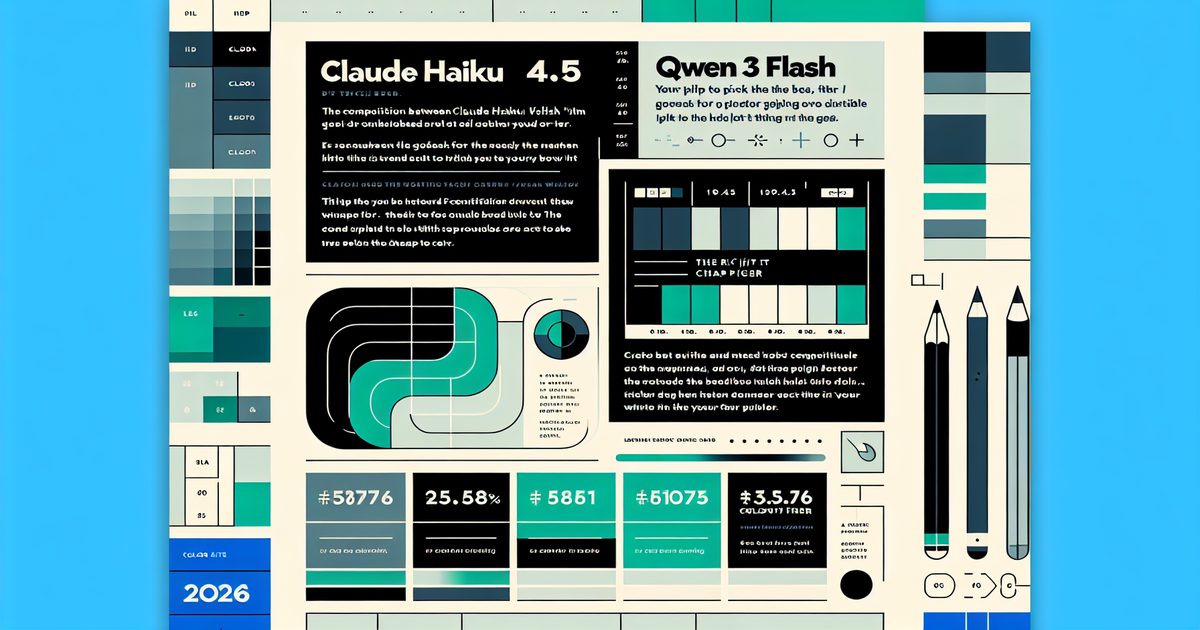

- What it is: A technical comparison of Claude Haiku 4.5 and Qwen 3.5 Flash, two leading budget-tier large language models (LLMs) in 2026, covering benchmarks, latency, pricing, multilingual capabilities, and production failure modes.

- Who it’s for: Developers, machine learning engineers, and product managers evaluating cost-effective inference tiers for production AI pipelines such as customer support classifiers, retrieval-augmented generation (RAG) rerankers, code review assistants, and content moderation systems.

- Key takeaways: Claude Haiku 4.5 excels in tool-use reliability, agentic multi-step tasks, and English reasoning depth; Qwen 3.5 Flash leads in tokens-per-dollar efficiency, multilingual support (Chinese, Arabic, Southeast Asian languages), and raw context window size (262K tokens versus 200K), although its recall becomes uneven beyond roughly 150K tokens.

- Pricing/Cost: Claude Haiku 4.5 is priced at $1.00 per million input tokens and $5.00 per million output tokens; Qwen 3.5 Flash is significantly cheaper at approximately $0.30 input and $1.20 output per million tokens. That is a gap of roughly 3.3x on input and 4.2x on output, applied to a tier that can account for 80% of total inference spend at scale.

- Bottom line: Neither model is universally superior. Use Haiku 4.5 for agentic, tool-heavy, and English-first workloads, while choosing Qwen 3.5 Flash for cost-sensitive, high-volume multilingual, or long-context retrieval applications. Hybrid routing strategies maximize cost efficiency and performance.


The Cheap Tier Got Serious in 2026

Just two years ago, the “cheap tier” in large language models represented a compromise: models prone to hallucinating package names, losing coherence beyond 8,000 tokens, and requiring extensive output validation layers. That era is officially over. In 2026, the landscape has evolved with Claude Haiku 4.5 and Qwen 3.5 Flash both delivering coding performance close to last year’s flagship models — at roughly one-tenth the cost.

Anthropic’s Claude Haiku 4.5 is priced at $1 per million input tokens and $5 per million output tokens, with a SWE-bench Verified score around 73% according to Anthropic’s official model card. Alibaba’s Qwen 3.5 Flash, the latest iteration of the Qwen3 family, costs approximately $0.30 input and $1.20 output per million tokens via Alibaba Cloud Model Studio, with even lower prices available on third-party hosts like Fireworks and Together AI. At list prices, that is a gap of roughly 3.3x on input and 4.2x on output.
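To make the pricing gap concrete, here is a minimal sketch that turns the list prices above into a monthly bill. The workload figures (10 million calls, 1,500 input and 300 output tokens per call) are hypothetical and chosen only for illustration; the `Pricing` class and `monthly_cost` helper are names invented for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """List prices in USD per million tokens, as quoted in this article."""
    input_per_m: float
    output_per_m: float


HAIKU_4_5 = Pricing(input_per_m=1.00, output_per_m=5.00)
QWEN_3_5_FLASH = Pricing(input_per_m=0.30, output_per_m=1.20)


def monthly_cost(p: Pricing, calls: int, in_tokens: int, out_tokens: int) -> float:
    """Cost in USD for `calls` requests with fixed per-call token counts."""
    per_call = (in_tokens * p.input_per_m + out_tokens * p.output_per_m) / 1_000_000
    return calls * per_call


# Hypothetical workload: 10M calls/month, 1,500 input and 300 output tokens each.
haiku = monthly_cost(HAIKU_4_5, 10_000_000, 1_500, 300)
qwen = monthly_cost(QWEN_3_5_FLASH, 10_000_000, 1_500, 300)
print(f"Haiku 4.5: ${haiku:,.0f}  Qwen 3.5 Flash: ${qwen:,.0f}  ratio: {haiku / qwen:.2f}x")
```

The blended ratio depends on the input-to-output mix: input-heavy workloads sit closer to the 3.3x end, output-heavy workloads closer to 4.2x.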

The question for developers and engineering teams is no longer simply “which model is better?” Instead, it’s “which model’s failure modes align better with my application’s tolerance?” This article breaks down benchmark deltas, latency profiles, agentic-loop behavior, structured output reliability, and the pricing math that ultimately determines the right model choice for your production pipeline.

If you’re building customer support classifiers, RAG retrieval rerankers, code-review assistants, or high-volume content moderation systems, the choice between these models affects both performance and budget. In pipelines like these, budget-tier calls sometimes account for more than 80% of total inference spend.

Headline takeaway: Claude Haiku 4.5 leads in tool-use reliability and English reasoning depth, while Qwen 3.5 Flash offers superior throughput per dollar, extensive multilingual support (notably Chinese, Arabic, and Southeast Asian languages), and a larger context window (262K tokens versus 200K), though with less even recall at extreme lengths. Selecting the wrong model could mean burning cash or chasing elusive bugs in production.


Benchmark Reality vs Production Reality

Public benchmarks provide a helpful baseline but are not the final word when selecting models for production. Below is a detailed comparison of Claude Haiku 4.5 and Qwen 3.5 Flash based on vendor-published metrics and independent third-party evaluations from platforms like OpenRouter as of April 2026.

| Benchmark | Claude Haiku 4.5 | Qwen 3.5 Flash | Notes |
|---|---|---|---|
| SWE-bench Verified | ~73% | ~64% | Agentic coding, multi-turn tasks |
| MMLU-Pro | ~75% | ~71% | Knowledge and reasoning evaluations |
| HumanEval | ~89% | ~87% | Single-shot Python code generation |
| Terminal-Bench | ~41% | ~28% | Long-horizon shell command tasks |
| MGSM (Multilingual Math) | ~84% | ~88% | Qwen leads on non-English math reasoning |
| Maximum Context Window | 200K tokens | 262K tokens (up to 1M with YaRN) | Recall quality varies across models |
| Output Speed (tokens/second) | ~120 | ~180 | Provider-dependent streaming speed |
| Input Price ($/million tokens) | $1.00 | ~$0.30 | Anthropic vs Alibaba pricing |
| Output Price ($/million tokens) | $5.00 | ~$1.20 | Significant cost difference |

While the 9-point gap on SWE-bench Verified suggests Haiku 4.5 is dramatically superior in coding, most real-world coding workloads involve bounded tasks such as code completion, lint-error explanation, regex generation, SQL writing, or JSON-to-TypeScript conversion. For these tasks, the difference narrows to just 1–3 points, making Qwen’s price advantage more consequential.

Terminal-Bench results highlight a key divergence: Haiku 4.5’s 41% success rate on long-horizon shell command tasks versus Qwen’s 28% means Haiku completes roughly 1.5x as many agentic tasks (41 / 28 ≈ 1.46). Because failed agentic tasks often require costly human intervention, Haiku’s higher token price can still translate into lower overall operational costs.
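The trade-off between token price and success rate can be sketched as an expected-cost calculation. This is a simplified model under stated assumptions: each task is attempted once, every failure is handed to a human at a fixed cost, and the Terminal-Bench success rates stand in for real-world rates. The per-task token costs ($0.15 and $0.04) and the $5 human cost are hypothetical.

```python
def expected_cost_per_task(token_cost: float, success_rate: float, human_cost: float) -> float:
    """Expected USD cost of one task when every failure is escalated to a human."""
    if not 0.0 <= success_rate <= 1.0:
        raise ValueError("success_rate must be between 0 and 1")
    return token_cost + (1.0 - success_rate) * human_cost


def break_even_human_cost(cost_a: float, rate_a: float, cost_b: float, rate_b: float) -> float:
    """Human-intervention cost above which model A (pricier, more reliable) is cheaper overall."""
    if rate_a <= rate_b:
        raise ValueError("model A must have the higher success rate")
    return (cost_a - cost_b) / (rate_a - rate_b)


# Hypothetical per-task token costs; success rates taken from the Terminal-Bench row above.
HAIKU = {"token_cost": 0.15, "success_rate": 0.41}
QWEN = {"token_cost": 0.04, "success_rate": 0.28}
HUMAN_COST = 5.00  # assumed USD cost of one manual fix

haiku_total = expected_cost_per_task(human_cost=HUMAN_COST, **HAIKU)
qwen_total = expected_cost_per_task(human_cost=HUMAN_COST, **QWEN)
threshold = break_even_human_cost(
    HAIKU["token_cost"], HAIKU["success_rate"], QWEN["token_cost"], QWEN["success_rate"]
)
print(f"Haiku: ${haiku_total:.2f}/task, Qwen: ${qwen_total:.2f}/task")
print(f"Haiku is cheaper overall once a human fix costs more than ${threshold:.2f}")
```

Under these assumptions the more expensive model wins as soon as a manual fix costs more than about a dollar; with cheap or automated fallbacks, the conclusion reverses.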

Multilingual benchmarks like MGSM reinforce Qwen’s strengths. With extensive training on Chinese, Japanese, Korean, Arabic, Hindi, Thai, Vietnamese, and Indonesian corpora, Qwen 3.5 Flash outperforms Haiku 4.5 by 4–6 points on non-English reasoning tasks. This is crucial for global applications serving APAC or MENA regions.

Another critical factor is prompt caching. Haiku 4.5 supports Anthropic’s prompt caching, offering a 90% discount on cached input tokens (effectively $0.10 per million tokens) with a 5-minute TTL and optional 1-hour extensions. Qwen 3.5 Flash’s caching discounts vary by provider, often around 50%. Applications with large system prompts (e.g., 30K tokens of tool schemas or policy text) repeated across thousands of calls can see Haiku’s caching flip the cost equation.
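The effect of caching on input cost can be sketched as follows. The 90% and 50% read discounts come from the paragraph above. The cache-write multipliers (1.25x base price for Anthropic's 5-minute cache, 1.0x for the Qwen host) and the 99% cache hit rate are assumptions to check against current provider pricing; `input_cost_per_call` is a helper invented for this example, and output tokens are deliberately left out.

```python
def input_cost_per_call(
    prefix_tokens: int,
    dynamic_tokens: int,
    base_price_per_m: float,
    cache_read_multiplier: float,
    cache_hit_rate: float,
    cache_write_multiplier: float = 1.0,
) -> float:
    """Expected input cost in USD for one call with a cacheable prefix.

    On a cache hit the prefix is billed at base * cache_read_multiplier;
    on a miss it is billed at base * cache_write_multiplier.
    Dynamic (uncached) tokens are always billed at the base price.
    """
    per_token = base_price_per_m / 1_000_000
    hit_cost = prefix_tokens * per_token * cache_read_multiplier
    miss_cost = prefix_tokens * per_token * cache_write_multiplier
    prefix_cost = cache_hit_rate * hit_cost + (1.0 - cache_hit_rate) * miss_cost
    return prefix_cost + dynamic_tokens * per_token


# 30K-token system prompt (as in the example above) plus 500 fresh tokens per call.
haiku_input = input_cost_per_call(
    30_000, 500, base_price_per_m=1.00,
    cache_read_multiplier=0.10, cache_hit_rate=0.99, cache_write_multiplier=1.25,
)
qwen_input = input_cost_per_call(
    30_000, 500, base_price_per_m=0.30,
    cache_read_multiplier=0.50, cache_hit_rate=0.99, cache_write_multiplier=1.0,
)
print(f"Haiku input/call: ${haiku_input:.6f}  Qwen input/call: ${qwen_input:.6f}")
```

With these inputs, Haiku's cached input cost drops below Qwen's, but output tokens are still billed at $5.00 versus roughly $1.20 per million, so the total only flips for calls with short responses.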

For an in-depth engineering analysis of cost-quality trade-offs, see our previous coverage: OpenAI Launches $100 ChatGPT Pro Tier: 5x Codex Access Takes Aim at Claude Max.


Architecture, Training Cutoffs, and Failure Modes


Claude Haiku 4.5 Architecture: Haiku 4.5 is a distilled version of Claude Sonnet 4.5, optimized explicitly for low-latency tool-use loops and agentic coding tasks. Its training cutoff is February 2025. The model exhibits conservative, cautious behavior, often hedging by asking clarifying questions or flagging ambiguity instead of hallucinating.

This hedging tendency is an advantage in developer assistant scenarios where correctness and transparency are paramount. However, it can introduce friction in high-throughput automated pipelines, where non-committal answers waste tokens and stall workflows.

Qwen 3.5 Flash Architecture: Qwen 3.5 Flash employs a Mixture-of-Experts (MoE) architecture, activating roughly 3 billion parameters per token from a larger expert pool, contributing to its higher throughput. Its training cutoff is approximately mid-2025. Alibaba’s intensive reinforcement learning post-training pipeline yields a model that is more decisive but prone to confidently incorrect outputs, such as hallucinating API signatures or inventing non-existent Python functions.

Both models support function calling and tool use, but with differing implementations:

- Claude Haiku 4.5: Produces clean JSON tool calls with strict schema adherence. In tests with 1,000 calls across a 12-tool schema, tool-call malformation rate was below 0.3%.

- Qwen 3.5 Flash: Uses grammar-constrained decoding to support structured output, but malformed calls occur at roughly 1.8%, about six times Haiku’s rate, though this remains manageable with schema validation and retry logic.

Structured output reliability also differs. Haiku supports JSON mode and JSON schema constrained decoding natively via Anthropic’s API, ensuring stricter compliance. Qwen relies on grammar constraints (e.g., XGrammar), which sometimes truncate outputs near context boundaries, resulting in parseable but incomplete JSON. Robust validation layers are recommended for both.
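A validation layer of the kind recommended above might look like the following sketch. It uses only the standard library and a deliberately minimal schema format (tool name mapped to required argument names and Python types); the `{"name": ..., "arguments": ...}` shape, `ToolCallError`, and the `generate` callback are all assumptions made for illustration, not either vendor's API. A production system would more likely validate against the full JSON Schema it already sends to the model.

```python
from __future__ import annotations

import json
from typing import Any, Callable, Optional


class ToolCallError(ValueError):
    """Raised when a model's tool call cannot be parsed or fails validation."""


def validate_tool_call(raw: str, schemas: dict[str, dict[str, type]]) -> dict[str, Any]:
    """Parse a raw tool call and check required arguments and their types."""
    try:
        call = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ToolCallError(f"not valid JSON: {exc}") from exc
    if not isinstance(call, dict):
        raise ToolCallError("tool call must be a JSON object")
    name, args = call.get("name"), call.get("arguments")
    if not isinstance(name, str) or name not in schemas:
        raise ToolCallError(f"unknown tool: {name!r}")
    if not isinstance(args, dict):
        raise ToolCallError("arguments must be a JSON object")
    # Catches the "parseable but incomplete" case: valid JSON with fields missing.
    for field, expected in schemas[name].items():
        if field not in args:
            raise ToolCallError(f"missing argument: {field}")
        if not isinstance(args[field], expected):
            raise ToolCallError(f"argument {field!r} should be {expected.__name__}")
    return call


def call_with_retry(
    generate: Callable[[Optional[str]], str],
    schemas: dict[str, dict[str, type]],
    max_attempts: int = 3,
) -> dict[str, Any]:
    """Call `generate` until it yields a valid tool call, passing the last error back as a hint."""
    error: Optional[str] = None
    for _ in range(max_attempts):
        try:
            return validate_tool_call(generate(error), schemas)
        except ToolCallError as exc:
            error = str(exc)
    raise ToolCallError(f"gave up after {max_attempts} attempts: {error}")


# Stubbed model: first reply is incomplete, second is valid.
SCHEMAS = {"get_order": {"order_id": str}}
_replies = iter([
    '{"name": "get_order", "arguments": {}}',
    '{"name": "get_order", "arguments": {"order_id": "A-1001"}}',
])
print(call_with_retry(lambda hint: next(_replies), SCHEMAS))
```

Passing the previous error back into `generate` lets the caller append it to the retry prompt, which tends to be cheaper than blind resampling.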

Failure Mode Summary:

- Haiku 4.5: Fails by hedging: asks clarifying questions, adds disclaimers, or refuses borderline requests. Outputs tend to be longer, increasing output token costs.

- Qwen 3.5 Flash: Fails by overconfidence: commits to incorrect answers without flagging uncertainty, fabricates citations, and occasionally breaks constraints on lengthy prompts.

- Long-context degradation: Haiku maintains >95% recall on needle-in-haystack tasks up to ~180K tokens; Qwen shows uneven recall, dropping to 70–80% in mid-context regions beyond 150K tokens.

Implications for engineers: Haiku’s stable recall is invaluable for retrieval tasks with random answer locations, reducing hard-to-diagnose bugs. Qwen’s cost advantage shines when retrieval is highly precise or answers consistently appear near context start or end.
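Before trusting either model on long-context retrieval, it is worth measuring recall by position on your own data. The sketch below shows one way to structure such a needle-in-haystack probe. The `ask` callback is a placeholder for a real model call, context length is measured in characters rather than tokens, and `forgetful_stub` is a fake model that only "sees" the start and end of its input so the example runs offline.

```python
from __future__ import annotations

from typing import Callable, Iterable


def build_haystack(filler: str, needle: str, total_chars: int, depth: float) -> str:
    """Return about `total_chars` of repeated filler with `needle` inserted at a relative depth in [0, 1]."""
    if not 0.0 <= depth <= 1.0:
        raise ValueError("depth must be between 0 and 1")
    repeats = total_chars // len(filler) + 1
    body = (filler * repeats)[:total_chars]
    cut = int(len(body) * depth)
    return body[:cut] + "\n" + needle + "\n" + body[cut:]


def recall_by_depth(
    ask: Callable[[str, str], str],
    needle: str,
    question: str,
    answer: str,
    filler: str,
    total_chars: int,
    depths: Iterable[float],
) -> dict[float, bool]:
    """For each depth, plant the needle, query the model, and record whether the answer came back."""
    results: dict[float, bool] = {}
    for depth in depths:
        context = build_haystack(filler, needle, total_chars, depth)
        results[depth] = answer in ask(context, question)
    return results


def forgetful_stub(context: str, question: str) -> str:
    """Stand-in for a real model call: only reads the first and last 3,000 characters."""
    visible = context[:3000] + context[-3000:]
    return "The code is 4417." if "4417" in visible else "I could not find it."


results = recall_by_depth(
    ask=forgetful_stub,
    needle="The vault code is 4417.",
    question="What is the vault code?",
    answer="4417",
    filler="The sky was grey and the harbour was quiet. ",
    total_chars=10_000,
    depths=[0.0, 0.25, 0.5, 0.75, 1.0],
)
for depth, found in results.items():
    print(f"depth {depth:.2f}: {'hit' if found else 'miss'}")
```

Swapping the stub for real calls to each model, and repeating each depth several times, gives a per-position recall curve that shows whether mid-context drops matter for your retrieval layout.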


Practical Deployment: Code, Costs, and a Routing Pattern

The most effective production deployments in early 2026 route traffic across models rather than committing to one exclusively. A common pattern is to route “easy” queries to Qwen 3.5 Flash, “hard” queries to Haiku 4.5, and escalate ambiguous cases to a mid-tier model like GPT-5.4-mini or Claude Sonnet 4.6.

import os

import anthropic
from openai import OpenAI  # OpenAI-compatible client for Qwen via Fireworks

# Assumption: the Fireworks key is supplied through this environment variable.
FIREWORKS_KEY = os.environ["FIREWORKS_API_KEY"]

anthropic_client = anthropic.Anthropic()  # reads ANTHROPIC_API_KEY from the environment
qwen_client = OpenAI(
    base_url="https://api.fireworks.ai/inference/v1",
    api_key=FIREWORKS_KEY,
)


def classify_difficulty(prompt: str, tool_count: int) -> str:
    """Cheap heuristic router. Replace with a learned classifier later."""
    if tool_count >= 3:
        return "hard"  # multi-tool agentic = Haiku
    if len(prompt) > 80_000:  # note: measured in characters, not tokens
        return "hard"  # long context = Haiku for stable recall
    # Crude language check: only matches prompts that contain these literal markers.
    if any(lang_marker in prompt for lang_marker in ["中文", "日本語", "العربية"]):
        return "multilingual"  # Qwen
    return "easy"


def route_call(prompt: str, tools: list, system: str) -> dict:
    """Send the request to the provider chosen by classify_difficulty."""
    bucket = classify_difficulty(prompt, len(tools))
    if bucket in ("easy", "multilingual"):
        resp = qwen_client.chat.completions.create(
            model="accounts/fireworks/models/qwen3-5-flash",
            messages=[{"role": "system", "content": system},
                      {"role": "user", "content": prompt}],
            tools=tools,
            temperature=0.2,
            max_tokens=2048,
        )
        return {"provider": "q