Mastering ChatGPT Prompts in 2026: The Practitioner’s Framework for Structured, High-Impact Prompting

The landscape of AI interaction has changed substantially. Much of what counted as effective prompting a year or two ago was shaped by smaller context windows and less capable models, and it no longer applies. As of 2026, with the widespread deployment of models like OpenAI’s GPT-5.4 and Anthropic’s Claude Opus 4.6, prompting demands a more nuanced and structured approach. Simple one-line commands rarely yield the best results. These models, with extended context windows (often exceeding 1 million tokens) and stronger reasoning capabilities, reward users who understand how to guide them.

The evolution isn’t merely about scale; it’s about qualitative improvements in understanding, coherence, and the ability to follow complex instructions. Early “tips and tricks” often focused on brevity or keyword stuffing, strategies that now actively hinder performance by failing to leverage the model’s capacity for intricate processing. Today, effective prompting is about constructing a comprehensive operational brief for the AI, treating it less like a search engine and more like a highly intelligent, albeit instruction-dependent, collaborator. This shift is critical for anyone looking to extract maximum value from these powerful AI systems in professional and creative applications.

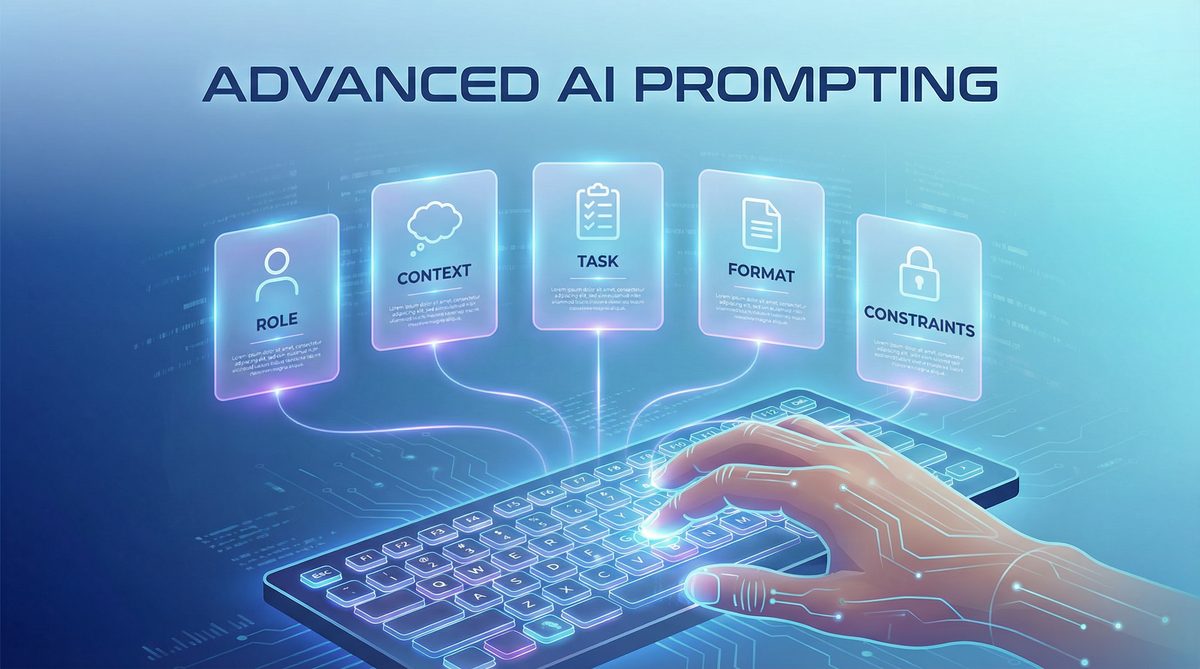

The Structured Prompt Framework: Your Blueprint for AI Interaction

To consistently achieve high-quality, relevant, and actionable outputs from advanced AI models, a structured prompt framework is indispensable. We advocate for a robust model comprising five core components: Role, Context, Task, Format, and Constraints. This framework provides a comprehensive blueprint, ensuring that all necessary information is conveyed clearly and unambiguously to the AI.

- Role: Assigning a specific persona or expertise to the AI. This primes the model to adopt a particular perspective, tone, and knowledge base. For example, “Act as a senior cybersecurity analyst” or “You are a seasoned venture capitalist.”

- Context: Providing all relevant background information, data, and environmental factors. This includes the ‘why’ behind the task, historical data, relevant industry trends, or specific project details. A rich context prevents generic responses and ensures outputs are tailored to your specific situation.

- Task: A clear, explicit description of what needs to be done. This is the core instruction, detailing the desired action or outcome. Use active verbs and break down complex tasks into manageable sub-tasks if necessary.

- Format: Specifying the desired output structure. This is crucial for integration into workflows and for readability. Examples include JSON, markdown tables, bulleted lists, Python code, or a formal report structure.

- Constraints: Defining boundaries, limitations, and specific requirements for the output. This can include word counts, tone, style guides, exclusion of certain topics, adherence to specific ethical guidelines, or computational resource limits.

By systematically addressing each of these elements, users can minimize ambiguity, reduce the need for iterative corrections, and significantly improve the precision and utility of AI-generated content. This framework is particularly vital when dealing with complex problems that require multi-faceted analysis or highly specialized knowledge.
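The five components map naturally onto a small data structure. The sketch below is one possible way to assemble them in Python; the `StructuredPrompt` class, its field names, and the example values are illustrative assumptions, not part of any model's API.

```python
from dataclasses import dataclass, field


@dataclass
class StructuredPrompt:
    """Hypothetical container for the five framework components."""
    role: str
    context: str
    task: str
    output_format: str
    constraints: list[str] = field(default_factory=list)

    def render(self) -> str:
        # Put each component under a bold label, mirroring the templates in this guide.
        sections = [
            f"**Role:** {self.role}",
            f"**Context:** {self.context}",
            f"**Task:** {self.task}",
            f"**Format:** {self.output_format}",
        ]
        if self.constraints:
            bullets = "\n".join(f"- {c}" for c in self.constraints)
            sections.append(f"**Constraints:**\n{bullets}")
        return "\n\n".join(sections)


# Example values are made up for illustration.
prompt = StructuredPrompt(
    role="You are a senior cybersecurity analyst.",
    context="A mid-size retailer is moving its payment systems to the cloud.",
    task="List the main security risks of the migration.",
    output_format="A markdown table with columns Risk, Impact, Mitigation.",
    constraints=["Keep it under 300 words.", "Use plain, non-alarmist language."],
)
print(prompt.render())
```

Keeping the components as separate fields makes it easy to swap one (say, the role) while holding the rest constant when comparing outputs.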

Role-Based Prompting: Mastering Expert Personas

One of the most effective ways to elevate AI output is through precise role assignment. Modern AI models are trained on vast datasets spanning a very wide range of human knowledge, which allows them to simulate a wide array of professional and expert personas. When you assign a role, you steer the model toward the vocabulary, priorities, and reasoning style associated with that expertise. This is usually more potent than simply asking a generic question.

Examples of Effective Role Assignments:

- “Act as a senior data privacy lawyer specializing in GDPR and CCPA.” This role would prime the AI to focus on legal compliance, risk assessment, and specific regulatory language.

- “Assume the persona of a lead software architect for a large-scale enterprise SaaS platform.” Here, the AI would prioritize scalability, security, integration patterns, and maintainability in its recommendations.

- “You are a compassionate but firm career coach for mid-career professionals looking to pivot into AI ethics.” This role guides the AI’s tone, advice style, and the specific domain of expertise it should draw upon.

- “Embody a skeptical financial auditor scrutinizing Q3 earnings reports.” This prompts the AI to look for discrepancies, potential red flags, and areas of concern, rather than simply summarizing positive outcomes.

The key is to be specific. Instead of “Act as a writer,” try “Act as a long-form investigative journalist with a focus on environmental policy,” or “Act as a copywriter specializing in direct-response marketing for B2B SaaS.” The more defined the role, the more targeted and insightful the AI’s contribution will be. This technique is especially powerful when combined with a rich context, allowing the AI to apply its specialized ‘knowledge’ to your specific scenario.

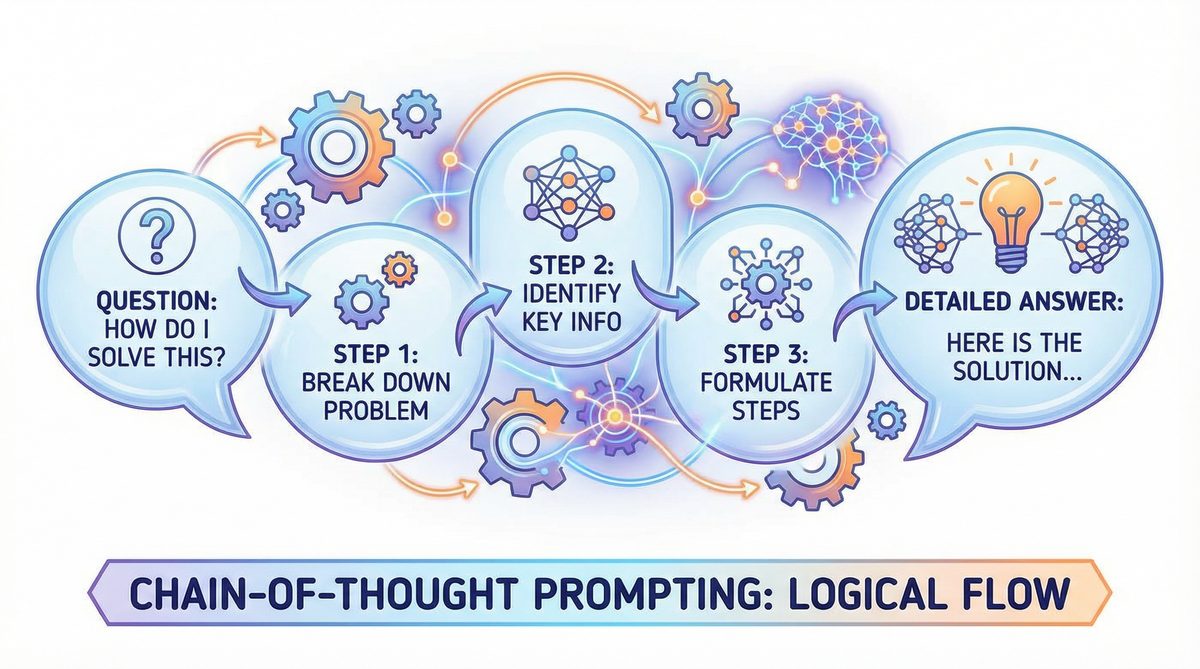

Chain-of-Thought Prompting: Deconstructing Complexity

Chain-of-Thought (CoT) prompting, often initiated with phrases like “Think step by step,” “Walk me through your reasoning,” or “Before answering, outline your analytical process,” remains a cornerstone of advanced prompting. Its efficacy has only grown with more powerful models. CoT asks the AI to write out its reasoning, breaking a complex problem into smaller, manageable steps. This tends to improve the accuracy and coherence of the final output, and it makes the stated logic visible so users can identify and correct faulty assumptions.

When to Use CoT:

- Complex Problem Solving: Any task requiring multiple logical steps, such as debugging code, solving mathematical problems, or developing a strategic plan.

- Decision Making: When evaluating trade-offs, weighing pros and cons, or recommending a course of action.

- Creative Generation with Constraints: For tasks like writing a story with specific plot points or designing a system architecture that meets various non-functional requirements.

- Avoiding Hallucinations: By forcing the AI to show its work, you can often catch instances where it might be fabricating information or making unsupported leaps in logic.

Variants of CoT:

- Standard CoT: “Think step by step. First, identify the core problem. Second, list potential solutions. Third, evaluate each solution against criteria X, Y, Z. Finally, recommend the best solution with justification.”

- Self-Correction CoT: “Think step by step. After generating your initial analysis, critically review your own work for biases or logical inconsistencies. Then, refine your answer.”

- Tree of Thoughts (ToT): For extremely complex problems, instruct the AI to explore multiple reasoning paths in parallel, then evaluate and prune less promising branches. “Generate three distinct approaches to solving this problem. For each approach, detail the steps, potential challenges, and expected outcomes. Then, select the most viable approach and elaborate on it.”

By making the AI’s thought process explicit, CoT prompting transforms it from a black box into a more understandable and debuggable system, significantly enhancing its utility for critical tasks. For more detailed strategies on leveraging AI for complex problem-solving, consider exploring our guide on advanced AI problem-solving techniques.
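When CoT prompts are generated programmatically, it helps to separate the reasoning from the conclusion in the response. Here is a minimal sketch, assuming you ask the model to finish with a marker line. The `FINAL ANSWER:` marker and both helper functions are hypothetical conventions chosen for this example.

```python
FINAL_MARKER = "FINAL ANSWER:"  # hypothetical convention, not part of any model API


def build_cot_prompt(question: str, steps: list[str]) -> str:
    """Append numbered reasoning stages and a final-answer marker to a question."""
    numbered = "\n".join(f"{i}. {step}" for i, step in enumerate(steps, start=1))
    return (
        f"{question}\n\n"
        "Think step by step, following these stages:\n"
        f"{numbered}\n\n"
        f"End with a single line starting with '{FINAL_MARKER}'."
    )


def split_reasoning(response: str) -> tuple[str, str]:
    """Return (reasoning, answer); the answer is empty if the marker is missing."""
    reasoning, marker, answer = response.rpartition(FINAL_MARKER)
    if not marker:
        return response.strip(), ""
    return reasoning.strip(), answer.strip()


cot_prompt = build_cot_prompt(
    "Which caching strategy should we adopt for the product catalog?",
    [
        "Identify the core problem.",
        "List potential solutions.",
        "Evaluate each solution against cost, latency, and complexity.",
        "Recommend the best solution with justification.",
    ],
)
print(cot_prompt)
```

An empty answer from `split_reasoning` is a useful signal that the model ignored the requested structure and the prompt may need tightening.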

Context Injection: The Power of Rich Data

The extended context windows of GPT-5.4 and Claude Opus 4.6 are not just a quantitative improvement; they are a qualitative game-changer. They allow users to inject vast amounts of proprietary data, background documents, previous conversations, and reference materials directly into the prompt. This eliminates the need for the AI to rely solely on its pre-trained knowledge, which might be outdated or too general for specific tasks.

How to Effectively Inject Context:

- Provide Background Documents: Copy-paste entire reports, research papers, meeting transcripts, or codebases directly into the prompt before your main instruction.

- Supply Data Tables: Include CSV data, JSON objects, or formatted tables. Instruct the AI to analyze this specific data.

- Reference Previous Interactions: Explicitly refer to previous turns in a conversation, “Based on our discussion about X, now analyze Y.”

- Define Key Terms and Acronyms: Especially in specialized domains, provide a glossary of terms to ensure consistent understanding.

- Use Delimiters: Wrap injected context in clear delimiters (e.g., `<context>...</context>` or `---DOCUMENT START---...---DOCUMENT END---`) to help the AI distinguish it from your instructions.

The impact of rich context injection is profound. It allows the AI to perform highly specific analyses, synthesize information from disparate sources, and generate outputs that are deeply integrated with your unique operational environment. This is particularly valuable for tasks like competitive analysis, legal document review, or scientific research synthesis, where the AI needs to operate on very specific and often proprietary information.
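The delimiter advice above is easy to automate. The following is a rough sketch of wrapping several documents in `<context>` tags before the instruction. The `wrap_context` function, the `name` attribute on the tag, and the `Instruction:` label are assumptions made for illustration.

```python
def wrap_context(documents: dict[str, str], instruction: str) -> str:
    """Wrap each document in <context> tags, then append the instruction last."""
    blocks = []
    for name, text in documents.items():
        # The name attribute is a hypothetical addition so the model can cite sources.
        blocks.append(f'<context name="{name}">\n{text.strip()}\n</context>')
    return "\n\n".join(blocks) + "\n\nInstruction: " + instruction


# Placeholder documents; in practice these would be full reports or transcripts.
full_prompt = wrap_context(
    {
        "q3_report": "Revenue grew 8% quarter over quarter.",
        "meeting_notes": "The team agreed to revisit pricing in January.",
    },
    "Using only the context above, summarize the decisions that affect pricing.",
)
print(full_prompt)
```

Placing the instruction after the documents follows the guidance in this section of pasting background material before the main instruction.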

Iteration Loops: AI as a Self-Critiquing Partner

One of the most underutilized capabilities of advanced AI models is their capacity for self-critique and iterative refinement. Instead of simply accepting the first output, users can engage the AI in a feedback loop, instructing it to evaluate its own work against specified criteria and then improve upon it. This transforms the AI from a mere content generator into a dynamic, self-improving assistant.

How to Implement Iteration Loops:

- Initial Prompt: Provide your initial structured prompt (Role, Context, Task, Format, Constraints).

- Critique Prompt: After the AI generates its first output, follow up with a prompt like: “Critique your previous response. Specifically, evaluate it against the following criteria: [List 3-5 specific criteria, e.g., clarity, conciseness, adherence to ethical guidelines, factual accuracy, persuasive tone, originality]. Identify areas for improvement and explain why. Then, generate an improved version.”

- Refinement Prompt (Optional): If further refinement is needed, provide specific feedback: “Your revised response is better, but it still lacks [X]. Also, expand on [Y] and shorten [Z]. Incorporate these changes and provide a final version.”

This iterative process allows for continuous improvement, pushing the AI to higher levels of performance and ensuring the final output perfectly aligns with your objectives. It’s particularly useful for creative tasks, complex writing, or strategic planning where multiple perspectives and refinements are beneficial. This technique also helps mitigate the inherent biases or initial misinterpretations the AI might have.
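The loop described above can be scripted once you have a function that sends messages to a model. The sketch below assumes a caller-supplied `call_model` function that takes a list of role/content dictionaries and returns text. That signature, the `refine` helper, and the stub model are all hypothetical and stand in for whichever client library you use.

```python
from typing import Callable

Message = dict[str, str]


def refine(
    call_model: Callable[[list[Message]], str],
    initial_prompt: str,
    criteria: list[str],
    rounds: int = 1,
) -> list[str]:
    """Run an initial prompt, then `rounds` critique-and-improve turns; return all drafts."""
    messages: list[Message] = [{"role": "user", "content": initial_prompt}]
    draft = call_model(messages)
    drafts = [draft]
    messages.append({"role": "assistant", "content": draft})

    critique = (
        "Critique your previous response. Specifically, evaluate it against the "
        f"following criteria: {', '.join(criteria)}. Identify areas for improvement "
        "and explain why. Then, generate an improved version."
    )
    for _ in range(rounds):
        messages.append({"role": "user", "content": critique})
        draft = call_model(messages)
        drafts.append(draft)
        messages.append({"role": "assistant", "content": draft})
    return drafts


def fake_model(messages: list[Message]) -> str:
    # Stand-in for a real API call so the sketch runs offline.
    return f"draft after {len(messages)} message(s)"


drafts = refine(fake_model, "Write a tagline for a note-taking app.", ["clarity", "conciseness"], rounds=2)
print(drafts)
```

Returning every draft, not just the last one, lets you check whether each round actually improved the output.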

Multi-Turn Conversation Strategies: Building on Previous Responses

Advanced AI models excel in maintaining conversational coherence over extended interactions. Leveraging this capability requires strategic multi-turn prompting, where each subsequent prompt builds intelligently on the previous exchanges. This is distinct from simply asking a series of unrelated questions.

Effective Multi-Turn Strategies:

- Progressive Elaboration: Start with a high-level request, then gradually drill down into details. “First, give me an overview of the market for AI in healthcare. Then, focus specifically on diagnostic imaging. Finally, analyze the competitive landscape for oncology AI solutions.”

- Conditional Branching: Base subsequent questions on the AI’s previous answers. “Based on your analysis of the competitive landscape, which company presents the most significant acquisition target? Justify your choice.”

- Maintaining State: Explicitly remind the AI of prior context if the conversation drifts. “Returning to our earlier discussion about the Q3 financial report, how would the proposed marketing budget adjustment impact those projections?”

- Role Continuity: Ensure the AI maintains its assigned role throughout the conversation, unless explicitly instructed to change. “As a cybersecurity analyst, what are the immediate threats posed by the new vulnerability you just described?”

Strategic multi-turn conversations allow for dynamic exploration of topics, complex problem-solving, and the development of sophisticated artifacts that would be impossible to generate with a single prompt. This is where the AI truly acts as a conversational partner, adapting and evolving its responses based on the ongoing dialogue. For more methods on how to structure long-form AI interactions, refer to our comprehensive guide on effective long-form AI interactions.
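For scripted conversations, role continuity and state can be handled by keeping the role instruction pinned while older turns are trimmed. Below is a minimal sketch; the `Conversation` class, the `max_turns` limit, and the system/user/assistant message layout are assumptions rather than any specific vendor's API.

```python
class Conversation:
    """Hypothetical helper that pins the role instruction and keeps recent turns."""

    def __init__(self, role_instruction: str, max_turns: int = 20):
        self.system = {"role": "system", "content": role_instruction}
        self.turns: list[dict[str, str]] = []
        self.max_turns = max_turns

    def add(self, role: str, content: str) -> None:
        self.turns.append({"role": role, "content": content})

    def messages(self) -> list[dict[str, str]]:
        # The role instruction always comes first, even after old turns are dropped.
        return [self.system] + self.turns[-self.max_turns:]


conv = Conversation("You are a cybersecurity analyst.", max_turns=2)
conv.add("user", "Give me an overview of the new vulnerability.")
conv.add("assistant", "It affects the authentication service.")
conv.add("user", "What are the immediate threats it poses?")
print(conv.messages())
```

Trimming by turn count is the simplest policy. A production version would more likely trim by token count or summarize the older turns.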

Output Format Control: Precision and Integration

Controlling the output format is crucial for integrating AI-generated content into existing workflows and for ensuring readability and usability. Advanced models are highly adept at adhering to specific structural instructions.

Common Output Formats and How to Request Them:

- JSON: Ideal for programmatic use. “Output the data as a JSON array of objects, where each object has keys for ‘product_name’, ‘price’, and ‘availability_status’.”

- Markdown Tables: Excellent for structured, readable data within text. “Present the comparative analysis in a markdown table with columns for ‘Feature’, ‘Product A Score’, ‘Product B Score’, and ‘Rationale’.”

- Code Blocks: For generating code snippets or configuration files. “Provide the Python code for a FastAPI endpoint that handles user authentication. Wrap the code in a markdown code block.”

- Structured Reports: For formal documents. “Generate a market research report with the following sections: Executive Summary, Market Size and Growth, Competitive Landscape, Key Trends, and Recommendations.”

- Bulleted/Numbered Lists: For clear enumeration. “List the top 5 challenges for enterprise AI adoption as a numbered list.”

Always specify the desired format explicitly. You can also provide examples of the desired format to further guide the AI, especially for complex or custom structures. This level of control ensures that the AI’s output is not just intelligent but also immediately usable.
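When the output feeds a program, it is worth validating the structure before using it. Here is a minimal sketch for the JSON example above, using only Python's standard `json` module. The `parse_products` function and the sample response are illustrative.

```python
import json

# Keys requested in the JSON prompt example above.
REQUIRED_KEYS = {"product_name", "price", "availability_status"}


def parse_products(raw: str) -> list[dict]:
    """Parse a model response and check that it matches the requested structure."""
    data = json.loads(raw)  # raises json.JSONDecodeError on malformed JSON
    if not isinstance(data, list):
        raise ValueError("expected a JSON array of objects")
    for index, item in enumerate(data):
        if not isinstance(item, dict):
            raise ValueError(f"item {index} is not an object")
        missing = REQUIRED_KEYS - set(item)
        if missing:
            raise ValueError(f"item {index} is missing keys: {sorted(missing)}")
    return data


# Made-up response text standing in for real model output.
sample_response = (
    '[{"product_name": "Widget", "price": 9.99, "availability_status": "in_stock"}]'
)
products = parse_products(sample_response)
print(products[0]["product_name"])
```

A validation failure is a natural trigger for a follow-up prompt that quotes the error and asks the model to correct its output.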

Temperature and Parameter Tuning for Different Use Cases

While often hidden behind user interfaces, sampling parameters like ‘temperature’ are worth understanding for advanced users. Temperature controls the randomness of the output by rescaling the model’s token probabilities before a token is sampled. Higher temperatures lead to more diverse and creative responses, while lower temperatures result in more focused and repeatable outputs.

- High Temperature (e.g., 0.7-1.0): Use for creative writing, brainstorming, generating diverse ideas, or when you want the AI to explore unconventional solutions. Example: “Brainstorm 20 innovative marketing slogans for a sustainable tech startup.”

- Low Temperature (e.g., 0.0-0.3): Use for factual recall, code generation, summarization, legal document drafting, or any task where precision and consistency are paramount. Example: “Summarize the key findings of the attached research paper, ensuring factual accuracy.”

Other parameters, like ‘top_p’ (nucleus sampling) and ‘frequency_penalty’/‘presence_penalty’, offer further control over token generation, influencing the diversity and novelty of the output. While not always directly accessible in all front-ends, being aware of these underlying mechanisms helps in understanding why an AI might behave a certain way and how to influence its output through prompt design.
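To see why temperature changes behavior, it helps to look at the arithmetic. In the standard formulation, each token score (logit) is divided by the temperature before the softmax turns scores into probabilities. The sketch below uses made-up logits for three candidate tokens; real models work over vocabularies of many thousands of tokens.

```python
import math


def softmax_with_temperature(logits: list[float], temperature: float) -> list[float]:
    """Convert logits to probabilities after dividing by the temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


logits = [2.0, 1.0, 0.5]  # illustrative scores for three candidate tokens
low = softmax_with_temperature(logits, 0.2)
high = softmax_with_temperature(logits, 1.0)
print("T=0.2:", [round(p, 3) for p in low])
print("T=1.0:", [round(p, 3) for p in high])
```

At the lower temperature nearly all of the probability mass lands on the top-scoring token, which is why low settings give more repeatable output. At the higher temperature the alternatives keep a meaningful share, so sampled text varies more.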

Real-World Prompt Templates for 2026

Here are several practical prompt templates that integrate the structured framework and advanced techniques, tailored for specific professional applications:

1. Content Creation (Blog Post Draft)

**Role:** You are a senior content strategist and SEO specialist for a B2B SaaS company in the AI/ML space.

**Context:**

- **Topic:** "The Ethical Implications of Generative AI in Creative Industries."

- **Target Audience:** Mid-level managers and executives in creative agencies, tech companies, and legal departments.

- **Keywords to include (at least 3 times each):** "generative AI ethics," "copyright in AI," "AI originality," "responsible AI development."

- **Goal:** Educate the audience, highlight potential risks, and propose mitigation strategies.

- **Existing Article Outline (optional, but helpful):**

    1. Introduction: The Rise of Generative AI in Creativity

    2. Copyright & Ownership: Who Owns AI-Generated Art?

    3. Authenticity & Originality: The Value of Human Creativity

    4. Bias & Representation: Ethical Pitfalls

    5. Mitigating Risks: Towards Responsible AI Deployment

    6. Conclusion: A Collaborative Future

**Task:** Write a comprehensive blog post draft (1200-1500 words) that addresses the ethical implications of generative AI in creative industries.

**Format:** Markdown with clear headings (H2, H3), bullet points, and bolded key terms.

**Constraints:**

- Tone: Informative, authoritative, balanced, and forward-looking.

- Avoid overly technical jargon; explain complex concepts clearly.

- Emphasize practical implications and actionable insights.

- Ensure a positive, collaborative outlook in the conclusion.

- Think step by step: First, outline the main arguments for each section. Then, draft the content, ensuring smooth transitions and logical flow. Finally, review for keyword integration and overall coherence.

2. Code Review (Python Function)

**Role:** You are a senior Python software engineer specializing in high-performance data processing and secure API development.

**Context:**

- **Code Snippet to Review:**

```python
def process_data(data_list):
    processed = []
    for item in data_list:
        if item > 10:
            processed.append(item * 2)
    return processed
```

- **Project:** A new data pipeline for financial transactions.

- **Requirements:**

    - High performance for large datasets.

    - Robust error handling.

    - Security considerations (no sensitive data leakage).

    - Readability and maintainability.

- **Previous Feedback:** The current function is too simplistic and lacks proper validation.

**Task:** Perform a comprehensive code review of the provided Python function.

**Format:**

- Output a markdown table summarizing identified issues (Severity: Critical, Major, Minor; Category: Performance, Security, Readability, Logic; Description; Recommended Fix).

- Follow the table with a detailed explanation of the most critical issue and an improved code snippet.

**Constraints:**

- Focus on potential issues related to performance, security vulnerabilities, and adherence to best practices for production-grade code.

- Assume `data_list` can contain various data types, not just integers.

- Think step by step: First, analyze the current function against project requirements. Second, identify all potential issues. Third, categorize and prioritize them. Fourth, propose concrete solutions. Finally, rewrite the function demonstrating the improvements.
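For orientation, here is one plausible shape for the rewritten function that a review like this might produce. It is a sketch under stated assumptions, not the expected model output. The name `process_data_safe`, the `threshold` and `factor` parameters, and the choice to collect non-numeric items instead of raising an error are all illustrative decisions.

```python
from numbers import Real


def process_data_safe(data_list, threshold=10, factor=2):
    """Multiply numeric items above `threshold` by `factor`.

    Returns (processed, rejected). Unlike the original snippet, non-numeric
    items are collected in `rejected` instead of causing a TypeError.
    """
    processed, rejected = [], []
    for item in data_list:
        # bool is a subclass of int in Python, so exclude it explicitly.
        if isinstance(item, bool) or not isinstance(item, Real):
            rejected.append(item)
            continue
        if item > threshold:
            processed.append(item * factor)
    return processed, rejected


processed, rejected = process_data_safe([5, 11, "12", None, True, 20.5])
print(processed, rejected)
```

Whether invalid items should be rejected quietly, logged, or treated as fatal depends on the pipeline, which is exactly the kind of requirement worth stating in the Context section of the prompt.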

3. Data Analysis (Customer Churn Prediction)

**Role:** You are a lead data scientist specializing in customer behavior analytics and predictive modeling.

**Context:**

- **Data (CSV format - imagine this is copy-pasted here):**

```csv
CustomerID,Age,SubscriptionType,MonthlyCharges,TotalCharges,Churn
1001,35,Premium,75.00,1200.00,No
1002,48,Basic,45.00,800.00,Yes
... (100 rows of similar data) ...
```

- **Goal:** Identify key factors contributing to customer churn and propose actionable strategies.

- **Available Models:** Logistic Regression, Random Forest, XGBoost.

- **Business Objective:** Reduce churn by 15% in the next quarter.

**Task:**

1. Perform an exploratory data analysis (EDA) to understand the dataset.

2. Identify the top 3 features most strongly correlated with churn.

3. Suggest which predictive model would be most suitable for this dataset and why.

4. Propose three actionable strategies to mitigate churn, based on your findings.

**Format:** A structured report in markdown, with clear headings for EDA Findings, Feature Importance, Model Recommendation, and Churn Mitigation Strategies. Use bullet points for lists and provide summary statistics where relevant.

**Constraints:**

- Assume the provided data is a representative sample.

- Explain your reasoning clearly for model choice and churn strategies.

- Do not generate any actual code for model training, just discuss concepts.

- Focus on interpretability of results for business stakeholders.

- Think step by step: First, analyze the provided data for patterns. Second, derive insights about churn drivers. Third, evaluate model suitability. Finally, formulate practical recommendations.

4. Research Synthesis (Literature Review)

**Role:** You are an academic researcher specializing in the intersection of cognitive neuroscience and artificial intelligence.

**Context:**

- **Research Papers (imagine 3-5 full abstracts or summaries copy-pasted here):**

    - Paper 1: "Neural Correlates of Language Processing in Large Language Models" (Smith et al., 2024)

    - Paper 2: "Emergent Properties of Foundation Models: A Cognitive Perspective" (Jones & Lee, 2025)

    - Paper 3: "Bridging the Gap: From Biological Brains to Artificial General Intelligence" (Chen, 2023)

- **Research Question:** How do recent advancements in large language models inform our understanding of human cognitive processes, particularly language and reasoning?

- **Audience:** Fellow researchers in AI and neuroscience.

**Task:** Synthesize the key findings from the provided research papers to answer the research question.

**Format:** A concise literature review section (approx. 800-1000 words) formatted in academic style (introduction, thematic analysis, conclusion). Use inline citations (e.g., Smith et al., 2024).

**Constraints:**

- Focus on the thematic connections and divergences between the papers.

- Highlight novel insights and areas for future research.

- Maintain a critical, analytical tone.

- Avoid simply summarizing each paper individually; synthesize across them.

- Think step by step: First, read each paper's core argument. Second, identify common themes and points of contention. Third, structure the synthesis around these themes. Fourth, draft the review, ensuring smooth transitions and a coherent argument.

5. Business Strategy (Market Entry Strategy)

**Role:** You are a senior management consultant specializing in technology market entry strategies for disruptive innovations.

**Context:**

- **Company:** "QuantumShield," a startup developing quantum-safe encryption solutions.

- **Target Market:** Financial services industry (banks, hedge funds, fintechs).

- **Competitive Landscape:** Existing traditional encryption providers, other nascent quantum-safe startups.

- **Key Differentiator:** QuantumShield's patented algorithm offers provable post-quantum security with minimal performance overhead.

- **Budget:** Moderate, aiming for strategic partnerships rather than direct consumer marketing.

- **Timeline:** 18-24 months for initial market penetration.

**Task:** Develop a detailed market entry strategy for QuantumShield targeting the financial services industry.

**Format:** A structured business report in markdown, including the following sections:

1. Executive Summary

2. Market Opportunity & Sizing

3. Competitive Analysis (SWOT for QuantumShield vs. top 2 competitors)

4. Target Customer Segments (within financial services)

5. Go-to-Market Strategy (Partnerships, Sales, Marketing - focus on value proposition)

6. Key Risks & Mitigation

7. Success Metrics

**Constraints:**

- Tone: Professional, analytical, and persuasive.

- Emphasize the unique value proposition of quantum-safe encryption.

- Focus on a B2B sales cycle.

- Prioritize strategic partnerships and regulatory compliance aspects.

- Word count: 1000-1200 words.

- Think step by step: First, analyze the market and competitive landscape. Second, define QuantumShield's unique position. Third, craft a strategy that leverages its strengths and addresses market needs. Finally, articulate risks and success measures.

Common Mistakes That Waste Tokens and Produce Poor Results

Despite the power of current AI models, certain prompting habits can still lead to suboptimal outcomes and unnecessary token consumption:

- Vagueness: Providing insufficient detail in the Role, Context, Task, or Constraints. “Write about AI” is vague; “Act as a tech journalist, write a 500-word article on the impact of GPT-5.4 on content creation, focusing on ethical considerations, for a tech-savvy audience” is specific.

- Lack of Structure: Dumping all information into a single, unformatted paragraph. This makes it harder for the AI to parse instructions and identify key elements.

- Ignoring Context Window Limits (Even Extended Ones): While large, context windows are not infinite. Providing genuinely irrelevant information can dilute the signal and confuse the model. Be judicious with context injection.

- Not Specifying Output Format: This leads to inconsistent and often unusable outputs, requiring manual reformatting.

- Failing to Iterate: Accepting the first draft without engaging in a critique and refinement loop. This leaves significant performance on the table.

- Over-Constraining: Imposing too many conflicting or overly restrictive constraints can lead to “AI refusal” or highly generic, uncreative outputs. Balance freedom with guidance.

- Under-Utilizing Role Assignment: Treating the AI as a generic information retrieval system rather than leveraging its ability to adopt expert personas.

- Repetitive Instructions: Rephrasing the same instruction multiple times, which can sometimes confuse the AI about which instruction to prioritize. State it clearly once.

- Assuming Prior Knowledge: Not providing essential background information or definitions, expecting the AI to ‘know’ your specific project details.

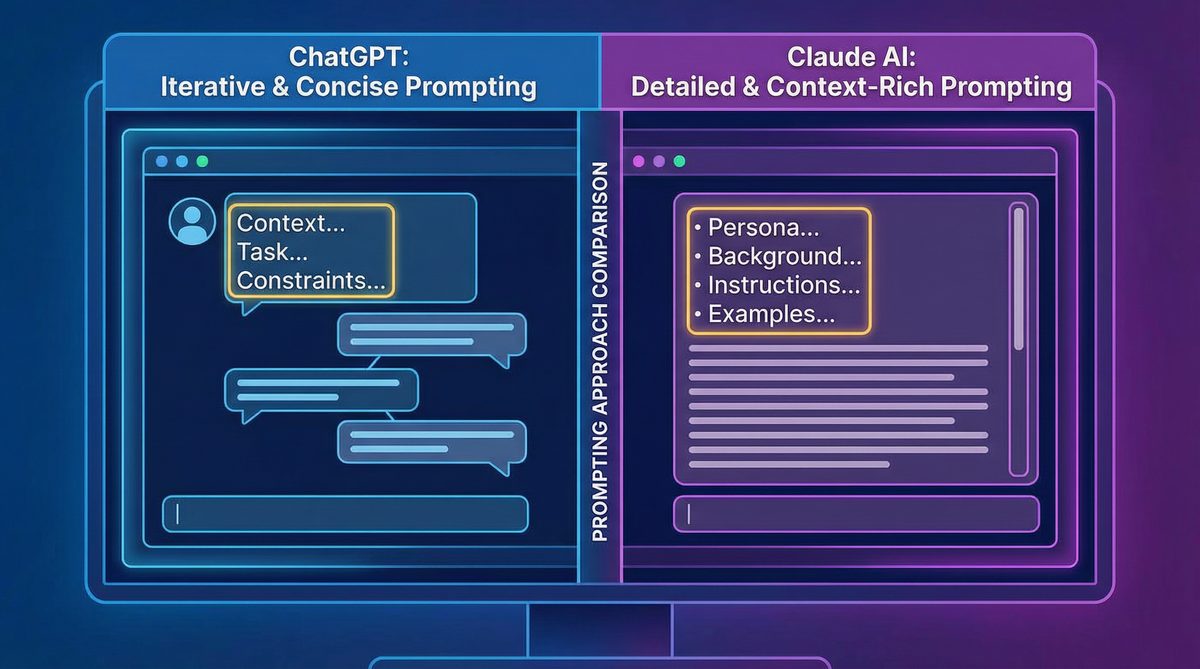

How Prompting Differs Between ChatGPT (GPT-5.4) and Claude (Opus 4.6/Sonnet 4.6)

While both OpenAI’s GPT-5.4 and Anthropic’s Claude (Opus 4.6 and Sonnet 4.6) are cutting-edge large language models, their architectural nuances and training philosophies lead to subtle but important differences in optimal prompting strategies.

- Context Handling: Claude models, particularly Opus, are renowned for their exceptional long-context window performance and ability to maintain coherence over extremely long inputs. They often excel at tasks requiring deep analysis of very lengthy documents (e.g., entire books, extensive legal briefs). GPT-5.4 also boasts a massive context window and strong performance, but some users report Claude having a slight edge in “deep reading” and synthesizing information across vast, disparate sections of a single, very long document. This implies that for tasks requiring meticulous analysis of extremely long, complex texts, Claude might benefit more from maximal context injection.

- Safety and Guardrails: Anthropic’s models are built with a strong emphasis on “Constitutional AI,” meaning they are trained with a set of principles designed to make them more helpful, harmless, and honest. This can sometimes manifest as a slightly more cautious or conservative output, particularly on sensitive topics. While GPT-5.4 also has robust safety mechanisms, Claude might be perceived as less prone to generating harmful or biased content, potentially requiring fewer explicit negative constraints from the user on such topics.

- Instruction Following: Both models are excellent at following instructions. However, Claude often responds exceptionally well to explicit, step-by-step instructions and can be very literal in its interpretation of formatting and process requests. GPT-5.4 is also highly capable but might sometimes infer implicit intentions more readily, which can be a double-edged sword – helpful when instructions are slightly underspecified, but potentially leading to deviations when strict adherence is required. For Claude, being overly explicit with every step of a chain-of-thought is often beneficial.

- Creative vs. Factual: GPT-5.4, especially with higher temperature settings, often demonstrates a slightly more expansive creative range, capable of generating highly imaginative and diverse textual styles. Claude is also creative but might lean slightly more towards structured creativity, maintaining a more consistent and grounded tone. For highly imaginative storytelling or brainstorming, GPT-5.4 might offer a broader initial output spectrum, while Claude might excel at creative tasks requiring strong adherence to thematic or structural constraints.

- Role Persona Adoption: Both models are adept at adopting roles. However, some practitioners find Claude to be particularly convincing and consistent in its persona adoption throughout extended conversations, perhaps due to its strong instruction-following capabilities. Explicitly defining and reaffirming the role in multi-turn interactions can be especially effective with Claude.

Ultimately, the best approach involves understanding these subtle differences and testing prompts across both platforms. What works optimally for one might be slightly tweaked for the other, leveraging each model’s unique strengths for specific tasks. The core structured prompt framework, however, remains universally applicable and foundational for effective interaction with any advanced AI model.

About the Author

Markos Symeonides is the founder of ChatGPT AI Hub, where he covers the latest developments in AI tools, ChatGPT, Claude, and OpenAI Codex. Follow ChatGPT AI Hub for daily AI news, tutorials, and guides.