⚡ The Brief

- What it is: A guide to scaling enterprise AI from pilot projects to full production using advanced AI platforms like ChatGPT and Claude.

- Who it’s for: Developers and enterprise leaders looking to integrate AI at scale within their organizations.

- Key takeaways: Learn how to overcome technological, organizational, and strategic challenges to achieve AI maturity and operational success.

- Pricing/Cost: Considerations include investment in AI platforms, infrastructure, and ongoing maintenance for enterprise-level deployment.

- Bottom line: Successfully scaling AI in enterprises requires strategic planning, robust technology, and leveraging platforms like ChatGPT and Claude.

Executive Summary: The Enterprise AI Landscape in 2026

The year 2026 marks a pivotal moment in the evolution of enterprise Artificial Intelligence (AI), where organizations worldwide shift from tentative experimentation to full-scale AI integration across business functions. Deloitte’s 2026 State of AI report underscores that the primary determinant of success is the ability to move beyond mere ambition towards activation—translating AI aspirations into operational reality with measurable outcomes. This decisive transition requires enterprises to confront a complex matrix of technological, organizational, and strategic challenges while capitalizing on unprecedented opportunities.

Enterprise AI spending has accelerated dramatically, fueled by expanding use cases and growing confidence in AI’s transformative potential across sectors such as finance, healthcare, manufacturing, retail, and telecommunications. The investment surge is paralleled by a widening performance gap between AI leaders who harness AI at scale and laggards stuck in pilot purgatory. This divergence is not only economic but also strategic, manifesting in competitive advantages that redefine market leadership in the digital era.

MIT Sloan’s recent research recommends reorienting AI initiatives from isolated productivity gains—typically focused on enhancing individual tasks—to enterprise-oriented generative AI applications that reshape workflows, decision-making processes, and customer engagement at scale. This shift is essential to unlock the full value of AI, exemplified by the rise of agentic AI—the defining trend of 2026. Agentic AI systems enable autonomous workflows capable of self-directed task execution and continuous adaptation, pushing the boundaries of what AI can accomplish without constant human intervention.

Among the platforms powering this transformation, ChatGPT Enterprise and Claude for Enterprise stand out as the dominant players. Together, they have transformed how enterprises leverage conversational AI for everything from knowledge management and customer support to software development and strategic planning. Brand24’s analysis of 1.3 million ChatGPT mentions reveals that 79% of conversations revolve around work-related use cases, emphasizing the profound integration of AI into daily enterprise operations.

However, the path to AI maturity is fraught with critical challenges. Data governance and security remain paramount concerns, as organizations must safeguard sensitive information while maintaining compliance with evolving regulations. Measuring return on investment (ROI) for AI initiatives continues to be complex, requiring sophisticated metrics that capture both quantitative and qualitative benefits. Furthermore, change management emerges as a decisive factor—successful AI adoption demands cultural shifts, workforce reskilling, and executive alignment to overcome resistance and embed AI-driven processes deeply.

This comprehensive guide explores the phased approach to scaling enterprise AI from pilot projects to production-grade deployments in 2026. It provides detailed frameworks, technical insights, real-world metrics, and strategic considerations drawn from leading institutions and industry pioneers. Additionally, it includes an in-depth comparison of ChatGPT Enterprise and Claude for Enterprise across diverse use cases, highlights common pitfalls with mitigation strategies, and concludes with actionable next steps for enterprise leaders committed to AI excellence.

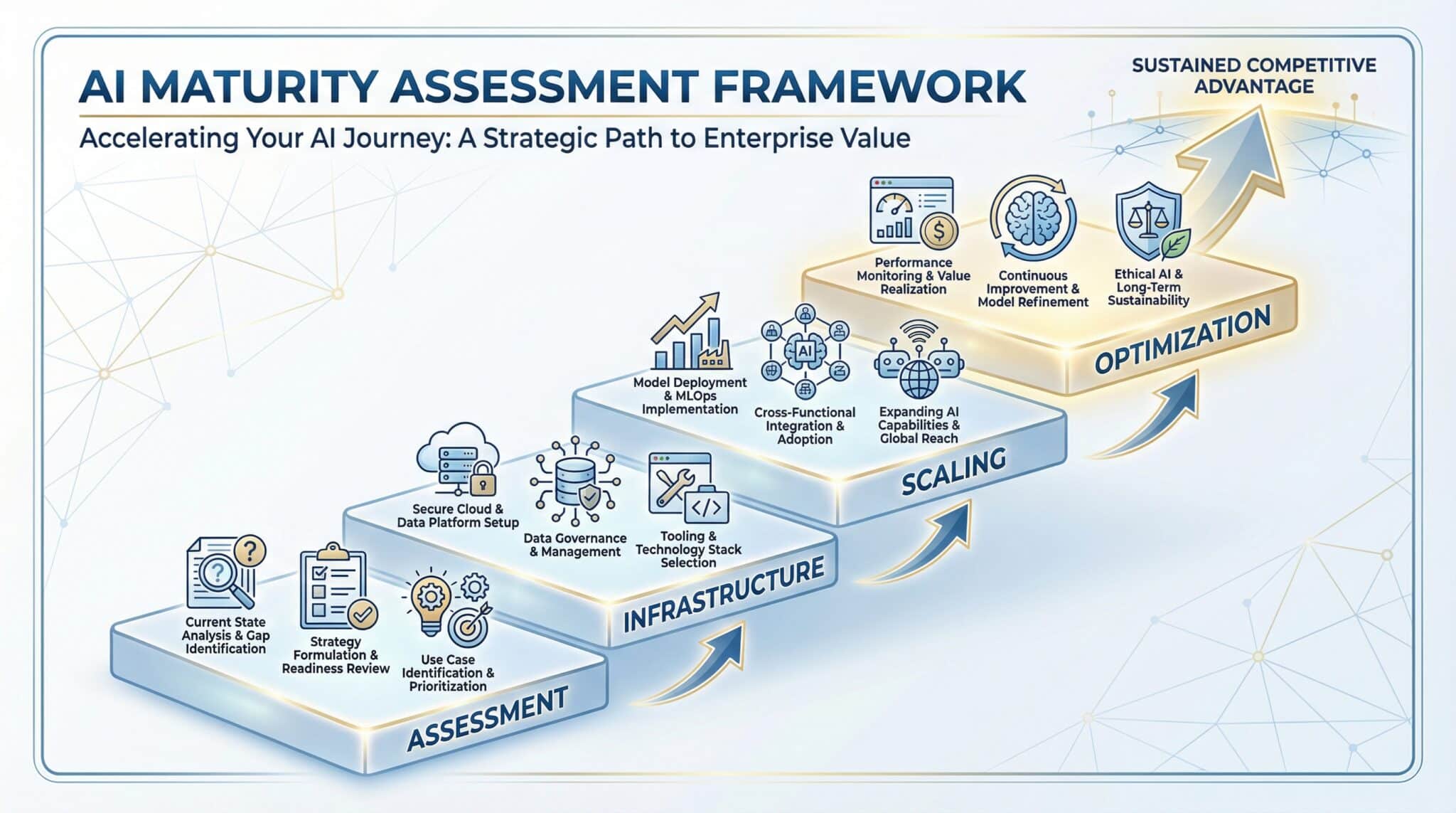

Phase 1: Assessment and Pilot Design with Specific Frameworks

Launching an enterprise AI initiative requires a rigorous assessment phase designed to ground AI ambitions in strategic business realities. The first phase sets the foundation by identifying high-impact use cases, evaluating organizational readiness, and designing pilot projects that serve as proof points for broader adoption. This phase demands a multidisciplinary approach involving business leaders, data scientists, IT architects, and compliance officers.

Strategic Frameworks for AI Assessment

Deloitte’s 2026 report emphasizes a structured framework encompassing three core dimensions: Business Impact Potential, Data Maturity, and Organizational Capability. Each dimension includes granular criteria:

- Business Impact Potential: Evaluate use cases based on revenue uplift, cost reduction, customer experience improvement, and strategic differentiation. For example, automating claims processing in insurance may reduce operational costs by 30%, while personalized marketing can increase conversion rates by 15%.

- Data Maturity: Assess data availability, quality, and accessibility. Enterprises must inventory data assets, identify silos, and evaluate data governance structures. High-quality, well-governed data is a prerequisite for successful AI pilots.

- Organizational Capability: Analyze talent readiness, executive sponsorship, and cultural openness to innovation. A company with strong data literacy and an agile mindset will navigate AI adoption more effectively.

Use Case Prioritization Matrix

Building on this framework, enterprises should apply a prioritization matrix that scores potential AI projects along axes of feasibility and impact. High-feasibility/high-impact use cases become prime candidates for pilots. Examples include:

- Automated customer support chatbots using conversational AI for first-level inquiry triage.

- Generative AI for code generation and review to accelerate software development cycles.

- Predictive maintenance in manufacturing leveraging sensor data and anomaly detection.
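The prioritization matrix above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed scoring method: the 1 to 5 scales, the threshold of 3, the quadrant labels, and the scores assigned to the three example use cases are all assumptions made for the sake of the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UseCase:
    name: str
    feasibility: int  # 1 (hard) to 5 (easy); illustrative scale
    impact: int       # 1 (low) to 5 (high); illustrative scale


def quadrant(case: UseCase, threshold: int = 3) -> str:
    """Place a use case in one of the four quadrants of the matrix."""
    high_feasibility = case.feasibility >= threshold
    high_impact = case.impact >= threshold
    if high_feasibility and high_impact:
        return "pilot candidate"
    if high_impact:
        return "invest in readiness first"
    if high_feasibility:
        return "quick win, low priority"
    return "defer"


def rank(cases: list[UseCase]) -> list[UseCase]:
    """Order use cases by feasibility x impact, highest first."""
    return sorted(cases, key=lambda c: c.feasibility * c.impact, reverse=True)


# Hypothetical scores for the three example use cases listed above.
CASES = [
    UseCase("Support chatbot triage", feasibility=4, impact=4),
    UseCase("Code generation and review", feasibility=5, impact=3),
    UseCase("Predictive maintenance", feasibility=2, impact=5),
]

if __name__ == "__main__":
    for case in rank(CASES):
        print(f"{case.name}: {quadrant(case)}")
```

In practice the scores would come from the assessment workshop rather than being hard-coded, and a team may prefer weighted criteria over a simple product.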

Pilot Design Methodology

The pilot itself should be scoped to deliver measurable outcomes within 3 to 6 months. Key design principles include:

- Defined Success Metrics: KPIs aligned with business goals (e.g., average handle time reduction, accuracy improvement, revenue impact).

- Cross-Functional Teams: Involve stakeholders from IT, business units, compliance, and security to ensure holistic oversight.

- Data Preparation and Integration: Early collaboration with data engineering teams to secure reliable data pipelines and address privacy concerns.

- Technology Evaluation: Pilot with multiple AI platforms if possible, such as ChatGPT Enterprise and Claude for Enterprise, to assess fit for purpose.

Risk Assessment and Compliance Considerations

Enterprises must conduct thorough risk assessments to identify privacy, security, and ethical risks. This includes:

- Data anonymization and encryption standards.

- Regulatory compliance alignment, particularly in heavily regulated industries like finance and healthcare.

- Bias and fairness audits of AI models to prevent reputational damage and legal exposure.

Integrating these considerations into pilot design reduces downstream barriers to scaling.

Case Example: GitLab’s AI Pilot Strategy

In March 2026, GitLab published its approach to converting early AI wins into sustainable competitive advantage. Their strategy emphasizes iterative pilot cycles with rapid feedback loops, embedding AI into developer workflows, and creating reusable AI components. This pragmatic approach aligns well with the frameworks outlined above, demonstrating how pilot design serves as a springboard for enterprise-wide AI adoption.

For a deeper understanding of how to architect enterprise AI initiatives from assessment through execution, consider exploring insights on emerging AI innovations and future trends in our article The Future of AI: Key Innovations and Trends to Watch in March 2026. This can provide valuable context for integrating cutting-edge developments into your AI deployment strategies.

Phase 2: Building the AI Infrastructure Stack with Technical Details

Transitioning from pilot to production requires a robust, scalable AI infrastructure stack capable of supporting diverse workloads and ensuring operational reliability. This phase involves selecting and integrating components across data management, model development, deployment, monitoring, and security.

Core Components of the AI Infrastructure Stack

- Data Lakes and Warehouses: Centralized platforms like Snowflake, Databricks, or Amazon S3 serve as the foundation for data ingestion and storage. Enterprises often adopt a hybrid architecture combining data lakes for unstructured data and data warehouses for structured, analytics-ready data.

- Data Engineering Pipelines: Tools such as Apache Airflow, dbt, and Kafka orchestrate data extraction, transformation, and loading (ETL/ELT), ensuring data quality and timeliness for AI consumption.

- Feature Stores: Platforms like Feast or Tecton provide reusable, versioned feature sets that enable consistent model training and inference.

- Model Development Environments: Interactive environments such as Jupyter notebooks, combined with frameworks and libraries like PyTorch, TensorFlow, or Hugging Face Transformers, facilitate model experimentation and training.

- Model Serving and Orchestration: Infrastructure such as Kubernetes, KServe (formerly KFServing), or Seldon Core allows scalable deployment of AI models as microservices, enabling real-time inference.

- Monitoring and Observability: Tools like Prometheus, Grafana, and specialized AI monitoring platforms track model performance, data drift, and system health.

- Security and Governance Layers: Encryption, access controls, audit logging, and compliance tools ensure data privacy and regulatory adherence.

Technical Considerations for Enterprise-Grade AI

Building the stack requires addressing critical technical challenges:

- Scalability: The infrastructure must handle increasing data volumes and concurrent AI workloads. Cloud-native architectures leveraging autoscaling Kubernetes clusters are industry best practice.

- Latency and Throughput: Real-time use cases such as fraud detection demand low-latency inference pipelines optimized for high throughput.

- Interoperability: Integration with existing enterprise systems (ERP, CRM, BI tools) is essential to embed AI outputs within business processes.

- Model Lifecycle Management: Robust versioning, rollback capabilities, and continuous integration/continuous deployment (CI/CD) pipelines for models ensure agility and reliability.

- Data Privacy: Techniques such as differential privacy, federated learning, and secure multi-party computation are gaining traction to enable AI on sensitive data without compromising privacy.
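To make the data privacy bullet concrete, here is a minimal sketch of the Laplace mechanism, one of the basic building blocks of differential privacy, applied to a counting query. The function names and the choice of a counting query are illustrative assumptions; production systems would normally use a vetted privacy library rather than hand-rolled noise.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_count(values: list[bool], epsilon: float, rng: random.Random) -> float:
    """Release a count with epsilon-differential privacy.

    A counting query has sensitivity 1 (adding or removing one record
    changes the count by at most 1), so the noise scale is 1 / epsilon.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    true_count = sum(values)
    return true_count + laplace_noise(1.0 / epsilon, rng)


if __name__ == "__main__":
    rng = random.Random(7)
    flagged = [True, False, True, True, False]  # hypothetical sensitive flags
    print(private_count(flagged, epsilon=0.5, rng=rng))
```

Smaller values of `epsilon` add more noise and give stronger privacy; the right value is a policy decision, not a technical default.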

Platform Choices: ChatGPT Enterprise and Claude for Enterprise Integration

Among the AI platforms dominating enterprise adoption, ChatGPT Enterprise and Claude for Enterprise offer distinct advantages. Both provide scalable APIs, enterprise-grade security, and integration with collaboration tools. Architecturally, they can be deployed in hybrid cloud configurations, supporting data residency requirements.

Enterprises often build middleware layers that abstract platform-specific APIs, facilitating seamless switching or concurrent use of both platforms depending on use cases. For example, ChatGPT Enterprise is frequently leveraged for customer-facing chatbot solutions due to its superior contextual understanding, while Claude is preferred for internal knowledge management and document summarization tasks.
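A middleware layer of this kind can be sketched as a small routing class over a common interface. Everything named here (`ChatBackend`, `StubBackend`, `Router`, the use-case labels) is hypothetical; the stub stands in for a real vendor adapter so the example runs without network access or SDKs.

```python
from typing import Protocol


class ChatBackend(Protocol):
    """Common interface every vendor adapter is assumed to implement."""

    def complete(self, prompt: str) -> str: ...


class StubBackend:
    """Stand-in for a vendor adapter; a real one would wrap the vendor SDK."""

    def __init__(self, label: str) -> None:
        self.label = label

    def complete(self, prompt: str) -> str:
        return f"[{self.label}] {prompt}"


class Router:
    """Route each request to the backend registered for its use case."""

    def __init__(self, default: ChatBackend) -> None:
        self._default = default
        self._routes: dict[str, ChatBackend] = {}

    def register(self, use_case: str, backend: ChatBackend) -> None:
        self._routes[use_case] = backend

    def complete(self, use_case: str, prompt: str) -> str:
        # Fall back to the default backend for unregistered use cases.
        backend = self._routes.get(use_case, self._default)
        return backend.complete(prompt)


if __name__ == "__main__":
    router = Router(default=StubBackend("platform-a"))
    router.register("summarization", StubBackend("platform-b"))
    print(router.complete("support", "Where is my order?"))
    print(router.complete("summarization", "Summarize the attached policy."))
```

Because application code depends only on `Router.complete`, swapping or adding a platform becomes a change to the registration step rather than to every caller.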

Infrastructure Automation and DevOps

Automation is key to managing complex AI infrastructure. Infrastructure as Code (IaC) tools like Terraform and Ansible codify environment provisioning, while MLOps platforms such as MLflow and Pachyderm automate model training, testing, and deployment cycles. These practices reduce manual errors, accelerate iteration speed, and enhance reproducibility.

Example: Financial Services AI Infrastructure

A major global bank recently built a multi-cloud AI infrastructure stack integrating AWS data lakes with on-premises GPU clusters for model training. They implemented feature stores and automated monitoring pipelines to detect model drift in credit risk scoring models, reducing default rates by 12% within six months of deployment.

For organizations interested in understanding how AI strategies are shaping enterprise growth, our comprehensive review of OpenAI’s 2026 Strategy: Driving Enterprise Growth Through Practical AI Adoption offers valuable insights. This resource provides context on how AI deployment plans align with scalable, practical implementations discussed in this playbook.

Phase 3: Scaling from Pilot to Production with Real Metrics

Scaling AI from pilot projects to full production deployments is arguably the most challenging phase, because teams must overcome technical, operational, and organizational hurdles at the same time. Success depends on establishing reliable processes, expanding infrastructure capacity, and embedding AI outputs into business workflows.

Key Metrics for Scaling Success

Enterprises track several quantitative and qualitative metrics to assess scaling progress:

- Adoption Rate: Percentage of target users actively engaging with AI-powered tools. For instance, a sales AI assistant reaching 70% adoption across the sales team signals strong buy-in.

- Model Accuracy and Reliability: Continuous evaluation of precision, recall, F1 score, and uptime to ensure AI outputs maintain quality at scale.

- Operational Efficiency Gains: Metrics such as reduction in manual processing time, error rates, and customer wait times.

- Business Impact: Revenue uplift, cost savings, or customer satisfaction improvements directly attributable to AI deployment.

- Incident Rates: Tracking system failures, security breaches, or compliance violations to mitigate risks.
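For the accuracy metrics named above, the following sketch computes precision, recall, and F1 for a binary classifier from labeled outcomes. It uses only the standard definitions; the function name and the convention of returning 0.0 when a denominator is zero are choices made for this example.

```python
def classification_metrics(y_true: list[int], y_pred: list[int]) -> dict[str, float]:
    """Compute precision, recall, and F1 for binary labels (1 = positive)."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")

    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)

    # Return 0.0 instead of dividing by zero when a class is never predicted.
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return {"precision": precision, "recall": recall, "f1": f1}


if __name__ == "__main__":
    # Hypothetical labels: 1 = ticket correctly needed escalation.
    actual = [1, 1, 1, 0, 0]
    predicted = [1, 1, 0, 1, 0]
    print(classification_metrics(actual, predicted))
```

Tracking these values per release, rather than once at launch, is what makes them useful as scaling metrics.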

Organizational Scaling Practices

Scaling requires formalizing governance structures, including:

- AI Center of Excellence (CoE): A centralized team responsible for setting standards, managing resources, and fostering best practices.

- Cross-Functional Collaboration: Ensuring alignment between IT, business units, legal, and HR to coordinate efforts and manage change.

- Training and Enablement Programs: Continuous upskilling of employees on AI tools and workflows.

Real-World Scaling Examples

One leading retailer scaled a customer service chatbot pilot using ChatGPT Enterprise from 5,000 monthly interactions to 500,000 within nine months. They implemented real-time monitoring dashboards and automated fallback mechanisms to human agents, reducing average resolution time by 40%. This success was achieved by deploying additional cloud instances and refining conversational models based on user feedback.

Similarly, a technology firm scaled Claude for Enterprise-powered document summarization from an internal pilot supporting 100 users to an enterprise-wide platform serving 10,000 users. They integrated the AI service with Microsoft Teams and Slack, enabling seamless access and driving productivity across departments.

Agentic AI and Autonomous Workflows

The rise of agentic AI in 2026 introduces new scaling paradigms. Autonomous agents capable of executing complex multi-step workflows—such as automated contract review, compliance checks, or supply chain optimization—require orchestration frameworks that manage task dependencies, exception handling, and human override capabilities.

Enterprises are adopting platforms that enable these agentic capabilities, blending generative AI, reinforcement learning, and robotic process automation (RPA). This evolution demands not only technical scaling but also trust-building measures, including transparency, auditability, and explainability.
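The orchestration concerns described above (task dependencies, exception handling, and human override) can be illustrated with a small dependency-ordered runner. This is a sketch under stated assumptions: `run_workflow`, the status strings, and the `approve` callback are hypothetical names, and real agent frameworks add retries, persistence, and audit logging on top of this core loop. It relies on `graphlib`, which is in the standard library from Python 3.9.

```python
from graphlib import TopologicalSorter
from typing import Callable


def run_workflow(
    steps: dict[str, Callable[[], object]],
    depends_on: dict[str, set[str]],
    needs_approval: set[str],
    approve: Callable[[str], bool],
) -> dict[str, str]:
    """Run steps in dependency order and record a status for each one."""
    unknown = {d for deps in depends_on.values() for d in deps} - set(steps)
    if unknown:
        raise ValueError(f"unknown dependencies: {sorted(unknown)}")

    graph = {name: depends_on.get(name, set()) for name in steps}
    status: dict[str, str] = {}
    for name in TopologicalSorter(graph).static_order():
        # Skip a step if any prerequisite did not finish successfully.
        if any(status.get(dep) != "done" for dep in graph[name]):
            status[name] = "skipped"
        # Human override: sensitive steps run only with explicit approval.
        elif name in needs_approval and not approve(name):
            status[name] = "rejected"
        else:
            try:
                steps[name]()
                status[name] = "done"
            except Exception as exc:  # exception handling: record and continue
                status[name] = f"failed: {exc}"
    return status


if __name__ == "__main__":
    result = run_workflow(
        steps={
            "extract_clauses": lambda: None,
            "compliance_check": lambda: None,
            "send_contract": lambda: None,
        },
        depends_on={
            "compliance_check": {"extract_clauses"},
            "send_contract": {"compliance_check"},
        },
        needs_approval={"send_contract"},
        approve=lambda step: False,  # simulate a reviewer declining
    )
    print(result)
```

The returned status map doubles as a simple audit trail, which supports the transparency and auditability goals mentioned above.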

Phase 4: Measuring ROI and Continuous Optimization with KPIs

Measuring ROI from enterprise AI investments extends beyond traditional financial metrics, encompassing operational, strategic, and intangible benefits. Continuous optimization is essential to sustain value as AI models and business environments evolve.

Comprehensive ROI Measurement Framework

To capture the multifaceted impact of AI, organizations employ a balanced scorecard approach incorporating:

- Financial KPIs: Cost savings, revenue growth, profit margins, and reduction in capital expenditures.

- Operational KPIs: Cycle times, error rates, throughput, and utilization rates of AI systems.

- Customer KPIs: Net promoter scores (NPS), customer satisfaction ratings, and retention rates influenced by AI-powered personalization or service.

- Employee KPIs: Productivity improvements, employee satisfaction, and reduction in repetitive tasks.

- Innovation KPIs: Number of new AI-driven products or services launched, patents filed, and time-to-market improvements.

Continuous Monitoring and Feedback Loops

AI models degrade over time due to data drift, changing market conditions, or evolving user behavior. Enterprises implement continuous monitoring systems that track key performance indicators and trigger retraining or model updates automatically.
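One common way to detect data drift is the Population Stability Index (PSI), which compares the distribution of a feature in production against its training baseline. The sketch below is illustrative: the ten equal-width bins, the small floor for empty bins, and the 0.2 retraining threshold (a widely quoted rule of thumb, not a universal standard) are all assumptions.

```python
import math


def psi(expected: list[float], actual: list[float], bins: int = 10) -> float:
    """Population Stability Index between a baseline and a new sample."""
    if not expected or not actual:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(expected), max(expected)
    if hi == lo:
        raise ValueError("baseline has no spread; cannot build bins")
    width = (hi - lo) / bins

    def shares(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Clamp out-of-range values into the first or last bin.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def needs_retraining(expected: list[float], actual: list[float],
                     threshold: float = 0.2) -> bool:
    """Flag drift when PSI exceeds the (assumed) threshold."""
    return psi(expected, actual) > threshold


if __name__ == "__main__":
    baseline = [i / 100 for i in range(100)]       # hypothetical training data
    shifted = [0.5 + i / 200 for i in range(100)]  # hypothetical production data
    print(needs_retraining(baseline, baseline), needs_retraining(baseline, shifted))
```

A check like this would typically run on a schedule per feature, with the flag feeding an alert or a retraining pipeline rather than retraining blindly.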

Feedback loops involving end-users and business stakeholders enable iterative improvements. For example, integrating user satisfaction surveys post-interaction with a chatbot can inform conversational model refinements.

Attribution Challenges and Advanced Analytics

Attributing business outcomes solely to AI initiatives is complex due to intertwined factors. Advanced analytics techniques such as multi-touch attribution, controlled experiments (A/B testing), and econometric modeling help isolate AI’s contribution.
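As an example of the controlled-experiment approach, the sketch below runs a two-proportion z-test on conversion counts from a control group (without the AI feature) and a treatment group (with it). The function name and the sample numbers are hypothetical, and the normal approximation assumes reasonably large groups.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(
    success_a: int, n_a: int, success_b: int, n_b: int
) -> tuple[float, float]:
    """Return (z statistic, two-sided p-value) for rate B versus rate A."""
    p_a = success_a / n_a
    p_b = success_b / n_b
    # Pooled rate under the null hypothesis that both groups convert equally.
    pooled = (success_a + success_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("standard error is zero; rates are degenerate")
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


if __name__ == "__main__":
    # Hypothetical experiment: 1,000 users per arm.
    z, p = two_proportion_z_test(success_a=200, n_a=1000, success_b=260, n_b=1000)
    print(f"z = {z:.2f}, p = {p:.4f}")
```

A small p-value suggests the difference is unlikely to be chance, but it does not rule out confounders such as seasonality, which is why randomized assignment matters.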

Example: ROI Metrics from a Healthcare AI Deployment

A healthcare provider implemented an AI-powered diagnostic assistant that improved diagnostic accuracy by 18% and reduced patient wait times by 25%. Financially, the initiative yielded a 20% reduction in unnecessary tests, saving $5 million annually. Continuous model monitoring detected shifts in patient demographics, prompting timely retraining and maintaining clinical efficacy.

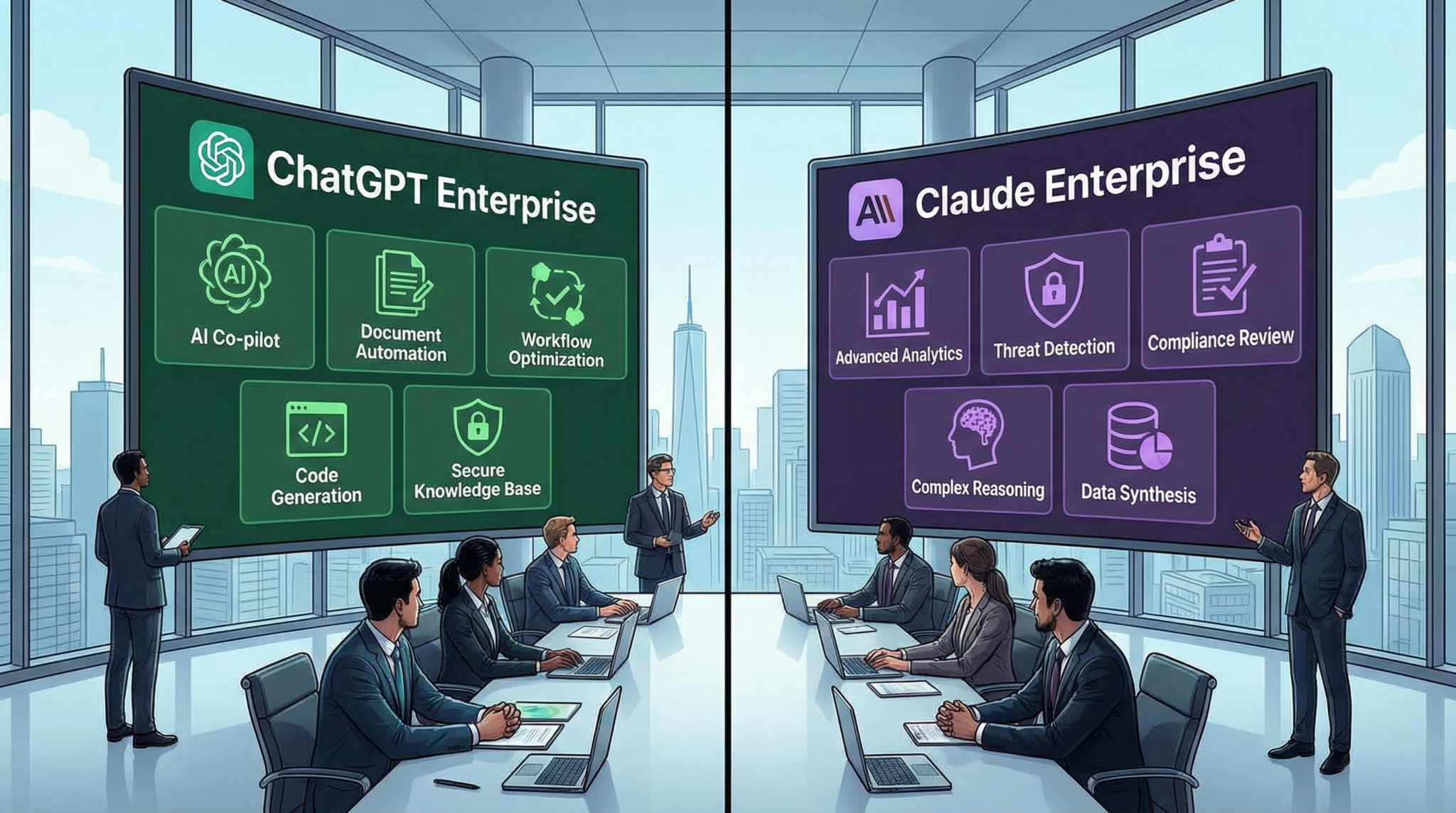

Detailed Comparison: ChatGPT Enterprise vs Claude for Enterprise for Different Use Cases

ChatGPT Enterprise and Claude for Enterprise are the two dominant conversational AI platforms in 2026, each tailored to distinct enterprise needs. Understanding their comparative strengths helps enterprises align platform selection with specific use cases.

Core Architectural Differences

- ChatGPT Enterprise: Built on OpenAI’s GPT-4 architecture with enhanced context windows, fine-tuning capabilities, and seamless Microsoft 365 integration. It supports extensive API access and offers robust data privacy controls compliant with SOC 2 and HIPAA standards.

- Claude for Enterprise: Developed by Anthropic, Claude emphasizes safety and interpretability, leveraging constitutional AI principles to reduce harmful outputs. It features advanced summarization, multi-document comprehension, and strong multi-turn dialogue coherence.

Use Case Suitability

| Use Case | ChatGPT Enterprise | Claude for Enterprise |
|---|---|---|
| Customer Support Chatbots | Excels with natural language understanding, contextual memory, and integration with CRM systems. Supports automated escalation workflows. | Effective for compliance-sensitive industries due to safer output generation and stronger content filters but slightly less flexible in complex context retention. |
| Internal Knowledge Management | Strong integration with Microsoft 365 enables seamless document search and generation. Good for interactive knowledge bases. | Superior summarization and synthesis of large document sets, ideal for research teams and legal departments requiring precise information extraction. |
| Software Development Assistance | Advanced code generation and debugging assistance powered by OpenAI Codex integration. Supports multiple programming languages and frameworks. | Offers reliable code explanations and best practice suggestions with a focus on safety, reducing risk of generating insecure code snippets. |
| Regulatory Compliance Monitoring | Good for generating compliance reports and interpreting regulations, relying on large-scale knowledge bases. | Excels in maintaining strict adherence to ethical guidelines and audit trails, preferred for highly regulated sectors like finance. |
| Autonomous Workflow Agents | Supports complex agentic AI workflows with flexible API orchestration and integration with RPA tools. | Focused on safe execution and interpretability of agent decisions, providing better transparency for autonomous tasks. |

Pricing and Licensing

ChatGPT Enterprise offers usage-based pricing with volume discounts and enterprise support tiers, whereas Claude for Enterprise employs a subscription model including dedicated instance hosting and enhanced privacy guarantees. Cost-efficiency varies based on usage patterns and integration complexity.

Security and Compliance

Both platforms meet rigorous security standards, but Claude’s design prioritizes minimizing hallucinations and harmful content, making it preferable for organizations with low risk tolerance. ChatGPT Enterprise’s Microsoft ecosystem integration provides advantages for organizations deeply embedded in Microsoft environments.

Common Pitfalls and How to Avoid Them with Examples

Despite the promise of AI, many enterprises stumble during scaling. Recognizing common pitfalls and proactively mitigating them is critical.

Pitfall 1: Inadequate Data Governance

Many AI projects fail because data is siloed, poorly documented, or lacks quality controls. For example, a retail chain’s AI pilot faltered when inconsistent product data led to inaccurate demand forecasts, costing millions in inventory mismanagement. The remedy is to establish a unified data governance framework, appoint data stewards, and invest in data quality tools.

Pitfall 2: Overlooking Change Management

Resistance from employees unaccustomed to AI-driven processes can derail initiatives. A financial services firm faced user pushback due to insufficient training and communication, resulting in low adoption of AI-based underwriting tools. Effective change management includes transparent communication, training programs, and involving end-users early in design.

Pitfall 3: Neglecting Security and Compliance

Ignoring security risks can lead to breaches. A healthcare provider experienced a data leak when AI systems accessed unencrypted PHI due to misconfigured permissions. Enterprises must implement rigorous access controls, encryption, and regular audits.

Pitfall 4: Failure to Define Clear KPIs

Without measurable objectives, AI projects drift without demonstrating value. A logistics company launched an AI-powered route optimization pilot without clear KPIs, leading to ambiguous results and executive skepticism. Establishing KPIs aligned to business goals is essential from the outset.

Pitfall 5: Underestimating Model Monitoring Needs

AI models degrade over time. A telecom operator’s churn prediction model lost accuracy as customer behavior changed, causing revenue losses. Continuous monitoring and retraining protocols prevent such failures.

Conclusion: Actionable Next Steps for Scaling Enterprise AI in 2026

The journey from AI pilot to production at enterprise scale in 2026 is complex but navigable with a strategic, disciplined approach. Organizations must prioritize comprehensive assessment frameworks, robust infrastructure architectures, and rigorous scaling practices to realize AI’s transformative promise.

Leaders should begin by conducting detailed readiness assessments and prioritizing high-impact, feasible use cases. Investing in modular, scalable AI infrastructure with integrated security and governance layers lays a solid foundation. Scaling efforts require formalized governance, cross-functional collaboration, and continuous measurement of adoption, performance, and ROI. Finally, choosing the right AI platforms—balancing capabilities of ChatGPT Enterprise and Claude for Enterprise—tailored to organizational needs is critical to sustained success.

By anticipating common pitfalls and embedding continuous optimization practices, enterprises can close the widening gap between AI leaders and laggards, translating AI ambition into lasting competitive advantage in this defining technological era.

For a comprehensive understanding of how enterprise AI strategies are evolving to support scalable deployment, explore our analysis of OpenAI’s 2026 Strategy: Driving Enterprise Growth Through Practical AI Adoption. This insight provides valuable context for implementing AI solutions effectively from pilot phases to full production.

Author: Markos Symeonides

Frequently Asked Questions

What are the main AI platforms in 2026?

In 2026, leading AI platforms include ChatGPT Enterprise, Claude Opus 4.7, Gemini 3, and Codex. These platforms are pivotal in transforming enterprise operations by providing advanced conversational AI capabilities and enabling autonomous workflows.

How do enterprises benefit from AI integration?

Enterprises benefit from AI integration by enhancing operational efficiency, improving decision-making processes, and gaining competitive advantages. AI systems like ChatGPT and Claude streamline workflows, automate routine tasks, and enable data-driven strategies, leading to significant productivity gains.

What challenges do enterprises face with AI scaling?

Enterprises face challenges such as data governance, security, and the need for strategic alignment when scaling AI. Overcoming these requires robust infrastructure, clear policies, and a focus on integrating AI into core business processes to ensure successful deployment and operation.

What is agentic AI, and why is it important?

Agentic AI refers to systems capable of autonomous task execution and adaptation without constant human oversight. It is crucial because it allows enterprises to automate complex workflows, enhance decision-making, and respond dynamically to changing business environments, thus maximizing AI's potential.

How does prompt engineering enhance AI outcomes?

Prompt engineering enhances AI outcomes by structuring inputs to guide AI responses effectively. Techniques like chain-of-thought prompting, structured outputs, and system/developer prompts ensure that AI systems deliver accurate, relevant, and actionable insights tailored to specific enterprise needs.

Why is AI maturity critical for enterprises?

AI maturity is critical because it determines an enterprise's ability to leverage AI for strategic advantage. Mature AI integration leads to better resource allocation, improved customer engagement, and sustained competitive positioning, essential for thriving in the digital economy.