ChatGPT’s Trusted Contact Feature: How OpenAI Is Redefining AI Safety for Vulnerable Users in 2026

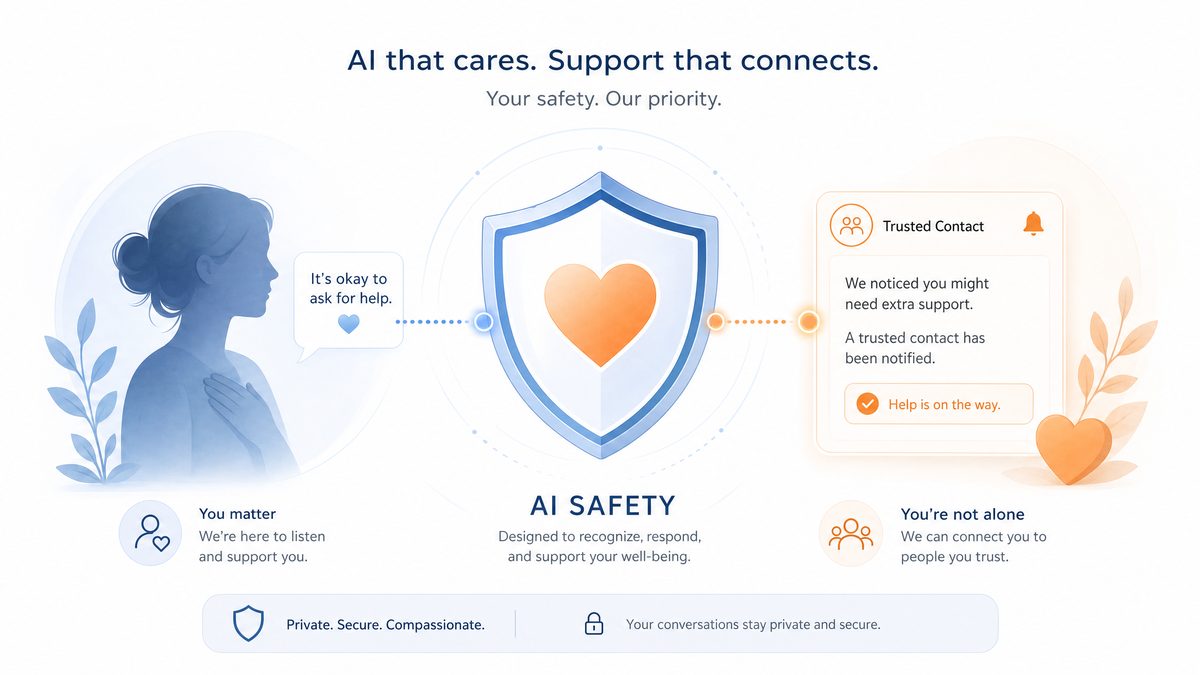

As AI technologies become increasingly integrated into daily life, user safety, particularly for vulnerable individuals, is paramount. In 2026, OpenAI took a significant step toward this goal by launching the Trusted Contact feature in ChatGPT. The feature changes how an AI system can proactively support mental health and crisis intervention while respecting privacy and autonomy.

This article explores the Trusted Contact feature in detail: its technical foundation, user experience, privacy considerations, ethical implications, and its place within the evolving landscape of AI safety. We also compare Trusted Contact with similar measures on other platforms, share expert insights, and discuss what the future holds for AI-mediated wellbeing.

OpenAI Introduces Trusted Contact: A New Safety Milestone for ChatGPT

On May 6, 2026, OpenAI unveiled Trusted Contact, an optional safety feature integrated into ChatGPT designed to identify and respond to signs of severe psychological distress, including self-harm risk and suicidal ideation. When the AI detects credible indications of acute crisis during user interactions, it confidentially notifies a pre-designated individual—the Trusted Contact—so they can provide timely support.

Unlike traditional safety approaches that rely solely on content moderation or crisis resource suggestions, Trusted Contact pairs AI-driven risk assessment with human-centered intervention pathways. This hybrid approach is intended to enable a rapid, compassionate response while safeguarding user privacy.

By embedding mental health support directly into AI conversations, OpenAI sets a new industry standard for responsible AI deployment that prioritizes user wellbeing beyond mere compliance.

How Trusted Contact Works: The Technical Underpinnings

The Trusted Contact system combines cutting-edge natural language processing (NLP), behavioral analytics, and rigorous privacy protocols to deliver sensitive, accurate crisis detection and notification.

Advanced Natural Language Understanding and Risk Detection

At its core, Trusted Contact employs a proprietary AI model developed collaboratively by OpenAI’s research teams, clinical psychologists, and data scientists. This model uses transformer-based neural networks fine-tuned on diverse datasets comprising anonymized clinical transcripts, crisis-related language samples, and social media expressions of mental health conditions.

The model’s multi-dimensional analysis includes:

- Lexical & Semantic Indicators: Detecting explicit language related to self-harm, suicide, and hopelessness.

- Sentiment & Emotional Tone: Quantifying negativity, despair, or agitation contextualized within dialogue.

- Conversational Patterns: Recognizing repetitive or escalating distress signals over time.

- Behavioral Anomalies: Identifying deviations from a user’s typical interaction style signaling crisis onset.

- Contextual Modulation: Distinguishing metaphorical or humorous usage from genuine distress to reduce false positives.

- Multilingual & Cultural Adaptation: Analyzing language nuances across cultures for accurate interpretation.

This sophisticated approach allows for high sensitivity and precision in risk assessment, addressing the complexity of human emotional expression beyond keyword detection.
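
As a rough illustration of how several signal types could feed a single score, the sketch below uses a weighted average with a discount for figurative language. The signal names, the weights, and the `combine_signals` function are all hypothetical. OpenAI has not published the model's internals, and a production system would more plausibly use a learned model than hand-set weights.

```python
from dataclasses import dataclass

# Hypothetical weights for illustration only; they sum to 1.0.
WEIGHTS = {
    "lexical": 0.35,
    "sentiment": 0.20,
    "pattern": 0.25,
    "anomaly": 0.20,
}


@dataclass
class Signals:
    lexical: float    # explicit self-harm or hopelessness language, 0..1
    sentiment: float  # negativity or despair in context, 0..1
    pattern: float    # repeated or escalating distress, 0..1
    anomaly: float    # deviation from the user's usual style, 0..1
    figurative: bool = False  # text was judged metaphorical or humorous


def combine_signals(s: Signals, figurative_discount: float = 0.5) -> float:
    """Return a risk score in [0, 1] as a weighted average of the signals."""
    values = {
        "lexical": s.lexical,
        "sentiment": s.sentiment,
        "pattern": s.pattern,
        "anomaly": s.anomaly,
    }
    for name, value in values.items():
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1, got {value}")
    score = sum(WEIGHTS[name] * value for name, value in values.items())
    # Assumed handling of contextual modulation: scale the score down
    # when the language was flagged as figurative.
    if s.figurative:
        score *= figurative_discount
    return score
```

The point of the design is that no single indicator decides the outcome: a strong lexical match on a message flagged as a joke scores lower than a moderate match that arrives alongside an escalating pattern.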

Real-Time Monitoring and Dynamic Thresholding

During active ChatGPT sessions, user inputs are processed through the risk detection pipeline in real time. The model generates a risk score compared against dynamically calibrated thresholds informed by ongoing machine learning feedback and anonymized outcome validation.

Notifications trigger only when persistent or escalating distress signals exceed the set thresholds, which prevents unnecessary alerts. Users can also adjust sensitivity settings, and cooldown periods between notifications balance responsiveness with comfort and trust.
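
The gating logic described here, a threshold combined with persistence and a cooldown, can be sketched as a small stateful class. This is a minimal sketch under stated assumptions: the `AlertGate` name, the default threshold, the window of three consecutive scores, and the 24-hour cooldown are invented for illustration and are not documented values.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class AlertGate:
    threshold: float = 0.8       # hypothetical risk-score cutoff
    window: int = 3              # consecutive high scores required
    cooldown_s: float = 86_400   # minimum seconds between alerts
    _recent: deque = field(default_factory=deque, init=False)
    _last_alert: Optional[float] = field(default=None, init=False)

    def observe(self, score: float, now: float) -> bool:
        """Record a score; return True if a notification should fire."""
        self._recent.append(score)
        if len(self._recent) > self.window:
            self._recent.popleft()

        # Persistence: every score in a full window is at or above threshold.
        persistent = len(self._recent) == self.window and all(
            x >= self.threshold for x in self._recent
        )
        # Cooldown: suppress alerts that follow a recent one too closely.
        in_cooldown = (
            self._last_alert is not None
            and now - self._last_alert < self.cooldown_s
        )
        if persistent and not in_cooldown:
            self._last_alert = now
            self._recent.clear()
            return True
        return False
```

With these settings a single alarming message does not fire an alert, and a user-facing sensitivity setting could map onto `threshold` or `window`.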

Privacy-First Notification System

When a high-risk event is detected, the Trusted Contact feature initiates a secure notification to the designated individual without sharing detailed conversation histories. The alert includes a concise summary indicating potential crisis and practical guidance on providing support or seeking professional help.

All notifications are end-to-end encrypted and comply with data protection regulations such as GDPR and HIPAA-equivalent standards. The system uses secure channels and stores no sensitive data permanently without explicit user consent. Users have access to audit logs detailing notification history to maintain transparency.
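
To make the data-minimization idea concrete, here is a hedged sketch of an alert payload that carries a short summary and guidance but never the conversation itself. The `TrustedContactAlert` fields, the `build_alert` helper, and the guidance wording are assumptions made for this example; the real alert format is not public. Encryption and delivery are out of scope here.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone

# Hypothetical guidance text; the real alert wording is not published.
DEFAULT_GUIDANCE = (
    "Reach out and check in directly.",
    "Listen without judgment.",
    "Encourage contact with a professional or a local crisis line.",
)


@dataclass(frozen=True)
class TrustedContactAlert:
    alert_id: str
    created_at: str   # ISO 8601 timestamp in UTC
    summary: str
    guidance: tuple


def build_alert(session: dict, alert_id: str, now: datetime) -> TrustedContactAlert:
    """Build an alert from a session record without copying any messages."""
    name = session.get("display_name", "Someone")
    # session["messages"] is deliberately never read.
    return TrustedContactAlert(
        alert_id=alert_id,
        created_at=now.astimezone(timezone.utc).isoformat(),
        summary=f"{name} listed you as a Trusted Contact and may need support.",
        guidance=DEFAULT_GUIDANCE,
    )


def to_json(alert: TrustedContactAlert) -> str:
    """Serialize the alert; only the four declared fields can appear."""
    return json.dumps(asdict(alert))
```

Because the payload type has no field for message text, conversation content cannot leak into an alert by accident; adding one would require an explicit schema change.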

Continuous Model Improvement and Ethical Oversight

OpenAI maintains a rigorous feedback loop involving anonymized data review, clinical expert input, and algorithmic retraining to enhance accuracy and cultural sensitivity. Dedicated ethics committees oversee deployment to mitigate bias, reduce false positives, and prevent stigmatization.

Explainability features enable users and clinicians to understand risk assessment rationales, fostering trust and facilitating collaborative care.

Seamless Integration with ChatGPT Architecture

Trusted Contact functions as a modular extension within ChatGPT’s backend, activated only upon user opt-in. The system ensures data minimization, strict access controls, encrypted communications, and isolated processing environments to uphold confidentiality and robustness without impacting performance.

Setting Up Trusted Contact: User Experience and Flow

OpenAI has prioritized an intuitive, respectful setup process to empower users while explaining the feature’s function and privacy safeguards clearly.

Step 1: Informed Opt-In Consent

Users receive detailed, transparent information about how Trusted Contact works, including trigger scenarios, data handling, benefits, and limitations. Interactive FAQs and illustrative scenarios help clarify expectations, ensuring voluntary and informed participation.

Step 2: Designation of Trusted Contact

Users provide contact details—such as emails or phone numbers—of trusted individuals like close friends, family, or mental health professionals. Guidance encourages selecting empathetic contacts while considering consent and emotional impact.

Step 3: Contact Verification and Consent

The designated Trusted Contact receives a secure verification request outlining their role and responsibilities, ensuring willing participation before notifications commence.

Step 4: Activation and Management

Once verified, the feature is activated. Users can manage contacts anytime via ChatGPT settings, including adding, replacing, or temporarily suspending notifications. Reminders prompt periodic review to keep safety nets effective and relevant.
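
The four steps above behave like a small state machine, which the sketch below models. The state names, event names, and the handling of a declined invitation are assumptions made for illustration; the article only establishes that notifications begin after both the user and the contact have agreed.

```python
from enum import Enum, auto


class State(Enum):
    NOT_ENROLLED = auto()
    USER_CONSENTED = auto()    # Step 1 done
    CONTACT_PENDING = auto()   # Step 2 done, waiting on Step 3
    ACTIVE = auto()            # Step 4: notifications may be sent
    SUSPENDED = auto()         # user paused notifications


# Hypothetical event names; each maps (state, event) to the next state.
TRANSITIONS = {
    (State.NOT_ENROLLED, "opt_in"): State.USER_CONSENTED,
    (State.USER_CONSENTED, "designate_contact"): State.CONTACT_PENDING,
    (State.CONTACT_PENDING, "contact_accepts"): State.ACTIVE,
    (State.CONTACT_PENDING, "contact_declines"): State.USER_CONSENTED,
    (State.ACTIVE, "suspend"): State.SUSPENDED,
    (State.SUSPENDED, "resume"): State.ACTIVE,
    (State.ACTIVE, "replace_contact"): State.CONTACT_PENDING,
}


def step(state: State, event: str) -> State:
    """Apply one event; opting out is allowed from any state."""
    if event == "opt_out":
        return State.NOT_ENROLLED
    try:
        return TRANSITIONS[(state, event)]
    except KeyError:
        raise ValueError(f"{event!r} is not valid in state {state.name}") from None


def notifications_enabled(state: State) -> bool:
    """Alerts can only be sent once both parties have consented."""
    return state is State.ACTIVE
```

Replacing a contact sends the flow back to `CONTACT_PENDING`, so a new contact must verify before any alert can reach them.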

Practical User Tips

- Choose Contacts Thoughtfully: Prioritize individuals who can respond supportively and promptly.

- Discuss the Feature: Inform Trusted Contacts about their role to align expectations.

- Review Regularly: Update contact info and notification preferences as circumstances change.

- Consider Professionals: Include therapists or crisis counselors if personal support networks are limited.

Accessibility and Localization

The setup process supports multiple languages and complies with WCAG 2.1 accessibility standards, ensuring usability across diverse users globally. Features like screen reader compatibility, keyboard navigation, and culturally sensitive messaging promote inclusive adoption.

Privacy and Consent Considerations

Trusted Contact handles sensitive mental health data, demanding a privacy-first framework that respects user and contact autonomy.

Explicit Dual Consent Model

Activation requires clear, informed consent from both the user and their Trusted Contact, ensuring mutual agreement and ethical data sharing.

Minimal Data Sharing and Purpose Limitation

Notifications exclude detailed conversation content, limiting data to essential alert information and follow-up resources. Data retention policies mandate prompt deletion of transient metadata.

Robust Data Security Measures

Encryption in transit and at rest (TLS 1.3, AES-256), secure hardware modules, role-based access controls, multi-factor authentication, and ongoing security audits safeguard data integrity and confidentiality.

Regulatory Compliance

| Regulation | Key Requirements | Trusted Contact Compliance Measures |
|---|---|---|
| General Data Protection Regulation (GDPR) | User consent, data minimization, right to erasure | Explicit opt-in/out, minimal data sharing, easy contact management |
| Health Insurance Portability and Accountability Act (HIPAA) (US) | Protection of PHI, audit controls | Encrypted communication, audit logging, limited PHI exposure |
| California Consumer Privacy Act (CCPA) | User rights to access and delete personal information | Accessible privacy dashboards, data deletion support |

Transparency and User Empowerment

Users can access detailed policy documentation and privacy dashboards to review or revoke permissions. Audit trails provide insights into notification history, fostering trust.

Independent Audit and Oversight

Third-party audits and quarterly transparency reports disclose usage statistics, false positive rates, and demographic reach, ensuring accountability and continuous improvement.
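
For readers unfamiliar with the metrics such a report might contain, the sketch below shows how a false positive rate, precision, and recall are derived from outcome counts. The `alert_metrics` function is hypothetical, and any counts passed to it are illustrative inputs, not figures from OpenAI.

```python
from typing import Optional


def alert_metrics(tp: int, fp: int, tn: int, fn: int) -> dict:
    """Compute basic alerting metrics from confusion-matrix counts.

    tp: alerts sent when a real crisis was present
    fp: alerts sent when no crisis was present
    tn: no alert, and no crisis
    fn: no alert, but a crisis was present
    """

    def ratio(num: int, den: int) -> Optional[float]:
        # Return None rather than dividing by zero on empty categories.
        return num / den if den else None

    return {
        "false_positive_rate": ratio(fp, fp + tn),  # share of non-crises alerted
        "precision": ratio(tp, tp + fp),            # share of alerts that were real
        "recall": ratio(tp, tp + fn),               # share of crises caught
    }
```

A low false positive rate can still coexist with modest precision when genuine crises are rare, which is why a transparency report is more informative when it publishes both.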

The Broader Context of AI Safety in 2026

As AI systems become deeply embedded in personal and professional domains, integrating proactive safety mechanisms has become essential.

Evolution from Reactive to Proactive Safety

Early AI safety efforts centered on content filtering and bias reduction. Today, features like Trusted Contact illustrate a shift toward active mental health risk detection and human-centered intervention, raising ethical and technical standards.

Industry Trends and Best Practices

- Automated Content Moderation to filter harmful content.

- Human-in-the-Loop Review for nuanced assessments.

- Crisis Resource Integration embedded in responses.

- Proactive Risk Detection leveraging AI analytics.

- Personalized Safety Features tailored to user needs.

Trusted Contact exemplifies the proactive, personalized approach emerging as best practice.

Alignment with Global AI Governance

The feature aligns with frameworks such as the Partnership on AI’s 2025 Safety and Wellbeing Framework and the OECD AI Principles, which emphasize human-centered values, transparency, and security. This alignment is meant to ease regulatory acceptance worldwide.

Scaling Challenges

- Cultural and Linguistic Diversity: Adapting models for accurate detection across languages and cultures.

- Resource Constraints: Ensuring availability of trained responders globally.

- Technological Infrastructure: Maintaining secure, low-latency processing at scale.

- Legal Variability: Navigating differing national privacy and mental health laws.

OpenAI actively invests in research and partnerships to overcome these challenges.

Looking ahead, advances such as the improved GPT-5.5 default model promise greater reliability and contextual understanding, further strengthening AI safety.

Comparing Trusted Contact to Other Platforms’ Safety Measures

Trusted Contact’s user-centric, AI-driven real-time crisis detection and secure third-party notification distinguish it from safety features on major digital platforms. Below is a comparative overview:

| Platform | Detection Method | Type of Intervention | Third-Party Notification | Personalization Level | Privacy Controls |
|---|---|---|---|---|---|
| OpenAI Trusted Contact | AI-driven real-time semantic risk detection | Secure notification to user-designated trusted individual | Yes, pre-designated contacts notified | High – User selects contacts and manages settings | End-to-end encryption, dual consent |
| Facebook/Instagram | Content flagging by users and AI moderation | Automated prompts and crisis resource links | No third-party notification | Low – Standardized prompts to all flagged users | Content moderation policies, limited user control |
|  | Keyword detection and user reports | Automated crisis prompts and helpline access | No third-party notification | Low – Uniform intervention approach | Privacy policies, no personal contact involvement |
| Discord | Community moderation and automated bots | Resource provision and moderation assistance | No third-party notification | Moderate – Community-driven alerts | Community guidelines and privacy policies |

Trusted Contact’s unique combination of AI precision, user control, and privacy safeguards provides a superior model for crisis intervention embedded within conversational AI.

Expert Opinions on AI-Mediated Mental Health Support

Clinical Psychologist Perspective

Dr. Emily Chen, clinical psychologist specializing in digital interventions, states:

“The Trusted Contact feature is a significant innovation in early crisis detection. Timely notification to a trusted individual bridges the gap between AI identification and effective human support. Precise alerts paired with clear guidance for contacts maximize positive outcomes while minimizing distress.”

AI Ethics Viewpoint

Dr. Marcus Feldman, AI ethicist, comments:

“Balancing user autonomy with safety is delicate. OpenAI’s dual-consent and transparency mechanisms foster trust and ethical deployment. Ongoing audits and community engagement are essential to manage risks like privacy breaches and false positives.”

Empirical Evidence Supporting AI-Assisted Crisis Detection

Meta-analyses published in 2025 demonstrate that AI-mediated crisis detection combined with human follow-up reduces emergency hospitalizations by approximately 18%. Personalized AI interventions involving trusted contacts increase user engagement with mental health resources, validating the Trusted Contact approach.

Ethical Considerations and Potential Concerns

False Positives and User Anxiety

Though highly accurate, detection algorithms can generate false positives, potentially causing anxiety or strained relationships. OpenAI addresses this through conservative thresholds, continuous refinement, and user controls to disable or adjust notifications. Communications clarify that alerts are not clinical diagnoses.

Privacy and Data Security Risks

Handling sensitive mental health data heightens privacy risks. OpenAI’s encryption, access controls, and regulatory compliance mitigate these risks, supported by ongoing security audits and rapid incident response protocols.

Dependence on Automated Judgment

AI detection supplements but does not replace professional clinical judgment. OpenAI emphasizes that Trusted Contact alerts are informational and encourages follow-up with qualified health providers.

Equity and Access Challenges

Users who lack supportive contacts may benefit less from Trusted Contact. OpenAI is exploring integrations with professional crisis services and peer support to broaden access, particularly for underserved populations.

Ongoing Community Engagement and Oversight

OpenAI maintains active dialogue with mental health experts, ethicists, and user communities, ensuring ethical concerns are addressed proactively through transparent reporting and collaborative policy development.

The Future of AI Safety Features: Toward Proactive, Compassionate AI

Multi-Contact Support Networks

Future updates aim to enable users to designate multiple Trusted Contacts, creating dynamic support networks with escalation protocols to enhance reliability and reduce individual burden.
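
One way an escalation protocol across several contacts might work is sketched below: notify contacts in the user's chosen order and move to the next one only if the previous alert goes unacknowledged. Since this is a planned feature with no published design, everything here, including the `next_contact` function and the 15-minute acknowledgment timeout, is a hypothetical illustration.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass
class Attempt:
    contact: str
    sent_at: float              # seconds since some reference time
    acknowledged: bool = False


def next_contact(
    contacts: Sequence[str],
    attempts: Sequence[Attempt],
    now: float,
    ack_timeout_s: float = 900.0,  # assumed 15-minute wait per contact
) -> Optional[str]:
    """Return the next contact to notify, or None if no action is due."""
    if any(a.acknowledged for a in attempts):
        return None  # someone responded; stop escalating
    if not attempts:
        return contacts[0] if contacts else None
    if now - attempts[-1].sent_at < ack_timeout_s:
        return None  # still waiting on the most recent contact
    tried = {a.contact for a in attempts}
    for contact in contacts:
        if contact not in tried:
            return contact
    return None  # every contact has already been tried
```

Contacting people one at a time limits how widely a sensitive alert spreads, at the cost of a slower response when the first contact is unavailable.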

Contextualized, Adaptive Interventions

Advances in contextual AI will allow tailored notifications based on severity, cultural context, and user preferences, complemented by empathetic AI conversational agents providing first-line support.

Integration with Emergency and Professional Services

With consent, AI systems may directly interface with crisis helplines and emergency responders, facilitating timely, informed intervention while maintaining stringent privacy safeguards.

Collaborative Multidisciplinary Development

OpenAI’s roadmap emphasizes partnerships among psychologists, ethicists, behavioral scientists, and technologists to ensure safety features evolve in alignment with human values and scientific evidence.

Broader Mental Health Tools

Building on Trusted Contact, future AI capabilities may include mood tracking, personalized coping strategies, and integration with biosensors to enrich understanding of user wellbeing and support proactive mental health management.

Implications for ChatGPT Users

Trusted Contact enhances user safety by introducing personalized, proactive crisis support while preserving autonomy and privacy.

- Early Crisis Detection: Identifies distress signals that may otherwise be missed.

- Trusted Human Intervention: Engages meaningful support networks.

- User Control: Full management over contacts, notifications, and settings.

- Complementary Resources: Integrates with existing ChatGPT crisis prompts and external links.

Recommendations for Users

- Incorporate Trusted Contact into a holistic mental health strategy when using AI.

- Select and communicate clearly with trusted individuals.

- Review and update settings regularly.

- Utilize professional mental health resources alongside the feature.

Impact on User Experience

Designed for minimal intrusion, Trusted Contact activates only in high