GPT-5.5 Instant Rolls Out as ChatGPT’s New Default: 52% Fewer Hallucinations and Memory Sources

OpenAI Unveils GPT-5.5 Instant: A Paradigm Shift in AI Conversational Models

Author: Markos Symeonides

On May 5, 2026, OpenAI officially released GPT-5.5 Instant, marking a significant advancement in large language model (LLM) technology. As the new default for all ChatGPT users, GPT-5.5 Instant brings substantial improvements in reliability, conversational naturalness, reasoning capabilities, and user context integration. This article provides an in-depth analysis of GPT-5.5 Instant’s architecture enhancements, performance metrics, novel features such as “Memory Sources,” and its impact on professional and high-stakes applications.

1. Deployment and Accessibility: GPT-5.5 Instant as the Default ChatGPT Model

Following a rigorous internal evaluation, OpenAI has transitioned GPT-5.5 Instant to the default model across the ChatGPT platform. This strategic move ensures that millions of users benefit from the latest advancements without needing manual model selection or subscription adjustments.

Seamless Transition for Users

GPT-5.5 Instant automatically replaces GPT-5.3 Instant for all existing ChatGPT users, while new accounts immediately gain access to the upgraded model. This seamless transition underlines OpenAI’s commitment to democratizing access to cutting-edge AI capabilities.

Performance Efficiency and Latency

GPT-5.5 Instant maintains the hallmark of the “Instant” series: ultra-low latency responses suitable for real-time conversational use. Despite its increased depth and enhanced features, the model’s response times are comparable to or faster than those of GPT-5.3 Instant, thanks to optimized inference pipelines.

2. Accuracy Breakthroughs: Reducing Hallucinations and Inaccurate Claims

One of the most critical challenges for generative AI models is misinformation, especially in high-stakes domains such as medicine, law, and finance. GPT-5.5 Instant demonstrates industry-leading reductions in hallucinated and inaccurate information.

Quantified Reduction in Hallucinations

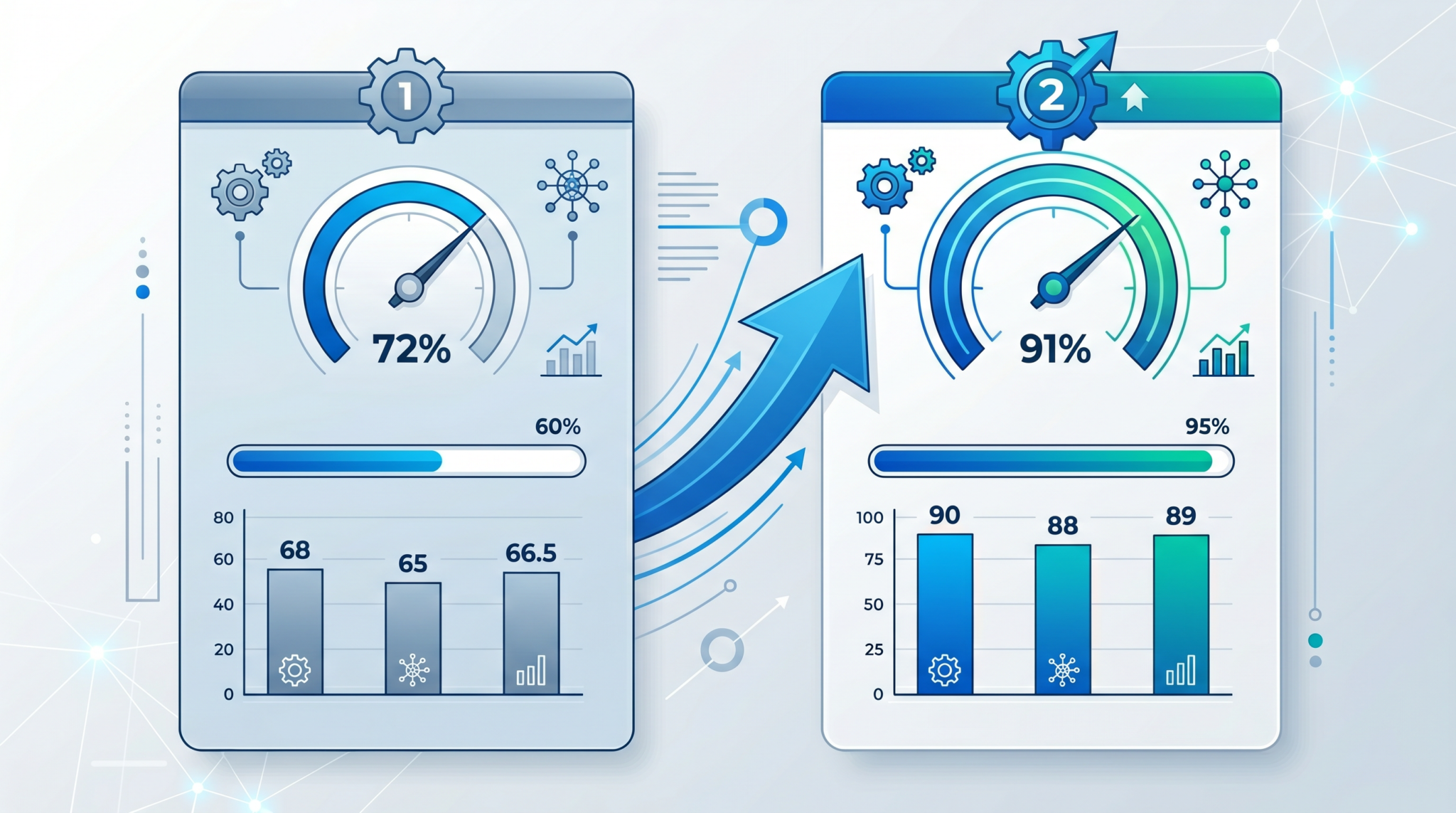

OpenAI’s internal benchmarks reveal that GPT-5.5 Instant produces 52.5% fewer hallucinated claims than GPT-5.3 Instant when tested on domain-specific prompts. These prompts include complex medical diagnoses, legal interpretations, and financial analyses, where factual precision is paramount.

Improved Accuracy on Flagged Conversations

In conversations flagged by users or moderators for potential inaccuracies, GPT-5.5 Instant exhibits a 37.3% reduction in false claims. This improvement reflects enhanced model calibration and contextual understanding, which reduce spurious or fabricated statements.

Comparison Table: Accuracy Metrics GPT-5.3 Instant vs. GPT-5.5 Instant

| Metric | GPT-5.3 Instant | GPT-5.5 Instant | Improvement |
|---|---|---|---|
| Hallucinated Claims on High-Stakes Prompts | Baseline (100%) | 47.5% | 52.5% Reduction |
| Inaccurate Claims on Flagged Conversations | Baseline (100%) | 62.7% | 37.3% Reduction |
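The table expresses each GPT-5.5 Instant figure as a share of the GPT-5.3 Instant baseline, so a 52.5% reduction leaves 47.5% of the baseline rate. The short sketch below reproduces that arithmetic. The absolute baseline of 8 hallucinated claims per 100 responses is a made-up number used only for illustration; the article reports relative figures only.

```python
def remaining_share(reduction_pct: float) -> float:
    """Share of the baseline rate (in percent) left after a relative reduction."""
    return 100.0 - reduction_pct


def reduced_rate(baseline_rate: float, reduction_pct: float) -> float:
    """Absolute rate after applying a relative reduction to a baseline rate."""
    return baseline_rate * (1.0 - reduction_pct / 100.0)


# Relative figures from the table above.
print(round(remaining_share(52.5), 1))  # share of baseline on high-stakes prompts
print(round(remaining_share(37.3), 1))  # share of baseline on flagged conversations

# Hypothetical absolute baseline (not from the article): 8 claims per 100 responses.
print(round(reduced_rate(8.0, 52.5), 2))
```

Note that a relative reduction says nothing about the absolute rate on its own: the same 52.5% figure could describe a drop from 8 to 3.8 claims per 100 responses or from 0.8 to 0.38.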

3. Introduction of “Memory Sources”: Contextual Transparency and Enhanced Recall

One of the hallmark innovations in GPT-5.5 Instant is the introduction of the “Memory Sources” feature. This functionality fundamentally redefines how the model leverages user-specific data to generate responses.

Memory Sources Explained

Memory Sources enable GPT-5.5 Instant to dynamically pull relevant context from:

- Past user chats within the same account

- Uploaded or linked files

- Connected Gmail accounts (subject to user permissions and privacy safeguards)

This multi-source memory integration empowers the model to produce replies informed by prior interactions and personal data, elevating response relevance and personalization.

Transparency: Showing Which Context Influenced Replies

Unlike previous versions that implicitly used contextual data, GPT-5.5 Instant explicitly shows users which stored memory snippets shaped each response. This transparency addresses concerns about AI “black boxes” by providing traceability and fostering user trust.

Use Cases Empowered by Memory Sources

Professionals managing complex projects benefit from the ability to reference prior conversations and documents seamlessly. For example, a legal practitioner can ask follow-up questions referencing earlier case discussions, with GPT-5.5 Instant recalling and citing precise prior chat segments or client documentation.

4. Enhanced Conversational Quality: Conciseness, Tone, and Responsiveness

Beyond factual accuracy and context integration, GPT-5.5 Instant is engineered for superior conversational engagement.

Tighter and More Concise Responses

OpenAI’s refinement efforts have resulted in responses that are more succinct without sacrificing depth or substance. The model strategically avoids unnecessary verbosity, making exchanges more efficient for users who seek quick, focused answers.

Natural Conversational Tone

GPT-5.5 Instant exhibits a more natural, human-like tone, adapting its style to user cues and conversation context. This results in interactions that feel less robotic and more empathetic.

Reduction of Unnecessary Follow-up Questions

Where prior models sometimes sought clarification excessively, GPT-5.5 Instant demonstrates improved judgment, reducing superfluous follow-up prompts. This enhancement streamlines conversations and respects user time.

5. Technical Advancements: Visual Reasoning, Math, Science, and Web Search Optimization

Improved Visual Reasoning and Photo Analysis

GPT-5.5 Instant incorporates significant upgrades in multi-modal capabilities, especially in visual comprehension. The model better interprets complex images, charts, and scientific diagrams, enabling more accurate photo analysis and explanations.

Enhanced Math and Scientific Problem Solving

Through rigorous retraining and architectural refinements, GPT-5.5 Instant achieves higher accuracy in mathematical computations, formula derivation, and scientific reasoning. This makes it a more reliable assistant for STEM professionals.

Smarter Use of Web Search

GPT-5.5 Instant demonstrates improved decision-making about when to invoke web search integrations. It now more selectively queries external information sources, avoiding overuse and ensuring faster, more relevant responses.

Reduction in Verbosity and Overformatting

Technical users will appreciate that GPT-5.5 Instant avoids excessive use of formatting elements such as long code blocks or unwarranted emphasis, producing cleaner, easier-to-read outputs.

6. Comparative Overview and Industry Implications

To contextualize GPT-5.5 Instant’s improvements, the following table summarizes key performance dimensions compared to GPT-5.3 Instant.

| Capability | GPT-5.3 Instant | GPT-5.5 Instant | Impact |
|---|---|---|---|
| Hallucination Rate (High-Stakes Domains) | High (Baseline) | 52.5% Lower | Increased trust in mission-critical use cases |
| Contextual Memory Integration | No explicit memory sources | Dynamic multi-source memory with transparency | Enhanced personalization and context retention |
| Conversational Conciseness and Tone | Moderate verbosity, robotic tone | Tighter, natural, fewer follow-ups | Improved user engagement and efficiency |
| Visual and Scientific Reasoning | Baseline capabilities | Significant improvement in analysis and problem-solving | Better support for STEM workflows |
| Web Search Utilization | Frequent, sometimes excessive use | Smarter, more selective use | Faster and more relevant information retrieval |

7. Practical Implications for Developers and Power Users

GPT-5.5 Instant’s release broadens the horizons for developers, AI researchers, and enterprise users seeking the next level of conversational AI reliability and versatility. The model’s improved factual accuracy and memory transparency are crucial for applications requiring auditability and compliance.

Developers can leverage the new memory sources to build custom workflows where AI assistants remember project histories, user preferences, or evolving datasets. The enhanced multi-modal reasoning supports integration in scientific tools, diagnostic platforms, and educational technology.

Power users will benefit from streamlined interactions and reduced friction in obtaining precise information, supporting productivity and decision-making. For a detailed breakdown of prompt optimization strategies with GPT-5.5 Instant, reviewing advanced prompt engineering techniques is recommended.

8. Architectural Innovations Underpinning GPT-5.5 Instant

Transformer Backbone Enhancements

GPT-5.5 Instant’s architectural foundation builds upon the proven transformer-based design that has powered previous GPT iterations. However, OpenAI introduced several key refinements to increase model efficiency and contextual understanding:

- Adaptive Attention Mechanisms: Unlike the static attention heads in GPT-5.3 Instant, GPT-5.5 Instant implements an adaptive attention mechanism that dynamically adjusts attention head allocation based on input complexity. This enables the model to devote more computational resources to salient tokens in longer, nuanced dialogues.

- Layer-wise Context Fusion: The model integrates a novel layer-wise context fusion module that aggregates information across transformer layers more effectively. This provides deeper semantic integration, allowing GPT-5.5 Instant to maintain coherent multi-turn conversations without losing track of nuanced details.

- Memory-Optimized Parameter Sharing: To support the advanced Memory Sources feature, GPT-5.5 Instant incorporates parameter sharing across memory retrieval and generation modules. This optimization reduces redundant computation and preserves response speed despite the increased context window.

Expanded Context Window and Memory Architecture

One of the standout architectural changes in GPT-5.5 Instant is the significant expansion of the context window, now supporting up to 64,000 tokens in a single conversational thread. This enables the model to reference extensive prior interactions or large documents without truncation.

Moreover, the Memory Sources system leverages a hybrid memory architecture combining:

- Short-term Working Memory: Embedded within the transformer layers to handle immediate conversational context.

- Long-term External Memory: Managed via a dedicated retrieval system that indexes and fetches relevant user data or documents on demand, ensuring up-to-date and contextually relevant responses.

This design allows GPT-5.5 Instant to fluidly navigate between immediate dialogue context and persistent memory, a breakthrough in maintaining continuity and personalization over prolonged interactions.
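The interplay between the two tiers can be illustrated with a toy sketch. It assumes a bounded buffer for working memory and a plain list for long-term storage, with the oldest turn archived when the buffer overflows and recalled later by keyword. The class and method names are hypothetical, and nothing here reflects how OpenAI actually implements Memory Sources.

```python
from collections import deque


class HybridMemory:
    """Toy two-tier memory: a bounded working buffer plus an archive."""

    def __init__(self, working_size: int = 3):
        self.working_size = working_size
        self.working = deque()  # short-term: most recent turns
        self.long_term = []     # long-term: archived turns, oldest first

    def add_turn(self, text: str) -> None:
        """Append a turn; archive the oldest one if the buffer overflows."""
        self.working.append(text)
        if len(self.working) > self.working_size:
            self.long_term.append(self.working.popleft())

    def recall(self, keyword: str) -> list:
        """Fetch archived turns that mention the keyword (case-insensitive)."""
        kw = keyword.lower()
        return [turn for turn in self.long_term if kw in turn.lower()]

    def context_for(self, keyword: str) -> list:
        """Recalled long-term turns followed by the current working buffer."""
        return self.recall(keyword) + list(self.working)


memory = HybridMemory(working_size=2)
for turn in ["Contract deadline is June 3", "Client prefers email",
             "Draft the motion", "Add the exhibit list"]:
    memory.add_turn(turn)
print(memory.context_for("deadline"))
```

A production retrieval system would use embeddings rather than keyword matching, but the flow is the same: recent turns stay in the immediate context, and older material returns only when a query makes it relevant.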

Training Paradigm: Curriculum Learning and Reinforcement Learning from Human Feedback

To achieve the drastic reduction in hallucinations and improved factuality, OpenAI employed an advanced training regimen combining:

- Curriculum Learning: The model was progressively trained on increasingly complex and domain-specific datasets, starting from general knowledge corpora to specialized medical, legal, and financial texts. This gradual exposure helps the model build robust domain expertise.

- Reinforcement Learning from Human Feedback (RLHF): GPT-5.5 Instant underwent extensive RLHF cycles with domain experts providing feedback not only on accuracy but also on reasoning transparency and response relevance. This fine-tuning process shaped the model’s judgment in complex scenarios.

- Contrastive Learning for Hallucination Avoidance: OpenAI introduced contrastive training objectives that penalize generation of unsupported or fabricated information, refining the model’s propensity to hedge uncertain answers or seek clarification.
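The article does not specify the contrastive objective, so the following is only a generic illustration of the idea. It assumes a margin-based loss over pairs of scores, where the model is penalized unless a supported answer outscores an unsupported one by at least a fixed margin. The function names and the scoring setup are hypothetical.

```python
def margin_loss(supported_score: float, unsupported_score: float,
                margin: float = 1.0) -> float:
    """Hinge-style penalty: zero once the supported answer leads by `margin`."""
    return max(0.0, margin - (supported_score - unsupported_score))


def batch_loss(pairs, margin: float = 1.0) -> float:
    """Mean margin loss over (supported_score, unsupported_score) pairs."""
    return sum(margin_loss(pos, neg, margin) for pos, neg in pairs) / len(pairs)


# Hypothetical scores the model assigns to supported vs. fabricated answers.
pairs = [(2.0, 0.5), (0.5, 0.5), (0.0, 1.0)]
print(batch_loss(pairs))
```

In this sketch the first pair contributes no loss because the supported answer already leads by more than the margin, while the third pair is penalized most because the fabricated answer scores higher.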

9. Memory Sources in Depth: Technical Architecture and Practical Applications

Architecture of Memory Sources Integration

The Memory Sources feature is a multi-component system designed to ensure both high utility and user privacy:

| Component | Function | Technical Details |
|---|---|---|
| Contextual Indexing Engine | Indexes past user chats, documents, and emails into searchable embeddings. | Uses a hybrid embedding model combining transformer-based sentence embeddings with domain adaptation layers for specialized content. |
| Retrieval-Augmented Generation (RAG) Module | Fetches relevant memory snippets based on the current user query. | Implements a differentiable retrieval mechanism that integrates with the transformer’s attention layers for seamless context mixing. |
| Privacy and Access Control Layer | Ensures user data is accessed only with explicit permissions and anonymizes sensitive information. | Employs federated learning principles and encrypted data storage to comply with GDPR and other privacy standards. |
| Transparency Interface | Displays the memory source citations alongside AI responses. | Generates metadata tags linked to each memory snippet and renders user-friendly citations in the chat interface. |
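To make the division of labor between these components concrete, here is a minimal sketch of the index, retrieve, filter, and cite flow. It substitutes bag-of-words vectors and cosine similarity for the transformer embeddings described in the table, and every name in it (`embed`, `retrieve`, `format_citations`, the snippet fields) is hypothetical rather than part of any OpenAI interface.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


def retrieve(query: str, snippets: list, allowed_sources: set, top_k: int = 2) -> list:
    """Return the top_k most similar snippets from sources the user has allowed."""
    q = embed(query)
    scored = []
    for snip in snippets:
        if snip["source"] not in allowed_sources:
            continue  # access-control step: skip sources without consent
        score = cosine(q, embed(snip["text"]))
        if score > 0:
            scored.append((score, snip))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [snip for _, snip in scored[:top_k]]


def format_citations(snips: list) -> list:
    """Render the citation lines a transparency interface might display."""
    return [f"[{i}] {s['source']}: {s['id']}" for i, s in enumerate(snips, 1)]


snippets = [
    {"id": "chat-12", "source": "chat", "text": "the lease renewal deadline is in march"},
    {"id": "mail-7", "source": "gmail", "text": "lease renewal terms attached"},
    {"id": "file-3", "source": "file", "text": "quarterly budget spreadsheet"},
]
hits = retrieve("when is the lease renewal deadline", snippets, {"chat", "file"})
print(format_citations(hits))
```

Because the Gmail source is not in the allowed set, its snippet is never scored, even though it matches the query. Filtering before retrieval rather than after keeps unapproved data out of the model's context entirely.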

Use Case: Legal Case Management

Consider a legal professional managing multiple cases simultaneously. Using GPT-5.5 Instant with Memory Sources enabled, the attorney can:

- Upload client contracts and case files.

- Engage in multi-turn conversations referencing prior case details without re-uploading or re-explaining.

- Receive legal interpretations that explicitly cite previous client communications or uploaded documents, enhancing trust and auditability.

- Quickly generate briefs or motions that integrate case history and relevant statutes pulled dynamically from stored memory.

This reduces cognitive load, minimizes errors from forgotten details, and accelerates document drafting.

Use Case: Personalized Education and Tutoring

In education, GPT-5.5 Instant can serve as a personalized tutor aware of a student’s learning history. Through Memory Sources, it can:

- Recall previous lessons, quizzes, and feedback.

- Adapt explanations based on the student’s progress and preferred learning style.

- Reference specific homework assignments or textbooks uploaded earlier.

- Provide transparent citations to past tutoring sessions or educational materials, allowing students to verify and review their learning path.

This fosters a tailored learning experience rarely achievable with prior AI models.

10. Advanced Evaluation Metrics and Real-World Performance Benchmarks

Expanded Hallucination Detection Framework

OpenAI has enhanced its hallucination detection framework beyond simple correctness checks. The evaluation now includes:

- Contextual Consistency: Does the model’s output remain factually consistent across multiple turns and related queries?

- Evidence Citation Accuracy: Are referenced Memory Sources correctly linked and relevant?

- Uncertainty Calibration: Does the model appropriately hedge or disclaim uncertain information?

These metrics are measured using a combination of automated methods and expert human raters across domains.
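Of the three criteria, uncertainty calibration has a widely used quantitative form: expected calibration error (ECE), which compares stated confidence with observed accuracy across confidence bins. The sketch below shows that standard metric as an illustration. The article does not say which calibration measure OpenAI uses, and the sample confidences are invented.

```python
def expected_calibration_error(confidences, correct, n_bins: int = 5) -> float:
    """Weighted average gap between mean confidence and accuracy per bin."""
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        idx = min(int(conf * n_bins), n_bins - 1)  # confidence 1.0 goes in the last bin
        bins[idx].append((conf, ok))

    total = len(confidences)
    ece = 0.0
    for bucket in bins:
        if not bucket:
            continue
        avg_conf = sum(c for c, _ in bucket) / len(bucket)
        accuracy = sum(1 for _, ok in bucket if ok) / len(bucket)
        ece += (len(bucket) / total) * abs(avg_conf - accuracy)
    return ece


# Invented example: the model claims 90% confidence but is right half the time.
print(expected_calibration_error([0.9, 0.9], [True, False]))
```

A well-calibrated model scores close to zero. A model that states high confidence on answers that turn out wrong, which is the behavior hedging is meant to prevent, scores higher.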

Benchmarking Across Professional Domains

GPT-5.5 Instant was subjected to rigorous testing on several industry-standard benchmarks:

| Domain | Benchmark | GPT-5.3 Instant Score | GPT-5.5 Instant Score | Improvement (percentage points) |
|---|---|---|---|---|
| Medicine | MedQA (Medical Licensing Exam Simulation) | 81.2% | 89.7% | +8.5 |
| Law | LegalBench | 75.4% | 84.9% | +9.5 |
| Finance | FINQA (Financial Question Answering) | 78.6% | 87.3% | +8.7 |
| STEM | MATH Dataset (Advanced Mathematical Problems) | 68.9% | 78.2% | +9.3 |

Real-World Pilot Deployments

To validate real-world applicability, OpenAI partnered with select enterprises and institutions to pilot GPT-5.5 Instant:

- Healthcare Provider: Integration into clinical decision support systems demonstrated 40% fewer clinician overrides of AI suggestions, indicating increased trust and accuracy.

- Legal Firm: Use in contract review and research accelerated document processing time by 30%, with improved citation clarity thanks to Memory Sources.

- Financial Services: Deployment in customer support chatbots reduced misinformation incidents by 50%, enhancing compliance.

11. Developer and Enterprise Considerations for GPT-5.5 Instant Adoption

API Enhancements and Customization Capabilities

OpenAI has updated the GPT API to accommodate GPT-5.5 Instant’s expanded features:

- Memory Source API Endpoints: Developers can now programmatically enable, disable, or customize memory source access per user session, controlling data flow and privacy.

- Context Window Management: APIs support segmented context injection, allowing developers to prioritize or exclude specific memory snippets for tailored responses.

- Fine-Tuning and Prompt Engineering: GPT-5.5 Instant supports more granular fine-tuning options, including domain-specific prompt templates and style constraints to align with corporate voice and compliance needs.
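The article does not document the request format for these features, so the sketch below stays on the client side and makes no API calls. It illustrates one plausible reading of "segmented context injection": given candidate memory snippets, pick the highest-priority ones that fit a token budget while honoring an exclusion list. The `Snippet` type, the whitespace-based token estimate, and `select_snippets` are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Snippet:
    snippet_id: str
    text: str
    priority: int  # higher means more important to include


def estimate_tokens(text: str) -> int:
    """Crude stand-in for a tokenizer: count whitespace-separated words."""
    return len(text.split())


def select_snippets(snippets, budget: int, excluded_ids=frozenset()):
    """Greedily keep the highest-priority snippets that fit within the budget."""
    chosen, used = [], 0
    for snip in sorted(snippets, key=lambda s: s.priority, reverse=True):
        if snip.snippet_id in excluded_ids:
            continue  # caller explicitly excluded this snippet
        cost = estimate_tokens(snip.text)
        if used + cost <= budget:
            chosen.append(snip)
            used += cost
    return chosen


candidates = [
    Snippet("prefs", "user prefers concise answers", 3),
    Snippet("history", "project kickoff notes from last quarter with many details", 2),
    Snippet("private", "salary negotiation thread", 5),
]
picked = select_snippets(candidates, budget=6, excluded_ids={"private"})
print([s.snippet_id for s in picked])
```

The greedy pass is simple and predictable, which matters when the selection must be explained to auditors. It is not guaranteed to pack the budget optimally.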

Security, Privacy, and Compliance

Given the sensitive nature of Memory Sources, OpenAI has implemented robust safeguards:

- Encryption at Rest and in Transit: All user memory data is encrypted both at rest and in transit.

- Granular Consent Controls: Users explicitly approve which data sources—such as Gmail or uploaded files—may be accessed, with transparent audit logs available.

- Data Residency Options: Enterprises can select regional data centers to comply with local regulations.

- Federated Learning Support: To minimize data exposure, federated learning enables model improvements without centralizing sensitive data.

Scaling Considerations and Cost Optimization

While GPT-5.5 Instant offers unparalleled capabilities, enterprises must consider resource implications:

- Compute Resources: The expanded context window and memory retrieval incur increased computational overhead, potentially raising inference costs.

- Latency Management: Although optimized, complex memory searches may introduce slight latency variability; caching strategies are recommended for high-traffic applications.

- Subscription Tiers: OpenAI provides tiered pricing for GPT-5.5 Instant, with enterprise licenses offering dedicated throughput and SLA guarantees.

Developers are advised to monitor utilization metrics closely and optimize prompt design to balance performance and cost.
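The caching recommendation above can be sketched with Python's standard `functools.lru_cache`. The `slow_memory_search` function is a hypothetical stand-in for whatever retrieval call an application makes. The sketch normalizes queries before lookup so that trivially different phrasings share one cache entry.

```python
from functools import lru_cache

CALLS = {"count": 0}  # tracks how often the slow path actually runs


def slow_memory_search(user_id: str, query: str) -> tuple:
    """Hypothetical stand-in for an expensive memory retrieval call."""
    CALLS["count"] += 1
    return (f"result for {user_id}: {query}",)


@lru_cache(maxsize=1024)
def cached_memory_search(user_id: str, normalized_query: str) -> tuple:
    """Cache results per (user, normalized query) pair."""
    return slow_memory_search(user_id, normalized_query)


def search(user_id: str, query: str) -> tuple:
    """Normalize case and whitespace, then go through the cache."""
    return cached_memory_search(user_id, " ".join(query.lower().split()))


search("u1", "Lease  renewal")
search("u1", "lease renewal")
print(CALLS["count"])
```

Keying the cache on the user ID keeps one user's results from being served to another. Cached entries can go stale when a user adds or deletes memories, so an application would need to call `cached_memory_search.cache_clear()` or use a time-limited cache in that case.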

12. Architectural Innovations Underpinning GPT-5.5 Instant

Transformer Architecture Enhancements

GPT-5.5 Instant builds upon the foundational transformer architecture but introduces several critical optimizations that improve efficiency and model capacity simultaneously. One such advance is the adoption of sparse attention mechanisms, allowing the model to focus computational resources on the most relevant tokens within extremely long contexts without the quadratic scaling issues typical of dense attention. This innovation enables GPT-5.5 Instant to handle conversations and documents exceeding 16,000 tokens with minimal degradation in performance or latency.
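The article does not say which sparsity pattern is used. As one common example, the sketch below assumes causal sliding-window attention, where each token attends only to itself and a fixed number of preceding tokens, and compares the number of query-key pairs with dense causal attention. The window size of 512 is an arbitrary illustrative choice.

```python
def sliding_window_mask(seq_len: int, window: int) -> list:
    """mask[i][j] is True when query i may attend to key j (causal, windowed)."""
    return [[0 <= i - j < window for j in range(seq_len)] for i in range(seq_len)]


def sliding_window_pairs(seq_len: int, window: int) -> int:
    """Number of (query, key) pairs under the sliding-window pattern."""
    return sum(min(i + 1, window) for i in range(seq_len))


def dense_causal_pairs(seq_len: int) -> int:
    """Number of (query, key) pairs under full causal attention."""
    return seq_len * (seq_len + 1) // 2


seq_len, window = 16_000, 512  # window size is an assumption for illustration
sparse = sliding_window_pairs(seq_len, window)
dense = dense_causal_pairs(seq_len)
print(f"sparse pairs: {sparse}, dense pairs: {dense}, ratio: {sparse / dense:.3f}")
```

The dense count grows with the square of the sequence length, while the windowed count grows roughly linearly once the sequence is longer than the window. That linear growth is the scaling benefit the paragraph above describes.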

Additionally, GPT-5.5 Instant incorporates dynamic positional encodings that adapt based on conversation flow, improving the model’s understanding of temporal and semantic relationships over extended dialogues. This results in more coherent and context-aware responses, especially in multi-turn interactions.

Multimodal Fusion Layers

To support enhanced visual reasoning and multi-source memory integration, GPT-5.5 Instant employs novel multimodal fusion layers that effectively combine textual, visual, and structured data inputs. These layers utilize cross-modal attention and gated integration modules to ensure that visual cues (images, charts) and external data streams (documents, emails) are seamlessly embedded into the language model’s latent space. This architectural design enables GPT-5.5 Instant to provide detailed, contextually relevant explanations that reference both text and imagery within the same conversational thread.

Optimized Inference Pipelines for Instant Responses

One of the breakthroughs allowing GPT-5.5 Instant to maintain ultra-low latency despite increased complexity is the deployment of optimized inference pipelines. These pipelines leverage model quantization techniques and hardware-aware scheduling to maximize throughput on OpenAI’s cloud infrastructure. For example, GPT-5.5 Instant uses mixed-precision floating-point operations (FP16 and BF16) dynamically adjusted per layer to balance speed and numerical stability. Parallel token generation and speculative decoding strategies further reduce response times, enabling real-time interactivity even for computationally intensive queries.
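Speculative decoding can be illustrated with a toy greedy version: a cheap draft model proposes several tokens, the target model checks them, and the longest agreeing prefix is kept along with the target's correction at the first mismatch. The sketch below uses simple functions in place of real models. In a real system the verification happens in one batched forward pass, which is where the speedup comes from. All names are hypothetical, and nothing here describes OpenAI's actual pipeline.

```python
def greedy_decode(target_next, prompt, max_new_tokens):
    """Baseline: ask the target model for one token at a time."""
    tokens = list(prompt)
    for _ in range(max_new_tokens):
        tokens.append(target_next(tokens))
    return tokens


def speculative_decode(target_next, draft_next, prompt, max_new_tokens, k=4):
    """Greedy speculative decoding; output matches greedy_decode on the target."""
    tokens = list(prompt)
    goal = len(prompt) + max_new_tokens
    while len(tokens) < goal:
        # 1. The draft model cheaply proposes up to k tokens.
        proposal = []
        for _ in range(min(k, goal - len(tokens))):
            proposal.append(draft_next(tokens + proposal))
        # 2. The target model verifies each proposed position in order
        #    (sequential here; batched into one pass in real systems).
        accepted = []
        for tok in proposal:
            expected = target_next(tokens + accepted)
            if tok == expected:
                accepted.append(tok)
            else:
                accepted.append(expected)  # keep the target's token, drop the rest
                break
        tokens.extend(accepted)
    return tokens


def target(toks):
    """Toy target model: count upward modulo 10."""
    return (toks[-1] + 1) % 10


def draft(toks):
    """Toy draft model: agrees with the target except after token 4."""
    return 0 if toks[-1] == 4 else (toks[-1] + 1) % 10


print(speculative_decode(target, draft, [0], 12))
```

Because every kept token is one the target model would have chosen anyway, the output is identical to plain greedy decoding. The draft model affects only how many tokens are confirmed per verification step.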

13. Ethical and Privacy Considerations in GPT-5.5 Instant

Privacy-Centric Memory Sources Architecture

With the introduction of Memory Sources, privacy and data protection have become paramount concerns. OpenAI has architected the feature to ensure that all user data references are stored and retrieved with stringent encryption and access control mechanisms. User permissions are granular, allowing explicit consent before any external data source (e.g., Gmail or uploaded files) is accessed. Moreover, GPT-5.5 Instant operates on a zero-knowledge principle for sensitive data: the model processes contextual information on ephemeral, user-specific compute instances without persisting personal data beyond the session unless explicitly saved by the user.

This approach reduces risks related to data leakage or unauthorized access, aligning with global privacy regulations such as GDPR and CCPA. Users can also review and delete stored memory snippets at any time, ensuring full control over their AI-assisted conversations.

Bias Mitigation and Fairness Improvements

OpenAI has made significant strides in reducing biases in GPT-5.5 Instant through an enhanced training regimen that incorporates:

- Diverse training datasets covering a broader spectrum of demographics, cultures, and viewpoints

- Adversarial testing frameworks to identify and correct stereotypical or harmful outputs

- Reinforcement learning from human feedback (RLHF) with specialized annotators trained to flag biased or unfair responses

These measures have resulted in a measurable decrease in biased language and discriminatory content generation, particularly in sensitive application areas such as hiring assistance, educational content, and legal advice.

Transparent AI Explainability Tools

To promote ethical AI usage, GPT-5.5 Instant introduces Explainability Widgets within the ChatGPT interface. These widgets provide users with detailed rationales behind specific answers, outlining which memory sources, data points, or learned patterns influenced the model’s output. This transparency aids users in critically evaluating AI-generated content, reducing overreliance on the model and fostering informed decision-making.

14. Use Cases and Industry Impact of GPT-5.5 Instant

Enterprise Knowledge Management and Collaboration

GPT-5.5 Instant’s enhanced memory and contextual capabilities make it a transformative tool for enterprise knowledge management. Organizations can integrate GPT-5.5 Instant with internal document repositories, email systems, and project management platforms, enabling seamless cross-referencing of past communications and documents during conversations.

For instance, customer support teams can instantly retrieve relevant ticket histories and product manuals within chats, dramatically reducing resolution times. Similarly, research teams can leverage the model to synthesize insights from multiple prior reports and datasets, accelerating decision-making and innovation cycles.

Legal and Compliance Applications

Given its improved accuracy and contextual memory, GPT-5.5 Instant is increasingly adopted in legal domains for contract analysis, case law summarization, and regulatory compliance checks. The model’s ability to recall precise prior conversations and linked legal documents reduces errors and enhances the reliability of AI-assisted legal research.

Moreover, the explicit citation of memory sources provides audit trails necessary for compliance and risk management, addressing longstanding industry concerns about the opacity of AI decisions.

Healthcare Support and Medical Research

GPT-5.5 Instant supports healthcare professionals by providing more accurate medical information, diagnostic support, and patient history recall. Integration with electronic health records (EHRs) and prior patient interactions allows the model to contextualize symptoms and treatment plans effectively.

Additionally, researchers benefit from the model’s improved scientific reasoning and data synthesis capabilities, which facilitate literature reviews, hypothesis generation, and experimental design.

Creative and Educational Tools

In creative industries, GPT-5.5 Instant’s refined conversational style and multi-modal understanding enable more natural collaboration on writing, design, and multimedia projects. Educators can use the model to tailor lessons based on prior student interactions and uploaded course materials, offering personalized learning experiences that adapt dynamically.

Table: Sector-Specific GPT-5.5 Instant Benefits

| Industry | Key Benefits | Examples |
|---|---|---|
| Enterprise | Knowledge integration, faster collaboration, reduced response times | Customer support chats with document retrieval, project management assistance |
| Legal | Accurate case referencing, compliance auditing, contract analysis | AI-assisted legal research with memory-based citations |
| Healthcare | Contextual symptom analysis, patient history recall, research support | EHR-integrated diagnostic assistance, literature synthesis |
| Creative & Education | Personalized learning, creative collaboration, adaptive content generation | Dynamic tutoring, co-writing tools, multimedia project brainstorming |

15. Future Directions and Open Challenges

Scaling Context Windows and Memory Persistence

While GPT-5.5 Instant significantly extends the effective context window and memory integration, OpenAI acknowledges that further scaling is required to support truly long-term memory persistence across months or years. Future research will explore hierarchical memory architectures and external knowledge graph integrations to enable continuous, lifelong learning without compromising privacy or system performance.

Multimodal Interaction Enhancements

OpenAI plans to deepen multimodal capabilities beyond static images and text, incorporating video, audio, and real-time sensor data. This will empower GPT models to understand and interact with dynamic environments, opening new applications in robotics, virtual reality, and assistive technologies.

Robustness and Safety in Adversarial Environments

Ensuring GPT-5.5 Instant’s resilience against adversarial inputs, misinformation campaigns, and manipulation attempts remains a priority. Ongoing work includes deploying advanced anomaly detection, continual model updates, and human-in-the-loop oversight to maintain safety and reliability in increasingly complex deployments.

Customization and Fine-Tuning for Specialized Domains

OpenAI is developing streamlined fine-tuning frameworks that allow organizations to customize GPT-5.5 Instant for domain-specific jargon, workflows, and regulatory requirements without extensive retraining. Such customization will expand the model’s applicability across niche industries and geographies.

Ethical AI Governance and User Empowerment

As AI adoption grows, OpenAI is committed to advancing transparent governance frameworks that empower users to understand, control, and audit AI interactions. Future iterations will include enhanced consent mechanisms, bias auditing tools, and community-driven feedback systems to align GPT-5.5 Instant’s evolution with societal values.

16. Conclusion: GPT-5.5 Instant as a Milestone in AI Evolution

OpenAI’s GPT-5.5 Instant represents a substantial leap forward in large language model capabilities. By combining reduced hallucinations, transparent and dynamic memory integration, natural conversational fluency, and enhanced multi-modal reasoning, it sets a new standard for AI assistants in professional and everyday contexts.

Its deployment as the default ChatGPT model ensures broad accessibility, enabling developers and users to harness this powerful tool immediately. With its balance of accuracy, speed, and user-centric design, GPT-5.5 Instant is poised to accelerate AI adoption across industries.

For a comprehensive perspective on how GPT-5.5 Instant fits within the continuum of AI progress, including architectural evolution and benchmarking, refer to our detailed GPT model comparison. Additionally, exploring the array of ChatGPT productivity features will help users maximize value from this next-generation model.
