⚡ The Brief

- What it is: Context-aware adaptive prompting is a technique that dynamically integrates conversational context and external data to tailor AI prompts in real-time.

- Who it’s for: This technique is ideal for developers and AI engineers looking to enhance interaction precision with models like ChatGPT and Claude.

- Key takeaways: It involves modular architectures with components like context aggregation, dynamic prompt generation, and feedback loops for continuous optimization.

- Pricing/Cost: Implementing this technique may require investment in advanced AI infrastructure and access to premium APIs for data integration.

- Bottom line: By keeping prompts aligned with the current conversation and external data, context-aware adaptive prompting can improve the relevance of AI output, which makes it a useful technique for developers in 2026.


Implementing Context-Aware Adaptive Prompting for Enhanced AI Interaction

In 2026, one of the most transformative advancements in prompt engineering is the development of context-aware adaptive prompting. This technique leverages the dynamic integration of evolving conversational context and external data sources to tailor prompts in real-time, significantly improving the relevance and precision of outputs from models like ChatGPT, Claude, and Codex. This section provides a technical deep-dive into the practical implementation of context-aware adaptive prompting, detailing architectures, algorithms, and real-world use cases.

Core Architecture of Context-Aware Adaptive Prompting Systems

At its foundation, context-aware adaptive prompting requires a modular architecture integrating the following components:

- Context Aggregation Module: This component continuously collects, normalizes, and encodes the conversational history, user preferences, and relevant external data streams (e.g., databases, APIs, knowledge graphs).

- Dynamic Prompt Generator: Utilizing the aggregated context, this module constructs or modifies prompts on-the-fly by employing rule-based templates, neural prompt rewriters, or reinforcement learning agents.

- Model Interface Layer: This serves as the communication bridge to the underlying LLM (e.g., ChatGPT, Claude, Codex), ensuring prompt formatting aligns with model-specific tokenization and input constraints.

- Feedback Loop and Evaluation Engine: To optimize prompt efficacy, this engine captures model outputs, compares them against predefined success criteria or user feedback, and iteratively refines prompt generation strategies.

This architecture promotes a continuous cycle of context ingestion, prompt adaptation, output evaluation, and prompt optimization, ensuring the AI system remains responsive to evolving user intents and external conditions.
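The cycle described above can be sketched as a small pipeline. This is an illustrative sketch under stated assumptions, not a reference implementation: the class names (`ContextAggregator`, `PromptGenerator`, `FeedbackEngine`), the rule-based template, the word-count success criterion, and the idea of passing the model as a plain `str -> str` callable are all introduced here for illustration.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List


@dataclass
class ContextAggregator:
    """Collects dialogue turns and external facts (hypothetical component)."""
    history: List[str] = field(default_factory=list)
    external: Dict[str, str] = field(default_factory=dict)

    def add_turn(self, text: str) -> None:
        self.history.append(text.strip())

    def snapshot(self, max_turns: int = 3) -> Dict[str, Any]:
        # Keep only the most recent turns to bound prompt size.
        return {"turns": self.history[-max_turns:], "facts": dict(self.external)}


class PromptGenerator:
    """Rule-based template; a neural rewriter could replace this step."""

    def build(self, question: str, ctx: Dict[str, Any], concise: bool) -> str:
        facts = "\n".join(f"- {k}: {v}" for k, v in ctx["facts"].items())
        turns = "\n".join(ctx["turns"])
        style = "Answer in one sentence." if concise else "Answer in detail."
        return (
            f"Known facts:\n{facts}\n\nRecent turns:\n{turns}\n\n"
            f"Question: {question}\n{style}"
        )


class FeedbackEngine:
    """Tracks a simple success signal and adjusts the generation strategy."""

    def __init__(self) -> None:
        self.concise = False

    def record(self, output: str, max_words: int = 40) -> None:
        # Assumed success criterion: responses should stay under max_words.
        self.concise = len(output.split()) > max_words


def run_turn(
    question: str,
    aggregator: ContextAggregator,
    generator: PromptGenerator,
    feedback: FeedbackEngine,
    llm: Callable[[str], str],
) -> str:
    """Run one cycle and return the prompt that was sent to the model."""
    prompt = generator.build(question, aggregator.snapshot(), feedback.concise)
    output = llm(prompt)  # model interface layer: any callable str -> str
    feedback.record(output)
    aggregator.add_turn(f"User: {question}")
    aggregator.add_turn(f"Assistant: {output}")
    return prompt
```

In this sketch, an overly long reply on one turn flips the generator to the concise instruction on the next turn, which is the feedback loop in its simplest form.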

Technical Components and Implementation Strategies

Context Encoding with Embedded Representations

Effective context aggregation requires converting heterogeneous inputs—such as prior dialogue turns, structured data, or sensory inputs—into dense vector representations. State-of-the-art methods employ transformer-based encoders fine-tuned on domain-specific corpora to generate embeddings that encapsulate semantic relationships and temporal dependencies.

- For dialogue history, models like Sentence-BERT variants can generate turn-level embeddings, which are then concatenated or pooled to form a comprehensive context vector.

- External data, such as database entries or API responses, are mapped to embedding spaces using domain-adapted encoders, enabling seamless integration with conversational context.
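The pooling step mentioned above can be illustrated with a toy example. The `toy_embed` function below is only a stand-in (a hashed bag-of-words) so the sketch runs without any model download; a real system would call a Sentence-BERT-style encoder at that point. The recency weighting via `decay` is also an assumption, one of several reasonable ways to pool turn-level embeddings.

```python
import math
import zlib
from typing import List

DIM = 16  # toy dimensionality; real encoders use hundreds of dimensions


def toy_embed(text: str) -> List[float]:
    """Stand-in for a sentence encoder: hashed bag-of-words, L2-normalized."""
    vec = [0.0] * DIM
    for token in text.lower().split():
        vec[zlib.crc32(token.encode("utf-8")) % DIM] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]


def pool_context(turns: List[str], decay: float = 0.5) -> List[float]:
    """Recency-weighted mean of turn embeddings (newest turn has weight 1)."""
    if not turns:
        return [0.0] * DIM
    weights = [decay ** (len(turns) - 1 - i) for i in range(len(turns))]
    total = sum(weights)
    pooled = [0.0] * DIM
    for weight, turn in zip(weights, turns):
        for j, value in enumerate(toy_embed(turn)):
            pooled[j] += weight * value / total
    return pooled


def cosine(a: List[float], b: List[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0
```

With `decay` below 1, the pooled vector stays closer to recent turns than to older ones, which is one simple way to encode the temporal dependencies the text refers to.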

Neural Prompt Rewriting via Sequence-to-Sequence Models

Rather than relying solely on static templates, advanced systems implement neural prompt rewriters that transform base prompts into contextually enriched versions. These rewriters are typically fine-tuned sequence-to-sequence models that:

- Ingest the base prompt alongside the context embeddings as input.

- Generate a modified prompt that explicitly incorporates relevant context, disambiguates intent, or provides additional instructions to the LLM.

This approach enables flexible and scalable prompt adaptation without manual template engineering, especially beneficial in complex or multi-turn interactions.

Reinforcement Learning for Prompt Optimization

To further enhance prompt quality, reinforcement learning (RL) algorithms can be employed wherein the prompt generator acts as an agent optimizing a reward function based on output quality metrics such as relevance, coherence, or factual correctness. Key considerations include:

- Reward Design: Defining robust reward signals using automated metrics (e.g., BLEU, ROUGE, factuality scores) or human-in-the-loop feedback.

- Exploration Strategies: Balancing exploitation of known effective prompts with exploration of novel prompt formulations.

- Sample Efficiency: Leveraging off-policy RL methods or imitation learning to reduce costly interactions with the LLM.

RL-driven prompt adaptation dynamically tailors the phrasing, length, and instructions embedded in prompts to maximize model performance under varying contexts.
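The exploration/exploitation trade-off above can be shown with an epsilon-greedy bandit, which is the simplest reinforcement learning setting rather than a full RL agent. The sketch assumes a fixed set of candidate templates and a caller-supplied `reward_fn`; in practice that function would wrap an LLM call plus a quality metric, and both are hypothetical here.

```python
import random
from typing import Callable, List


class PromptBandit:
    """Epsilon-greedy selection over a fixed set of prompt templates."""

    def __init__(self, templates: List[str], epsilon: float = 0.1, seed: int = 0) -> None:
        self.templates = templates
        self.epsilon = epsilon
        self.counts = [0] * len(templates)
        self.values = [0.0] * len(templates)  # running mean reward per template
        self.rng = random.Random(seed)

    def best(self) -> int:
        return max(range(len(self.templates)), key=lambda i: self.values[i])

    def select(self) -> int:
        # Explore with probability epsilon, otherwise exploit the best estimate.
        if self.rng.random() < self.epsilon:
            return self.rng.randrange(len(self.templates))
        return self.best()

    def update(self, arm: int, reward: float) -> None:
        self.counts[arm] += 1
        # Incremental update of the mean reward for this template.
        self.values[arm] += (reward - self.values[arm]) / self.counts[arm]


def train(bandit: PromptBandit, reward_fn: Callable[[str], float], steps: int) -> int:
    """Run the select/update loop and return the index of the best template."""
    for _ in range(steps):
        arm = bandit.select()
        bandit.update(arm, reward_fn(bandit.templates[arm]))
    return bandit.best()
```

Because every `reward_fn` call stands for at least one model request, the number of steps is the main cost driver, which is why the sample-efficiency point above matters.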

Real-World Applications of Adaptive Prompting

Enterprise Knowledge Management

In corporate environments, context-aware adaptive prompting enables AI assistants to retrieve and synthesize information from vast, heterogeneous knowledge bases. For example:

- By continuously integrating recent document updates, email threads, and project status reports into the context aggregator, the AI can generate highly accurate summaries and recommendations tailored to ongoing initiatives.

- Dynamic prompt rewriting allows the system to clarify ambiguous user queries by embedding relevant organizational context, such as department-specific jargon or current strategic priorities.

Code Generation and Software Development with Codex

Adaptive prompting revolutionizes software engineering workflows by refining coding prompts based on prior generated code snippets, testing results, and user feedback:

- Context encoding of existing codebases allows Codex to generate contextually consistent code completions and refactorings.

- Reinforcement learning optimizes prompt formulations that encourage the generation of syntactically correct, efficient, and maintainable code.

- Integration with continuous integration/continuous deployment (CI/CD) pipelines enables prompts to adapt based on build statuses and test coverage metrics.

Personalized Conversational Agents

AI-powered chatbots and virtual assistants benefit from adaptive prompting by dynamically incorporating user preferences, historical interactions, and external signals such as calendar events or sensor data:

- Prompt generators embed personalized instructions and context to maintain consistent persona and tone.

- Context-aware prompts reduce the need for repetitive user input by inferring unstated intent from accumulated context.

- Feedback loops enable continuous improvement of prompt strategies based on user satisfaction metrics and interaction analytics.

Challenges and Future Directions

While context-aware adaptive prompting demonstrates substantial gains, several challenges persist:

- Context Window Limitations: Despite increased token capacities, accumulated context (dialogue history plus retrieved data) may still exceed model input limits, necessitating intelligent context summarization and pruning.

- Latency Constraints: Real-time prompt adaptation requires efficient encoding and prompt generation pipelines to maintain low response times.

- Robustness to Noisy or Incomplete Data: Ensuring prompt adaptation strategies gracefully handle inconsistent or missing contextual inputs remains an open problem.
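For the context window limitation in particular, a common starting point is to prune the oldest turns until the prompt fits a token budget. The sketch below assumes a crude one-token-per-word estimate (`estimate_tokens` is a placeholder; a real system would use the tokenizer of the target model) and always keeps the system message.

```python
from typing import List


def estimate_tokens(text: str) -> int:
    # Crude assumption: one token per whitespace-separated word.
    return len(text.split())


def prune_context(system: str, turns: List[str], budget: int) -> List[str]:
    """Keep the system message plus the newest turns that fit in the budget."""
    remaining = budget - estimate_tokens(system)
    kept: List[str] = []
    for turn in reversed(turns):  # walk from newest to oldest
        cost = estimate_tokens(turn)
        if cost > remaining:
            break
        kept.append(turn)
        remaining -= cost
    return [system] + kept[::-1]  # restore chronological order
```

Summarizing the dropped turns into a single short note, instead of discarding them, is a natural next step but requires an extra model call.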

Ongoing research aims to address these issues through advances in memory-augmented neural architectures, hierarchical context management, and hybrid symbolic-neural prompt generation methods, positioning context-aware adaptive prompting as a cornerstone technique for next-generation AI interactions.

Industry Impact and Future Implications of Advanced Prompt Engineering in 2026

As advanced prompt engineering techniques become integral to leveraging state-of-the-art AI models like ChatGPT, Claude, and Codex, their influence is reshaping multiple industries and redefining competitive dynamics. The ability to precisely tailor AI outputs through sophisticated prompt strategies is no longer a niche skill but a critical differentiator driving innovation, operational efficiency, and new business models.

Transforming Industry Workflows through Prompt Engineering

Across sectors, the deployment of advanced prompting techniques has shifted the paradigm from AI as a black-box assistant to an interactive, controllable collaborator. Industries such as finance, healthcare, law, and software development are experiencing profound transformations enabled by prompt engineering innovations.

- Finance and Risk Management: In quantitative finance and compliance, prompts designed for dynamic contextual understanding enable real-time risk assessments and regulatory reporting. By embedding domain-specific jargon and scenario-based examples into prompt templates, AI models can generate nuanced financial analyses, reducing reliance on human intervention and accelerating decision cycles.

- Healthcare and Medical Research: Advanced prompting facilitates precision medicine by guiding models to interpret complex clinical data, extract insights from medical literature, and even simulate patient-specific treatment outcomes. Prompt engineering frameworks now incorporate layered clinical context, ethical considerations, and evidence-based guidelines to ensure outputs meet stringent safety and accuracy standards.

- Legal and Contract Analysis: AI systems empowered with few-shot and chain-of-thought prompting are increasingly used to draft, review, and negotiate contracts. Custom prompt sequences help these models understand jurisdiction-specific regulations and identify potential legal risks, boosting productivity while maintaining compliance.

- Software Development and Automation: Codex’s advanced prompt design capabilities enable developers to generate complex code snippets, automate debugging, and refactor legacy systems. Prompt templates that incorporate detailed input-output examples and iterative feedback loops significantly reduce development time and elevate code quality.

Market Analysis: Competitive Differentiators in AI-Driven Solutions

The sophistication of prompt engineering has emerged as a key competitive factor in the AI marketplace, influencing vendor selection and user adoption. Companies that invest in refining their prompt strategies gain a measurable advantage in output reliability, customization, and scalability.

- Customization and Domain Adaptation: Enterprises demand AI solutions that can be precisely tuned to their unique operational contexts. Providers offering advanced prompt engineering toolkits and frameworks—such as prompt versioning, modular prompt components, and context-aware prompt chaining—are positioned to capture significant market share.

- Interoperability and Multimodal Prompting: Market leaders emphasize integrating language models with other AI modalities, such as vision and speech, through unified prompting interfaces. This convergence expands application possibilities and appeals to sectors requiring multimodal insights, from autonomous vehicles to multimedia content generation.

- Real-Time Adaptive Prompting: The ability to dynamically modify prompts based on model feedback or external data streams is a critical differentiator for high-stakes environments. Vendors embedding adaptive prompting algorithms within AI platforms can offer solutions with superior responsiveness and contextual accuracy.

Competitive Landscape: Emerging Trends and Strategic Responses

The rapid maturation of prompt engineering has intensified competition among AI platform providers, independent tool developers, and integrators. Key trends shaping the competitive landscape include:

- Proprietary Prompt Libraries: Leading AI companies are developing extensive, domain-specific prompt libraries optimized for their models. These libraries provide users with pre-built, finely tuned prompt templates that accelerate deployment and improve consistency.

- Prompt Engineering as a Service (PEaaS): Specialized firms are emerging to offer prompt design, optimization, and audit services, catering to clients lacking in-house expertise. PEaaS providers combine domain knowledge with AI proficiency to deliver tailored prompting solutions that maximize model value.

- Open-Source Prompt Frameworks: The community-driven creation of transparent, extensible prompt engineering frameworks fosters innovation and democratizes access. This trend encourages experimentation with novel prompt architectures and cross-model compatibility, challenging proprietary offerings.

- Integration with AutoML and Hyperparameter Tuning: Combining prompt engineering with automated machine learning pipelines allows end-to-end optimization of AI model performance. Competitors leveraging this integration can offer turnkey solutions that automatically refine prompts alongside model parameters.

Future Implications: Toward Autonomous Prompt Optimization and Ethical Considerations

Looking forward, the trajectory of prompt engineering suggests several transformative developments impacting AI utilization and governance:

- Autonomous Prompt Generation and Refinement: Advances in meta-learning and reinforcement learning will enable AI systems to autonomously generate, evaluate, and optimize their own prompts. This self-improving capability will reduce human workload and unlock higher-order reasoning and creativity within models.

- Context-Aware Ethical Guardrails: As AI systems gain greater autonomy through adaptive prompting, embedding real-time ethical constraints into prompt frameworks will become crucial. Techniques such as dynamic bias detection and mitigation prompts will safeguard against harmful or unintended outputs.

- Cross-Model Prompt Transferability: The standardization of prompt representations and benchmarking will facilitate the transfer of effective prompt designs across different AI architectures, fostering interoperability and reducing duplication of effort.

- Human-AI Collaborative Prompting: Future workflows will increasingly emphasize interactive prompt co-creation, where users and AI systems iteratively refine prompts together. This synergy will enhance transparency, trust, and utility in complex decision-making contexts.

In conclusion, the evolution of advanced prompt engineering in 2026 is not merely a technical progression but a catalyst for profound industrial transformation and strategic differentiation. Mastery of these techniques will be indispensable for organizations aspiring to harness the full power of AI models like ChatGPT, Claude, and Codex—driving innovation, competitiveness, and responsible AI deployment in the years ahead.

Section 3: Leveraging Adaptive and Meta-Prompting Techniques for Enhanced AI Interaction

As AI language models such as ChatGPT, Claude, and Codex continue to advance in 2026, prompt engineering has transcended conventional static inputs to embrace dynamic, self-referential, and meta-cognitive strategies. This section delves into adaptive prompting and meta-prompting techniques, which empower developers to create prompts that not only elicit accurate responses but also enable the models to self-optimize their outputs through internal feedback mechanisms.

Adaptive Prompting: Real-Time Contextual Refinement

Adaptive prompting is a methodology where prompts evolve in response to ongoing interaction with the AI, allowing for real-time contextual calibration. Unlike traditional single-shot prompts, adaptive techniques use the model’s prior outputs and external data streams to iteratively refine queries, thus significantly improving relevance and precision.

- Implementation of Feedback Loops: Incorporate response evaluation criteria within the prompt structure itself. For example, prompts can request the model to self-assess confidence levels or identify ambiguous elements before proceeding, enabling conditional rephrasing or elaboration.

- Dynamic Context Injection: Use programmatic interfaces to insert real-time environmental or user-specific context—such as session history, user preferences, or sensor data—directly into the prompt. This enhances personalization and situational awareness, crucial for applications like virtual assistants or autonomous coding agents.

- Multi-turn Prompt Chaining: Structure prompts as part of multi-turn dialogues where each prompt is informed by the previous model response. This technique requires maintaining contextual embeddings and state management outside the model to feed back into the prompt construction process.

Expert practitioners recommend leveraging adaptive prompting in tandem with external validation mechanisms to continually monitor output quality and adjust prompt parameters accordingly. This approach is especially vital when deploying models in high-stakes environments such as healthcare, finance, or legal domains, where precision and accountability are paramount.
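The three techniques above (self-assessed confidence, context injection, and multi-turn chaining) can be combined in a few lines. This is a sketch under assumptions: the `CONFIDENCE:` line format, the threshold of 70, and the `llm` callable are all hypothetical conventions chosen for the example, and self-reported confidence is only a rough signal that should be paired with external validation.

```python
import re
from typing import Callable, Dict, List


def build_prompt(question: str, context: Dict[str, str], history: List[str]) -> str:
    ctx = "\n".join(f"{k}: {v}" for k, v in sorted(context.items()))
    past = "\n".join(history[-4:])  # re-inject only the latest exchanges
    return (
        f"Context:\n{ctx}\n\nHistory:\n{past}\n\nQuestion: {question}\n"
        "End your answer with a line 'CONFIDENCE: <0-100>'."
    )


def parse_confidence(reply: str) -> int:
    match = re.search(r"CONFIDENCE:\s*(\d{1,3})", reply)
    return min(int(match.group(1)), 100) if match else 0


def ask_adaptively(
    question: str,
    context: Dict[str, str],
    history: List[str],
    llm: Callable[[str], str],
    threshold: int = 70,
) -> str:
    reply = llm(build_prompt(question, context, history))
    if parse_confidence(reply) < threshold:
        # Conditional rephrasing: ask the model to surface ambiguity first.
        retry = f"{question}\nList any ambiguous terms, state your assumptions, then answer."
        reply = llm(build_prompt(retry, context, history))
    # State lives outside the model and feeds the next prompt.
    history.extend([f"User: {question}", f"Assistant: {reply}"])
    return reply
```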

Meta-Prompting: Enabling Model Self-Optimization and Explainability

Meta-prompting involves instructing the model to perform higher-order reasoning about its own generation process. By embedding meta-cognitive instructions within prompts, developers can prompt the model not only to generate content but also to critique, explain, or optimize that content autonomously.

- Self-Critique and Error Detection: Prompts can request the model to identify potential contradictions, logical inconsistencies, or factual inaccuracies in its output. For instance, a meta-prompt might ask, “Review the above answer for any unsupported claims or ambiguous statements and suggest corrections.” This significantly enhances reliability, especially when paired with few-shot examples demonstrating error detection.

- Explanation and Justification Generation: In contexts where transparency is critical, prompts can instruct the model to articulate the reasoning behind its responses. This is essential for applications involving decision-making support or educational tutoring, providing end-users with interpretable AI outputs.

- Prompt Optimization via Self-Reflection: Advanced meta-prompting can guide the AI to refine or rewrite its initial prompts or instructions to improve clarity and specificity. For example, after generating a response, the model can be prompted to suggest enhancements to the original query, effectively participating in prompt engineering itself.

Meta-prompting requires careful balancing to avoid overloading the model with recursive instructions that can degrade performance. Expert guidance suggests modularizing meta-tasks into discrete prompt components and limiting recursion depth to maintain computational efficiency.
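A bounded self-critique loop shows how a depth limit can be enforced in practice. The critique wording follows the example meta-prompt above; the `OK` sentinel, the `max_depth` default, and the `llm` callable are assumptions made for this sketch.

```python
from typing import Callable

CRITIQUE = (
    "Review the answer below for unsupported claims or ambiguous statements.\n"
    "Reply 'OK' if none are found; otherwise reply with a corrected answer.\n\n"
    "Answer:\n{answer}"
)


def refine(question: str, llm: Callable[[str], str], max_depth: int = 2) -> str:
    """Generate an answer, then critique it at most max_depth times."""
    answer = llm(question)
    for _ in range(max_depth):
        verdict = llm(CRITIQUE.format(answer=answer)).strip()
        if verdict.upper() == "OK":
            break  # the critique pass found nothing to fix
        answer = verdict
    return answer
```

Each critique pass is a separate, discrete prompt, so the total number of model calls is capped at `1 + max_depth` regardless of how the model responds.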

Best Practices and Common Pitfalls in Advanced Prompt Engineering

- Maintain Explicitness Without Overcomplication: While detailed prompts improve output specificity, excessively verbose or convoluted instructions can confuse the model. Strive for clarity and precision, using structured formats such as bullet points or numbered lists within prompts to delineate tasks.

- Avoid Ambiguous or Compound Queries: Combining multiple distinct questions or instructions in a single prompt often leads to partial or unfocused responses. Break down complex interactions into sequential prompts, leveraging adaptive prompting to manage complexity incrementally.

- Monitor for Topic Drift and Context Loss: In long multi-turn interactions, models can lose track of earlier context or subtly shift topics. Implement external session management and context re-injection to mitigate drift, ensuring consistent alignment with user intent.

- Calibrate Temperature and Sampling Parameters: Advanced prompting works together with decoding parameters. Lower temperature settings yield more deterministic outputs suited for precise tasks, while higher temperatures produce more varied responses that suit creative or exploratory work. Adjust these in accordance with prompt complexity and desired output style.

- Leverage Model-Specific Feature Sets: Each AI system has its own strengths and documented features (Codex, for instance, is geared toward code synthesis). Tailor prompts to harness these strengths explicitly, relying on model documentation and empirical performance benchmarks rather than assumed capabilities.

Future Directions: Integrating Reinforcement Learning with Prompt Engineering

The frontier of prompt engineering in 2026 increasingly intersects with reinforcement learning from human feedback (RLHF) and automated prompt tuning frameworks. By coupling adaptive and meta-prompting strategies with continuous model fine-tuning, developers can create closed-loop systems where prompts evolve based on performance metrics and user satisfaction.

This integration enables:

- Automated Prompt Refinement: Using reward signals to iteratively optimize prompt templates for specific tasks without manual intervention.

- Personalized AI Behavior: Adapting prompt strategies to individual user interaction patterns, improving engagement and utility.

- Robustness to Distribution Shifts: Dynamically adjusting prompt parameters to maintain performance as input data characteristics evolve.

Mastering these advanced techniques positions AI practitioners at the cutting edge of human-AI collaboration, enabling the development of intelligent systems that are not only powerful but also adaptive, transparent, and aligned with user goals.

Advanced Prompt Engineering Techniques for ChatGPT, Claude, and Codex in 2026

As artificial intelligence models like ChatGPT, Claude, and Codex continue to evolve in 2026, the art and science of prompt engineering have become pivotal in unlocking their full potential. Developers and AI practitioners must adapt to increasingly sophisticated language models by mastering advanced prompting techniques to achieve precise, reliable, and contextually relevant outputs.

This guide explores cutting-edge prompt engineering strategies—including zero-shot, few-shot, and chain-of-thought prompting—tailored for the latest generation of AI systems. It also provides practical principles and optimization methods applicable across multiple AI platforms, enabling developers to design prompts that deliver consistent and accurate results in diverse applications.

Understanding the Evolution of Prompt Engineering in 2026

Prompt engineering has evolved from simple keyword-based queries to complex, structured input strategies that guide AI models more effectively. In 2026, with models like ChatGPT, Claude, and Codex reaching new heights in natural language understanding and generation, prompt design requires a nuanced approach that balances clarity, context, and intent.

Key trends shaping prompt engineering include:

- Contextual Depth: Incorporating richer context to reduce ambiguity and enhance output relevance.

- Dynamic Prompting: Adapting prompts based on previous model responses or external data.

- Multimodal Integration: Combining language prompts with other data types for comprehensive understanding.

Developers must now think beyond static prompts and embrace iterative and adaptive prompting workflows that align with the specific capabilities and limitations of each AI system.

Zero-Shot Prompting: Directing AI Without Examples

Zero-shot prompting involves instructing an AI model to perform a task without providing any example inputs or outputs. This approach relies heavily on the model’s pre-trained knowledge and understanding of natural language instructions.

In 2026, zero-shot prompting remains a powerful technique for rapid prototyping and scenarios where example data is scarce or unavailable. To optimize zero-shot prompts for ChatGPT, Claude, or Codex, consider these best practices:

- Explicit Instructions: Clearly articulate the task, expected format, and constraints.

- Structured Queries: Use bullet points, numbered lists, or clear delimiters to organize the prompt.

- Contextual Anchors: Provide relevant background information or domain-specific terminology within the prompt.

For example, when querying Codex for a programming solution, a zero-shot prompt might specify the desired function behavior, input/output format, and language constraints without example code snippets.
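The three best practices above map directly onto a small prompt builder. This is a minimal sketch: the `###` section delimiters and the function name `zero_shot_prompt` are arbitrary choices made here, not a convention required by any model.

```python
from typing import List


def zero_shot_prompt(
    task: str,
    output_format: str,
    constraints: List[str],
    background: str = "",
) -> str:
    """Assemble an example-free prompt with explicit instructions and delimiters."""
    parts = []
    if background:
        parts.append(f"### Background\n{background}")  # contextual anchor
    parts.append(f"### Task\n{task}")
    parts.append(f"### Output format\n{output_format}")
    if constraints:
        numbered = "\n".join(f"{i}. {c}" for i, c in enumerate(constraints, 1))
        parts.append(f"### Constraints\n{numbered}")
    return "\n\n".join(parts)
```

For the coding case mentioned above, a call might look like `zero_shot_prompt("Write a function that deduplicates a list while preserving order.", "A single Python function, no prose.", ["Python 3 only", "No third-party imports"])`.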

Few-Shot Prompting: Leveraging Demonstrations for Better Accuracy

Few-shot prompting enhances task performance by including a limited number of input-output examples within the prompt. This method helps models understand the expected pattern or style for the desired output.

In 2026, few-shot prompting remains essential when working with complex or nuanced tasks. Developers can improve model accuracy and reduce ambiguity by thoughtfully selecting representative examples. Key considerations include:

- Example Diversity: Include varied cases to cover edge conditions and common scenarios.

- Concise Formatting: Keep examples brief but informative to avoid exceeding token limits.

- Consistent Style: Maintain uniform formatting and language style across examples.

When designing few-shot prompts for Claude or ChatGPT, embedding contextual cues alongside examples often yields superior results, especially for conversational or multi-turn tasks. This approach helps the model generalize the underlying logic rather than memorizing specific responses.

Advanced prompt structuring techniques can further enhance the effectiveness of few-shot learning by optimizing example order and clarity.
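The consistent-style consideration above is easiest to enforce by rendering every example through the same template. The `Input:`/`Output:` labels in this sketch are an assumed convention; any uniform pair of labels would serve the same purpose.

```python
from typing import List, Tuple


def few_shot_prompt(instruction: str, examples: List[Tuple[str, str]], query: str) -> str:
    """Render examples in one consistent style, then leave the query open."""
    blocks = [instruction.strip()]
    for source, target in examples:
        blocks.append(f"Input: {source.strip()}\nOutput: {target.strip()}")
    # The final block ends at "Output:" so the model completes the pattern.
    blocks.append(f"Input: {query.strip()}\nOutput:")
    return "\n\n".join(blocks)
```

Since the examples are passed as an ordered list, trying a different example order only requires reordering the list before the call.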

Chain-of-Thought Prompting: Encouraging Stepwise Reasoning

Chain-of-thought (CoT) prompting guides AI models to generate intermediate reasoning steps before arriving at a final answer. This technique improves performance on complex reasoning, mathematical problems, and multi-step decision-making tasks.

In 2026, CoT has become a mainstream prompting strategy for models like ChatGPT and Claude due to its ability to reduce errors and increase transparency. Best practices for chain-of-thought prompting include:

- Explicitly Request Reasoning: Instruct the model to “think aloud” or “explain your reasoning step-by-step.”

- Provide Example Chains: Include sample reasoning sequences in few-shot prompts to demonstrate the expected detail level.

- Segment Complex Problems: Break down challenging tasks into smaller sub-tasks within the prompt.

Chain-of-thought prompting is particularly effective when combined with interactive API calls, enabling developers to parse and utilize intermediate reasoning outputs for downstream applications.
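To use intermediate reasoning downstream, the reply has to be split into steps and a final answer. The sketch below assumes the prompt asks for a closing line that starts with `Answer:`; that marker is a convention chosen for this example, and the parser falls back gracefully when the model omits it.

```python
import re
from typing import Optional, Tuple

COT_SUFFIX = (
    "\nExplain your reasoning step-by-step, "
    "then give the result on a final line starting with 'Answer:'."
)


def cot_prompt(question: str) -> str:
    return question.strip() + COT_SUFFIX


def split_reasoning(reply: str) -> Tuple[str, Optional[str]]:
    """Separate intermediate steps from the 'Answer:' line, if present."""
    match = re.search(r"^Answer:\s*(.+)$", reply, flags=re.MULTILINE)
    if not match:
        return reply.strip(), None
    return reply[: match.start()].strip(), match.group(1).strip()
```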

Practical Principles for Any AI Tool

Despite differences in architecture and training data, ChatGPT, Claude, and Codex share foundational prompt engineering principles that developers can apply universally:

- Clarity and Specificity: Avoid vague instructions; precise language reduces misinterpretation.

- Contextualization: Embed necessary background or domain knowledge to aid understanding.

- Prompt Length Management: Balance detail with token limits to maintain efficiency without sacrificing context.

- Iterative Refinement: Test and adjust prompts based on model responses to optimize output quality.

- Output Constraints: Use delimiters or format instructions to control the structure of AI-generated content.

Incorporating these principles ensures robust prompt design that scales across different AI platforms and use cases.

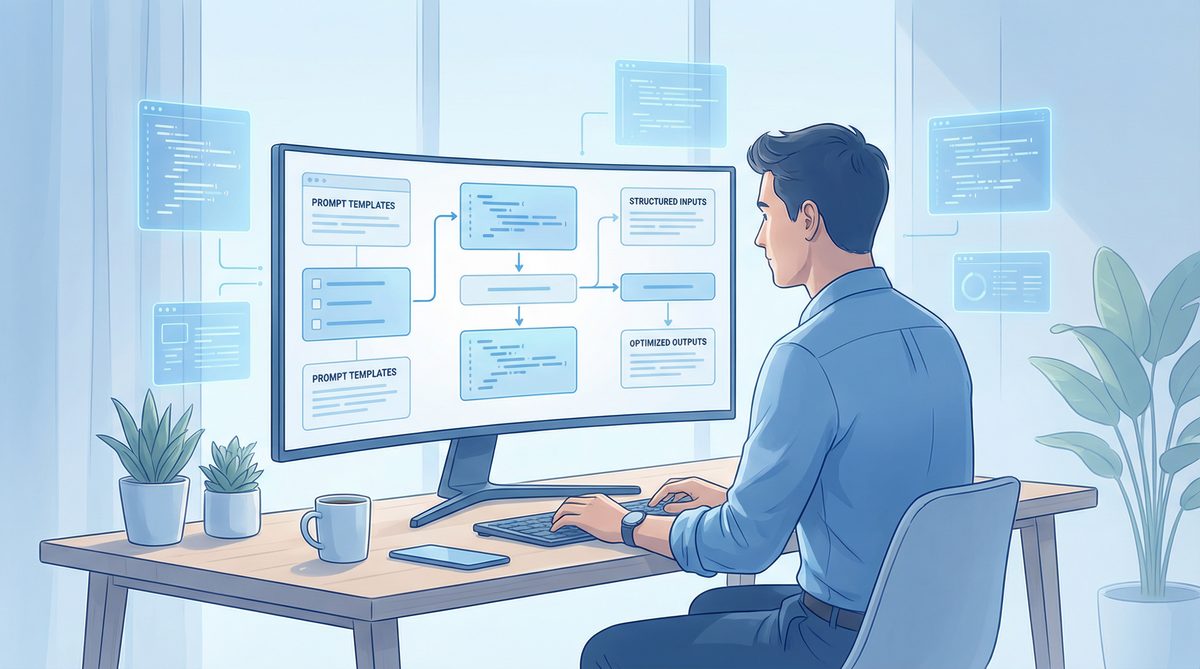

Prompt Optimization Techniques

Optimizing prompts is an ongoing process that involves analyzing model outputs, adjusting input phrasing, and experimenting with different prompting strategies. Developers can employ several techniques to maximize prompt efficiency and effectiveness:

- Token Budgeting: Prioritize essential information to stay within API token limits without losing critical context.

- Prompt Chaining: Divide complex queries into smaller, sequential prompts so that each call handles one narrow, well-defined task.

- Temperature and Top-p Tuning: Adjust sampling parameters to balance creativity and determinism in responses.

- Use of System Messages: Leverage system-level instructions in APIs to set tone, style, or behavior constraints globally.

- Automated Prompt Testing: Integrate prompt evaluation into CI/CD pipelines for applications relying on AI-generated content.

These optimization strategies are particularly valuable when designing prompts for production environments, where consistency and reliability are paramount.

Developer-Focused Prompting for API Calls

Modern AI APIs expose extensive customization options that developers can harness through prompt engineering. When integrating ChatGPT, Claude, or Codex into applications via API, the following developer-centric prompting best practices apply:

- Parameter Utilization: Combine prompt design with API parameters such as max tokens, stop sequences, and temperature for fine-grained control.

- Embedding Context: Dynamically insert user inputs or external data into prompts to create personalized and context-aware interactions.

- Response Parsing: Design prompts that produce structured outputs (e.g., JSON or XML) to facilitate automated parsing and downstream processing.

- Error Handling: Include fallback instructions or clarifying queries within prompts to mitigate ambiguous or incomplete responses.

- Security and Privacy: Avoid embedding sensitive information directly in prompts; instead, use data tokenization (replacing sensitive values with opaque placeholders) or secure data references.

Developers should also monitor API usage patterns and prompt performance metrics to iteratively refine prompts and optimize cost-efficiency and response quality.
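Response parsing and error handling from the list above can be combined into one helper. This sketch assumes a hypothetical sentiment schema (`SCHEMA_HINT`) and a generic `llm` callable; it does not use any provider-specific structured-output feature, which may be preferable where available.

```python
import json
from typing import Any, Callable, Dict, Optional

SCHEMA_HINT = 'Respond with JSON only, in the form {"sentiment": "...", "score": 0.0}.'


def extract_json(reply: str) -> Optional[Dict[str, Any]]:
    """Parse the span from the first '{' to the last '}'; None if invalid."""
    start, end = reply.find("{"), reply.rfind("}")
    if start == -1 or end <= start:
        return None
    try:
        parsed = json.loads(reply[start : end + 1])
    except json.JSONDecodeError:
        return None
    return parsed if isinstance(parsed, dict) else None


def ask_json(text: str, llm: Callable[[str], str], retries: int = 1) -> Optional[Dict[str, Any]]:
    prompt = f"{SCHEMA_HINT}\n\nText: {text}"
    for _ in range(retries + 1):
        result = extract_json(llm(prompt))
        if result is not None:
            return result
        # Fallback instruction appended after an unparseable reply.
        prompt += "\n\nYour previous reply was not valid JSON. Return only the JSON object."
    return None
```

Capping `retries` keeps the cost of a malformed reply bounded, which ties in with the cost-efficiency monitoring mentioned above.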

For a deeper dive into API-driven prompt customization, developers can explore advanced SDK features and tooling supported by leading AI platforms.


Frequently Asked Questions

What is context-aware adaptive prompting?

Context-aware adaptive prompting is a technique that uses evolving conversational context and external data to dynamically tailor AI prompts. This improves the relevance and precision of AI outputs, making interactions more effective and responsive to user needs.

How does context aggregation work?

Context aggregation involves collecting, normalizing, and encoding conversational history, user preferences, and external data. This is done using modules that convert inputs into dense vector representations, often with transformer-based encoders, to maintain semantic and temporal coherence.

What are the benefits of dynamic prompt generation?

Dynamic prompt generation allows for on-the-fly construction or modification of prompts using rule-based templates or neural rewriters. This adaptability ensures that prompts are always aligned with the current context, enhancing the accuracy and relevance of AI responses.

Why is a feedback loop important in this system?

A feedback loop captures AI outputs and compares them against success criteria or user feedback. This iterative process refines prompt strategies, ensuring continuous improvement in AI interaction quality and responsiveness to changing user intents.

What role does the model interface layer play?

The model interface layer acts as a communication bridge to the underlying language model, ensuring that prompts are formatted correctly according to model-specific tokenization and input constraints. This ensures seamless integration and optimal performance.

How can developers implement this technique?

Developers can implement context-aware adaptive prompting by setting up a modular architecture with components for context aggregation, dynamic prompt generation, and feedback loops. They should also leverage transformer-based encoders and ensure compatibility with the AI models they use.
