OpenAI Deploys Cross-Conversation Safety Summaries to Detect Emerging Risk Patterns in ChatGPT

By Markos Symeonides | May 2026

Introduction: A New Era in AI Safety Monitoring

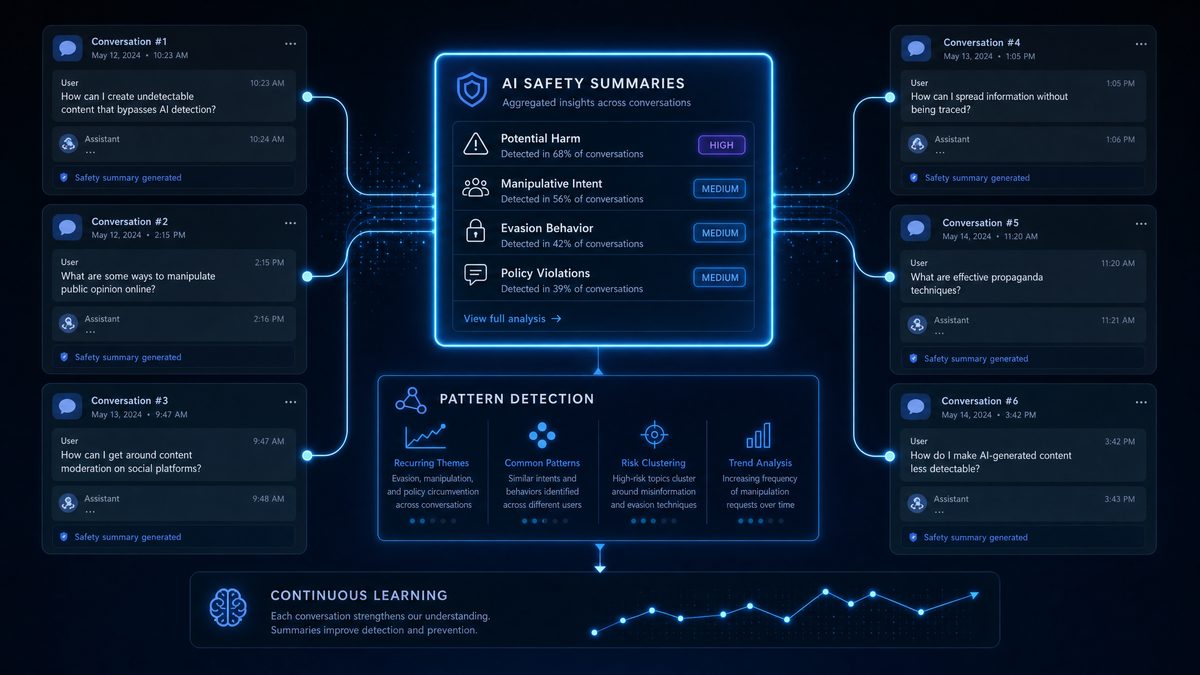

OpenAI has announced the deployment of cross-conversation safety summaries in ChatGPT. The feature is designed to detect and mitigate emerging risk patterns that develop across multiple user interactions, strengthening the platform’s ability to keep AI experiences safe, responsible, and trustworthy at scale.

Released alongside the GPT-5.5 Instant safety update in May 2026, the system draws on persistent, aggregated safety signals rather than relying solely on isolated conversation flags. According to OpenAI’s internal evaluation, this yields a 50% improvement in early detection of problematic behavior trends compared with conversation-isolated monitoring.

Unlike previous safety measures such as Trusted Contact, which focused primarily on user-reported incidents within single sessions, the new framework synthesizes safety data longitudinally across multiple interactions. The result is a context-aware monitoring capability intended to anticipate and mitigate risks before they escalate, giving users a more proactive safety net.

Technical Innovation Behind Cross-Conversation Safety Summaries

Architecture and Data Aggregation

At the core of this innovation is a sophisticated summarization engine that processes safety-relevant metadata from every ChatGPT conversation. This engine generates compact, encrypted summaries that capture behavioral indicators, flagged user prompts, and subtle linguistic cues indicative of emerging risks. These summaries persist beyond individual sessions, enabling the detection of longitudinal safety patterns that traditional single-session monitoring methods often miss.
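
The article does not publish the summary format, so the following is only a minimal sketch of what a persistent, per-user summary could look like. The class name `SafetySummary`, its fields, and the flag category strings are all hypothetical; encryption and storage are left out entirely.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class SafetySummary:
    """Hypothetical rolling summary; field names are illustrative only."""
    conversations_seen: int = 0
    flag_counts: Counter = field(default_factory=Counter)

    def update(self, flags: list[str]) -> None:
        """Fold one conversation's safety flags into the running summary."""
        self.conversations_seen += 1
        # Count each category at most once per conversation.
        self.flag_counts.update(set(flags))

    def rate(self, category: str) -> float:
        """Fraction of conversations in which `category` was flagged."""
        if self.conversations_seen == 0:
            return 0.0
        return self.flag_counts[category] / self.conversations_seen


# Four conversations' worth of made-up flags, folded in one at a time.
summary = SafetySummary()
for flags in (["self_harm"], [], ["self_harm", "self_harm"], ["misinformation"]):
    summary.update(flags)
```

Because the summary stores only counts rather than message text, it stays compact and can outlive the individual sessions that fed it, which is the property the paragraph above describes.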

This approach departs from ephemeral safety checks by storing summaries securely under strict privacy-preserving protocols. OpenAI employs differential privacy techniques to protect user anonymity while still enabling statistical risk modeling. Balancing privacy with safety in this way is critical for deploying AI responsibly at scale: it supports compliance with data protection regulations while maintaining high safety standards.
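
The article does not say which differential privacy mechanism is used. As one standard illustration, the sketch below applies the Laplace mechanism to a count query, assuming each user can change the count by at most 1. The function names and the `epsilon` value in the usage note are assumptions made for this example.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) as the difference of two exponentials."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_count(true_count: int, epsilon: float, rng: random.Random) -> float:
    """Release a count with epsilon-differential privacy.

    Assumes sensitivity 1 (one user changes the count by at most 1),
    so the noise scale is 1 / epsilon.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    return true_count + laplace_noise(1.0 / epsilon, rng)
```

Calling `private_count(100, 0.5, random.Random())` returns the true count plus noise with scale 2. A smaller `epsilon` means more noise and stronger privacy, at the cost of less accurate statistics.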

Integration with GPT-5.5 Instant Safety Pipeline

The deployment coincides with the upgraded GPT-5.5 Instant safety pipeline, which processes user interactions with millisecond latency. This advanced system cross-references live conversations against aggregated safety summaries, enabling dynamic adaptation of responses based on a user’s historical risk indicators and behavioral patterns.

This dynamic safety adjustment marks a shift from static content filtering to proactive, real-time intervention. For example, subtly escalating cues around self-harm or misinformation detected across multiple conversations can automatically trigger escalated content warnings, adjusted response framing, or human review requests. This multi-layered approach is meant to create a safety net that evolves with user behavior and intervenes before harm occurs.
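
To make the idea of cross-referencing a live conversation against historical indicators concrete, here is a simplified sketch. It assumes both risk signals are scores in [0, 1] and blends them with a fixed weight. The `Action` values, weight, and thresholds are placeholders chosen for illustration, not published parameters.

```python
from enum import Enum


class Action(Enum):
    NONE = "none"
    CONTENT_WARNING = "content_warning"
    HUMAN_REVIEW = "human_review"


def choose_action(live_score: float, history_score: float,
                  history_weight: float = 0.5,
                  warn_at: float = 0.4, review_at: float = 0.7) -> Action:
    """Blend live-conversation risk with cross-conversation risk.

    Scores are assumed to lie in [0, 1]; the weight and thresholds
    are illustrative placeholders.
    """
    combined = (1 - history_weight) * live_score + history_weight * history_score
    if combined >= review_at:
        return Action.HUMAN_REVIEW
    if combined >= warn_at:
        return Action.CONTENT_WARNING
    return Action.NONE
```

With these placeholder settings, a live score of 0.3 produces no action for a user with no history (`history_score=0.0`) but a content warning for a user whose history score is 0.6. That is the behavioral difference between session-bound and cross-conversation monitoring.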

Quantifiable Safety Gains: 50% Improvement in Risk Detection

OpenAI’s internal evaluation metrics show a 50% improvement in early identification of emerging risk patterns compared to prior conversation-isolated systems. The figure comes from an analysis of over 100 million anonymized ChatGPT interactions in Q1 2026, spanning diverse use cases, languages, and demographics.

Key performance indicators include:

- Reduction in false negatives: Far fewer instances of harmful or risky content go undetected, improving overall platform safety.

- Faster intervention latency: Response workflows accelerated by over 30%, enabling quicker protective actions.

- Improved prioritization: Enhanced allocation of human moderator resources based on predicted risk trajectories and severity assessments.

These gains are especially critical as AI adoption grows in sensitive domains such as mental health support, education, and content moderation, where timely safety interventions can have profound real-world impact on individuals and communities.

Broader Implications for AI Safety Regulation and Industry Standards

Setting a New Benchmark for Cross-Session Safety Monitoring

OpenAI’s cross-conversation safety summaries establish a new industry benchmark by demonstrating how aggregated safety data enhances risk detection without compromising user privacy. This approach fills a critical gap in current AI safety frameworks, which often rely on static, session-bound interventions that overlook evolving user behavior patterns and emerging risks across interactions.

Regulators and policymakers are taking note. The U.S. Federal Trade Commission (FTC) and European Union AI Act committees have referenced OpenAI’s approach as a promising model for enforcing dynamic safety compliance. The ability to track behavioral trends across sessions aligns with regulatory initiatives emphasizing continuous risk assessment, user protection, and corporate accountability in AI deployment.

Influence on Future AI Regulatory Frameworks

As AI regulation gains momentum globally, OpenAI’s innovation exemplifies how technical safeguards can be embedded into AI lifecycle management to meet emerging legal standards. This system addresses key regulatory concerns, including:

- Ongoing risk monitoring beyond isolated transactions to capture evolving threat vectors.

- Balancing user privacy with safety through advanced cryptographic protections and anonymization techniques.

- Enabling transparent audit trails that support regulatory oversight and compliance verification without exposing sensitive user data.

Experts predict this technology will become foundational in AI certification processes, where sustained safety performance across interactions is a core criterion. OpenAI’s deployment signals a shift from reactive, patchwork fixes to proactive, systemic safety engineering integrated into AI development and operational workflows.

Industry-Wide Impact and Competitive Dynamics

Beyond regulation, this advancement accelerates an industry trend toward contextually aware, persistent safety systems. Competitors in the large language model space are expected to adopt similar cross-session frameworks to maintain compliance, foster user trust, and differentiate their offerings. This is likely to spark further innovation in longitudinal behavioral analytics combined with AI-driven interventions.

OpenAI’s integration of safety summaries with the GPT-5.5 Instant safety pipeline sets a precedent for real-time safety orchestration, raising the bar for user experience and trustworthiness across conversational AI platforms.

Detailed Insights on Cross-Conversation Risk Detection Metrics

Data Set and Methodology

The reported 50% improvement is based on a comprehensive multi-month evaluation involving over 100 million anonymized interactions across multiple languages, cultural contexts, and content domains. OpenAI’s research team applied advanced statistical and machine learning models to benchmark the new cross-conversation system against legacy single-session monitoring approaches.

Risk detection metrics included:

- Recall: The percentage of true risk cases correctly identified, critical for minimizing missed harmful content.

- Precision: The accuracy of flagged cases representing genuine risks, minimizing false alarms.

- Latency: Time from risk emergence to detection and intervention, essential for timely protective actions.

Results showed a 48% increase in recall, a 52% increase in precision, and a 30% reduction in intervention latency; the recall and precision gains bracket the headline figure of roughly 50%. Together, these improvements significantly reduce the probability of harmful outputs reaching users and enable faster, more effective protective measures.
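
For readers less familiar with these metrics, the sketch below computes precision and recall from confusion counts and shows how a relative change is calculated. The article does not state whether its percentages are relative or absolute, so this illustrates the distinction only. The counts are invented toy values, not OpenAI data.

```python
def precision(tp: int, fp: int) -> float:
    """Share of flagged cases that were genuine risks."""
    return tp / (tp + fp) if (tp + fp) else 0.0


def recall(tp: int, fn: int) -> float:
    """Share of genuine risk cases that were flagged."""
    return tp / (tp + fn) if (tp + fn) else 0.0


def relative_change(old: float, new: float) -> float:
    """Relative change from old to new; 0.25 means a 25% increase."""
    return (new - old) / old


# Toy confusion counts, invented purely to exercise the functions.
baseline_recall = recall(tp=40, fn=60)    # 40 of 100 true cases caught
candidate_recall = recall(tp=50, fn=50)   # 50 of 100 true cases caught
gain = relative_change(baseline_recall, candidate_recall)
```

In this toy case recall rises by 10 percentage points in absolute terms but by about 25% in relative terms, which is why it matters how a reported percentage improvement is defined.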

Use Cases Demonstrating Efficacy

Real-world examples highlight the system’s impact:

- Early detection of harmful ideation: Users exhibiting incremental signs of self-harm or psychological distress across conversations were identified early, allowing ChatGPT to adjust its tone, provide resource recommendations, or trigger human review before escalation occurred.

- Combating coordinated misinformation campaigns: The system detected subtle repeated content patterns indicative of misinformation efforts spanning multiple chats, which single-session filters often miss. This capability helps maintain platform integrity and supports public information accuracy.

- Enhanced moderation prioritization: By analyzing risk trajectories over time, the system enabled moderators to focus on high-risk cases more effectively, optimizing human resource allocation and improving overall safety outcomes.
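
The prioritization use case can be sketched as ranking cases by how quickly their risk scores are rising. The example below is an assumption-laden illustration: it measures a trajectory as the least-squares slope of per-conversation scores and orders cases with a heap. The function names and the choice of slope as the ranking signal are hypothetical.

```python
import heapq


def trajectory_slope(scores: list[float]) -> float:
    """Least-squares slope of risk scores over evenly spaced conversations."""
    n = len(scores)
    if n < 2:
        return 0.0
    mean_x = (n - 1) / 2
    mean_y = sum(scores) / n
    num = sum((i - mean_x) * (y - mean_y) for i, y in enumerate(scores))
    den = sum((i - mean_x) ** 2 for i in range(n))
    return num / den


def review_order(histories: dict[str, list[float]]) -> list[str]:
    """Return case IDs, steepest upward risk trajectory first."""
    # Negate the slope because heapq is a min-heap.
    heap = [(-trajectory_slope(s), case_id) for case_id, s in histories.items()]
    heapq.heapify(heap)
    return [heapq.heappop(heap)[1] for _ in range(len(heap))]
```

A case whose scores climb from 0.1 to 0.7 would be reviewed before one that stays flat at 0.1, even though a single-session filter looking only at the first conversation would rate them identically.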

Looking Ahead: The Future of ChatGPT Safety Summaries and GPT-5.5

Continuous Learning and Adaptive Safety Models

OpenAI plans to enhance cross-conversation safety summaries with continuous learning algorithms that evolve alongside emerging risk typologies. This advancement will facilitate predictive safety interventions, shifting the paradigm from reactive detection to anticipatory risk management.

The GPT-5.5 Instant safety framework will integrate real-time feedback loops involving human moderators and external AI ethics experts to dynamically calibrate safety thresholds. This iterative process aims to optimize the balance between precision, user experience, and freedom of expression without unduly restricting legitimate dialogue.
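
One simple way such a feedback loop could calibrate a threshold is to use moderator verdicts to find the lowest score cutoff that still meets a target precision. This is a sketch under stated assumptions, not a description of OpenAI’s process. The function name, the input format, and the assumption that scores are distinct are all choices made for this example.

```python
from typing import Optional


def calibrate_threshold(labeled: list[tuple[float, bool]],
                        target_precision: float) -> Optional[float]:
    """Lowest score threshold whose flagged set meets the target precision.

    `labeled` pairs a model risk score with a moderator verdict
    (True = genuine risk). Scores are assumed distinct.
    Returns None if no threshold qualifies.
    """
    best = None
    tp = fp = 0
    # Walk from the highest score down, flagging one more item each step.
    for score, is_risk in sorted(labeled, key=lambda p: p[0], reverse=True):
        if is_risk:
            tp += 1
        else:
            fp += 1
        if tp / (tp + fp) >= target_precision:
            best = score
    return best
```

Lowering the target precision lets the threshold drop, so more cases are flagged at the cost of more false alarms. That is the trade-off between safety coverage and unduly restricting legitimate dialogue that the paragraph above describes.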

Potential for Industry-Wide Standardization

As the technology matures, OpenAI intends to collaborate with industry partners, standards organizations, and regulatory bodies to establish interoperable safety summary protocols. Such standardization could enable cross-platform risk detection, expanding protective measures for users interacting with multiple AI services and ecosystems.

This vision aligns with ongoing initiatives to foster ethical AI ecosystems where anonymized, privacy-protected safety data informs collective defenses against emerging threats, promoting safer AI adoption worldwide.

For readers seeking deeper technical insights, explore our analysis of the Technical Framework Behind ChatGPT Safety Summaries, which details the data architecture and privacy safeguards employed.

To understand operational improvements introduced by the GPT-5.5 safety pipeline, see our comprehensive breakdown of the GPT-5.5 Instant Safety Pipeline and Integration.

For coverage on how AI regulations are shaping safety technologies, review OpenAI Safety Updates May 2026 and Regulatory Alignment.

Conclusion

OpenAI’s deployment of cross-conversation safety summaries marks a significant milestone in AI safety technology. By addressing gaps in how risks are detected and managed across multiple user interactions, the system, working alongside the GPT-5.5 Instant safety pipeline, delivers what OpenAI reports as a 50% improvement in early risk detection.

This advancement not only enhances ChatGPT’s ability to provide safer, more responsible AI experiences but also sets a rigorous standard for industry practices and regulatory compliance. As AI systems become increasingly integrated into sensitive domains such as healthcare, education, and mental health, longitudinal safety frameworks like this will be essential to maintaining user trust, safeguarding vulnerable populations, and promoting ethical AI deployment worldwide.