How to Use Claude’s New Dreaming Feature to Build Self-Improving AI Agents

Demand is growing for autonomous, adaptive systems that learn from experience. Claude’s new dreaming feature for Managed Agents, from Anthropic, targets that need: it lets AI agents review their past interactions, extract recurring patterns, and refine their behavior, in effect letting them “dream,” learn, and improve without direct human intervention.

This tutorial provides a comprehensive walkthrough of Claude’s dreaming feature, exploring its conceptual underpinnings, technical architecture, and practical implementation. Whether you are an AI researcher, developer, or enthusiast, this guide will equip you with the knowledge and tools to build self-improving AI agents leveraging this cutting-edge technology.

1. Understanding Claude’s Dreaming Feature: Context and Fundamentals

1.1 The Evolution of AI Agents and Self-Improvement

AI agents have traditionally been designed to operate within defined parameters, performing tasks based on programmed logic or learned models. While reinforcement learning and continual learning techniques have allowed incremental improvements, these methods often require explicit training signals or human feedback loops. For example, reinforcement learning typically involves an agent interacting with an environment, receiving rewards or penalties, and updating its policy accordingly. However, this process can be data-intensive, time-consuming, and dependent on carefully engineered reward functions.

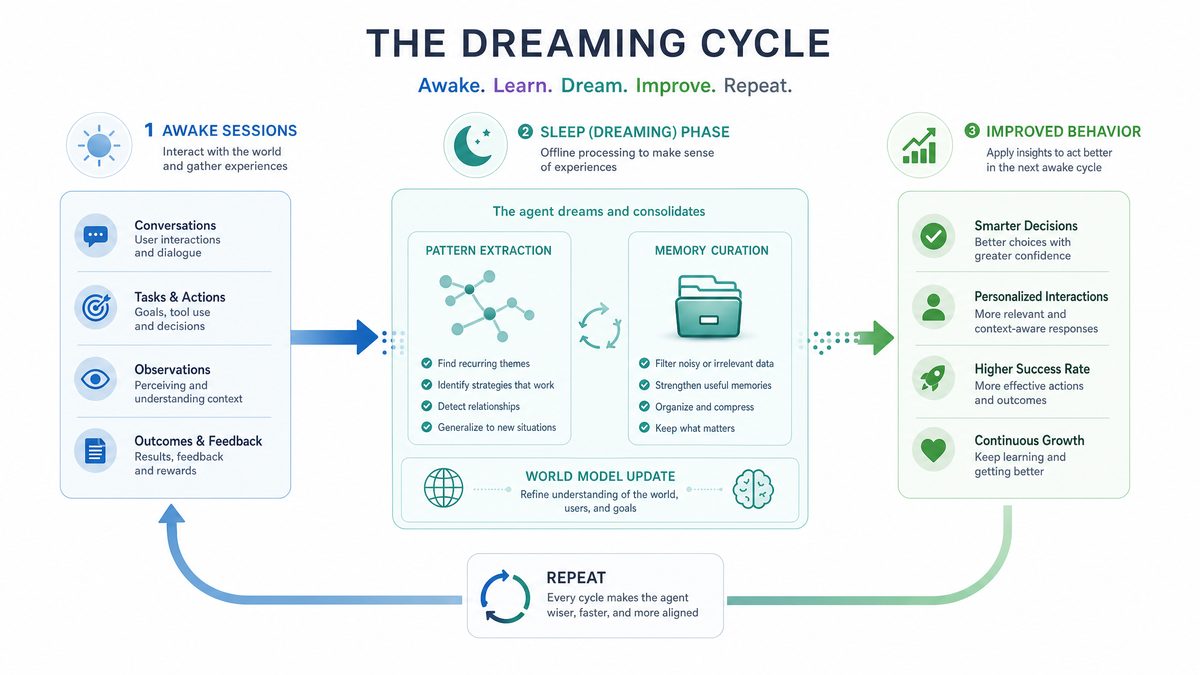

Claude’s dreaming feature takes a different approach: agents reflect on their own past interactions. The idea is inspired by biological dreaming, in which humans consolidate memories, extract patterns, and optimize cognitive strategies during sleep. Similarly, Claude’s dreaming mechanism lets Managed Agents analyze historical session data to identify successes, failures, and latent insights that inform future decisions. Because it draws on accumulated experience rather than continuous real-time feedback, the agent can improve asynchronously and at larger scale.

To draw an analogy, traditional AI learning resembles a student who learns only during class with the teacher’s guidance, while Claude’s dreaming feature empowers the student to review and synthesize lessons independently overnight, leading to deeper understanding and improved performance.

1.2 What Is Dreaming in Managed Agents?

Managed Agents in Claude’s ecosystem are AI entities designed to perform specific tasks autonomously. The dreaming feature is an internal process where these agents review their past conversations, decisions, and outcomes to derive higher-level abstractions and strategies. Unlike standard logging or monitoring, dreaming goes beyond mere data storage by performing sophisticated analysis and reasoning over the historical data.

Key capabilities enabled by dreaming include:

- Pattern Extraction: Identifying recurring themes and behaviors across sessions. For instance, agents might detect that certain user queries consistently lead to misunderstandings or dissatisfaction, prompting a reevaluation of response strategies.

- Self-Diagnosis: Recognizing failure modes and suboptimal decisions, such as repeated misclassifications or ineffective task prioritization.

- Strategy Refinement: Adjusting internal heuristics or response templates to improve response quality or efficiency.

- Knowledge Consolidation: Synthesizing disparate pieces of information into coherent insights, enabling agents to build a more holistic understanding of user needs and context.

This process occurs asynchronously and transparently, allowing agents to continuously improve without interrupting live interactions. Moreover, dreaming can serve as a catalyst for emergent behaviors where agents develop novel strategies or heuristics not explicitly programmed by developers.

1.3 Historical Inspirations: From Biological Dreams to AI Reflection

The inspiration for Claude’s dreaming feature traces back to neuroscience and cognitive science research on biological dreaming. Studies suggest that during REM sleep, the brain consolidates memories and solves problems by replaying and reorganizing experiences. This memory consolidation process is believed to strengthen neural connections and facilitate learning and creativity.

In the realm of AI, analogous mechanisms have been explored through techniques such as experience replay in reinforcement learning, where an agent reprocesses past experiences to stabilize learning and reduce catastrophic forgetting. Offline policy evaluation similarly allows agents to assess the effectiveness of strategies without real-time interaction.

Claude’s dreaming feature extends these ideas by integrating natural language understanding, pattern recognition, and self-modification capabilities within a managed agent framework. This novel integration enables agents to perform complex reasoning over unstructured conversational data, a significant advancement beyond traditional numeric or state-based experience replay.

By bridging cognitive science insights with state-of-the-art AI architectures, Anthropic has crafted a novel approach that empowers AI to self-improve continuously and autonomously. This convergence of biological inspiration and AI engineering marks a significant milestone in the evolution of intelligent systems.

2. Technical Architecture and Components of Claude’s Dreaming Feature

2.1 Overview of Managed Agents Framework

Before diving into dreaming specifics, it is crucial to understand the underlying Managed Agents framework that Claude operates within. Managed Agents are modular AI entities managed by Anthropic’s platform, capable of executing tasks, maintaining context, and interacting with users or other systems. This framework provides a robust foundation for building scalable, flexible AI applications.

The core components include:

- Session Manager: Handles interaction histories and session metadata, including timestamps, anonymized user identifiers, and contextual flags.

- Task Planner: Generates action plans based on objectives and context, utilizing goal decomposition and dynamic scheduling.

- Response Generator: Produces natural language or structured outputs, powered by advanced language models fine-tuned for the agent’s domain.

- Memory Store: Archives past sessions and agent states, facilitating retrieval and analysis during dreaming cycles.

The dreaming feature integrates deeply with these components, especially the Session Manager and Memory Store, to retrieve and analyze past data. This tight integration enables seamless data flow and efficient introspection.

2.2 The Dreaming Pipeline

The dreaming process follows a multi-stage pipeline designed to transform raw session data into actionable insights. The main stages are:

- Data Collection: Aggregation of past interaction logs, including user inputs, agent responses, and contextual parameters such as time of day or user sentiment. This data is sourced from the Memory Store and Session Manager.

- Preprocessing: Cleaning, normalizing, and structuring data to facilitate analysis. This involves removing noise, anonymizing sensitive information, tokenizing text, and encoding contextual metadata.

- Pattern Mining: Employing natural language processing (NLP) and machine learning techniques to detect recurring themes, anomalies, and correlations. Techniques include topic modeling, sentiment analysis, and anomaly detection.

- Insight Generation: Synthesizing patterns into strategic recommendations or hypotheses about agent behavior. This may involve generating summaries, identifying bottlenecks, or proposing new heuristics.

- Strategy Update: Modifying the agent’s internal heuristics, prompt templates, or decision policies based on insights. Updates are validated against performance metrics before deployment.

This pipeline runs periodically or is triggered by specific events, such as reaching a predefined number of sessions or performance thresholds. The asynchronous nature ensures minimal impact on real-time user interactions.
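The five stages can be pictured as a chain of plain functions. The sketch below is illustrative only: the `Session` record, the function names, and the word-count stand-in for pattern mining are assumptions made for this example, not Anthropic's implementation.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Session:
    """Hypothetical record of one interaction (not an Anthropic type)."""
    user_input: str
    agent_response: str
    resolved: bool


def collect(store):
    # Stage 1: gather past sessions from wherever they are archived.
    return list(store)


def preprocess(sessions):
    # Stage 2: normalize text; real preprocessing would also anonymize.
    return [
        Session(s.user_input.strip().lower(), s.agent_response.strip(), s.resolved)
        for s in sessions
    ]


def mine_patterns(sessions):
    # Stage 3: count words that appear in unresolved sessions, a toy
    # stand-in for topic modeling or anomaly detection.
    counts = Counter()
    for s in sessions:
        if not s.resolved:
            counts.update(set(s.user_input.split()))
    return counts


def generate_insights(patterns, min_count=2):
    # Stage 4: turn frequent failure terms into readable findings.
    return [
        f"Unresolved sessions often mention '{word}' ({n} times)"
        for word, n in patterns.most_common()
        if n >= min_count
    ]


def dream(store):
    # Stage 5 (strategy update) is left to the caller, who should
    # validate each insight before changing the agent.
    return generate_insights(mine_patterns(preprocess(collect(store))))


store = [
    Session("Refund status?", "Please wait.", False),
    Session("How do I get a refund", "See policy.", False),
    Session("Change my address", "Done.", True),
]
insights = dream(store)
```

Keeping each stage a separate function makes it easy to swap the toy word count for a real model later without touching the rest of the chain.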

2.3 Core Algorithms and Models Used

Claude’s dreaming feature leverages a combination of advanced AI models and algorithms to perform its introspective functions:

- Transformer-based NLP Models: To semantically analyze conversation transcripts and extract relevant information. These models capture context, intent, and sentiment, and can summarize transcripts or generate hypotheses.

- Clustering Algorithms: For grouping similar interaction patterns and identifying outliers. Methods such as k-means, DBSCAN, or hierarchical clustering help discover latent structures in data.

- Sequence Analysis: To understand temporal dependencies and evolution of agent behavior over time. Techniques include hidden Markov models and recurrent neural networks.

- Reinforcement Learning Adjustments: To update policy parameters based on discovered insights. Insights from dreaming can be translated into updated reward functions or exploration strategies.

- Anomaly Detection: Algorithms to identify rare or unexpected behaviors that may indicate bugs or emerging issues.

These models work synergistically to enable sophisticated introspection and adaptation without manual programming. The modular design also allows for future integration of novel algorithms as research progresses.

2.4 Data Privacy and Security Considerations

Given that dreaming involves processing potentially sensitive interaction data, Anthropic has implemented robust privacy safeguards to protect user information and maintain compliance with legal standards:

- Data Anonymization: Removing personally identifiable information (PII) before analysis, using techniques like token replacement, hashing, or differential privacy.

- Secure Storage: Encrypting session logs both at rest and in transit, with strict access controls and audit trails to prevent unauthorized access.

- Compliance: Ensuring adherence to data protection regulations such as GDPR and CCPA, including user consent management and data minimization principles.

- Data Retention Policies: Implementing configurable retention periods to limit data storage duration according to regulatory and organizational requirements.

- Access Transparency: Providing mechanisms for users and administrators to review what data is collected and how it is used.

These measures ensure that dreaming operations maintain ethical standards and user trust, an essential factor in deploying autonomous AI systems at scale.
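As a rough illustration of the anonymization step, the sketch below redacts email addresses and phone-like numbers and replaces user identifiers with a salted hash. The regular expressions are deliberately simple and will miss many real-world formats, and salted hashing is pseudonymization rather than full anonymization or differential privacy; all names here are hypothetical.

```python
import hashlib
import re

# Simplistic patterns for illustration; production PII detection needs more.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def pseudonymize(user_id: str, salt: str) -> str:
    # Salted SHA-256 so the same user always maps to the same token
    # without the raw identifier being stored.
    return hashlib.sha256((salt + user_id).encode("utf-8")).hexdigest()[:16]


def redact(text: str) -> str:
    # Replace emails first, then phone-like digit runs, with fixed tokens.
    text = EMAIL_RE.sub("[EMAIL]", text)
    return PHONE_RE.sub("[PHONE]", text)


clean = redact("Reach me at jane.doe@example.com or +1 (555) 010-9999.")
token = pseudonymize("user-42", salt="rotate-this-salt")
```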

3. Step-by-Step Tutorial: Implementing Claude’s Dreaming Feature in Your Managed Agent

3.1 Prerequisites and Setup

Before getting started, ensure the following prerequisites are met to successfully leverage Claude’s dreaming feature:

- Access to Anthropic’s Claude Managed Agents platform with the dreaming feature enabled. This may require contacting Anthropic for appropriate API access or platform permissions.

- Basic familiarity with Anthropic’s API and agent configuration paradigms, including Managed Agents lifecycle and capabilities.

- A development environment with Python 3.8+ (or another language supported by Anthropic’s SDKs) and the necessary dependencies installed.

- API key and authentication credentials for secure access, with appropriate scopes to manage agents and retrieve dreaming insights.

- Understanding of JSON and configuration file formats for agent setup and customization.

Once you have these ready, you can proceed to build an agent capable of dreaming and self-improvement.

3.2 Creating a Basic Managed Agent

Start by creating a simple Managed Agent to perform a task, such as customer support or content summarization. Below is a minimal example using the Anthropic SDK in Python:

```python
from anthropic import Anthropic

client = Anthropic(api_key="your_api_key")  # or set ANTHROPIC_API_KEY in the environment


def simple_agent_response(user_input):
    """Send one user message to Claude and return the text of the reply."""
    response = client.messages.create(
        model="claude-3-5-sonnet-latest",  # example ID; use a model available to your account
        max_tokens=300,
        messages=[{"role": "user", "content": user_input}],
    )
    # The reply is a list of content blocks; the first one holds the text.
    return response.content[0].text


user_message = "Can you help me understand the refund policy?"
print(simple_agent_response(user_message))
```

This script initializes a basic agent that responds to user queries by sending each message to a Claude model through the Messages API. This foundational setup can be expanded with state management, session tracking, and extended capabilities.

3.3 Enabling Dreaming for Your Agent

To enable dreaming, you must configure the Managed Agent to store session data and activate the dreaming pipeline. This typically involves setting flags or parameters in the agent’s configuration file or API calls.

Example configuration snippet:

```json
{
  "agent_name": "SupportAgent",
  "enable_dreaming": true,
  "dreaming_schedule": "daily",
  "session_storage": {
    "enabled": true,
    "retention_period_days": 30
  }
}
```

Here, enable_dreaming activates the dreaming feature, while dreaming_schedule controls how often the agent introspects its data (e.g., daily, weekly, or event-driven). The session_storage block ensures that interaction histories are archived securely, with a retention policy to manage storage costs and compliance. Additional parameters can include filters to exclude sensitive data or specify data granularity.
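Before submitting a configuration like this, it can help to check it locally. The following is a minimal sketch that validates the fields shown above; the accepted schedule strings (in particular the literal `"event-driven"`) and the rule that dreaming requires session storage are assumptions drawn from the description, not a documented schema.

```python
import json

# Assumed spellings of the schedule options mentioned in the text.
ALLOWED_SCHEDULES = {"daily", "weekly", "event-driven"}


def validate_config(raw: str) -> dict:
    """Parse a config JSON string and raise ValueError listing any problems."""
    config = json.loads(raw)
    errors = []
    if not isinstance(config.get("enable_dreaming"), bool):
        errors.append("enable_dreaming must be true or false")
    if config.get("dreaming_schedule") not in ALLOWED_SCHEDULES:
        errors.append(
            "dreaming_schedule must be one of " + ", ".join(sorted(ALLOWED_SCHEDULES))
        )
    storage = config.get("session_storage", {})
    if config.get("enable_dreaming") and not storage.get("enabled"):
        errors.append("dreaming needs session_storage.enabled = true")
    days = storage.get("retention_period_days")
    # bool is a subclass of int in Python, so exclude it explicitly.
    if isinstance(days, bool) or not isinstance(days, int) or days <= 0:
        errors.append("retention_period_days must be a positive integer")
    if errors:
        raise ValueError("; ".join(errors))
    return config


config = validate_config("""
{
  "agent_name": "SupportAgent",
  "enable_dreaming": true,
  "dreaming_schedule": "daily",
  "session_storage": {"enabled": true, "retention_period_days": 30}
}
""")
```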

3.4 Accessing and Analyzing Dreaming Insights

Once dreaming is activated, the agent’s backend will periodically perform introspection. You can retrieve the resulting insights via API endpoints or dashboard interfaces provided by Anthropic.

Example API call to fetch the latest dreaming insights:

```python
dreaming_insights = client.managed_agents.get_dreaming_insights(agent_id="SupportAgent-001")
print(dreaming_insights)
```

The returned data includes pattern summaries, flagged issues, and recommended strategy adjustments. A typical output might highlight recurring user complaints about response delays or identify ineffective response templates that confuse users. Insights can be presented as textual summaries, structured JSON objects, or visual analytics in the dashboard.

The main fields of the dreaming insights JSON structure are summarized below:

| Field | Description | Example |
|---|---|---|
| pattern_summaries | Key recurring themes identified | “Users frequently ask about refund policy after purchase.” |
| flagged_issues | Potential failure modes or anomalies | “Response delay exceeds 2 seconds in 15% of sessions.” |
| recommended_prompt_template | Suggested prompt adjustment for improved responses | “Please provide clear refund instructions with links.” |
| confidence_scores | Reliability of each insight | 0.92 |
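One practical use of the confidence field is to discard weak insights before acting on them. The sketch below assumes, purely for illustration, that `confidence_scores` is a mapping from field name to score; the table shows only a single example value, so the real payload shape may differ.

```python
def confident_fields(insights: dict, threshold: float = 0.8) -> dict:
    """Keep only the insight fields whose confidence meets the threshold."""
    scores = insights.get("confidence_scores", {})
    return {
        name: value
        for name, value in insights.items()
        if name != "confidence_scores" and scores.get(name, 0.0) >= threshold
    }


# Hypothetical payload built from the field names in the table above.
sample = {
    "pattern_summaries": ["Users frequently ask about refund policy after purchase."],
    "flagged_issues": ["Response delay exceeds 2 seconds in 15% of sessions."],
    "recommended_prompt_template": "Please provide clear refund instructions with links.",
    "confidence_scores": {
        "pattern_summaries": 0.92,
        "flagged_issues": 0.55,
        "recommended_prompt_template": 0.85,
    },
}
kept = confident_fields(sample)
```

Fields with no score are treated as zero confidence and dropped, which errs on the side of not acting.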

3.5 Updating Agent Behavior Based on Dreams

With insights in hand, you can programmatically update your agent to improve performance. For example, you might modify prompt templates, adjust task prioritization, or refine response generation heuristics. This process forms a feedback loop enabling continuous self-improvement without manual retraining.

Example of applying a recommended prompt adjustment:

```python
# Pull the suggested template out of the insights payload.
new_prompt_template = dreaming_insights["recommended_prompt_template"]

# Fetch the current configuration, swap in the new template, and save it.
agent_config = client.managed_agents.get_agent_config(agent_id="SupportAgent-001")
agent_config["prompt_template"] = new_prompt_template
client.managed_agents.update_agent_config(agent_id="SupportAgent-001", config=agent_config)
```

It is advisable to validate updates in a staging environment or via A/B testing to ensure positive impact before full deployment. Monitoring post-update performance metrics such as user satisfaction scores, response accuracy, and latency is critical to assess effectiveness.
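For the A/B test mentioned above, one simple way to decide whether a new template is actually better is a two-proportion z-test on a success metric such as resolution rate. This is a generic statistical sketch, not part of any Anthropic tooling; the function names and the one-sided 5% threshold are choices made for the example.

```python
import math


def two_proportion_z(success_a, n_a, success_b, n_b):
    """z statistic for the difference in success rates (B minus A)."""
    p_a, p_b = success_a / n_a, success_b / n_b
    pooled = (success_a + success_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 0.0  # all successes or all failures: no evidence either way
    return (p_b - p_a) / se


def should_promote(success_a, n_a, success_b, n_b, z_critical=1.645):
    # 1.645 is the one-sided 5% critical value of the standard normal.
    return two_proportion_z(success_a, n_a, success_b, n_b) > z_critical


# Variant A (current template) vs. variant B (dreaming-recommended template).
promote = should_promote(success_a=400, n_a=500, success_b=440, n_b=500)
```

With small samples the normal approximation is unreliable, so an exact test or a longer experiment would be the safer choice.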

3.6 Automating Dreaming-Driven Updates

To fully leverage dreaming, automate the integration of insights into agent updates. This can be achieved through scheduled jobs or event-driven triggers within your development pipeline or orchestration infrastructure.

Consider implementing a pipeline that:

- Fetches dreaming insights daily via API.

- Validates recommendations against performance metrics and thresholds.

- Applies approved updates to agent configurations programmatically.

- Monitors post-update performance for further adjustment.

Automation ensures your agents evolve dynamically in response to real-world usage and reduces the burden on development teams. Incorporating alerting and rollback mechanisms further enhances reliability.
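The four steps above, plus a rollback, can be sketched as one function that receives its dependencies as callables. Nothing here calls a real API: `fetch_insights`, `get_config`, `apply_config`, and `measure` are hypothetical hooks you would wire to your own client and metrics, and the single `confidence` key is an assumed simplification of the insights payload.

```python
def run_update_cycle(fetch_insights, get_config, apply_config, measure,
                     min_confidence=0.8):
    """Fetch, validate, apply, and monitor one dreaming-driven update."""
    insights = fetch_insights()
    template = insights.get("recommended_prompt_template")
    if not template or insights.get("confidence", 0.0) < min_confidence:
        return "skipped"

    previous = dict(get_config())   # copy kept for rollback
    baseline = measure()            # metric before the change
    apply_config({**previous, "prompt_template": template})

    if measure() < baseline:        # metric got worse: restore the old config
        apply_config(previous)
        return "rolled_back"
    return "applied"


# In-memory stand-ins so the sketch runs without any network access.
current = {"prompt_template": "old template"}
scores = iter([0.70, 0.78])  # metric before and after the update

outcome = run_update_cycle(
    fetch_insights=lambda: {
        "recommended_prompt_template": "new template",
        "confidence": 0.9,
    },
    get_config=lambda: current,
    apply_config=current.update,
    measure=lambda: next(scores),
)
```

Passing the hooks in as arguments keeps the decision logic testable offline; a scheduler such as cron can then call the function once a day.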

4. Advanced Techniques and Future Directions

4.1 Combining Dreaming with Reinforcement Learning

While dreaming provides high-level introspection, integrating it with reinforcement learning (RL) can accelerate agent optimization. For instance, insights from dreaming can shape reward functions or guide exploration strategies in RL algorithms.

This hybrid approach allows agents to both reflect on past data and actively experiment with new behaviors, creating a powerful synergy. For example, dreaming might identify that certain response styles generate higher user satisfaction, leading to updated reward signals that bias RL agents towards these behaviors. Conversely, RL exploration data can feed back into dreaming for deeper analysis.

Such integration presents challenges, including aligning asynchronous dreaming updates with ongoing RL training and managing stability. However, recent research into meta-learning and hierarchical RL suggests promising pathways for combining introspective and experiential learning.
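To make the reward-shaping idea concrete, here is a toy epsilon-greedy bandit (the simplest RL setting) in which a dreaming-derived bonus is added to the reward of a favored response style. The style names, success rates, and bonus values are invented for the example, and a production system would need far more care about stability than this sketch shows.

```python
import random


def run_bandit(true_rates, bonuses, steps=2000, epsilon=0.1, seed=0):
    """Return how often each style was chosen under shaped rewards."""
    rng = random.Random(seed)
    styles = list(true_rates)
    value = {s: 0.0 for s in styles}   # running mean reward per style
    count = {s: 0 for s in styles}
    for _ in range(steps):
        if rng.random() < epsilon:
            style = rng.choice(styles)            # explore
        else:
            style = max(styles, key=value.get)    # exploit
        # Simulated user feedback: 1 for a satisfied user, else 0.
        reward = 1.0 if rng.random() < true_rates[style] else 0.0
        reward += bonuses.get(style, 0.0)         # shaping from a dreaming insight
        count[style] += 1
        value[style] += (reward - value[style]) / count[style]
    return count


# Both styles satisfy users equally often; the bonus tips the balance.
counts = run_bandit(
    true_rates={"concise": 0.5, "detailed": 0.5},
    bonuses={"detailed": 0.3},
)
```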

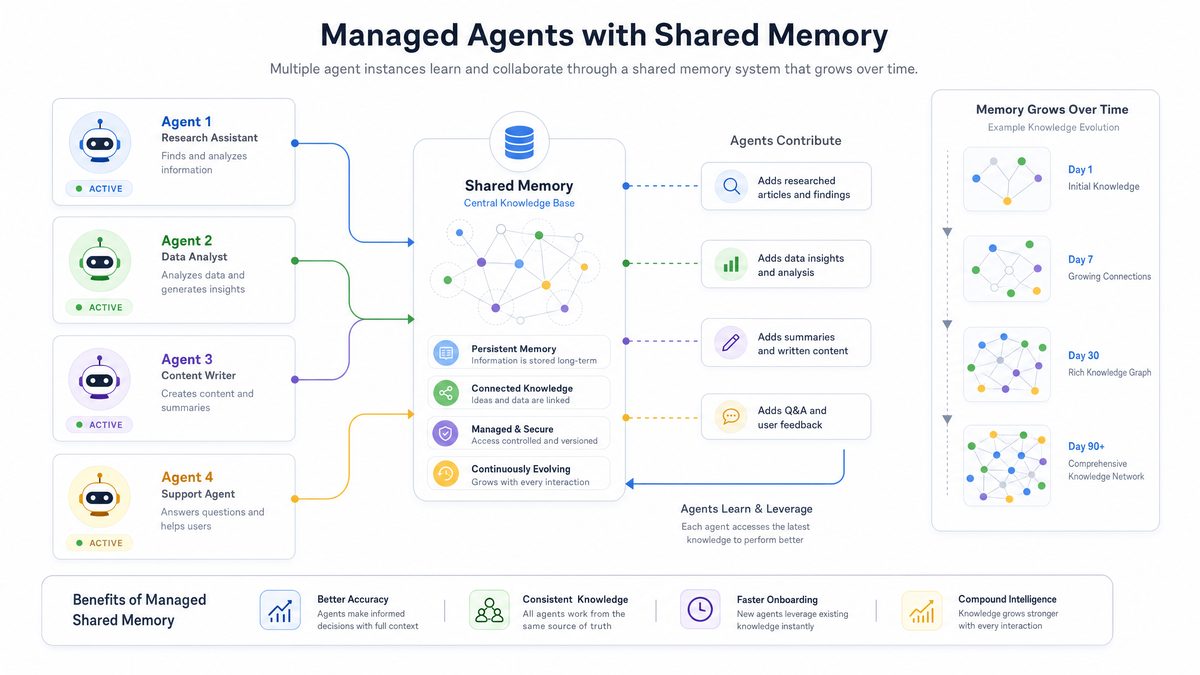

4.2 Multi-Agent Dreaming and Collaboration

In complex environments, multiple Managed Agents might operate simultaneously, each specializing in different domains or tasks. Claude’s dreaming feature can be extended to enable collaborative dreaming, where agents share insights and co-evolve strategies.

This opens avenues for emergent collective intelligence, distributed problem solving, and scalable AI ecosystems. For example, a customer support agent could share insights with a sales assistant agent, enabling cross-functional learning and improved user experiences.

Implementing collaborative dreaming involves challenges such as:

- Data sharing protocols that preserve privacy and security.

- Consensus algorithms to reconcile conflicting insights.

- Scalable architectures to support cross-agent communication.

Future frameworks may include centralized dreaming hubs or decentralized peer-to-peer introspection models to balance efficiency and autonomy.
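As one very simple way to reconcile conflicting insights, agents' recommendations can be combined by a confidence-weighted vote per topic. This is far simpler than a real consensus protocol and is shown only to illustrate the idea; the tuple layout and agent names are hypothetical.

```python
from collections import defaultdict


def reconcile(proposals):
    """proposals: iterable of (agent, topic, recommendation, confidence)."""
    totals = defaultdict(lambda: defaultdict(float))
    for _agent, topic, recommendation, confidence in proposals:
        totals[topic][recommendation] += confidence
    # For each topic, pick the recommendation with the most total confidence.
    return {
        topic: max(options, key=options.get)
        for topic, options in totals.items()
    }


decisions = reconcile([
    ("support", "tone", "formal", 0.6),
    ("sales", "tone", "casual", 0.5),
    ("billing", "tone", "casual", 0.4),
    ("support", "refund_links", "include", 0.9),
])
```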

4.3 Customizing Dreaming for Domain-Specific Applications

Different application domains may require tailored dreaming configurations to maximize relevance and utility. For example, a financial advisory agent might prioritize compliance-related insights, while a healthcare assistant might focus on patient safety patterns.

Anthropic’s framework allows customization of:

- Data filtering rules during dreaming, enabling exclusion or emphasis of certain data types.

- Priority weighting for different types of insights, such as favoring regulatory compliance over conversational style in finance.

- Integration with external knowledge bases or ontologies to enrich analysis with domain-specific context.

- Custom alerting and reporting tailored to stakeholder needs.

Such adaptability enhances dreaming’s utility across diverse industries and regulatory environments.
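Priority weighting can be as simple as multiplying each insight's confidence by a per-category weight and sorting. The categories, weights, and insight shape below are assumptions chosen to mirror the finance example, not a documented configuration format.

```python
def rank_insights(insights, weights, default_weight=1.0):
    """Sort insights by category weight times confidence, highest first."""
    return sorted(
        insights,
        key=lambda i: weights.get(i["category"], default_weight) * i["confidence"],
        reverse=True,
    )


# A finance deployment might favor compliance over conversational style.
finance_weights = {"compliance": 3.0, "style": 0.5}
ranked = rank_insights(
    [
        {"category": "style", "confidence": 0.9, "text": "Shorten greetings."},
        {"category": "compliance", "confidence": 0.6, "text": "Add risk disclosure."},
        {"category": "latency", "confidence": 0.8, "text": "Cache rate tables."},
    ],
    finance_weights,
)
order = [i["category"] for i in ranked]
```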

4.4 Ethical Considerations and Responsible Use

As agents become more autonomous through dreaming, maintaining ethical guardrails is paramount. Developers should ensure:

- Transparency about self-improvement processes: Users and stakeholders should understand how and why agents evolve.

- Bias detection and mitigation: Pattern extraction algorithms must be audited to prevent reinforcement of harmful biases or stereotypes.

- User consent for data usage: Clear policies and mechanisms for obtaining user permission for data inclusion in dreaming.

- Regular audits of agent behavior post-update: Continuous monitoring to detect unintended consequences or degradations.

- Fail-safe mechanisms: Ability to roll back updates or disable dreaming if undesired behaviors emerge.

Responsible deployment safeguards user trust and aligns with societal values, especially as AI agents assume greater decision-making autonomy.

4.5 Future Research and Potential Enhancements

The dreaming feature is at the forefront of AI self-improvement research. Future enhancements may include:

- Real-time dreaming: Enabling continuous adaptation during live sessions rather than periodic batch updates, improving responsiveness to emerging trends.

- Integration with multimodal data sources: Incorporating vision, audio, sensor data, and other modalities for richer introspection and holistic understanding.

- Explainable dreaming outputs: Developing interpretable reports that clarify why certain changes are recommended, aiding developer trust and regulatory compliance.

- Cross-platform dreaming: Allowing agents to learn from heterogeneous environments and applications, promoting transfer learning and generalization.

- Adaptive dreaming schedules: Dynamic adjustment of dreaming frequency and depth based on agent performance and environmental volatility.

These advances will further empower AI agents to reach unprecedented levels of autonomy and intelligence, ushering in a new era of self-sustaining AI systems.

5. Comparative Analysis: Dreaming Feature Versus Traditional AI Learning Approaches

| Aspect | Claude’s Dreaming Feature | Traditional Reinforcement Learning | Supervised Fine-Tuning |
|---|---|---|---|
| Learning Paradigm | Self-reflective analysis of past sessions to derive improvements | Trial-and-error interaction with environment guided by rewards | Training on labeled datasets with human-provided targets |
| Human Intervention | Minimal; autonomous introspection and updates | Moderate; requires reward design and tuning | High; needs curated datasets and annotations |
| Adaptation Speed | Periodic, asynchronous updates based on accumulated data | Potentially incremental during interaction | Batch updates after retraining cycles |
| Data Requirements | Historical session logs and interaction metadata | Simulated or real environment feedback | Large labeled datasets |
| Explainability | Emerging; patterns and recommendations can be inspected | Often opaque; policy learned as black-box | Varies; depends on model and feature engineering |
| Use Cases | Managed conversational agents, adaptive assistants | Robotics, game playing, control systems | Classification, prediction, language models |

6. Case Studies: Real-World Applications of Claude’s Dreaming Feature

6.1 Customer Support Automation

A leading e-commerce platform integrated Claude’s dreaming feature into their customer support Managed Agent. Initially, the agent handled common queries but struggled with ambiguous refund questions and escalations.

After enabling dreaming, the agent autonomously identified frequent misunderstandings around the refund policy and detected slow response times during peak hours. Leveraging these insights, it refined its prompt templates to provide clearer instructions and adjusted task prioritization to handle urgent cases faster.

Results included a 20% reduction in average handling time, a 15% increase in customer satisfaction scores, and fewer escalations to human agents, demonstrating the practical impact of autonomous dreaming-driven improvement.

6.2 Healthcare Virtual Assistant

An AI-powered healthcare assistant utilized dreaming to enhance patient interactions over time. By analyzing conversation logs, the agent detected patterns related to medication adherence confusion and potential safety risks.

Dreaming-generated insights informed updates that included clearer medication schedules and proactive reminders. Additionally, the agent integrated with external medical ontologies to better contextualize patient queries.

This approach improved patient engagement and safety compliance, showcasing dreaming’s adaptability to sensitive, high-stakes domains.

6.3 Financial Advisory Agent

A financial services company deployed a Managed Agent for client portfolio advice. Dreaming enabled the agent to continuously monitor compliance-related interactions, flagging potential regulatory risks and improving explanation clarity.

Customized dreaming configurations prioritized compliance insights, ensuring the agent met stringent legal standards while enhancing client trust.

These case studies illustrate dreaming’s versatility and effectiveness across diverse industries and applications.

7. Deep Dive: Algorithmic Foundations of Claude’s Dreaming Feature

7.1 Natural Language Understanding for Pattern Extraction

At the heart of dreaming lies advanced natural language understanding (NLU), which enables semantic analysis of conversation transcripts. Techniques include:

- Named Entity Recognition (NER): Identifying key entities such as products, dates, or user intents within dialogues.

- Sentiment Analysis: Measuring emotional tone to detect user frustration or satisfaction.

- Topic Modeling: Uncovering latent themes through algorithms like Latent Dirichlet Allocation (LDA) or neural topic models.

- Dialogue Act Classification: Categorizing utterances by function (e.g., question, request, confirmation) to understand conversation flow.

These components feed into higher-level clustering and summarization processes, enabling the agent to detect nuanced patterns beyond surface-level keywords.
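To give a feel for two of these components, the sketch below implements rule-based dialogue act classification and lexicon-based sentiment scoring. Real systems would use trained models; the word lists and labels here are small, hand-picked assumptions for illustration.

```python
import re

QUESTION_WORDS = {"what", "how", "why", "when", "where", "who",
                  "can", "could", "do", "does", "is", "are"}
REQUEST_MARKERS = ("please", "i need", "i want", "i would like")
CONFIRMATIONS = {"yes", "ok", "okay", "sure", "correct"}
POSITIVE = {"thanks", "great", "helpful", "perfect"}
NEGATIVE = {"frustrated", "angry", "useless", "confusing", "slow"}


def tokens(utterance):
    # Lowercase words only; punctuation and digits are dropped.
    return re.findall(r"[a-z']+", utterance.lower())


def dialogue_act(utterance):
    """Label an utterance as question, request, confirmation, or statement."""
    words = tokens(utterance)
    if not words:
        return "other"
    if utterance.strip().endswith("?") or words[0] in QUESTION_WORDS:
        return "question"
    if any(marker in " ".join(words) for marker in REQUEST_MARKERS):
        return "request"
    if words[0] in CONFIRMATIONS:
        return "confirmation"
    return "statement"


def sentiment(utterance):
    """Positive minus negative word count; below zero suggests frustration."""
    words = tokens(utterance)
    return sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)


act = dialogue_act("How do I get a refund?")
mood = sentiment("This is slow and confusing")
```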

7.2 Clustering and Anomaly Detection Techniques

To group similar interactions and identify outliers, dreaming employs clustering and anomaly detection algorithms:

| Algorithm | Description | Use Case in Dreaming |
|---|---|---|
| k-means Clustering | Partitions data into k clusters by minimizing within-cluster variance | Grouping similar user queries or agent responses |
| DBSCAN | Density-based clustering to identify clusters of arbitrary shape and outliers | Detecting rare failure modes or unusual interaction patterns |
| Isolation Forest | Anomaly detection via random partitioning to isolate anomalous points | Flagging sessions with abnormal latency or error rates |
| Autoencoders | Neural networks trained to reconstruct input data; large reconstruction errors indicate anomalies | Spotting subtle deviations in conversation flows |
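As a concrete instance of the first row, here is a minimal pure-Python k-means. It assumes interactions have already been turned into numeric feature vectors (for example, embeddings), which this sketch skips; the 2-D points are made up, and the evenly spaced initialization is a simplification of the usual random or k-means++ seeding.

```python
import math


def kmeans(points, k, iterations=20):
    """Return (centroids, clusters) for a list of equal-length tuples."""
    # Deterministic init: pick k points evenly spaced through the list.
    centroids = [points[i * len(points) // k] for i in range(k)]
    clusters = [[] for _ in range(k)]
    for _ in range(iterations):
        # Assignment step: each point joins its nearest centroid.
        clusters = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda c: math.dist(p, centroids[c]))
            clusters[nearest].append(p)
        # Update step: move each centroid to the mean of its cluster.
        new_centroids = [
            tuple(sum(dim) / len(cluster) for dim in zip(*cluster))
            if cluster else centroids[i]
            for i, cluster in enumerate(clusters)
        ]
        if new_centroids == centroids:
            break  # converged
        centroids = new_centroids
    return centroids, clusters


# Two obvious groups, e.g. (message length, latency) after scaling.
points = [(1.0, 1.0), (1.2, 0.8), (0.8, 1.1),
          (8.0, 8.0), (8.2, 7.9), (7.9, 8.3)]
centroids, clusters = kmeans(points, k=2)
```

`math.dist` requires Python 3.8 or later, which matches the prerequisites listed earlier.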

7.3 Sequence Modeling and Temporal Analysis

Understanding how agent behavior evolves over time is critical for meaningful dreaming. Techniques include:

- Hidden Markov Models (HMMs): Modeling state transitions in dialogue or task execution sequences.

- Recurrent Neural Networks (RNNs) and Long Short-Term Memory (LSTM): Capturing long-range dependencies in interaction histories.

- Temporal Convolutional Networks (TCNs): Providing efficient sequence modeling with parallelism advantages.

- Change Point Detection: Identifying moments where agent behavior or user interactions shift significantly, which may indicate a need for strategy updates.

These methods enable dreaming to not only analyze static snapshots but also to capture dynamic trends and emerging issues.
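Change point detection is the easiest of these to show in a few lines. The sketch below finds the single split that maximizes the difference in mean between the two halves of a series; it is a naive illustration (a real detector would use a statistical test such as CUSUM), and the escalation-rate data is invented.

```python
from statistics import mean


def best_change_point(series, min_size=3):
    """Return (index, gap): the split with the largest shift in mean."""
    best_index, best_gap = None, 0.0
    # Require at least min_size observations on each side of the split.
    for i in range(min_size, len(series) - min_size + 1):
        gap = abs(mean(series[i:]) - mean(series[:i]))
        if gap > best_gap:
            best_index, best_gap = i, gap
    return best_index, best_gap


# Hypothetical daily escalation rates; behavior shifts after the fifth day.
rates = [0.10, 0.11, 0.09, 0.10, 0.12, 0.20, 0.21, 0.19, 0.22, 0.20]
index, gap = best_change_point(rates)
```

Here `index` is the position of the first observation after the shift, so `rates[:index]` and `rates[index:]` are the "before" and "after" segments.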

7.4 Policy Update and Adaptation Mechanisms

The ultimate goal of dreaming is to translate insights into improved agent policies. This can be achieved through:

- Heuristic Rule Updates: Adjusting hard-coded rules or thresholds based on discovered patterns.

- Prompt Template Refinement: Modifying language model prompts to guide generation toward desired behaviors.

- Parameter Fine-tuning: Applying lightweight fine-tuning or prompt tuning using curated datasets derived from dreaming insights.

- Reinforcement Learning Policy Adjustments: Updating reward functions or exploration parameters based on dreaming recommendations.

These mechanisms ensure that dreaming leads to tangible behavioral improvements while maintaining system stability.
