How Top Engineering Teams Are Using GPT-5.5 and Codex to Ship 10x Faster

The landscape of software development is undergoing a profound transformation, driven by rapid advances in artificial intelligence. Earlier large language models (LLMs) like GPT-3.5 and GPT-4 offered compelling glimpses of AI’s potential for code generation and analysis. More sophisticated models such as GPT-5.5 and the specialized OpenAI Codex have moved beyond mere assistance to become integral components of modern engineering workflows. This article looks at how leading engineering teams are using these tools not just to improve productivity incrementally, but to achieve a 10x acceleration in their shipping cycles, particularly in complex areas like debugging and large-scale branch merges.

The promise of AI in software development has always been alluring: intelligent autocomplete, automated testing, and even self-healing code. However, the practical application often fell short of the hype. Early models struggled with context, introduced subtle bugs, or required extensive human oversight. GPT-5.5 and Codex, by virtue of their vastly improved contextual understanding, reasoning capabilities, and domain-specific training on vast code repositories, are fundamentally changing this dynamic. They are evolving from sophisticated assistants to true collaborators, capable of tackling problems that were previously the exclusive domain of highly experienced human engineers.

This deep dive will explore real-world case studies, technical methodologies, and the strategic implications of integrating these powerful AI models into the core of software development processes. We will examine how developers like Dan Shipper and Pietro Schirano, among others, are pioneering new paradigms for code quality, velocity, and innovation. The discussion will cover the architectural considerations for integrating these models, the types of problems they are best suited to solve, and the cultural shifts required within engineering teams to fully harness their potential.

The Evolution of AI in Code: From Assistance to Autonomy

To appreciate the impact of GPT-5.5 and Codex, it’s crucial to understand the evolutionary path of AI in programming. Initial AI tools focused on syntax highlighting, basic refactoring suggestions, and simple code completion. Tools like TabNine and GitHub Copilot (which heavily leverages Codex) marked a significant leap, offering context-aware suggestions and even generating entire functions based on comments or function signatures. These tools were invaluable for reducing boilerplate and accelerating routine coding tasks.

However, the challenges of complex debugging, understanding intricate legacy codebases, or resolving massive merge conflicts remained largely human-centric. These tasks demand a deep understanding of system architecture, data flow, potential side effects, and the ability to reason about multiple interacting components. This is where GPT-5.5 and the latest iterations of Codex demonstrate their disruptive power. Their enhanced capabilities include:

- Vastly Improved Context Window and Retention: GPT-5.5 can process and retain significantly more code and conversational history, allowing it to understand the broader architectural context of a problem, not just isolated functions.

- Superior Reasoning and Problem-Solving: These models exhibit a more advanced ability to reason through complex logic, identify subtle bugs, and propose non-obvious solutions. They can connect disparate pieces of information across a codebase.

- Domain-Specific Expertise (Codex): Codex, being specifically trained on code, excels at understanding programming paradigms, common libraries, error patterns, and best practices across various languages. Its knowledge base is vast and highly specialized.

- Multi-Turn Interaction and Refinement: Developers can engage in iterative dialogues with these models, providing feedback, asking for alternative solutions, or requesting deeper explanations, much like collaborating with a human peer.

- Code Generation with Higher Fidelity: While still not perfect, the code generated by GPT-5.5 and Codex is often closer to production-ready, requiring less human correction and offering better adherence to coding standards.

Case Study: Dan Shipper and the Art of AI-Assisted Debugging

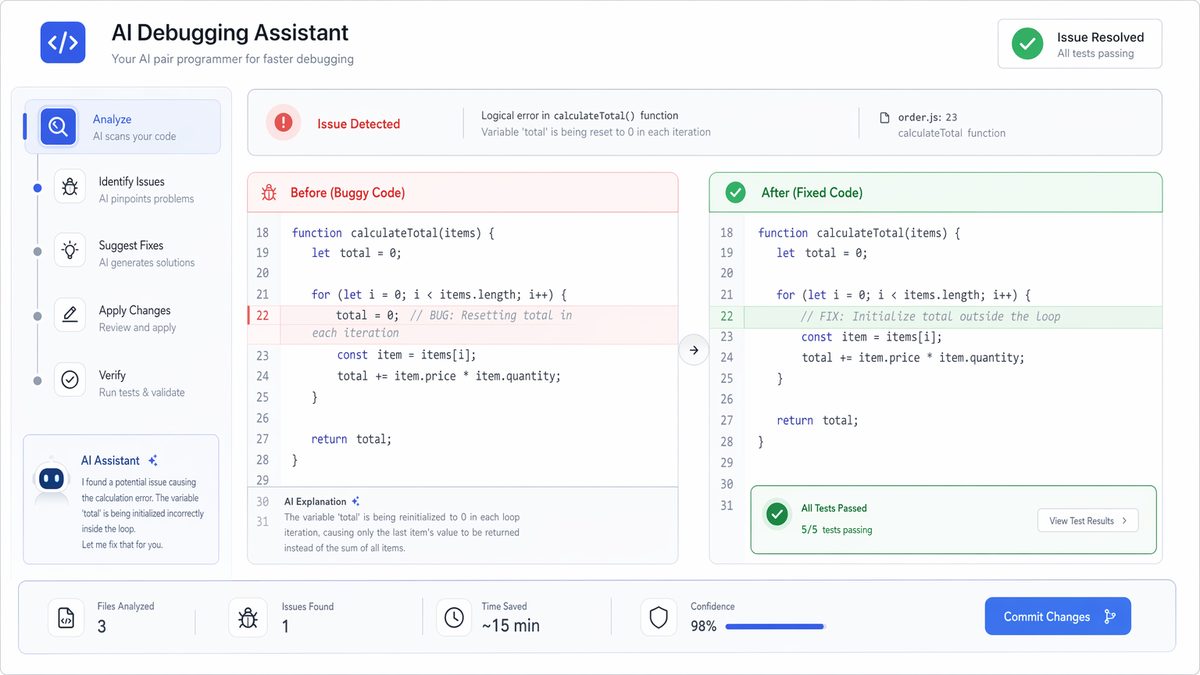

Dan Shipper, co-founder of Every, has been a vocal proponent and early adopter of advanced LLMs in his development workflow. His experiences highlight how GPT-5.5 can transform the often-frustrating process of debugging, particularly in unfamiliar or complex systems. Shipper’s approach isn’t about replacing human intuition but augmenting it with AI’s analytical prowess.

The Challenge: Intermittent, Hard-to-Reproduce Bugs

Some of the most insidious bugs developers face are intermittent, highly context-dependent, or buried deep within a complex dependency chain. These bugs can consume days or even weeks of engineering time as developers painstakingly try to isolate the root cause. Traditional debugging tools, while powerful, often require the human to know *where* to look. GPT-5.5, with its ability to ingest vast amounts of log data, code snippets, and system configurations, can act as an intelligent diagnostic engine.

Shipper’s Methodology with GPT-5.5:

- Contextual Ingestion: Shipper feeds GPT-5.5 not just the stack trace, but also relevant code files, configuration settings, recent commit messages, and even descriptions of the system architecture. The prompt might start with something like: “We’re experiencing an intermittent `NullPointerException` in our user authentication service. Here’s the stack trace, the relevant Java file, and our Spring Security configuration. We recently upgraded `library-x` from 1.2.0 to 1.3.0. What are potential causes?”

- Hypothesis Generation: GPT-5.5 analyzes this information and generates a list of potential hypotheses. It might suggest: “The `NullPointerException` could be due to an unhandled optional, a race condition during user session creation, or an incompatibility introduced by the `library-x` upgrade, specifically related to its dependency on `library-y`.”

- Diagnostic Code Suggestions: Instead of just pointing out the problem, GPT-5.5 often suggests specific diagnostic steps. “Add a breakpoint here and inspect the value of `userObject` before it’s dereferenced. Alternatively, add logging statements in the `UserService.authenticate` method to track the state of `authTokens`.”

- Proactive Solutioning: In many cases, the model goes beyond diagnosis to propose solutions. “Consider wrapping the nullable value in `Optional.ofNullable(...)` and calling `orElseThrow(...)` on it, or add a synchronized block around the session creation logic. Check the release notes for `library-x` 1.3.0 for breaking changes related to session management.”

- Iterative Refinement: Shipper engages in a dialogue, providing more context or asking for deeper explanations. “You mentioned a race condition. How could that manifest given our current `ConcurrentHashMap` implementation? Are there specific edge cases we should consider?” GPT-5.5 then refines its answers, offering more granular insights.
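The contextual ingestion step above can be sketched in Python as a small prompt builder. This is an illustrative sketch only, not Shipper’s actual tooling: `DebugContext`, `build_debug_prompt`, and the section labels are hypothetical names chosen for the example.

```python
from dataclasses import dataclass, field


@dataclass
class DebugContext:
    """Everything handed to the model for one debugging session (hypothetical shape)."""
    symptom: str
    stack_trace: str
    files: dict[str, str] = field(default_factory=dict)      # path -> source text
    recent_changes: list[str] = field(default_factory=list)  # e.g. dependency upgrades


def build_debug_prompt(ctx: DebugContext) -> str:
    """Assemble a single prompt with clearly labeled sections."""
    parts = [f"Symptom:\n{ctx.symptom}", f"Stack trace:\n{ctx.stack_trace}"]
    for path, source in ctx.files.items():
        parts.append(f"File: {path}\n{source}")
    if ctx.recent_changes:
        changes = "\n".join(f"- {change}" for change in ctx.recent_changes)
        parts.append(f"Recent changes:\n{changes}")
    parts.append(
        "List the most likely root causes, ranked, and one diagnostic step for each."
    )
    return "\n\n".join(parts)
```

Keeping each kind of evidence in its own labeled section makes it easy to add or drop context between turns of the dialogue without rewriting the whole prompt.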

Technical Deep Dive: The Role of Context and Semantic Search

The effectiveness of this approach hinges on GPT-5.5’s ability to maintain a rich contextual understanding. When Shipper provides a stack trace and code, the model doesn’t just treat them as strings. It semantically parses the code, understands variable scopes, function calls, and potential data flows. The large context window allows it to hold an entire file, or even multiple related files, in its “working memory,” enabling it to identify interactions that would be difficult for a human to spot without extensive manual tracing.

Furthermore, GPT-5.5 leverages its vast training data to perform a kind of “semantic search” over common error patterns, library behaviors, and architectural anti-patterns. If a specific `NullPointerException` pattern is frequently associated with a particular version of a library in its training data, it can quickly infer that as a likely culprit, even if the developer hasn’t explicitly considered it. This capability is particularly powerful when dealing with obscure errors or interactions between complex third-party libraries.

For example, consider a bug in an asynchronous operation where a callback is expected but never fires, leaving the operation hanging. A human developer might spend hours stepping through the async chain. GPT-5.5, given the relevant code and a description of the observed behavior, could quickly hypothesize: “This looks like a classic promise chain issue where a `then()` callback does not return the promise it creates, or a rejection is silently swallowed, so subsequent operations never run. Check the `handleApiResponse` function at line X; ensure it explicitly returns a promise or handles all possible error paths.” This level of insight, often delivered within seconds, drastically cuts down on diagnostic time.
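The failure mode in that hypothesis is easy to reproduce. The sketch below is a Python `asyncio` analogue of the JavaScript promise scenario, not the code from any real incident; all function names are hypothetical. One handler swallows the error and never resolves the future its caller is waiting on, while the other resolves it on every path.

```python
import asyncio


async def fetch_value(fail: bool) -> int:
    if fail:
        raise ValueError("upstream failed")
    return 42


async def swallowing_handler(done: asyncio.Future, fail: bool) -> None:
    """Buggy: on failure the error is dropped and `done` is never resolved."""
    try:
        done.set_result(await fetch_value(fail))
    except ValueError:
        pass


async def propagating_handler(done: asyncio.Future, fail: bool) -> None:
    """Fixed: every path resolves `done`, with a result or an exception."""
    try:
        done.set_result(await fetch_value(fail))
    except ValueError as exc:
        done.set_exception(exc)


async def wait_for_outcome(handler, fail: bool, timeout: float = 0.05) -> str:
    done = asyncio.get_running_loop().create_future()
    await handler(done, fail)
    try:
        return f"ok: {await asyncio.wait_for(done, timeout)}"
    except asyncio.TimeoutError:
        # Without the timeout, the caller would wait here forever.
        return "hang: nothing ever resolved the future"
    except ValueError as exc:
        return f"error: {exc}"
```

Running `asyncio.run(wait_for_outcome(swallowing_handler, True))` reports a hang, while the same call with `propagating_handler` surfaces the underlying error instead.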

One of the critical aspects of this workflow is the developer’s ability to craft effective prompts. Instead of vague questions, precise and detailed prompts that include code, error messages, expected behavior, and observed behavior yield the best results. This interaction model essentially turns debugging into a collaborative problem-solving session with an incredibly knowledgeable, tireless, and context-aware AI.

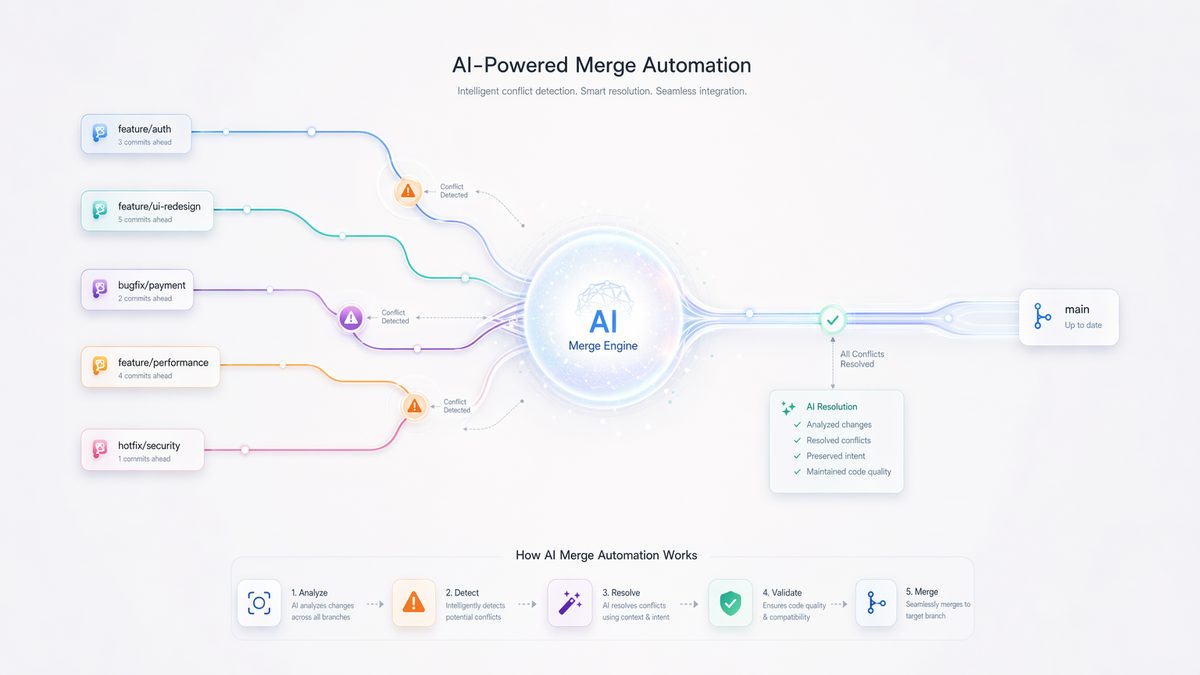

Case Study: Pietro Schirano and Mastering Massive Branch Merges with AI

Merging large feature branches back into a main development line is a perennial headache for engineering teams. Complex merges, especially after weeks or months of parallel development, can lead to “merge hell” — days of painstaking conflict resolution, subtle regressions, and broken builds. Pietro Schirano, a prominent figure in the AI development community, has demonstrated how advanced LLMs, particularly those with strong code understanding like Codex, can turn this arduous task into a manageable, even accelerated, process.

The Challenge: Merge Hell and Regressions

A typical scenario involves two long-lived branches: `main` and `feature/big-new-system`. Over time, `main` has accumulated numerous bug fixes and minor features, while `feature/big-new-system` has introduced a completely new architectural component, altering many core files. When it’s time to merge `feature/big-new-system` into `main`, conflicts are inevitable. These aren’t just line-by-line textual conflicts; they are often semantic conflicts where both branches modified the same logical block of code but with different intentions, or where new dependencies were introduced in one branch that break the other.

Resolving these manually requires a deep understanding of both codebases, the changes introduced in each, and the intended behavior. It’s time-consuming, error-prone, and often leads to regressions that are only discovered much later during testing.

Schirano’s AI-Powered Merge Strategy:

- Pre-Merge Analysis and Conflict Prediction: Before even attempting the merge, Schirano uses Codex to analyze the differences between the two branches at a semantic level. He might feed it `git diff` outputs, along with descriptions of the changes made in each branch. The prompt could be: “Analyze the following `git diff` between `main` and `feature/big-new-system`. Identify potential semantic conflicts in `UserService.java` and `OrderProcessor.java` where both branches modified similar functionality. Suggest how these conflicts might manifest as runtime errors.”

- Automated Conflict Resolution Suggestions: For direct textual conflicts, traditional merge tools provide basic options. However, for semantic conflicts, Codex excels. When a conflict arises in a file, Schirano feeds the conflicted file (with `<<<<<<<`, `=======`, `>>>>>>>` markers) to Codex, along with the relevant surrounding code and potentially the commit messages for both conflicting changes. The prompt: “Resolve the following merge conflict in `PaymentGateway.java`. The `main` branch added a new logging mechanism, while the `feature` branch updated the payment processing logic to support a new vendor. Ensure both functionalities are preserved and integrated correctly.” Codex then proposes a merged version of the code, often with explanations of its choices.

- Refactoring and Harmonization: Beyond simple conflict resolution, Codex can suggest refactoring to better integrate the merged code. If two branches introduced similar but slightly different utility functions, Codex can propose a unified, more robust version. “After merging `feature/user-profiles`, I have two slightly different `DateUtil` classes. Can you suggest a unified `DateTimeFormatter` pattern that handles both `ISO_DATE` and `yyyy-MM-dd` formats, and refactor the code to use it consistently?”

- Post-Merge Validation and Test Generation: Once a merge is complete, the risk of regressions is high. Schirano uses Codex to generate targeted unit and integration tests based on the changes introduced by the merge. “Given the merged `OrderProcessor.java` and the changes in `PaymentGateway.java`, generate new unit tests that cover the updated payment vendor logic and ensure the new logging mechanism is functioning as expected.” This significantly reduces the time spent manually writing regression tests.
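To make the conflict-resolution step concrete, here is a minimal Python sketch of how a conflicted file might be split into hunks before being handed to a model. It assumes Git’s default two-sided marker style (no `|||||||` base section), and `Conflict`, `extract_conflicts`, and `build_merge_prompt` are hypothetical names, not part of Git or any OpenAI tooling.

```python
from dataclasses import dataclass


@dataclass
class Conflict:
    ours_label: str    # text after "<<<<<<<", usually HEAD or a branch name
    theirs_label: str  # text after ">>>>>>>"
    ours: list[str]
    theirs: list[str]


def extract_conflicts(text: str) -> list[Conflict]:
    """Collect each conflict hunk from a file containing Git conflict markers."""
    conflicts: list[Conflict] = []
    state = None  # None (outside a hunk), "ours", or "theirs"
    ours: list[str] = []
    theirs: list[str] = []
    ours_label = ""
    for line in text.splitlines():
        if line.startswith("<<<<<<<"):
            state, ours, theirs = "ours", [], []
            ours_label = line[7:].strip()
        elif line.startswith("=======") and state == "ours":
            state = "theirs"
        elif line.startswith(">>>>>>>") and state == "theirs":
            conflicts.append(Conflict(ours_label, line[7:].strip(), ours, theirs))
            state = None
        elif state == "ours":
            ours.append(line)
        elif state == "theirs":
            theirs.append(line)
    return conflicts


def build_merge_prompt(path: str, conflict: Conflict,
                       ours_intent: str, theirs_intent: str) -> str:
    """Pair each side of the hunk with the stated intent of its branch."""
    return "\n".join([
        f"Resolve this merge conflict in {path}, preserving both intents.",
        f"{conflict.ours_label} intent: {ours_intent}",
        f"{conflict.theirs_label} intent: {theirs_intent}",
        f"--- {conflict.ours_label} ---",
        *conflict.ours,
        f"--- {conflict.theirs_label} ---",
        *conflict.theirs,
    ])
```

Sending one hunk at a time, with the intent of each branch spelled out, keeps the request small and gives a reviewer a single proposed resolution to accept or reject.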

Technical Deep Dive: Semantic Understanding and Code Transformation

The core capability enabling this is Codex’s deep semantic understanding of code. Unlike a simple diff tool that sees lines of text, Codex “understands” the code structure, the purpose of functions, the relationships between classes, and the impact of changes. When presented with a merge conflict, it doesn’t just choose one side or the other; it attempts to synthesize a solution that preserves the intent of both changes.

For example, suppose `main` added a `userId` parameter to `processOrder`, changing its signature to `processOrder(orderId, userId)`, while `feature` rewrote the internal logic of `processOrder` against the old signature. A manual merge might simply keep the new parameter alongside the rewritten body, leading to a compilation error or a runtime bug if the `feature` logic never uses `userId` or its callers still pass the old arguments. Codex, however, might suggest not only adding the `userId` parameter but also updating the `feature` branch’s `processOrder` logic to handle or explicitly ignore it, or even refactoring to pass a more comprehensive `OrderContext` object instead.
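The `OrderContext` idea can be illustrated with a short Python sketch. The field names and the `process_order` body are assumptions made for the example; the point is only that bundling arguments into one object lets a branch add a field without changing the function signature that the other branch depends on.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class OrderContext:
    """Hypothetical context object: new fields get defaults, so old callers keep working."""
    order_id: str
    user_id: Optional[str] = None  # the field one branch added


def process_order(ctx: OrderContext) -> str:
    # Logic written before user_id existed can ignore it;
    # logic written afterwards can rely on it when present.
    owner = ctx.user_id or "anonymous"
    return f"order {ctx.order_id} processed for {owner}"
```

A caller from the older branch can still write `process_order(OrderContext("A1"))`, while newer code passes `OrderContext("A1", user_id="u7")`.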


The implications for team velocity are enormous. What once took days of concentrated effort from multiple senior engineers can now be significantly automated, allowing engineers to focus on new feature development rather than maintenance overhead. This leads to faster release cycles and a higher quality codebase, as the AI can catch subtle interactions that human eyes might miss.

Broader Applications and Strategic Integrations

Beyond debugging and merging, GPT-5.5 and Codex are finding applications across the entire software development lifecycle, driving significant efficiency gains.

Automated Code Review and Quality Assurance

AI can act as a first-pass code reviewer, identifying potential bugs, security vulnerabilities, performance bottlenecks, and deviations from coding standards. This frees human reviewers to focus on architectural decisions and complex logic, rather than stylistic issues or obvious errors. GPT-5.5 can be integrated into CI/CD pipelines to automatically comment on pull requests, suggesting improvements or pointing out potential issues before a human even looks at the code.
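One practical detail of a pipeline like this is keeping each review request small. The Python sketch below splits a `git diff` into per-file chunks so that each file can be reviewed in its own prompt. It is a simplified illustration: `split_diff_by_file` and `review_prompt` are hypothetical helpers, and the header pattern assumes paths without spaces.

```python
import re

# Git starts each file's section with a line like: diff --git a/path b/path
_FILE_HEADER = re.compile(r"^diff --git a/(.+?) b/(.+)$")


def split_diff_by_file(diff_text: str) -> dict[str, str]:
    """Map each changed path to its portion of a unified diff."""
    chunks: dict[str, list[str]] = {}
    current = None
    for line in diff_text.splitlines():
        match = _FILE_HEADER.match(line)
        if match:
            current = match.group(2)
            chunks[current] = []
        if current is not None:
            chunks[current].append(line)
    return {path: "\n".join(lines) for path, lines in chunks.items()}


def review_prompt(path: str, chunk: str) -> str:
    """Build a first-pass review request for a single file's changes."""
    return (
        f"Review the following change to {path}. Point out likely bugs, "
        "security issues, and deviations from common style, citing line content.\n\n"
        f"{chunk}"
    )
```

Per-file prompts also make it straightforward to attach each response to the matching file in a pull request for a human reviewer to confirm or dismiss.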

Legacy Code Modernization and Refactoring

Many organizations grapple with large, aging codebases that are difficult to maintain and extend. GPT-5.5 and Codex can assist in understanding, documenting, and even refactoring legacy code. They can identify dead code, suggest modern equivalents for deprecated functions, or propose architectural improvements. For example, feeding an old COBOL program or a complex Java 7 codebase into GPT-5.5 with the prompt “Modernize this code to Java 17 best practices, using Streams API where appropriate and replacing deprecated libraries” can yield surprisingly effective results, significantly reducing the manual effort of migration.

Test Case Generation and Coverage Enhancement

The same models can generate targeted unit and integration tests for new or changed code, as in the post-merge validation step described above, which reduces the time spent writing regression tests by hand.

Documentation and Explanations

Good documentation is vital but often neglected. GPT-5.5 can generate API documentation, inline comments, and even high-level architectural overviews directly from code. It can also explain complex code segments to new team members, accelerating onboarding and knowledge transfer. “Explain how the `GraphQLService.executeMutation` method handles authorization checks and data validation, assuming a `Spring Security` context.”

Architectural Considerations for Integration

Integrating GPT-5.5 and Codex into an existing engineering workflow requires careful consideration of several architectural and operational aspects:

- API Integration and Orchestration: Most interactions will occur via API calls to OpenAI’s services. Engineering teams need to build robust wrappers and orchestration layers to manage API keys, rate limits, error handling, and prompt construction.

- Data Security and Privacy: Sending proprietary code to external AI services raises significant security and privacy concerns. Organizations must evaluate OpenAI’s data usage policies, consider on-premise or private cloud deployments for highly sensitive code (if available for future models), or implement strict sanitization protocols.

- Version Control Integration: Seamless integration with Git and other VCS tools is crucial. Tools need to be able to pull code, apply AI-generated changes, and commit them back, potentially with human review.

- Feedback Loops and Fine-tuning: While general-purpose, these models can be further fine-tuned on an organization’s specific codebase, coding standards, and common bug patterns. Establishing feedback loops where developers rate the AI’s suggestions can help improve performance over time.

- Human-in-the-Loop Design: AI is a powerful assistant, not a replacement for human judgment. All AI-generated code or suggestions should undergo human review and testing. The goal is to accelerate, not automate blindly.

- Cost Management: API usage for these models can be costly, especially for large-scale operations. Monitoring usage, optimizing prompts for token efficiency, and potentially caching results are important for cost control.
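Several of these concerns (rate limits, error handling, and caching for cost control) can live in one thin wrapper. The Python sketch below is provider-agnostic and makes no assumptions about OpenAI’s SDK: `send` is any callable that takes a prompt and returns text, and `TransientError` is a hypothetical stand-in for whatever rate-limit or timeout exception the real client raises.

```python
import hashlib
import time
from typing import Callable


class TransientError(Exception):
    """Hypothetical stand-in for a rate-limit or timeout error from the provider."""


class ModelClient:
    """Wraps a `send(prompt) -> str` callable with retries and a response cache."""

    def __init__(self, send: Callable[[str], str], max_attempts: int = 3,
                 base_delay: float = 1.0,
                 sleep: Callable[[float], None] = time.sleep):
        if max_attempts < 1:
            raise ValueError("max_attempts must be at least 1")
        self._send = send
        self._max_attempts = max_attempts
        self._base_delay = base_delay
        self._sleep = sleep  # injectable so tests do not actually wait
        self._cache: dict[str, str] = {}

    def complete(self, prompt: str) -> str:
        # Identical prompts are served from the cache instead of being re-billed.
        key = hashlib.sha256(prompt.encode("utf-8")).hexdigest()
        if key in self._cache:
            return self._cache[key]
        for attempt in range(self._max_attempts):
            try:
                result = self._send(prompt)
                break
            except TransientError:
                if attempt == self._max_attempts - 1:
                    raise  # out of attempts: surface the error to the caller
                # Exponential backoff: base_delay, then 2x, 4x, ...
                self._sleep(self._base_delay * 2 ** attempt)
        self._cache[key] = result
        return result
```

An in-memory cache like this only helps within one process; a shared pipeline would more likely need a persistent store, and cached answers should be invalidated whenever the underlying code changes.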

The Human Element: Shifting Roles and Skill Sets

The rise of AI in engineering doesn’t diminish the role of human developers; rather, it elevates it. The skills required are shifting:

- Prompt Engineering: The ability to craft effective prompts that elicit precise and useful responses from the AI is becoming a critical skill. This involves understanding the AI’s capabilities, its limitations, and how to structure questions to maximize its utility.

- Critical Evaluation: Developers must become adept at critically evaluating AI-generated code and suggestions, identifying subtle errors, and ensuring alignment with architectural principles and business requirements.

- System Design and Architecture: With AI handling more of the tactical coding, human engineers can dedicate more time to high-level system design, architectural decisions, innovation, and understanding complex business logic.

- Mentorship and Training: Experienced engineers will play a crucial role in mentoring junior developers on how to effectively collaborate with AI, ensuring that AI-generated code adheres to best practices and fits within the broader system.

- Ethical Considerations: Understanding the ethical implications of AI-generated code, including bias, security vulnerabilities, and intellectual property, will become increasingly important.

The transition is not without its challenges. There’s an initial learning curve for developers to effectively integrate AI into their daily routines. Trust in AI-generated code needs to be built over time through rigorous testing and validation. Moreover, organizations need to foster a culture of experimentation and continuous learning to fully embrace these new tools.

Conclusion: The Dawn of Hyper-Accelerated Engineering

The experiences of engineers like Dan Shipper and Pietro Schirano are not isolated incidents but harbingers of a broader trend. GPT-5.5 and OpenAI Codex represent a significant leap forward in AI’s ability to understand, generate, and reason about code. By intelligently augmenting human developers in tasks ranging from intricate debugging to the daunting challenge of massive branch merges, these models are enabling engineering teams to achieve unprecedented levels of velocity and quality. The 10x acceleration isn’t a hyperbolic claim but a tangible outcome for teams that strategically integrate these powerful AI tools.

The future of software development will undoubtedly be a symbiotic relationship between human ingenuity and artificial intelligence. As these models continue to evolve, becoming even more capable and context-aware, the boundaries of what’s possible in software engineering will expand dramatically. Teams that embrace this paradigm shift, invest in the necessary architectural integrations, and cultivate the evolving skill sets required, will be the ones to define the next generation of software innovation, shipping faster, building better, and ultimately, delivering more value than ever before.
