In the rapidly evolving landscape of artificial intelligence, large language models (LLMs) have become indispensable tools for developers, researchers, and businesses alike. These sophisticated models, capable of generating human-like text, translating languages, and writing various kinds of creative content, are constantly being refined and improved. However, the development and deployment of such complex systems are not without their challenges. Even leading AI labs can encounter unexpected issues that impact model performance, especially in critical domains like code generation.

✓ Instant access✓ No spam✓ Unsubscribe anytime

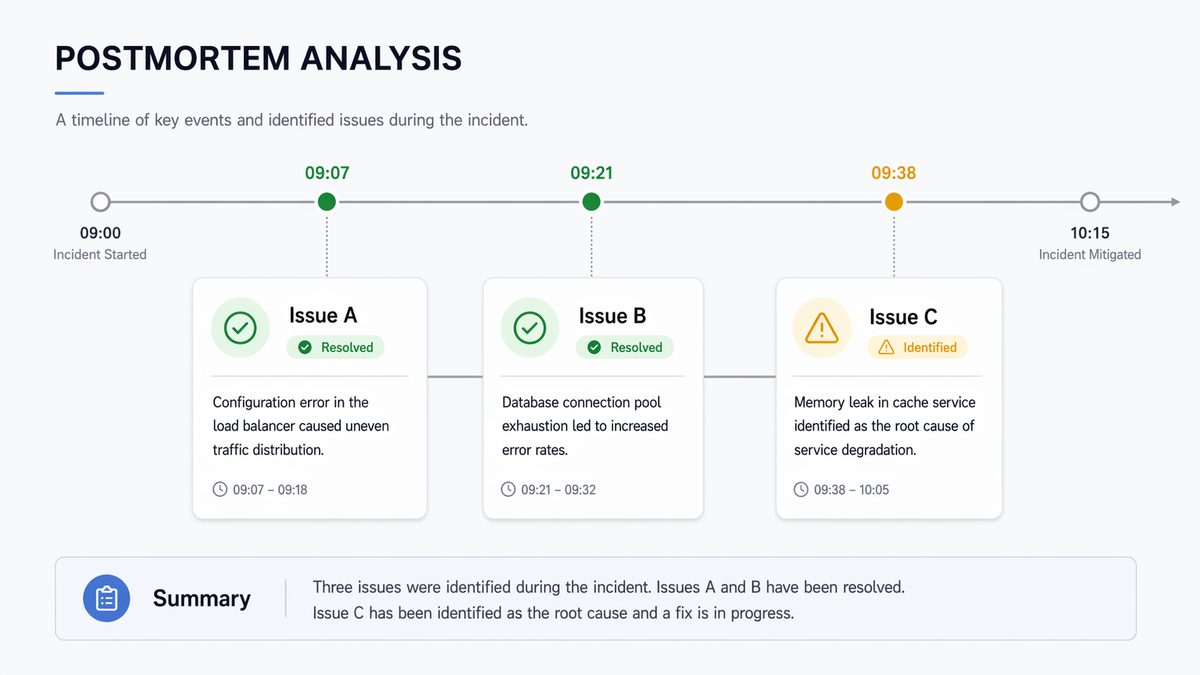

Anthropic, a prominent AI research company, faced such a challenge recently when users reported a noticeable degradation in the code quality generated by their flagship Claude models. This incident, which occurred between late February and early April 2024, sparked concern within the developer community and highlighted the intricate nature of LLM maintenance and improvement. Recognizing the severity of the issue, Anthropic conducted a thorough investigation, culminating in a detailed postmortem report published on April 23, 2024. This report not only identified the root causes of the degradation but also outlined the corrective measures taken, offering valuable insights into the complexities of LLM engineering.

This comprehensive guide delves into Anthropic’s postmortem, dissecting the three primary bugs that led to the code quality issues in Claude. We will explore the technical details of each bug, understand their impact, and analyze the solutions implemented by Anthropic. Furthermore, we will extract key lessons learned from this incident, providing a deeper understanding of the challenges in maintaining and enhancing LLM performance, particularly in specialized tasks like code generation. Our aim is to provide developers, AI enthusiasts, and tech professionals with a detailed, authoritative account of what went wrong and how Anthropic rectified it, offering insights that can inform best practices in AI development and deployment.

The Unraveling: Identifying the Code Quality Degradation

[IMAGE_PLACEACER_SECTION_1]The first signs of trouble emerged in late February 2024. Users, particularly those relying on Claude for coding tasks, began reporting a decline in the quality and correctness of the generated code. These reports were not isolated incidents but rather a growing chorus of feedback across various platforms, including social media, developer forums, and direct channels to Anthropic. The complaints ranged from subtle errors in syntax and logic to more significant issues like incomplete code, incorrect API usage, or failure to adhere to specified constraints.

Initially, such reports might be dismissed as anecdotal or attributed to individual user prompts. However, as the volume and consistency of the feedback increased, it became clear that a systemic issue was at play. Anthropic’s internal monitoring systems also began to register subtle shifts in performance metrics related to code generation, corroborating user observations. This convergence of external and internal signals prompted Anthropic to launch a full-scale investigation.

Initial Observations and User Feedback

- Increased Error Rates: Users reported a higher frequency of compilation errors, runtime exceptions, and logical flaws in Claude-generated code.

- Incomplete Code: Claude sometimes truncated code blocks or failed to complete functions, requiring significant manual intervention.

- Incorrect API Usage: The model struggled with correctly using specific library functions or framework APIs, indicating a potential loss of contextual understanding.

- Failure to Follow Instructions: Users noted that Claude was less effective at adhering to complex coding instructions or specific stylistic requirements.

- Performance Regression: For tasks that Claude previously handled with ease, users observed a noticeable drop in efficiency and accuracy.

The impact of this degradation was significant. Developers relying on Claude for rapid prototyping, code completion, or even complex software architecture suggestions found their workflows disrupted. Trust in the model’s capabilities began to wane, underscoring the critical importance of consistent performance in AI tools, especially for professional use cases. The incident served as a stark reminder that even state-of-the-art LLMs, despite their impressive capabilities, are not immune to regressions, and their maintenance requires continuous vigilance and sophisticated diagnostic tools.

Anthropic’s Response: A Deep Dive into Diagnostics

Upon confirming the existence of a widespread issue, Anthropic assembled a dedicated team to identify the root causes. Their diagnostic process likely involved a multi-pronged approach:

- Analyzing User Reports: Systematically categorizing and analyzing the reported issues to identify common patterns and specific failure modes.

- Reviewing Internal Metrics: Scrutinizing various internal telemetry and evaluation metrics, particularly those related to code generation benchmarks and task-specific performance. This would involve comparing current performance against historical baselines.

- Examining Model Updates and Deployments: Tracing back recent changes to the Claude model architecture, training data, fine-tuning processes, and deployment configurations. Given the continuous development cycle of LLMs, identifying the specific change that triggered the regression is often the most challenging part.

- A/B Testing and Controlled Experiments: Running targeted experiments with different model versions or configurations to isolate the variables contributing to the degradation.

The investigation ultimately pinpointed three distinct bugs as the culprits behind the observed code quality degradation. These bugs were not immediately obvious and required a deep understanding of LLM internals, training dynamics, and deployment pipelines to uncover. The complexity of these issues highlights the non-trivial nature of LLM engineering, where seemingly minor changes can have cascading effects on model behavior.

Bug 1: The “Forgetful” Embeddings & Tokenizer Mismatch

The first bug identified by Anthropic revolved around a subtle but critical interaction between an updated tokenizer and the model’s embedding layer during a continuous pre-training phase. To understand this, we need a brief primer on how LLMs process text.

Understanding Tokenization and Embeddings

- Tokenization: Before an LLM can process human language, the raw text needs to be converted into numerical tokens. A tokenizer breaks down text into smaller units (words, subwords, characters) and maps them to unique numerical IDs.

- Embeddings: These numerical IDs are then looked up in an embedding table, which converts each token ID into a dense vector representation (an embedding). These embeddings capture semantic meaning and relationships between tokens. The quality of these embeddings is paramount for the model’s ability to understand and generate coherent text.

The Problem: A Mismatched Update

Anthropic was conducting continuous pre-training, a process where a model is further trained on new data to keep it updated and improve its capabilities. During this process, they updated the tokenizer used for the pre-training data. This updated tokenizer introduced new tokens and assigned different IDs to existing tokens compared to the tokenizer used to train the original model. Crucially, the embedding layer of the model was not fully re-initialized or properly adapted to this new tokenizer.

Imagine you have a dictionary (the tokenizer) and a translation table (the embedding layer). If you update the dictionary by adding new words or changing the meanings of old ones, but don’t update your translation table, you’ll end up with incorrect translations. This is analogous to what happened with Claude.

Technical Details:

When the new tokenizer was used, it generated token IDs that the existing embedding layer either:

- Didn’t recognize: For new tokens, the embedding layer would produce random or default embeddings, effectively treating these tokens as noise.

- Misinterpreted: For tokens whose IDs had changed, the embedding layer would retrieve the embedding for the old token associated with that ID, leading to semantic confusion.

This mismatch meant that the model was processing input with corrupted or nonsensical embeddings for a significant portion of its vocabulary, particularly for specialized tokens relevant to code. The model essentially “forgot” the meaning of certain code keywords, syntax elements, or programming constructs because their underlying numerical representations were no longer accurate or consistent. This directly impacted its ability to understand prompts involving code and generate correct code outputs.

Impact on Code Quality:

- Syntactic Errors: The model struggled to generate correct syntax because it couldn’t reliably interpret or produce embeddings for keywords like

def,class,import, or operators. - Semantic Misunderstandings: Concepts like variable scope, function calls, or object-oriented structures became muddled, leading to logical errors.

- Reduced Coherence: The generated code often lacked internal consistency and appeared disjointed, reflecting the underlying corruption in token representations.

The Fix: Re-aligning Tokenizer and Embeddings

The solution involved a meticulous re-alignment. Anthropic had to ensure that the tokenizer used for pre-training and the model’s embedding layer were perfectly synchronized. This likely involved:

- Re-initializing or retraining the embedding layer: If the new tokenizer introduced significant changes, the most robust solution would be to retrain the embedding layer from scratch or fine-tune it extensively with the new tokenizer to learn the correct representations.

- Ensuring consistent tokenizer usage: Establishing strict protocols to ensure that any tokenizer update is immediately accompanied by a corresponding update and verification of the embedding layer across all training and inference pipelines.

Understanding the competitive landscape is essential for choosing the right AI tool. Our comprehensive coverage in Anthropic’s Claude Code Pricing Overhaul: What the OpenClaw Cutoff Means for Developers in 2026 examines the technical nuances and real-world performance characteristics that matter most for professional developers.

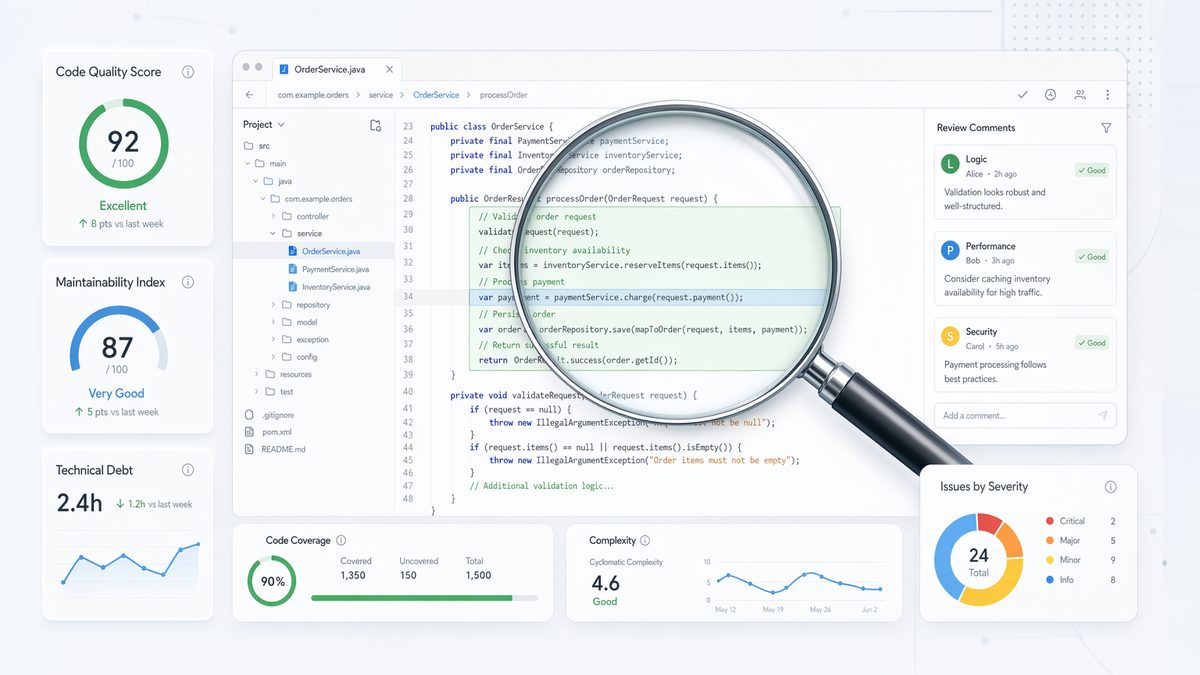

Bug 2: Data Contamination from an Experimental Fine-tuning Dataset

Get Free Access to 40,000+ AI Prompts

Join 40,000+ AI professionals. Get instant access to our curated Notion Prompt Library with prompts for ChatGPT, Claude, Codex, Gemini, and more — completely free.

Get Free Access Now →No spam. Instant access. Unsubscribe anytime.

The second bug was a classic case of data contamination, an insidious problem that can severely impact model performance and introduce biases. Anthropic was experimenting with a new fine-tuning dataset designed to improve specific aspects of Claude’s performance. Unfortunately, this dataset contained corrupted or low-quality code examples, and these flaws were inadvertently propagated into the model during the fine-tuning process.

The Fine-tuning Process

Fine-tuning is a crucial step in LLM development where a pre-trained model is further trained on a smaller, task-specific dataset to adapt it for particular applications. For code generation, this might involve fine-tuning on a dataset of high-quality code examples, programming challenges, or code review data. The goal is to imbue the model with specialized knowledge and improve its proficiency in coding tasks.

The Problem: Unintended Data Injection

Anthropic’s experimental fine-tuning dataset, while intended for improvement, contained data that was either:

- Low Quality: The code examples themselves were poorly written, contained bugs, or followed bad programming practices.

- Irrelevant or Out-of-Domain: The data might have been sourced from contexts that didn’t align with the general-purpose coding capabilities Claude was expected to have.

- Incorrectly Labeled or Formatted: Errors in the data labeling or formatting could confuse the model about what constitutes “good” code.

When the model was fine-tuned on this compromised dataset, it began to learn and internalize these undesirable characteristics. Instead of improving, its code generation capabilities deteriorated because it was effectively being taught to produce lower-quality code.

Impact on Code Quality:

- Introduction of Bad Practices: Claude started generating code with common anti-patterns, inefficient algorithms, or insecure practices.

- Increased Bugginess: The model produced more functional bugs, logical errors, and unhandled edge cases, mirroring the flaws in the fine-tuning data.

- Lack of Robustness: The generated code became less resilient to variations in input or environmental conditions, as the model had learned from brittle examples.

- Reduced Adherence to Standards: Claude struggled to follow established coding conventions or style guides, reflecting a lack of exposure to consistent, high-quality examples.

This bug highlights a fundamental challenge in machine learning: “garbage in, garbage out.” The quality of the training data directly dictates the quality of the model’s output. Even a powerful base model can be severely degraded if fine-tuned on subpar data.

The Fix: Rigorous Data Curation and Validation

The solution to data contamination involves a multi-layered approach to data quality assurance:

- Enhanced Data Curation: Implementing stricter filtering, cleaning, and manual review processes for all fine-tuning datasets. This includes automated checks for syntax, style, and common errors, as well as human expert review.

- Source Verification: Ensuring that data sources are reputable and aligned with the desired output quality.

- Pre-deployment Evaluation: Developing robust evaluation benchmarks specifically designed to catch regressions caused by data contamination. This involves testing the model on a diverse set of clean, high-quality coding tasks after every fine-tuning run, before deployment.

- A/B Testing with Control Groups: Conducting A/B tests where a fine-tuned model is compared against a baseline (non-fine-tuned or previously fine-tuned with verified data) to detect subtle degradations.

Understanding the competitive landscape is essential for choosing the right AI tool. Our comprehensive coverage in Inside the Claude Code Leak: What Anthropic’s Biggest Security Incident Reveals About AI Development examines the technical nuances and real-world performance characteristics that matter most for professional developers.

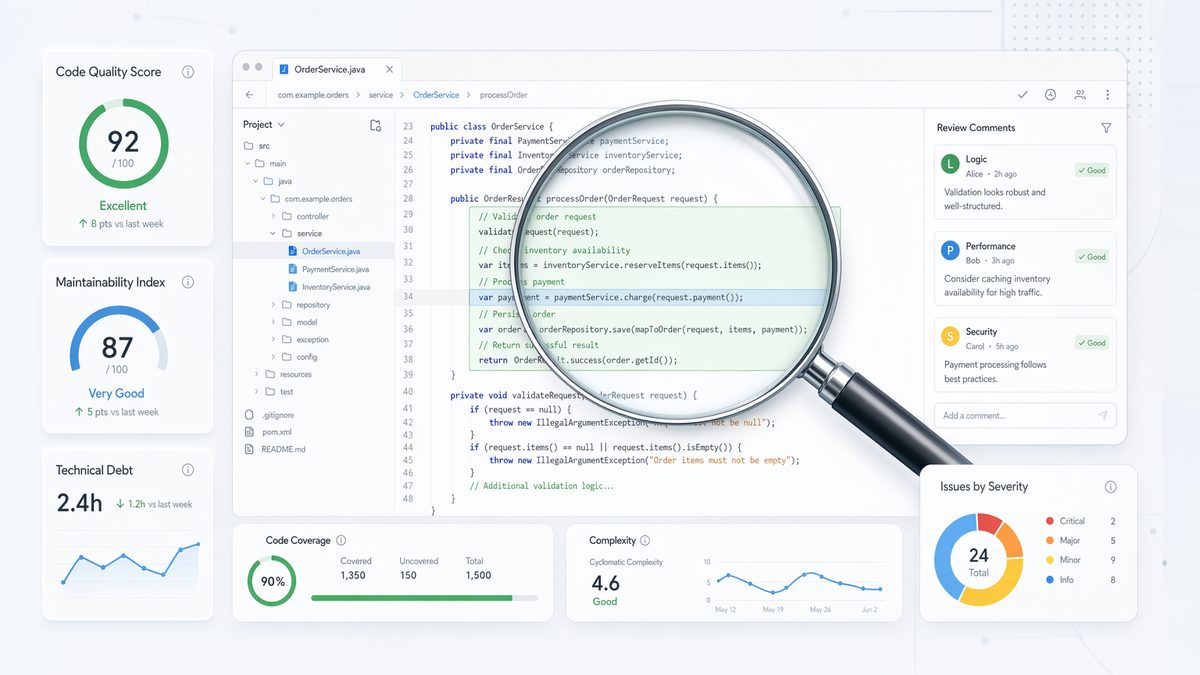

Bug 3: Over-optimization & Catastrophic Forgetting

The third bug is a phenomenon known as “catastrophic forgetting” or “stability-plasticity dilemma” in neural networks. It occurred when Anthropic attempted to fine-tune Claude to improve specific, narrow aspects of its performance, inadvertently causing it to forget previously learned, broader capabilities, particularly in code generation.

The Challenge of Continual Learning

LLMs are designed to be generalists, capable of performing a wide array of tasks. When you fine-tune them on a specific dataset to excel at one particular task (e.g., generating Python code for data analysis), there’s a risk that the model might “forget” how to perform other tasks it was previously good at (e.g., generating JavaScript for web development, or even general conversational abilities).

The Problem: Aggressive Fine-tuning

Anthropic’s postmortem indicated that they were likely too aggressive or unfocused in their fine-tuning efforts. They might have used a fine-tuning regime that:

- Over-emphasized a narrow task: The fine-tuning dataset or loss function might have been heavily skewed towards a very specific coding style or problem domain, causing the model to overfit to this narrow distribution.

- Insufficiently preserved general knowledge: The fine-tuning process didn’t adequately incorporate mechanisms to retain the model’s broader coding knowledge or general reasoning abilities. This could be due to a small fine-tuning dataset relative to the model’s complexity, or a learning rate that was too high, rapidly overwriting existing weights.

- Lacked Regularization for Generalization: Insufficient regularization techniques during fine-tuning could have led the model to specialize too much, losing its ability to generalize across different programming languages, paradigms, or problem types.

The consequence was that while the model might have shown marginal improvement on the specific fine-tuning task, its overall code quality and versatility suffered significantly. It began to generate code that was rigid, less adaptable, and failed to understand nuances outside its narrow fine-tuning scope.

Impact on Code Quality:

- Reduced Versatility: Claude became less proficient across different programming languages or frameworks, struggling with tasks it previously handled well.

- Rigid Code Generation: The model produced code that was less flexible, often adhering to a very specific pattern even when a more general or idiomatic solution was required.

- Loss of Contextual Understanding: The ability to understand complex, multi-faceted coding prompts and generate contextually appropriate solutions diminished.

- Increased Hallucinations in Diverse Code Tasks: When faced with code tasks outside its narrow fine-tuning, the model was more prone to generating incorrect or nonsensical code, a common symptom of forgetting.

This bug highlights the delicate balance required in fine-tuning LLMs. Improving performance on one metric can inadvertently degrade performance on others, especially when the fine-tuning process is not carefully controlled and evaluated across a broad spectrum of capabilities.

The Fix: Balanced Fine-tuning and Robust Evaluation

Addressing catastrophic forgetting requires a more sophisticated approach to fine-tuning:

- Multi-task Fine-tuning: Incorporating a diverse set of tasks and data into the fine-tuning process, not just a single narrow one, to ensure the model retains its general capabilities.

- Regularization Techniques: Employing techniques like Elastic Weight Consolidation (EWC) or Learning without Forgetting (LwF) that selectively slow down learning for weights important to previously learned tasks, preventing them from being overwritten too quickly.

- Lower Learning Rates and Gradual Adaptation: Using smaller learning rates during fine-tuning to allow for more gradual adaptation, giving the model time to integrate new knowledge without discarding old.

- Comprehensive Evaluation Suites: Developing extensive evaluation benchmarks that cover a wide range of coding tasks, languages, and complexities. These benchmarks should be run after every fine-tuning iteration to detect any signs of catastrophic forgetting or performance regressions across various dimensions.

- Architectural Modifications: Exploring architectural changes that enhance the model’s ability to learn continually without forgetting, such as modular networks or memory networks.

Understanding the competitive landscape is essential for choosing the right AI tool. Our comprehensive coverage in Inside the Claude Code Source Leak: What 512,000 Lines of Code Revealed About Anthropic’s AI Architecture examines the technical nuances and real-world performance characteristics that matter most for professional developers.

Anthropic’s Remediation and Lessons Learned

Upon identifying the three root causes, Anthropic systematically implemented corrective measures. Their postmortem outlined a clear path to recovery, focusing on both immediate fixes and long-term preventative strategies.

The Road to Recovery: Corrective Actions

- Re-synchronizing Tokenizer and Embeddings: For Bug 1, Anthropic likely reverted to a known good state of tokenizer and embedding alignment or carefully re-initialized and re-trained the embedding layer to match the updated tokenizer. This ensured that the model’s fundamental understanding of tokens, especially those critical for code, was restored.

- Purging and Re-curating Fine-tuning Data: For Bug 2, the problematic experimental fine-tuning dataset was discarded. Anthropic reinforced its data curation pipeline with more stringent quality checks, automated validation, and human expert review to prevent future contamination. They likely built a new, high-quality code fine-tuning dataset from scratch or heavily revised existing ones.

- Refining Fine-tuning Strategies: For Bug 3, Anthropic adjusted its fine-tuning methodologies. This involved incorporating more diverse data, implementing stronger regularization techniques, and potentially using multi-objective optimization to ensure that improvements in one area did not come at the expense of others. They likely adopted a more conservative approach to fine-tuning, prioritizing stability and generalization over aggressive, narrow optimization.

- Enhanced Evaluation and Monitoring: A crucial part of the remediation was strengthening their evaluation infrastructure. This included developing more comprehensive, automated benchmarks for code quality, covering a wider range of programming languages, tasks, and complexity levels. Continuous monitoring systems were likely put in place to detect subtle performance shifts and regressions in real-time.

- Rollback and Redeployment: Once the fixes were implemented and thoroughly validated internally, Anthropic deployed the corrected model versions. This involved rolling back the affected models and replacing them with versions that incorporated the fixes, ensuring that users received the improved code quality.

Key Lessons Learned from the Incident

The Claude code quality degradation incident, while challenging, offered invaluable lessons for Anthropic and the broader AI community:

- The Criticality of Infrastructure and Tooling: The incident highlighted the need for robust, fault-tolerant infrastructure and sophisticated tooling for LLM development. This includes version control for data, models, and tokenizers; automated validation pipelines; and comprehensive monitoring systems.

- Data Quality is Paramount: The “garbage in, garbage out” principle remains deeply relevant. The quality, relevance, and cleanliness of training and fine-tuning data are foundational to model performance. Continuous investment in data curation and validation is essential.

- The Perils of Over-optimization: Aggressive fine-tuning or optimization for narrow metrics can lead to catastrophic forgetting and a degradation of general capabilities. A balanced approach that preserves existing knowledge while integrating new information is crucial.

- The Importance of Comprehensive Evaluation: Relying solely on a few benchmarks can be misleading. A diverse and extensive suite of evaluation metrics and benchmarks, covering all expected capabilities, is necessary to detect subtle regressions. This includes human-in-the-loop evaluation for subjective qualities like code readability and idiomatic correctness.

- Transparency and Communication with Users: Anthropic’s decision to publish a detailed postmortem was a commendable act of transparency. Open communication about issues, their causes, and solutions builds trust with the user community and provides valuable learning opportunities for the industry.

- The Complexity of LLM Development: LLMs are not monolithic entities but complex systems with interdependent components. Changes in one area (e.g., tokenizer, data) can have unforeseen ripple effects across the entire model. A holistic understanding and rigorous testing across the entire pipeline are indispensable.

- Continuous Learning and Adaptation: The field of AI is dynamic. Models are constantly evolving, and so must the processes for their development, deployment, and maintenance. Incidents like these serve as powerful learning opportunities to refine methodologies and build more resilient AI systems.

Impact on Future LLM Development

This incident will undoubtedly influence how Anthropic and other leading AI labs approach LLM development. We can expect to see:

- Increased investment in automated data quality pipelines and synthetic data generation.

- More sophisticated fine-tuning algorithms that mitigate catastrophic forgetting.

- Advanced monitoring and anomaly detection systems for LLM performance.

- Greater emphasis on modularity and versioning of LLM components (tokenizers, embeddings, model weights).

- A shift towards explainable AI (XAI) tools to better understand why models make certain decisions, especially when errors occur.

The Claude code quality degradation incident serves as a significant case study in the ongoing journey of building reliable and robust artificial intelligence. It underscores that even with advanced research and engineering, the path to perfect AI is fraught with unexpected challenges, and continuous vigilance, rigorous testing, and transparent communication are paramount.

Conclusion: Navigating the Complexities of LLM Evolution

The incident of Claude’s code quality degradation between late February and early April 2024 offers a profound glimpse into the intricate and often unpredictable challenges inherent in the development, maintenance, and continuous improvement of large language models. Anthropic’s transparent postmortem, detailing the three distinct bugs—a tokenizer-embedding mismatch, data contamination from experimental fine-tuning, and catastrophic forgetting due due to over-optimization—provides a invaluable technical deep dive for anyone involved in AI engineering.

What began as anecdotal user reports quickly escalated into a significant concern, highlighting the critical reliance of developers and businesses on the consistent performance of LLMs for specialized tasks like code generation. Anthropic’s systematic investigation, leading to the identification of these subtle yet impactful issues, underscores the complexity of diagnosing problems within multi-layered neural networks. The solutions implemented—re-aligning foundational components, enforcing rigorous data quality, and adopting balanced fine-tuning strategies—were not trivial and required a deep understanding of LLM mechanics.

Beyond the technical fixes, the incident has yielded crucial lessons that resonate across the AI industry:

- The Indispensability of Infrastructure: Robust version control, automated validation, and continuous monitoring are not luxuries but necessities for managing the lifecycle of complex AI models.

- Data as the Lifeblood of AI: The adage “garbage in, garbage out” is more pertinent than ever. Meticulous data curation, cleaning, and validation are non-negotiable, particularly when fine-tuning for specialized capabilities.

- The Delicate Art of Fine-tuning: Optimizing for specific tasks must be balanced with the preservation of general knowledge. Catastrophic forgetting is a real threat, demanding sophisticated regularization and multi-task learning approaches.

- The Power of Comprehensive Evaluation: A broad and diverse suite of benchmarks, including human evaluation, is essential to detect subtle regressions and ensure holistic model performance.

- Transparency and Trust: Openly communicating about failures, their root causes, and corrective actions fosters trust within the AI community and accelerates collective learning.

As AI models become more integrated into critical workflows, their reliability and consistency are paramount. The Claude incident serves as a powerful reminder that the journey of AI development is iterative, requiring constant vigilance, rigorous testing, and a willingness to confront and learn from failures. It reinforces the idea that building truly intelligent and robust systems is an ongoing scientific and engineering endeavor, filled with both remarkable progress and unexpected setbacks.

For developers, researchers, and AI enthusiasts, Anthropic’s experience offers a blueprint for navigating similar challenges. It encourages a proactive approach to quality assurance, a deep respect for the nuances of model training, and a commitment to transparency. By learning from such incidents, the AI community can collectively advance towards building more reliable, powerful, and trustworthy artificial intelligence systems for the future.

Useful Links

- Anthropic’s Claude 3 Post-Mortem (Official Source)

- GPT-3 Paper: Language Models are Few-Shot Learners

- Hugging Face Tokenizers Documentation

- DeepLearning.AI: What are Word Embeddings?

- Anthropic’s Claude 3 Family Announcement

- Overcoming Catastrophic Forgetting in Neural Networks

- O’Reilly: Fine-tuning Pre-trained Language Models

Access 40,000+ AI Prompts for ChatGPT, Claude & Codex — Free!

Subscribe to get instant access to our complete Notion Prompt Library — the largest curated collection of prompts for ChatGPT, Claude, OpenAI Codex, and other leading AI models. Optimized for real-world workflows across coding, research, content creation, and business.

Access Free Prompt Library🕐 Instant∞ Unlimited🎁 Free