Advanced Prompting for Agentic AI: How to Get the Most Out of GPT-5.5

The landscape of Artificial Intelligence is evolving at an unprecedented pace, with each new iteration of large language models (LLMs) pushing the boundaries of what’s possible. The advent of GPT-5.5 marks a significant leap, ushering in an era of truly “agentic” AI. This isn’t just about generating more coherent text or performing better on benchmarks; it’s about equipping AI with the ability to navigate complex, multi-part tasks, reason through ambiguity, and autonomously leverage external tools and knowledge sources. For developers, researchers, and AI enthusiasts, understanding how to effectively prompt these advanced models is no longer a luxury but a necessity for unlocking their full potential.


This guide delves deep into advanced prompting techniques specifically tailored for GPT-5.5’s agentic capabilities. We’ll explore how to structure prompts that encourage sophisticated reasoning, tool utilization, and robust error handling. Our focus will be on “messy,” real-world problems – the kind that defy simple, single-shot instructions and require an AI to plan, execute, and adapt.

The Paradigm Shift: From Reactive to Agentic AI

Before diving into specific prompting strategies, it’s crucial to understand the fundamental shift GPT-5.5 represents. Earlier LLMs, while impressive, were largely reactive. They processed input and generated output based on their training data and the immediate context of the prompt. Their “reasoning” was often a pattern-matching exercise, and their ability to handle multi-step problems was limited by the explicit instructions provided. If a step failed, they’d often get stuck or produce nonsensical output.

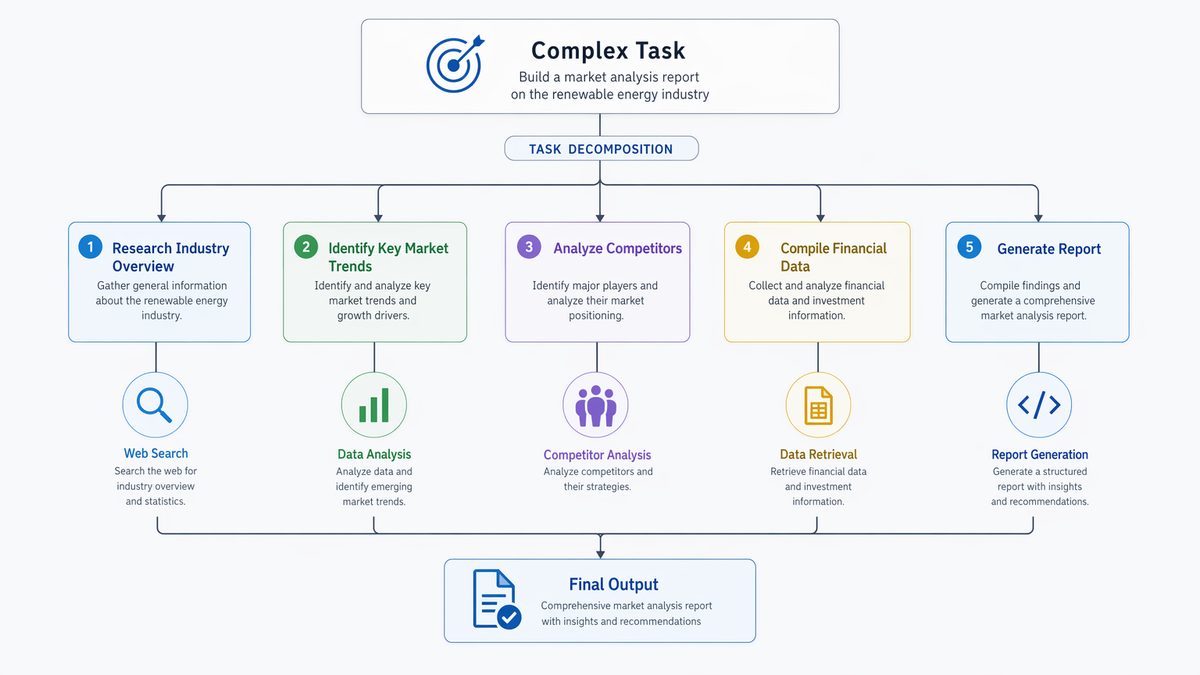

GPT-5.5, however, embodies a more agentic paradigm. This means it can:

- Plan and Decompose: Break down complex tasks into smaller, manageable sub-tasks.

- Reason and Self-Correct: Evaluate its own outputs, identify errors, and adjust its approach.

- Utilize Tools: Seamlessly integrate and interact with external APIs, databases, code interpreters, and web search engines to gather information or perform actions.

- Manage State and Context: Maintain a coherent understanding of the ongoing task across multiple interactions and tool calls.

- Handle Ambiguity: Ask clarifying questions when faced with unclear instructions or insufficient information, rather than making assumptions.

This agentic behavior is powered by a combination of architectural enhancements, vastly expanded training data, and sophisticated fine-tuning specifically designed to foster planning and tool-use capabilities. The implication for prompting is profound: we are no longer just instructing a text generator; we are directing an intelligent assistant capable of autonomous problem-solving.

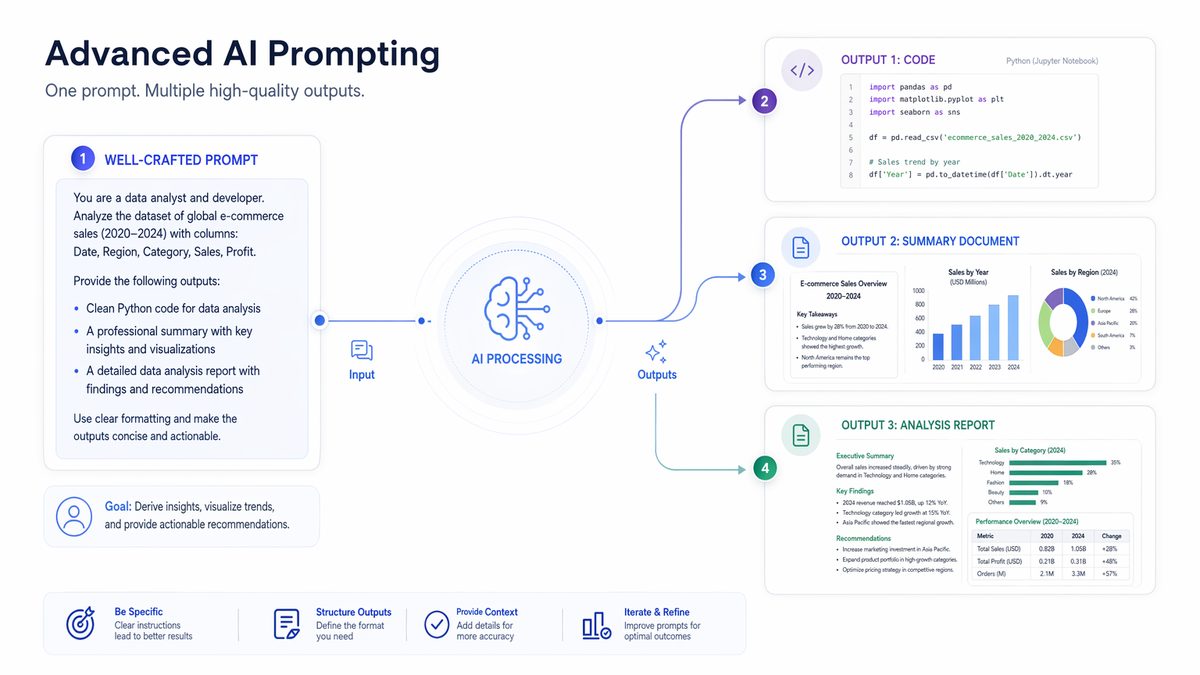

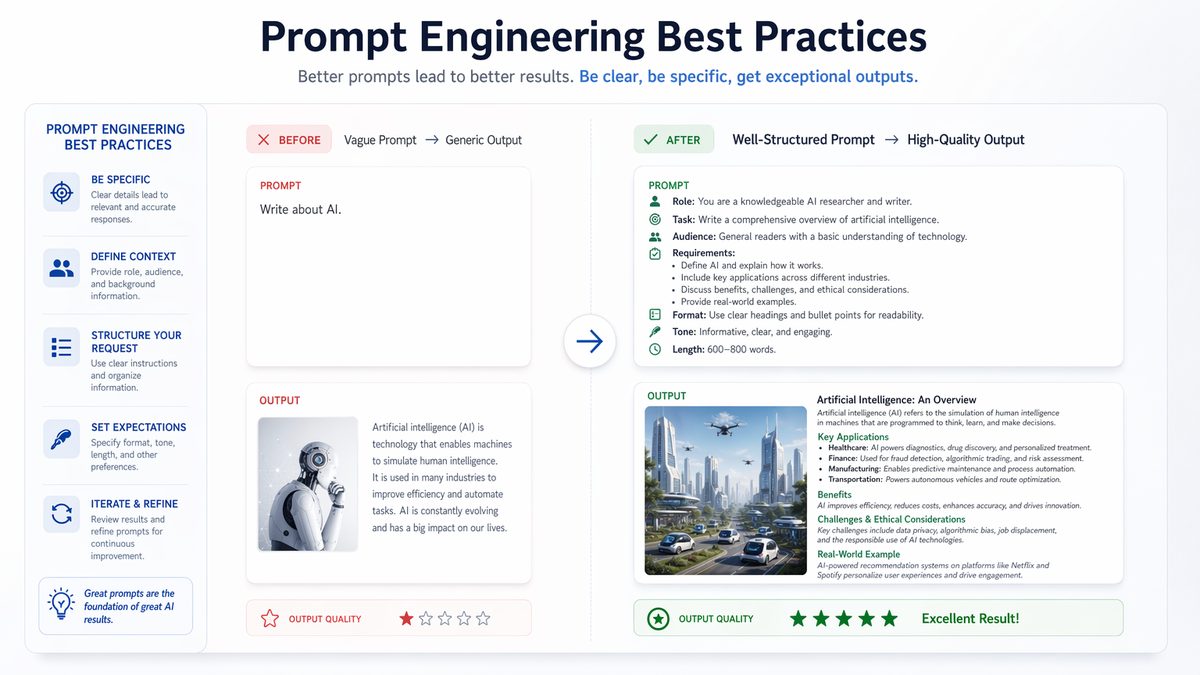

Core Principles of Agentic Prompting with GPT-5.5

Effective prompting for agentic AI moves beyond simple instruction following. It’s about setting up an environment where the AI can thrive, providing it with the necessary context, constraints, and resources to act as a competent agent. Here are the core principles:

1. Define the Goal, Not Just the Steps

Instead of meticulously outlining every single step, articulate the ultimate objective. GPT-5.5 is designed to figure out the optimal path. For example, instead of “First, search for X, then summarize it, then write an email,” try “Draft an email to [Recipient] summarizing the key findings on [Topic X] and suggesting [Action Y].” This allows the model to determine the best sequence of actions, including when and how to use tools.

2. Empower with Tools and Explain Their Capabilities

The ability to use tools is central to agentic AI. You must explicitly define the tools available to GPT-5.5 and explain their functions, expected inputs, and outputs. This is often done through a structured function calling mechanism, where the model can output a JSON-like structure indicating which tool to call and with what arguments.

Consider a scenario where GPT-5.5 needs to interact with a database and a web search engine. Your prompt might include a description of these tools:

You have access to the following tools:

1. `web_search(query: str)`: Searches the internet for the given query and returns relevant snippets. Use this for general knowledge, current events, or external data.

2. `database_query(sql_statement: str)`: Executes an SQL query against the internal product database. Returns results as a list of dictionaries. Use this for specific product information, user data, or inventory levels.

3. `send_email(recipient: str, subject: str, body: str)`: Sends an email to the specified recipient.

When you need to use a tool, respond with a JSON object like this:

`{"tool_name": "tool_name_here", "parameters": {"param1": "value1", "param2": "value2"}}`

After a tool call, I will provide the tool's output. You should then continue your task.

This explicit definition is crucial. GPT-5.5 will then integrate these tools into its planning process.
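To make the round trip concrete, here is a minimal Python sketch of the application side of this protocol: it parses the model's JSON reply and runs the matching tool. The stub tool bodies, the `TOOLS` registry, and the `dispatch_tool_call` helper are illustrative assumptions, not part of any GPT-5.5 API, and `send_email` is left out for brevity.

```python
import json

# Hypothetical stand-ins for two of the tools described in the prompt above.
def web_search(query: str) -> list:
    return [f"snippet about {query}"]

def database_query(sql_statement: str) -> list:
    return [{"product": "example", "units_sold": 0}]

# Registry mapping the names the model may emit to callables.
TOOLS = {"web_search": web_search, "database_query": database_query}

def dispatch_tool_call(model_output: str) -> dict:
    """Parse the model's JSON tool call and run the matching tool."""
    try:
        call = json.loads(model_output)
    except json.JSONDecodeError as exc:
        return {"error": f"Model output was not valid JSON: {exc}"}
    if not isinstance(call, dict):
        return {"error": "Tool call must be a JSON object."}
    name = call.get("tool_name")
    params = call.get("parameters", {})
    if name not in TOOLS:
        return {"error": f"Unknown tool: {name}"}
    try:
        return {"result": TOOLS[name](**params)}
    except TypeError as exc:
        # Raised when parameter names do not match the tool's signature.
        return {"error": f"Bad parameters for {name}: {exc}"}

reply = '{"tool_name": "web_search", "parameters": {"query": "AI ethics"}}'
print(dispatch_tool_call(reply))
```

Returning errors as data instead of raising them lets the calling code pass the failure back to the model as the tool's output, so the model can decide how to recover.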

3. Establish a Persona and Role

Giving the AI a specific persona or role can significantly influence its output and decision-making. If it’s an “expert financial analyst,” it will approach problems differently than a “creative content writer.” This helps align its internal reasoning with the desired outcome. For example: “You are an expert project manager tasked with identifying potential risks for a software development project…”

4. Provide Constraints and Guardrails

Agentic AI, while powerful, still requires guidance. Define boundaries, ethical considerations, and output formats. If a task involves sensitive data, instruct the AI on privacy protocols. If the output needs to be concise, specify a word limit. For example: “Ensure all communication adheres to company privacy policies. Limit the summary to 200 words.”
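A constraint written into a prompt can also be checked in code after the model responds. The sketch below verifies the 200-word limit from the example above; the helper name `within_word_limit` and the sample text are hypothetical.

```python
def within_word_limit(text: str, max_words: int = 200) -> bool:
    """Return True when text has at most max_words whitespace-separated words."""
    return len(text.split()) <= max_words

summary = "Quarterly revenue grew while support costs fell."
if not within_word_limit(summary):
    # In a real workflow this would trigger a follow-up request to the model.
    print("Summary exceeds the limit; ask the model to shorten it.")
```

A check like this does not replace the instruction in the prompt; it catches the cases where the model does not follow it.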

5. Encourage Self-Reflection and Iteration (Chain of Thought / Tree of Thought)

One of the most powerful techniques for agentic AI is to encourage it to “think aloud” or explicitly plan its steps. This is often achieved through Chain-of-Thought (CoT) or Tree-of-Thought (ToT) prompting. By asking the model to first outline its reasoning or plan before executing, you can guide its process and even intervene if its plan seems flawed.

Chain-of-Thought (CoT): Simply adding “Let’s think step by step” or “Here’s my plan to achieve this:” can markedly improve performance on complex reasoning tasks, because it prompts the model to externalize its reasoning before it answers.

Tree-of-Thought (ToT): A more advanced form where the model explores multiple reasoning paths, evaluates them, and prunes less promising ones. While not directly promptable in a single turn, you can simulate this by asking the model to propose multiple approaches and then select the best one or explain its choice.

Example of CoT integration:

Task: Analyze the quarterly sales data for Q3 2023, identify the top 3 performing products, and suggest marketing strategies to boost sales for the bottom 3 performing products in Q4.

Let's think step by step.

1. First, I need to access the sales data for Q3 2023. I will use the `database_query` tool for this.

2. Once I have the data, I will parse it to identify product sales.

3. Then, I will sort products by sales volume to find the top 3 and bottom 3.

4. For the top 3, I will simply list them.

5. For the bottom 3, I will use `web_search` to research common marketing strategies for similar product categories.

6. Finally, I will synthesize this information into a comprehensive report, suggesting specific strategies for each underperforming product.

This explicit planning output from GPT-5.5 makes its process transparent and allows for better debugging and understanding.
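Steps 2 through 4 of the plan above come down to a sort and two slices. The sketch below assumes the query returns a list of dictionaries, as the `database_query` description states; the product names, the `units_sold` field, and the figures are invented for illustration.

```python
# Hypothetical rows shaped like the list of dictionaries that
# `database_query` is described as returning.
rows = [
    {"product": "Laptop", "units_sold": 120},
    {"product": "Mouse", "units_sold": 340},
    {"product": "Keyboard", "units_sold": 210},
    {"product": "Monitor", "units_sold": 95},
    {"product": "Webcam", "units_sold": 40},
    {"product": "Headset", "units_sold": 75},
    {"product": "Dock", "units_sold": 15},
]

def top_and_bottom(rows, key="units_sold", n=3):
    """Return (top n, bottom n) rows, both ordered from highest to lowest."""
    ranked = sorted(rows, key=lambda row: row[key], reverse=True)
    return ranked[:n], ranked[-n:]

top, bottom = top_and_bottom(rows)
print([row["product"] for row in top])
print([row["product"] for row in bottom])
```

With fewer than six rows the two groups would overlap, so a production version would need to decide how to handle small catalogs.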

6. Handle Ambiguity and Ask for Clarification

Messy, real-world tasks are rarely perfectly defined. Agentic AI should be capable of recognizing ambiguity and asking for more information instead of guessing. You can explicitly instruct it to do so:

If any part of the request is unclear or ambiguous, please ask clarifying questions before proceeding. Do not make assumptions.

This is a critical aspect of robust agentic behavior, preventing errors caused by misinterpretation.
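The same idea can be backed up on the application side: before a tool runs, check whether every required argument is present and, if not, turn the gap into a question. This is a sketch under the assumption that tools are plain Python functions; `missing_parameters` and `clarifying_question` are hypothetical helpers, and `send_email` is a stub.

```python
import inspect
from typing import Callable, Optional

def send_email(recipient: str, subject: str, body: str) -> dict:
    """Hypothetical stub; a real implementation would send the message."""
    return {"success": True}

def missing_parameters(tool: Callable, provided: dict) -> list:
    """List the required parameters of tool that are absent from provided."""
    required = [
        name
        for name, param in inspect.signature(tool).parameters.items()
        if param.default is inspect.Parameter.empty
    ]
    return [name for name in required if name not in provided]

def clarifying_question(tool: Callable, provided: dict) -> Optional[str]:
    """Return a question for the user, or None when nothing is missing."""
    missing = missing_parameters(tool, provided)
    if not missing:
        return None
    return "Before I continue, could you provide: " + ", ".join(missing) + "?"

print(clarifying_question(send_email, {"recipient": "customer@example.com"}))
```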

7. Implement Feedback Loops and Iterative Refinement

Agentic AI systems are often part of a larger workflow. Design your interaction to allow for feedback. If the AI produces an unsatisfactory result, provide specific feedback, and ask it to revise. This is where human-in-the-loop interaction becomes powerful.

"The previous summary was too technical for a general audience. Please revise it, simplifying the language and focusing on the business impact."

GPT-5.5 can then take this feedback and refine its output, potentially re-planning or re-executing tool calls.

Advanced Techniques for Complex Scenarios

Orchestrating Multi-Tool Workflows

GPT-5.5 excels when it can orchestrate a sequence of tool calls. Consider a task like “Research the latest trends in AI ethics, summarize them, identify key regulatory bodies, and draft a policy brief for our legal team.”

- Initial Plan: The model might first decide to use `web_search` to find “latest trends in AI ethics” and “key regulatory bodies in AI.”

- Execution & Observation: It makes the `web_search` calls. The system provides the search results.

- Intermediate Step: GPT-5.5 processes the search results, extracts relevant information, and summarizes it internally.

- Second Tool Call (Optional but illustrative): If the policy brief requires specific legal precedents, it might decide to call a `legal_database_query` tool (if available) with targeted search terms.

- Synthesis: Finally, it synthesizes all gathered information and drafts the policy brief, adhering to the specified format and audience.

The key here is that the model dynamically decides which tools to use and when, based on its current state and the overall goal. Your prompt sets the stage, defines the tools, and outlines the objective, allowing the model to fill in the operational details.

Dynamic Prompting and Contextual Adaptation

For long-running tasks or interactive agents, the prompt itself can evolve. In “dynamic prompting,” each subsequent prompt is informed by the AI’s previous outputs, tool results, and the ongoing conversation. The focus shifts from a single, static prompt to a continuous dialogue in which context is carefully managed.

- State Management: Maintain a clear record of the conversation history, tool calls, and their outputs. This forms the “memory” of the agent.

- Observation-Action Loop: The agent observes the world (tool outputs, user feedback), decides on an action (tool call, response generation), executes it, and then observes again.
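The two ideas above, a running history and an observe-decide-act cycle, can be sketched in a few lines of Python. The `model` argument is any function that maps the history to the next output; `scripted_model` is a hypothetical stand-in for a real model call, and the message format is an assumption, not a specific vendor's API.

```python
import json

def run_agent(model, tools, user_message, max_steps=5):
    """Run a simple observe-decide-act loop and return (answer, history)."""
    history = [{"role": "user", "content": user_message}]  # the agent's memory
    for _ in range(max_steps):
        output = model(history)  # decide
        history.append({"role": "assistant", "content": output})
        try:
            call = json.loads(output)
        except json.JSONDecodeError:
            return output, history  # plain text is treated as the final answer
        if not isinstance(call, dict) or "tool_name" not in call:
            return output, history
        tool = tools.get(call["tool_name"])
        if tool is None:
            observation = {"error": f"Unknown tool: {call['tool_name']}"}
        else:
            observation = {"result": tool(**call.get("parameters", {}))}  # act
        # Observe: the tool output becomes part of the context for the next step.
        history.append({"role": "tool", "content": json.dumps(observation)})
    return "Stopped: step limit reached.", history

def scripted_model(history):
    # Hypothetical stand-in for a real model: search once, then answer.
    if history[-1]["role"] == "user":
        return json.dumps(
            {"tool_name": "web_search", "parameters": {"query": "AI ethics trends"}}
        )
    return "Here is a summary based on the search results."

tools = {"web_search": lambda query: [f"snippet about {query}"]}
answer, history = run_agent(scripted_model, tools, "Research AI ethics trends.")
print(answer)
```

The `max_steps` cap is a deliberate design choice: it keeps a confused agent from looping indefinitely and bounds cost and latency.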


Handling Errors and Fallbacks

Real-world systems fail. Tools might return errors, network requests might time out, or data might be malformed. A truly agentic AI should be able to gracefully handle these situations.

You can prompt GPT-5.5 to include error handling in its plan:

If a tool call fails, analyze the error message. If it's a transient error (e.g., network timeout), retry the call up to 3 times with a brief delay. If it's a persistent error (e.g., invalid parameters), explain the error to the user and suggest alternative approaches or ask for clarification.
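The same policy can be enforced in the code that executes tool calls, so that it does not rest on the model's judgment alone. The sketch below retries transient failures up to three times and reports persistent ones immediately; `TransientToolError` and `call_with_retry` are hypothetical names, and which exceptions count as transient is an assumption to adapt to your tools.

```python
import time

class TransientToolError(Exception):
    """Hypothetical marker for errors worth retrying, such as timeouts."""

def call_with_retry(tool, *, retries=3, delay_seconds=0.5, **params):
    """Call tool, retrying transient failures up to `retries` times."""
    for attempt in range(retries + 1):
        try:
            return {"result": tool(**params)}
        except TransientToolError as exc:
            if attempt == retries:
                return {"error": f"Still failing after {retries} retries: {exc}"}
            time.sleep(delay_seconds)  # brief pause before the next attempt
        except (TypeError, ValueError) as exc:
            # Persistent problem: repeating the same call will not help.
            return {"error": f"Invalid call: {exc}"}

attempts = {"count": 0}

def flaky_search(query):
    # Fails twice with a simulated timeout, then succeeds.
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise TransientToolError("timeout")
    return f"results for {query}"

print(call_with_retry(flaky_search, delay_seconds=0, query="AI ethics"))
```

The returned error dictionary can be handed back to the model as the tool's output, which gives it what it needs to explain the failure or propose an alternative.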


Few-Shot and CoT-Few-Shot Prompting

While GPT-5.5 is powerful, providing a few examples (few-shot prompting) of successful task completion, especially with explicit Chain-of-Thought reasoning, can significantly improve performance on specific types of tasks. This helps the model align with your desired format and reasoning style.

Example 1:

User: "Find the current stock price of AAPL and tell me if it's a good time to buy based on recent news."

Agent's Thought Process:

1. The user is asking for a stock price and a sentiment analysis based on news.

2. I need a tool to get the stock price. Let's assume `get_stock_price(symbol: str)` is available.

3. I need a tool to search for recent news. `web_search(query: str)` is suitable.

4. Plan: Call `get_stock_price("AAPL")`. Then call `web_search("AAPL stock news recent")`. Analyze the news sentiment. Synthesize results.

Tool Call 1: {"tool_name": "get_stock_price", "parameters": {"symbol": "AAPL"}}

... (Tool output provided) ...

Tool Call 2: {"tool_name": "web_search", "parameters": {"query": "AAPL stock news recent"}}

... (Tool output provided) ...

Final Response: "AAPL's current stock price is [price]. Recent news indicates [summary of sentiment]. Based on this, [investment advice]."

Example 2:

User: "Summarize the latest research on quantum computing applications in medicine."

Agent's Thought Process:

1. The user wants a summary of specific research.

2. I need to search for research papers. `web_search(query: str)` is the appropriate tool.

3. Plan: Call `web_search("quantum computing applications medicine research papers")`. Read and summarize the key findings.

Tool Call 1: {"tool_name": "web_search", "parameters": {"query": "quantum computing applications medicine research papers"}}

... (Tool output provided) ...

Final Response: "Recent research in quantum computing applications in medicine focuses on [key areas] such as [example 1] and [example 2]. Promising breakthroughs include [specific findings]."

Now, your turn:

User: "What's the weather like in London tomorrow, and what should I wear?"

By showing a few complete examples, including the thought process and tool calls, you provide a powerful learning signal to GPT-5.5.
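When the examples live in code, assembling a prompt like the one above becomes a formatting task. This is a minimal sketch; the `FewShotExample` structure and `build_few_shot_prompt` are hypothetical, and tool calls are omitted to keep it short.

```python
from dataclasses import dataclass

@dataclass
class FewShotExample:
    user: str
    thought_process: list
    final_response: str

def build_few_shot_prompt(examples, new_request):
    """Render worked examples followed by the new request."""
    parts = []
    for index, example in enumerate(examples, start=1):
        steps = "\n".join(
            f"{number}. {step}"
            for number, step in enumerate(example.thought_process, start=1)
        )
        parts.append(
            f"Example {index}:\n"
            f'User: "{example.user}"\n'
            f"Agent's Thought Process:\n{steps}\n"
            f'Final Response: "{example.final_response}"'
        )
    parts.append(f'Now, your turn:\nUser: "{new_request}"')
    return "\n\n".join(parts)

examples = [
    FewShotExample(
        user="Summarize the latest research on quantum computing applications in medicine.",
        thought_process=[
            "The user wants a summary of specific research.",
            "I need to search for research papers.",
        ],
        final_response="Recent research focuses on [key areas].",
    )
]
print(build_few_shot_prompt(examples, "What's the weather like in London tomorrow?"))
```

Keeping examples as data makes it easier to swap them per task type or to trim them when the prompt grows too long.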

Implementing Agentic Workflows: A Practical Example

Let’s walk through a more comprehensive example: a “Customer Support Agent” that can answer questions, check order status, and log issues.

Scenario: Customer Support Agent

The agent needs to:

- Answer general FAQs.

- Check the status of a customer’s order using an internal API.

- Log a technical support ticket if an issue is reported.

- Handle cases where information is missing or ambiguous.

Available Tools:

- `faq_database_search(query: str)`: Searches the FAQ knowledge base. Returns relevant FAQ entries.

- `get_order_status(order_id: str, customer_email: str)`: Retrieves the status of an order. Returns order details or an error if not found.

- `log_support_ticket(customer_email: str, issue_description: str, severity: str)`: Creates a new support ticket. Returns a ticket ID.

- `send_customer_email(recipient: str, subject: str, body: str)`: Sends an email to the customer.

System Prompt (Initial Setup):

You are a helpful and efficient Customer Support Agent for "TechSolutions Inc." Your primary goal is to assist customers with their inquiries, resolve issues, and provide accurate information.

You have access to the following tools:

1. `faq_database_search(query: str)`: Searches the internal FAQ knowledge base for answers to common questions. Returns a list of relevant FAQ entries.

Example output: `{"faqs": [{"question": "How to reset password?", "answer": "Go to settings > security > reset password."}]}`

2. `get_order_status(order_id: str, customer_email: str)`: Retrieves the current status of a customer's order. Requires both `order_id` and `customer_email`. Returns order details (e.g., status, shipping date, items) or an error if the order is not found or details are incorrect.

Example output (success): `{"order_id": "12345", "status": "Shipped", "shipping_date": "2023-10-26", "items": ["Laptop", "Mouse"]}`

Example output (failure): `{"error": "Order not found or details incorrect."}`

3. `log_support_ticket(customer_email: str, issue_description: str, severity: str)`: Creates a new technical support ticket. Requires `customer_email`, a detailed `issue_description`, and `severity` (Low, Medium, High). Returns the new ticket ID.

Example output: `{"ticket_id": "TS-001-20231025-001"}`

4. `send_customer_email(recipient: str, subject: str, body: str)`: Sends an email to the customer.

Example output: `{"success": true}`

When you need to use a tool, respond with a JSON object like this:

`{"tool_name": "tool_name_here", "parameters": {"param1": "value1", "param2": "value2"}}`

After a tool call, I will provide the tool's output. You should then continue your task.

Always think step by step before responding or using a tool. If you need more information from the user (e.g., order ID, email for logging a ticket), ask for it clearly and politely. If a tool call fails, analyze the error and inform the user, suggesting next steps.

Your response should always aim to be helpful and resolve the customer's query efficiently.

---
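For testing a prompt like this without touching production systems, the tool contracts can be backed by simple stubs. The sketch below covers two of the four tools and returns the same output shapes as the examples in the system prompt. The in-memory order table, the sample email address, and the short ticket ID format are assumptions made for illustration.

```python
from itertools import count

ALLOWED_SEVERITIES = ("Low", "Medium", "High")

# Hypothetical in-memory order table standing in for the internal order API.
_ORDERS = {
    ("12345", "customer@example.com"): {
        "order_id": "12345",
        "status": "Shipped",
        "shipping_date": "2023-10-26",
        "items": ["Laptop", "Mouse"],
    }
}
_ticket_numbers = count(1)

def get_order_status(order_id: str, customer_email: str) -> dict:
    """Return order details, or the error shape shown in the system prompt."""
    order = _ORDERS.get((order_id, customer_email.strip().lower()))
    if order is None:
        return {"error": "Order not found or details incorrect."}
    return order

def log_support_ticket(customer_email: str, issue_description: str, severity: str) -> dict:
    """Validate the inputs, then return a ticket ID in a made-up format."""
    if severity not in ALLOWED_SEVERITIES:
        return {"error": "severity must be one of " + ", ".join(ALLOWED_SEVERITIES)}
    if not issue_description.strip():
        return {"error": "issue_description must not be empty"}
    return {"ticket_id": f"TS-{next(_ticket_numbers):03d}"}

print(get_order_status("54321", "customer@example.com"))
```

Validating `severity` inside the tool, not only in the prompt, means a malformed call from the model produces a clear error it can react to instead of a bad record.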

Interaction Flow Example:

User: “My laptop is not turning on. I bought it last week.”

GPT-5.5 (Agent’s thought process and response):

Agent's Thought Process:

1. The user is reporting a technical issue with a laptop.

2. This sounds like a problem that requires logging a support ticket.

3. To log a support ticket, I need the customer's email, a detailed issue description, and severity.

4. I have a description ("laptop not turning on, bought last week"), but I need the customer's email and a severity level.

5. I should ask the user for their email and suggest logging a ticket.

Response:

"I'm sorry to hear your laptop isn't turning on. That sounds frustrating, especially after just a week! I can help you log a support ticket for this issue. Could you please provide your email address so that we can create the ticket and our support team can contact you? I will set the severity to 'High' given it's a new laptop."

... (User provides their email address) ...

Agent's Thought Process:

1. The user provided their email address.

2. Now I have all the necessary information to log a support ticket: `customer_email` ([email protected]), `issue_description` ("Laptop not turning on, bought last week."), and `severity` ("High").

3. I should use the `log_support_ticket` tool.

Tool Call:

{"tool_name": "log_support_ticket", "parameters": {"customer_email": "[email protected]", "issue_description": "Laptop not turning on, bought last week.", "severity": "High"}}

Tool Output:

{"ticket_id": "TS-001-20231025-002"}

Agent's Thought Process:

1. The `log_support_ticket` tool call was successful, and I received a ticket ID.

2. I should inform the user about the successful ticket creation and provide the ticket ID.

3. I should also tell them what to expect next.

Response:

"Thank you, John. I've successfully logged a technical support ticket for you. Your ticket ID is TS-001-20231025-002. Our technical support team will review your case and get in touch with you shortly at [email protected] to assist further. Please keep an eye on your inbox."

Another Interaction – Order Status:

User: “Can I check my order status? My order number is 54321.”

GPT-5.5 (Agent’s thought process and response):

Agent's Thought Process:

1. The user wants to check an order status.

2. I have the `get_order_status` tool for this.

3. The `get_order_status` tool requires `order_id` and `customer_email`.

4. The user provided `order_id` (54321) but not `customer_email`.

5. I need to ask for the customer's email.

Response:

"Yes, I can certainly help you check your order status. To do so, I'll need your email address associated with the order, in addition to the order number (54321) you provided. Could you please share your email?"

... (User provides their email address) ...

Agent's Thought Process:

1. The user provided the email address.

2. Now I have both `order_id` (54321) and `customer_email` ([email protected]).

3. I should use the `get_order_status` tool.

Tool Call:

{"tool_name": "get_order_status", "parameters": {"order_id": "54321", "customer_email": "[email protected]"}}

Tool Output:

{"error": "Order not found or details incorrect."}

Agent's Thought Process:

1. The `get_order_status` tool call returned an error: "Order not found or details incorrect."

2. This means the order could not be found with the provided details.

3. I should inform the user about the error and suggest possible reasons or next steps.

Response:

"I apologize, but I couldn't find an order with the number 54321 associated with the email [email protected]. Please double-check if the order number or email address is correct. If you used a different email for the purchase, please provide that. Alternatively, if you believe there's an error, I can help you log a general inquiry ticket."

Across these two interactions, the agent demonstrates the ability to:

- Understand user intent.

- Identify required parameters for tool calls.

- Ask clarifying questions when information is missing.

- Execute tool calls.

- Interpret tool outputs, including errors.

- Formulate appropriate responses based on the entire interaction context.

Challenges and Considerations

While GPT-5.5’s agentic capabilities are powerful, there are still challenges to consider:

1. Hallucinations in Planning

Even with CoT, the model might “hallucinate” a plan or a tool call that doesn’t quite make sense or isn’t optimal. Constant monitoring and refinement of prompts are necessary.

2. Tool Definition Fidelity

The quality of the tool definitions directly impacts the agent’s ability to use them correctly. Vague or incomplete definitions will lead to poor tool utilization. It’s essential to provide clear, concise, and accurate descriptions of tool capabilities, inputs, and outputs.

3. Cost and Latency

Complex agentic workflows involving multiple tool calls and extensive “thinking” steps can be more computationally expensive and introduce higher latency compared to single-shot prompts. Optimize tool calls and prompt structures to balance effectiveness with efficiency.

4. Security and Permissions

When granting an AI access to external tools, especially those that can perform actions (e.g., sending emails, modifying databases), robust security measures and strict permissioning are paramount. The AI should only have access to what it absolutely needs.

5. Explainability and Auditability

For critical applications, understanding *why* the AI chose a particular action or tool call is important. Encouraging explicit thought processes (CoT) helps, but comprehensive logging of all interactions, tool calls, and their outputs is essential for auditing and debugging. The need for explainable AI is a growing concern, particularly as models become more autonomous.

The Future is Agentic

GPT-5.5 represents a significant step towards truly autonomous and intelligent agents. The ability to reason, plan, use tools, and self-correct opens up vast new possibilities for automation, research, and problem-solving across virtually every industry. Mastering the art and science of advanced prompting for these agentic models will be a core skill for anyone looking to harness the cutting edge of AI. As these models continue to evolve, the distinction between a “prompt” and a “program” will increasingly blur, giving rise to a new paradigm of AI development where natural language serves as the primary interface for instructing complex, intelligent systems.

Useful Links

- OpenAI Function Calling Blog Post

- Chain-of-Thought Prompting Paper

- Tree-of-Thought Prompting Paper

- DeepLearning.AI Prompt Engineering Course

- Anthropic Claude Tool Use Documentation

- Prompting Guide
