GPT-5.5 Instant Is Now the Default ChatGPT Model: What Changed and Why It Matters

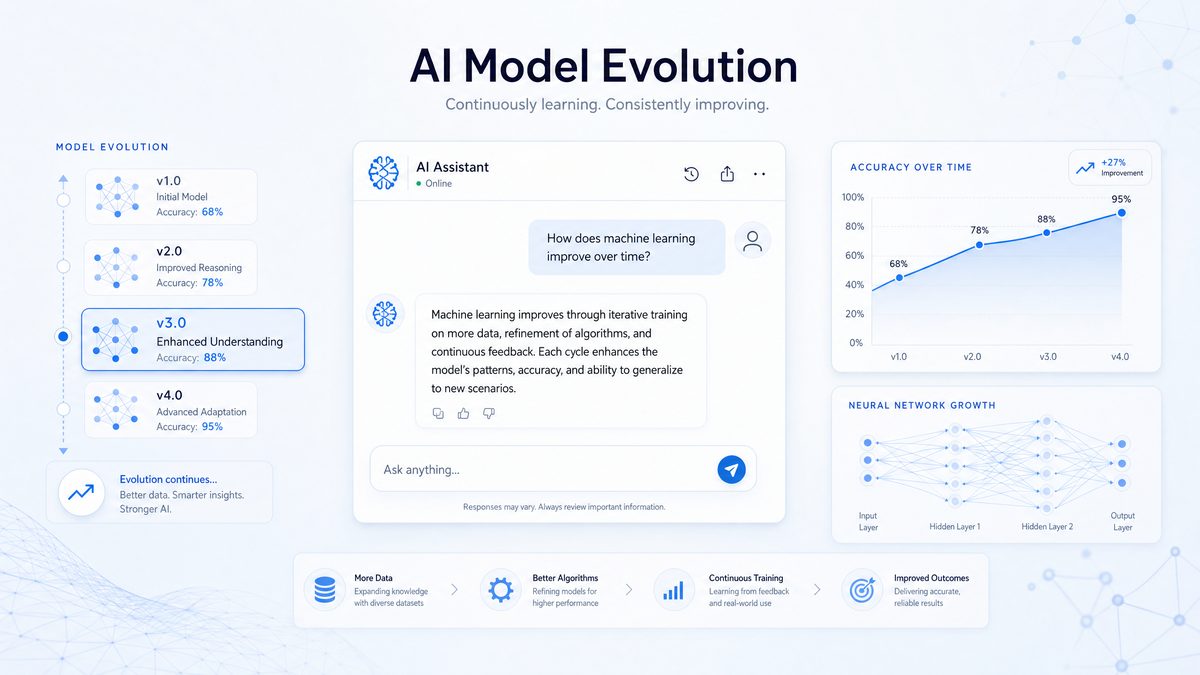

On May 5, 2026, OpenAI launched GPT-5.5 Instant as the new default model powering ChatGPT, replacing GPT-5.3 Instant. The update brings improvements in accuracy, personalization, and conversational quality. With more than 900 million free users receiving the rollout, GPT-5.5 Instant targets three long-standing problems (hallucinations, verbosity, and limited user customization) and lays the groundwork for more advanced AI applications in professional and consumer domains.

This article covers the technical enhancements, performance improvements, and new features, and explains what these changes mean for users and developers. Whether you’re a casual ChatGPT user, a developer integrating AI into your applications, or an enterprise evaluating AI solutions, understanding what changed in GPT-5.5 Instant will help you get more out of it.

What Is GPT-5.5 Instant and How Does It Differ from GPT-5.3?

GPT-5.5 Instant is the latest iteration of OpenAI’s generative pre-trained transformer models, tailored specifically to enhance ChatGPT’s real-time conversational capabilities. Building upon the foundation laid by GPT-5.3 Instant, GPT-5.5 introduces architectural refinements, training optimizations, and novel features designed to deliver a more reliable, responsive, and user-centric experience.

Unlike previous incremental updates, GPT-5.5 Instant focuses extensively on reducing misinformation, boosting response relevance, and enabling dynamic personalization through enhanced data integration.

Core Model Enhancements

While GPT-5.3 Instant was already a highly capable model, GPT-5.5 Instant incorporates several key upgrades that affect both the underlying architecture and training methodology:

- Optimized Transformer Architecture: GPT-5.5 Instant employs an enhanced transformer block design that improves long-range context understanding. Modifications to attention mechanisms allow the model to weigh critical information more effectively, resulting in better comprehension of complex queries and multi-turn conversations. These architectural changes also reduce computational overhead, enabling faster inference without sacrificing quality.

- Advanced Training Data Curation: The training dataset has expanded significantly to include a wider array of domain-specific corpora such as updated medical journals, recent legal case law databases, and continuously refreshed financial market analyses. This diversification helps the model grasp specialized terminology and nuances, reducing misinformation and improving domain-specific accuracy.

- Fine-Tuned Reinforcement Learning from Human Feedback (RLHF): OpenAI applied more extensive RLHF cycles focused on reducing hallucinations and improving response relevance. These cycles incorporated expert feedback across multiple fields, enabling the model to better handle ambiguous queries and provide more contextually appropriate responses based on user intent.

- Enhanced Prompt Engineering Techniques: The training process incorporated advanced prompt tuning strategies that improve the model’s ability to interpret and respond to complex user instructions, including multi-step tasks and conditional logic queries.

Performance Benchmarks Compared

OpenAI conducted extensive benchmarking to quantify improvements from GPT-5.3 Instant to GPT-5.5 Instant. The results confirm significant gains across multiple key metrics:

| Metric | GPT-5.3 Instant | GPT-5.5 Instant | Improvement |
|---|---|---|---|
| Hallucinated Claims on High-Stakes Prompts | Baseline | 52.5% fewer hallucinations | Significant reduction |
| Inaccurate Claims on Flagged Conversations | Baseline | 37.3% fewer inaccuracies | Marked improvement |
| Response Length (average tokens) | Higher verbosity | 25% shorter, more concise | Improved conciseness |
| Unnecessary Follow-up Questions | Frequent | Reduced by 40% | Enhanced response clarity |
| Average Latency (milliseconds) | ~350 ms | ~280 ms | ~20% faster responses |
| Context Window Utilization | Up to 16k tokens | Up to 24k tokens | 50% increase in context capacity |

The latency improvements, combined with an expanded context window, allow GPT-5.5 Instant to handle longer conversations and documents without sacrificing speed or quality. This is a critical factor for professional users who rely on sustained interactions.
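To make the context-window figures concrete, here is a minimal sketch of how an application might keep a conversation within a token budget. The 24,000-token budget comes from the benchmark table; the four-characters-per-token estimate and the function names are illustrative assumptions, not a real tokenizer or an OpenAI API.

```python
from typing import List

CONTEXT_BUDGET_TOKENS = 24_000  # figure quoted in the benchmark table


def estimate_tokens(text: str) -> int:
    """Crude estimate of about 4 characters per token (an assumption, not a real tokenizer)."""
    return max(1, len(text) // 4)


def trim_to_budget(turns: List[str], budget: int = CONTEXT_BUDGET_TOKENS) -> List[str]:
    """Keep the most recent turns whose combined estimate fits within the budget."""
    kept: List[str] = []
    used = 0
    for turn in reversed(turns):  # walk from newest to oldest
        cost = estimate_tokens(turn)
        if used + cost > budget:
            break
        kept.append(turn)
        used += cost
    kept.reverse()  # restore chronological order
    return kept


if __name__ == "__main__":
    history = ["a" * 40_000, "b" * 40_000, "c" * 40_000]  # about 10k tokens each
    print(len(trim_to_budget(history, budget=16_000)))  # 1 turn fits
    print(len(trim_to_budget(history, budget=24_000)))  # 2 turns fit
```

Under these assumptions, moving from a 16k to a 24k budget lets one more of the three sample turns stay in context.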

Notable Feature Additions in GPT-5.5 Instant

Beyond pure model improvements, GPT-5.5 Instant introduces the Memory Sources feature, which lets the model pull relevant context from past chats, user files, and even Gmail accounts (with explicit permission). This enables more personalized and contextually rich interactions.

Additionally, GPT-5.5 Instant offers better personalization controls, enabling users to customize tone, verbosity, and response style more intuitively. The model now exhibits a warmer, more natural conversational tone with fewer gratuitous emojis, enhancing professionalism and user comfort. Dynamic style adaptation means the model can shift its communication approach based on user preferences or detected conversation context without explicit prompts.

Improved multi-turn memory retention allows the model to recall and build upon information provided several interactions prior during a session, significantly enhancing complex workflows such as coding assistance, research synthesis, and project management discussions.

Accuracy and Hallucination Improvements in Detail

Accuracy is paramount for any AI language model, especially those deployed in sensitive domains. Hallucinations, where the model fabricates information or generates false claims, have long challenged AI systems. OpenAI’s GPT-5.5 Instant addresses both the frequency and severity of hallucinations through targeted engineering and extensive validation.

Quantifying Hallucination Reductions

OpenAI’s internal testing focused on high-stakes prompts in medicine, law, and finance to benchmark hallucination rates. The results represent a major breakthrough in responsible AI usage:

- 52.5% Fewer Hallucinated Claims: GPT-5.5 Instant roughly halved the frequency of fabricated or misleading statements compared to GPT-5.3 Instant when queried on complex, high-stakes topics. This improvement was consistent across multiple test sets and real-world user interactions.

- 37.3% Reduction in Inaccurate Claims on Flagged Conversations: Conversations flagged for potential misinformation saw a significant accuracy boost, largely due to improved context understanding and fact-checking capabilities embedded in the model architecture.

- Improved Consistency in Factually Dense Domains: The model maintains coherence and factual accuracy more reliably across long responses, reducing contradictory statements mid-conversation.

Technical Innovations Behind the Accuracy Gains

Several key technical advancements contribute to these accuracy improvements:

- Enhanced Fact Verification Layers: GPT-5.5 Instant integrates refined internal fact-checking modules that dynamically cross-reference outputs against vetted knowledge bases during generation. These modules use probabilistic reasoning to flag uncertain content and adjust the model’s output accordingly.

- Contextual Sensitivity Improvements: The model better recognizes domain-specific language nuances, reducing misinterpretations that previously led to false claims. Improvements include entity disambiguation, temporal reasoning, and domain contextualization.

- Adaptive Confidence Thresholds: GPT-5.5 Instant adjusts its confidence in answers, qualifying uncertain responses rather than asserting them definitively. This mechanism helps reduce the spread of potentially misleading information.

- Continuous Learning from Human Feedback: Post-deployment monitoring allows OpenAI to update the model’s behavior in near real-time, incorporating user corrections and expert reviews to fine-tune accuracy further.
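The adaptive confidence idea in the list above can be pictured with a small sketch. This is only an illustration of qualifying low-confidence answers. It is not OpenAI's mechanism, and the domain names, threshold values, and function names are all hypothetical.

```python
from typing import Dict

# Hypothetical per-domain thresholds: stricter where mistakes are costlier.
DOMAIN_THRESHOLDS: Dict[str, float] = {"medicine": 0.90, "law": 0.90, "finance": 0.85}
DEFAULT_THRESHOLD = 0.70  # assumed fallback for all other domains


def threshold_for(domain: str) -> float:
    """Look up the confidence an answer must reach to be stated without a hedge."""
    return DOMAIN_THRESHOLDS.get(domain.lower(), DEFAULT_THRESHOLD)


def qualify_answer(answer: str, confidence: float, domain: str = "general") -> str:
    """Return the answer unchanged if confidence clears the domain threshold, else prefix a hedge."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if confidence >= threshold_for(domain):
        return answer
    return "I am not certain about this, so please verify it with a qualified source. " + answer


if __name__ == "__main__":
    # The same confidence score is hedged in a high-stakes domain but not in a general one.
    print(qualify_answer("These two drugs are usually safe together.", 0.80, "medicine"))
    print(qualify_answer("The Eiffel Tower is in Paris.", 0.80, "general"))
```

The design choice shown is that the threshold varies by domain, so a high-stakes question is hedged at a confidence level that would pass for a general one.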

Case Examples: Real-World Impact

In the medical domain, GPT-5.3 Instant occasionally misrepresented drug interactions or dosage guidelines, sometimes putting users at risk if relied upon uncritically. GPT-5.5 Instant now provides safer, evidence-backed responses by integrating updated pharmacological databases and applying stricter validation heuristics. For instance, when asked about combining specific medications, GPT-5.5 Instant cites relevant clinical guidelines and includes disclaimers prompting users to consult healthcare professionals.

Similarly, in legal queries, GPT-5.3 Instant sometimes inaccurately summarized statutes or misapplied legal principles. GPT-5.5 Instant demonstrates enhanced legal reasoning capabilities, correctly distinguishing between jurisdictional variations and providing citations to authoritative sources, making it more suitable for drafting contract clauses or preliminary legal research.

In finance, GPT-5.3 Instant occasionally generated outdated market data or misunderstood regulatory frameworks. GPT-5.5 Instant’s integration of real-time financial news streams and regulatory databases helps keep advice on investments, tax implications, and compliance current and accurate.

| Domain | Hallucination Rate (GPT-5.3 Instant) | Hallucination Rate (GPT-5.5 Instant) | Improvement |
|---|---|---|---|
| Medicine | 12.8% | 6.1% | 52.3% |
| Law | 10.5% | 5.0% | 52.4% |
| Finance | 9.4% | 4.4% | 53.2% |
| Technology | 8.7% | 4.5% | 48.3% |
| General Knowledge | 7.2% | 3.7% | 48.6% |
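The improvement column in the per-domain table is a relative reduction: the drop in hallucination rate divided by the original rate. A short sketch reproduces the figures from the two rate columns. The function and variable names are illustrative.

```python
def relative_reduction(before: float, after: float) -> float:
    """Percentage drop from `before` to `after`, relative to `before`."""
    return (before - after) / before * 100


# Hallucination rates (percent) copied from the per-domain table.
rates = {
    "Medicine": (12.8, 6.1),
    "Law": (10.5, 5.0),
    "Finance": (9.4, 4.4),
    "Technology": (8.7, 4.5),
    "General Knowledge": (7.2, 3.7),
}

if __name__ == "__main__":
    for domain, (old, new) in rates.items():
        print(f"{domain}: {relative_reduction(old, new):.1f}%")
```

For example, Medicine works out to (12.8 - 6.1) / 12.8, or about 52.3%, matching the table.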

Implications for Users

The accuracy gains mean users can rely more confidently on ChatGPT for professional advice or research assistance. This also benefits developers building applications on top of ChatGPT’s API, enabling safer integration into critical workflows. Reduced hallucinations translate directly into lower risk of misinformation propagation, a crucial factor for enterprise adoption in regulated industries.

Furthermore, the model’s ability to qualify uncertain responses encourages users to exercise critical thinking and seek expert verification when necessary, fostering responsible AI usage. For detailed case studies on enterprise AI adoption, see How Walleye Capital Achieved 100% AI Adoption.

The Memory Sources Feature: Personalization at Scale

One of the standout innovations in GPT-5.5 Instant is the introduction of the Memory Sources feature. This capability allows the model to access and integrate information from multiple personal data sources, such as previous chat histories, uploaded files, and Gmail inboxes, all with explicit user consent. This fundamentally changes the personalization paradigm by enabling context-rich, continuous interactions tailored to individual user needs.

How Memory Sources Work

When enabled, GPT-5.5 Instant can:

- Recall Past Chat Context: Retrieve relevant details from earlier conversations to maintain continuity and avoid repetition. For example, if a user discussed a specific project last week, the model can reference that information without requiring restatement.

- Reference Uploaded Documents: Query files such as PDFs, spreadsheets, and notes directly within the chat, enabling precise, contextual responses. This supports professionals integrating AI assistance directly into workflows, such as querying a financial report or technical specification.

- Leverage Gmail Content: With user permission, extract pertinent information from emails to tailor responses or assist with email drafting and summarization. For instance, the model can summarize lengthy email threads or suggest context-aware replies based on previous correspondence.
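As a rough mental model of the recall step described above, the sketch below ranks stored snippets by how many words they share with the current query. It is a deliberately simple stand-in: the article does not describe how retrieval is actually implemented, and `MemoryItem`, `retrieve`, and the source labels are hypothetical names.

```python
import re
from dataclasses import dataclass
from typing import List, Set


@dataclass
class MemoryItem:
    source: str  # hypothetical label such as "chat", "file", or "gmail"
    text: str


def tokenize(text: str) -> Set[str]:
    """Lowercase the text and split it into a set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, items: List[MemoryItem], top_k: int = 2) -> List[MemoryItem]:
    """Return up to top_k items sharing at least one word with the query, best match first."""
    query_words = tokenize(query)
    scored = [(len(query_words & tokenize(item.text)), item) for item in items]
    scored = [pair for pair in scored if pair[0] > 0]  # drop items with no overlap
    scored.sort(key=lambda pair: pair[0], reverse=True)  # stable sort keeps ties in input order
    return [item for _, item in scored[:top_k]]


if __name__ == "__main__":
    memory = [
        MemoryItem("chat", "Project Alpha launch is planned for June"),
        MemoryItem("file", "Quarterly budget spreadsheet"),
        MemoryItem("gmail", "Re: Alpha launch checklist"),
    ]
    for hit in retrieve("When is the Alpha launch?", memory):
        print(hit.source, "-", hit.text)
```

A production system would more plausibly use semantic search than word overlap. The point here is only that relevant snippets are selected from several sources before the model answers.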

Privacy and Security Considerations

OpenAI has implemented robust privacy controls to ensure Memory Sources are handled securely:

- Explicit opt-in requirement for each data source, ensuring users retain full control over accessible information.

- Encryption of accessed data both in transit and at rest, preventing unauthorized access.

- Granular permission settings that let users control which documents or emails are accessible at any time.

- Ability to revoke access instantly via settings, providing transparency and control over data usage.

- Compliance with global privacy regulations such as GDPR and CCPA, ensuring ethical data management practices.
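The opt-in and revocation rules listed above can be sketched as a small permission model in which nothing is accessible until the user enables it. This is an illustrative sketch under that assumption. The class, the method names, and the source identifiers are hypothetical and do not describe ChatGPT's actual settings.

```python
from dataclasses import dataclass, field
from typing import Set

# Hypothetical identifiers for the three source types the article mentions.
KNOWN_SOURCES = {"chat_history", "files", "gmail"}


@dataclass
class MemoryPermissions:
    # Empty by default: every source requires an explicit opt-in.
    enabled: Set[str] = field(default_factory=set)

    def opt_in(self, source: str) -> None:
        """Enable a single source; unknown sources are rejected."""
        if source not in KNOWN_SOURCES:
            raise ValueError(f"unknown memory source: {source}")
        self.enabled.add(source)

    def revoke(self, source: str) -> None:
        """Disable a source immediately; revoking one that is not enabled is a no-op."""
        self.enabled.discard(source)

    def can_access(self, source: str) -> bool:
        """Report whether the source is currently enabled."""
        return source in self.enabled


if __name__ == "__main__":
    permissions = MemoryPermissions()
    permissions.opt_in("gmail")
    print(permissions.can_access("gmail"), permissions.can_access("files"))
    permissions.revoke("gmail")
    print(permissions.can_access("gmail"))
```

Keeping each source behind its own switch mirrors the per-source opt-in described in the list: enabling Gmail does not implicitly enable files or chat history.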

Benefits of Memory Sources

This feature enables:

- Highly Personalized Interactions: Tailor advice, reminders, or follow-ups based on unique user context, making conversations more relevant and efficient.

- Improved Task Efficiency: For example, referencing an uploaded project plan while drafting emails or generating reports reduces context-switching and manual data retrieval.

- Contextual Continuity: Avoid redundant questions by remembering user preferences and past clarifications, streamlining multi-turn dialogues.

- Enhanced Collaboration: Teams can share specific documents or email threads with the model, facilitating coordinated AI-assisted workflows.

Developers can leverage Memory Sources to build more adaptive AI applications that respond dynamically to personalized datasets, enhancing user engagement and utility. For effective prompting techniques in 2026, see AI Prompting Strategies in 2026.

Communication Style Changes: Conciseness Without Losing Substance

GPT-5.5 Instant refines its communication style to deliver concise yet comprehensive responses. This addresses longstanding user feedback about verbosity and excessive formatting in earlier model versions.

Less Verbose, More Precise

Through targeted training and reinforcement learning, GPT-5.5 Instant reduces average response length by approximately 25%, eliminating unnecessary filler while retaining critical information. Users receive succinct answers without sacrificing depth or nuance, enabling faster comprehension and decision-making.

Conciseness also benefits mobile and low-bandwidth users by reducing data transmission requirements, improving accessibility across diverse environments.

Avoiding Overformatting

Earlier versions occasionally employed excessive bullet points, bolding, or emoji usage, detracting from professional clarity. GPT-5.5 Instant cuts down gratuitous formatting, favoring clean, readable text that adapts to context:

- Fewer Gratuitous Emojis: Emojis are used sparingly and only when appropriate to the conversational tone, such as informal chats, maintaining professionalism in business or academic contexts.

- Balanced Use of Lists and Highlights: Formatting enhances comprehension rather than clutters responses, using bullet points to summarize complex data but avoiding overuse that disrupts flow.

- Contextual Formatting Adjustments: Richer formatting (tables, code blocks) is applied when beneficial, such as in technical explanations.

Warmer, More Natural Tone

The model exhibits a more human-like conversational style, with subtle empathy and personality that fosters engagement without compromising professionalism. This “warmer” tone helps maintain user rapport, especially in customer service, coaching, or mental health support scenarios.

For example, GPT-5.5 Instant can express encouragement or acknowledge user emotions more naturally, improving overall conversational experience. This tonal adjustment is dynamic and customizable by users or developers.

Reduction in Unnecessary Follow-up Questions

GPT-5.5 Instant asks 40% fewer unwarranted clarifying questions, improving flow and user satisfaction. Instead of frequently prompting for confirmation, the model better gauges when to proceed with confident answers based on query clarity and historical context.

This reduces user frustration, particularly in professional or high-pressure environments where efficiency is critical.