Claude Dreaming Explained: How Anthropic’s AI Agents Self-Improve Between Sessions

Anthropic’s “Dreaming”: A Paradigm Shift in Autonomous AI Agent Improvement

On May 6, 2026, at its Code with Claude developer event in San Francisco, Anthropic unveiled a suite of new features for its Claude Managed Agents platform. The centerpiece was “Dreaming,” a capability that lets agents review their past interactions, learn from them, and improve their future performance without constant oversight. Traditional AI systems, by contrast, rely heavily on static programming or require frequent human intervention to change their behavior.

This innovation addresses one of the most persistent challenges in AI development: getting agents to keep improving on their own. Self-improvement makes agents more reliable by reducing recurring errors, and it makes them easier to scale across enterprise applications. By letting agents analyze their own behavior and adapt, Dreaming promises to reduce the operational burden on developers and administrators, so AI solutions improve as they accumulate experience.

In addition to Dreaming, Anthropic introduced two complementary capabilities: Outcomes, a rubric-based evaluation framework that systematically measures agent performance, and Multi-agent Orchestration, a powerful mechanism that coordinates multiple specialized agents to collaboratively solve complex tasks. Together, these features represent a holistic approach to creating intelligent, adaptive, and enterprise-ready AI agents capable of handling evolving business requirements.

These advancements position Claude Managed Agents as a flexible platform for enterprises and developers seeking AI solutions that scale and stay accurate and relevant over time. Pairing Dreaming with Outcomes and Multi-agent Orchestration reflects Anthropic’s aim to push autonomous AI forward while preserving transparency, control, and customization for end users.

Understanding “Dreaming”: The Technical Mechanics Behind Autonomous Agent Reflection

Dreaming is a novel, introspective process designed to empower Claude Managed Agents with the ability to autonomously review and learn from their historical interactions. Traditional AI memory systems store static or user-curated context, which agents reference during conversations or task execution. However, these systems lack the capability to analyze aggregated experiences, identify subtle patterns, or correct recurring mistakes without explicit intervention.

Dreaming fills this gap with a scheduled offline process that synthesizes insights from past sessions and interactions. This meta-cognitive step lets agents refine their own behavior based on evidence from real interactions rather than manual updates.

How Dreaming Operates

- Session Review: The process begins by compiling logs from previous agent sessions, including user queries, agent responses, and contextual metadata such as timestamps, session identifiers, and relevant environment variables.

- Pattern Extraction: Using a combination of transformer-based language models and unsupervised clustering algorithms, Dreaming identifies recurring workflows, frequently encountered errors, and common user intents. For example, it may detect that the agent often misinterprets requests related to scheduling meetings or frequently provides incomplete answers in a particular domain.

- Memory Curation: From these extracted patterns, Dreaming distills concise, actionable heuristics or memories. These are not raw data points but refined insights that guide future agent behavior, such as “Prioritize clarifying ambiguous date requests” or “Use a specific template when responding to compliance questions.”

- Self-Improvement: These curated memories are added to the agent’s long-term memory repository, where they influence decision-making, prompt construction, and response generation in later interactions. The intended result is fewer errors, faster task completion, and higher user satisfaction.

- User Control: Importantly, Dreaming offers configurable levels of user oversight. Organizations can opt for fully automated integration of these new memories or require manual review and approval before updates are applied, ensuring that sensitive or mission-critical applications maintain strict governance.
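The Memory Curation and User Control steps can be pictured as a small store that either applies new heuristics immediately or holds them for approval. This is a minimal sketch: the `HeuristicStore` class and its `"auto"`/`"manual"` modes are hypothetical names, not part of any published Claude Managed Agents API.

```javascript
// Hypothetical store showing automatic vs. manual integration of heuristics
// produced by a Dreaming run. Not an Anthropic API.
class HeuristicStore {
  constructor(mode = "manual") {
    this.mode = mode;         // "auto" applies immediately; "manual" waits for approval
    this.active = new Map();  // id -> heuristic text the agent uses
    this.pending = new Map(); // id -> heuristic text awaiting review
  }

  // Accept candidate heuristics according to the configured review mode.
  submit(candidates) {
    for (const { id, text } of candidates) {
      (this.mode === "auto" ? this.active : this.pending).set(id, text);
    }
  }

  // A reviewer promotes a pending heuristic into active memory.
  approve(id) {
    if (!this.pending.has(id)) return false;
    this.active.set(id, this.pending.get(id));
    this.pending.delete(id);
    return true;
  }

  // A reviewer discards a pending heuristic.
  reject(id) {
    return this.pending.delete(id);
  }
}

const store = new HeuristicStore("manual");
store.submit([
  { id: "dates", text: "Prioritize clarifying ambiguous date requests" },
  { id: "compliance", text: "Use a specific template when responding to compliance questions" },
]);
store.approve("dates");
console.log([...store.active.keys()]);  // [ 'dates' ]
console.log([...store.pending.keys()]); // [ 'compliance' ]
```

In manual mode nothing reaches the agent until a reviewer calls `approve`, which is the governance property the User Control step describes.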

Advanced Technical Components

Dreaming leverages a sophisticated blend of AI technologies to balance exploration (discovering new patterns) and exploitation (applying learned heuristics). Key technical components include:

- Transformer-based Language Models: These models analyze textual data from session logs to understand context, semantics, and user intent.

- Unsupervised Clustering: Algorithms such as k-means, DBSCAN, or hierarchical clustering group similar sessions or interaction patterns without requiring labeled data.

- Reinforcement Learning Paradigms: To evaluate the effectiveness of newly derived heuristics, Dreaming uses reinforcement learning techniques that reward successful improvements and penalize regressions, refining agent policies iteratively.

- Scheduling and Resource Optimization: Dreaming processes are configured to run during off-peak hours or when agents are idle, ensuring that computational resources are used efficiently without degrading real-time responsiveness.
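The clustering component can be illustrated with plain k-means over numeric session features. This is a sketch under stated assumptions: the two features per session (turn count and error count) and the first-k-points initialization are illustrative choices, and the announcement does not say which algorithm or features Dreaming actually uses.

```javascript
// Minimal k-means over session feature vectors.
// Features and initialization are illustrative assumptions.
function squaredDistance(a, b) {
  return a.reduce((sum, v, i) => sum + (v - b[i]) ** 2, 0);
}

function kMeans(points, k, maxIterations = 100) {
  // Deterministic initialization: the first k points become the centroids.
  let centroids = points.slice(0, k).map((p) => [...p]);
  const assignments = new Array(points.length).fill(-1);

  for (let iter = 0; iter < maxIterations; iter++) {
    // Assignment step: attach each point to its nearest centroid.
    let changed = false;
    points.forEach((p, i) => {
      let best = 0;
      for (let c = 1; c < k; c++) {
        if (squaredDistance(p, centroids[c]) < squaredDistance(p, centroids[best])) best = c;
      }
      if (assignments[i] !== best) {
        assignments[i] = best;
        changed = true;
      }
    });
    if (!changed) break; // converged: no point switched clusters

    // Update step: move each centroid to the mean of its members.
    centroids = centroids.map((old, c) => {
      const members = points.filter((_, i) => assignments[i] === c);
      if (members.length === 0) return old; // leave empty clusters in place
      return old.map((_, d) => members.reduce((s, m) => s + m[d], 0) / members.length);
    });
  }
  return { centroids, assignments };
}

// Each session: [turns, errorCount] (hypothetical features).
const sessions = [[3, 0], [4, 0], [12, 5], [11, 6], [3, 1], [13, 4]];
const { assignments } = kMeans(sessions, 2);
console.log(assignments); // [ 0, 0, 1, 1, 0, 1 ]
```

Long, error-heavy sessions end up in their own cluster, which a later step could inspect for a shared failure mode. Production k-means typically adds k-means++ initialization and feature scaling, both omitted here for brevity.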

Enhancing Collaborative AI Environments

In multi-agent systems where multiple Claude Managed Agents interact to achieve shared objectives, Dreaming plays a critical role by recognizing convergent workflows and team preferences. For example, in a customer service environment, Dreaming can identify that multiple agents frequently escalate similar issues to human supervisors and suggest refined escalation criteria or automated resolution steps. This collaborative reflection enhances overall system coherence and performance.
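The escalation example can be sketched as a frequency count over escalation events collected from several agents. This is a minimal illustration that assumes hypothetical event fields (`agentId`, `reason`) and arbitrary thresholds; it only surfaces candidate issues and does not reflect how Dreaming actually scores patterns.

```javascript
// Flag escalation reasons that recur across several agents.
// Event shape and thresholds are hypothetical.
function findSharedEscalations(events, { minCount = 3, minAgents = 2 } = {}) {
  const byReason = new Map(); // reason -> { count, agents: Set of agent ids }
  for (const { agentId, reason } of events) {
    const entry = byReason.get(reason) ?? { count: 0, agents: new Set() };
    entry.count += 1;
    entry.agents.add(agentId);
    byReason.set(reason, entry);
  }
  return [...byReason]
    .filter(([, e]) => e.count >= minCount && e.agents.size >= minAgents)
    .sort((a, b) => b[1].count - a[1].count)
    .map(([reason, e]) => ({
      reason,
      count: e.count,
      agents: e.agents.size,
      suggestion: `Review escalation criteria for "${reason}"`,
    }));
}

const events = [
  { agentId: "a1", reason: "refund over limit" },
  { agentId: "a2", reason: "refund over limit" },
  { agentId: "a3", reason: "refund over limit" },
  { agentId: "a1", reason: "password reset" },
  { agentId: "a1", reason: "password reset" },
  { agentId: "a1", reason: "password reset" },
];
console.log(findSharedEscalations(events).map((s) => s.reason)); // [ 'refund over limit' ]
```

Requiring more than one agent keeps a single misconfigured agent from looking like a team-wide pattern: "password reset" recurs three times but only on agent `a1`, so it is not flagged.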

Practical Example of Dreaming in Action

```javascript
// Dreaming workflow sketch. The stage functions supplied in `stages`
// (aggregateLogs, clusterSessions, extractHeuristics, validateHeuristics,
// updateAgentMemory, sendForReview) are hypothetical placeholders, not a
// published Anthropic API.
function runDreaming(agentSessionLogs, stages, { requireApproval = false } = {}) {
  // Step 1: Aggregate session data
  const aggregatedData = stages.aggregateLogs(agentSessionLogs);

  // Step 2: Identify patterns via clustering
  const clusters = stages.clusterSessions(aggregatedData);

  // Step 3: Extract heuristics from clusters
  const heuristics = stages.extractHeuristics(clusters);

  // Step 4: Validate heuristics using reinforcement learning
  const validatedHeuristics = stages.validateHeuristics(heuristics);

  // Step 5: When manual approval is configured, send heuristics for review
  // instead of writing them to memory; otherwise update memory directly.
  if (requireApproval) {
    stages.sendForReview(validatedHeuristics);
  } else {
    stages.updateAgentMemory(validatedHeuristics);
  }
  return validatedHeuristics;
}
```

This example highlights the modular nature of Dreaming: each stage can be customized or extended to fit specific organizational needs or agent configurations.

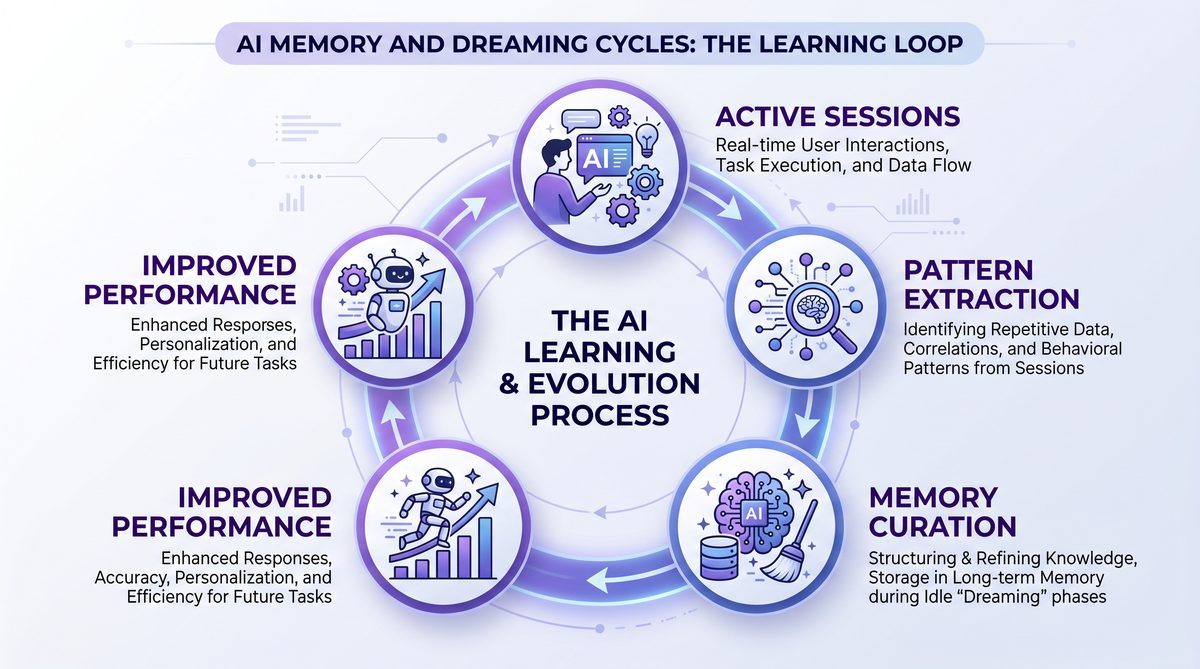

Memory vs. Dreaming: Complementary Mechanisms for Agent Intelligence

Claude Managed Agents have historically incorporated sophisticated memory systems that store explicit contextual information and user-specific data. These memories enable agents to personalize interactions, recall preferences, and maintain continuity across sessions. However, Dreaming introduces a complementary, higher-order cognitive layer that transforms raw memory data into refined knowledge assets through meta-cognitive processes.

Key Differences and Synergies

| Aspect | Memory | Dreaming |
|---|---|---|
| Purpose | Store explicit session data and user context | Analyze past sessions to identify patterns and self-improve |
| Update Frequency | Real-time or per session | Scheduled, offline process |
| Control | User-driven or automatic | User-configurable: automatic or manual review |
| Output | Contextual facts, preferences | Heuristics, error corrections, workflow optimizations |
| Impact on Agent | Directly informs responses | Refines agent policy and prompt strategies over time |

While memory systems provide the foundational data necessary for contextual understanding, Dreaming acts as a meta-layer that transforms this data into actionable insights that enhance agent intelligence sustainably. This relationship can be likened to the distinction between short-term recall and long-term strategic learning in human cognition.

Integration Strategies for Developers

Developers building with Claude Managed Agents can leverage the interplay between memory and Dreaming to maximize agent effectiveness. Best practices include:

- Memory Management: Ensure that explicit facts and preferences are accurately captured and updated in real time to maintain conversational relevance.

- Dreaming Configuration: Schedule Dreaming processes during low-usage periods, and define heuristics review policies aligned with organizational risk tolerance.

- Feedback Loops: Use Dreaming outputs to inform prompt engineering and agent policy adjustments, creating a continuous improvement cycle.

- Customization: Tailor Dreaming algorithms to prioritize domain-specific errors or workflows critical to the application’s success.
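A Dreaming configuration along these lines could pair an off-peak schedule with a risk-based review policy. The field names (`offPeakStartHour`, `offPeakEndHour`, `autoApplyMaxRisk`) and the 0 to 1 risk score are hypothetical illustrations, not documented Claude Managed Agents settings.

```javascript
// Hypothetical Dreaming settings; field names are illustrative only.
const dreamingConfig = {
  offPeakStartHour: 22,  // 22:00 local time
  offPeakEndHour: 6,     // 06:00 local time (window wraps past midnight)
  autoApplyMaxRisk: 0.3, // heuristics scored above this need human review
};

// True if `hour` (0-23) is inside the off-peak window. Handles windows that
// wrap around midnight; the end hour is exclusive.
function inOffPeakWindow(hour, { offPeakStartHour: start, offPeakEndHour: end }) {
  return start <= end ? hour >= start && hour < end : hour >= start || hour < end;
}

// Decide whether a candidate heuristic may be applied without review.
function reviewPolicy(riskScore, config) {
  return riskScore <= config.autoApplyMaxRisk ? "auto-apply" : "manual-review";
}

console.log(inOffPeakWindow(23, dreamingConfig)); // true
console.log(inOffPeakWindow(14, dreamingConfig)); // false
console.log(reviewPolicy(0.8, dreamingConfig));   // manual-review
```

Keeping the risk threshold in configuration lets a regulated deployment set it to 0 (review everything) while a low-stakes one can auto-apply most heuristics.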

Illustrative Example: Customer Support Agent

Consider a customer support agent that uses memory to recall a user’s previous issue and preferences. Over time, Dreaming analyzes multiple sessions and identifies that the agent frequently fails to recognize when a user is dissatisfied, leading to unnecessary escalations. Dreaming then generates a heuristic prompting the agent to ask clarifying questions when negative sentiment is detected, improving resolution efficiency and customer satisfaction.
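The heuristic in this example could be expressed as a trigger-and-action rule the agent checks before responding. The keyword list is a deliberately crude stand-in for a real sentiment classifier, and the function names are hypothetical; the point is only the shape of such a learned rule.

```javascript
// Crude keyword-based negative-sentiment check (a stand-in for a real
// sentiment model) plus the heuristic it triggers. Names are hypothetical.
const NEGATIVE_CUES = ["frustrated", "annoyed", "still not working", "useless", "unacceptable"];

function hasNegativeSentiment(message) {
  const text = message.toLowerCase();
  return NEGATIVE_CUES.some((cue) => text.includes(cue));
}

// Heuristic distilled by Dreaming: ask a clarifying question before escalating.
function nextAction(message) {
  if (hasNegativeSentiment(message)) {
    return {
      type: "clarify",
      prompt: "Sorry about the trouble. Which step is failing, so I can help fix it?",
    };
  }
  return { type: "answer" };
}

console.log(nextAction("This is still not working and I'm frustrated").type); // clarify
console.log(nextAction("How do I export my invoices?").type);                 // answer
```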

Outcomes: Introducing Rubric-Based Agent Evaluation

Alongside Dreaming’s introspective capabilities, Anthropic introduced “Outcomes,” a rubric-based evaluation framework for measuring agent outputs systematically. Outcomes addresses the enterprise need for transparent, objective, and granular assessment of AI agent performance, which governance, compliance, and continuous improvement all depend on.

Technical Overview of Outcomes

- Rubric Definition: Developers or enterprise users create detailed rubrics specifying success criteria for tasks. These criteria can include accuracy, completeness, tone consistency, adherence to regulatory compliance, response timeliness, and user satisfaction metrics.

- Grader Agent: Outcomes deploys a dedicated grader agent, an independent large language model distinct from the primary agent, that evaluates outputs against the rubric benchmarks using context-sensitive scoring. Keeping the grader separate from the agent being graded reduces self-evaluation bias and keeps scoring consistent.

- Feedback Loop: Evaluation results are integrated into agent dashboards, providing real-time visibility into performance metrics. These results can also trigger automatic adjustments via Dreaming or prompt engineering updates, enabling rapid iteration cycles.

- Transparency and Auditing: Outcome reports include detailed logs and scoring rationales, facilitating auditing, compliance reporting, and stakeholder communication.
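Because grader output feeds dashboards and audit logs, it is worth validating before it is stored. The sketch below assumes, hypothetically, that the grader returns JSON with one `{ score, rationale }` entry per rubric criterion; the real Outcomes report format may differ.

```javascript
// Validate a grader agent's JSON report against a rubric before storing it.
// The report shape ({ criterion: { score, rationale } }) is an assumption.
function validateGraderReport(rawJson, rubric) {
  let report;
  try {
    report = JSON.parse(rawJson);
  } catch {
    return { ok: false, errors: ["report is not valid JSON"] };
  }
  if (report === null || typeof report !== "object") {
    return { ok: false, errors: ["report must be a JSON object"] };
  }
  const errors = [];
  for (const { criterion, maxScore } of rubric) {
    const entry = report[criterion];
    if (!entry) {
      errors.push(`missing criterion: ${criterion}`);
      continue;
    }
    if (!Number.isFinite(entry.score) || entry.score < 0 || entry.score > maxScore) {
      errors.push(`${criterion}: score out of range 0-${maxScore}`);
    }
    // An audit trail needs a stated reason for every score.
    if (typeof entry.rationale !== "string" || entry.rationale.trim() === "") {
      errors.push(`${criterion}: missing rationale`);
    }
  }
  return { ok: errors.length === 0, errors, report };
}

const rubric = [
  { criterion: "Accuracy", maxScore: 5 },
  { criterion: "Compliance", maxScore: 5 },
];
const result = validateGraderReport(
  '{"Accuracy": {"score": 4, "rationale": "One date was wrong."}, "Compliance": {"score": 7, "rationale": "OK"}}',
  rubric
);
console.log(result.errors); // [ 'Compliance: score out of range 0-5' ]
```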

Use Cases and Benefits

Outcomes is particularly valuable in regulated industries such as finance, healthcare, and legal services, where AI-generated outputs must meet strict standards. For example, a financial advisory agent can be evaluated on compliance with disclosure requirements and risk management guidelines, ensuring that advice provided to clients adheres to regulatory frameworks.

By formalizing evaluation criteria and automating assessment, Outcomes reduces reliance on manual QA processes and subjective judgment, leading to more consistent and trustworthy AI deployment.

Example Rubric for a Content Generation Agent

| Criterion | Description | Weight | Scoring Scale |
|---|---|---|---|
| Accuracy | Factual correctness of generated content | 40% | 0-5 |
| Completeness | Coverage of all requested topics | 30% | 0-5 |
| Tone Consistency | Alignment with brand voice and style | 15% | 0-5 |
| Compliance | Adherence to legal and ethical guidelines | 15% | 0-5 |

Such rubrics can be customized for various domains, enabling tailored evaluation strategies that reflect organizational priorities.
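One way to turn the example rubric into a single number is to normalize each 0-5 score to a fraction and take the weighted sum. For scores of 4, 5, 3, and 5 that gives 0.40 × 4/5 + 0.30 × 5/5 + 0.15 × 3/5 + 0.15 × 5/5 = 0.32 + 0.30 + 0.09 + 0.15 = 0.86. This aggregation rule is an assumption for illustration; the announcement does not specify how Outcomes combines criterion scores.

```javascript
// Weighted rubric score using the example weights from the table above.
// The normalize-then-weight rule is an illustrative assumption.
const contentRubric = [
  { criterion: "Accuracy", weight: 0.40 },
  { criterion: "Completeness", weight: 0.30 },
  { criterion: "Tone Consistency", weight: 0.15 },
  { criterion: "Compliance", weight: 0.15 },
];
const MAX_SCORE = 5; // every criterion uses a 0-5 scale

function weightedScore(scores, rubric) {
  const totalWeight = rubric.reduce((s, r) => s + r.weight, 0);
  // Allow for floating-point rounding when checking that weights sum to 1.
  if (Math.abs(totalWeight - 1) > 1e-9) throw new Error("weights must sum to 1");
  return rubric.reduce((sum, { criterion, weight }) => {
    const score = scores[criterion];
    if (score === undefined) throw new Error(`missing score for ${criterion}`);
    return sum + weight * (score / MAX_SCORE);
  }, 0);
}

const overall = weightedScore(
  { Accuracy: 4, Completeness: 5, "Tone Consistency": 3, Compliance: 5 },
  contentRubric
);
console.log(overall.toFixed(2)); // 0.86
```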

Integration with Continuous Deployment Pipelines

Outcomes can be integrated into continuous integration/continuous deployment (CI/CD) pipelines for AI models, providing gatekeeping mechanisms that prevent subpar agent versions from reaching production. Automated grading results can be used to trigger rollbacks or require additional training, ensuring quality assurance at scale.
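A deployment gate of this kind can be a small script that fails the pipeline when graded results fall below thresholds. The thresholds, the result shape, and the `evaluateGate` name are hypothetical; in a real pipeline the scores would come from the Outcomes grader rather than a literal array.

```javascript
// Hypothetical CI gate: block promotion if the mean graded score or any
// single task falls below configured thresholds (scores normalized to 0-1).
function evaluateGate(results, { minMean = 0.8, minSingle = 0.5 } = {}) {
  if (results.length === 0) return { pass: false, reason: "no graded results" };
  const mean = results.reduce((sum, r) => sum + r.score, 0) / results.length;
  const worst = results.reduce((a, b) => (b.score < a.score ? b : a));
  if (mean < minMean) return { pass: false, reason: `mean ${mean.toFixed(2)} < ${minMean}` };
  if (worst.score < minSingle) return { pass: false, reason: `task ${worst.id} scored ${worst.score}` };
  return { pass: true, reason: `mean ${mean.toFixed(2)}` };
}

const graded = [
  { id: "t1", score: 0.92 },
  { id: "t2", score: 0.88 },
  { id: "t3", score: 0.41 },
];
const gate = evaluateGate(graded);
console.log(gate); // { pass: false, reason: 'mean 0.74 < 0.8' }

// In a pipeline step, a nonzero exit code fails the build:
// process.exitCode = gate.pass ? 0 : 1;
```

Checking the worst single task as well as the mean catches a version that is good on average but badly regresses on one task.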

Multi-agent Orchestration: Coordinating Specialist Agents for Complex Workflows

Anthropic’s Multi-agent Orchestration framework represents a sophisticated approach to managing complex AI workflows by decomposing high-level objectives into discrete subtasks handled by specialized agents. This modular architecture enables parallelism, specialization, and scalability, vital for enterprise deployments involving multifaceted problem-solving.

Core Architecture and Workflow

- Lead Agent Management: The lead Claude Managed Agent functions as the orchestrator, interpreting high-level goals and decomposing them into smaller, manageable tasks based on the problem domain and workflow requirements.

- Specialist Delegation: Each subtask is assigned to a specialist agent configured with custom models, prompts, and toolsets optimized for specific functions such as data extraction, software coding, compliance verification, or creative ideation.

- Parallel Execution: Specialist agents operate concurrently, leveraging a shared filesystem or data exchange layer to synchronize intermediate results and maintain state consistency.

- Result Synthesis: Upon completion of subtasks, the lead agent consolidates outputs, performs validation checks, and synthesizes a comprehensive response or solution to deliver to the end user or system.
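The lead/specialist flow maps naturally onto concurrent async calls. In this sketch the specialists are plain async functions standing in for model-backed agents (their names loosely follow a document-review task), and `decompose`/`synthesize` are hypothetical helpers; nothing here is the Managed Agents API.

```javascript
// Lead agent: decompose a request, run specialists concurrently, synthesize.
// The specialists are stand-in async functions, not real agents.
const specialists = {
  extractClauses: async (doc) => ({ clauses: doc.split(". ").length }),
  checkCompliance: async (doc) => ({ flagged: doc.includes("indemnify") }),
  summarize: async (doc) => ({ summary: doc.split(". ")[0] }),
};

// A real lead agent would plan subtasks with a model; this stub sends the
// whole document to every specialist.
function decompose(request) {
  return Object.keys(specialists).map((name) => ({ name, input: request.document }));
}

// Merge specialist outputs into one report keyed by specialist name.
function synthesize(results) {
  return Object.fromEntries(results.map(({ name, output }) => [name, output]));
}

async function runLeadAgent(request) {
  const subtasks = decompose(request);
  // Promise.all starts every subtask before awaiting any of them.
  const results = await Promise.all(
    subtasks.map(async ({ name, input }) => ({ name, output: await specialists[name](input) }))
  );
  return synthesize(results);
}

runLeadAgent({ document: "The vendor shall indemnify the client. Payment is due in 30 days." })
  .then((report) => console.log(report));
```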

Advantages of Multi-agent Orchestration

This design offers several key benefits:

- Efficiency: Parallel execution reduces overall task completion time by distributing workload.

- Modularity: Specialist agents can be independently developed, maintained, and upgraded without impacting the entire system.

- Scalability: The framework supports scaling out by adding more specialist agents or increasing concurrency, accommodating growing enterprise demands.

- Domain Expertise: Customization of specialist agents allows embedding of domain-specific knowledge, improving accuracy and relevance.

- Fault Tolerance: The modular nature enables isolation and recovery from failures within individual agents without collapsing the entire workflow.
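Fault isolation can be illustrated with `Promise.allSettled`, which waits for every subtask and reports each failure individually instead of rejecting the whole batch. The retry count and the simulated flaky and failing specialists are assumptions made for the example.

```javascript
// Retry a failing subtask a fixed number of times before giving up.
async function withRetry(fn, attempts = 2) {
  let lastError;
  for (let i = 0; i < attempts; i++) {
    try {
      return await fn();
    } catch (err) {
      lastError = err;
    }
  }
  throw lastError;
}

// Promise.allSettled never rejects, so one failed specialist cannot abort the rest.
async function runIsolated(tasks) {
  const settled = await Promise.allSettled(tasks.map(({ run }) => withRetry(run)));
  return settled.map((s, i) => ({
    name: tasks[i].name,
    ok: s.status === "fulfilled",
    value: s.status === "fulfilled" ? s.value : s.reason.message,
  }));
}

let flakyCalls = 0;
const tasks = [
  { name: "logs", run: async () => "no errors found" },
  {
    name: "flaky",
    run: async () => {
      flakyCalls += 1;
      if (flakyCalls < 2) throw new Error("timeout"); // fails once, then succeeds
      return "recovered";
    },
  },
  {
    name: "network",
    run: async () => {
      throw new Error("unreachable"); // fails on every attempt
    },
  },
];

runIsolated(tasks).then((report) => console.log(report));
```

The lead agent receives partial results plus a clear record of which specialist failed, and can decide whether that gap is acceptable or needs escalation.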

Technical Implementation Details

The underlying technical infrastructure includes:

- Shared Filesystem: A secure, high-performance shared storage system enables data exchange and state sharing among agents.

- Task Queueing and Scheduling: The lead agent manages queues to prioritize and balance workloads, ensuring optimal resource utilization.

- Inter-agent Communication Protocols: Lightweight messaging or API calls enable coordination and status updates between lead and specialist agents.

- Customizable Agent Configurations: Developers can define specialist agent parameters such as model versions, prompt templates, tool access, and memory settings via declarative configuration files or APIs.
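Task queueing with priorities and a concurrency cap might look like the following. The `TaskQueue` class is a hypothetical illustration of the lead agent's scheduling role, not a documented component: higher `priority` values run first, ties run in arrival order, and at most `limit` tasks run at once.

```javascript
// Hypothetical priority task queue with a concurrency limit.
class TaskQueue {
  constructor(limit) {
    this.limit = limit; // max tasks running at once
    this.waiting = [];  // kept sorted by descending priority
    this.running = 0;
  }

  // Enqueue a task; the returned promise settles with the task's result.
  push(name, priority, run) {
    return new Promise((resolve, reject) => {
      const job = { name, priority, run, resolve, reject };
      // Insert before the first job with strictly lower priority (FIFO within a level).
      const idx = this.waiting.findIndex((j) => j.priority < priority);
      if (idx === -1) this.waiting.push(job);
      else this.waiting.splice(idx, 0, job);
      this.#startAvailable();
    });
  }

  // Start queued jobs until the concurrency limit is reached.
  #startAvailable() {
    while (this.running < this.limit && this.waiting.length > 0) {
      const job = this.waiting.shift();
      this.running += 1;
      Promise.resolve()
        .then(() => job.run())
        .then(job.resolve, job.reject)
        .finally(() => {
          this.running -= 1;
          this.#startAvailable();
        });
    }
  }
}

// With limit 1, "a" starts immediately; the others wait and run by priority.
const order = [];
const queue = new TaskQueue(1);
const makeJob = (name) => async () => {
  order.push(name);
  return name;
};
Promise.all([
  queue.push("a", 0, makeJob("a")),
  queue.push("b", 1, makeJob("b")),
  queue.push("c", 5, makeJob("c")),
  queue.push("d", 3, makeJob("d")),
]).then(() => console.log(order)); // [ 'a', 'c', 'd', 'b' ]
```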

Example Workflow: Legal Document Review

In a legal firm, a multi-agent orchestration workflow could involve:

- A lead agent receiving a document review request.

- Specialist agents tasked with:

- Extracting relevant clauses.

- Checking for compliance with jurisdictional regulations.

- Identifying potential risks or ambiguities.

- Generating summaries for client communication.

- The lead agent consolidating these outputs into a comprehensive report.

This approach enables efficient handling of complex, multi-dimensional tasks while maintaining accuracy and compliance.

Real-World Deployment: Netflix’s Pioneering Use of Multi-agent Orchestration

Netflix’s engineering teams have been among the earliest adopters of Anthropic’s Multi-agent Orchestration framework, integrating it into their platform engineering workflows to automate infrastructure monitoring, incident diagnosis, and remediation planning. This real-world deployment offers valuable insights into the practical benefits and challenges of orchestrated AI agents at scale.

Key Achievements

- Reduced Mean Time to Resolution (MTTR): By parallelizing diagnostics across specialist agents focused on different infrastructure components, Netflix has significantly accelerated issue detection and resolution.

- Improved Accuracy: Specialist agents with tuned prompts and domain-specific training data have reduced false positives and enhanced root cause analysis fidelity.

- Scalability: The shared filesystem and modular agent architecture support high concurrency, enabling Netflix to manage a vast, distributed infrastructure without bottlenecks.

- Developer Productivity: Engineers leverage agent-generated insights as decision support tools, freeing them from routine monitoring tasks and enabling focus on higher-value activities.

Workflow Example at Netflix

When an infrastructure anomaly is detected:

- The lead agent initiates a diagnostic workflow, delegating subtasks such as log analysis, service health checks, and network latency measurement to specialist agents.

- Each specialist agent operates concurrently, writing intermediate findings to the shared filesystem.

- The lead agent aggregates the findings, runs validation heuristics, and generates a remediation plan or escalates to human operators if required.
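The shared-filesystem handoff in a workflow like this can be sketched with Node's `fs` module: each specialist writes a JSON findings file into a shared directory, and the lead agent reads them all back before choosing between a remediation plan and human escalation. This is a generic, hypothetical illustration, not Netflix's implementation; the file layout, the `severity` field, and the escalation rule are assumptions.

```javascript
// Shared-directory handoff between specialists and a lead agent.
// Directory layout, fields, and the escalation rule are assumptions.
const fs = require("fs");
const os = require("os");
const path = require("path");

const sharedDir = fs.mkdtempSync(path.join(os.tmpdir(), "incident-"));

// Each specialist writes its findings to <sharedDir>/<name>.json.
function writeFindings(name, findings) {
  const file = path.join(sharedDir, `${name}.json`);
  // Write a temporary file, then rename it, so a reader that only looks at
  // *.json files never sees a partially written result.
  fs.writeFileSync(`${file}.tmp`, JSON.stringify(findings));
  fs.renameSync(`${file}.tmp`, file);
}

// The lead agent reads every findings file and chooses the next step.
function leadAggregate() {
  const findings = fs
    .readdirSync(sharedDir)
    .filter((f) => f.endsWith(".json"))
    .map((f) => ({
      source: path.basename(f, ".json"),
      ...JSON.parse(fs.readFileSync(path.join(sharedDir, f), "utf8")),
    }));
  const critical = findings.some((f) => f.severity === "critical");
  return { findings, action: critical ? "escalate-to-human" : "remediation-plan" };
}

writeFindings("log-analysis", { severity: "warning", note: "error rate above baseline" });
writeFindings("health-checks", { severity: "critical", note: "health check failing" });
writeFindings("network-latency", { severity: "ok", note: "latency within baseline" });

console.log(leadAggregate().action); // escalate-to-human
fs.rmSync(sharedDir, { recursive: true, force: true });
```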

This system has enabled Netflix to maintain high service availability and rapid incident recovery, critical for its global streaming platform.

Lessons Learned and Best Practices

- Robust Configuration Management: Maintaining clear versioning and configuration controls for specialist agents is essential to prevent cascading errors.

- Security and Access Control: Fine-grained permissions on the shared filesystem and inter-agent communication channels are critical to safeguard sensitive data.

- Monitoring and Logging: Comprehensive observability into agent workflows ensures early detection of failures and facilitates troubleshooting.

- Human-in-the-Loop Integration: While automation is powerful, retaining mechanisms for human review and intervention remains vital for critical decisions.
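Fine-grained permissions on a shared filesystem can start with a per-agent allowlist of path prefixes. The sketch below uses a hypothetical ACL map and normalizes `.` and `..` segments before checking, so a path like `/shared/logs/../secrets` is not mistaken for one under `/shared/logs`. In practice this check would more likely be enforced by the storage layer itself than by agent code.

```javascript
// Per-agent path allowlist with "." / ".." normalization (hypothetical ACL).
function normalize(p) {
  const out = [];
  for (const seg of p.split("/")) {
    if (seg === "" || seg === ".") continue;
    if (seg === "..") out.pop(); // step up one level (never above root)
    else out.push(seg);
  }
  return "/" + out.join("/");
}

const acl = {
  "log-analyst": { read: ["/shared/logs"], write: ["/shared/findings/log-analyst"] },
  lead: { read: ["/shared"], write: ["/shared/reports"] },
};

function isAllowed(agent, mode, requestedPath) {
  const prefixes = acl[agent]?.[mode] ?? [];
  const target = normalize(requestedPath);
  // Match whole path segments so "/shared/logs2" does not match "/shared/logs".
  return prefixes.some((prefix) => target === prefix || target.startsWith(prefix + "/"));
}

console.log(isAllowed("log-analyst", "read", "/shared/logs/app.log"));      // true
console.log(isAllowed("log-analyst", "read", "/shared/logs/../secrets"));   // false
console.log(isAllowed("log-analyst", "write", "/shared/reports/final.md")); // false
```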

Netflix’s success with Multi-agent Orchestration serves as a compelling case study for enterprises considering AI-driven workflow automation at scale.

Implications for Enterprise AI Adoption

Anthropic’s introduction of Dreaming, Outcomes, and Multi-agent Orchestration arrives at a pivotal moment in enterprise AI adoption, as organizations increasingly seek AI agents that are not only capable but also trustworthy, adaptive, and aligned with evolving business objectives.

Addressing Core Enterprise Imperatives

- Autonomous Self-Improvement: Dreaming reduces the human overhead of continuous retraining and manual fine-tuning, enabling AI agents to evolve organically and maintain high performance as workloads and contexts change.

- Objective Performance Metrics: Outcomes provides transparent, rubric-based evaluation essential for governance, regulatory compliance, and stakeholder confidence in AI-driven processes.

- Workflow Complexity Management: Multi-agent Orchestration supports decomposition of enterprise-scale problems into manageable, parallel tasks, improving efficiency, fault tolerance, and scalability.

Strategic Infrastructure Enhancements

Anthropic’s recent strategic data center partnership with SpaceX signifies a commitment to providing cutting-edge infrastructure optimized for low latency, high throughput, and robust security. Coupled with the launch of a dedicated enterprise AI services company, Anthropic is positioning itself to support mission-critical deployments where reliability, customization, and oversight are paramount.

Customizability and Governance

Enterprises benefit from the configurability of these features, enabling them to enforce policies around data privacy, ethical AI use, and auditability. For example, user control over Dreaming’s integration process ensures sensitive heuristics are vetted before deployment, while Outcomes’ detailed reporting facilitates compliance with industry standards such as GDPR, HIPAA, or SOX.

Future Directions in Enterprise AI

As AI agents become more autonomous and integrated into business-critical workflows, features like Dreaming and Outcomes will serve as foundational pillars for trust and continuous learning. Multi-agent orchestration will enable increasingly sophisticated AI ecosystems capable of handling complex, interdependent tasks that span departments and external partners.

Organizations adopting these capabilities early will gain competitive advantages through improved operational efficiency, reduced risk, and enhanced innovation velocity.

Understanding the interplay between enterprise AI infrastructure and these breakthrough features is essential for designing future-proof AI solutions that can adapt to rapidly changing business landscapes.

Claude Managed Agents vs. Other Agent Frameworks: A Detailed Comparison

To put Anthropic’s advancements in context, it helps to compare Claude Managed Agents with other leading AI agent frameworks: OpenAI Agents, Google Gemini (formerly Bard) Agents, and Microsoft Azure AI Agents. The comparison below covers autonomous learning, evaluation frameworks, multi-agent coordination, customization, enterprise support, and usage limits.

| Feature | Claude Managed Agents (Anthropic) | OpenAI Agents | Google Gemini (formerly Bard) Agents | Microsoft Azure AI Agents |
|---|---|---|---|---|
| Autonomous Self-Improvement | Dreaming: scheduled, pattern-based self-reflection and memory curation enabling continuous learning | Limited; relies on manual retraining or prompt tuning, no built-in autonomous improvement | Experimental; no formal self-improvement feature, exploratory research stage | No built-in autonomous learning; external feedback loops required for model updates |
| Evaluation Framework | Outcomes: rubric-based grader agent for granular, objective task success measurement with audit trails | Basic evaluation via user feedback and usage metrics; lacks formal rubric system | Some internal metrics collected; no public or customizable rubric evaluation framework | Customizable evaluation pipelines via Azure Machine Learning services, but not tightly integrated with agents |
| Multi-agent Coordination | Lead-specialist orchestration with parallel execution, shared filesystem, and modular agent design | Limited multi-agent orchestration; primarily sequential or chained dialogue flows | Basic multi-turn dialogue chaining; lacks specialist delegation or parallelism | Supports orchestration via Azure Logic Apps and Functions; requires manual setup and integration |
| Customization | Specialist agents configurable with custom models, prompts, toolsets, and memory settings | Prompt engineering supported; model customization limited to fine-tuning and few-shot learning | Prompt tuning available; limited model customization options | Extensive customization via Azure Cognitive Services and custom models |
| Enterprise Support | Dedicated AI services company; strategic SpaceX data center partnership for scalable, low-latency deployments | Strong Microsoft partnership enhances enterprise reach and support | Google Cloud AI enterprise offerings with compliance certifications | Full enterprise AI stack with compliance certifications and global data centers |
| Usage Limits | Pro and Max Claude Code limits doubled (announced May 2026) to support higher concurrency and workloads | Varies by subscription tier; increasing but generally conservative limits to manage demand | Standardized usage limits with tiered access plans | Flexible limits depending on subscription and usage patterns |

This comparison underscores Anthropic’s leadership in autonomous self-improvement and integrated evaluation, as well as its innovative approach to multi-agent orchestration. Enterprises seeking advanced AI agent capabilities should consider these differentiators when selecting platforms for mission-critical deployments.

The Business Context: Anthropic’s Growth Trajectory and Market Positioning

During the Code with Claude keynote, Anthropic’s CEO outlined an ambitious growth plan projecting up to 80x expansion in company scale throughout 2026. This rapid scale-up is underpinned by several strategic pillars that reinforce Anthropic’s market positioning as a leading AI agent provider.

Strategic Pillars Driving Growth

- Innovation Leadership: With pioneering features such as Dreaming, Outcomes, and Multi-agent Orchestration, Anthropic is establishing itself at the forefront of autonomous AI agent research and commercialization.

- Enterprise Partnerships: The strategic data center partnership with SpaceX provides Anthropic with cutting-edge infrastructure capabilities, including low-latency networking and high reliability, critical for supporting large-scale, mission-critical AI deployments.

- Dedicated Enterprise Services: The launch of a specialized enterprise AI services company reflects Anthropic’s commitment to delivering tailored AI solutions, professional support, and compliance-ready offerings to large organizations.

- Platform Expansion: Doubling usage limits for Pro and Max Claude Code users signals a focus on empowering developers and enterprises with greater computational bandwidth, enabling more complex and resource-intensive AI applications.

Market Positioning and Competitive Landscape

Anthropic’s strategy combines technological innovation with enterprise readiness, differentiating it from competitors who may focus primarily on consumer-facing or research-oriented AI products. By emphasizing autonomous agent improvement and rigorous evaluation, Anthropic appeals to organizations seeking reliable, scalable, and governable AI solutions.

The company’s focus on building a robust ecosystem—including developer tools, enterprise services, and infrastructure partnerships—positions it well to capture significant market share in the rapidly expanding AI agent market, projected to grow exponentially over the next decade.

Investment and Talent Acquisition

To support this growth trajectory, Anthropic is investing heavily in talent acquisition, particularly in AI research, systems engineering, and enterprise sales. These efforts aim to sustain innovation velocity and ensure high-quality customer engagement.

What This Means for Developers Building with Claude

For developers and AI practitioners leveraging Claude Managed Agents, Anthropic’s new feature set unlocks a host of actionable benefits and new possibilities that streamline development workflows, improve agent quality, and enable scalable complexity management.

Key Developer Benefits

- Reduced Maintenance Burden: Dreaming’s autonomous self-improvement capability minimizes the need for manual retraining cycles, allowing developers to focus on feature innovation and integration rather than constant fine-tuning.

- Enhanced Quality Assurance: Outcomes provides a structured framework for systematically measuring and improving agent outputs, facilitating continuous integration and deployment (CI/CD) of AI models with objective performance metrics.

- Scalable Complexity Handling: Multi-agent orchestration supports building sophisticated workflows that decompose complex problems into manageable, parallel tasks, greatly improving modularity and performance.

- Customization and Control: The ability to configure specialist agents with custom prompts, models, and toolsets empowers developers to fine-tune workflows to specific domain requirements and organizational policies.

- Expanded Usage Capacity: Doubled usage limits on Pro and Max tiers enable more extensive experimentation, prototyping, and production deployments without immediate resource constraints.

Practical Tips for Developers

- Integrate Dreaming Processes: Incorporate scheduled Dreaming cycles into your agent lifecycle management to enable continuous self-improvement and faster iteration.

- Define Robust Rubrics: Leverage Outcomes to create detailed, domain-specific evaluation rubrics that reflect your application’s quality and compliance standards.

- Architect Multi-agent Workflows: Design agent workflows that exploit multi-agent orchestration for parallel processing, fault tolerance, and modularity, improving scalability and maintainability.

- Leverage Customization: Utilize specialist agent configurations to embed domain expertise and optimize prompt engineering for each subtask.

- Monitor Usage and Performance: Use dashboards and reporting tools to track agent performance metrics and usage limits, proactively managing resource allocation and scaling.

Example Developer Scenario: Automating Technical Support

A developer building a technical support chatbot can employ Multi-agent Orchestration to delegate tasks such as:

- Issue classification to a specialist trained on product documentation.

- Knowledge base search by a dedicated retrieval agent.

- Response generation by a conversational agent optimized for tone and clarity.

- Compliance verification by a regulatory specialist agent.

Dreaming can then analyze past support interactions to refine heuristics, such as identifying questions that frequently require escalation, while Outcomes evaluates the quality and compliance of agent responses, feeding insights back into the development cycle.
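The support scenario works naturally as a pipeline: each specialist consumes the previous stage’s output, and the compliance stage decides whether a reply may be sent. In this sketch all four stages are hypothetical stubs (a regex classifier, an in-memory knowledge base, a template reply, and a card-number check) standing in for model-backed specialist agents.

```javascript
// Sequential support pipeline; each stage is a stub for a specialist agent.
const stages = [
  // Issue classification (stub: keyword match instead of a trained specialist).
  async (ctx) => ({ ...ctx, category: /invoice|billing/i.test(ctx.question) ? "billing" : "general" }),
  // Knowledge base retrieval (stub: in-memory lookup by category).
  async (ctx) => ({
    ...ctx,
    article: { billing: "KB-12: Exporting invoices", general: "KB-1: Getting started" }[ctx.category],
  }),
  // Response generation (stub: template reply).
  async (ctx) => ({ ...ctx, reply: `See ${ctx.article} for step-by-step instructions.` }),
  // Compliance verification: reject replies that contain a card-like number.
  async (ctx) => ({ ...ctx, approved: !/\b\d{13,16}\b/.test(ctx.reply) }),
];

// Run the stages in order, threading a context object through them.
async function handleTicket(question) {
  let ctx = { question };
  for (const stage of stages) ctx = await stage(ctx);
  return ctx;
}

handleTicket("How do I export an invoice?").then((ctx) => console.log(ctx.reply, ctx.approved));
// See KB-12: Exporting invoices for step-by-step instructions. true
```

Because every stage records its output on the shared context, the full trace of a ticket is available afterwards, which is the kind of record Dreaming and Outcomes would consume.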

In conclusion, Anthropic’s announcement at Code with Claude marks a pivotal moment in the evolution of AI agents. By equipping Claude Managed Agents with the ability to dream, evaluate, and orchestrate, Anthropic is delivering a platform that not only meets but anticipates the demands of tomorrow’s AI-driven enterprises and developers.

The Dreaming feature builds on Claude’s existing capabilities in Opus 4.7, which already includes extended thinking, improved tool use, and enhanced code generation. Understanding the base model’s strengths helps contextualize why autonomous self-improvement through dreaming is so powerful. Our full breakdown of what’s new in Claude Opus 4.7 for developers covers the foundation that makes features like Dreaming and Managed Agents possible.

Anthropic’s multi-agent orchestration formalizes patterns that developers have been building manually with Claude and other models. The key insight is that specialized agents with focused prompts and tools outperform single general-purpose agents on complex tasks. Our guide to multi-agent workflows with bot specialization and cross-checking explores the architectural principles that Anthropic has now productized in Managed Agents.
