ChatGPT Trusted Contact: Complete Setup Guide for the New Safety Feature in 2026

What is ChatGPT Trusted Contact and Why It Matters

In May 2026, OpenAI introduced a significant advancement in AI safety with the rollout of the Trusted Contact feature for ChatGPT. This optional safety mechanism allows adult users to designate a trusted individual—such as a family member, close friend, or mentor—who can be contacted by OpenAI in specific safety-related situations. Unlike traditional AI safety controls that rely solely on system-level safeguards and automated moderation, Trusted Contact introduces a human-centric approach that integrates real-world relationships into the AI safety ecosystem.

The feature reflects OpenAI’s commitment to responsible AI, emphasizing not only technical robustness but also human oversight and connection. By giving users the choice to involve someone they trust, OpenAI aims to strengthen the safety net around AI usage, particularly in sensitive scenarios where a trusted person can provide context and support that automated systems cannot.

Trusted Contact matters because it uses social trust networks to augment AI safety. This is a shift in AI governance: it recognizes that AI tools operate within human communities and that safety can be strengthened through collaborative human involvement. It also addresses concerns around mental health, crisis situations, and misuse by enabling a direct yet privacy-respecting communication channel to a trusted third party.

Historically, AI safety has focused on algorithmic safeguards such as content filters, anomaly detection, and usage policies. While these are essential, they often lack the nuance and empathy that human judgment can provide. The Trusted Contact feature bridges this gap by embedding a trusted human element into the safety infrastructure. This approach acknowledges that AI interactions are often deeply personal and that human relationships can play a critical role in preventing harm.

Moreover, Trusted Contact empowers users by giving them control over who is involved in their safety network, fostering a sense of agency and reassurance. For vulnerable populations, such as those experiencing mental health challenges or at risk of exploitation, this feature can be a vital lifeline, ensuring timely support without compromising autonomy or privacy.

OpenAI’s innovation demonstrates a forward-thinking integration of technology and social dynamics, setting a precedent for future AI safety frameworks that combine automated intelligence with human empathy and community support. As AI continues to permeate everyday life, such human-centered safety mechanisms will be increasingly important to maintain trust and promote positive outcomes.

How the Feature Works: Technical and User-Facing Explanation

The ChatGPT Trusted Contact feature operates through a dual-layered mechanism involving user interface integration and backend safeguards designed to handle sensitive notifications responsibly. This ensures both user empowerment and rigorous privacy protections.

User-Facing Workflow

- Nomination: The user nominates a Trusted Contact by providing their name, email address, and relationship to the user within the ChatGPT settings interface. This step is designed to be intuitive and accessible, with contextual guidance to help users select an appropriate contact.

- Verification: OpenAI sends a verification email to the nominee to confirm their willingness to serve as a Trusted Contact. This prevents unauthorized or unaware individuals from being nominated and ensures consent.

- Activation: Once verified, the Trusted Contact is linked to the user’s ChatGPT account but receives no ongoing updates or data unless triggered by a safety event. This preserves the privacy of the user’s AI interactions under normal circumstances.

- Triggering Notification: In predefined safety-related situations—such as detected signals of user distress, account compromise, or potential misuse—OpenAI’s safety systems may initiate contact with the Trusted Contact. These scenarios are carefully defined to avoid false alarms and respect user autonomy.

- Notification Delivery: The Trusted Contact receives a secure, privacy-preserving message detailing the nature of the concern and recommended next steps. This message is crafted to balance transparency and confidentiality, providing enough information for effective intervention without exposing sensitive data.
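The workflow above can be read as a small state machine. The sketch below is illustrative only and is not OpenAI's implementation: the state names, the `ALLOWED` table, and the `advance` function are hypothetical, chosen to mirror the steps in the list.

```python
from enum import Enum


class ContactState(Enum):
    NOMINATED = "nominated"   # user submitted name, email, relationship
    VERIFIED = "verified"     # nominee accepted the verification email
    ACTIVE = "active"         # linked to the account, no data flowing
    NOTIFIED = "notified"     # a safety event triggered a message
    REMOVED = "removed"       # user revoked the nomination


# Hypothetical transition table; any move not listed here is rejected.
ALLOWED = {
    ContactState.NOMINATED: {ContactState.VERIFIED, ContactState.REMOVED},
    ContactState.VERIFIED: {ContactState.ACTIVE, ContactState.REMOVED},
    ContactState.ACTIVE: {ContactState.NOTIFIED, ContactState.REMOVED},
    ContactState.NOTIFIED: {ContactState.ACTIVE, ContactState.REMOVED},
    ContactState.REMOVED: set(),
}


def advance(current: ContactState, target: ContactState) -> ContactState:
    """Return the new state, or raise if the workflow does not allow the move."""
    if target not in ALLOWED[current]:
        raise ValueError(f"cannot go from {current.value} to {target.value}")
    return target
```

The point of the table is that a contact can never be notified before verification: `advance(ContactState.NOMINATED, ContactState.NOTIFIED)` raises `ValueError`.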

Technical Architecture

The backend architecture supporting Trusted Contact is designed with privacy, security, and compliance at its core, incorporating state-of-the-art technologies and best practices:

- Data Encryption: All identification and contact data are encrypted both in transit and at rest using AES-256, a widely used encryption standard. This prevents unauthorized access during storage or transmission.

- Event-Driven Triggers: The system integrates with OpenAI’s existing AI content moderation and behavioral analytics pipelines. When certain risk thresholds are met—such as language signaling distress, account anomalies, or suspicious activity—an event triggers the Trusted Contact notification process. This event-driven architecture ensures timely responses without continuous monitoring overhead.

- Minimal Data Sharing: To preserve user privacy, only the essential information about the safety concern, along with contact instructions, is shared with the Trusted Contact. No chat history, personal messages, or sensitive user data is transmitted. This minimal disclosure safeguards user confidentiality.

- Audit Logging: All Trusted Contact-related events are logged for internal auditing to ensure compliance with safety protocols and to prevent misuse. These logs are securely stored and accessible only to authorized personnel under strict governance policies.

- Access Controls: The notification system is strictly access-controlled, limiting visibility to authorized safety operations personnel and automated systems only. Role-based access controls and multi-factor authentication protocols are enforced to mitigate insider threats.

- Redundancy and Reliability: The notification infrastructure is designed for high availability, employing redundant servers and failover mechanisms to ensure that critical safety messages are delivered reliably even under adverse network conditions.

The design balances the need for timely human intervention with stringent privacy protections, creating a secure bridge between AI safety systems and trusted humans. This architecture reflects a holistic approach to AI safety that combines technical precision with ethical considerations.

Example Scenario: How the System Responds

Consider a scenario where a user’s language in ChatGPT conversations indicates signs of acute distress or suicidal ideation. The behavioral analytics module flags this conversation based on predefined linguistic and contextual markers. Upon surpassing a risk threshold, an event is triggered that activates the Trusted Contact notification workflow. The system compiles a carefully worded message describing the concern and suggested actions, then delivers it securely to the Trusted Contact. This individual can then reach out to the user or seek professional help, providing a critical human safety net that automated systems alone cannot offer.
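As a rough illustration of the threshold step in this scenario, the sketch below emits an event only when a risk score crosses a cutoff and the account has an active Trusted Contact. Everything here is an assumption made for the example: the `RISK_THRESHOLD` value, the `SafetyEvent` fields, and the `maybe_trigger` function are hypothetical, and the article does not describe how scores are actually computed.

```python
from dataclasses import dataclass
from typing import Optional

RISK_THRESHOLD = 0.9  # hypothetical cutoff; no real value is published


@dataclass(frozen=True)
class SafetyEvent:
    user_id: str
    category: str  # e.g. "distress" or "account_compromise"
    score: float


def maybe_trigger(user_id: str, category: str, score: float,
                  has_active_contact: bool) -> Optional[SafetyEvent]:
    """Emit an event only for high-scoring signals on accounts with an active contact."""
    if not has_active_contact:
        return None
    if score < RISK_THRESHOLD:
        return None
    return SafetyEvent(user_id, category, score)
```

Returning `None` below the cutoff is what keeps the notification path quiet for routine conversations; only the rare high-score case produces an event for downstream handling.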

Integration with Other Safety Features

The Trusted Contact feature operates in concert with other OpenAI safety mechanisms such as automated content moderation and user reporting. Its integration ensures a layered defense model where automated filters handle routine risks, while Trusted Contact provides human intervention for complex or high-stakes situations. This synergy optimizes both responsiveness and user privacy.

Who Can Use It: Eligibility Requirements

The Trusted Contact feature is currently available to adult ChatGPT users across specific subscription tiers. OpenAI has carefully defined eligibility criteria to balance accessibility with regulatory compliance and safety considerations.

- Age Restriction: Users must be 18 years or older to nominate a Trusted Contact. This age threshold ensures legal consent for data sharing and notifications, aligning with global privacy laws and ethical standards.

- Subscription Tiers: Trusted Contact is available to users on ChatGPT Plus and Enterprise plans. These tiers generally represent users with more intensive or professional usage patterns, who may benefit most from enhanced safety features. Free-tier users do not have access to this feature at present, though OpenAI plans to evaluate future expansions.

- Verification Required: Users must have a verified OpenAI account with up-to-date contact information. This verification process helps maintain the integrity of the Trusted Contact nominations and ensures reliable communication channels.

- Geographic Availability: Initially available in countries where OpenAI’s safety and privacy compliance requirements are met, including jurisdictions with stringent data protection regulations such as the European Union (GDPR) and California (CCPA). OpenAI plans to expand availability globally as compliance frameworks evolve.

This tiered availability aligns with OpenAI’s goal to prioritize safety features for users who engage deeply with ChatGPT in professional or personal contexts requiring enhanced safeguards. It also allows OpenAI to pilot and refine the feature in controlled environments before broader rollout.
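The eligibility rules listed above amount to four independent checks. A minimal sketch follows, assuming a hypothetical `Account` record and plan identifiers; it returns every failed requirement rather than a bare yes or no, which makes it easier to tell a user what to fix.

```python
from dataclasses import dataclass
from typing import List

ELIGIBLE_PLANS = {"plus", "enterprise"}  # tiers named in the article
MIN_AGE = 18


@dataclass
class Account:
    age: int
    plan: str               # hypothetical plan identifier
    verified: bool          # account verification completed
    region_supported: bool  # feature offered in the user's country


def ineligibility_reasons(acct: Account) -> List[str]:
    """Return every failed requirement; an empty list means eligible."""
    reasons = []
    if acct.age < MIN_AGE:
        reasons.append("must be 18 or older")
    if acct.plan.lower() not in ELIGIBLE_PLANS:
        reasons.append("plan not eligible")
    if not acct.verified:
        reasons.append("account not verified")
    if not acct.region_supported:
        reasons.append("region not supported")
    return reasons
```

For example, `ineligibility_reasons(Account(17, "free", True, True))` reports both the age and the plan problem in one pass.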

Considerations for Organizations and Enterprises

Enterprise customers can enable Trusted Contact functionality for their teams, integrating it into organizational safety policies. This can be particularly valuable in industries where AI usage involves sensitive or high-stakes information, such as healthcare, finance, or education. Enterprises may also deploy supplementary training to help users select appropriate Trusted Contacts and understand the feature’s benefits.

Future Plans for Eligibility Expansion

OpenAI is exploring ways to extend Trusted Contact access to younger users through parental or guardian controls, as well as to free-tier users with tailored privacy safeguards. These expansions will be guided by ongoing research, user feedback, and evolving regulatory landscapes.

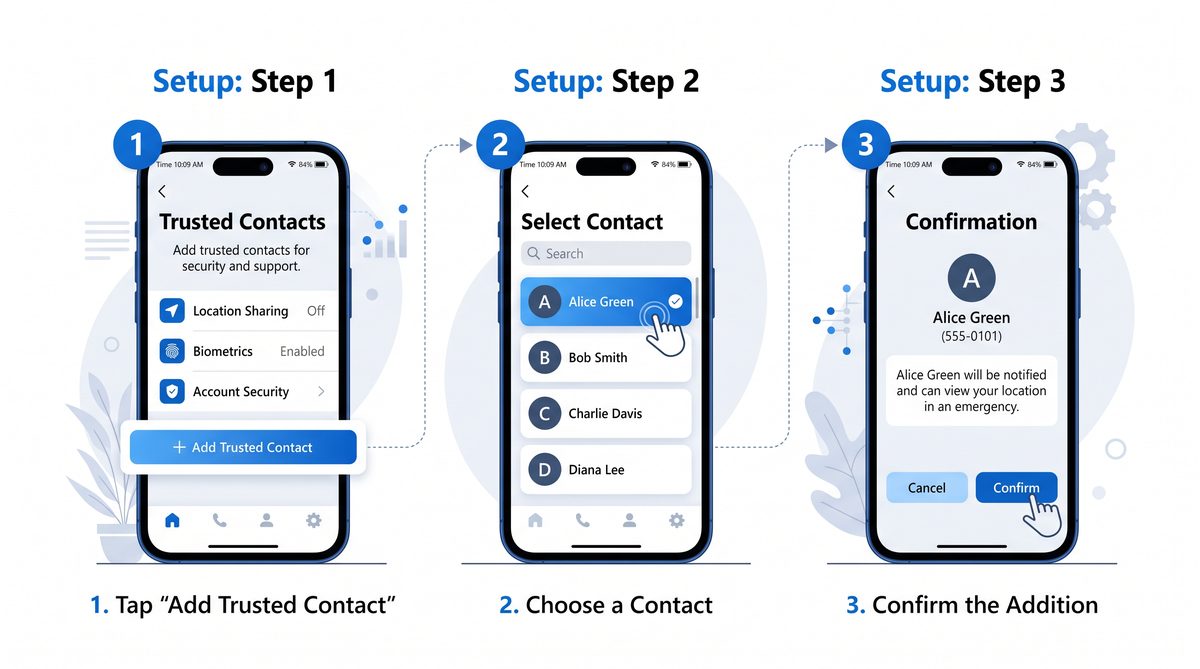

Step-by-Step Setup Instructions

Setting up a Trusted Contact within ChatGPT is straightforward and designed for ease of use, with clear prompts and privacy explanations to guide users through each step. Below is a detailed walkthrough:

- Access Account Settings: Log into your ChatGPT account via the web or desktop app and navigate to the Settings menu. This menu is accessible from the user profile icon or sidebar navigation.

- Locate Trusted Contact Section: Within settings, find the Safety & Privacy tab, then select Trusted Contact. This section includes an overview of the feature and its privacy implications.

- Enter Contact Details: Provide the full name, email address, and your relationship to the person you trust (e.g., friend, family member, mentor). The interface includes validation to ensure correct email formatting and prompts to encourage thoughtful selection.

- Send Invitation: Submit the nomination to trigger an automated email sent by OpenAI for contact verification. The invitation explains the responsibilities and privacy protections to the nominee.

- Contact Verification: The nominee must confirm their willingness to serve by clicking a secure link and completing a brief verification step, which may include identity confirmation to prevent misuse.

- Confirmation and Activation: Once verified, you will receive confirmation in your account settings that your Trusted Contact is active. You can also view the contact’s status and resend verification invites if needed.

Users can update or remove their Trusted Contact nomination at any time through the same settings interface. Changes take effect immediately, with notifications sent to the new or removed contacts as appropriate.
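The form validation mentioned in the "Enter Contact Details" step could look roughly like the sketch below. It is a simplified assumption, not the actual ChatGPT form logic: `validate_nomination` and `EMAIL_RE` are hypothetical names, and the regular expression only checks the general `name@domain.tld` shape rather than full address syntax.

```python
import re
from typing import Dict

# Deliberately loose pattern: something@something.something, no spaces.
EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def validate_nomination(name: str, email: str, relationship: str) -> Dict[str, str]:
    """Return a field -> message map of problems; empty means the form is acceptable."""
    errors = {}
    if not name.strip():
        errors["name"] = "full name is required"
    if not EMAIL_RE.match(email.strip()):
        errors["email"] = "email address looks malformed"
    if not relationship.strip():
        errors["relationship"] = "relationship is required"
    return errors
```

A format check like this catches typos early, but only the verification email confirms that the address really belongs to the nominee.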

Practical Tips for Setup

- Notify Your Trusted Contact in Advance: Inform the person you intend to nominate so they expect the verification email and understand their role.

- Keep Contact Information Current: Ensure the email address you provide is accurate and regularly monitored by the nominee to avoid delays in critical notifications.

- Review the Feature Periodically: Revisit your Trusted Contact settings to confirm that the nominated individual remains appropriate for the role as your circumstances evolve.

What Happens When a Trusted Contact Is Notified

When OpenAI’s AI moderation or behavioral analytics systems detect a safety-related risk involving a user with an active Trusted Contact, a carefully orchestrated process unfolds to ensure timely and respectful intervention:

- Risk Assessment: The system evaluates the severity of the detected issue based on predetermined thresholds covering scenarios such as suicidal ideation, threats of violence, or account security breaches. These thresholds are continuously refined through machine learning and expert input to minimize false positives and negatives.

- Notification Drafting: A privacy-conscious message is generated containing essential context about the safety concern without exposing sensitive user data. The message is designed to be clear, actionable, and reassuring, emphasizing the Trusted Contact’s role in supporting the user.

- Secure Delivery: The message is sent via encrypted email or a secure notification channel to the Trusted Contact. Delivery mechanisms include Transport Layer Security (TLS) for emails and optional integration with secure messaging platforms for enterprise users.

- Recommended Actions: The notification includes recommended next steps, such as contacting the user directly, referring to professional support services, or alerting emergency responders if appropriate. OpenAI provides resources and guidance to Trusted Contacts to assist in decision-making.

- Follow-up Monitoring: OpenAI safety teams monitor the situation and may engage further if necessary, but the Trusted Contact serves as a vital human bridge for immediate support. The system also tracks the status of notifications and may escalate alerts if no response is detected.

This notification mechanism is designed to respect user autonomy while ensuring critical intervention can occur in time-sensitive situations. It balances the need for privacy with the imperative of safety, leveraging human judgment where automated systems alone are insufficient.
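The follow-up step, where an alert may escalate if no response is detected, can be sketched as a simple timeout check. The one-hour window and the `needs_escalation` function are hypothetical; the article does not state how long the system waits or what counts as a response.

```python
from datetime import datetime, timedelta
from typing import Optional

ESCALATE_AFTER = timedelta(hours=1)  # hypothetical waiting period


def needs_escalation(sent_at: datetime,
                     acknowledged_at: Optional[datetime],
                     now: datetime) -> bool:
    """True when a notification is still unacknowledged after the waiting period."""
    if acknowledged_at is not None:
        return False
    return now - sent_at >= ESCALATE_AFTER
```

Passing `now` in explicitly, rather than reading the clock inside the function, keeps the check easy to test.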

Example Notification Message

Subject: Trusted Contact Alert – Important Safety Information

Dear [Trusted Contact Name],

OpenAI’s safety system has identified a concern involving [User Name] that may require your attention. While respecting privacy, we want to inform you that [User Name] might be experiencing a situation where your support could be valuable.

We recommend reaching out to [User Name] to check in and encourage them to seek professional help if needed. Please find additional resources and suggested next steps attached.

Thank you for being a trusted part of their safety network.

Sincerely,

The OpenAI Safety Team
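One way to enforce the "no chat content" rule for a message like the one above is to render it from an allow-list of fields, so anything else in the source record is dropped before formatting. The sketch below assumes hypothetical names (`ALLOWED_FIELDS`, `BODY`, `render_notification`) and reuses the wording of the example message; it is not OpenAI's template.

```python
from string import Template
from typing import Dict

# Only these keys may ever reach the outgoing message.
ALLOWED_FIELDS = {"contact_name", "user_name"}

BODY = Template(
    "Dear $contact_name,\n\n"
    "OpenAI's safety system has identified a concern involving $user_name "
    "that may require your attention.\n"
)


def render_notification(record: Dict[str, str]) -> str:
    """Fill the template using allow-listed fields only; extra keys are ignored."""
    safe = {k: v for k, v in record.items() if k in ALLOWED_FIELDS}
    return BODY.substitute(safe)
```

Because filtering happens before substitution, a record that accidentally carries a `chat_history` key still produces a message without it, and a record missing a required field fails loudly with `KeyError` instead of sending a half-filled message.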

Limitations and Safeguards

OpenAI has implemented several safeguards to prevent misuse or over-notification:

- Notifications occur only for high-confidence safety events, minimizing unnecessary alerts.

- Trusted Contacts cannot initiate account actions or access private data.

- Users can revoke Trusted Contact status at any time.

This ensures that the Trusted Contact role remains supportive and respectful of user rights.

Privacy Protections and Data Handling

OpenAI has implemented robust privacy protections around the Trusted Contact feature to safeguard user data and maintain trust. These measures address the sensitive nature of safety-related communication and comply with international standards.

- Consent-Driven Sharing: No information is shared with the Trusted Contact without the user’s prior consent established at setup. This ensures user autonomy over data dissemination.

- Minimal Data Exposure: Only the necessary information related to the safety concern is disclosed; chat logs, personal messages, or AI interaction contents remain confidential. This principle of data minimization reduces privacy risks.

- Data Encryption: Contact details and notification contents are encrypted using industry-standard protocols during storage and transmission. End-to-end encryption is employed where feasible to prevent interception.

- Data Retention: Contact and event data are retained only as long as necessary to fulfill safety and compliance obligations, after which they are securely deleted following strict data lifecycle management policies.

- User Control: Users retain full control to revoke Trusted Contact access, change nominees, or opt out of the feature at any time. These controls are accessible via the account settings interface.

- Compliance: The feature complies with applicable data protection laws including GDPR, CCPA, HIPAA (where applicable), and other international privacy standards. OpenAI conducts regular audits and impact assessments to maintain compliance.

- Transparency: Users receive clear information about data handling practices, including privacy notices and terms of use specific to Trusted Contact.

These measures ensure that the Trusted Contact system enhances safety without compromising user privacy or autonomy, fostering trust in OpenAI’s AI ecosystem.
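The data retention bullet describes keeping event data only as long as needed. A minimal sketch of such a purge follows, assuming a hypothetical 90-day window and event records stored as dictionaries with a `created_at` timestamp; the article does not give the real retention period.

```python
from datetime import datetime, timedelta
from typing import List

RETENTION = timedelta(days=90)  # hypothetical retention window


def purge_expired(events: List[dict], now: datetime) -> List[dict]:
    """Keep only events younger than the retention window."""
    return [e for e in events if now - e["created_at"] < RETENTION]
```

In a real system the expired rows would also need to be deleted from backups and logs; this sketch only shows the selection rule.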

Technical Data Flow and Security Measures

When a user nominates a Trusted Contact, their contact information is encrypted and stored in a dedicated secure database with strict access controls. Upon triggering a safety event, the notification system retrieves only the necessary data, composes the message, and dispatches it through encrypted channels.

OpenAI employs intrusion detection systems, regular penetration testing, and continuous monitoring to safeguard these processes. Additionally, any data access is logged with detailed metadata for auditing purposes.
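The combination of role-based access and audit logging described here can be sketched as a single guarded read. The role name `safety_ops`, the in-memory `audit_log`, and the `read_contact` function are all hypothetical stand-ins for whatever storage and identity systems are actually used.

```python
from datetime import datetime, timezone
from typing import Dict, List

AUTHORIZED_ROLES = {"safety_ops"}          # hypothetical role name
audit_log: List[Dict[str, object]] = []    # stand-in for durable audit storage


def read_contact(store: Dict[str, dict], user_id: str, actor: str, role: str) -> dict:
    """Return a contact record, logging the attempt whether or not it is allowed."""
    allowed = role in AUTHORIZED_ROLES
    audit_log.append({
        "at": datetime.now(timezone.utc).isoformat(),
        "actor": actor,
        "user_id": user_id,
        "allowed": allowed,
    })
    if not allowed:
        raise PermissionError(f"role {role!r} may not read contact data")
    return store[user_id]
```

Writing the log entry before the permission check means denied attempts are recorded too, which is usually what an auditor wants to see.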

Comparison with Other AI Safety Features

| Feature | Description | User Control Level | Human Involvement | Privacy Considerations | Availability |
|---|---|---|---|---|---|
| Trusted Contact | Nominate a trusted individual to be contacted in safety-related situations involving the AI user. | High (user nominates and controls contact) | Human (trusted third party) | Minimal data shared; consent-based; encrypted | ChatGPT Plus, Enterprise, Adults 18+ |
| Automated Content Moderation | AI-driven filtering and flagging of harmful or inappropriate content in user interactions, including hate speech, harassment, and misinformation. | Low (system-controlled) | None (automated) | Data processed internally; no external sharing | All users |
| Usage Reporting and Blocking | User reports abusive or harmful AI behavior; system blocks offending content and escalates issues to human moderators if necessary. | Moderate (user-initiated) | Human review in escalation cases | Report details retained for moderation and compliance | All users |
| Parental Controls (Future roadmap) | Controls for guardians to monitor and restrict AI use for minors, including usage limits and content filters. | High (guardian-controlled) | Human (guardian) | Guardian access limited to minor’s usage data; privacy safeguarded | Planned |
| Behavioral Analytics & Anomaly Detection | Automated systems analyze user behavior patterns to detect unusual or risky activity, informing safety interventions. | Low (system-controlled) | Mostly automated, with human oversight in critical cases | Data anonymized where possible; internal use only | Enterprise and Plus users |

This comparison illustrates how Trusted Contact uniquely combines high user control, human involvement, and privacy safeguards, complementing other AI safety tools to form a comprehensive protective framework.

Best Practices for Choosing a Trusted Contact

Selecting the right Trusted Contact is crucial for maximizing the safety benefits of this feature. The effectiveness of Trusted Contact depends heavily on the nominee’s ability and willingness to act responsibly and sensitively.

- Trustworthiness: Choose someone you trust implicitly to respect your privacy and act in your best interest. This person should understand the sensitive nature of their role and maintain confidentiality.

- Accessibility: Ensure the person can be reached reliably through email or other contact methods and is likely to respond promptly. Consider their availability during various times of day and their responsiveness in emergencies.

- Understanding: Ideally, select someone familiar with your circumstances and capable of recognizing when you might need support or intervention. This familiarity enables more empathetic and effective responses.

- Willingness: Confirm that the individual consents to this responsibility before nominating them. They should be comfortable receiving potentially sensitive notifications and prepared to act accordingly.

- Stability: Prefer contacts who are emotionally stable and capable of handling sensitive situations calmly. Their ability to remain composed can make a significant difference during crises.

- Proximity: While not mandatory, contacts who live nearby may be more effective in crisis scenarios requiring in-person assistance or local emergency intervention.

- Experience: If possible, choose someone with experience in crisis support, mental health awareness, or caregiving roles. Their expertise can improve outcomes in difficult situations.

Review and update your Trusted Contact nomination periodically to reflect changes in your relationships or circumstances. Life events such as relocation, changes in contact information, or evolving personal dynamics may affect the suitability of your Trusted Contact.

Additional Recommendations

- Communicate Expectations: Discuss the scope and limits of the Trusted Contact role with your nominee to ensure mutual understanding.

- Backup Plan: Although currently only one Trusted Contact is supported, consider informing a close circle of trusted individuals about your safety preferences as a precaution.

- Educate Yourself: Familiarize yourself with the Trusted Contact feature’s privacy policies and procedures so you can confidently manage your safety network.

Frequently Asked Questions

- Is Trusted Contact mandatory for ChatGPT users?

- No, it is an optional feature designed to enhance safety for those who choose to participate. Users can enable or disable it at any time without affecting their access to ChatGPT.

- Can a Trusted Contact access my chat history?

- No, Trusted Contacts only receive notifications about safety concerns and do not have access to your AI chat logs, personal data, or interaction contents.

- What types of situations trigger Trusted Contact notifications?

- Situations include detected mental health crises (e.g., suicidal ideation), threats of harm to self or others, account security breaches, or other high-risk safety scenarios as determined by OpenAI’s safety systems.

- Can I have more than one Trusted Contact?

- Currently, ChatGPT supports nominating only one Trusted Contact per account. OpenAI is exploring options for multiple contacts in future updates.

- How does OpenAI protect my data in this process?

- OpenAI employs rigorous encryption, access controls, and data minimization to ensure your information remains secure and private. Data is only shared with Trusted Contacts under strict consent and necessity criteria.

- What happens if my Trusted Contact does not respond?

- OpenAI’s safety team continues to monitor situations and may take additional actions as required, including direct intervention or escalation to emergency services. The Trusted Contact serves as a key first responder but is not the sole mechanism for safety.

- Can I change my Trusted Contact later?

- Yes, you can update or remove your Trusted Contact anytime through your account settings. Changes take effect immediately, with notifications sent to involved parties as appropriate.

- Will my Trusted Contact receive regular updates about my AI usage?

- No, Trusted Contacts do not receive ongoing updates or monitoring information. They are only contacted in specific, predefined safety-related situations.

- Are Trusted Contact notifications anonymous?

- Notifications identify the user by the name provided during nomination but do not disclose detailed chat content or sensitive personal data, maintaining a balance between transparency and privacy.

The Bigger Picture: OpenAI’s Safety Roadmap

The Trusted Contact feature is part of OpenAI’s broader roadmap to build AI systems that are safe, responsible, and aligned with human values. This roadmap encompasses multiple layers designed to address the complex challenges of AI deployment.

- Technical Safeguards: Enhancing AI content moderation, behavior analysis, and anomaly detection algorithms to identify and mitigate risks proactively. This includes improving natural language understanding to detect subtle signs of distress or misuse.

- Human-Centered Safety: Introducing mechanisms like Trusted Contact to incorporate trusted humans into AI safety workflows, recognizing the importance of social context in AI use. This approach leverages empathy and judgment that AI alone cannot replicate.

- Transparency and Control: Empowering users with greater visibility and control over their AI interactions, including data usage, privacy settings, and safety preferences. OpenAI aims to create a user-centric model where individuals can tailor their AI experience responsibly.

- Research and Collaboration: Partnering with academic institutions, policymakers, and civil society to develop best practices and standards for responsible AI deployment. This collaborative approach ensures that safety innovations are grounded in diverse expertise and societal needs.

- Future Features: Planned expansions include parental controls, enhanced crisis response protocols, and broader tools to support mental health and wellbeing within AI applications. These features aim to create a holistic ecosystem that addresses the varied dimensions of AI safety.

This multi-faceted approach aligns with OpenAI’s mission to ensure that AI benefits all humanity safely and equitably. By integrating technical innovation with human values, OpenAI seeks to foster AI systems that are not only powerful but also trustworthy and ethical.

Trusted Contact joins a growing suite of ChatGPT features designed to make AI interactions safer and more personalized. The platform recently upgraded its default model to GPT-5.5 Instant, which prioritizes factual reliability and auditable memory. Our explainer on GPT-5.5 Instant as OpenAI’s new default ChatGPT model covers how these safety-focused improvements complement features like Trusted Contact.

The Trusted Contact feature arrives at a time when AI safety and security are under intense scrutiny across the industry. Earlier in 2026, Anthropic’s Mythos model was breached, highlighting the real-world risks that AI companies must address. Our analysis of the Anthropic Mythos breach and its implications for AI security provides important context for understanding why features like Trusted Contact represent a proactive approach to user protection.

Trusted Contact is part of OpenAI’s broader strategy of shipping safety features alongside capability upgrades. The company has maintained an aggressive release cadence throughout 2026, including major Codex updates with desktop computer use and multi-agent workflows. Our coverage of OpenAI Codex’s desktop computer use and multi-agent workflow update shows how the company balances capability expansion with responsible deployment.
