7 Advanced Prompting Techniques for ChatGPT and Claude That Actually Work in 2026

⚡ The Brief

- What it is: Seven advanced prompting techniques that consistently improve output from ChatGPT and Claude in 2026 — with examples and when to use each.

- Who it’s for: Anyone who writes prompts more than once a day and wants dramatically better results.

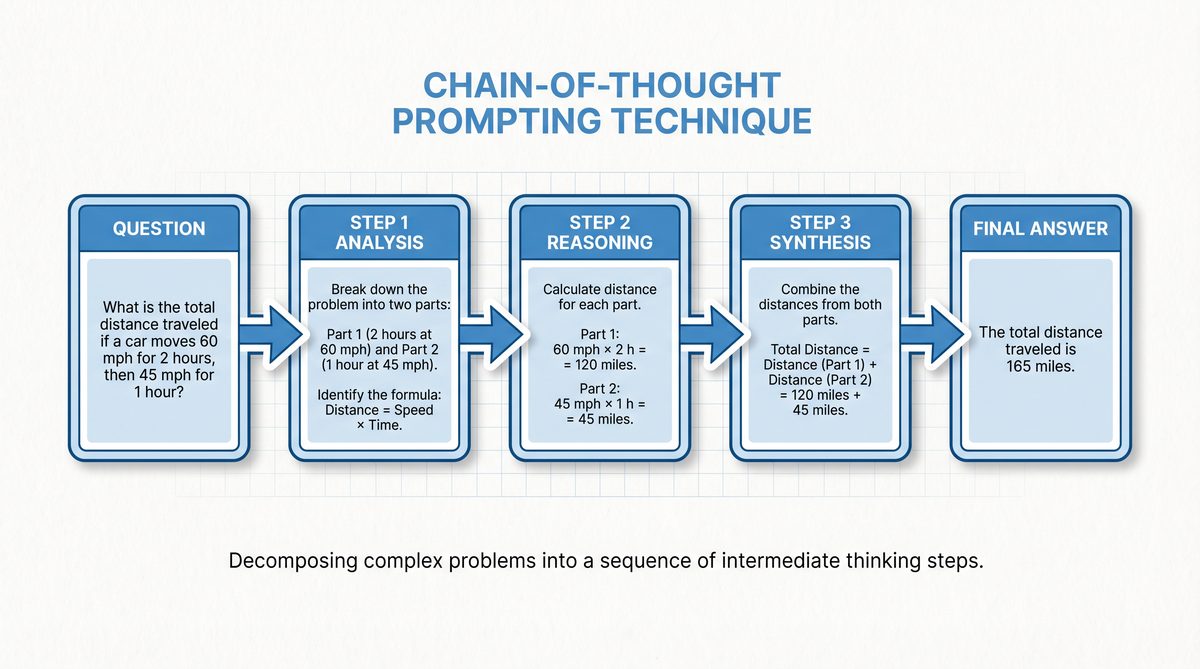

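- Techniques covered: Contextual layering, persona embedding, instruction chaining, feedback loops, multimodal inputs, temperature and token control, and ethical prompting, plus an FAQ on role prompting, few-shot examples, ReAct, and chain-of-verification.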

- Biggest ROI: Chain-of-verification + answer-first formatting — typically 30–50% fewer hallucinations.

- Bottom line: Spend 10 minutes learning these and recover hours per week in model rework.



As artificial intelligence continues its rapid evolution, AI language models in 2026 have reached unprecedented capabilities, fundamentally transforming how humans interact with technology. Among the most sophisticated are ChatGPT and Claude, two state-of-the-art conversational agents that offer expansive versatility across industries, from content creation to coding assistance and data analysis. However, unlocking the full potential of these models requires more than just basic queries; it demands mastery of advanced prompting techniques that can guide the AI to deliver highly accurate, context-aware, and tailored responses.

Advanced prompting is no longer a luxury but a necessity for professionals and enthusiasts aiming to harness AI’s power efficiently. ChatGPT and Claude share many similarities, but they differ in how they take instructions and how they tend to respond, and prompts work best when designed with those differences in mind. This guide explores seven prompting strategies that go beyond generic commands, enabling users to fine-tune interactions, direct complex workflows, and integrate multimodal inputs.

Whether you are a developer, content strategist, or AI researcher, this detailed manual offers actionable insights and practical examples, empowering you to elevate your prompting skills for ChatGPT and Claude. From contextual layering to ethical considerations, the scope of this guide ensures a holistic approach to prompt engineering in 2026’s AI landscape.

1. Contextual Layering: Building Multi-Tiered Prompts

1.1 Understanding Contextual Layering

Contextual layering is an advanced prompting methodology that involves structuring inputs to AI models in multiple, hierarchically organized tiers of context. Instead of delivering a single, monolithic prompt, contextual layering breaks down information into discrete segments that build upon each other logically. This technique is vital because it significantly enhances the precision of responses by allowing the AI to consider nuanced background information, intermediate reasoning steps, and targeted queries sequentially.

In contrast to simple prompts that might overwhelm a model with too much information at once, layered prompts mimic human thought processes, where complex problems are decomposed into manageable subtopics. This approach is especially beneficial when dealing with intricate subject matter or tasks requiring multi-step reasoning — for example, technical troubleshooting, legal analysis, or multi-faceted creative writing.

1.2 Step-by-Step Approach to Layered Prompts

Implementing contextual layering effectively requires a systematic approach:

- Define the overarching objective: Clearly identify the end goal of your interaction with the AI, whether it be generating a detailed report, coding a specific function, or synthesizing research material.

- Break down the problem into sub-contexts: Segment the information into logical components or questions that progressively narrow the focus.

- Compose recursive prompts: Use the AI’s output from one prompt as input context for the next, enabling refinement and iterative enhancement of responses.

- Maintain context continuity: Ensure that each prompt explicitly references relevant details from previous layers to prevent loss of critical information.

For example, when requesting a technical explanation, you might first prompt the AI to outline key concepts, then follow up with more detailed questions about each concept, thereby constructing a layered understanding that culminates in a comprehensive response.

1.3 Practical Examples with ChatGPT and Claude

To illustrate, consider the task of generating a market analysis report:

- Initial prompt (Layer 1): “Provide an overview of the current state of the electric vehicle market globally.”

- Follow-up prompt (Layer 2): “Based on the overview, list the top five companies by market share and summarize their competitive advantages.”

- Final prompt (Layer 3): “Analyze the potential impact of emerging battery technologies on these companies’ market positions.”
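The three layers above can be sketched in code. This is a minimal illustration, not a definitive implementation: `run_layers` and `fake_model` are hypothetical names, and `fake_model` stands in for whatever real API call you would use. The point is that each request carries the earlier requests and answers forward as explicit context.

```python
from typing import Callable, List


def run_layers(layers: List[str], call_model: Callable[[str], str]) -> List[str]:
    """Send each layer in order, carrying earlier requests and answers forward."""
    transcript: List[str] = []
    outputs: List[str] = []
    for number, layer in enumerate(layers, start=1):
        # Prepend everything so far so the model sees the previous layers explicitly.
        context = "\n\n".join(transcript)
        prompt = f"{context}\n\nLayer {number} request: {layer}".strip()
        answer = call_model(prompt)
        transcript.append(
            f"Layer {number} request: {layer}\nLayer {number} answer: {answer}"
        )
        outputs.append(answer)
    return outputs


def fake_model(prompt: str) -> str:
    """Hypothetical stand-in for a real API call; reports how much context it saw."""
    return f"(answer based on {prompt.count('request:')} request(s) in context)"


layers = [
    "Provide an overview of the current state of the electric vehicle market globally.",
    "Based on the overview, list the top five companies by market share "
    "and summarize their competitive advantages.",
    "Analyze the potential impact of emerging battery technologies "
    "on these companies' market positions.",
]
results = run_layers(layers, fake_model)
```

Swapping `fake_model` for a real client call leaves the layering logic unchanged, which makes the context-carrying behavior easy to test offline.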

Both models are transformer-based and keep earlier turns in their context window, so each can follow a layered conversation. In practice, either model may lose track of details in long threads. When a later prompt depends on facts from an earlier layer, restate those facts explicitly rather than assuming one model retains context better than the other.

By leveraging contextual layering, users can steer both models toward generating structured, in-depth outputs rather than general or superficial answers, thus maximizing the value of each interaction.

2. Persona Embedding: Tailoring AI Responses with Character Profiles

2.1 What Is Persona Embedding?

Persona embedding is a prompting technique that involves defining a specific character profile or role for the AI to adopt during interaction. This approach enhances the relevance and engagement level of responses by aligning the AI’s tone, style, expertise, and perspective with the desired persona. Rather than eliciting generic outputs, persona embedding contextualizes responses as if the AI were a domain expert, a particular professional, or even a fictional character.

The benefits of persona embedding extend beyond mere stylistic adjustments; it enables deeper alignment with user expectations. For example, a prompt requesting investment advice can be framed so the AI responds as a seasoned financial analyst, employing jargon, risk assessments, and market insights appropriate to that role. This not only improves user trust but also aids in generating domain-specific knowledge tailored to the user’s needs.

2.2 Designing Effective Personas

Crafting an effective persona requires careful consideration of several factors:

- Trait selection: Identify key personality attributes such as professionalism, empathy, humor, or assertiveness that suit the context.

- Tonal consistency: Decide on the tone—formal, conversational, technical, or casual—that best fits the intended audience and purpose.

- Expertise level: Specify the depth of knowledge, ensuring the AI embodies either a novice, intermediate, or expert perspective.

- Avoiding over-specification: Excessively detailed personas can restrict creativity or lead to unnatural responses. Instead, balance clarity with flexibility to allow the model to generate rich and contextually appropriate answers.

2.3 Implementing Persona Prompts in ChatGPT and Claude

Both ChatGPT and Claude support persona embedding, and the main difference is where the persona goes. In the chat interfaces, a preamble such as “You are an experienced software engineer with 10 years of expertise in AI development,” followed by specific instructions, works for either model. Through the APIs, OpenAI's chat endpoints take the persona as a system-role message at the start of the message list, while Anthropic's Messages API takes it in a separate top-level system parameter. Claude also responds well to direct role declarations combined with explicit guidelines on what the persona should and should not do.
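As a rough sketch of how a persona is usually passed through an API, the snippet below builds two request bodies without sending them. The shapes follow the commonly documented conventions (a system-role message for OpenAI-style chat requests, a top-level `system` field for Anthropic-style requests), but the function names and the `example-model` identifier are placeholders, so confirm field names against the current provider documentation.

```python
from typing import Any, Dict

PERSONA = (
    "You are an experienced software engineer with 10 years of expertise "
    "in AI development."
)


def openai_style_request(persona: str, user_prompt: str, model: str) -> Dict[str, Any]:
    """Persona goes in a system-role message at the start of the message list."""
    return {
        "model": model,
        "messages": [
            {"role": "system", "content": persona},
            {"role": "user", "content": user_prompt},
        ],
    }


def anthropic_style_request(
    persona: str, user_prompt: str, model: str, max_tokens: int = 1024
) -> Dict[str, Any]:
    """Persona goes in a top-level system field, separate from the messages."""
    return {
        "model": model,
        "max_tokens": max_tokens,
        "system": persona,
        "messages": [{"role": "user", "content": user_prompt}],
    }


question = "Review this function for thread-safety issues."
# "example-model" is a hypothetical placeholder, not a real model name.
openai_body = openai_style_request(PERSONA, question, "example-model")
anthropic_body = anthropic_style_request(PERSONA, question, "example-model")
```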

Persona embedding can improve engagement, although this guide does not cite measured results. For example, a customer support bot given an empathetic persona is likely to reduce user frustration, while creative writing prompts framed with authorial personas tend to yield more stylistically coherent narratives. Check these effects against your own metrics before relying on them.

Mastering persona embedding is indispensable for users aiming to customize AI behavior and extract nuanced, domain-specific insights from ChatGPT and Claude. This technique dovetails with broader prompt engineering practices that prioritize context and user intent.

3. Instruction Chaining: Directing Complex Workflows

3.1 The Power of Sequential Instructions

Instruction chaining is a prompting technique that involves directing AI models through a series of ordered, stepwise commands to execute complex workflows efficiently. Rather than a single broad instruction, chaining breaks down tasks into discrete, logically connected steps, enhancing the AI’s ability to manage multifaceted processes without losing focus or context.

This method substantially improves output quality by minimizing ambiguity and ensuring that each instruction builds upon the previous one. For example, in coding assistance, instruction chaining can guide the AI to first generate a function skeleton, then add error handling, and finally optimize performance, resulting in cleaner and more reliable code.

3.2 Techniques for Effective Instruction Chaining

Successful implementation of instruction chaining involves several best practices:

- Clear stepwise commands: Formulate each instruction as a distinct, actionable step, avoiding compound or ambiguous directives.

- Conditional instructions: Incorporate “if-then” logic to enable dynamic branching based on AI outputs or user inputs.

- Iterative loops: Use iterative instructions to refine outputs, such as requesting multiple drafts or reviews before finalizing.

- Explicit transitions: Signal when one step concludes and the next begins to maintain coherence.
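The practices above can be combined into a small prompt builder. This is a sketch under stated assumptions: `Step` and `build_chained_prompt` are hypothetical helpers, and the wording of the generated instructions is only one reasonable choice. It numbers each step, supports an optional if-then condition, and asks the model to label its answer by step so transitions stay explicit.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Step:
    instruction: str
    condition: Optional[str] = None  # optional "if" clause for conditional steps


def build_chained_prompt(steps: List[Step]) -> str:
    """Render ordered steps as one prompt with explicit step markers."""
    lines = [
        "Complete the following steps in order. "
        "Finish each step before starting the next."
    ]
    for number, step in enumerate(steps, start=1):
        text = step.instruction
        if step.condition:
            # Lower-case the first letter so the sentence reads naturally.
            text = f"If {step.condition}, then {text[0].lower()}{text[1:]}"
        lines.append(f"Step {number}: {text}")
    lines.append("Label each part of your answer with its step number.")
    return "\n".join(lines)


steps = [
    Step("Generate a function skeleton."),
    Step("Add error handling."),
    Step("Optimize performance.", condition="the function contains a loop"),
]
prompt = build_chained_prompt(steps)
```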

3.3 Examples in Real-World Applications

Instruction chaining shines in diverse domains. For instance, in content creation, a prompt sequence might be:

- “Generate a blog post outline on renewable energy trends in 2026.”

- “Expand each outline point into a detailed paragraph with citations.”

- “Summarize key takeaways in a compelling conclusion.”

In data analysis:

- “Load the dataset on global carbon emissions from 2010 to 2025.”

- “Identify trends and anomalies over the years.”

- “Visualize data with line charts and interpret results.”

By applying instruction chaining, users leverage ChatGPT and Claude’s strengths in managing sequential logic and producing high-fidelity outputs tailored to evolving tasks.


4. Dynamic Prompt Adjustment Using Feedback Loops

4.1 Integrating AI Output Feedback into Prompts

Dynamic prompt adjustment is an advanced technique where the user incorporates feedback from the AI’s own outputs back into subsequent prompts, creating a self-correcting iterative process. This feedback loop allows for continuous refinement, enabling the model to correct errors, clarify ambiguities, or enrich content progressively.

This approach mimics human editing and revision cycles, making it particularly effective for complex or creative tasks where initial outputs may require polishing. The model does not learn between turns. It adapts only because the earlier output and your correction are both present in the new prompt, so explicitly quoting the previous response and naming its mistakes or omissions is what produces higher quality and more targeted results.

4.2 Methods to Implement Feedback Loops

There are two primary methods to implement feedback loops in prompting:

- Manual adjustment: The user reviews AI outputs and crafts follow-up prompts that specify corrections or enhancements. This method offers granular control but can be time-consuming for large datasets or complex workflows.

- Automated adjustment: Leveraging APIs and scripts, users can programmatically feed AI outputs into re-prompting sequences, enabling rapid iteration without manual intervention. This is particularly useful in data pipelines, chatbot systems, or batch content generation.

Key to effective feedback loops is maintaining clarity in instructions and managing context size to prevent loss of relevant information across iterations. Prompt templates and predefined correction patterns can streamline this process.

4.3 Tools and Scripts Supporting Feedback Integration

OpenAI and Anthropic both provide APIs for their models that support dynamic prompting workflows. Users can employ Python, JavaScript, or other programming languages to automate feedback loops, using techniques such as:

- Extracting key phrases or error indicators from AI outputs.

- Appending these as context in subsequent prompts.

- Applying conditional logic to determine when to halt iterations.
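A minimal automated loop built from these three techniques might look like the sketch below. The names `find_issues`, `refine`, and `fake_model` are hypothetical, the "error indicator" is deliberately simple (a list of terms the draft must mention), and `fake_model` replaces a real API call so the loop can run offline.

```python
from typing import Callable, List


def find_issues(draft: str, required_terms: List[str]) -> List[str]:
    """Return required terms missing from the draft (a simple error indicator)."""
    return [term for term in required_terms if term.lower() not in draft.lower()]


def refine(
    task: str,
    required_terms: List[str],
    call_model: Callable[[str], str],
    max_rounds: int = 3,
) -> str:
    """Re-prompt with the previous draft and its issues until none remain."""
    draft = call_model(task)
    for _ in range(max_rounds):
        issues = find_issues(draft, required_terms)
        if not issues:  # halt condition: nothing left to fix
            break
        # Append the earlier output and the detected issues as context.
        prompt = (
            f"{task}\n\nPrevious draft:\n{draft}\n\n"
            f"Revise the draft so that it also covers: {', '.join(issues)}."
        )
        draft = call_model(prompt)
    return draft


def fake_model(prompt: str) -> str:
    """Hypothetical stand-in: mentions pricing only when a revision asks for it."""
    if "also covers: pricing" in prompt:
        return "Summary covering features and pricing."
    return "Summary covering features."


final = refine("Summarize the product page.", ["features", "pricing"], fake_model)
```

The `max_rounds` cap matters in practice: without it, a check the model can never satisfy would loop indefinitely and keep consuming tokens.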

Third-party platforms, including prompt management tools and AI orchestration frameworks, also enable dynamic prompt adjustment by integrating user feedback channels and version control. These solutions enhance scalability and consistency in professional environments where prompt quality is mission-critical.

5. Leveraging Multimodal Inputs for Richer Interactions

5.1 Multimodal Prompting in 2026 AI Models

By 2026, models like ChatGPT and Claude accept multimodal inputs, expanding beyond text to incorporate images and, depending on the model and product, documents, audio, or video within prompts. Support differs between the chat apps and the APIs, so check which input types your specific model accepts. Combining data types in one prompt allows richer interactions and more comprehensive, contextually aware outputs.

Multimodal prompting capitalizes on the synergy between different sensory inputs, enabling applications such as image captioning, audio transcription with contextual understanding, and cross-modal content generation. This evolution represents a significant leap from traditional text-only prompting paradigms, opening new frontiers for creativity, accessibility, and professional use cases.

5.2 Crafting Prompts that Utilize Multimodal Data

To effectively utilize multimodal inputs, prompt designers must consider several best practices:

- Clear specification: Explicitly describe the role of each input type and how the AI should integrate them. For example, “Analyze the attached image of product packaging and generate marketing copy based on its visual elements.”

- Contextual linkage: Ensure that text prompts reference multimodal inputs cohesively to avoid fragmented responses.

- Input quality: Provide high-resolution images or clear audio files to maximize AI interpretability.

- Format compatibility: Adhere to model-specific input formats and size limitations to prevent processing errors.
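To make the format and size checks concrete, here is a sketch that packages an image with a text instruction. The content-block layout is modeled on the base64 image block used by Anthropic's Messages API; the size limit, the allowed media types, and the `image_message` helper are assumptions for illustration, so confirm the real limits in your provider's documentation.

```python
import base64
from typing import Any, Dict

# Assumed values for illustration only; real limits vary by provider and model.
MAX_IMAGE_BYTES = 5 * 1024 * 1024
ALLOWED_MEDIA_TYPES = {"image/png", "image/jpeg", "image/gif", "image/webp"}


def image_message(image_bytes: bytes, media_type: str, instruction: str) -> Dict[str, Any]:
    """Build one user message that pairs an image with a text instruction."""
    if media_type not in ALLOWED_MEDIA_TYPES:
        raise ValueError(f"unsupported media type: {media_type}")
    if len(image_bytes) > MAX_IMAGE_BYTES:
        raise ValueError("image exceeds the assumed size limit")
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "role": "user",
        "content": [
            {
                "type": "image",
                "source": {"type": "base64", "media_type": media_type, "data": encoded},
            },
            # The text block states what role the image plays in the task.
            {"type": "text", "text": instruction},
        ],
    }


placeholder_bytes = b"\x89PNG placeholder bytes, not a real image"
message = image_message(
    placeholder_bytes,
    "image/png",
    "Analyze the attached image of product packaging and generate "
    "marketing copy based on its visual elements.",
)
```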

5.3 Case Studies: ChatGPT and Claude’s Multimodal Capabilities

In practice, ChatGPT’s multimodal functionality enables applications such as:

- Generating detailed descriptions of artworks or photographs uploaded by users.

- Providing step-by-step instructions based on schematic diagrams.

- Interpreting audio clips for transcription and sentiment analysis.

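Claude likewise accepts images alongside text in a single prompt, which suits tasks that pair a visual with written background, such as a chart or scan combined with accompanying notes. This guide offers no benchmark for how either model performs in sensitive domains such as medical imaging, so have a qualified reviewer validate outputs there.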

These capabilities empower professionals in creative industries, healthcare, education, and customer service to leverage AI for more insightful and contextually rich outputs, pushing the boundaries of what conversational agents can achieve.

6. Prompt Optimization Through Temperature and Token Control

6.1 Understanding Temperature and Token Parameters

Temperature and token limits are model parameters that influence the style, variability, and length of AI-generated responses. Temperature controls randomness in output generation: values close to 0 yield nearly deterministic responses, while higher values increase variability. The allowed range depends on the provider: OpenAI's API accepts 0 to 2, and Anthropic's API accepts 0 to 1. Token limits cap the length of the generated output, measured in tokens, which are units of text that are often whole words or pieces of words.

Mastering these parameters is essential to optimize AI behavior for specific tasks, balancing between creativity and accuracy, brevity and detail.
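The effect of temperature is easier to see with numbers. The sketch below uses the standard textbook formulation, in which token scores (logits) are divided by the temperature before a softmax turns them into probabilities. How each provider implements sampling internally is not covered by this guide, and the logits here are made up for illustration.

```python
import math
from typing import List


def softmax_with_temperature(logits: List[float], temperature: float) -> List[float]:
    """Convert logits to probabilities after scaling them by 1 / temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [value / temperature for value in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(value - peak) for value in scaled]
    total = sum(exps)
    return [value / total for value in exps]


logits = [2.0, 1.0, 0.0]  # made-up scores for three candidate tokens
cool = softmax_with_temperature(logits, 0.3)
warm = softmax_with_temperature(logits, 1.0)
```

With these inputs, the top token's share rises from about 0.67 at temperature 1.0 to about 0.96 at temperature 0.3, which is why low temperatures produce more repeatable output.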

6.2 Strategies for Parameter Tuning

Effective tuning strategies include:

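- Balancing creativity and precision: For technical or legal content, set temperature low (0.2–0.4) to keep output consistent and reduce off-topic variation. A low temperature does not by itself prevent factual errors, so still verify the content. For brainstorming or creative writing, higher temperatures (0.7–0.9) encourage diverse ideas.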

- Managing response length: Adjust token limits based on task complexity. Short summaries require fewer tokens; in-depth reports need higher limits.

- Iterative experimentation: Test different combinations on sample prompts to identify optimal configurations.

6.3 Practical Guidelines for ChatGPT and Claude Users

Through the OpenAI API and its developer playground, temperature is a request parameter; the consumer ChatGPT app does not expose a temperature slider. Claude's API supports temperature in the same way, with a narrower range of 0 to 1. Some reasoning-focused models restrict or ignore the setting, so check the documentation for the model you use. Token management is crucial when chaining prompts or feeding back outputs to avoid truncation or context loss.

Consider the following example for generating an executive summary:

| Parameter | Setting | Effect |
|---|---|---|
| Temperature | 0.3 | Concise, factual summary with minimal speculation |
| Max Tokens | 200 | Response limited to key points without excess detail |

Adjusting these parameters in parallel with advanced prompting techniques enhances the overall quality and relevance of AI outputs.
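The settings in the table could be applied to a request roughly as follows. This is a sketch: `generation_params` is a hypothetical helper, `example-model` is a placeholder, and the name of the output-limit field differs by provider and endpoint, so `max_tokens` here is an assumption to check against the API you call.

```python
from typing import Any, Dict


def generation_params(
    temperature: float, max_tokens: int, max_temperature: float = 1.0
) -> Dict[str, Any]:
    """Validate the two settings and return them as request fields."""
    if not 0.0 <= temperature <= max_temperature:
        raise ValueError(f"temperature must be between 0 and {max_temperature}")
    if max_tokens < 1:
        raise ValueError("max_tokens must be at least 1")
    return {"temperature": temperature, "max_tokens": max_tokens}


summary_params = generation_params(temperature=0.3, max_tokens=200)
request = {
    "model": "example-model",  # hypothetical placeholder, not a real model name
    "messages": [
        {"role": "user", "content": "Write an executive summary of the report below."}
    ],
    **summary_params,
}
```

Validating the range before sending catches a common mistake: reusing a temperature that one provider accepts with another provider whose range is narrower.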

7. Ethical and Responsible Prompting Practices

7.1 Importance of Ethical Prompt Design

As AI capabilities grow, so too does the responsibility of users and developers to ensure ethical and responsible prompting practices. Poorly designed prompts can inadvertently propagate biases, misinformation, or harmful content, posing risks to individuals and society. Ethical prompt design prioritizes fairness, inclusivity, and the prevention of misuse, aligning technological innovation with societal values.

7.2 Techniques to Ensure Responsible AI Use

To promote responsible AI interactions, practitioners should:

- Implement bias mitigation: Craft prompts that avoid stereotypes or discriminatory language and include neutrality where appropriate.

- Safeguard privacy: Refrain from inputting sensitive personal data unless necessary and ensure compliance with data protection regulations.

- Include content filters: Use model settings or external tools to detect and prevent generation of harmful or inappropriate outputs.

- Transparency and disclosure: Clearly communicate AI involvement in outputs to users or stakeholders to maintain trust.
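As one small example of the privacy safeguard above, the sketch below masks email addresses and phone-like numbers before a prompt leaves your system. The patterns are simplified assumptions that will miss some formats and are no substitute for a vetted data-protection tool; `redact` is a hypothetical helper name.

```python
import re

# Simplified patterns for illustration; real-world formats vary widely.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text: str) -> str:
    """Replace email addresses and phone-like numbers with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)


sample = "Contact Jane at jane.doe@example.com or +1 (555) 010-2030 about order 12345."
clean = redact(sample)
```

Short digit runs such as the order number are left alone because the phone pattern requires at least nine characters that begin and end with a digit.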

7.3 Future-Proofing Prompts for Compliance

Looking ahead, the AI ecosystem is poised to face increasingly stringent regulatory frameworks governing data use, content moderation, and user safety. Prompt engineers must anticipate these changes by designing adaptable and auditable prompt structures. This includes maintaining detailed prompt version histories, incorporating ethical guardrails within prompts, and collaborating with legal and compliance teams to align with emerging standards.
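A prompt version history can be as simple as an append-only log with a content hash per entry. The sketch below is one possible shape, with hypothetical class and field names; a real deployment would likely store the records in version control or a database rather than in memory.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import List


@dataclass(frozen=True)
class PromptVersion:
    name: str
    text: str
    note: str        # why the prompt changed, for later audits
    created_at: str  # UTC timestamp in ISO 8601 format
    digest: str      # SHA-256 of the prompt text


class PromptHistory:
    """Append-only record of prompt versions."""

    def __init__(self) -> None:
        self._versions: List[PromptVersion] = []

    def record(self, name: str, text: str, note: str) -> PromptVersion:
        digest = hashlib.sha256(text.encode("utf-8")).hexdigest()
        created_at = datetime.now(timezone.utc).isoformat()
        version = PromptVersion(name, text, note, created_at, digest)
        self._versions.append(version)
        return version

    def versions(self, name: str) -> List[PromptVersion]:
        return [v for v in self._versions if v.name == name]


history = PromptHistory()
first = history.record("support-reply", "Answer politely.", "initial version")
second = history.record(
    "support-reply",
    "Answer politely. Do not request personal data.",
    "added privacy guardrail",
)
```

The digest lets an auditor confirm which exact prompt text produced a given output, even if the stored text is later edited elsewhere.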

Responsible prompting not only safeguards users but also enhances the credibility and longevity of AI applications in professional and public domains.

Conclusion

This guide has explored seven advanced prompting techniques that empower users to unlock the full potential of ChatGPT and Claude in 2026. From the layered complexity of contextual prompts and the personalization of persona embedding to the precision of instruction chaining and the adaptability of dynamic feedback loops, each technique contributes uniquely to elevating AI interactions.

Incorporating multimodal inputs, optimizing key parameters such as temperature and token limits, and upholding ethical standards round out a comprehensive framework for responsible and effective prompt engineering. As AI models continue to evolve, practitioners are encouraged to experiment and adapt these techniques, tailoring them to their specific use cases and advancing the state of the art in human-AI collaboration.

The future of AI prompting lies in the synthesis of technical expertise, creative ingenuity, and ethical stewardship, ensuring that these powerful tools serve humanity’s best interests in an increasingly interconnected world.

Understanding the nuances of effective prompting is becoming an essential skill for anyone who works with artificial intelligence systems on a regular basis. The techniques outlined in this guide represent the current state of the art in prompt engineering, but the field continues to evolve rapidly as AI models become more capable and researchers discover new approaches to eliciting optimal responses. Practitioners who invest time in mastering these foundational techniques will find themselves well-equipped to adapt to future developments in the field.

One critical aspect of advanced prompting that deserves additional emphasis is the importance of iterative refinement. The most effective prompt engineers rarely achieve optimal results on their first attempt. Instead, they treat prompting as a systematic process of hypothesis testing, where each interaction with the AI model provides valuable feedback that can be used to refine and improve subsequent prompts. This iterative approach is particularly important when working with complex, multi-step tasks that require precise control over the AI’s reasoning process and output format.

The practical applications of these advanced prompting techniques extend far beyond simple question-and-answer interactions. Professional prompt engineers are using these methods to build sophisticated AI-powered workflows that automate complex business processes, generate detailed analytical reports, create comprehensive educational materials, and develop production-ready code. As AI models continue to improve, the gap between skilled and unskilled prompt engineers will only widen, making proficiency in these techniques an increasingly valuable professional asset.


Additional Resources

- Comprehensive tutorials on prompt engineering methodologies for ChatGPT and Claude.

- Curated libraries of effective prompt templates and persona profiles.

- Official API documentation and developer guides for integrating advanced prompting techniques.

Frequently Asked Questions

What's the single highest-ROI prompting technique?

Chain-of-verification — explicitly asking the model to generate an answer, then critique and revise its own answer before finalizing. It adds one extra round trip but cuts hallucinations and factual errors by 30–50% on reasoning-heavy tasks, according to benchmark studies across GPT-5 and Claude Opus 4.7.
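The generate, critique, and revise loop can be sketched as below. This version follows a common formulation that turns the critique into explicit verification questions answered without the draft in view, so it uses more model calls than a single critique pass. `chain_of_verification` and the scripted `fake_model` are hypothetical and stand in for real API calls.

```python
from typing import Callable, List


def chain_of_verification(question: str, call_model: Callable[[str], str]) -> str:
    """Draft an answer, verify its facts separately, then revise."""
    draft = call_model(f"Answer the question.\nQuestion: {question}")
    plan = call_model(
        "List verification questions, one per line, that would check "
        f"the facts in this draft.\nDraft: {draft}"
    )
    checks = [line.strip() for line in plan.splitlines() if line.strip()]
    # Each check is asked on its own, without the draft, so the model
    # is not nudged into repeating the draft's mistakes.
    findings = [f"Q: {check}\nA: {call_model(check)}" for check in checks]
    return call_model(
        "Revise the draft so it agrees with the verification answers.\n"
        f"Question: {question}\nDraft: {draft}\nVerification:\n" + "\n".join(findings)
    )


calls: List[str] = []


def fake_model(prompt: str) -> str:
    """Hypothetical scripted stand-in whose first draft contains an error."""
    calls.append(prompt)
    if prompt.startswith("Answer the question"):
        return "The Eiffel Tower opened in 1890."
    if prompt.startswith("List verification questions"):
        return "When did the Eiffel Tower open?"
    if prompt.startswith("Revise the draft"):
        return "The Eiffel Tower opened in 1889."
    return "1889"


final = chain_of_verification("When did the Eiffel Tower open?", fake_model)
```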

Does role prompting actually work?

Yes, but subtly. Assigning a role like 'You are a senior SRE with 15 years of production experience' shifts vocabulary, risk tolerance, and reasoning patterns in a measurable way. It won't turn a bad model into a good one, but it reliably improves style, tone, and domain accuracy.

When should I use few-shot examples?

When you need specific formatting, tone matching, or edge-case handling. Three well-chosen examples beat a paragraph of instructions for teaching a model what 'good' looks like. Skip few-shot for simple, generic tasks — you're just adding tokens with no benefit.
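A few-shot prompt is mostly string assembly. The sketch below shows one possible layout using three examples for a date-formatting task; `few_shot_prompt` and the `Input:`/`Output:` labels are illustrative choices, not a required format.

```python
from typing import List, Tuple


def few_shot_prompt(
    instruction: str, examples: List[Tuple[str, str]], new_input: str
) -> str:
    """Place worked examples before the new input so the model copies the format."""
    parts = [instruction]
    for source, target in examples:
        parts.append(f"Input: {source}\nOutput: {target}")
    # End with an open "Output:" so the model completes the pattern.
    parts.append(f"Input: {new_input}\nOutput:")
    return "\n\n".join(parts)


examples = [
    ("March 5, 2024", "2024-03-05"),
    ("5 Jan 2023", "2023-01-05"),
    ("12/31/2022 (US format)", "2022-12-31"),
]
prompt = few_shot_prompt(
    "Convert each date to ISO 8601 format.", examples, "July 4, 2025"
)
```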

What is ReAct prompting?

ReAct (Reason + Act) interleaves reasoning steps with tool calls. Instead of 'answer this question,' the prompt structures responses as: Thought → Action → Observation → Thought → Action, etc. It's the foundation of most modern agent frameworks and dramatically improves tool-use reliability.
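The Thought, Action, Observation cycle can be sketched as a short loop. Everything here is an assumption made for illustration: the `Action: tool[argument]` text format, the `react` helper, the `multiply` tool, and the scripted `fake_model` that replaces a real model call. Agent frameworks typically use structured tool-calling instead of parsing text.

```python
import re
from typing import Callable, Dict

ACTION = re.compile(r"Action:\s*(\w+)\[(.*?)\]")
FINAL = re.compile(r"Final Answer:\s*(.*)")


def react(
    question: str,
    call_model: Callable[[str], str],
    tools: Dict[str, Callable[[str], str]],
    max_steps: int = 5,
) -> str:
    """Alternate model reasoning with tool calls until a final answer appears."""
    transcript = f"Question: {question}"
    for _ in range(max_steps):
        reply = call_model(transcript)
        transcript += "\n" + reply
        final = FINAL.search(reply)
        if final:
            return final.group(1).strip()
        action = ACTION.search(reply)
        if action:
            name, argument = action.groups()
            tool = tools.get(name)
            observation = tool(argument) if tool else f"unknown tool {name}"
            # Feed the tool result back so the next thought can use it.
            transcript += f"\nObservation: {observation}"
    return "no answer within step limit"


def multiply(argument: str) -> str:
    left, right = (int(part) for part in argument.split(","))
    return str(left * right)


def fake_model(transcript: str) -> str:
    """Hypothetical scripted stand-in for a real model."""
    if "Observation:" in transcript:
        return "Thought: I have the result.\nFinal Answer: 42"
    return "Thought: I should multiply.\nAction: multiply[6,7]"


answer = react("What is 6 times 7?", fake_model, {"multiply": multiply})
```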

Do these techniques work on Claude, GPT-5, and Gemini?

All seven techniques are model-agnostic and work across ChatGPT, Claude, and Gemini. The specifics differ — Claude responds better to XML-tagged structure, GPT-5 handles markdown natively, Gemini prefers shorter system prompts — but the underlying patterns transfer directly.

Are these techniques still relevant as models get smarter?

Yes. Smarter models raise the ceiling but also widen the variance between casual and crafted prompts. The same techniques that made GPT-4 useful still compound on GPT-5 and Opus 4.7 — you just get better output from a better starting point.
