Complete Guide to AI Coding Assistants in 2026: Codex vs Claude Code vs Gemini

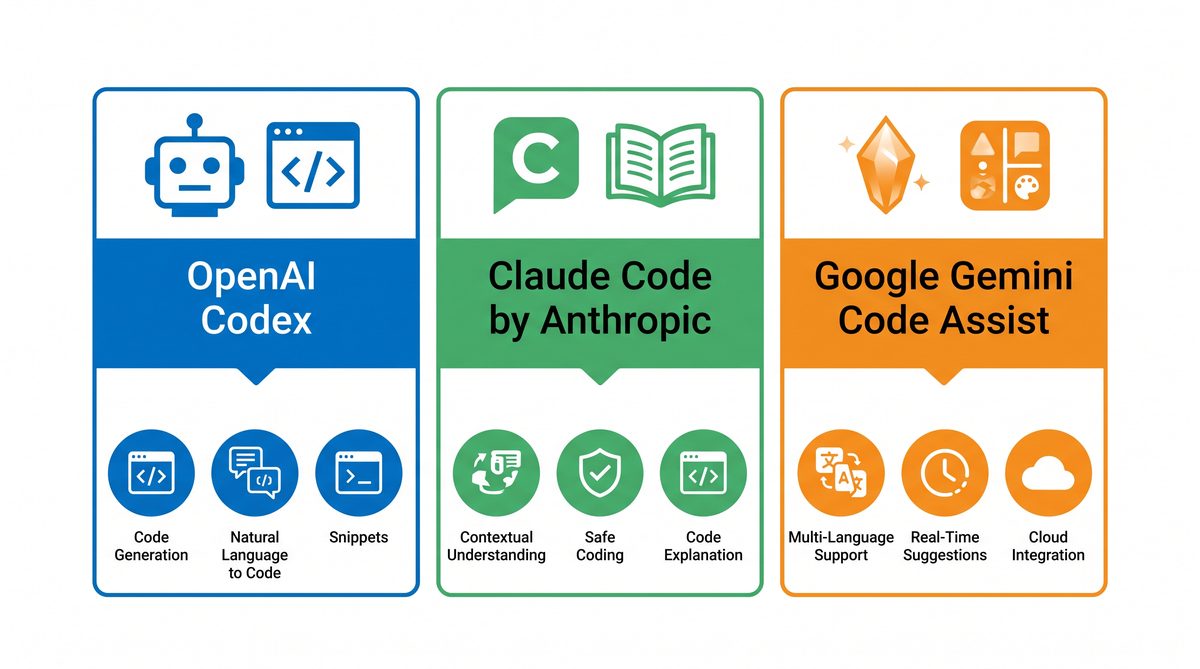

AI-powered coding assistants have become everyday tools for developers worldwide. In 2026, the landscape is dominated by three major players: OpenAI’s Codex, Anthropic’s Claude Code, and Google’s Gemini Code Assist. Each platform has its own capabilities, architecture, and pricing model, serving needs that range from individual programmers to large-scale enterprise deployments.


This guide compares the three assistants across core features, technical architecture, context capacity, pricing, and real-world use cases. It also examines enterprise adoption trends reported by Deloitte and a16z to show how organizations are using these tools to accelerate software delivery.

OpenAI Codex: Desktop-Centric AI Coding with Subagent Architecture

Overview and Evolution of OpenAI Codex

OpenAI Codex, initially launched as a cloud-based API integrated with GitHub Copilot, has evolved significantly by 2026. Its latest incarnation emphasizes a desktop-native application designed for seamless integration with local development environments. This shift addresses common concerns around latency, data privacy, and offline capabilities, making Codex more appealing to enterprises with stringent security requirements.

The Codex desktop app supports popular integrated development environments (IDEs) such as Visual Studio Code and the JetBrains suite, as well as terminal-based editors such as Vim and Emacs. It uses a hybrid architecture in which the core language models run locally, supplemented by cloud-based subagents that handle specialized tasks such as security audits, automated refactoring, and contextual code reviews.

Subagent Architecture: Modular Intelligence for Specialized Tasks

One of Codex’s standout innovations is its subagent framework. Subagents are lightweight AI modules trained on specific coding domains or workflows. For example:

- Security Subagent: Detects vulnerabilities and suggests secure coding patterns in real-time.

- Testing Subagent: Generates unit and integration tests tailored to the project’s architecture.

- Legacy Refactoring Subagent: Assists in transforming legacy codebases into modern frameworks.

This modular approach enables Codex to provide highly contextual assistance without overwhelming the primary language model, optimizing both accuracy and computational efficiency.
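The routing idea behind this modular design can be illustrated with a small sketch. It is not Codex's actual API: the `Subagent` class, the keyword-based `route` function, and the subagent names are all hypothetical, and a real system would likely use a classifier or the model itself rather than keyword matching.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Subagent:
    """Hypothetical specialized module; not part of any real Codex API."""
    name: str
    keywords: tuple[str, ...]
    handle: Callable[[str], str]


# Illustrative subagents mirroring the three examples listed above.
SUBAGENTS = [
    Subagent("security", ("vulnerability", "injection", "secret"),
             lambda task: f"[security] scanning: {task}"),
    Subagent("testing", ("test", "coverage"),
             lambda task: f"[testing] generating tests for: {task}"),
    Subagent("refactoring", ("legacy", "migrate", "refactor"),
             lambda task: f"[refactoring] planning migration for: {task}"),
]


def route(task: str) -> str:
    """Send the task to the first subagent whose keyword appears in it.

    Falls back to the primary model when no subagent matches.
    """
    lowered = task.lower()
    for agent in SUBAGENTS:
        if any(keyword in lowered for keyword in agent.keywords):
            return agent.handle(task)
    return f"[primary] handling: {task}"
```

For example, `route("Check for SQL injection in login")` is handled by the security subagent, while `route("Rename a variable")` falls through to the primary model.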

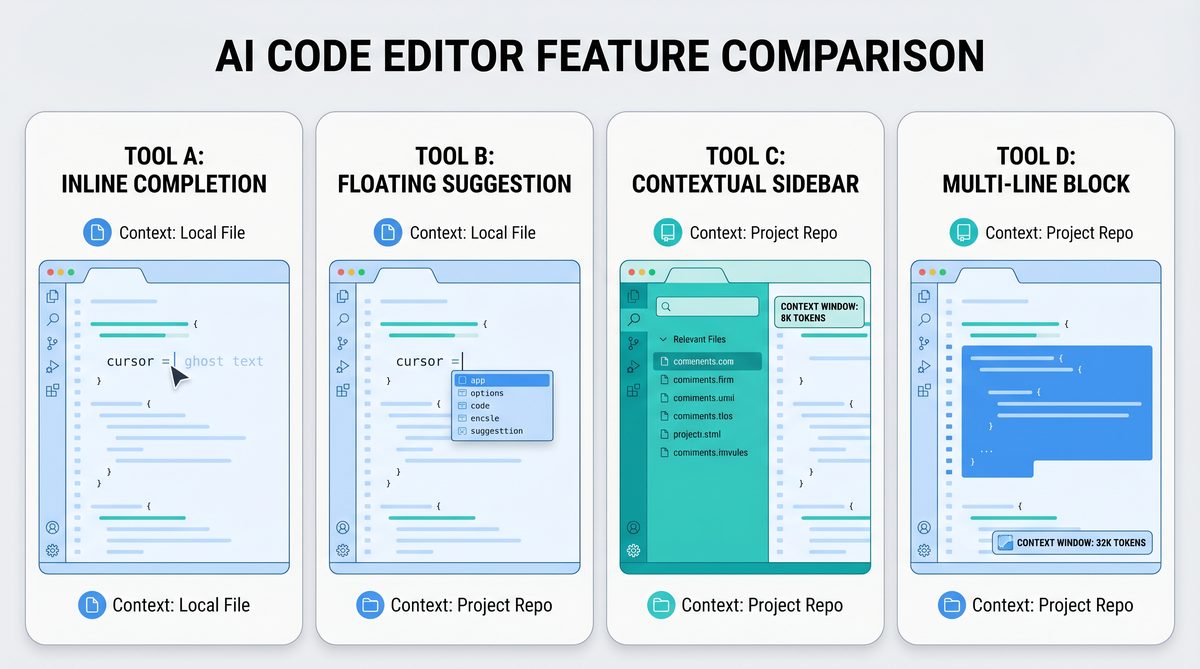

Context Window and Language Model Size

Codex 2026 operates with a context window of approximately 128K tokens in the desktop environment, enough to ingest a small-to-medium codebase or extensive documentation in a single session. This is a significant improvement over earlier versions and gives the model a better view of multi-file projects and cross-module dependencies.
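To get a feel for what a 128K-token window can hold, the sketch below estimates the token count of a source tree. It assumes roughly four characters per token, which is only a rule of thumb; real tokenizers vary with language and content. The function names and the `reserve_for_output` parameter are illustrative, not part of any vendor tooling.

```python
from pathlib import Path

CHARS_PER_TOKEN = 4              # rough heuristic (assumption); real tokenizers differ
CONTEXT_WINDOW_TOKENS = 128_000  # the figure quoted in this section


def estimate_tokens(text: str) -> int:
    """Approximate token count from character length, rounding up."""
    return (len(text) + CHARS_PER_TOKEN - 1) // CHARS_PER_TOKEN


def estimate_repo_tokens(root: str, suffixes: tuple[str, ...] = (".py",)) -> int:
    """Sum estimated tokens over all files under root with the given suffixes."""
    total = 0
    for path in Path(root).rglob("*"):
        if path.is_file() and path.suffix in suffixes:
            total += estimate_tokens(path.read_text(encoding="utf-8", errors="ignore"))
    return total


def fits_in_context(token_count: int, reserve_for_output: int = 8_000) -> bool:
    """True if the input still leaves reserve_for_output tokens for the reply."""
    return token_count + reserve_for_output <= CONTEXT_WINDOW_TOKENS
```

Under this heuristic, 128K tokens corresponds to roughly 512,000 characters of source text, so `fits_in_context(estimate_repo_tokens("."))` gives a quick first check before loading a whole project into one session.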

Pricing and Licensing

OpenAI offers Codex under a tiered subscription model:

| Plan | Target Users | Features | Price (Monthly) |
|---|---|---|---|
| Individual | Solo developers, freelancers | Desktop app, 128K token context, basic subagents | $29 |
| Team | Small dev teams, startups | Multi-user support, advanced subagents, priority support | $99 per user |
| Enterprise | Large organizations | On-premise deployment, custom subagents, compliance certifications | Custom pricing |

Use Cases and Developer Feedback

Codex has found widespread adoption in scenarios requiring deep codebase understanding and high trust levels, such as fintech, healthcare, and aerospace software development. Developers praise its desktop integration and the subagent system for significantly reducing context switching. However, some users note the steep learning curve associated with configuring and optimizing subagents for niche workflows.

When implementing these advanced AI workflows, understanding the underlying model architecture is crucial. Our comprehensive analysis of Claude Code vs OpenAI Codex: The Complete 2026 Comparison Guide for AI-Powered Development explores how parameter scaling affects reasoning capabilities in modern LLMs.

Anthropic Claude Code: Pushing Boundaries with Massive Context Windows and Claude 4.6 Series

Overview of Claude Code’s Technological Edge

Anthropic’s Claude Code targets the large-context end of the AI coding assistant market. The latest model iteration, the Claude 4.6 series, gives the platform a context window ranging from 200,000 to 1 million tokens. This allows developers and organizations to feed entire repositories, extensive documentation, and even complex project histories into the model at once.

Claude Code is accessible via a cloud-hosted interface with integration plugins for major IDEs and cloud development environments like Gitpod and AWS Cloud9. Unlike Codex’s desktop focus, Claude Code emphasizes cloud scalability and collaborative coding sessions.

Claude 4.6 Series: Architectural Innovations

The Claude 4.6 series leverages advanced sparsity techniques and memory-augmented transformers to handle mega-scale contexts efficiently. Key features include:

- Dynamic Context Compression: Automatically summarizes less relevant code sections to maintain focus on critical logic.

- Multi-Session Awareness: Tracks changes and user interactions across multiple sessions, enabling persistent project understanding.

- Ethical and Safety Guardrails: Incorporates Anthropic’s constitutional AI principles to minimize biased or unsafe code suggestions.
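The general idea behind context compression can be sketched in a few lines. This is a generic illustration, not a description of how Claude implements it: the `Chunk` type, the keyword-overlap `relevance` score, and the first-line `summarize` stand-in are all assumptions chosen to keep the example small.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    path: str
    text: str


def relevance(chunk: Chunk, query: str) -> int:
    """Count how many distinct query words occur in the chunk (toy scoring)."""
    words = set(query.lower().split())
    body = chunk.text.lower()
    return sum(1 for word in words if word in body)


def summarize(chunk: Chunk) -> str:
    """Stand-in summary: keep only the first line, e.g. a function signature."""
    stripped = chunk.text.strip()
    first_line = stripped.splitlines()[0] if stripped else ""
    return f"# {chunk.path}: {first_line} ..."


def compress_context(chunks: list[Chunk], query: str, budget_chars: int) -> list[str]:
    """Keep chunks verbatim, most relevant first, while they fit the budget.

    Any chunk that does not fit is replaced by a one-line summary.
    Summaries are not counted against the budget in this simplified version.
    """
    ranked = sorted(chunks, key=lambda c: relevance(c, query), reverse=True)
    output, used = [], 0
    for chunk in ranked:
        if used + len(chunk.text) <= budget_chars:
            output.append(chunk.text)
            used += len(chunk.text)
        else:
            output.append(summarize(chunk))
    return output
```

A production system would use a model-generated summary and a token-based budget, but the trade-off is the same: spend the window on the code that matters for the current request and keep only a pointer to the rest.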

Pricing Structure and Accessibility

Claude Code is positioned as a premium service with flexible pricing based on context window size and user concurrency:

| Plan | Context Window | Concurrent Users | Price (Monthly) |
|---|---|---|---|
| Basic | 200K tokens | 1 | $59 |
| Pro | 500K tokens | Up to 5 | $249 |
| Enterprise | 1M tokens | Unlimited | Custom pricing |

Use Cases and Developer Reception

Claude Code excels in large codebase management, multi-repository projects, and enterprise-grade software systems. Its ability to process vast contexts enables developers to perform complex refactorings, cross-repository dependency analysis, and even generate detailed architectural documentation automatically.

Some developers highlight Claude Code’s collaborative features, which support real-time pair programming and AI-assisted code review sessions. However, the reliance on cloud connectivity and the subscription costs may limit adoption among independent developers and on projects with strict data residency requirements.

Comparative Insights and Advanced Workflows

For teams working on extensive legacy systems or multi-module applications, Claude Code’s massive context window offers unparalleled situational awareness. Leveraging these capabilities effectively requires understanding how to chunk and query large datasets efficiently. Our detailed examination of From Prompts to AI Skills: How to Build Reusable Prompt Workflows for ChatGPT, Claude, and Codex provides best practices for optimizing prompt engineering in extended context AI assistants.
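As a minimal sketch of the chunk-and-query step mentioned above, the helper below splits a file into overlapping line windows and finds the windows that mention a term. The function names and the fixed line-based window are illustrative assumptions; real pipelines often split on function or class boundaries instead.

```python
def chunk_lines(lines: list[str], max_lines: int, overlap: int = 0) -> list[list[str]]:
    """Split lines into windows of at most max_lines, sharing `overlap` lines
    between consecutive windows so context is not cut at a hard boundary."""
    if max_lines <= 0:
        raise ValueError("max_lines must be positive")
    if not 0 <= overlap < max_lines:
        raise ValueError("overlap must be in [0, max_lines)")
    step = max_lines - overlap
    chunks = []
    for start in range(0, len(lines), step):
        chunks.append(lines[start:start + max_lines])
        if start + max_lines >= len(lines):
            break  # the last window already reaches the end of the input
    return chunks


def find_chunks(chunks: list[list[str]], term: str) -> list[int]:
    """Return the indices of chunks in which any line contains the term."""
    return [i for i, chunk in enumerate(chunks)
            if any(term in line for line in chunk)]
```

With ten lines, `max_lines=4`, and `overlap=1`, this yields three windows covering lines 0 to 3, 3 to 6, and 6 to 9. Only the matching windows then need to be sent to the assistant, which keeps prompts small even when the full window is available.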

Google Gemini Code Assist: Integrating AI Coding into the Google Ecosystem

Introduction to Gemini Code Assist

Google’s Gemini Code Assist represents the company’s foray into AI-assisted coding, tightly integrated within the Google Cloud Platform and the broader Google Workspace ecosystem. Gemini is distinguished by its multimodal capabilities, combining code generation with natural language understanding, voice commands, and even diagrammatic input interpretation.

Gemini Code Assist is available as a cloud-based tool with seamless plugins for Google Cloud Shell, Google Colab, and popular IDEs. This integration enables developers to leverage Google’s extensive AI infrastructure, including TPU acceleration and proprietary data indexing systems.

Core Features and Innovations

- Multimodal Input: Developers can interact with the assistant via text, voice, or sketches, allowing for more intuitive coding workflows.

- Project Context Awareness: Gemini ingests project metadata, issue tracker data, and CI/CD pipeline logs to provide contextualized code suggestions.

- Auto-Optimization: Uses Google’s AI to recommend performance improvements tailored for cloud deployment.

- Cross-Language Support: Supports an extensive array of programming languages, including emerging ones popular in quantum computing and AI research.

Pricing and Licensing Model

| Plan | Included Features | Price (Monthly) |
|---|---|---|
| Free Tier | Basic code generation, 50K token context, limited multimodal inputs | $0 |
| Developer | Extended context (200K tokens), voice and sketch inputs, priority support | $49 |
| Enterprise | Custom integrations, on-premise options, enhanced security features | Custom pricing |

Use Cases and Market Positioning

Gemini Code Assist is particularly well-suited for cloud-native development teams leveraging Google Cloud products. Its multimodal inputs and project metadata integration enable developers to generate code that is optimized for deployment pipelines and cloud resource management.

Developers appreciate the assistant’s ability to handle diverse programming languages and its integration with Google’s robust AI tools such as Vertex AI. However, some critiques focus on the learning curve involved with multimodal inputs and the occasional latency issues in complex voice-command scenarios.

Enterprise organizations adopting Google Cloud have shown strong interest in Gemini as a way to unify AI-assisted coding with existing Google Workspace tools, streamlining developer productivity within a single ecosystem.

Feature & Capability Comparison: Codex vs Claude Code vs Gemini

| Feature | OpenAI Codex | Anthropic Claude Code | Google Gemini Code Assist |
|---|---|---|---|
| Deployment | Desktop app + cloud subagents | Cloud-based, multi-session | Cloud-based, Google ecosystem |
| Context Window | 128K tokens | 200K – 1M tokens | 50K – 200K tokens |
| Model Architecture | Hybrid local/cloud with subagents | Sparse transformers, memory-augmented | Multimodal transformer with metadata integration |
| Multimodal Inputs | No | Limited (text/code) | Yes (text, voice, sketches) |
| Pricing (entry-level) | $29/month | $59/month | Free tier available |
| Enterprise Features | On-premise, custom subagents | Unlimited concurrency, max context | Custom integrations, security compliance |
| Primary Use Cases | Deep codebase understanding, offline usage | Large codebases, collaborative coding | Cloud-native, multimodal workflows |
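For a team, the entry-level prices tell only part of the story. The sketch below works out a monthly bill from the plan prices quoted in the pricing tables earlier in this guide. Two things are assumptions rather than stated facts: that several Claude Code Pro plans can be stacked to cover more than five users, and that the Gemini Developer plan is billed per user.

```python
import math


def codex_team_cost(developers: int) -> int:
    """Codex Team plan: $99 per user per month."""
    return 99 * developers


def claude_pro_cost(developers: int) -> int:
    """Claude Code Pro: $249 per month for up to 5 concurrent users.

    Assumes additional Pro plans can be purchased for larger teams.
    """
    return 249 * math.ceil(developers / 5)


def gemini_developer_cost(developers: int) -> int:
    """Gemini Developer plan: $49 per month, assumed here to be per user."""
    return 49 * developers


def monthly_costs(developers: int) -> dict[str, int]:
    """Monthly cost in USD for each plan at the given team size."""
    if developers < 1:
        raise ValueError("developers must be at least 1")
    return {
        "codex_team": codex_team_cost(developers),
        "claude_pro": claude_pro_cost(developers),
        "gemini_developer": gemini_developer_cost(developers),
    }
```

For a team of eight, `monthly_costs(8)` returns `{"codex_team": 792, "claude_pro": 498, "gemini_developer": 392}`. Under these assumptions, the plan with the highest sticker price is not the most expensive one once seats are counted.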

Enterprise Adoption Trends in 2026

Recent industry reports from Deloitte and a16z provide critical insights into how enterprises are embracing AI coding assistants in 2026. The findings highlight several key trends:

Deloitte Insights on AI Coding Integration

Deloitte’s 2026 Tech Trends report emphasizes the rise of AI assistants as catalysts for accelerating software development lifecycles. Key takeaways include:

- 70% of surveyed enterprises have integrated at least one AI coding assistant into their development pipelines.

- Security and compliance remain top priorities, driving adoption of solutions like OpenAI Codex with on-premise and hybrid deployment options.

- Cross-team collaboration benefits from cloud-based assistants like Claude Code, which enable shared AI-powered code reviews and pair programming.

- Training and upskilling programs now regularly include AI assistant usage, reducing onboarding time by 30-40%.

a16z Report: Economic Impact and Developer Productivity

Andreessen Horowitz (a16z), in its 2026 Developer Ecosystem report, projects that AI coding assistants will contribute to a 25% average increase in developer productivity over the next three years. Highlights include:

- Enterprise adoption is strongest among fintech, healthcare, and automotive sectors, where coding complexity and regulatory demands are high.

- Hybrid models combining desktop and cloud assistants are preferred to balance security and scalability.

- Investment in custom AI subagents is rising, with organizations developing proprietary AI tools layered on Codex or Claude APIs.

- Integration with DevOps and CI/CD pipelines is becoming standard practice, enhancing automated code quality checks and deployment readiness.

For organizations seeking to evaluate AI coding assistants in their existing workflows, understanding integration capabilities and compliance certifications is critical. Our exploration of How to Use OpenAI Codex as Your AI Coding Agent: Complete Setup and Workflow Guide offers in-depth coverage of enterprise-grade AI tooling and security considerations.

Choosing the Right AI Coding Assistant for Your Needs

With multiple powerful options available, selecting the ideal AI coding assistant depends on several factors:

- Project Size and Complexity: Large codebases and multi-module projects benefit from Claude Code’s extended context windows, while smaller teams may prefer Codex’s desktop approach.

- Deployment Preferences: Organizations with strict data governance may lean towards Codex’s on-premise capabilities, whereas cloud-first companies might adopt Gemini for its seamless Google Cloud integration.

- Collaboration Requirements: Teams emphasizing real-time collaborative coding might find Claude Code’s multi-session awareness invaluable.

- Budget Constraints: Pricing tiers vary widely; free or lower-cost tiers like Gemini’s free plan offer entry points for individual developers or experimental use.

- Feature Priorities: Multimodal inputs and integration with voice and sketch commands in Gemini might be key for innovative UX-driven coding workflows.

Ultimately, trialing multiple assistants in pilot projects is advisable to identify which aligns best with organizational workflows and developer preferences.
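Before running pilots, the hard requirements can be used to narrow the field. The sketch below encodes the figures from the comparison table in this guide as data and filters on them. The `Assistant` type and `shortlist` function are illustrative, and Claude Code's "limited (text/code)" multimodal support is treated as not meeting a voice or sketch requirement.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Assistant:
    name: str
    max_context_tokens: int   # largest context window listed in this guide
    on_premise: bool          # on-premise option listed for the Enterprise plan
    multimodal: bool          # voice/sketch input
    entry_price_usd: int      # cheapest listed monthly plan


# Values copied from the tables in this guide, not from vendor documentation.
ASSISTANTS = [
    Assistant("OpenAI Codex", 128_000, True, False, 29),
    Assistant("Anthropic Claude Code", 1_000_000, False, False, 59),
    Assistant("Google Gemini Code Assist", 200_000, True, True, 0),
]


def shortlist(min_context_tokens: int = 0,
              need_on_premise: bool = False,
              need_multimodal: bool = False,
              max_entry_price_usd: Optional[int] = None) -> list[str]:
    """Return the names of assistants that satisfy every stated requirement."""
    matches = []
    for a in ASSISTANTS:
        if a.max_context_tokens < min_context_tokens:
            continue
        if need_on_premise and not a.on_premise:
            continue
        if need_multimodal and not a.multimodal:
            continue
        if max_entry_price_usd is not None and a.entry_price_usd > max_entry_price_usd:
            continue
        matches.append(a.name)
    return matches
```

A filter like this only removes candidates that cannot work. Choosing among the ones that remain still depends on the pilot results and developer feedback described above.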

Future Outlook: AI Coding Assistants Beyond 2026

The trajectory of AI coding assistants suggests continued innovation in several domains:

- Context Window Expansion: Research into trillion-token context windows promises even deeper understanding of entire software ecosystems.

- Multimodal and Multilingual Support: Enabling coding across languages and modalities will democratize software creation further.

- Explainability and Debugging: Assistants will evolve to not just generate code but to provide rationale, error tracing, and dynamic debugging guidance.

- Integration with Low-Code/No-Code Platforms: Bridging AI coding assistants with visual development tools will empower citizen developers.

The interplay between AI and human developers will redefine software craftsmanship, with coding assistants acting as collaborators rather than mere tools.

Useful Links

- OpenAI Codex Official Blog

- Anthropic Claude Code Overview

- Google Vertex AI and Gemini Integration

- Deloitte Technology Predictions 2026

- a16z Developer Ecosystem Report 2026

- OpenAI Codex GitHub Repository

- Anthropic’s Constitutional AI Principles

- Google Cloud Code Assist Documentation
