As the generative AI landscape accelerates toward widespread enterprise adoption, competition among leading AI providers is intensifying. Anthropic, a prominent developer of large language models, recently announced tighter usage limits on its Claude subscription plans. This strategic adjustment marks a significant shift in Anthropic’s commercial approach amid mounting pressure from OpenAI, whose Codex model is gaining notable traction in the enterprise segment. The evolving dynamics between these two AI powerhouses underscore the increasingly nuanced battleground for market share, developer mindshare, and enterprise integration.

Anthropic’s decision to reduce usage caps on its paid tiers reflects broader challenges faced by AI providers balancing rapid growth, infrastructure costs, and customer demand. Originally lauded for its commitment to safety and ethical AI deployment, Anthropic has positioned Claude as a versatile assistant for a variety of business applications. However, as more enterprises seek scalable and cost-effective AI solutions, usage limits become a critical factor influencing buyer decisions. By tightening these limits, Anthropic appears to be recalibrating its revenue model while simultaneously encouraging customers to consider higher-tier subscriptions or alternative platforms.

Meanwhile, OpenAI’s Codex, the AI system powering advanced coding and automation tools, is steadily expanding its enterprise footprint. Codex’s integration into developer workflows and productivity tools has made it a formidable competitor, especially in sectors where automated code generation and AI-assisted software development accelerate project delivery timelines. OpenAI’s ability to bundle Codex’s capabilities seamlessly with its broader GPT offerings provides a compelling value proposition for enterprises seeking comprehensive AI solutions. This momentum has positioned OpenAI not only as a pioneer in conversational AI but also as a leader in developer-centric AI innovation.

The competitive dynamics between Anthropic and OpenAI now extend beyond technical prowess to encompass subscription economics, platform scalability, and customer retention strategies. Anthropic’s newly imposed restrictions on Claude subscriptions could prompt some users to reassess their long-term commitments, especially as OpenAI continues to enhance Codex and expand its enterprise integrations. These developments highlight the strategic balancing act AI providers face in optimizing user experience, managing operational costs, and sustaining growth in an increasingly crowded market.

This article explores the implications of Anthropic’s subscription limit changes, the factors driving OpenAI’s Codex expansion, and the broader impact on enterprise AI adoption. We analyze how these shifts affect developers, businesses, and the evolving AI ecosystem, offering a detailed examination of the competitive landscape shaping the future of intelligent automation and natural language understanding.

Anthropic’s Strategic Shift: Tightening Claude Subscription Limits

Anthropic’s recent announcement that it will tighten usage limits on its Claude subscription tiers signals a pivotal moment in the company’s commercial strategy. The adjustments primarily affect Claude’s paid tiers, reducing the volume of tokens or interactions users can access within a given billing period. The move has stirred discussion among developers and enterprise customers alike, because it directly affects how organizations plan and budget their AI usage.

Originally launched with relatively generous usage allowances, Claude’s subscription plans were designed to attract a broad base of users ranging from individual developers to large enterprises. Anthropic positioned Claude as a safe, reliable, and versatile large language model (LLM) with a focus on responsible AI deployment. Its emphasis on mitigating bias and ensuring user trust distinguished it in a market often criticized for opacity and unchecked AI behavior. However, as Claude’s adoption grew, so too did the operational costs associated with maintaining high-availability, low-latency AI services at scale.

By tightening subscription limits, Anthropic is likely aiming to align its infrastructure expenses with revenue streams more closely. Hosting and serving advanced LLMs require significant computational resources, especially as usage scales and user expectations for responsiveness increase. This recalibration can help prevent unsustainable cost inflation while fostering a more predictable revenue model. Furthermore, the stricter limits may incentivize users to upgrade to higher-tier plans, boosting average revenue per user (ARPU) and stabilizing financial forecasts.

From a customer perspective, the impact varies depending on use case and scale. Smaller teams and individuals with moderate AI interaction needs might find the new limits manageable, while large enterprises with intensive usage patterns could face constraints. Such customers will need to evaluate whether to invest in premium subscriptions, seek custom enterprise agreements, or explore alternative solutions. This decision-making process involves balancing cost considerations against the value derived from Claude’s capabilities, including its advanced safety features and contextual understanding.

The shift also invites a broader reflection on how AI providers structure access to their models. Usage limits serve as a lever to control demand, prevent abuse, and manage quality of service. However, overly restrictive caps risk alienating customers who require elastic scalability, particularly in fast-moving sectors like software development, customer support automation, and content generation. As a result, Anthropic’s approach may prompt some enterprise clients to reassess their AI partner strategies.

Notably, Anthropic’s strategy contrasts with OpenAI’s recent moves to enhance accessibility and scalability of its Codex and GPT models, particularly within enterprise environments. OpenAI has focused on integrating its AI capabilities deeply into developer tools and cloud platforms, enabling seamless scaling and flexible pricing models. This approach appeals to organizations prioritizing agility and cost-efficiency, especially those investing heavily in AI-driven automation and developer productivity.

Furthermore, Anthropic’s emphasis on AI safety and ethical considerations remains a distinctive element of its value proposition. Its models are designed to minimize harmful outputs and ensure compliance with emerging AI governance standards. This commitment can resonate strongly with enterprises in regulated industries or those with stringent corporate responsibility mandates. Nonetheless, the tighter subscription limits may temper enthusiasm if they hinder operational flexibility.

To navigate this landscape, Anthropic must balance competing priorities: maintaining a reputation for safety and quality, managing infrastructure costs, and delivering competitive pricing and scalability. The company’s next steps will likely include refining tier structures, exploring enterprise-specific offerings, and enhancing integration capabilities to retain and grow its customer base.

As Anthropic tightens subscription limits on Claude, enterprises are increasingly evaluating alternative AI coding assistants to optimize their development workflows. The article OpenAI Codex vs Claude Code: Complete Business Adoption Guide for Enterprise Teams in 2026 provides a comprehensive comparison of both platforms, detailing their capabilities, integration options, and scalability considerations to help decision-makers select the best fit for their organizational needs.

For developers and businesses interested in understanding how subscription models affect AI usage patterns and cost management, it is useful to explore related concepts such as token-based billing and service-level agreements. These frameworks provide insight into how AI consumption is quantified and monetized across different platforms, informing strategic decisions in AI adoption.
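To make token-based billing concrete, the following is a minimal sketch of how a monthly charge might be estimated from input and output token counts. The `TokenRates` type, the `estimate_cost` function, and the per-million-token prices are all hypothetical placeholders chosen for illustration; they are not taken from any provider’s published pricing.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenRates:
    """Hypothetical per-million-token prices in USD (placeholders, not real pricing)."""
    input_per_million: float
    output_per_million: float


def estimate_cost(input_tokens: int, output_tokens: int, rates: TokenRates) -> float:
    """Estimate the charge for one billing period under token-based billing."""
    if input_tokens < 0 or output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    input_cost = input_tokens / 1_000_000 * rates.input_per_million
    output_cost = output_tokens / 1_000_000 * rates.output_per_million
    return round(input_cost + output_cost, 2)


# Illustrative numbers only: 2M input tokens and 500k output tokens in a month.
example_rates = TokenRates(input_per_million=2.0, output_per_million=10.0)
monthly_cost = estimate_cost(2_000_000, 500_000, example_rates)
print(f"Estimated monthly cost: ${monthly_cost:.2f}")
```

Input and output tokens are priced separately here because many providers charge different rates for each; if a plan bills a single blended rate, the two fields can simply be set to the same value.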

In summary, Anthropic’s tightened subscription limits represent a calculated response to market and operational realities. While potentially constraining for some users, this move aims to sustain Claude’s viability and competitiveness in a rapidly evolving AI ecosystem. The company’s ability to adapt its offerings and communicate effectively with its user base will be critical in maintaining momentum against intensifying competition from OpenAI and others.

Anthropic’s New Usage Limits and the Intensifying Competitive Dynamics with OpenAI

Anthropic’s recent decision to tighten usage limits on its Claude AI subscription tiers marks a significant strategic shift amid escalating competition in the enterprise AI market. The company, known for positioning Claude as a safer and more controllable alternative to OpenAI’s models, has traditionally offered generous usage allowances aimed at attracting a broad array of developers and businesses. However, the introduction of stricter caps on paid plans signals a recalibration that may reflect both operational cost pressures and a response to intensifying market competition, particularly from OpenAI’s expanding foothold with Codex and other enterprise offerings.

By reducing usage thresholds for paid subscribers, Anthropic is effectively encouraging more selective and efficient use of Claude, potentially prioritizing high-value customers and enterprise clients with predictable demand patterns. The move also suggests an effort to manage the computational expense of running large language models at scale and to curb heavy usage that could degrade service quality or inflate costs disproportionately. Some existing customers accustomed to more liberal allowances may see the adjustment as a limitation, but it is consistent with a broader industry trend in which AI providers balance accessibility against sustainable business models.

The competitive dynamics between Anthropic and OpenAI have become particularly pronounced as OpenAI aggressively pursues enterprise adoption. OpenAI’s Codex, specialized in code generation and integration, has rapidly gained traction among developer teams and organizations seeking to automate programming tasks and augment software development workflows. The enterprise appeal of Codex is bolstered by its seamless integration with Microsoft’s ecosystem and a growing portfolio of customer success stories, which together have created a strong moat that Anthropic must contend with.

This evolving landscape is shaping strategic decisions on both sides. Anthropic’s tightening of Claude’s subscription limits can be interpreted as a defensive posture aimed at preserving service quality and customer satisfaction while recalibrating the product’s value proposition. Meanwhile, OpenAI leverages its extensive developer network and partnerships to capitalize on the growing demand for AI-driven coding assistance, thereby solidifying its position in the enterprise segment. The competition is not merely about pricing or raw capability but also about ecosystem compatibility, developer experience, and trust in AI safety and reliability.

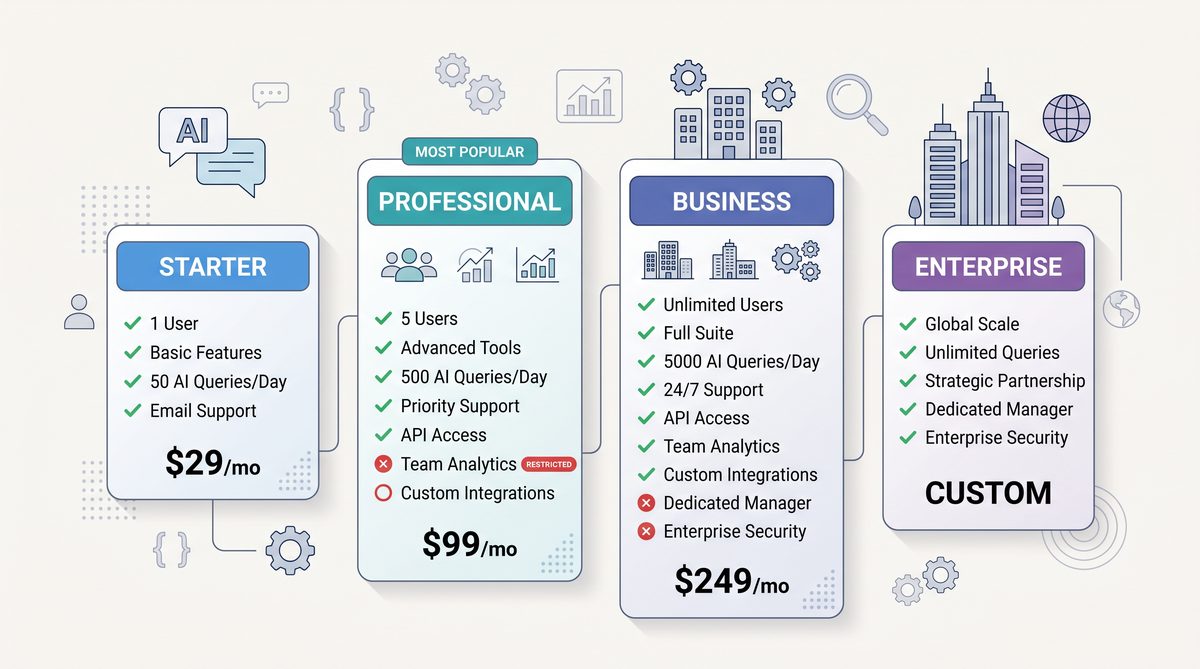

To better understand the implications of Anthropic’s new usage limits relative to OpenAI’s offerings, the following table compares key subscription features and usage policies of Claude’s paid tiers with OpenAI’s Codex enterprise plans:

| Feature | Anthropic Claude (Paid Tiers) | OpenAI Codex (Enterprise) |
|---|---|---|
| Monthly Usage Limit | Reduced to 100,000 tokens for mid-tier, 250,000 tokens for top-tier (previously higher) | Flexible, based on negotiated enterprise contracts; typically exceeds 1 million tokens |
| Pricing Model | Tiered subscription with token caps; overage charges apply | Custom pricing based on usage volume and integration scope |
| Primary Use Case | General-purpose natural language understanding and generation | Code generation, completion, and developer tooling integration |
| Integration Ecosystem | API access with limited plug-and-play integrations | Deep integration with Microsoft products, GitHub Copilot, and IDEs |
| Safety and Control | Emphasis on alignment and safe outputs through Claude’s design | Robust security protocols and enterprise-grade compliance |
| Target Audience | Developers, startups, and mid-sized enterprises | Large enterprises, software development teams, and technology firms |

The table highlights the strategic differences that define the competitive positioning of the two AI providers. Anthropic’s Claude remains attractive for customers prioritizing linguistic nuance, safety, and versatility across general AI tasks. However, its tightened usage limits may constrain scalability for enterprises requiring large volumes of interaction or intensive usage, potentially pushing these customers to evaluate alternatives. Conversely, OpenAI’s Codex, with its flexible enterprise agreements and rich integration environment, is well-poised to capture the growing demand for AI-augmented development workflows, supported by Microsoft’s extensive enterprise reach.
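A team weighing the capped tiers in the table could compare them with a short calculation like the sketch below. The monthly caps (100,000 and 250,000 tokens) are the figures listed in the table; the base prices and overage rates are hypothetical assumptions added purely for illustration, as are the names `Tier`, `tier_cost`, and `cheapest_tier`.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    name: str
    monthly_cap_tokens: int    # cap as listed in the comparison table
    base_price_usd: float      # hypothetical subscription price
    overage_usd_per_1k: float  # hypothetical charge per 1,000 tokens over the cap


TIERS = [
    Tier("mid-tier", 100_000, 20.0, 0.50),
    Tier("top-tier", 250_000, 60.0, 0.30),
]


def tier_cost(tier: Tier, expected_tokens: int) -> float:
    """Base price plus overage for tokens used beyond the tier's monthly cap."""
    overage_tokens = max(0, expected_tokens - tier.monthly_cap_tokens)
    return tier.base_price_usd + overage_tokens / 1_000 * tier.overage_usd_per_1k


def cheapest_tier(expected_tokens: int, tiers: list[Tier] = TIERS) -> Tier:
    """Pick the tier with the lowest total cost for the expected monthly usage."""
    return min(tiers, key=lambda t: tier_cost(t, expected_tokens))


for usage in (80_000, 150_000, 200_000):
    best = cheapest_tier(usage)
    print(f"{usage:>7} tokens/month -> {best.name} (${tier_cost(best, usage):.2f})")
```

Under these assumed prices the lower tier plus overage stays cheaper until roughly 180,000 tokens a month, which shows why the overage rate matters as much as the cap itself when comparing plans.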

Anthropic’s move also reflects a broader trend in the AI industry, where providers are increasingly tailoring their offerings to align with specific customer segments and use cases. The days of unlimited or near-unlimited access to powerful language models are gradually giving way to more nuanced pricing and access controls that balance innovation with operational sustainability. This shift is critical as compute costs remain a significant factor in delivering AI services, especially when targeting enterprise clients with stringent performance and security requirements.

Furthermore, the market dynamics underscore the importance of ecosystem partnerships and enterprise trust. OpenAI’s collaboration with Microsoft has been a game-changer, enabling Codex to be embedded within widely adopted developer tools such as Visual Studio Code and GitHub Copilot. This integration drives adoption by reducing friction and aligning AI assistance directly with developers’ daily workflows. Anthropic, in contrast, must forge its own partnerships or enhance API capabilities to remain competitive in this environment, a challenge compounded by the new usage restrictions which may limit large-scale experimentation and deployment by enterprise clients.

Industry observers note that Anthropic’s subscription changes may also be a prelude to forthcoming product updates or differentiated offerings aimed at addressing these competitive pressures. By tightening limits on existing tiers, the company might be preparing to introduce premium or bespoke enterprise solutions that provide higher usage quotas, enhanced support, and tailored compliance features. Such a tiered approach would allow Anthropic to segment its customer base more effectively and compete on both price and value-added services.

In this context, developers and businesses evaluating AI platforms must carefully weigh not only the raw capabilities of Claude and Codex but also factors such as usage policies, integration potential, pricing flexibility, and vendor roadmaps. The evolving subscription models and competitive positioning will play a decisive role in influencing procurement decisions and long-term platform commitments.

As Anthropic tightens subscription limits on Claude amidst OpenAI Codex’s expanding presence in the enterprise sector, understanding the nuanced differences between these AI coding assistants becomes increasingly critical. The article Claude Code vs OpenAI Codex CLI in 2026: Performance, Pricing, and Workflow Comparison provides a comprehensive analysis of both platforms, evaluating their performance metrics, cost structures, and integration workflows to inform strategic decisions in AI-driven development environments.

Ultimately, Anthropic’s tightened subscription limits are a clear indicator of the maturing AI market and the strategic recalibrations companies must undertake to thrive. While this may impose new constraints on some users, it also reflects a commitment to sustainable growth, service quality, and competitive differentiation. OpenAI’s aggressive enterprise push with Codex further intensifies these dynamics, setting the stage for an increasingly sophisticated and segmented AI services landscape where innovation, integration, and customer alignment are paramount.

Anthropic’s New Usage Limits on Paid Tiers: Competitive Dynamics and OpenAI’s Strategic Advantage

In the unfolding competition between Anthropic and OpenAI, the recent implementation of tightened usage limits on Claude’s paid subscription tiers marks a significant strategic shift. Anthropic’s decision to restrict usage reflects both an attempt to control operational costs amid rising demand and a defensive maneuver to recalibrate its service offering in the face of intensifying market pressure. This recalibration is particularly noteworthy given OpenAI’s concurrent momentum with its Codex model, which has steadily gained traction in enterprise applications, providing OpenAI with a competitive edge in the lucrative business segment.

Anthropic’s move to impose stricter caps on Claude’s paid tiers effectively limits the volume of requests users can make within specified billing cycles, a departure from the more generous or flexible usage policies seen previously. While exact limits vary by subscription plan, the overarching pattern is clear: customers are now subject to lower thresholds on API calls and token usage. This shift inevitably impacts developers and enterprises relying on Claude for high-volume, resource-intensive tasks such as content generation, customer support automation, and code assistance. By constraining usage, Anthropic aims to manage infrastructure costs and ensure service stability; however, this also risks alienating power users who require expansive access to the AI’s capabilities.
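For teams that need to stay within a per-cycle cap, one simple approach is to track consumption on the client side and project it to the end of the billing period. The sketch below assumes a 30-day cycle and a linear usage trend; the `UsageBudget` class and its method names are hypothetical and do not correspond to any provider’s SDK.

```python
from dataclasses import dataclass


@dataclass
class UsageBudget:
    """Client-side tracker for token usage within one billing cycle (illustrative)."""
    cap_tokens: int
    cycle_days: int = 30  # assumed cycle length
    used_tokens: int = 0

    def record(self, tokens: int) -> None:
        """Add the tokens consumed by a request to the running total."""
        if tokens < 0:
            raise ValueError("tokens must be non-negative")
        self.used_tokens += tokens

    @property
    def remaining(self) -> int:
        """Tokens left before the cap is reached (never negative)."""
        return max(0, self.cap_tokens - self.used_tokens)

    def projected_usage(self, days_elapsed: int) -> float:
        """Linearly extrapolate current usage to the end of the cycle."""
        if not 1 <= days_elapsed <= self.cycle_days:
            raise ValueError("days_elapsed must be within the billing cycle")
        return self.used_tokens / days_elapsed * self.cycle_days

    def will_exceed_cap(self, days_elapsed: int) -> bool:
        """True if the current pace would pass the cap before the cycle ends."""
        return self.projected_usage(days_elapsed) > self.cap_tokens


# Example: a 250,000-token cap with 100,000 tokens used after 10 days.
budget = UsageBudget(cap_tokens=250_000)
budget.record(60_000)
budget.record(40_000)
print(budget.remaining, budget.will_exceed_cap(days_elapsed=10))
```

A linear projection is a deliberately crude choice; workloads with bursts near release dates would need a different forecast, but even this rough check can flag a likely overrun early enough to renegotiate a plan or throttle non-critical jobs.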

From a competitive standpoint, these usage restrictions could be interpreted as a sign of Anthropic’s cautious approach to scaling. Unlike OpenAI, which has leveraged extensive cloud partnerships and substantial capital to support large-scale deployments, Anthropic appears to be adopting a more measured growth strategy. This conservative posture may stem from the company’s relatively smaller operational scale and the financial realities of sustaining a cutting-edge AI model with considerable compute demands. While this approach prioritizes long-term sustainability, it also opens an opportunity window for OpenAI to capitalize on unmet demand in the enterprise sector.

OpenAI’s Codex, an AI model specifically optimized for code generation and programming assistance, has gained notable enterprise adoption, particularly within software development environments and integrated development platforms. OpenAI’s ability to embed Codex into widely used tools such as GitHub Copilot has created a seamless user experience for developers, fostering deeper integration and stickiness. This embedded approach contrasts with Anthropic’s more standalone Claude interface, which, while powerful, lacks the same level of entrenched tooling partnerships. Consequently, OpenAI is not only expanding its user base but also entrenching its technology within the fabric of enterprise workflows.

The competitive dynamics between Anthropic and OpenAI highlight differing strategic priorities. Anthropic’s focus on safety, alignment, and ethical AI development is well-regarded within the industry, but it must be balanced against commercial viability. The imposition of stricter limits on Claude’s paid tiers may signal a recalibration to ensure that the company’s resources are allocated efficiently, preserving quality and reliability over aggressive expansion. However, this approach inherently places Anthropic at risk of ceding ground to a more aggressively scaling OpenAI, whose Codex enterprise traction is buoyed by significant investment and broader ecosystem integration.

Moreover, OpenAI’s approach benefits from a virtuous cycle of feedback and improvement driven by its large and diverse user base. As more enterprises incorporate Codex into their development pipelines, OpenAI gains invaluable data to refine model performance, enhance contextual understanding, and reduce error rates. This iterative process strengthens OpenAI’s competitive moat, making it more difficult for rivals like Anthropic to match both the scale and quality of service. Anthropic, constrained by usage limits, may find it challenging to replicate this cycle at the same speed or scale.

Anthropic’s tightened limits may also affect developer sentiment and community engagement. Developers and enterprises value flexibility and scalability, especially when integrating AI models into production environments. Sudden reductions in usage allowances can disrupt workflows and force businesses to reconsider their AI provider options. This dynamic underscores the importance of transparent communication and predictable pricing models in maintaining customer loyalty. While Anthropic has emphasized its commitment to responsible AI usage and alignment, these operational adjustments must be carefully managed to avoid perceptions of instability or unpredictability.

It is important to consider that Anthropic’s limitations might also be a calculated short-term trade-off to enable longer-term innovation. By controlling demand and focusing on sustainable growth, Anthropic could be positioning Claude for enhanced capabilities, improved safety features, and more refined alignment in future iterations. Nonetheless, in a competitive environment where rapid enterprise adoption and integration are paramount, such a strategy demands precise execution to prevent erosion of market share.

The broader AI landscape continues to evolve rapidly, with enterprises increasingly viewing AI not just as a novelty but as a core component of digital transformation strategies. OpenAI’s ability to capitalize on this trend, particularly through Codex’s robust enterprise positioning, gives it a tangible advantage. Anthropic’s recent usage restrictions highlight the operational challenges inherent in scaling next-generation AI models, but also underscore the urgency for companies to balance innovation with commercial pragmatism.

For developers and enterprises evaluating AI providers, these developments suggest a nuanced landscape. OpenAI’s Codex offers compelling integration benefits and scalability, making it an attractive choice for organizations seeking immediate productivity gains. Conversely, Anthropic’s Claude, while committed to ethical AI principles, may require careful consideration regarding usage needs and cost management, especially under the new subscription constraints. As the competition intensifies, companies will need to assess trade-offs between operational flexibility, model capabilities, and alignment priorities.

As the AI market matures, the competitive interplay between Anthropic and OpenAI will likely continue shaping subscription models, feature sets, and enterprise adoption patterns. Understanding these dynamics is crucial for developers and business leaders aiming to harness AI’s full potential in a rapidly shifting technology environment.

As Anthropic tightens subscription limits on its Claude AI in response to OpenAI Codex’s growing enterprise adoption, understanding the nuances of Claude’s SDK credits, pricing structures, and usage constraints becomes increasingly critical. The Complete Guide to Anthropic’s Claude Agent SDK Credits: Pricing, Limits, and Optimization Strategies provides an in-depth analysis of these factors, offering strategic insights to help organizations optimize their use of Claude within the newly imposed limitations.

Conclusion and Future Outlook

Anthropic’s decision to tighten usage limits on Claude’s paid subscription tiers signals a strategic recalibration in response to intensifying competition within the AI language model market. By imposing stricter caps, Anthropic appears to be managing capacity constraints and controlling operational costs while attempting to maintain service quality for its enterprise customers. However, this move could also reflect a defensive posture aimed at preserving resource availability amidst rising demand and emerging competitive pressures.

Meanwhile, OpenAI’s Codex continues to consolidate its position, particularly in the enterprise segment, where its integration with developer tools and its expansive API ecosystem offer a compelling value proposition. OpenAI’s ability to leverage its extensive user base and robust infrastructure gives Codex a strategic advantage, enabling it to scale effectively while offering flexible subscription and usage models. This dynamic intensifies the competitive environment, compelling Anthropic and other players to revisit their pricing and service strategies to retain and grow their market share.

The competitive dynamics between Anthropic and OpenAI illustrate broader industry trends where AI providers are balancing scalability, cost management, and user experience. Anthropic’s subscription tightening could risk alienating some high-usage clients, potentially accelerating migration to alternative platforms like Codex that offer more generous or adaptable usage terms. Conversely, Anthropic’s emphasis on responsible AI and alignment-focused development may continue to attract organizations prioritizing safety and ethical considerations in AI deployment.

Looking ahead, the enterprise AI landscape is poised for further evolution as both providers innovate on product capabilities, integration ease, and pricing flexibility. OpenAI’s expanding enterprise outreach and ongoing enhancements to Codex’s programming assistance capabilities will likely sustain its competitive momentum. Anthropic, on the other hand, may need to refine its subscription model and infrastructure investments to better support heavier usage patterns without compromising its operational sustainability.

Ultimately, enterprises stand to benefit from these competitive pressures through improved AI service offerings and more tailored subscription plans. The ongoing rivalry will likely accelerate innovation and drive more nuanced approaches to AI usage limits, balancing accessibility with performance and cost-efficiency. As this market segment matures, monitoring how Anthropic and OpenAI adapt their strategies will provide critical insights into the future contours of AI-powered software development and deployment.
