Introduction

AI-assisted code generation is becoming a routine part of software development. Among the available models, OpenAI Codex stands out for its ability to understand and generate code across multiple programming languages. However, isolated prompt-response interactions use only part of what Codex can do. To build sophisticated, scalable, and efficient coding workflows, developers need to orchestrate multiple AI agents in a coordinated pipeline. This is where Symphony orchestration, an open-source specification designed for chaining AI agents, comes in.

Symphony provides a structured framework to integrate various AI models and agents, enabling them to collaborate seamlessly on complex tasks. By combining Symphony with OpenAI Codex, developers can create multi-agent pipelines that automate the entire software development process—from generating code snippets and debugging to testing and documentation. This hands-on tutorial will guide you through building a multi-agent coding pipeline using OpenAI Codex agents orchestrated via the Symphony specification. You will gain practical insights into setting up the orchestration framework, designing agent roles, and implementing communication protocols to ensure your AI agents work in harmony.

The tutorial is designed for developers with a foundational understanding of AI language models and software automation. Whether you are an AI enthusiast looking to explore agent orchestration or a software engineer aiming to streamline your coding workflows, this guide will provide the technical depth and actionable steps needed to build a robust multi-agent system. By the end of this tutorial, you will have a clear understanding of how to leverage Symphony orchestration to maximize the capabilities of OpenAI Codex, enabling you to tackle coding projects with enhanced efficiency and precision.

We will begin by exploring the core concepts behind multi-agent orchestration and the Symphony open-source specification. Understanding these principles is critical before diving into the practical implementation. This foundation will also prepare you to customize and extend the pipeline for your unique development scenarios. As you progress, you will encounter detailed explanations, code snippets, and best practices that ensure your multi-agent pipeline is scalable, maintainable, and effective.

Understanding Multi-Agent Orchestration with Symphony

Before assembling a multi-agent coding pipeline, it is essential to grasp the concept of orchestration in the context of AI agents. Multi-agent orchestration refers to the systematic coordination and management of multiple autonomous agents working together to achieve a complex goal. Unlike single-agent systems where one model handles all tasks, multi-agent systems delegate specific functions to specialized agents, each optimized for distinct subtasks. This division of labor enhances the overall performance, reliability, and scalability of AI-driven workflows.

Symphony is an open-source orchestration specification designed to facilitate the creation and management of such multi-agent systems. It provides a standardized protocol for defining agents, their roles, the communication channels between them, and the sequence of operations. Symphony’s modular design allows developers to integrate diverse AI models, including OpenAI Codex, into a cohesive pipeline where each agent contributes its expertise in a structured manner.

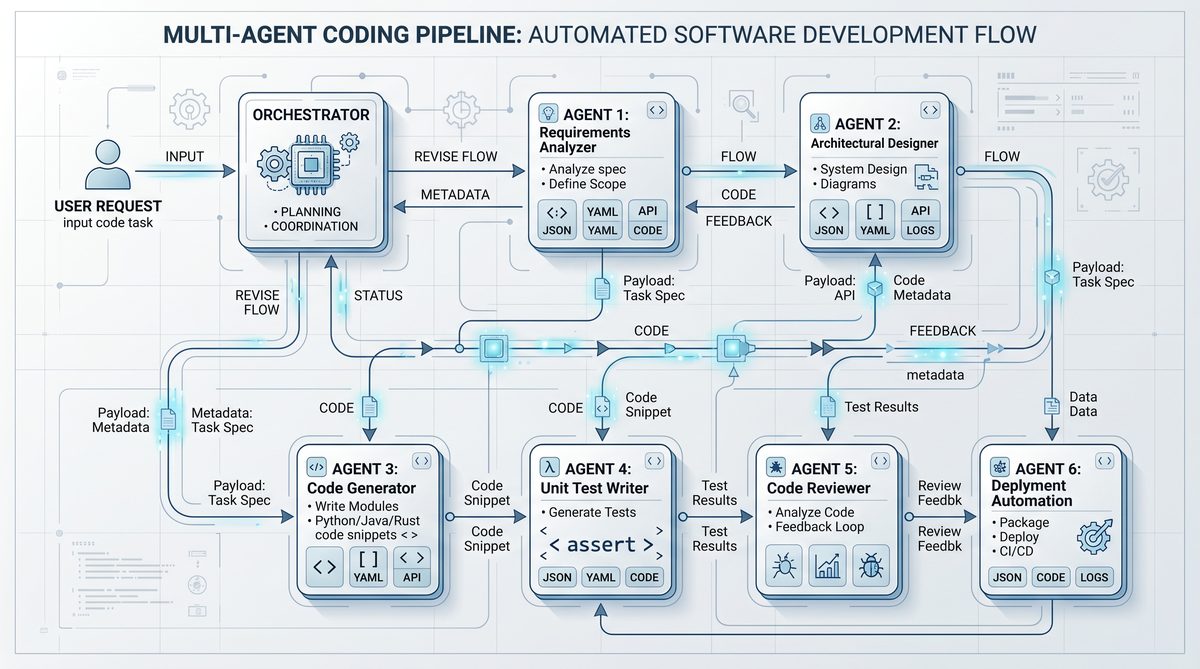

The core architectural elements of Symphony include agents, messages, workflows, and the orchestrator. Agents are autonomous units that execute specific tasks based on their capabilities. For example, in a coding pipeline, one agent might be responsible for generating initial code snippets, while another focuses on debugging or optimizing the code. Messages serve as the medium for inter-agent communication, ensuring that data, instructions, and feedback are exchanged accurately and efficiently. Workflows define the sequence and logic of agent interactions, outlining how tasks flow from one agent to another. The orchestrator oversees the entire process, managing agent lifecycles, message routing, and error handling.
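The four elements above can be sketched in a few lines of plain Python. This is only an illustration of the roles, not Symphony's actual API: the `Message`, `Agent`, and `Orchestrator` names are hypothetical, and the two agents are stubs standing in for Codex calls.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Message:
    """Payload passed between agents (hypothetical shape)."""
    sender: str
    content: str


# An agent is modeled as a function from an incoming message to a reply.
Agent = Callable[[Message], Message]


def generator(msg: Message) -> Message:
    # Stand-in for a Codex call that would generate code from a request.
    return Message("generator", f"def solution():\n    # TODO: {msg.content}\n    pass")


def reviewer(msg: Message) -> Message:
    # Stand-in for a Codex call that would review the generated code.
    verdict = "needs work" if "TODO" in msg.content else "ok"
    return Message("reviewer", verdict)


class Orchestrator:
    """Runs a workflow, modeled here as an ordered list of agent names."""

    def __init__(self, agents: Dict[str, Agent]) -> None:
        self.agents = agents
        self.log: List[Message] = []

    def run(self, workflow: List[str], request: str) -> Message:
        msg = Message("user", request)
        for name in workflow:
            msg = self.agents[name](msg)  # route the message to the next agent
            self.log.append(msg)          # keep a trace of every exchange
        return msg


orchestrator = Orchestrator({"generator": generator, "reviewer": reviewer})
result = orchestrator.run(["generator", "reviewer"], "parse a CSV file")
print(result.sender, result.content)
```

A real orchestrator would also manage agent lifecycles and error handling; this sketch covers only message routing along a fixed sequence.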

One of the standout features of Symphony is its extensibility. Developers can create custom agents tailored to their project requirements and plug them into existing workflows without disrupting the overall system. This flexibility is particularly valuable when integrating OpenAI Codex agents, which can be specialized for different programming languages or coding functions. Additionally, Symphony supports asynchronous communication, enabling agents to operate concurrently and thus improving throughput and responsiveness.
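To make the concurrency point concrete, here is a minimal sketch using Python's standard `asyncio` module. It does not use Symphony itself: the agent names are hypothetical and `asyncio.sleep` stands in for waiting on a model response.

```python
import asyncio
from typing import List


async def run_agent(name: str, delay: float) -> str:
    # asyncio.sleep stands in for waiting on a model API response.
    await asyncio.sleep(delay)
    return f"{name}: done"


async def run_concurrently() -> List[str]:
    # gather() runs all agents concurrently and returns results in call order.
    return await asyncio.gather(
        run_agent("python-agent", 0.02),
        run_agent("typescript-agent", 0.01),
        run_agent("docs-agent", 0.03),
    )


results = asyncio.run(run_concurrently())
print(results)
```

Because the three waits overlap, total time is close to the slowest agent rather than the sum of all three.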

To illustrate, consider a multi-agent pipeline designed for automating the development of a web application. The initial agent might use Codex to generate frontend components based on user specifications. The next agent could perform unit testing on the generated code, while another handles backend integration. Finally, a documentation agent produces comprehensive developer guides. Symphony orchestrates these agents, ensuring that outputs from one stage become inputs for the next, maintaining consistency and quality throughout the pipeline.

The Symphony specification also incorporates best practices for error handling and recovery, critical for maintaining robustness in production environments. Agents can be configured to retry failed tasks or escalate issues to human operators if necessary. Furthermore, logging and monitoring capabilities embedded within Symphony facilitate debugging and performance optimization, providing transparency into agent interactions and workflow execution.
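The retry-then-escalate pattern can be illustrated without any framework. The sketch below is an assumption about how such a policy might look, not Symphony's own mechanism; `EscalationRequired`, `run_with_retries`, and `make_flaky_task` are hypothetical names.

```python
from typing import Callable, List


class EscalationRequired(Exception):
    """Raised when retries are exhausted and a human should take over."""


def run_with_retries(task: Callable[[], str], max_attempts: int = 3) -> str:
    errors: List[str] = []
    for attempt in range(1, max_attempts + 1):
        try:
            return task()
        except RuntimeError as exc:
            errors.append(f"attempt {attempt}: {exc}")
    # All attempts failed: hand the collected errors to a human operator.
    raise EscalationRequired("; ".join(errors))


def make_flaky_task(failures: int) -> Callable[[], str]:
    """Build a stub task that fails `failures` times, then succeeds."""
    state = {"calls": 0}

    def task() -> str:
        state["calls"] += 1
        if state["calls"] <= failures:
            raise RuntimeError("model call timed out")
        return "generated code"

    return task


print(run_with_retries(make_flaky_task(failures=2)))
```

A production version would typically add a delay between attempts and log each failure as it happens.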

Before we proceed with the practical implementation, it’s beneficial to familiarize yourself with key concepts such as asynchronous messaging patterns, agent lifecycle management, and workflow definition formats used by Symphony. If you need a refresher on these foundational topics, refer to our detailed overview of agent communication protocols and orchestration strategies. This background will empower you to design multi-agent systems that are not only functional but also scalable and maintainable.

Building a multi-agent coding pipeline with OpenAI Codex and Symphony orchestration establishes a robust framework for collaborative AI-driven development, but the next frontier lies in mobility and accessibility. The article OpenAI Codex Goes Mobile: Work With Your AI Coding Agent From Anywhere in 2026 delves into how advancements in mobile integration are empowering developers to interact with their AI coding agents seamlessly from any location, thus extending the capabilities of multi-agent pipelines beyond traditional environments.

In the next section, we will dive into setting up the Symphony environment and configuring OpenAI Codex agents. You will learn how to define agent roles, establish communication channels, and implement the orchestration logic that enables your multi-agent coding pipeline to operate efficiently.

Implementing the Multi-Agent Pipeline Using the Symphony Open-Source Specification

Building a multi-agent coding pipeline with OpenAI Codex requires a well-structured orchestration framework to coordinate interactions between different AI agents. Symphony, an open-source specification for agent orchestration, offers precisely that: a declarative way to define and chain multiple Codex agents, enabling complex workflows that handle code generation, review, testing, and debugging in an automated, scalable manner.

To begin, it is essential to understand how Symphony structures its orchestration. At its core, Symphony uses a specification language that defines agents as modular units with inputs, outputs, and triggers. Each agent represents a distinct step in the coding pipeline, such as generating an initial code snippet, performing static analysis, or running test cases. These agents are then connected using event-driven triggers, which allow the output of one agent to become the input of the next. This chaining mechanism is crucial for building a cohesive pipeline where data flows seamlessly between specialized AI modules.

In practical terms, you start by defining your agents in a Symphony spec file, typically using YAML or JSON format. Each agent definition includes the agent’s name, the OpenAI Codex model to invoke, prompt templates, expected inputs, and the outputs it produces. This declarative approach abstracts away much of the orchestration complexity and lets you focus on designing effective prompts and agent logic.
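As a rough illustration of such a declarative definition, the sketch below embeds a small JSON spec and validates it in Python. The field names (`name`, `model`, `prompt_template`, `inputs`, `outputs`) mirror the list above but are assumptions, not Symphony's actual schema, and the model value is a placeholder.

```python
import json
from typing import Any, Dict

# Hypothetical spec: field names are illustrative, not Symphony's real schema.
SPEC_JSON = """
{
  "agents": [
    {
      "name": "code_generator",
      "model": "MODEL_NAME_PLACEHOLDER",
      "prompt_template": "Write a function that does: $task",
      "inputs": ["task"],
      "outputs": ["code"]
    },
    {
      "name": "code_reviewer",
      "model": "MODEL_NAME_PLACEHOLDER",
      "prompt_template": "Review this code for bugs: $code",
      "inputs": ["code"],
      "outputs": ["review"]
    }
  ]
}
"""

REQUIRED_FIELDS = {"name", "model", "prompt_template", "inputs", "outputs"}


def load_agents(spec_text: str) -> Dict[str, Dict[str, Any]]:
    """Parse the spec and check that each agent has the required fields."""
    spec = json.loads(spec_text)
    agents: Dict[str, Dict[str, Any]] = {}
    for agent in spec["agents"]:
        missing = REQUIRED_FIELDS - agent.keys()
        if missing:
            raise ValueError(f"agent is missing fields: {sorted(missing)}")
        agents[agent["name"]] = agent
    return agents


agents = load_agents(SPEC_JSON)
print(list(agents))
```

Validating the spec at load time surfaces a malformed agent definition before any model call is made.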

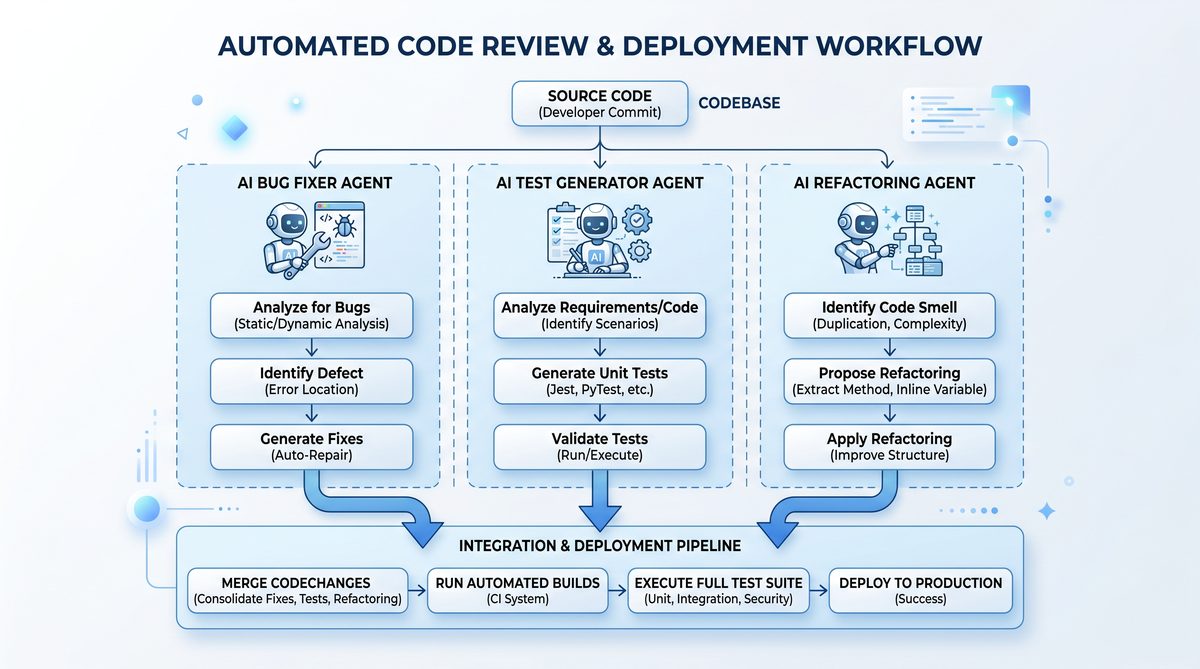

For example, the first agent might be a “Code Generator” that takes a natural language description of a feature and produces a relevant code snippet. Downstream, a “Code Reviewer” agent analyzes this snippet for style and logical errors, while a “Test Generator” agent creates unit tests based on the code. Finally, a “Test Runner” agent executes these tests and reports results back to the orchestrator. Symphony specifies how these agents communicate and in what order they execute, ensuring that the pipeline runs autonomously and in the correct sequence.

One of the key advantages of Symphony’s orchestration is its ability to handle conditional branching and parallel execution. For instance, if the “Code Reviewer” detects issues, the pipeline can automatically trigger a “Code Fixer” agent to refine the output before proceeding. Alternatively, multiple test cases can be run in parallel agents to speed up validation. This flexibility makes Symphony ideal for iterative and complex development workflows involving multiple AI agents.
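Both ideas can be sketched with the standard library alone. In the example below, the reviewer, fixer, and tests are simple stand-in functions rather than Codex agents, and the structure is an assumption about how a runtime might behave, not Symphony's own execution model.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List


def review(code: str) -> bool:
    # Stand-in reviewer: passes only if no bare "except:" is present.
    return "except:" not in code


def fix(code: str) -> str:
    # Stand-in fixer: narrows the bare except clause.
    return code.replace("except:", "except ValueError:")


def run_pipeline(code: str, tests: List[Callable[[str], bool]]) -> List[bool]:
    # Conditional branch: the fixer runs only when the review fails.
    if not review(code):
        code = fix(code)
    # Parallel step: the tests are distributed across worker threads.
    with ThreadPoolExecutor(max_workers=4) as pool:
        return list(pool.map(lambda test: test(code), tests))


tests = [
    lambda code: "def " in code,
    lambda code: "except:" not in code,
]
draft = "def parse(x):\n    try:\n        return int(x)\n    except:\n        return None"
print(run_pipeline(draft, tests))
```

Threads suit this case because real agent calls spend most of their time waiting on network responses.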

To illustrate these concepts, the following table compares core Symphony orchestration features with traditional monolithic pipeline approaches and simple chained prompt calls to OpenAI Codex:

| Aspect | Symphony Orchestration | Monolithic Pipeline | Simple Chained Prompts |
|---|---|---|---|
| Modularity | High: Agents defined independently with clear interfaces | Low: Single large codebase with intertwined logic | Medium: Separate prompts but limited modular control |
| Scalability | High: Supports parallel and conditional execution | Medium: Scaling requires complex refactoring | Low: Difficult to manage multiple agents simultaneously |
| Error Handling | Robust: Built-in mechanisms for retries and branching | Basic: Manual error-catching logic required | Minimal: Errors propagate easily through chains |
| Maintainability | High: Declarative spec simplifies updates and debugging | Low: Complex code leads to maintenance burden | Medium: Prompt changes can be isolated but lack orchestration |
| Integration | Flexible: Easily integrates external tools and APIs | Variable: Depends on pipeline design | Limited: Mostly limited to Codex prompt chaining |
| Reusability | High: Agents can be reused across different pipelines | Low: Code tightly coupled to specific use case | Medium: Prompt templates reusable but less structured |

After defining the agents and their orchestration in the Symphony spec, the next step is implementing the runtime environment. This runtime loads the specification, initializes the Codex agents using OpenAI’s API, and manages the execution flow according to the defined dependencies. Several open-source implementations of Symphony runtimes exist, often written in Python or Node.js, and they provide essential features such as state management, event dispatching, and error recovery.

When coding the prompt templates for each agent, it is critical to tailor them to the agent’s specific responsibility while maintaining a consistent style to facilitate interpretability across the pipeline stages. For instance, the code generation agent’s prompt should clearly describe the programming task and any constraints, while the code review agent’s prompt needs to instruct Codex to identify potential bugs or style violations. The Symphony spec allows these templates to be parameterized, enabling flexible input injection and output parsing.
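Parameterized templates can be prototyped with Python's built-in `string.Template` before committing to any spec format. The template wording and the `render` helper below are hypothetical, meant only to show input injection with an early check for missing parameters.

```python
from string import Template

# Hypothetical templates; the wording is illustrative only.
TEMPLATES = {
    "generate": Template(
        "Task: $task\nLanguage: $language\nConstraints: $constraints\nReturn only code."
    ),
    "review": Template(
        "Review the following $language code for bugs and style violations:\n$code"
    ),
}


def render(name: str, **params: str) -> str:
    # substitute() raises KeyError if a placeholder is left unfilled,
    # which catches a missing input before any model call is made.
    return TEMPLATES[name].substitute(**params)


prompt = render(
    "generate",
    task="reverse a string",
    language="Python",
    constraints="no slicing",
)
print(prompt)
```

Keeping all templates in one table makes it easier to hold a consistent style across pipeline stages.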

Another important aspect is the management of outputs between agents. Symphony supports structured outputs, often in JSON, which simplifies passing complex data structures between agents without losing semantic information. This approach contrasts with plain text chaining, where outputs must be parsed heuristically, increasing the risk of errors.
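The benefit of structured output is easiest to see in code. The sketch below parses a hypothetical reviewer reply and checks the fields a downstream agent would depend on; the `passed` and `issues` field names are assumptions chosen for illustration.

```python
import json
from typing import Any, Dict


def parse_review(raw: str) -> Dict[str, Any]:
    """Parse a reviewer reply and verify the fields the next agent relies on."""
    data = json.loads(raw)
    if not isinstance(data, dict):
        raise ValueError("reply must be a JSON object")
    if not isinstance(data.get("passed"), bool):
        raise ValueError("'passed' must be a boolean")
    if not isinstance(data.get("issues"), list):
        raise ValueError("'issues' must be a list")
    return data


# Hypothetical reviewer reply; the field names are illustrative.
raw_reply = '{"passed": false, "issues": ["unused variable x", "missing docstring"]}'
review = parse_review(raw_reply)
print(review["passed"], len(review["issues"]))
```

With plain text, the same information would have to be recovered by pattern matching on phrases such as "looks good", which is far more fragile.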

Throughout the development process, it is advisable to iteratively test the Symphony pipeline by executing individual agents in isolation and then progressively integrating them into the full workflow. This method helps quickly identify bottlenecks or misconfigurations in the orchestration. Additionally, logging and monitoring the runtime execution provide valuable insights into agent performance and interaction patterns, which can guide refinements to prompts or orchestration logic.

For developers interested in hands-on examples and advanced orchestration patterns using Symphony and OpenAI Codex, our detailed guide on agent chaining and orchestration best practices offers comprehensive walkthroughs and code samples. This resource complements the foundational knowledge established here and equips you to build robust multi-agent coding pipelines tailored to your specific development needs.

While building a multi-agent coding pipeline with OpenAI Codex and Symphony orchestration streamlines complex development workflows, ensuring the security of these AI-driven processes is paramount. The article Running AI Coding Agents Safely: Enterprise Security Best Practices for Codex delves into critical strategies for safeguarding AI coding agents within enterprise environments, addressing risk mitigation, access controls, and compliance considerations that are essential for maintaining secure and reliable AI operations.

In short, Symphony’s open-source specification provides a powerful and flexible framework for chaining OpenAI Codex agents into sophisticated multi-agent coding pipelines. By leveraging its declarative orchestration model, modular agent design, and runtime execution capabilities, developers can automate complex software development workflows with high scalability, maintainability, and resilience. The following sections of this tutorial will guide you through an end-to-end implementation, including setting up your environment, writing agent specs, and deploying the pipeline to production.

Implementing the Multi-Agent Pipeline with Symphony Open-Source Spec

Building a robust multi-agent coding pipeline requires more than simply connecting individual AI models; it demands a structured orchestration layer that manages complex workflows, data exchanges, and error handling. Symphony, an open-source orchestration framework, provides an ideal solution with its specification designed to chain AI agents such as OpenAI Codex effectively. In this section, we will walk through the hands-on process of implementing a multi-agent pipeline using Symphony’s open-source spec, demonstrating how to coordinate multiple Codex agents seamlessly.

To begin, it is essential to understand the core components of the Symphony spec. At its heart, Symphony defines agents, tasks, and workflows in a declarative JSON or YAML format. Each agent represents a distinct AI service or function—in this case, an OpenAI Codex instance configured for specific coding tasks like code generation, debugging, or documentation. Tasks define the input and output contract for each agent invocation, while workflows chain these tasks together, specifying the order of execution and data dependencies.

The first step in our practical setup is to define the individual Codex agents within the Symphony configuration. For instance, you may have a “CodeGenerator” agent that accepts a natural language prompt and produces initial code snippets. Another agent, “CodeReviewer,” could take the generated code and analyze it for potential bugs or style issues. A third agent might be designated “CodeDocumenter,” responsible for creating inline comments and documentation based on the code structure. Each of these roles is encapsulated as an agent in the Symphony orchestration spec, with clearly defined input and output schemas.

Once agents are defined, tasks are created to invoke these agents with specific inputs. For example, a task named “GenerateInitialCode” would pass the user’s programming request to the “CodeGenerator” agent. The output from this task becomes the input to the subsequent “ReviewCode” task, which triggers the “CodeReviewer” agent. This chaining ensures that each agent operates on the results produced by its predecessor, maintaining a coherent flow of information.
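One way to picture how tasks and their data dependencies determine execution order is a topological sort, sketched here with Python's standard `graphlib` module (Python 3.9+). The task table layout, the `needs` field, and the `DocumentCode` task name are hypothetical, and the agents are stubs rather than Codex calls.

```python
from graphlib import TopologicalSorter
from typing import Any, Callable, Dict, List

# Hypothetical task table: each task names its agent and the tasks it depends on.
TASKS: Dict[str, Dict[str, Any]] = {
    "GenerateInitialCode": {"agent": "CodeGenerator", "needs": []},
    "ReviewCode": {"agent": "CodeReviewer", "needs": ["GenerateInitialCode"]},
    "DocumentCode": {"agent": "CodeDocumenter", "needs": ["ReviewCode"]},
}

# Stub agents standing in for Codex calls.
AGENTS: Dict[str, Callable[[str], str]] = {
    "CodeGenerator": lambda request: f"# code for: {request}",
    "CodeReviewer": lambda code: code + "\n# reviewed",
    "CodeDocumenter": lambda code: code + "\n# documented",
}


def execution_order(tasks: Dict[str, Dict[str, Any]]) -> List[str]:
    # Map each task to its prerequisites; static_order() yields prerequisites first.
    graph = {name: set(task["needs"]) for name, task in tasks.items()}
    return list(TopologicalSorter(graph).static_order())


def run(tasks: Dict[str, Dict[str, Any]], request: str) -> Dict[str, str]:
    outputs: Dict[str, str] = {}
    for name in execution_order(tasks):
        needs = tasks[name]["needs"]
        # A task without dependencies receives the user's request directly.
        payload = outputs[needs[0]] if needs else request
        outputs[name] = AGENTS[tasks[name]["agent"]](payload)
    return outputs


outputs = run(TASKS, "sort a list")
print(outputs["DocumentCode"])
```

This sketch assumes each task has at most one upstream dependency; a fuller version would merge several inputs into one payload.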

The workflow configuration is where the orchestration truly comes alive. Symphony allows you to specify conditional logic, parallel processing, and retries within the workflow, offering fine-grained control over the pipeline’s behavior. For example, if the “CodeReviewer” agent detects critical errors, the workflow can be designed to loop back and trigger the “CodeGenerator” agent with refined instructions, effectively implementing an iterative improvement cycle. Alternatively, if the review passes, the pipeline can proceed to the “CodeDocumenter” agent to enrich the code with explanations and annotations.

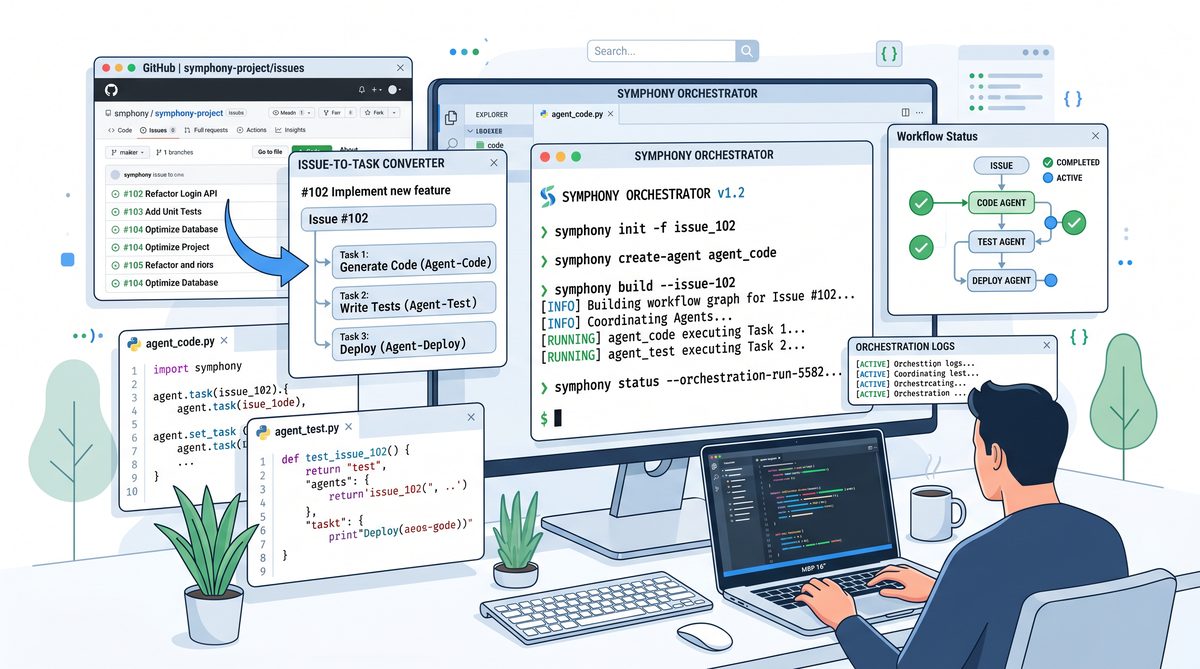

To implement this in practice, you start by installing Symphony’s open-source CLI and runtime environment. This setup provides the tools necessary to parse the orchestration spec, manage agent invocations, and monitor workflow execution. Once installed, you create your agents’ configuration files, including API keys and endpoint details for OpenAI Codex. Symphony’s modular design ensures you can easily swap or upgrade agents without rewriting the entire pipeline.

Next, author the workflow spec using Symphony’s JSON or YAML schema. The spec should clearly outline the sequence of tasks, the agents they call, and the data passed between them. For example, a simplified workflow might resemble the following structure:

1. Receive user prompt.

2. Invoke “CodeGenerator” with the prompt.

3. Pass generated code to “CodeReviewer.”

4. If review fails, loop back to step 2 with revised instructions.

5. If review succeeds, invoke “CodeDocumenter.”

6. Return the final documented code to the user.
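The six steps above can be sketched as a plain Python loop. The three agents are stubs rather than Codex calls, and the `max_rounds` cap is an added assumption so that a review that never passes cannot loop forever.

```python
from typing import Callable, Tuple


def run_workflow(
    prompt: str,
    generate: Callable[[str], str],
    review: Callable[[str], Tuple[bool, str]],
    document: Callable[[str], str],
    max_rounds: int = 3,
) -> str:
    instructions = prompt                  # step 1: receive the user prompt
    for _ in range(max_rounds):
        code = generate(instructions)      # step 2: invoke the generator
        passed, feedback = review(code)    # step 3: pass the code to the reviewer
        if passed:
            return document(code)          # steps 5 and 6: document and return
        # Step 4: loop back with revised instructions.
        instructions = f"{prompt}\nFix this issue: {feedback}"
    raise RuntimeError(f"review still failing after {max_rounds} rounds")


def generate(instructions: str) -> str:
    # Stand-in generator: adds a docstring only after being told to.
    if "docstring" in instructions:
        return 'def add(a, b):\n    """Return a + b."""\n    return a + b'
    return "def add(a, b):\n    return a + b"


def review(code: str) -> Tuple[bool, str]:
    # Stand-in reviewer: requires a docstring.
    if '"""' in code:
        return True, ""
    return False, "missing docstring"


def document(code: str) -> str:
    # Stand-in documenter: prepends a summary comment.
    return "# Adds two numbers.\n" + code


final = run_workflow("write an add function", generate, review, document)
print(final)
```

In this run the first draft fails review, the feedback is folded into the instructions, and the second draft passes and is documented.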

After defining the workflow, you deploy it using Symphony’s CLI, which validates the spec and prepares the runtime environment. At this point, you can initiate the pipeline by sending a user query to Symphony’s API gateway. The framework then handles invoking the Codex agents in the defined order, managing retries, and collecting outputs.

An important aspect of using Symphony is observability. The framework provides detailed logs and metrics on each agent invocation, including response times, errors, and data payloads. This visibility is crucial for debugging and optimizing the pipeline. For instance, if the “CodeReviewer” agent flags errors inconsistently or far more often than expected, you might need to adjust its prompt template or lower its temperature setting so that its analysis is more focused and repeatable.
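If you want comparable visibility while prototyping outside any framework, a small wrapper around each agent call is enough. The sketch below uses only the standard library; `observed` and `METRICS` are hypothetical names, and this is not how Symphony itself records metrics.

```python
import logging
import time
from typing import Callable, Dict, List

logging.basicConfig(level=logging.INFO, format="%(levelname)s %(message)s")
logger = logging.getLogger("pipeline")

# In-memory metrics store; a real setup would export these elsewhere.
METRICS: List[Dict[str, object]] = []


def observed(name: str, agent: Callable[[str], str]) -> Callable[[str], str]:
    """Wrap an agent so every call records its duration and outcome."""

    def wrapper(payload: str) -> str:
        start = time.perf_counter()
        status = "error"
        try:
            result = agent(payload)
            status = "ok"
            return result
        finally:
            # Runs on success and on failure, so errors are recorded too.
            elapsed_ms = (time.perf_counter() - start) * 1000
            METRICS.append({"agent": name, "status": status, "ms": elapsed_ms})
            logger.info("%s status=%s elapsed=%.1fms", name, status, elapsed_ms)

    return wrapper


reviewer = observed("CodeReviewer", lambda code: "no issues found")
print(reviewer("def f(): pass"))
```

Recording the outcome in a `finally` block means a failed call still leaves a metric behind, which is usually when the data matters most.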

Moreover, Symphony’s open-source nature means you can extend the system with custom plugins or integrate additional AI models beyond Codex. For example, incorporating a natural language understanding agent to preprocess user queries or a testing agent to execute and validate generated code can significantly enhance the pipeline’s capabilities. This extensibility ensures that your multi-agent system evolves alongside your development needs.

Throughout this implementation, it is advisable to maintain clear documentation and version control for your Symphony specs and agent configurations. Since these files define the entire pipeline logic, changes must be tracked meticulously to avoid regressions or unexpected behaviors. Integrating Symphony with CI/CD pipelines can automate deployment and testing, ensuring consistent performance and reliability.

For developers seeking a deeper dive into Symphony’s orchestration features and best practices for chaining AI agents, the available resources and tutorials provide useful guidance. These materials cover advanced topics such as dynamic task routing, multi-agent communication patterns, and error recovery strategies.

Building a multi-agent coding pipeline with OpenAI Codex and Symphony orchestration establishes a robust framework for distributed task management, yet optimizing continuous, autonomous coding sessions requires a deeper understanding of Codex’s extended capabilities. The article How to Use OpenAI Codex /goal for Autonomous Multi-Hour Coding Sessions in 2026 provides an in-depth exploration of leveraging the /goal command to enable Codex to maintain focus and adapt over prolonged coding periods, complementing the orchestration techniques discussed here by enhancing session autonomy and efficiency.

In conclusion, leveraging Symphony’s open-source spec to orchestrate multiple OpenAI Codex agents enables the creation of sophisticated, automated coding pipelines. This approach not only improves efficiency by distributing responsibilities across specialized agents but also enhances code quality through iterative refinement and documentation. By following the steps outlined in this section—defining agents, configuring tasks, and authoring workflows—you can build a scalable multi-agent system tailored to complex software development challenges.

Conclusion and Future Outlook

Building a multi-agent coding pipeline using OpenAI Codex in conjunction with Symphony orchestration offers a powerful approach to automating complex software development workflows. This tutorial demonstrated how the Symphony open-source specification facilitates the seamless chaining of multiple Codex agents, each responsible for distinct stages of the coding process—from requirement analysis and code generation to testing and documentation. By leveraging Symphony’s declarative workflow definitions, developers gain fine-grained control over agent interactions, enabling modular, maintainable, and scalable AI-driven pipelines.

The key takeaway is that Symphony acts as a crucial abstraction layer that manages concurrency, data flow, and error handling among Codex agents, preventing bottlenecks and ensuring smooth pipeline execution. The hands-on example highlighted typical challenges such as prompt design for individual agents, state management across tasks, and integrating outputs into a coherent final product. The approach also illustrated how orchestration enables iterative refinement cycles, where agents can review and improve code generated by their predecessors, enhancing overall code quality.

Looking ahead, the synergy between large language models like Codex and orchestration frameworks such as Symphony will continue to evolve. As AI models improve in contextual understanding and code synthesis capabilities, orchestration will play an even more critical role in coordinating complex, multi-step workflows that involve diverse tasks such as code review, security auditing, and deployment automation. Additionally, incorporating real-time feedback mechanisms and integrating human-in-the-loop checkpoints will further increase pipeline robustness and trustworthiness.

Future enhancements may also focus on extending Symphony’s capabilities to support heterogeneous agent models, including specialized AI tools for different programming languages or domains. This modularity will allow teams to tailor pipelines to specific project requirements and leverage the strengths of various AI systems collaboratively. Moreover, advances in explainability and transparency for AI-generated code will be vital to ensure maintainability and compliance with software engineering best practices.

In summary, the combination of OpenAI Codex and Symphony orchestration represents a significant step forward in automated software engineering. By mastering these tools, developers can build sophisticated multi-agent pipelines that accelerate development cycles, reduce human effort, and improve code reliability. Continued experimentation and community contributions to open-source orchestration frameworks will drive innovation and broaden the practical applications of AI-assisted coding.

