Running AI Coding Agents Safely: Enterprise Security Best Practices for Codex

AI coding agents now automate code generation, assist developers, and shorten delivery cycles. Among them, OpenAI’s Codex is a model designed specifically for code synthesis and comprehension, and enterprises increasingly use it to streamline coding workflows, enforce standards, and improve productivity. Deploying an agent like Codex in an enterprise environment, however, introduces security challenges that must be addressed rigorously.

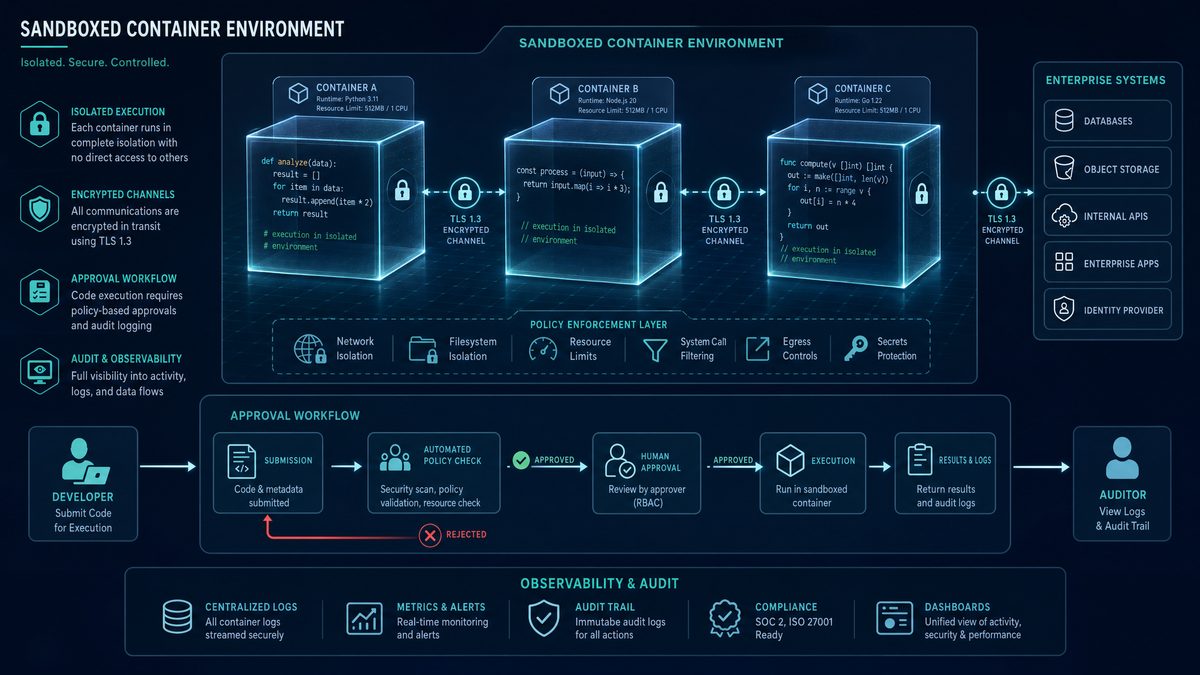

This article presents an exhaustive examination of the security landscape surrounding AI coding agents, focusing on best practices to run Codex safely within enterprise infrastructures. We explore essential mechanisms such as sandboxing, approval workflows, auto-review modes, network policies, and OpenTelemetry audit trails. By understanding these security pillars and integrating them effectively, organizations can harness Codex’s capabilities while mitigating risks related to data privacy, code integrity, and regulatory compliance.

The article is organized into eight sections, beginning with foundational security concepts and ending with future trends in AI coding agent security. Whether you are a security engineer, DevOps professional, or AI practitioner, this guide aims to give you the knowledge needed to implement a multi-layered security framework for Codex deployments.

1. Understanding the Security Landscape of AI Coding Agents

1.1 What are AI Coding Agents?

AI coding agents are software systems powered by artificial intelligence models designed to understand, generate, or modify source code. Leveraging sophisticated natural language processing and machine learning techniques, these agents can interpret developer prompts, write programmatic logic, refactor codebases, and even suggest bug fixes or optimizations.

OpenAI’s Codex is a prominent example, trained on large volumes of publicly available source code to generate code across multiple programming languages. Use cases include automated pair programming, code completion, generation of boilerplate code, and integration into CI/CD pipelines for automated scripting.

Despite their utility, AI coding agents pose significant risks in enterprise settings. The automated nature of code generation, combined with potential opacity in decision-making, can introduce vulnerabilities if not carefully managed. Understanding these risks is the first step toward establishing safe deployment strategies.

1.2 Why Security is Paramount for AI Coding Agents

Security considerations for AI coding agents extend beyond traditional software vulnerabilities. Key concerns include:

- Data privacy: AI agents interact with proprietary codebases containing sensitive business logic and intellectual property. Leakage of this data through generated code or logs can result in severe breaches.

- Code injection and malicious code generation: The model may inadvertently produce insecure code patterns, or be steered into emitting backdoors or malicious payloads through crafted prompts (prompt injection) or poisoned training data.

- Compliance and regulatory requirements: Enterprises must adhere to industry-specific regulations such as GDPR, HIPAA, or SOX, which mandate strict controls over data handling and auditing. AI-generated code and interactions must comply accordingly.

These factors underscore the necessity of embedding security controls directly into the AI coding agent lifecycle, from generation to deployment.

1.3 Threat Vectors Specific to Codex

Codex introduces unique threat vectors that enterprises must address:

| Threat Vector | Description | Enterprise Impact |
|---|---|---|
| Unauthorized Code Execution | AI-generated code may execute commands or scripts beyond intended scope, potentially compromising systems. | Data breaches, system downtime, lateral movement within networks. |
| Data Leakage through Generated Code | Codex could inadvertently embed sensitive information such as API keys, credentials, or proprietary algorithms in output. | Intellectual property loss, regulatory fines, reputational damage. |
| Network Vulnerabilities | AI agents require network access, which if misconfigured, could expose internal services or allow data exfiltration. | Unauthorized access to internal resources, data exfiltration, compliance violations. |

Understanding these threat vectors informs the design of comprehensive security controls discussed in subsequent sections.
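
To make the data-leakage threat vector concrete, the following is a minimal sketch of a secrets check that could run over generated code before it is stored or reviewed. The pattern set and the `find_secrets` helper are illustrative assumptions, not part of Codex or of any particular scanner; production secret scanners ship far larger rule sets.

```python
import re

# Illustrative patterns only (assumed for this sketch).
SECRET_PATTERNS = {
    # AWS access key IDs follow a well-known "AKIA" + 16 characters shape.
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    # A credential-like name assigned a quoted literal of 8+ characters.
    "hardcoded_credential": re.compile(
        r"(?i)\b(api[_-]?key|secret|token|password)\b\s*[:=]\s*['\"][^'\"]{8,}['\"]"
    ),
}


def find_secrets(generated_code: str) -> list[tuple[int, str]]:
    """Return (line_number, pattern_name) for each suspicious line."""
    hits = []
    for lineno, line in enumerate(generated_code.splitlines(), start=1):
        for name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                hits.append((lineno, name))
    return hits


sample = (
    "import os\n"
    'api_key = "sk_live_0123456789abcdef"\n'
    'region = os.environ["REGION"]\n'
)
print(find_secrets(sample))  # [(2, 'hardcoded_credential')]
```

A check like this is cheap enough to run on every generation, but regular expressions miss encoded or split secrets, so it complements rather than replaces the review steps described later.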

2. Deep Dive into Sandboxing for Codex Agents

2.1 What is Sandboxing?

Sandboxing is a security technique that isolates code execution within a controlled environment to prevent unintended interactions with the host system or network. By restricting the permissions, resources, and capabilities available to executable code, sandboxing mitigates the risks posed by potentially unsafe or untrusted code.

There are several levels and types of sandboxing:

- OS-level Sandboxing: Utilizing operating system features like chroot jails, user namespaces, or Windows AppContainers to isolate processes.

- Container-based Sandboxing: Employing container technologies such as Docker or Kubernetes pods to encapsulate AI agent runtimes with restricted capabilities.

- Language-level Sandboxing: Restricting code execution within managed runtime environments (e.g., Java Virtual Machine, Python sandboxes) that enforce security policies.

2.2 Implementing Sandboxing for AI Coding Agents

For Codex, sandboxing is critical since AI-generated code may be executed or tested automatically. Recommended sandboxing strategies include:

- Containerized Execution: Running Codex-generated code inside minimal containers with explicitly defined resource limits (CPU, memory) and no privileged access.

- Ephemeral Environments: Employing disposable sandboxes that reset after each execution to prevent persistence of malicious code or artifacts.

- CI/CD Pipeline Integration: Embedding sandboxed execution stages into CI/CD workflows ensures automated testing and validation occur in isolated contexts.

These approaches enable enterprises to safely evaluate Codex outputs before integrating them into production environments.
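
As a rough illustration of containerized, ephemeral execution, the sketch below builds a `docker run` command line with resource limits, no network, and no extra privileges. The `sandbox_command` helper, the image name, the paths, and the specific limit values are assumptions chosen for the example; the flags themselves are standard Docker CLI options.

```python
import shlex


def sandbox_command(image: str, workdir: str, command: list[str],
                    memory: str = "256m", cpus: str = "0.5") -> list[str]:
    """Build an argv list for running a command in a locked-down container."""
    return [
        "docker", "run",
        "--rm",                                 # discard the container afterwards (ephemeral)
        "--network", "none",                    # no network access at all
        "--memory", memory,                     # memory ceiling
        "--cpus", cpus,                         # CPU ceiling
        "--pids-limit", "64",                   # cap process count (fork bombs)
        "--read-only",                          # read-only root filesystem
        "--cap-drop", "ALL",                    # drop all Linux capabilities
        "--security-opt", "no-new-privileges",  # block privilege escalation via setuid
        "--user", "65534:65534",                # run as an unprivileged uid:gid
        "--volume", f"{workdir}:/work:ro",      # mount generated code read-only
        "--workdir", "/work",
        image, *command,
    ]


# Hypothetical image and path, for illustration only.
argv = sandbox_command("python:3.12-slim", "/tmp/codex-run-1",
                       ["python", "generated_test.py"])
print(shlex.join(argv))
```

Returning an argument list rather than a shell string avoids quoting problems when the command itself comes from generated output; the list can be passed directly to `subprocess.run` in a CI stage.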

2.3 Benefits of Sandboxing in Enterprise

Sandboxing delivers multiple security and operational advantages:

- Isolation: AI-generated code executes separately from critical systems, preventing unauthorized access or damage.

- Resource Control: Limits on compute and network usage prevent denial-of-service (DoS) attacks or resource exhaustion.

- Auditability: Sandboxed environments facilitate detailed logging and monitoring of code behavior for forensic analysis.
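
The resource-control point can be sketched at the process level with POSIX resource limits. This is a minimal example under stated assumptions: it runs on Linux or another POSIX system, the limit values are arbitrary, and `run_limited` is a hypothetical helper. It limits resources only and is not a substitute for the isolation a container or VM provides.

```python
import resource
import subprocess
import sys


def _apply_limits() -> None:
    # Runs in the child process just before the interpreter is exec'd (POSIX only).
    resource.setrlimit(resource.RLIMIT_CPU, (2, 2))                    # CPU seconds
    resource.setrlimit(resource.RLIMIT_FSIZE, (1_000_000, 1_000_000))  # bytes per file written


def run_limited(code: str, timeout: float = 10.0) -> subprocess.CompletedProcess:
    """Run a Python snippet in a child process with CPU and file-size limits."""
    return subprocess.run(
        [sys.executable, "-I", "-c", code],  # -I: isolated mode, ignores user site and env vars
        preexec_fn=_apply_limits,
        capture_output=True,
        text=True,
        timeout=timeout,                     # wall-clock backstop on top of the CPU limit
    )


result = run_limited("print(sum(range(10)))")
print(result.returncode, result.stdout.strip())
```

A snippet that spins in an infinite loop would be terminated by the kernel once it exhausts its CPU allowance, while the `timeout` argument covers code that sleeps or blocks without using CPU.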

2.4 Best Practices for Sandboxing Codex

To maximize sandboxing effectiveness, enterprises should adopt these best practices:

- Apply Least Privilege: Grant only essential permissions and capabilities to sandboxed processes.

- Monitor Sandbox Behavior: Continuously observe resource usage, system calls, and network activity for anomalies.

- Regular Updates: Keep sandboxing platforms and container images patched against known vulnerabilities.

- Use Immutable Infrastructure: Deploy sandbox environments as immutable artifacts to prevent unauthorized changes.

3. Approval Workflows: Ensuring Human Oversight

3.1 The Need for Approval Mechanisms

While AI coding agents automate many tasks, unchecked automated code deployment can introduce critical risks. Approval workflows ensure human oversight by requiring validation of AI-generated code before integration. This balances the efficiency of automation with the risk mitigation inherent in manual review.

Approvals help:

- Prevent propagation of insecure or non-compliant code

- Ensure accountability and traceability of changes

- Maintain alignment with organizational coding standards and policies

3.2 Designing Approval Workflows for Codex Outputs

Effective approval workflows for Codex should incorporate multiple layers of review:

- Developer Review: Initial inspection by the author or a peer to confirm correctness and quality.

- Security Review: Validation by security teams focusing on vulnerability risks, secrets exposure, and compliance.

- Compliance Review: Final assessment for regulatory adherence and auditability.

Integration with code review tools such as GitHub Pull Requests (PRs) or GitLab Merge Requests enables seamless workflow enforcement and traceability.
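
The layered review described above can be modeled as a simple gate that refuses to merge until every required stage has signed off. The sketch below is illustrative only: `ChangeRequest`, the stage names, and the approver identifiers are assumptions, and in practice the same rule would usually be enforced through the code review platform's own branch protection settings.

```python
from dataclasses import dataclass, field

# Review stages taken from the three layers described above.
REQUIRED_REVIEWS = ("developer", "security", "compliance")


@dataclass
class ChangeRequest:
    change_id: str
    approvals: dict[str, str] = field(default_factory=dict)  # stage -> approver

    def approve(self, stage: str, approver: str) -> None:
        if stage not in REQUIRED_REVIEWS:
            raise ValueError(f"unknown review stage: {stage}")
        self.approvals[stage] = approver

    def missing_reviews(self) -> list[str]:
        return [s for s in REQUIRED_REVIEWS if s not in self.approvals]

    def is_mergeable(self) -> bool:
        return not self.missing_reviews()


cr = ChangeRequest("CR-1")  # hypothetical identifier
cr.approve("developer", "alice")
cr.approve("security", "bob")
print(cr.is_mergeable(), cr.missing_reviews())  # False ['compliance']
```

Recording who approved each stage, rather than a bare boolean, keeps the accountability trail that the approval process is meant to provide.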

3.3 Automating Approval Triggers

To optimize efficiency, enterprises can define criteria for auto-approval versus manual review by leveraging metadata and risk scoring:

- Low-risk Changes: Minor code formatting or comments may be auto-approved after passing automated tests.

- High-risk Changes: Code that modifies critical infrastructure or handles sensitive data requires mandatory manual review.

- Risk Scoring: AI or heuristic-based scoring models analyze code for vulnerability patterns or compliance issues to inform approval decisions.

This hybrid approach reduces bottlenecks while maintaining rigorous security controls.
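
One way to picture the routing logic is a small heuristic scorer. Everything in this sketch is an assumption made for illustration: the path prefixes, the weights, and the threshold are placeholders that an organization would calibrate against its own repositories and incident history.

```python
from dataclasses import dataclass

SENSITIVE_PATH_PREFIXES = ("infra/", "auth/", "payments/")  # hypothetical layout
DOC_SUFFIXES = (".md", ".rst", ".txt")


@dataclass(frozen=True)
class Change:
    path: str
    lines_changed: int
    tests_passed: bool


def risk_score(change: Change) -> int:
    """Additive heuristic: higher means riskier."""
    score = 0
    if change.path.startswith(SENSITIVE_PATH_PREFIXES):
        score += 50   # touches critical infrastructure or sensitive data
    if not change.path.endswith(DOC_SUFFIXES):
        score += 20   # executable code rather than documentation
    if change.lines_changed > 200:
        score += 20   # large diffs are harder to reason about
    if not change.tests_passed:
        score += 30   # failing tests never auto-approve
    return score


def route(change: Change, auto_approve_below: int = 20) -> str:
    return "auto-approve" if risk_score(change) < auto_approve_below else "manual-review"


print(route(Change("docs/readme.md", 5, True)))   # auto-approve
print(route(Change("auth/login.py", 10, True)))   # manual-review
```

Keeping the score additive and the rules explicit makes each routing decision easy to explain in an audit, which a learned scoring model would not provide by default.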

3.4 Case Studies: Successful Approval Implementations

Several enterprises have pioneered effective approval workflows for AI coding agents:

- Global Financial Institution: Implemented a three-tiered approval process integrated with GitHub PRs, reducing security incidents by 40% within six months.

- Technology Firm: Leveraged risk scoring algorithms to auto-approve low-risk code changes, increasing developer throughput by 30% without compromising security.

- Healthcare Provider: Enforced compliance reviews for all AI-generated code handling patient data, ensuring HIPAA adherence and audit readiness.

Common pitfalls include insufficient reviewer training and lack of integration with existing DevOps tools, which can delay approvals and frustrate developers.

4. Auto-Review Mode: Leveraging AI for Preliminary Code Assessment

4.1 What is Auto-Review Mode?

Auto-Review Mode refers to an automated preliminary assessment of AI-generated code, using AI and static analysis tools, that runs before human review. A Codex workflow can be set up so that outputs are automatically scanned for common security flaws, code smells, or policy violations.

This functionality accelerates feedback loops and improves code quality by catching issues early.

4.2 How Auto-Review Enhances Security

Auto-Review adds a critical security layer by:

- Detecting insecure coding patterns: Identifying use of deprecated functions, unsafe APIs, or insecure cryptography.

- Flagging potential vulnerabilities: Highlighting buffer overflows, injection risks, or privilege escalations.

- Enforcing compliance: Checking adherence to coding standards, license restrictions, and data handling policies.

By automating these checks, organizations reduce human error and improve consistency of reviews.
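
As a small illustration of pattern detection, the sketch below walks a Python syntax tree and flags calls to `eval`/`exec` and to weak `hashlib` digests. The rule sets and the `find_risky_calls` name are assumptions for this example; dedicated tools cover far more cases, and this check only recognizes the direct `hashlib.md5(...)` call form.

```python
import ast

RISKY_CALLS = {"eval", "exec"}   # builtins that run arbitrary code
WEAK_HASHES = {"md5", "sha1"}    # digests considered weak for security purposes


def find_risky_calls(source: str) -> list[tuple[int, str]]:
    """Return sorted (line_number, call_name) pairs for flagged calls."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if not isinstance(node, ast.Call):
            continue
        func = node.func
        if isinstance(func, ast.Name) and func.id in RISKY_CALLS:
            findings.append((node.lineno, func.id))
        elif (isinstance(func, ast.Attribute) and func.attr in WEAK_HASHES
              and isinstance(func.value, ast.Name) and func.value.id == "hashlib"):
            findings.append((node.lineno, f"hashlib.{func.attr}"))
    return sorted(findings)


sample = (
    "import hashlib\n"
    "digest = hashlib.md5(b'x').hexdigest()\n"
    "value = eval('1 + 1')\n"
)
print(find_risky_calls(sample))  # [(2, 'hashlib.md5'), (3, 'eval')]
```

Working on the syntax tree instead of raw text avoids false hits on comments and string literals that merely mention these names.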

4.3 Setting up Auto-Review in Codex

Configuring Auto-Review involves:

- Defining rulesets: Customizing static code analysis tools such as ESLint, SonarQube, or Bandit to suit organizational policies.

- Integrating with Codex: Embedding these tools into Codex workflows to automatically scan outputs before approvals.

- Customizing thresholds: Setting severity levels and determining which violations block approvals versus warnings.

This setup ensures that auto-reviews complement human oversight rather than replace it.
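
The threshold step can be sketched as a function that splits scanner findings into blocking issues and warnings. The finding format (a dict with `rule` and `severity` keys) and the severity scale are assumptions for illustration; real tools each emit their own report schema, which would need to be mapped onto something like this.

```python
SEVERITY_ORDER = {"low": 1, "medium": 2, "high": 3, "critical": 4}


def evaluate_findings(findings: list[dict], block_at: str = "high") -> dict:
    """Findings at or above `block_at` block approval; the rest become warnings."""
    threshold = SEVERITY_ORDER[block_at]
    blocking = [f for f in findings if SEVERITY_ORDER[f["severity"]] >= threshold]
    warnings = [f for f in findings if SEVERITY_ORDER[f["severity"]] < threshold]
    return {
        "status": "blocked" if blocking else "passed",
        "blocking": blocking,
        "warnings": warnings,
    }


# Hypothetical findings, as a scanner adapter might produce them.
report = evaluate_findings([
    {"rule": "weak-hash", "severity": "medium"},
    {"rule": "sql-injection", "severity": "high"},
])
print(report["status"], [f["rule"] for f in report["blocking"]])  # blocked ['sql-injection']
```

Making the blocking level a parameter lets a team start permissive and tighten the gate over time without changing the scanner configuration itself.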

4.4 Limitations and Risks of Auto-Review

Despite its advantages, Auto-Review has limitations:

- False positives: Overly sensitive rules may flag benign code, causing unnecessary delays.

- False negatives: Sophisticated vulnerabilities may evade automated detection.

- Over-reliance: Sole dependence on auto-review can create blind spots if human review is neglected.

Therefore, it is imperative to maintain a balanced approach combining automated and manual reviews.

5. Network Policies: Controlling Connectivity and Data Flow

5.1 Why Network Policies Matter for AI Coding Agents

AI coding agents like Codex require network connectivity for model access, telemetry, and integration with repositories. Without strict network policies, there is a risk of:

- Data exfiltration through unauthorized channels

- Access to sensitive internal resources by untrusted code

- Exposure to external threats via open network paths

Network policies act as gatekeepers, defining and enforcing permitted data flows and connectivity.

5.2 Defining Network Policies for Codex Deployments

Key network policy strategies include:

- IP and Domain Whitelisting: Restricting network connections to known, trusted endpoints essential for Codex operations.

- Blacklisting Suspicious Domains: Blocking access to unverified or malicious external sites.

- Environment Segmentation: Isolating AI agent environments into separate network segments or VLANs to contain breaches.
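
A domain allowlist (whitelist) check of the kind an egress proxy might apply can be sketched as follows. The host names are illustrative entries, not an authoritative list of what a Codex deployment needs, and `is_allowed` is a hypothetical helper.

```python
from urllib.parse import urlsplit

ALLOWED_HOSTS = {"api.openai.com", "github.com"}   # exact matches (illustrative)
ALLOWED_SUFFIXES = (".internal.example.com",)      # hypothetical internal zone


def is_allowed(url: str) -> bool:
    """Permit only HTTPS requests to allowlisted hosts."""
    parts = urlsplit(url)
    host = (parts.hostname or "").lower()
    if parts.scheme != "https" or not host:
        return False
    # Suffixes start with a dot so "evilinternal.example.com" cannot match.
    return host in ALLOWED_HOSTS or host.endswith(ALLOWED_SUFFIXES)


print(is_allowed("https://api.openai.com/v1/responses"))    # True
print(is_allowed("https://api.openai.com.evil.example/x"))  # False
```

Matching on the parsed hostname rather than on a substring of the URL is the important design choice here: substring checks are easily bypassed by hosts that merely contain an allowed name.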

5.3 Implementing Network Controls

Enterprises can leverage multiple tools for robust network control:

- Firewalls: Configured with granular rules to enforce whitelisting and block unauthorized traffic.

- Virtual Private Networks (VPNs): Securing communications and restricting access to internal networks.

- Proxies: Mediating outbound connections for inspection and filtering.

- Role-Based Access Control (RBAC): Limiting network resource access based on user or service roles.

5.4 Monitoring and Responding to Network Anomalies

Continuous network monitoring is essential to detect and respond to anomalies such as unusual data flows or connection attempts:

- Traffic Analysis Tools: Deploy network intrusion detection systems (NIDS) or flow analyzers to monitor real-time traffic.

- Alerting and Incident Response: Establish automated alerts for suspicious activity and predefined procedures to investigate and remediate incidents.

These capabilities enable rapid containment and minimize damage from network-based threats.
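
To illustrate what flagging an unusual data flow can mean in practice, here is a minimal sketch that compares the current outbound byte count against a recent baseline using a z-score. The threshold, the minimum baseline size, and the sample figures are assumptions; production detection systems use richer models and more signals than a single counter.

```python
from statistics import mean, pstdev


def is_anomalous(history: list[float], current: float, z_threshold: float = 3.0) -> bool:
    """Flag `current` if it deviates from the baseline by more than z_threshold sigmas."""
    if len(history) < 5:
        return False              # too little data to form a baseline
    mu = mean(history)
    sigma = pstdev(history)
    if sigma == 0:
        return current != mu      # perfectly flat baseline: any change is notable
    return abs(current - mu) / sigma > z_threshold


# Hypothetical outbound kilobytes per minute from a sandbox network segment.
baseline = [100, 110, 95, 105, 90, 100]
print(is_anomalous(baseline, 104))   # False
print(is_anomalous(baseline, 5000))  # True
```

A result of `True` would feed the alerting path rather than block traffic directly, since simple statistical checks produce false positives during legitimate bursts such as dependency downloads.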

6. OpenTelemetry Audit Trails: Achieving Visibility and Compliance

6.1 Introduction to OpenTelemetry

OpenTelemetry is an open-source observability framework that standardizes the collection of telemetry data including logs, metrics, and traces across distributed systems. In the context of AI coding agents, OpenTelemetry enables enterprises to gain deep insights into AI workflows, facilitating security audits and compliance monitoring.

6.2 Setting up OpenTelemetry for Codex Agents

Instrumentation of Codex workflows with OpenTelemetry involves:

- Embedding SDKs: Integrating OpenTelemetry SDKs into AI agent platforms or CI/CD pipelines to capture telemetry data.

- Data Collection: Aggregating detailed logs of code generation requests, user interactions, and system events.

- Traceability: Capturing spans to track the lifecycle of AI-generated code from prompt to deployment.

6.3 Leveraging Audit Trails for Security

Audit trails collected via OpenTelemetry provide the following security benefits:

- Accountability: Logging user actions and AI output ensures traceability for forensic investigations.

- Anomaly Detection: Identifying unusual patterns such as repeated failed code approvals or suspicious prompt inputs.

- Compliance Reporting: Generating evidence for regulatory audits demonstrating control adherence.
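
As an example of the anomaly-detection use, the sketch below scans audit events for users with repeated rejected approvals. The event shape (dicts with `user`, `event`, and `outcome` keys) and the threshold are assumptions for illustration; in practice the records would be exported telemetry queried in a SIEM or log store.

```python
from collections import Counter


def users_with_repeated_rejections(events: list[dict], threshold: int = 3) -> list[str]:
    """Return users whose approval requests were rejected at least `threshold` times."""
    rejected = Counter(
        e["user"] for e in events
        if e["event"] == "approval" and e["outcome"] == "rejected"
    )
    return sorted(user for user, count in rejected.items() if count >= threshold)


# Hypothetical audit records.
events = [
    {"user": "dev-1", "event": "approval", "outcome": "rejected"},
    {"user": "dev-1", "event": "approval", "outcome": "rejected"},
    {"user": "dev-2", "event": "approval", "outcome": "approved"},
    {"user": "dev-1", "event": "approval", "outcome": "rejected"},
    {"user": "dev-2", "event": "generation", "outcome": "ok"},
]
print(users_with_repeated_rejections(events))  # ['dev-1']
```

A hit does not prove misuse; it is a prompt for a human to look at what was being submitted and why it kept failing review.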

6.4 Integrating OpenTelemetry with Enterprise Monitoring

Enterprises typically correlate OpenTelemetry data with existing monitoring infrastructure:

- SIEM Systems: Feeding telemetry into Security Information and Event Management platforms for centralized analysis.

- SOAR Platforms: Integrating with Security Orchestration, Automation, and Response systems to automate incident responses based on telemetry insights.

- Dashboards and Reporting: Creating customized dashboards for real-time visibility into Codex security posture.

7. Holistic Security Framework for Safe Codex Deployment

7.1 Combining Sandboxing, Approvals, Auto-Review, Network Policies, and Auditing

Each security mechanism discussed so far serves a distinct purpose, but their true power lies in integration. A layered defense strategy combines:

- Sandboxing: Isolates execution and limits system impact.

- Approval Workflows: Enforces human oversight and accountability.

- Auto-Review: Provides automated preliminary scanning to boost efficiency.

- Network Policies: Controls data flow and external interactions.

- OpenTelemetry Audit Trails: Enables visibility, monitoring, and compliance.

These layers collectively reduce attack surfaces, detect anomalies early, and enforce policy adherence throughout the Codex lifecycle.

7.2 Continuous Improvement and Feedback Loops

Security is not static. Enterprises must regularly assess and refine their defenses through:

- Security Assessments: Conducting audits, penetration testing, and red team exercises targeting AI coding agents.

- Policy Updates: Revising network rules, approval criteria, and sandbox configurations based on emerging threats.

- Feedback Loops: Incorporating lessons learned from incidents and audit findings to strengthen controls.

Such ongoing vigilance ensures resilience against evolving attack vectors.

7.3 Training and Awareness for Teams Using Codex

Technical controls must be complemented by well-informed personnel. Key training initiatives include:

- Developer Education: Teaching secure coding practices and awareness of AI-specific risks.

- Security Staff Training: Equipping security teams with knowledge to monitor and respond to AI-generated code threats.

- Promoting Security-First Culture: Encouraging collaboration between developers, security, and compliance teams to prioritize safety.

8. Future Trends and Enhancements in AI Coding Agent Security

8.1 Advances in AI Explainability and Trustworthiness

Emerging research focuses on making AI coding agents more transparent and interpretable. Explainability techniques aim to:

- Provide rationales for code generation decisions

- Highlight confidence levels and uncertainty

- Expose potential biases or training data limitations

These advances will enhance approval and review workflows by enabling informed human judgment and building trust in AI outputs.

8.2 Enhanced Sandbox Technologies

Future sandboxing innovations include:

- Hardware-Level Isolation: Leveraging technologies such as Intel SGX enclaves or AMD SEV encrypted virtual machines to isolate AI code execution from the host.

- AI-Driven Sandbox Monitoring: Employing machine learning models to detect anomalous sandbox behavior in real-time.

These developments will strengthen isolation guarantees and improve threat detection capabilities.

8.3 Evolving Standards and Regulations

Regulatory bodies and industry consortia are actively developing frameworks governing AI-generated code:

- Standards for auditability and traceability of AI outputs

- Certification processes for AI coding agents in critical sectors

- Guidelines on data privacy and intellectual property protection

Enterprises must stay abreast of these changes to ensure compliance and future-proof security strategies.

Conclusion

Deploying AI coding agents like OpenAI’s Codex safely within enterprise environments demands a multi-faceted security approach. Sandboxing provides a robust containment mechanism to isolate AI-generated code execution, preventing unauthorized system interactions. Approval workflows ensure essential human oversight, balancing automation efficiency with security assurances. Auto-review modes leverage AI to perform preliminary code assessments, catching vulnerabilities early while complementing human review.

Network policies regulate data flows and connectivity, crucial to preventing data exfiltration and limiting exposure to threats. OpenTelemetry audit trails deliver comprehensive observability, enabling accountability, anomaly detection, and compliance adherence. Together, these components form a holistic security framework that protects enterprise assets while unlocking the productivity benefits of AI coding agents.

As AI coding technology evolves, enterprises must commit to continuous improvement, ongoing training, and adoption of emerging standards to maintain a resilient security posture. By implementing these best practices, organizations can confidently integrate Codex into their development ecosystems, harnessing innovation without compromising security.