How Anthropic’s SpaceX Compute Deal Changes the AI Landscape: Scaling Claude for Enterprise

Introduction

Anthropic, a pioneering AI research company founded by former OpenAI members, has rapidly emerged as a leading force in developing advanced AI assistants focused on safety, reliability, and enterprise utility. At the heart of its innovation is Claude, Anthropic’s flagship AI assistant and large language model designed to enable natural and safe human-AI interactions. Claude distinguishes itself through its emphasis on interpretability and ethical AI principles, positioning Anthropic as a key player in the maturing AI ecosystem.

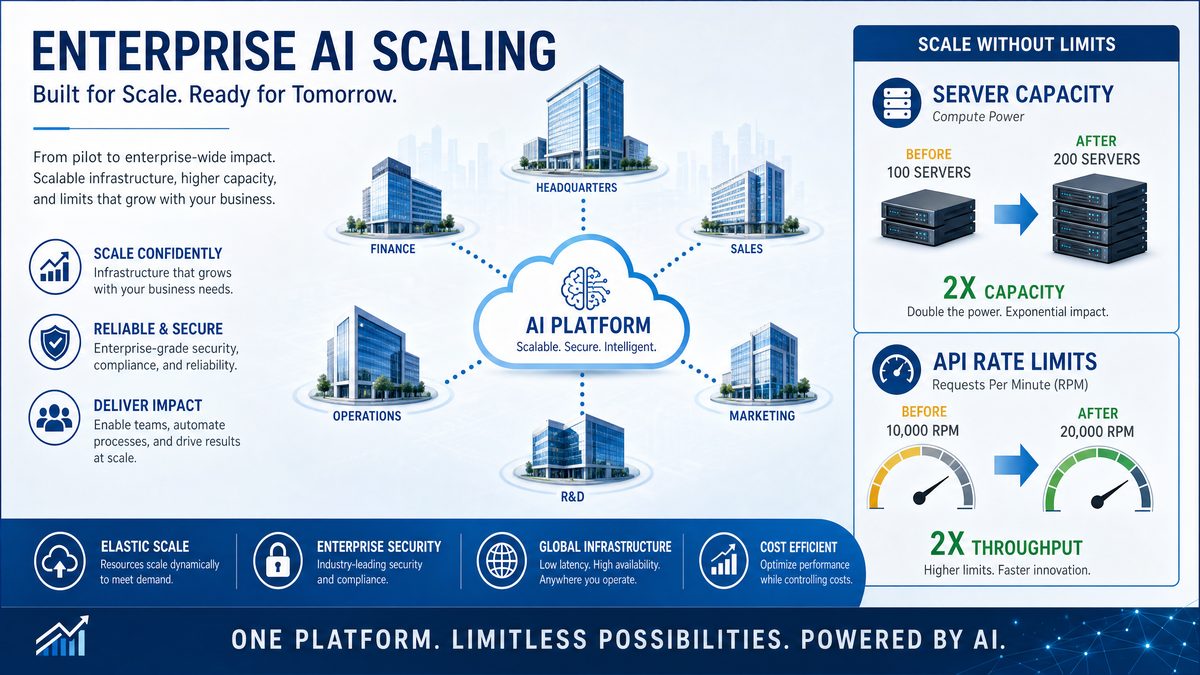

The recently announced compute partnership between Anthropic and SpaceX is a major milestone for the company and for the AI industry. The deal covers more than 300 megawatts (MW) of compute capacity, an unusually large allocation that signals a shift in how AI infrastructure is provisioned for enterprise-scale applications. Beyond easing training and inference bottlenecks, the added capacity lets Anthropic expand Claude’s capabilities, including doubling code context windows and significantly raising API rate limits.

This article aims to provide a comprehensive examination of the Anthropic-SpaceX compute deal, exploring its technical implications for scaling Claude, the resulting benefits for enterprise customers, and the broader impact on the competitive AI landscape. Readers will gain detailed insights into how this partnership transforms Anthropic’s operational capacity, empowers complex AI workflows, and reshapes industry standards for AI compute infrastructure.

1. Understanding the Anthropic-SpaceX Compute Deal

1.1. Details of the 300+ MW Compute Agreement

The core of Anthropic’s announcement is a strategic partnership with SpaceX that provides access to compute infrastructure drawing more than 300 megawatts of power, dedicated to AI workloads. For context, 300 MW is roughly the electrical demand of a small city, here devoted entirely to Anthropic’s model training and inference.

This allocation goes far beyond typical AI compute agreements, which often range in the tens of megawatts for leading AI labs. For example, OpenAI’s early compute arrangements were estimated in the low tens of megawatts, and even the largest hyperscale cloud providers rarely dedicate this scale exclusively to a single AI research entity. The partnership allows Anthropic to deploy hundreds of thousands of GPUs or AI accelerators simultaneously, enabling parallelism and throughput at a scale previously unimaginable.
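The step from megawatts to accelerator counts is simple arithmetic, and a rough sketch makes the "hundreds of thousands" figure easier to sanity-check. The per-accelerator power draw and the power usage effectiveness (PUE) below are illustrative assumptions, not figures from the announcement, and the function name is hypothetical.

```python
def accelerators_supported(facility_mw: float,
                           watts_per_accelerator: float,
                           pue: float) -> int:
    """Estimate how many accelerators a facility power budget can host.

    PUE (power usage effectiveness) is total facility power divided by
    IT power, so facility power / PUE is what remains for the hardware.
    """
    if watts_per_accelerator <= 0 or pue < 1.0:
        raise ValueError("watts_per_accelerator must be > 0 and pue >= 1.0")
    it_power_watts = facility_mw * 1_000_000 / pue
    return int(it_power_watts // watts_per_accelerator)


# Assumed inputs (not from the source): 1,000 W per accelerator including
# its share of host CPU, memory, and networking, and a PUE of 1.25.
print(accelerators_supported(300, 1_000, 1.25))  # prints 240000
```

Under these assumptions a 300 MW budget supports a few hundred thousand accelerators. Lower per-device power or a better PUE raises the count, and the reverse lowers it.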

Such a magnitude of power translates directly into expanded operational capacity. Anthropic can now train larger models faster, run inference at greater scale, and support a vastly increased number of API calls. This compute abundance mitigates prior constraints that limited model size, context length, and throughput, empowering the company to push the boundaries of AI performance and enterprise usability.

1.2. Role of SpaceX’s Infrastructure and Technology

SpaceX is renowned not only for its aerospace innovations but also for its cutting-edge technology infrastructure and energy management capabilities. Partnering with SpaceX affords Anthropic access to advanced facilities optimized for high-density compute, low-latency networking, and green energy sourcing.

SpaceX’s energy infrastructure emphasizes sustainability, leveraging renewable energy and efficient power distribution systems that reduce the environmental footprint of running massive AI workloads. This synergy aligns with Anthropic’s commitment to responsible AI development, as it addresses growing concerns about the carbon intensity of training large AI models.

Beyond raw compute power, the strategic collaboration offers potential expansion opportunities. With SpaceX’s expertise in satellite communications and global networking, future integrations could enhance distributed compute, edge AI deployments, and real-time data processing capabilities, extending Anthropic’s technological reach beyond traditional data centers.

1.3. Strategic Timing and Market Context

The timing of this deal is no coincidence. Demand is surging for more powerful models that can handle complex tasks across enterprise verticals, and accelerated digital transformation, remote work, and broader AI adoption have sharply increased compute requirements.

Anthropic’s move positions it strategically against competitors such as OpenAI, Google DeepMind, and Meta AI, which are also scaling their infrastructure but have yet to announce compute partnerships of this scale. This compute advantage lets Anthropic challenge incumbents on model capabilities, reliability, and enterprise-grade service levels.

Ultimately, this partnership cements Anthropic’s role as a formidable enterprise AI powerhouse, capable of delivering next-generation AI solutions with unmatched scale, speed, and safety assurances.

2. Technical Impact: Scaling Claude with Massive Compute

2.1. What Scaling Claude Means

Claude’s architecture is based on transformer models optimized for natural language understanding, generation, and reasoning. However, to unlock its full potential, Claude requires extensive compute resources for both training and inference. Scaling Claude means increasing the model size, expanding context windows, accelerating training iterations, and enhancing response throughput.

With the infusion of 300+ MW of compute, Anthropic can train larger versions of Claude with billions or potentially trillions of parameters. Larger models can capture more nuanced language patterns, improving accuracy, coherence, and the ability to perform complex reasoning tasks. Additionally, faster training cycles mean Anthropic can iterate on model improvements more rapidly, reducing time-to-market for new features.

2.2. Doubling of Claude Code Limits

One of the most impactful technical enhancements enabled by this compute scaling is the doubling of Claude’s code context window limits. Context windows define the maximum length of input (text, code, or dialogue history) the AI model can process at once. Larger context windows allow the model to understand and generate longer sequences without losing track of previous information.

Previously, Claude’s code context window held approximately 100,000 tokens of code or text. With the new infrastructure, this limit has doubled to 200,000 tokens in a single context. This expansion matters most for use cases like:

- Complex software development where entire codebases or multi-file projects can be analyzed in one interaction.

- Extended multi-turn conversations retaining long histories, crucial for customer support or collaborative workflows.

- Processing large documents, contracts, or datasets without truncation, improving accuracy and relevance.

The ability to maintain a larger context window materially improves Claude’s performance on tasks requiring deep understanding of extended inputs, making it a more powerful tool for developers and enterprises alike.
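To see what the doubled window means in practice, a team might estimate whether a set of source files fits in one request. The sketch below is a rough pre-check only. It assumes about four characters per token, a common rule of thumb that varies with content and tokenizer, and the function names and the reserved output budget are hypothetical.

```python
CHARS_PER_TOKEN = 4  # assumption: rough heuristic, not a real tokenizer


def estimate_tokens(text: str) -> int:
    """Approximate token count using ceiling division on character length."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_in_context(files: dict[str, str],
                    context_limit: int,
                    reserved_for_output: int = 4_000) -> tuple[bool, int]:
    """Return (fits, estimated_input_tokens) for a set of files.

    reserved_for_output leaves room for the model's reply.
    """
    total = sum(estimate_tokens(src) for src in files.values())
    return total + reserved_for_output <= context_limit, total


# Synthetic stand-in for a codebase of about 600,000 characters.
codebase = {"service.py": "x" * 400_000, "models.py": "y" * 200_000}
print(fits_in_context(codebase, context_limit=100_000))  # (False, 150000)
print(fits_in_context(codebase, context_limit=200_000))  # (True, 150000)
```

The same synthetic codebase overflows a 100,000-token window but fits in a 200,000-token one. For real budgeting, an exact tokenizer count should replace the heuristic.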

2.3. API Rate Limit Increases and Their Technical Implications

Beyond model improvements, the deal also facilitates significant increases in API rate limits and throughput. API rate limits dictate how many requests an application can send to the AI model per unit time, impacting concurrency and responsiveness.

Prior to the deal, Anthropic’s API rate limits were conservative to balance system stability with demand. The expanded compute capacity allows for dramatically higher throughput, enabling:

- Lower-latency responses, enhancing real-time applications such as chatbots and interactive assistants.

- Higher concurrency, allowing thousands of simultaneous requests from enterprise clients without degradation.

- Improved robustness in burst workloads, critical for scenarios like live customer support or coding events.

These enhancements unlock new possibilities for integrating Claude into mission-critical business workflows that require consistent, scalable AI performance at enterprise scale.
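On the client side, applications usually pace their own requests to stay within whatever limit applies. One common way to do that is a token bucket, sketched below. This is a generic technique, not Anthropic’s server-side mechanism, and the class name, rate, and capacity values are illustrative assumptions.

```python
import time


class TokenBucket:
    """Client-side limiter: `rate` requests per second, bursts up to `capacity`."""

    def __init__(self, rate: float, capacity: int, clock=time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.tokens = float(capacity)  # start with a full bucket
        self.clock = clock             # injectable so tests can fake time
        self.last = clock()

    def try_acquire(self) -> bool:
        """Take one token if available; return False when the caller should wait."""
        now = self.clock()
        # Refill in proportion to elapsed time, never above capacity.
        self.tokens = min(self.capacity,
                          self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


# Hypothetical limit: 5 requests per second with bursts of up to 10.
bucket = TokenBucket(rate=5, capacity=10)
allowed = sum(bucket.try_acquire() for _ in range(25))
print(f"{allowed} of 25 back-to-back requests allowed")
```

A higher rate limit translates into a larger `rate` and `capacity`, so fewer calls are deferred during bursts. Callers that receive `False` would typically sleep briefly or queue the request.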

3. Enterprise Implications of the Compute Expansion

3.1. Enabling More Complex and Customized Workflows

The substantial increase in Claude’s compute resources and capabilities directly empowers enterprises to build more sophisticated AI-powered workflows. Key benefits include:

- Longer, Multi-turn Conversations: Businesses can deploy conversational agents capable of sustaining detailed, context-rich interactions over extended periods without losing track of prior dialogue, improving user satisfaction and issue resolution rates.

- Complex Code Generation and Analysis: Developers benefit from Claude’s doubled code limits to generate, review, and debug large codebases efficiently, accelerating software development cycles.

- Customized AI Solutions: Enterprises can fine-tune Claude or build domain-specific applications that leverage its expanded context and throughput to meet unique business requirements.

These capabilities enable a new class of AI applications that were previously infeasible due to compute and model limitations.

3.2. Improving Reliability and Availability for Business Use

Enterprise adoption demands stringent Service Level Agreements (SLAs) with high availability and minimal downtime. The increased compute capacity allows Anthropic to:

- Distribute workloads across redundant hardware clusters, reducing single points of failure.

- Implement real-time autoscaling, adjusting resources dynamically to meet fluctuating demand.

- Enhance disaster recovery mechanisms, ensuring business continuity for critical AI services.

These improvements translate to more reliable AI service delivery, enabling businesses to integrate Claude into mission-critical systems with confidence, including regulated industries with strict compliance requirements.
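The effect of redundancy on availability can be sketched with a standard formula: if each cluster is up with probability a and failures are independent, at least one of n clusters is up with probability 1 - (1 - a)^n. The independence assumption is a simplification, since real clusters can share failure causes, and the 99% figure below is illustrative rather than a published SLA.

```python
def combined_availability(single: float, replicas: int) -> float:
    """Availability of `replicas` independent clusters when any one suffices."""
    if not 0.0 <= single <= 1.0 or replicas < 1:
        raise ValueError("single must be in [0, 1] and replicas >= 1")
    return 1.0 - (1.0 - single) ** replicas


def downtime_minutes_per_year(availability: float) -> float:
    """Expected unavailable minutes in a 365-day year."""
    return (1.0 - availability) * 365 * 24 * 60


# Assumed per-cluster availability of 99% (illustrative only).
for n in (1, 2, 3):
    a = combined_availability(0.99, n)
    print(n, f"{a:.6f}", f"{downtime_minutes_per_year(a):.1f} min/year")
```

Each added independent replica multiplies the expected downtime by the single-cluster failure probability, which is why redundancy is an effective route to tighter SLAs.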

3.3. Cost Efficiency and Pricing Models for Enterprises

The economies of scale realized through the SpaceX compute deal have the potential to shift Anthropic’s pricing models favorably for enterprise customers. Key considerations include:

- Lower marginal costs per API call due to bulk infrastructure utilization.

- Flexible pricing tiers based on usage volume, allowing enterprises to optimize costs according to demand.

- Potential for dedicated compute offerings or reserved capacity for large clients seeking predictable performance and pricing.

These developments make it feasible for enterprises to adopt advanced AI tools without prohibitive expenses, accelerating AI democratization across industries.

4. Competitive Advantage: How Anthropic Stands Out

4.1. Comparison with OpenAI’s GPT and Other AI Models

In the competitive AI landscape, Anthropic’s strategic compute investment and resulting model improvements position Claude favorably against leading alternatives such as OpenAI’s GPT-4 and Google’s PaLM models.

| Aspect | Anthropic Claude (Post-Deal) | OpenAI GPT-4 | Google PaLM |
|---|---|---|---|
| Compute Power Allocated | 300+ MW dedicated via SpaceX partnership | Estimated ~50 MW (varies by deployment) | Estimated ~70 MW (varies) |
| Maximum Context Window | ~200,000 tokens (doubled code limits) | Up to 128,000 tokens (GPT-4 Turbo) | Up to 64,000 tokens |
| API Rate Limits | Significantly increased concurrency and throughput | High, but with some rate throttling during peak | Moderate, varies by customer |
| Focus on AI Safety | Core company mission with controlled scaling | Strong focus, but less transparent | Research-driven safety initiatives |
| Enterprise SLA & Reliability | Enhanced via compute scaling and redundancy | Robust, widely adopted | Growing enterprise focus |

This comparison underscores Anthropic’s ability to leverage compute scale for both technical superiority and enterprise readiness, while maintaining a distinct emphasis on AI safety and ethics.

4.2. Differentiation Through Ethical AI and Safety

Anthropic’s founding ethos centers on building AI systems that are interpretable, controllable, and safe. The massive compute deal supports this mission by enabling rigorously tested scaling approaches that avoid reckless model expansion.

With access to abundant compute, Anthropic can implement extensive safety evaluations, adversarial testing, and controlled model rollouts. This approach mitigates risks associated with unpredictable AI behavior, hallucinations, or biased outputs, making Claude a more trustworthy choice for enterprise applications where compliance and reputation are paramount.

4.3. Impact on AI Ecosystem and Partnerships

The ripple effects of Anthropic’s compute expansion extend beyond its own products. The partnership signals to cloud providers, infrastructure vendors, and startups that large-scale, dedicated AI compute is a viable and necessary frontier.

We can anticipate:

- New collaborations between AI startups and infrastructure providers modeled on the Anthropic-SpaceX example.

- Increased investment in specialized AI hardware and networking optimized for massive-scale training and inference.

- Acceleration of integrated AI application ecosystems combining compute, APIs, and tooling for seamless enterprise adoption.

This ecosystem growth fosters innovation and competition, ultimately benefiting AI end-users worldwide.

5. Use Case Deep Dive: Practical Enterprise Applications

5.1. Software Development and Code Generation

The doubling of Claude’s code context limits revolutionizes software development workflows by enabling the AI to understand, generate, and refactor entire codebases or complex multi-file projects in a single interaction. Developers can:

- Leverage Claude to write large modules or microservices with minimal manual input.

- Use AI-assisted debugging that considers entire program context rather than isolated snippets.

- Collaborate with Claude as a coding partner throughout extended development cycles.

This capability accelerates development velocity, reduces bugs, and democratizes coding expertise across teams.

5.2. Customer Support and Conversational AI

Extended context windows and higher API throughput enable customer support bots powered by Claude to sustain long, detailed conversations with users, retaining context over multiple interactions. This results in:

- More personalized and accurate support experiences.

- Efficient handling of complex, multi-step queries without restarting context.

- Improved automation of routine tasks, freeing human agents for higher-value work.

Businesses benefit from enhanced customer satisfaction and reduced operational costs.
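Even with a larger window, a long-running support conversation eventually needs trimming. A minimal sketch of one common approach keeps the most recent messages that fit a token budget. The message shape and the `count_tokens` callback are assumptions made for illustration, and a production system might summarize older turns rather than drop them.

```python
from typing import Callable


def trim_history(messages: list[dict],
                 max_tokens: int,
                 count_tokens: Callable[[str], int]) -> list[dict]:
    """Keep the newest messages whose combined token count fits max_tokens."""
    kept = []
    used = 0
    # Walk from newest to oldest and stop at the first message that overflows.
    for msg in reversed(messages):
        cost = count_tokens(msg["content"])
        if used + cost > max_tokens:
            break
        kept.append(msg)
        used += cost
    kept.reverse()  # restore chronological order
    return kept


# Hypothetical conversation; whitespace word count stands in for a tokenizer.
history = [
    {"role": "user", "content": "my order arrived damaged"},
    {"role": "assistant", "content": "sorry to hear that"},
    {"role": "user", "content": "refund please"},
]
recent = trim_history(history, max_tokens=6, count_tokens=lambda s: len(s.split()))
print([m["content"] for m in recent])
# ['sorry to hear that', 'refund please']
```

A larger `max_tokens` budget means fewer early turns are discarded, which is how an expanded context window translates into bots that remember more of the conversation.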

5.3. Data Analysis and Knowledge Management

Enterprises often grapple with massive volumes of text data, reports, and unstructured knowledge. Claude’s expanded context allows for:

- Processing and summarization of large datasets or documents in a single pass.

- Real-time querying and report generation with improved accuracy and depth.

- Automated knowledge extraction and decision support integrated into business workflows.

This transforms how organizations leverage their data assets for strategic advantage.

6. Challenges and Considerations

6.1. Infrastructure and Energy Consumption Concerns

While the 300+ MW compute deal enables unprecedented AI scaling, it also raises legitimate concerns about energy consumption and environmental impact. Training and running large AI models are energy-intensive processes, contributing to carbon emissions if powered by non-renewable sources.

Anthropic addresses this challenge through its partnership with SpaceX, which emphasizes sustainable energy practices, including solar and other renewables integrated into the compute infrastructure. This approach mitigates the carbon footprint and aligns with broader industry efforts to develop greener AI technologies.

6.2. Managing Model Complexity and Latency

Scaling Claude to larger sizes and longer contexts introduces technical complexities. Larger models require sophisticated optimization to maintain acceptable latency and cost efficiency. Key challenges include:

- Reducing inference time despite increased model size.

- Balancing model accuracy with computational resource consumption.

- Implementing efficient memory management and caching strategies for long context windows.

Anthropic continues investing in research and engineering to optimize these trade-offs, ensuring Claude performs effectively in enterprise real-time environments.
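The memory pressure from long contexts can be made concrete with the standard key-value (KV) cache size estimate for transformer decoders. Claude’s architecture details are not public, so every model dimension below is a hypothetical stand-in chosen only to show how the cost scales with context length.

```python
def kv_cache_bytes(n_layers: int, n_kv_heads: int, head_dim: int,
                   seq_len: int, bytes_per_value: int = 2,
                   batch_size: int = 1) -> int:
    """Bytes needed to cache attention keys and values for one batch.

    The factor 2 covers one key tensor and one value tensor per layer.
    bytes_per_value=2 corresponds to 16-bit storage.
    """
    return (2 * n_layers * n_kv_heads * head_dim
            * seq_len * bytes_per_value * batch_size)


# Hypothetical model: 80 layers, 8 KV heads of dimension 128, 16-bit cache.
for tokens in (100_000, 200_000):
    gb = kv_cache_bytes(80, 8, 128, tokens) / 1e9
    print(f"{tokens:>7} tokens -> {gb:.3f} GB per sequence")
# 100000 tokens -> 32.768 GB per sequence
# 200000 tokens -> 65.536 GB per sequence
```

The cache grows linearly with sequence length, so doubling the context doubles per-request memory. That is why caching strategy and memory management appear among the challenges listed above.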

6.3. Security, Privacy, and Compliance Risks

With increased API throughput and enterprise adoption, safeguarding sensitive data becomes paramount. Anthropic employs robust encryption, access controls, and compliance frameworks to protect customer data. Additionally:

- Strict monitoring and anomaly detection guard against misuse or data leakage.

- Compliance with regulations and audit frameworks such as GDPR, HIPAA, and SOC 2 is continuously maintained.

- Enterprises can deploy Claude within private or hybrid cloud environments for enhanced security.

These measures ensure that scaling AI capabilities does not compromise privacy or regulatory obligations.

7. Future Outlook: What’s Next for Anthropic and AI Compute?

7.1. Potential for Further Scaling and Model Evolution

The current SpaceX compute deal lays the foundation for continuous scaling of Claude and other future models. Anthropic is likely to explore:

- Training even larger models with trillions of parameters capable of deeper reasoning.

- Innovative architectures that leverage sparse attention, mixture-of-experts, or retrieval-augmented generation to optimize compute usage.

- Real-time adaptation and personalization powered by abundant inference capacity.

This evolution will push the boundaries of AI capabilities, enabling new classes of applications and interactions.

7.2. Expansion into New Markets and Industries

Anthropic’s enhanced compute capabilities open doors to vertical-specific AI solutions tailored for healthcare, finance, legal, manufacturing, and more. These sectors require:

- High accuracy and domain expertise.

- Compliance with stringent regulations.

- Robust, scalable AI services integrated with existing enterprise systems.

Claude’s scalable architecture and expanded context windows make it an ideal platform for these specialized applications, accelerating AI adoption in critical industries.

7.3. Broader Impact on the AI Industry and Infrastructure Development

Anthropic’s compute partnership with SpaceX sets a precedent for future large-scale AI infrastructure collaborations, signaling a trend toward integrated compute and application ecosystems. This may drive:

- Increased investments in energy-efficient data centers and specialized AI hardware.

- Deeper collaboration between AI research, cloud infrastructure, and telecommunications providers.

- Emergence of turnkey AI platforms combining compute, APIs, security, and domain expertise.

These developments will shape the next decade of AI innovation and deployment.

Conclusion

The landmark 300+ MW compute deal between Anthropic and SpaceX fundamentally transforms the AI landscape by unlocking new levels of scale, speed, and reliability for Anthropic’s Claude model. This infrastructure expansion enables doubling of code context windows, significant API rate limit increases, and superior model performance, all of which directly benefit enterprise customers through more complex workflows, enhanced reliability, and cost-efficient AI services.

Anthropic’s strategic positioning not only challenges industry incumbents but also advances ethical AI practices by coupling compute scale with safety and controlled deployment. As the AI ecosystem evolves, this partnership exemplifies the critical role of dedicated, sustainable compute infrastructure in powering the future of AI across industries worldwide.
