OpenAI Codex Goes Mobile: Work With Your AI Coding Agent From Anywhere in 2026

Codex Arrives on Mobile: A New Era for Developer Productivity

On May 14, 2026, OpenAI launched Codex inside the ChatGPT mobile application, a notable shift in the landscape of developer tools. The release lets more than 4 million weekly users work with OpenAI’s AI coding agent directly from their smartphones and tablets, without being tied to a desktop workstation.

The mobile-first approach to deploying Codex represents a strategic response to evolving developer workflows, where on-the-go coding assistance and real-time collaboration have become indispensable. By embedding Codex within the ChatGPT mobile app, OpenAI has effectively extended the reach of AI-powered programming support beyond traditional desktop environments, empowering developers to code, troubleshoot, and innovate from virtually anywhere.

Mobile Integration and User Adoption Metrics

Since its initial release, the ChatGPT mobile app has seen rapid user growth, now boasting over 4 million active weekly users globally. The Codex integration further accelerates this trajectory by adding robust AI-driven coding features that complement existing natural language and conversational capabilities.

Key adoption metrics within the first two weeks post-launch include:

- Active Coding Sessions: Over 1.2 million weekly active coding sessions initiated via mobile devices, reflecting a 35% increase compared to prior desktop-only usage.

- Code Generation Requests: Approximately 18 million lines of code generated per week on mobile, spanning languages such as Python, JavaScript, TypeScript, Java, and Go.

- Real-Time Collaboration: 28% of users engaged in synchronous coding assistance and review workflows, enabled by mobile chat and code editor synchronization.

This data underscores the growing preference among developers for mobile access to AI coding aids, particularly for rapid prototyping, debugging on the go, and collaborative problem-solving during meetings or fieldwork.

The Shift to Mobile-First Developer Tools

The integration of Codex into a mobile platform signals a broader industry trend toward mobile-first tooling in software development. Several factors are driving this shift:

- Ubiquity of Mobile Devices: Smartphones provide always-on connectivity and enough computational power to handle many development tasks that once required a laptop.

- Need for Flexibility: Modern developers require the ability to access codebases, generate snippets, and troubleshoot issues irrespective of their physical location.

- Cloud-Native Architectures: The rise of cloud-based IDEs and CI/CD pipelines reduces dependency on local environments, making mobile integration more feasible and secure.

- AI-Driven Automation: AI coding agents like Codex can offload routine coding tasks, allowing developers to focus on higher-level design and problem-solving, which mobile access enhances.

OpenAI’s mobile Codex implementation incorporates these principles by optimizing for low-latency code generation, intelligent context retention across sessions, and seamless integration with popular version control and project management tools accessible on mobile.

Real-Time Monitoring and Control of Coding Agents

A standout feature of the mobile Codex release is its real-time monitoring and control capabilities. Developers can now oversee AI agent activities, adjust parameters, and intervene in code generation workflows instantaneously via the mobile interface.

| Feature | Description | Benefit |
|---|---|---|
| Live Code Preview | Instant display of code snippets generated by Codex, with syntax highlighting and error warnings. | Enables immediate review and validation, reducing iteration cycles. |
| Agent Behavior Controls | Adjustable settings for creativity, verbosity, and code style directly from the app. | Allows customization to match project standards and developer preferences. |
| Execution Trace Logs | Access to detailed logs of AI-generated code execution and debugging outputs. | Facilitates thorough troubleshooting and auditability. |
| Push Notifications | Alerts for code review requests, build failures, or agent anomalies. | Ensures timely responses to critical development events regardless of location. |

These features collectively transform the mobile ChatGPT app into a comprehensive control center for AI-assisted development, ensuring that developers maintain full oversight and quality assurance throughout the coding lifecycle.

Technical Architecture and Performance Optimization

Delivering Codex on mobile devices entails overcoming unique technical challenges related to latency, resource constraints, and UI ergonomics. OpenAI’s engineering teams have implemented several innovations to optimize performance and usability:

- Edge-Accelerated Inference: Codex leverages geographically distributed edge servers to minimize response times, achieving average latencies under 150 milliseconds for code generation requests on mobile networks.

- Incremental Context Streaming: The app streams partial code completions as they are generated, so developers see output sooner and can steer the AI’s response while it is still being produced.

- Adaptive Resource Management: Dynamic adjustment of computational workloads based on device capabilities preserves battery life while maintaining responsiveness.

- Secure Data Handling: End-to-end encryption and strict sandboxing ensure that code snippets and project data remain confidential and isolated within the mobile environment.

These architectural choices ensure that mobile Codex delivers a developer experience on par with, or even surpassing, traditional desktop-based AI coding tools.

Implications for Developer Workflows

The mobile deployment of Codex is poised to reshape how developers approach software creation and maintenance. Key impacts include:

- Enhanced Agility: Developers can respond rapidly to code review comments, bug reports, or feature requests without needing access to a full workstation.

- Improved Collaboration: Mobile accessibility promotes more frequent and informal collaboration, with AI agents acting as intermediaries or assistants during pair programming or stand-ups.

- Continuous Learning: Codex’s contextual assistance on mobile devices supports just-in-time learning and onboarding for new technologies or codebases.

- Work-Life Integration: By facilitating productive coding sessions during commutes or breaks, mobile Codex helps balance professional demands with personal time.

As OpenAI continues to refine and expand Codex’s mobile capabilities, the developer community can anticipate deeper integration with diverse development environments, richer language support, and more advanced AI-driven code analysis features.

In summary, the release of Codex on the ChatGPT mobile app is not merely a new platform launch but a transformative milestone that redefines the boundaries of AI-assisted software development. It symbolizes a future where intelligent coding agents are ubiquitously accessible, radically enhancing the speed, quality, and flexibility of programming workflows across the globe.

How Mobile Codex Works: Architecture and Real-Time Sync

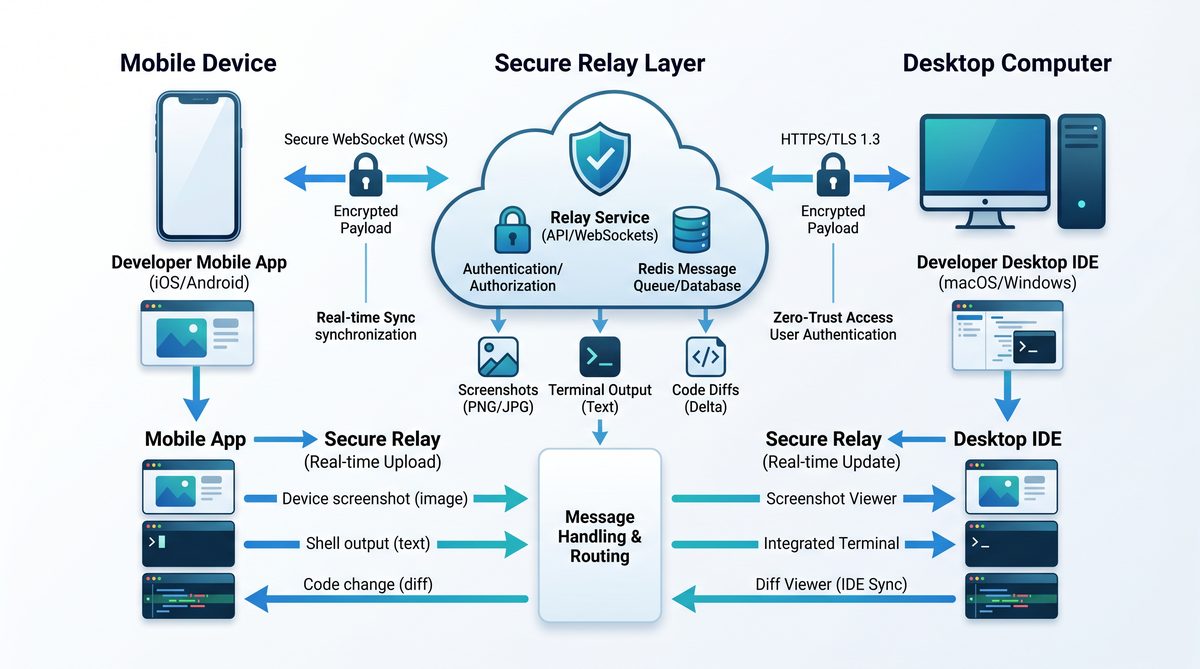

OpenAI Codex’s mobile integration introduces a sophisticated architecture designed to deliver seamless, secure, and real-time synchronization between a developer’s desktop or remote coding environment and their mobile device. This section provides a detailed technical overview of the core components enabling this functionality, with particular emphasis on the secure relay layer, live state synchronization, and the mechanisms ensuring that no code or sensitive data leaves the developer’s machine.

System Architecture Overview

The Mobile Codex system is architected around a client-server model with an intermediary secure relay layer that facilitates bidirectional communication between the primary coding environment (desktop or remote server) and the mobile client application. The architecture can be logically divided into three primary layers:

- Local Execution Environment: The user’s desktop IDE or remote development environment where source code, terminal sessions, and build processes run.

- Secure Relay Layer: An encrypted, ephemeral relay service that establishes a secure tunnel without persisting or inspecting code or terminal data.

- Mobile Client: The mobile application that receives real-time state updates, including code diffs, terminal output, test results, and UI interactions.

| Component | Role | Key Technologies | Security Measures |
|---|---|---|---|
| Local Execution Environment | Runs IDE, compilers, terminals; emits state diffs | VS Code, JetBrains IDEs, SSH, WebSockets | Code never transmitted outside local machine; sandboxed extensions |
| Secure Relay Layer | Encrypted tunnel relaying diffs and commands | End-to-end encryption (AES-256), TLS 1.3, WebRTC DataChannels | No data persisted; ephemeral keys; zero-knowledge relay policy |
| Mobile Client | Displays real-time coding state; sends input commands | React Native, WebSocket client, local caches | Local encryption, biometric authentication for access |

The Secure Relay Layer: Design and Implementation

The secure relay layer is pivotal for Mobile Codex’s real-time interaction model. It acts as an intelligent, stateless proxy that forwards encrypted payloads between the desktop environment and mobile device without decrypting or storing any content. This preserves confidentiality and integrity of the developer’s code and terminal data.

Key features of the secure relay layer include:

- End-to-End Encryption: All data streams are encrypted with AES-256, using session keys agreed through an Elliptic Curve Diffie-Hellman (ECDH) exchange. Only the two endpoints (the developer’s machine and the mobile app) hold those keys, so only they can decrypt the transmitted data.

- Ephemeral Sessions: Each synchronization session is temporary. Session keys and metadata are discarded immediately after disconnection, so traffic captured from one session cannot be replayed into, or decrypted with the keys of, a later one.

- Zero-Knowledge Relay Policy: The relay server infrastructure is designed to have no access to the plaintext data or code. It only routes encrypted packets, ensuring compliance with strict data privacy and developer confidentiality standards.

- Low-Latency Communication: Utilizes WebRTC DataChannels where possible to establish peer-to-peer direct connections, falling back to relay nodes only when NAT traversal or firewall restrictions require it.
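The per-session key handling described above can be sketched as follows. This is a minimal illustration, not the product's actual implementation: it assumes the ECDH shared secret is already available (simulated here with random bytes, since the standard library has no ECDH), and it derives a 256-bit session key with HKDF-SHA256 (RFC 5869). The `info` label and the per-session salt scheme are hypothetical.

```python
import hashlib
import hmac
import secrets


def hkdf_sha256(shared_secret: bytes, salt: bytes, info: bytes, length: int = 32) -> bytes:
    """Derive `length` bytes of key material with HKDF-SHA256 (RFC 5869)."""
    # Extract: concentrate the input secret into a fixed-size pseudorandom key.
    prk = hmac.new(salt, shared_secret, hashlib.sha256).digest()
    # Expand: chain HMAC blocks until enough output has been produced.
    okm, block, counter = b"", b"", 1
    while len(okm) < length:
        block = hmac.new(prk, block + info + bytes([counter]), hashlib.sha256).digest()
        okm += block
        counter += 1
    return okm[:length]


# Stand-in for the ECDH output (assumption): in a real session both endpoints
# compute the same value from their own private key and the peer's public key.
shared_secret = secrets.token_bytes(32)

# Hypothetical scheme: a fresh random salt per session keeps session keys distinct.
session_salt = secrets.token_bytes(16)
label = b"hypothetical-sync-session"

desktop_key = hkdf_sha256(shared_secret, session_salt, label)
mobile_key = hkdf_sha256(shared_secret, session_salt, label)
next_session_key = hkdf_sha256(shared_secret, secrets.token_bytes(16), label)
```

Both endpoints arrive at the same 32-byte key without the relay ever seeing it, and a new salt yields an unrelated key for the next session, which is the property the ephemeral-session bullet relies on.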

Live State Synchronization: Data Flow and Consistency Models

To enable a fluid mobile coding experience, Mobile Codex continuously synchronizes the live state of the developer’s environment. This includes:

- Source code edits (diffs rather than full files)

- Terminal output streams

- Compiler and test execution results

- Debug session states and variable watches

The synchronization employs a delta-based update mechanism to optimize bandwidth and minimize latency. Instead of transmitting full files or terminal buffers, the system computes granular diffs at the character or line level and streams them incrementally.

Data Flow Sequence

- Change Detection: The desktop environment runs a lightweight agent plugin that hooks into IDE APIs and terminal streams. It detects code changes, terminal output, and test results in near real-time.

- Diff Computation: The agent computes diffs using efficient algorithms, such as Myers’ diff algorithm, generating minimal patch sets.

- Encryption and Transmission: Diffs and event streams are encrypted locally and sent via WebSocket or WebRTC over the secure relay.

- Mobile Client Update: The mobile app applies received diffs immediately, updating the UI, terminal emulator, or test result viewer.

- Input Back-Channel: User interactions on mobile—such as typing commands in the terminal or requesting code completions—are encrypted and sent back to the desktop agent for execution.
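The diff-and-apply steps of this sequence can be sketched in a few lines. This is an illustration under stated assumptions: it uses Python's standard `difflib.SequenceMatcher`, which is not Myers' algorithm but produces a comparable set of changed regions; the patch format (`start`, `end`, replacement lines) and the file contents are hypothetical; and the encryption step is omitted.

```python
from difflib import SequenceMatcher


def compute_patch(old: list[str], new: list[str]) -> list[tuple]:
    """Return only the changed regions as (start, end, replacement_lines)."""
    matcher = SequenceMatcher(a=old, b=new, autojunk=False)
    return [
        (i1, i2, new[j1:j2])
        for tag, i1, i2, j1, j2 in matcher.get_opcodes()
        if tag != "equal"
    ]


def apply_patch(old: list[str], patch: list[tuple]) -> list[str]:
    """Rebuild the new text on the receiving side from the old lines plus the patch."""
    result = list(old)
    # Apply from the end so earlier indices are not shifted by later edits.
    for start, end, replacement in sorted(patch, key=lambda p: p[0], reverse=True):
        result[start:end] = replacement
    return result


# Hypothetical file contents before and after a one-line edit on the desktop.
desktop_before = ["def calculate_sum(a, b):", "    return a - b", ""]
desktop_after = ["def calculate_sum(a, b):", "    return a + b", ""]

patch = compute_patch(desktop_before, desktop_after)  # what would be sent
mobile_view = apply_patch(desktop_before, patch)      # what the phone reconstructs
```

Only the single changed line travels in `patch`; the receiving side reconstructs the full file from the copy it already holds.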

Ensuring State Consistency

Maintaining synchronization consistency between desktop and mobile is critical. Mobile Codex implements an operational transformation (OT) based concurrency control system similar to those used in collaborative editors. This approach allows concurrent edits and command inputs from both endpoints to be merged deterministically, preventing conflicts or data loss.
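To make the idea concrete, here is a minimal sketch of operational transformation for the simplest case, two concurrent text insertions. It is not the product's actual algorithm: the `Insert` type, the `site` tie-breaker, and the sample edits are all hypothetical, and a production system would also have to handle deletions and longer operation histories.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Insert:
    pos: int   # character offset where the text is inserted
    text: str
    site: str  # hypothetical endpoint id, used only to break ties


def apply_op(doc: str, op: Insert) -> str:
    return doc[:op.pos] + op.text + doc[op.pos:]


def transform(op: Insert, against: Insert) -> Insert:
    """Shift `op` so it can be applied after `against` has already been applied."""
    lands_first = against.pos < op.pos or (
        against.pos == op.pos and against.site < op.site
    )
    if lands_first:
        return Insert(op.pos + len(against.text), op.text, op.site)
    return op


base = "assert calculate_sum(-1, -2) == -3"
desktop_op = Insert(pos=0, text="    ", site="desktop")  # indent the line
mobile_op = Insert(pos=len(base), text="  # regression", site="mobile")

# Each endpoint applies its own edit first, then the transformed remote edit.
desktop_doc = apply_op(apply_op(base, desktop_op), transform(mobile_op, desktop_op))
mobile_doc = apply_op(apply_op(base, mobile_op), transform(desktop_op, mobile_op))
```

Although the two endpoints apply the edits in opposite orders, both end with the same text, which is the convergence property the paragraph above describes.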

Practical Example: Terminal Output and Code Diff Streaming

Consider a developer running a test suite on their remote environment while monitoring results on their mobile device. The terminal outputs—such as test pass/fail results and stack traces—stream to the mobile client in real time.

Below is a simulated terminal session excerpt received live on the mobile app:

    Running tests...
    test_calculate_sum.py::test_positive_numbers PASSED
    test_calculate_sum.py::test_negative_numbers FAILED
    Traceback (most recent call last):
      File "test_calculate_sum.py", line 23, in test_negative_numbers
        assert calculate_sum(-1, -2) == -3
    AssertionError: assert -2 == -3

Simultaneously, the developer edits the test file on their desktop. The diff agent detects a change and sends a minimal patch:

| File | Patch Detail |
|---|---|
| test_calculate_sum.py | Line 23: Changed expected value from -3 to -2 |

The mobile app immediately updates the displayed code with the diff, enabling the developer to validate and commit changes directly from the phone.

Security Implications and Data Privacy

OpenAI has architected Mobile Codex with rigorous security controls to address the critical concern that source code and terminal data remain confined to the developer’s trusted environment.

- No Plaintext Code Leaves the Machine: All code parsing, diffing, and execution occur locally. The relay layer carries only encrypted diffs and terminal output streams, which it cannot decrypt; the full codebase stays on the developer’s machine.

- End-to-End Encryption: Prevents interception or man-in-the-middle attacks during data transit.

- Authentication and Authorization: Mobile clients authenticate using OAuth tokens bound to the developer’s session. Biometric auth (e.g., Touch ID, Face ID) protects mobile access.

- Minimal Persistence: Neither the relay layer nor the mobile app persists sensitive data beyond the session duration. Local caches on mobile are encrypted and cleared upon logout.

Performance and Reliability Metrics

Internal testing of Mobile Codex’s sync system reveals the following performance benchmarks under typical network conditions (Wi-Fi, 100 Mbps):

| Metric | Average Value | Notes |
|---|---|---|
| Round-trip latency | 30-50 ms | Measured from code edit on desktop to update on mobile UI |
| Diff transmission size | 2-5 KB per edit | Depends on edit granularity; optimized diff calculations |
| Terminal output latency | 20-40 ms | Streaming output to mobile terminal emulator |
| Session reconnection time | Under 2 seconds | Automatic re-establishment of secure tunnel on network interruptions |

These metrics illustrate the system’s capability to support fluid, near real-time mobile development workflows without perceptible lag.

Summary

Mobile Codex’s architecture leverages a secure relay layer, encrypted real-time data streams, and efficient synchronization algorithms to deliver a robust, privacy-preserving developer experience. By ensuring that code never leaves the user’s machine and providing immediate, incremental updates to the mobile client, it enables developers to maintain full control and situational awareness of their coding projects from anywhere.

Enterprise Remote SSH and Programmatic Access Tokens

With the latest update to OpenAI Codex, enterprise users now benefit from the general availability of Remote SSH capabilities and the introduction of Programmatic Access Tokens. These enhancements significantly elevate the security, flexibility, and integration potential of Codex within complex development workflows, particularly those requiring stringent compliance and robust automation. This section provides a detailed exploration of these features, their technical architecture, and their implications for enterprise-grade AI-assisted coding environments.

Remote SSH: Secure, Seamless Coding from Anywhere

Remote SSH functionality enables developers to connect securely to their enterprise development environments from any location, leveraging Codex’s AI coding assistant directly within remote shells. The feature is designed to facilitate real-time, AI-driven code generation, completion, and troubleshooting without compromising security or performance.

Key Technical Features:

- End-to-End Encryption: Remote SSH connections use the industry-standard SSH protocol with AES-256 encryption, so all data transmitted between the client and the enterprise environment remains confidential and protected against undetected tampering.

- Role-Based Access Control (RBAC): Integration with enterprise identity providers (IdPs) over protocols such as LDAP, SAML, and OAuth allows administrators to enforce granular access policies, restricting SSH sessions based on user roles and project permissions.

- Session Recording and Auditing: All SSH interactions can be logged and audited in compliance with organizational policies and regulatory requirements, facilitating traceability and forensic analysis if needed.

- Adaptive Connection Management: Intelligent network detection optimizes SSH performance over varying bandwidth conditions, automatically adjusting session parameters to ensure minimal latency and high responsiveness.

From a developer’s perspective, the Remote SSH capability permits direct invocation of Codex’s AI-powered code suggestions and completions within terminal-based editors such as Vim or Emacs, as well as through VS Code Remote Development. This eliminates the need to shift between local IDEs and remote servers, streamlining workflows, especially for cloud-native applications and microservices architectures.

Programmatic Access Tokens for CI/CD Pipelines

To facilitate seamless integration of Codex’s AI capabilities within automated build and deployment processes, OpenAI has introduced Programmatic Access Tokens. These tokens are designed specifically for non-interactive, machine-to-machine authentication, enabling continuous integration (CI) and continuous delivery (CD) pipelines to leverage AI for code analysis, generation, and validation.

Technical Specifications:

| Feature | Description | Enterprise Benefit |
|---|---|---|
| Token Scope | Supports fine-grained scopes such as read/write code, scan prompts, or invoke completions. | Limits token privileges to the minimal necessary, reducing security risk in automated environments. |
| Expiration and Rotation | Configurable expiry times with enforced rotation policies and automated reminders. | Enhances security posture by limiting token lifetime and encouraging best practices. |
| IP Whitelisting | Restricts token usage to predefined IP ranges corresponding to CI/CD infrastructure. | Prevents unauthorized use of tokens outside trusted networks. |
| Audit Logging | Detailed logs of token usage, including timestamps, invoked endpoints, and response statuses. | Supports compliance and incident response procedures. |

By integrating these tokens, enterprises can embed Codex-powered capabilities into testing suites that automatically generate unit tests, refactor legacy code, or validate code quality metrics before deployment. This automation enhances developer productivity while maintaining stringent security standards.
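How scope, expiry, and IP restrictions might combine into a single authorization decision can be sketched as follows. This is an illustrative model only, not OpenAI's API: the `AccessToken` type, the `authorize` function, the scope names, and the network range are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from ipaddress import ip_address, ip_network


@dataclass(frozen=True)
class AccessToken:
    scopes: frozenset        # e.g. {"completions:invoke"}; scope names are made up
    expires_at: datetime
    allowed_networks: tuple  # CIDR ranges of the CI/CD runners


def authorize(token: AccessToken, scope: str, source_ip: str, now: datetime) -> tuple:
    """Return (allowed, reason) so the decision can also be written to an audit log."""
    if now >= token.expires_at:
        return False, "token expired"
    if scope not in token.scopes:
        return False, f"scope {scope!r} not granted"
    addr = ip_address(source_ip)
    if not any(addr in ip_network(net) for net in token.allowed_networks):
        return False, "source IP outside allowlist"
    return True, "ok"


now = datetime(2026, 5, 14, 12, 0, tzinfo=timezone.utc)
ci_token = AccessToken(
    scopes=frozenset({"completions:invoke"}),
    expires_at=now + timedelta(days=30),
    allowed_networks=("10.20.0.0/16",),
)

in_policy = authorize(ci_token, "completions:invoke", "10.20.4.7", now)
wrong_scope = authorize(ci_token, "code:write", "10.20.4.7", now)
wrong_network = authorize(ci_token, "completions:invoke", "203.0.113.9", now)
```

Returning a reason alongside the decision keeps the check and the audit record in one place, so every denial is attributable to a specific policy.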

Hooks for Scanning Prompts: Enhancing Security and Compliance

Recognizing the importance of safeguarding sensitive information and ensuring compliance during interactions with AI models, OpenAI Codex now supports hooks for scanning prompts. These hooks allow enterprises to intercept and analyze user inputs before they are processed by Codex, enabling proactive detection of potentially sensitive or non-compliant data.

- Customizable Scanning Rules: Administrators can define regex patterns, keyword filters, and contextual heuristics to detect PHI (Protected Health Information), PII (Personally Identifiable Information), or proprietary code snippets.

- Integration with Security Information and Event Management (SIEM) Systems: Prompt scanning hooks can forward alerts and logs to SIEM tools such as Splunk, IBM QRadar, or Microsoft Sentinel for centralized monitoring.

- Automated Response Mechanisms: Based on scan results, prompts can be blocked, redacted, or flagged for manual review, reducing the risk of accidental data leakage.
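A prompt-scanning hook along these lines could look like the sketch below. It is a hypothetical illustration rather than the actual hook interface: the `Rule` type, the `scan_prompt` function, and the three sample patterns are assumptions, and real deployments would need far more careful patterns than these.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    name: str
    pattern: re.Pattern
    action: str  # "block", "redact", or "flag"


# Illustrative rules only; real deployments would tune these patterns.
RULES = [
    Rule("us_ssn", re.compile(r"\b\d{3}-\d{2}-\d{4}\b"), "redact"),
    Rule("private_key", re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"), "block"),
    Rule("internal_marker", re.compile(r"\bCONFIDENTIAL\b", re.IGNORECASE), "flag"),
]


def scan_prompt(prompt: str) -> dict:
    """Apply every rule and return the verdict plus the (possibly redacted) prompt."""
    findings, text, verdict = [], prompt, "allow"
    for rule in RULES:
        if not rule.pattern.search(text):
            continue
        findings.append(rule.name)
        if rule.action == "block":
            # A blocking rule stops processing; nothing is forwarded to the model.
            return {"verdict": "block", "prompt": None, "findings": findings}
        if rule.action == "redact":
            text = rule.pattern.sub(f"[REDACTED:{rule.name}]", text)
            verdict = "redact"
        elif rule.action == "flag" and verdict == "allow":
            verdict = "flag"
    return {"verdict": verdict, "prompt": text, "findings": findings}


result = scan_prompt("Patient SSN is 123-45-6789, please fix the parser.")
```

The `findings` list is the natural payload to forward to a SIEM system, while the `verdict` drives the block, redact, or flag response.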

This capability is particularly critical in regulated industries such as healthcare and finance, where inadvertent exposure of sensitive data during AI interactions could lead to compliance violations or reputational damage.

HIPAA-Compliant Use Cases in Healthcare

OpenAI Codex’s enterprise-grade security features, including Remote SSH, programmatic token controls, and prompt scanning, collectively enable its deployment within healthcare environments that must comply with the Health Insurance Portability and Accountability Act (HIPAA).

Compliance Highlights:

- Data Encryption at Rest and In Transit: All PHI processed by Codex is encrypted using AES-256 standards, meeting HIPAA encryption requirements.

- Access Controls and Identity Verification: Multi-factor authentication (MFA) and RBAC ensure only authorized healthcare professionals and developers access PHI-containing workflows.

- Audit Trails: Comprehensive logging of all AI interactions supports HIPAA’s audit control mandates.

- Business Associate Agreement (BAA): OpenAI offers BAAs to eligible enterprise customers, formalizing compliance commitments and responsibilities.

Healthcare organizations can utilize Codex to accelerate development of clinical decision support tools, automate medical coding, or generate documentation, all while maintaining strict adherence to patient privacy and data security standards. The integration of prompt scanning hooks further mitigates risks associated with inadvertent disclosure of sensitive medical information during AI-assisted coding sessions.

Enterprise Security Features: A Comprehensive Overview

Beyond the specific capabilities discussed above, OpenAI Codex’s enterprise edition incorporates a suite of security features designed to satisfy the rigorous demands of modern organizations:

- Zero Trust Architecture: Codex operates within a zero trust framework that continuously verifies user identity and device posture before granting access.

- Data Residency Options: Enterprises can select geographic regions for data storage and processing, supporting compliance with local data sovereignty laws.

- Secure Development Lifecycle: Codex’s underlying models and APIs undergo regular security assessments, penetration testing, and vulnerability scanning.

- Incident Response and Support: Dedicated enterprise support includes rapid incident response, forensic investigation assistance, and vulnerability disclosure programs.

Additionally, enterprises can integrate Codex with their existing DevSecOps pipelines, leveraging the programmatic access tokens and prompt scanning hooks to embed AI-assisted security checks and coding standards enforcement directly into their CI/CD workflows. For a deeper understanding of integrating Codex within secure development environments, refer to our detailed guide on How to Use OpenAI Codex Memory and Scheduled Automations for Persistent AI Workflows.

In summary, the general availability of Remote SSH and Programmatic Access Tokens, combined with advanced prompt scanning hooks and HIPAA-compliant safeguards, represents a substantial step toward making OpenAI Codex a secure, compliant, and versatile coding assistant for enterprise environments. These features let organizations adopt AI-driven development at scale while maintaining strict security and regulatory adherence.

Real-World Use Cases: From Commute Debugging to Customer Prep

The introduction of OpenAI Codex on mobile platforms is revolutionizing how developers and technical professionals integrate AI assistance into their daily workflows. This mobility fosters a new paradigm in asynchronous collaboration, enabling users to engage with their AI coding agents from virtually anywhere — whether during a brief coffee break, a morning commute, or while preparing for critical customer interactions. In this section, we explore detailed, real-world scenarios that highlight the practical benefits and transformative potential of this mobile AI integration.

Debugging During Coffee Breaks: Maximizing Micro-Moments

Developers often encounter small but persistent bugs that disrupt workflow and consume disproportionate amounts of focused coding time. With OpenAI Codex accessible on mobile devices, these micro-moments—such as a coffee break or waiting in line—become valuable opportunities to address such issues.

For example, a developer might receive an error message related to a function call or API integration while reviewing code on their desktop. Instead of postponing troubleshooting until returning to their workstation, they can use the Codex mobile app to:

- Paste the error logs or relevant snippets into the AI interface.

- Request detailed explanations or possible root causes for the error.

- Receive suggested code corrections or alternative approaches.

- Iterate on the problem asynchronously and save the refined code snippet for later desktop integration.

This approach reduces the cognitive load associated with context switching and preserves momentum. It also decreases the turnaround time for resolving issues, thereby enhancing overall productivity.

Reaching Decision Points During Commutes: Agile Thinking on the Move

Commuting traditionally represents lost time for many knowledge workers. However, OpenAI Codex on mobile devices transforms this interval into a strategic planning window for developers and project leads. During commutes—whether by train, bus, or carpool—users can engage with their coding agents to:

- Evaluate design trade-offs for system architecture components.

- Simulate algorithmic optimizations through natural language prompts.

- Draft pseudocode or outline new feature implementations.

- Review code documentation or generate inline comments for improved clarity.

By leveraging voice input and intelligent summarization features, Codex facilitates rapid ideation and decision-making without requiring access to a full development environment. This capability is particularly useful for senior developers or technical managers who need to assess multiple options before aligning teams on the next steps.

Preparing for Customer Calls: Real-Time Scenario Simulations and Code Validation

Customer-facing technical professionals benefit significantly from on-demand AI assistance when prepping for calls. Whether the discussion involves troubleshooting, feature demos, or roadmap planning, Codex offers real-time code validation, scenario simulations, and instant generation of technical explanations.

For instance, prior to a client meeting, a developer might:

- Input client-specific code snippets or API configurations to verify correctness.

- Request the AI to generate concise summaries highlighting potential risks or customization points.

- Simulate edge case behaviors using generated test cases to anticipate customer questions.

- Draft tailored scripts or talking points that explain complex technical concepts in layman’s terms.

Such proactive preparation enhances confidence and professionalism, ensuring that customer interactions are both technically sound and communicatively effective.

Turning Ideas Into Forward Motion: From Concept to Implementation Without Delay

One of the most significant advantages of mobile AI coding agents lies in their ability to accelerate the transition from abstract ideas to tangible implementations. Often, inspiration strikes outside the typical office environment—during a lunch break, a walk, or even while multi-tasking at home. With Codex on mobile, developers can capture and develop these ideas immediately, reducing the risk of forgotten or underdeveloped concepts.

This seamless workflow is characterized by:

- Idea Capture: Utilizing natural language input or voice commands to describe new features, bug fixes, or architectural changes.

- AI-Assisted Expansion: Having Codex translate these descriptions into functional code snippets, pseudocode, or detailed technical plans.

- Iterative Refinement: Engaging in dialogue with the AI to fine-tune logic, optimize performance, or adapt code to specific frameworks.

- Integration Preparation: Organizing and tagging outputs for seamless incorporation into version control systems once back at the primary workstation.

By collapsing the latency between ideation and coding, teams can maintain a continuous innovation rhythm, which is especially crucial in agile development environments.

The Asynchronous Collaboration Rhythm Enabled by Mobile Codex

OpenAI Codex’s mobile integration supports a fundamentally asynchronous collaboration model that aligns with modern distributed development teams. This rhythm is built around the concept that work doesn’t have to be constrained by synchronous meetings or fixed office hours. Instead, the AI acts as a persistent coding partner, available to tackle tasks whenever and wherever the user is ready to engage.

Key attributes of this asynchronous collaboration include:

| Attribute | Description | Benefit |
|---|---|---|
| Context Preservation | Codex retains conversation history and code snippets across sessions and devices. | Ensures continuity and reduces re-explanation overhead. |
| Multi-Modal Input | Supports text, voice, and code snippet inputs. | Facilitates flexible interaction modes, accommodating varied user contexts. |
| Task Prioritization | Users can queue coding requests and receive notifications when tasks are complete. | Improves workflow management and time allocation. |
| Cross-Device Synchronization | Seamless transition between mobile and desktop environments. | Enables uninterrupted progress regardless of location. |
| Collaborative Sharing | Share AI-generated snippets or session transcripts with team members. | Enhances team alignment and knowledge dissemination. |

This asynchronous model not only boosts individual productivity but also enhances team dynamics by allowing members to interact with shared AI-generated content on their own schedules. For teams practicing continuous integration and deployment, this means faster iteration cycles and reduced bottlenecks caused by waiting for synchronous input.

For developers interested in optimizing their asynchronous workflows with AI, exploring advanced prompt engineering and integration techniques can yield substantial benefits. For more detailed guidance, see our related discussion on The 2026 AI Coding Agents Production Playbook.

What This Means for the Future of AI-Assisted Development

The integration of OpenAI Codex into mobile platforms marks a pivotal shift in how developers interact with AI-assisted coding agents. Historically, AI coding tools have been tethered to desktop environments, limiting their accessibility to static workstations and conventional development setups. The move to always-connected AI agents accessible via mobile devices fundamentally transforms the software development lifecycle, reshaping developer workflows, enhancing team collaboration, and accelerating the evolution of modern engineering practices.

Transformation of Developer Workflows

The availability of AI coding agents on mobile devices enables developers to maintain continuous engagement with their projects, regardless of location. This always-on connectivity facilitates several key workflow enhancements:

- Real-Time Code Assistance: Developers can receive context-aware code suggestions, error diagnostics, and documentation references on the go, substantially reducing downtime and streamlining problem-solving outside traditional office environments.

- Increased Flexibility: Mobile AI agents empower developers to perform code reviews, write snippets, and debug remotely, allowing for a more fluid integration of coding tasks into daily routines, including during commutes or in meetings.

- Context Preservation and Synchronization: Cloud-based synchronization ensures that code context and AI interaction history are preserved across devices. This seamless transition allows developers to pick up complex tasks without losing continuity, enhancing productivity and reducing cognitive load.

Moreover, this always-connected model enables AI agents to proactively push notifications about build failures, security vulnerabilities, or integration issues, allowing developers to respond swiftly. Embedding AI agents directly into mobile IDEs or development environments narrows the gap between ideation and implementation, fostering a more iterative and dynamic coding process.

Implications for Team Collaboration

The introduction of mobile AI coding agents extends beyond individual productivity, profoundly impacting team dynamics and collaboration models in software engineering:

- Enhanced Remote Collaboration: Geographically dispersed teams can now use AI agents as intermediaries that facilitate shared understanding. For example, AI-generated code explanations or automated documentation updates can help align team members with different levels of expertise.

- Real-Time Code Review and Pair Programming: Mobile AI tools enable developers to conduct asynchronous or synchronous code reviews with AI assistance, improving feedback quality and reducing latency. AI can highlight potential issues, suggest improvements, and even simulate pair programming scenarios on mobile devices.

- Knowledge Sharing and Onboarding: AI coding agents become repositories of team-specific coding standards, best practices, and historical solutions. New team members can query these agents on mobile devices to accelerate onboarding, reducing dependency on senior staff for routine queries.

These capabilities foster a more cohesive and adaptive team culture, where knowledge flows seamlessly and bottlenecks caused by physical or temporal separation are alleviated. The integration of AI into mobile workflows also supports continuous integration/continuous deployment (CI/CD) pipelines by providing developers with immediate AI-generated insights into code merges, test results, and deployment statuses.

Evolution of Software Engineering Practices

The proliferation of always-connected AI agents ushers in significant advancements in the methodologies and paradigms underpinning software engineering:

- Shift Toward AI-Augmented Development: AI agents on mobile platforms catalyze a move from manual, repetitive coding tasks toward higher-level problem-solving and design thinking. Developers can delegate boilerplate code generation, refactoring, and initial debugging phases to AI, freeing cognitive resources for architectural decisions and innovation.

- Continuous Learning and Adaptation: AI agents equipped with machine learning models can adapt to individual developer styles, project-specific conventions, and evolving codebases. This personalization improves recommendation accuracy and fosters a symbiotic human-AI relationship that evolves over time.

- Increased Emphasis on Security and Compliance: Mobile AI agents can integrate real-time security scanning and compliance checks during code authoring, facilitating a DevSecOps approach that embeds security considerations directly into the development process rather than deferring them to post hoc reviews.

- New Metrics and KPIs: With AI integration, engineering teams can track novel productivity and quality metrics, such as AI suggestion acceptance rates, time saved through AI assistance, and reduction in defect rates. These metrics enable data-driven process improvements and more objective performance evaluations.
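As a rough illustration of the metrics mentioned in the last item, the sketch below computes an acceptance rate and an estimated time saved from a list of suggestion events. The `SuggestionEvent` record and its `seconds_saved` field are assumptions made for this example; how a team actually logs and estimates these values will vary.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class SuggestionEvent:
    """Hypothetical log record for one AI suggestion shown to a developer."""
    accepted: bool
    seconds_saved: float  # estimated by the team; an assumption, not measured


def acceptance_rate(events: List[SuggestionEvent]) -> float:
    """Fraction of suggestions that were accepted (0.0 if there are none)."""
    if not events:
        return 0.0
    return sum(1 for e in events if e.accepted) / len(events)


def total_time_saved(events: List[SuggestionEvent]) -> float:
    """Sum of estimated seconds saved, counting accepted suggestions only."""
    return sum(e.seconds_saved for e in events if e.accepted)
```

Counting only accepted suggestions toward time saved is a design choice in this sketch; a rejected suggestion may still cost review time, which a fuller model would subtract.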

Additionally, the mobility of AI coding agents encourages the adoption of micro-tasking and modular development strategies. Developers can tackle smaller, well-defined coding units asynchronously, with AI agents assisting in maintaining architectural coherence and integration integrity. This granular approach aligns well with agile methodologies and continuous delivery models.

Technical Considerations and Challenges

While the benefits are compelling, the shift to always-connected AI agents on mobile platforms introduces several technical considerations:

| Aspect | Opportunity | Challenge |
|---|---|---|
| Latency and Performance | Real-time AI feedback improves developer responsiveness. | Mobile networks can introduce latency; offline capabilities need development. |
| Data Privacy and Security | Cloud synchronization enables seamless context sharing. | Protecting sensitive code and intellectual property on mobile devices and during transmission. |
| Context Awareness | AI can leverage current project state and historical data for precise suggestions. | Ensuring consistent and accurate synchronization across devices to avoid stale or conflicting states. |
| Resource Constraints | Offloading heavy computation to cloud reduces device load. | Battery life and device capabilities may limit local processing and interaction complexity. |

Addressing these challenges requires robust encryption, efficient caching mechanisms, adaptive AI models, and potentially hybrid architectures combining local inference with cloud-based processing. Developers and organizations must also establish clear governance policies to manage AI-generated code quality and compliance.
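One common way to guard against the stale or conflicting states noted in the table is optimistic version checking: each write carries the version it was based on, and the store rejects writes built on an outdated version. The sketch below is a generic illustration of that technique with an in-memory store. `SessionStore`, `SessionState`, and `StaleWriteError` are hypothetical names, and nothing here is claimed to reflect how Codex synchronizes sessions.

```python
from dataclasses import dataclass


class StaleWriteError(Exception):
    """Raised when a device writes from an outdated copy of the session."""


@dataclass
class SessionState:
    version: int = 0
    context: str = ""


class SessionStore:
    """In-memory stand-in for a cloud-side session store (hypothetical)."""

    def __init__(self) -> None:
        self._state = SessionState()

    def read(self) -> SessionState:
        # Return a copy so callers cannot mutate the stored state directly.
        return SessionState(self._state.version, self._state.context)

    def write(self, base_version: int, context: str) -> int:
        # Accept the write only if it was based on the current version.
        if base_version != self._state.version:
            raise StaleWriteError(
                f"based on v{base_version}, store is at v{self._state.version}"
            )
        self._state = SessionState(base_version + 1, context)
        return self._state.version
```

If a phone and a laptop both read version 0 and the phone writes first, the laptop's later write from version 0 raises `StaleWriteError`, signalling that it should re-read and merge before retrying.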

In conclusion, the advent of mobile-accessible OpenAI Codex represents more than just a technological upgrade; it signals a paradigm shift toward ubiquitous, context-aware, and collaborative AI-assisted development environments. This evolution promises to redefine software engineering workflows, enhance team synergy, and foster innovative practices that leverage the strengths of both human creativity and machine intelligence. For a deeper understanding of foundational AI coding technologies and their integration into modern development ecosystems, see OpenAI Launches GPT-5.5: A New Class of Intelligence for Real Work.
