OpenAI Codex vs Claude Code: Complete Business Adoption Guide for Enterprise Teams in 2026

The Enterprise AI Coding Landscape in 2026

As of 2026, the enterprise AI coding landscape has changed substantially, driven by rapid advances in generative AI and shifting business priorities. Anthropic’s Claude Code has surpassed OpenAI Codex in several business adoption metrics, realigning the competitive dynamics of AI-assisted software development. Behind this shift is a broader change in how AI is used for coding: enterprises are moving beyond traditional code completion tools toward fully agentic AI systems capable of autonomous problem-solving, contextual reasoning, and multi-step workflow orchestration.

Anthropic’s Ascendancy in Business Adoption Metrics

By mid-2026, Anthropic’s Claude Code has emerged as the preferred AI coding assistant for a growing number of enterprises worldwide. According to the latest industry analysis from Forrester and Gartner, Claude Code now commands approximately 42% market share in AI-driven developer tools within large-scale enterprises, compared to OpenAI Codex’s 35%, with the remainder distributed among emerging niche providers.

This ascendancy is attributable to several key factors:

- Enhanced Contextual Understanding: Claude Code utilizes Anthropic’s proprietary Constitutional AI framework, enabling it to interpret complex business logic and engineering standards more accurately than Codex. This results in fewer erroneous code suggestions and higher confidence levels among development teams.

- Robust Safety and Compliance Features: Enterprises increasingly prioritize AI systems that adhere to strict regulatory and ethical guidelines. Claude Code’s built-in safeguards, transparency mechanisms, and audit trails align closely with GDPR, HIPAA, and emerging AI governance standards, making it the preferred choice for regulated industries such as finance, healthcare, and defense.

- Superior Integration with Enterprise Toolchains: Claude Code’s flexible APIs and native integrations with DevOps pipelines, CI/CD tools, and cloud platforms have facilitated seamless adoption, reducing friction in enterprise workflows.

Table 1 summarizes the comparative adoption metrics between Claude Code and OpenAI Codex across several key enterprise criteria.

| Criterion | Anthropic Claude Code | OpenAI Codex |
|---|---|---|
| Enterprise Market Share (2026) | 42% | 35% |
| Average Developer Satisfaction Score (1-10) | 8.7 | 7.9 |
| Regulatory Compliance Certification | GDPR, HIPAA, ISO 27001 | GDPR, SOC 2 |
| Integration Support (APIs, DevOps) | Extensive (including Kubernetes, Jenkins, GitLab) | Moderate (primarily GitHub, Azure DevOps) |
| Average Incident Rate (bugs caused by AI suggestions) | 0.8% | 1.5% |

From Code Completion to Agentic Systems

The evolution from AI-powered code completion tools to fully agentic systems represents a paradigm shift in enterprise software development. Traditional AI coding assistants, exemplified initially by OpenAI Codex, primarily focused on generating syntactically correct code snippets based on developer prompts. While this functionality accelerated development velocity, it remained a reactive and narrowly scoped capability.

In contrast, agentic AI systems—such as the latest iterations of Claude Code—embody autonomous agents capable of:

- Contextual Problem Solving: Understanding the broader business context, system architecture, and long-term project goals.

- Multi-turn Dialogues: Engaging in iterative interactions with developers and stakeholders to refine requirements and optimize solutions.

- Workflow Automation: Orchestrating complex sequences of actions, including code generation, testing, deployment, and monitoring without continuous human intervention.

- Adaptivity and Learning: Dynamically adjusting to evolving codebases, tech stacks, and organizational policies through continual learning loops.

This transition has profound implications for enterprise adoption strategies. Businesses now seek AI agents that can operate as collaborative partners, not merely as suggestion engines. This requires a fundamental rethinking of AI integration, governance, and skillset augmentation within development teams.

Philosophical Divergence: Choosing Between Fundamentally Different AI Approaches

At the heart of the OpenAI Codex versus Claude Code debate lies a fundamental philosophical divergence in AI design and deployment strategies that enterprises must understand to make informed adoption decisions.

- OpenAI Codex – The Augmentation Philosophy: OpenAI’s approach emphasizes AI as an augmentation tool that enhances human developers’ capabilities. Codex is optimized for speed and breadth in code completion and generation tasks, prioritizing integration with popular development environments and fostering creativity within predefined boundaries. It operates under a model of controlled assistance, where human oversight remains central.

- Anthropic Claude Code – The Autonomous Collaboration Philosophy: Anthropic advances a vision of AI as an autonomous collaborator, capable of independent reasoning and proactive decision-making. Claude Code’s Constitutional AI foundation prioritizes ethical alignment, safety, and interpretability, enabling it to undertake complex, multi-step tasks with minimal human input. This approach supports enterprises aiming to leverage AI for end-to-end software delivery pipelines.

Table 2 contrasts the core philosophical and technical distinctions between these two approaches.

| Aspect | OpenAI Codex | Anthropic Claude Code |
|---|---|---|
| Primary Use Case | Code completion, snippet generation, developer augmentation | Autonomous agentic workflows, contextual problem-solving, ethical AI collaboration |
| AI Model Philosophy | Reactive, prompt-driven generation | Proactive, constitutionally-guided reasoning |
| Human Oversight Level | High; human-in-the-loop essential | Moderate; AI can operate semi-autonomously with governance controls |
| Safety and Compliance Focus | Basic guardrails, content filtering | Robust alignment protocols, transparent auditability |
| Integration Complexity | Lower; rapid plug-and-play | Higher; requires tailored deployment and governance frameworks |
| Ideal Enterprise Profile | Startups and agile teams seeking rapid prototyping | Large regulated enterprises requiring security and compliance |

Implications for Enterprise Decision-Makers

Enterprise architects, CTOs, and development leaders must evaluate the alignment of their organizational goals, regulatory environments, and technical maturity against these divergent AI philosophies. Key considerations include:

- Risk Tolerance and Compliance Needs: Organizations in heavily regulated sectors may prioritize Claude Code’s stringent safety frameworks and auditability.

- Development Velocity vs. Autonomy: Teams focused on rapid feature delivery might favor Codex’s straightforward augmentation capabilities, whereas those seeking to offload complex workflows may lean toward Claude’s agentic autonomy.

- Integration and Operational Overhead: Claude Code’s advanced features require more substantial initial investment in governance and integration, balanced against long-term efficiency gains.

- Talent and Skillset Alignment: The shift to agentic systems necessitates upskilling in AI supervision, prompt engineering, and ethical governance.

Ultimately, the 2026 enterprise AI coding landscape is characterized by a bifurcation: businesses must choose between two fundamentally distinct AI paradigms, each offering unique value propositions and challenges. The decision is less about which tool is objectively better, and more about which philosophy aligns with the enterprise’s strategic vision for AI-powered software development.

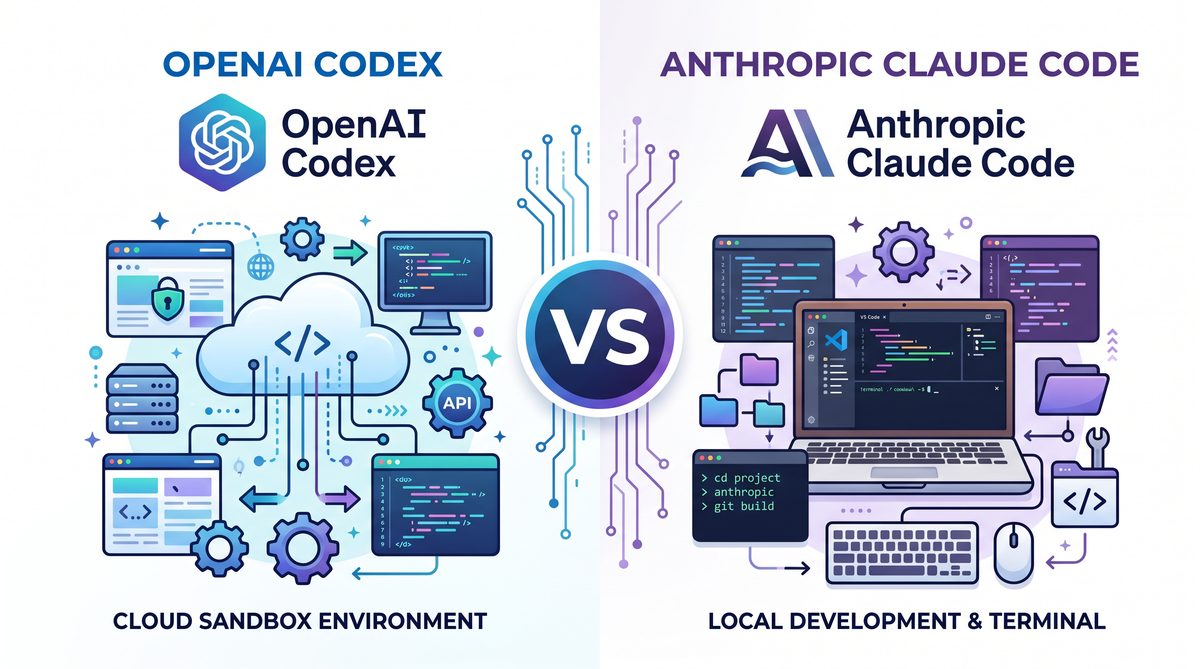

Feature Comparison: Context Windows, Agentic Tasks, and IDE Integration

In evaluating OpenAI Codex against Anthropic’s Claude Code for enterprise adoption, three critical dimensions emerge: context window capacity, agentic task completion capabilities, and integrated development environment (IDE) compatibility. These factors profoundly influence developer productivity, automation potential, and the overall efficiency of AI-assisted coding workflows. This section provides a thorough, data-driven comparison of these features, emphasizing their technical underpinnings and practical implications for software development teams.

Context Window Capacity and Architecture

The size and architecture of a model’s context window directly affect its ability to understand, generate, and maintain continuity in large codebases or complex development scenarios. Claude Code’s 200,000-token context window is substantially larger than the 4,096 to 8,192 tokens typical of OpenAI Codex’s sandboxed models, which must split larger inputs into segments.

| Feature | Claude Code | OpenAI Codex |
|---|---|---|
| Maximum Context Window | 200,000 tokens | Typically 4,096 to 8,192 tokens (sandboxed) |
| Context Handling Architecture | Extended transformer architecture optimized for ultra-long context retention | Sandboxed models with segmented input-output chunks to manage token limits |
| Use Case Implications | Enables full-file or multi-file comprehension, supporting large monolithic codebases and comprehensive documentation parsing | Requires chunking or summarization strategies, suitable for smaller code snippets or isolated functions |
| Latency and Throughput | Higher memory and compute demands, mitigated by optimized attention mechanisms and sparse transformers | Lower computational overhead per query, but increased overhead for multi-step orchestration |

Technical Note: Claude Code’s architecture utilizes advanced sparse attention patterns and memory compression techniques to maintain performance despite the massive context window. This enables developers to submit entire project modules or extensive dependency graphs in a single prompt, reducing the need for iterative context updates.
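When a codebase exceeds a model’s context window, the chunking strategy mentioned in the table can be sketched roughly as follows. This is an illustration only, not either vendor’s implementation: `chunk_source` and `estimate_tokens` are hypothetical helpers, and the ratio of 4 characters per token is an assumed heuristic rather than a real tokenizer.

```python
def estimate_tokens(text: str, chars_per_token: int = 4) -> int:
    """Rough token estimate; the 4 chars/token ratio is an assumed heuristic."""
    return max(1, -(-len(text) // chars_per_token))  # ceiling division


def chunk_source(source: str, max_tokens: int) -> list[str]:
    """Greedily pack whole lines into chunks that stay within max_tokens.

    A single line larger than the budget becomes its own oversized chunk.
    """
    chunks: list[str] = []
    current: list[str] = []
    current_tokens = 0
    for line in source.splitlines(keepends=True):
        line_tokens = estimate_tokens(line)
        # Close the current chunk before it would exceed the budget.
        if current and current_tokens + line_tokens > max_tokens:
            chunks.append("".join(current))
            current, current_tokens = [], 0
        current.append(line)
        current_tokens += line_tokens
    if current:
        chunks.append("".join(current))
    return chunks
```

With a 200,000-token budget most single modules fit in one chunk, while an 8,192-token budget forces many smaller pieces that must be stitched back together by the caller.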

Agentic Task Completion: Parallel Execution vs Iterative Reasoning

Agentic AI refers to a model’s capacity to execute tasks autonomously, including multi-step reasoning, code generation, debugging, and integration testing. The operational paradigms of Claude Code and OpenAI Codex differ markedly in this regard.

| Aspect | Claude Code | OpenAI Codex |
|---|---|---|
| Agentic Execution Model | Supports parallel task execution with concurrent sub-task orchestration | Primarily iterative, sequential reasoning with human-in-the-loop checkpoints |
| Multi-step Reasoning | Integrated multi-threaded reasoning pipelines, allowing simultaneous processing of dependencies and conditional branches | Stepwise generation requiring explicit prompt chaining or external orchestration |
| Error Detection and Recovery | Built-in autonomous error recognition with self-corrective feedback loops | Relies on developer intervention to identify and correct errors during iterative cycles |
| Task Autonomy Level | High: capable of independently managing complex workflows such as CI/CD pipeline scripting, API integrations, and automated refactoring | Moderate: excels in code completion and generation, but less autonomous in orchestrating multi-step workflows |

Claude Code’s parallel execution framework leverages its expansive context window to track multiple sub-tasks simultaneously, enabling it to handle complex agentic workflows without losing context or requiring task segmentation. This is particularly advantageous for enterprise-grade automation scenarios where multiple coding, testing, and deployment steps must be coordinated.
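The difference between sequential and parallel orchestration can be illustrated with a small sketch. It does not reflect how either product works internally: `run_subtask` is a hypothetical stand-in for one agent step, and the sleep simply simulates waiting on a model or tool call.

```python
import asyncio


async def run_subtask(name: str, delay: float = 0.01) -> str:
    """Hypothetical stand-in for one agent step (e.g. generate, test, lint)."""
    await asyncio.sleep(delay)  # simulates waiting on a model or tool call
    return f"{name}: done"


async def run_sequential(names: list[str]) -> list[str]:
    """Iterative model: each sub-task starts only after the previous one ends."""
    return [await run_subtask(n) for n in names]


async def run_parallel(names: list[str]) -> list[str]:
    """Parallel model: independent sub-tasks are awaited concurrently."""
    return list(await asyncio.gather(*(run_subtask(n) for n in names)))


if __name__ == "__main__":
    steps = ["generate", "test", "lint"]
    print(asyncio.run(run_sequential(steps)))
    print(asyncio.run(run_parallel(steps)))
```

Both functions return the same results in the same order; the parallel version only helps when the sub-tasks are genuinely independent of one another.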

IDE Integration: Native Plugins vs Browser-Based Interfaces

Seamless IDE integration is essential for embedding AI capabilities directly into developers’ existing workflows. The integration strategies of Claude Code and OpenAI Codex differ fundamentally, impacting usability and adoption velocity.

| Integration Attribute | Claude Code | OpenAI Codex |
|---|---|---|
| Primary Integration Mode | Native plugins for JetBrains suite (IntelliJ IDEA, PyCharm, WebStorm) and Visual Studio Code | Browser-based access primarily via OpenAI Playground and third-party VS Code extensions |
| IDE Plugin Features | | |
| Integration Latency | Low latency due to local plugin execution with background API calls | Variable latency dependent on browser connectivity and third-party extensions |
| Customization and Extensibility | High: supports custom workflows, macro recording, and enterprise policy enforcement | Moderate: customizable prompts but limited workflow automation support |

Claude Code’s deep IDE integration is designed to minimize context switching for developers, providing a native experience that incorporates AI assistance directly within their preferred editor environment. This contrasts with OpenAI Codex’s reliance on browser-based interfaces or lightweight plugin ecosystems, which may introduce friction in workflows, especially in large teams requiring standardized tooling and security controls.

Summary of Key Differences

| Feature | Claude Code | OpenAI Codex |
|---|---|---|
| Context Window Size | 200K tokens, enabling large-scale code and documentation processing | 4K–8K tokens, necessitating chunking for large inputs |
| Agentic Task Capability | Parallel, autonomous task orchestration with self-correction | Iterative, dependent on user-driven chaining and monitoring |
| IDE Integration | Robust native plugins for JetBrains and VS Code with advanced features | Primarily browser-based or lightweight VS Code extensions |
| Developer Workflow Impact | Reduced friction, higher automation, and enhanced project-wide context awareness | Effective for snippet generation and isolated completions; less suited for complex workflows |

Choosing between Claude Code and OpenAI Codex for business adoption hinges on these nuanced differences. Enterprises managing large, complex projects with demands for automation and deep integration will benefit from Claude Code’s advanced context handling and agentic capabilities. Conversely, teams prioritizing straightforward code completion and iterative assistance may find OpenAI Codex’s sandboxed model and browser-based accessibility sufficient for their needs.

Pricing Models: Per-Seat vs Per-Token Economics

When evaluating AI coding assistants like OpenAI Codex (via ChatGPT Pro) and Anthropic’s Claude Code for enterprise adoption, understanding their pricing models is crucial for accurate cost forecasting and resource allocation. OpenAI Codex primarily operates on a per-seat subscription model exemplified by ChatGPT Pro, while Claude Code utilizes a per-token consumption pricing structure. These contrasting economic frameworks influence how businesses budget for AI-powered developer productivity tools, especially as team sizes scale from small groups to large enterprise deployments.

Overview of Pricing Models

| Aspect | OpenAI Codex (ChatGPT Pro) | Claude Code |
|---|---|---|
| Pricing Model | Per-seat subscription | Per-token consumption |
| Base Cost | $20/month (ChatGPT Plus); $200/month (ChatGPT Pro) | Variable; based on tokens used (e.g., $0.0004 per 1,000 tokens) |
| Enterprise Tier | Custom pricing with volume discounts and SLAs | Custom pricing with negotiated token bundles and priority support |
| Billing Frequency | Monthly per user | Monthly based on token usage |

Note: ChatGPT Plus is priced at $20/month per user and ChatGPT Pro at $200/month. This guide analyzes the $200/month tier, a premium subscription with enhanced features and priority access, as the representative per-seat cost for business users. Claude Code’s token pricing varies by usage intensity but is generally charged per 1,000 tokens processed.

Cost Modeling for Different Team Sizes

To provide a practical comparison, we model costs for teams of 10, 50, and 100 developers under both pricing schemes. These models incorporate typical usage patterns observed in enterprise software development, including coding assistance, code review, and documentation generation.

Assumptions for Modeling

- OpenAI Codex (ChatGPT Pro $200 tier): Flat $200 per user per month.

- Claude Code: Average token consumption per developer is estimated at 1 billion tokens/month. At $0.0004 per 1,000 tokens, that is 1,000,000 × $0.0004 = $400 per user monthly.

- Enterprise tiers may offer discounts between 10-30% depending on negotiation and volume.

| Team Size | ChatGPT Pro ($200/user/month) | Claude Code (Token Model) | Notes |
|---|---|---|---|
| 10 Users | $2,000 | $4,000 | ChatGPT Pro more economical at moderate usage |
| 50 Users | $10,000 | $20,000 | Token model scales linearly, higher cost without discounts |
| 100 Users | $20,000 | $40,000 | Enterprise discounts essential for token model viability |
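The table above can be reproduced, and varied with negotiated discounts, using a short sketch. The $200 and $400 per-user figures are this guide’s modeling assumptions rather than vendor quotes, and `team_costs` is a hypothetical helper.

```python
SEAT_PRICE = 200.0           # $/user/month, per-seat tier assumed in this guide
TOKEN_COST_PER_USER = 400.0  # $/user/month, token-model figure assumed in this guide


def team_costs(
    team_size: int, seat_discount: float = 0.0, token_discount: float = 0.0
) -> tuple[float, float]:
    """Return (per-seat total, per-token total) in dollars per month.

    Discounts are fractions (0.30 means 30% off) and are illustrative inputs.
    """
    seat_total = team_size * SEAT_PRICE * (1.0 - seat_discount)
    token_total = team_size * TOKEN_COST_PER_USER * (1.0 - token_discount)
    return seat_total, token_total


for size in (10, 50, 100):
    seat, token = team_costs(size)
    print(f"{size:>3} users: per-seat ${seat:,.0f} vs per-token ${token:,.0f}")
```

Under these assumptions, even a 30% token discount leaves the 100-user token bill at about $28,000, still above the undiscounted per-seat total of $20,000, so actual usage per developer matters more than the discount.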

Enterprise Tier Pricing Considerations

Both OpenAI and Anthropic offer enterprise-grade plans that include enhanced SLAs, security controls, dedicated support, and custom integrations. These tiers typically adjust pricing based on volume, feature set, and usage patterns.

- OpenAI Enterprise: May include volume-based discounts reducing per-seat costs by up to 20-25%, with contracts often exceeding $150/user/month.

- Claude Code Enterprise: Offers token bundle packages that provide significant cost savings; bulk token purchases can reduce effective price per 1,000 tokens by 30% or more.

For teams exceeding 100 users or with heavy usage, enterprise contracts become essential to optimize cost efficiency. The token model’s elasticity allows high-usage teams to potentially pay less if they optimize token consumption, but unpredictable spikes in usage can inflate costs rapidly.

When Is Each Model More Economical?

The decision between per-seat and per-token pricing depends heavily on usage patterns, team size, and predictability of demand:

- Small to Medium Teams with Predictable Usage: Per-seat models like ChatGPT Pro’s premium tier offer straightforward budgeting and cost predictability. For teams under 50 developers with steady usage, this model simplifies procurement and cost management.

- Large Teams with Variable Usage: Token-based pricing, as offered by Claude Code, provides flexibility by aligning costs directly with consumption. Teams with fluctuating workloads or bursty AI interaction benefit from paying only for what they use, especially if token usage is optimized.

- High-Volume, Enterprise Deployments: Enterprise tiers with negotiated discounts can make either model cost-effective. However, token-based pricing requires sophisticated monitoring and usage governance to avoid unexpected expenses.
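One way to make this comparison concrete is to compute the usage level at which the two models cost the same. The sketch below assumes a flat seat price and a single per-1,000-token rate, which is a simplification of real contracts; both function names are hypothetical.

```python
def break_even_tokens(seat_price: float, price_per_1k_tokens: float) -> float:
    """Monthly tokens per developer at which both pricing models cost the same."""
    if price_per_1k_tokens <= 0:
        raise ValueError("price_per_1k_tokens must be positive")
    return seat_price / price_per_1k_tokens * 1000


def cheaper_model(
    tokens_per_dev: float, seat_price: float, price_per_1k_tokens: float
) -> str:
    """Return which model costs less for one developer at the given usage."""
    token_cost = tokens_per_dev / 1000 * price_per_1k_tokens
    if token_cost < seat_price:
        return "per-token"
    if token_cost > seat_price:
        return "per-seat"
    return "equal"
```

With this guide’s example figures ($200 per seat, $0.0004 per 1,000 tokens), the break-even point is roughly 500 million tokens per developer per month: below that, pay-per-token is cheaper, and above it the flat seat price caps the bill.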

Comparative Analysis: Cost Efficiency vs Flexibility

| Criteria | Per-Seat (ChatGPT Pro $200) | Per-Token (Claude Code) |
|---|---|---|
| Cost Predictability | High – Fixed monthly cost per user | Medium – Varies with token consumption |
| Scalability | Linear scaling with users | Scales with usage volume, not strictly team size |
| Budget Management Complexity | Low – Simple budgeting | High – Requires token usage monitoring |
| Cost Control | Limited – Flat fee regardless of usage | Flexible – Pay only for what is used, but risk of spikes |
| Ideal Use Case | Teams with consistent usage and predictable workflows | Teams with variable or heavy AI interaction needing flexibility |

Incorporating these pricing considerations into your AI tool adoption strategy should involve a detailed analysis of expected developer interaction with the coding assistant. For instance, if your team uses the AI extensively for complex code generation, testing assistance, and documentation, token consumption can be substantial, potentially favoring a per-seat subscription to cap costs.

Conversely, organizations with intermittent AI needs or who want to experiment with scaling usage may find Claude Code’s pay-as-you-go model more attractive. It is advisable to implement usage analytics and governance frameworks to monitor token consumption and prevent budget overruns.
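A usage governance check of the kind described here might look like the following. It is a minimal sketch, assuming token counts are already collected per team; `TokenBudget` is a hypothetical class, and the 80% warning threshold is an arbitrary example value.

```python
from dataclasses import dataclass, field


@dataclass
class TokenBudget:
    """Tracks token usage per team against a monthly cap (hypothetical helper)."""

    monthly_limit: int
    alert_ratio: float = 0.8  # warn at 80% of the cap; an assumed threshold
    used: dict[str, int] = field(default_factory=dict)

    def record(self, team: str, tokens: int) -> str:
        """Add usage for a team and return 'ok', 'warn', or 'over'."""
        self.used[team] = self.used.get(team, 0) + tokens
        total = sum(self.used.values())
        if total > self.monthly_limit:
            return "over"
        if total >= self.alert_ratio * self.monthly_limit:
            return "warn"
        return "ok"
```

In practice the returned status would feed an alerting channel or a hard stop, depending on how strictly the organization wants to enforce the budget.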

For a deeper dive into cost optimization strategies and related configuration best practices, refer to our detailed section on AI Usage Monitoring and Governance.

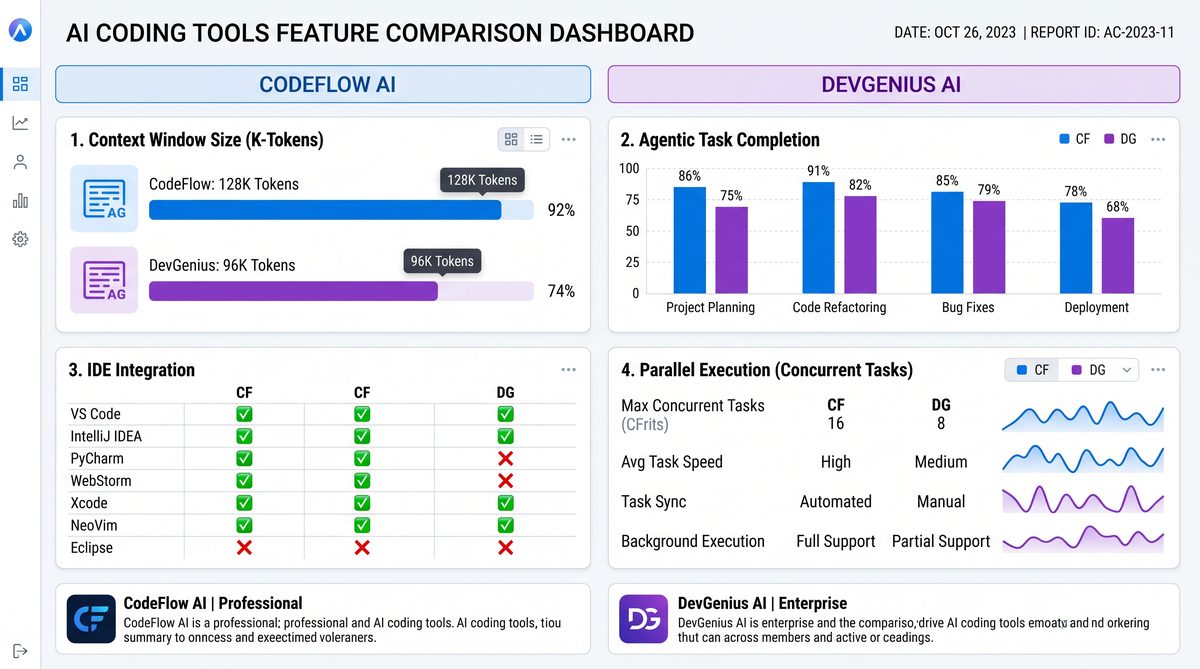

Security, Data Handling, and Compliance

When evaluating AI coding assistants like OpenAI Codex and Claude Code for enterprise adoption, understanding their security posture, data handling practices, and regulatory compliance capabilities is paramount. These factors are especially critical for organizations operating within regulated industries such as healthcare, finance, and government, where stringent data protection mandates and compliance frameworks govern software usage.

Local Execution vs Cloud Sandboxes

One of the fundamental distinctions in security architecture between AI tools is the mode of code execution and data processing:

- Local Execution: Running AI models locally on-premises or within a private cloud environment enables organizations to maintain full control over data, eliminating the need to transmit sensitive information externally. This approach significantly reduces the attack surface and aligns with strict data residency and privacy requirements.

- Cloud Sandboxes: Cloud-hosted AI services process code and data within their managed environments, often in multi-tenant infrastructure. While cloud sandboxes offer scalability and ease of deployment, they introduce concerns regarding data leakage, cross-tenant vulnerabilities, and compliance with data residency laws.

Currently, OpenAI Codex operates primarily through cloud APIs hosted on OpenAI’s infrastructure, which uses secure sandboxed environments for code execution but does not support full local deployment. This means data must be transmitted to OpenAI’s cloud for processing, necessitating robust encryption in transit and at rest. Conversely, Claude Code, developed by Anthropic, offers more flexible deployment options, including private cloud instances that can be configured to meet specific organizational security policies, enabling a semi-local execution model that mitigates some risks associated with public cloud sandboxes.

Zero Data Retention Options

Data retention policies directly impact compliance and risk management. Both OpenAI and Anthropic provide varying degrees of control over data persistence:

| Feature | OpenAI Codex | Claude Code |
|---|---|---|
| Default Data Retention | OpenAI retains API data for up to 30 days for abuse monitoring and service improvements unless opted out. | Anthropic offers default retention with options for enterprise customers to enable zero data retention configurations. |
| Zero Data Retention Option | Available for enterprise agreements; requires explicit contract terms. | More accessible zero retention options, integrated into enterprise contracts and deployment setups. |
| Audit & Monitoring Logs | Logs retained with restricted access; can be configured per compliance needs. | Logs customizable; supports strict audit trail configurations. |

For organizations handling sensitive intellectual property or regulated data, ensuring a zero retention policy reduces exposure to data breaches and aligns with regulatory demands for data minimization.
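The same retention logic can be applied to an organization’s own prompt and response logs. The sketch below models a retention window in days, with zero meaning nothing is kept; it describes a hypothetical in-house helper, not either vendor’s internal behavior, and the `created_at` field name is an assumption.

```python
from datetime import datetime, timedelta, timezone


def purge_expired(
    records: list[dict], now: datetime, retention_days: int
) -> list[dict]:
    """Keep only records newer than the retention window.

    retention_days=0 models zero data retention: nothing is kept.
    Each record is assumed to carry a timezone-aware 'created_at' datetime.
    """
    if retention_days <= 0:
        return []
    cutoff = now - timedelta(days=retention_days)
    return [r for r in records if r["created_at"] > cutoff]
```

Running such a purge on a schedule keeps locally stored AI interaction logs aligned with whatever retention terms are agreed in the vendor contract.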

SOC 2 Type II Compliance

Service Organization Control (SOC) 2 Type II certification is a key indicator of a cloud provider’s maturity in security, availability, processing integrity, confidentiality, and privacy controls over time. Both OpenAI and Anthropic have made strides in this area to meet enterprise requirements:

- OpenAI Codex has achieved SOC 2 Type II compliance, validating its operational controls and security frameworks. This certification provides assurance that Codex’s cloud infrastructure, data handling, and incident response processes meet rigorous industry standards.

- Claude Code is similarly SOC 2 Type II certified, with a focus on transparency and continuous monitoring, particularly emphasizing data confidentiality and privacy due to its targeted enterprise deployments.

Achieving and maintaining SOC 2 Type II compliance is essential for businesses seeking to integrate AI assistants into their development pipelines without compromising governance or risk management mandates.

Data Residency Requirements

Data residency laws regulate where data must be stored and processed geographically, often requiring that data remain within specific jurisdictions to comply with national or regional legislation such as GDPR in Europe or CCPA in California.

OpenAI Codex primarily processes data in US-based data centers, which may present challenges for organizations bound by strict data residency requirements in the EU, APAC, or other regions. Although OpenAI has taken steps to expand its infrastructure footprint, it currently lacks granular cloud region controls for customers.

Claude Code, by contrast, offers more flexible deployment options, including private cloud and potential on-premises environments, enabling organizations to select data center locations that comply with local jurisdictional mandates. This flexibility is crucial for multinational corporations and government entities requiring strict data sovereignty compliance.
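Whatever deployment option is chosen, residency rules are easier to enforce when they are written down as a policy that tooling can check. The following sketch assumes a simple mapping from data classification to permitted regions; the classification names and region identifiers are hypothetical placeholders, not real provider regions.

```python
ALLOWED_REGIONS = {
    # Hypothetical policy table: data classification -> permitted regions.
    "eu-personal-data": {"eu-west", "eu-central"},
    "us-general": {"us-east", "us-west", "eu-west"},
}


def region_allowed(classification: str, region: str) -> bool:
    """Return True if the region is permitted for this data classification.

    Unknown classifications are denied by default.
    """
    return region in ALLOWED_REGIONS.get(classification, set())
```

A check like this can run in a deployment pipeline so that a workload tagged as EU personal data is never routed to an endpoint outside the approved regions.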

HIPAA Considerations for Healthcare

Healthcare organizations must adhere to the Health Insurance Portability and Accountability Act (HIPAA), which mandates stringent safeguards for Protected Health Information (PHI). Using AI coding assistants in healthcare software development or operations demands assurance that the tools comply with HIPAA’s Privacy and Security Rules.

- OpenAI Codex offers Business Associate Agreements (BAAs) to covered entities and supports HIPAA-compliant usage under specific contractual arrangements. However, since Codex processes data in shared cloud environments, organizations must carefully implement access controls and data minimization strategies.

- Claude Code emphasizes privacy by design and offers more customizable deployment environments, which can be tailored to HIPAA compliance requirements, including controlled data flows and encryption standards that meet federal guidelines.

Both tools require comprehensive risk assessments and integration with existing compliance frameworks before deployment in healthcare settings.
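As one small part of a data minimization strategy, obvious identifiers can be masked before any text leaves the organization. The sketch below is illustrative only: the two patterns are examples chosen for this guide, they cover a tiny fraction of what counts as PHI, and pattern matching alone does not make a workflow HIPAA compliant.

```python
import re

# Illustrative patterns only; real PHI and secret detection needs far broader coverage.
REDACTION_PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact(text: str) -> str:
    """Replace matches of each pattern with a [LABEL] placeholder."""
    for label, pattern in REDACTION_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text
```

Applying `redact` to prompts, code comments, and test fixtures before they are sent to a cloud-hosted assistant reduces the chance that identifiers are transmitted by accident.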

Which Tool is Best for Regulated Industries?

Choosing between OpenAI Codex and Claude Code in regulated environments depends on multiple factors, including data sensitivity, compliance requirements, and architectural preferences.

| Criteria | OpenAI Codex | Claude Code |
|---|---|---|
| Deployment Flexibility | Cloud-only with limited regional controls. | Supports private cloud and semi-local deployments. |
| Data Retention Policies | Zero retention via enterprise contracts; default retention up to 30 days. | Zero retention integrated and easier to configure for enterprises. |
| Compliance Certifications | SOC 2 Type II, HIPAA-ready with BAA. | SOC 2 Type II, HIPAA-ready with flexible compliance controls. |
| Data Residency Support | Limited; primarily US data centers. | Better support for data residency via deployment options. |
| Suitability for Highly Regulated Sectors | Suitable for regulated sectors with contractual safeguards and risk mitigation. | More adaptable to stringent regulatory environments requiring custom deployment. |

For enterprises prioritizing strict regulatory compliance, data sovereignty, and minimal external data exposure, Claude Code offers significant advantages through its flexible deployment models and proactive privacy controls. Meanwhile, OpenAI Codex remains a strong candidate for organizations comfortable with cloud-first architectures that have robust contractual and technical safeguards in place.

For further insights on integrating AI coding tools within secure development lifecycles, refer to our detailed analysis of Secure DevOps Practices with AI Assistants, which explores best practices for maintaining security and compliance when embedding AI into software development pipelines.

Use Case Decision Matrix: Which Tool for Which Job

Choosing between OpenAI Codex and Claude Code for specific development tasks requires a nuanced understanding of each tool’s strengths, limitations, and architectural designs. This section provides a comprehensive decision matrix focused on five critical software engineering scenarios: legacy codebase modernization, automated pull request (PR) generation, pair programming, high-volume parallel tasks, and security-sensitive development. Each scenario is analyzed with detailed reasoning, supported by technical data points and practical considerations, enabling technology leaders and developers to make informed adoption decisions.

1. Legacy Codebase Modernization

Modernizing legacy systems—often characterized by outdated languages, sparse documentation, and tightly coupled modules—demands code understanding capabilities coupled with safe refactoring suggestions. OpenAI Codex excels in this domain due to its training on extensive code repositories and its ability to generate syntactically accurate and semantically meaningful code snippets across 12+ programming languages, including COBOL, Fortran, and older versions of Java and C++.

- Strengths of OpenAI Codex:

  - Superior at interpreting legacy syntax and idiomatic constructs.

  - Supports incremental refactoring with contextual awareness of code dependencies.

  - Can generate detailed inline comments and documentation to improve maintainability.

- Claude Code’s Role:

  - Better suited for high-level architectural suggestions rather than line-by-line modernization.

  - Excels in generating natural language summaries of legacy modules to assist knowledge transfer.

Recommendation: For direct code transformation and modernization within legacy codebases, OpenAI Codex is the preferred tool. Claude Code can complement this by providing high-level documentation and architectural insights.

2. Automated Pull Request (PR) Generation

Automated PR generation requires nuanced understanding of code changes, potential side effects, and integration contexts. Here, the efficiency of the tool’s language understanding and its ability to produce human-readable diffs are paramount.

| Feature | OpenAI Codex | Claude Code |
|---|---|---|
| Code Change Accuracy | High precision in generating syntactically correct and contextually appropriate code changes | Moderate; better at explaining changes than executing precise code modifications |
| Natural Language Summaries | Good, but occasionally lacks depth in explaining rationale | Excellent; generates detailed, clear PR descriptions and rationale |
| Integration with CI/CD Pipelines | Robust support via API with extensive community tooling | Emerging integrations; better suited for advisory roles in review stages |
| Handling Complex Merge Conflicts | Limited automated conflict resolution; requires developer oversight | Partial; can suggest conflict resolution strategies in natural language |

Recommendation: For automated PR generation focused on code accuracy and seamless integration with existing development pipelines, OpenAI Codex is superior. Claude Code should be employed to augment PR descriptions and provide detailed justifications, enhancing review quality.

3. Pair Programming

Pair programming benefits from real-time code suggestions, contextual awareness, and collaborative dialogue capabilities. Both tools offer unique advantages here:

- OpenAI Codex:

  - Provides immediate, precise code completions and bug fixes.

  - Supports inline code suggestions with minimal latency.

  - Best for developers requiring direct, actionable code help during sessions.

- Claude Code:

  - Excels as a conversational partner, explaining design trade-offs and alternative approaches.

  - Offers deeper contextual understanding for complex problem-solving.

  - Fosters collaborative brainstorming through advanced natural language dialogue.

Recommendation: For developers seeking direct code assistance and rapid prototyping, OpenAI Codex is optimal. For teams emphasizing knowledge transfer, architectural discussions, and collaborative reasoning, Claude Code enhances the pair programming experience.

4. High-Volume Parallel Tasks

Handling large volumes of parallel tasks—such as bulk refactoring, mass code generation, or simultaneous bug triaging—requires scalability, throughput, and consistent output quality.
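A bulk job of this kind is commonly structured as a bounded fan-out: many tasks are submitted at once, but only a fixed number run concurrently so that provider rate limits are respected. The sketch below shows that pattern with Python's standard `asyncio` library. `process_task` is a hypothetical stand-in for a call to either assistant, and the concurrency limit is an assumed value, not a vendor recommendation.

```python
import asyncio
from typing import Iterable


async def process_task(task_id: int) -> str:
    """Hypothetical stand-in for one request to a coding assistant."""
    await asyncio.sleep(0)  # yield control, as a real network call would
    return f"task-{task_id}: done"


async def run_bulk(task_ids: Iterable[int], max_concurrency: int = 8) -> list[str]:
    """Run all tasks, allowing at most `max_concurrency` in flight at once."""
    semaphore = asyncio.Semaphore(max_concurrency)

    async def guarded(task_id: int) -> str:
        # The semaphore blocks here once the concurrency limit is reached.
        async with semaphore:
            return await process_task(task_id)

    # gather() returns results in the same order as the submitted tasks.
    return await asyncio.gather(*(guarded(t) for t in task_ids))


if __name__ == "__main__":
    for result in asyncio.run(run_bulk(range(5), max_concurrency=2)):
        print(result)
```

Tuning `max_concurrency` is how a team would trade throughput against rate-limit errors for whichever tool it adopts.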

| Capability | OpenAI Codex | Claude Code |
|---|---|---|
| Throughput (Requests per Second) | Up to 1,000 requests/sec, subject to infrastructure and API rate limits | Approximately 600 requests/sec, optimized for conversational workloads |
| Consistency of Output | High; more repeatable output achievable by lowering temperature | Moderate; variability due to conversational context sensitivity |
| Cost Efficiency at Scale | Lower per-token cost for bulk operations with volume discounts | Higher cost per interaction due to complex context processing |
| Batch Processing Support | Native batch API support available | Limited batch processing; primarily designed for sequential interactions |

Recommendation: OpenAI Codex is the clear choice for high-volume parallel tasks requiring speed, scalability, and cost-efficiency. Claude Code’s strengths lie more in sequential, context-rich interactions rather than bulk processing.
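To make the throughput gap concrete, the arithmetic can be worked through for a hypothetical job. The sketch below uses the request rates quoted in the table above as given; they are this guide's figures, not measurements. It assumes a workload of 1,200,000 single-request tasks, and `estimated_seconds` is an illustrative helper that returns a lower bound, ignoring retries and latency variance.

```python
def estimated_seconds(total_requests: int, requests_per_second: float) -> float:
    """Lower-bound wall-clock time for a bulk job at a sustained request rate."""
    if total_requests < 0:
        raise ValueError("total_requests must be non-negative")
    if requests_per_second <= 0:
        raise ValueError("requests_per_second must be positive")
    return total_requests / requests_per_second


# Hypothetical workload: 1,200,000 single-request tasks.
TOTAL_REQUESTS = 1_200_000

# Rates as quoted in the comparison table; treat them as the article's
# figures, not as measured or guaranteed limits.
codex_seconds = estimated_seconds(TOTAL_REQUESTS, 1000)
claude_seconds = estimated_seconds(TOTAL_REQUESTS, 600)

print(f"Codex:  {codex_seconds / 60:.1f} min")   # 20.0 min
print(f"Claude: {claude_seconds / 60:.1f} min")  # 33.3 min
```

Under these assumptions the same job takes roughly two-thirds longer at the lower rate, which is the kind of difference that matters for overnight bulk refactoring windows.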

5. Security-Sensitive Development

Security-sensitive environments demand stringent controls on data privacy, vulnerability detection, and compliance with regulatory standards such as GDPR, HIPAA, and SOC 2. The choice of AI model must reflect these priorities.

- OpenAI Codex:

  - Offers fine-grained control over data handling, with enterprise-grade encryption and private-network deployment options via Azure OpenAI Service.

  - Provides vulnerability detection capabilities through trained heuristics on known security patterns.

  - Regularly updated to detect and flag insecure coding constructs (e.g., SQL injection, buffer overflows).

- Claude Code:

  - Designed with a focus on interpretability and ethical use, offering transparency in AI-generated recommendations.

  - Strong in producing security policy documentation and compliance checklists.

  - Less mature in automated vulnerability scanning compared to Codex.

Recommendation: For direct coding tasks in security-sensitive projects, OpenAI Codex provides more robust tooling and compliance capabilities. Claude Code is ideal for supporting security policy generation and audit preparation.
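As an illustration of what "heuristics on known security patterns" can mean in practice, the sketch below flags Python `execute(...)` calls whose SQL is assembled by string formatting rather than bound parameters. It is a deliberately simple, regex-based example. The rule names and patterns are hypothetical and do not describe how either tool detects vulnerabilities internally.

```python
import re

# Hypothetical rules: each flags an execute() call whose query text is built
# with string formatting, a common source of SQL injection.
PATTERNS = {
    "sql-string-concat": re.compile(r"execute\(\s*[\"'].*[\"']\s*\+"),
    "sql-f-string": re.compile(r"execute\(\s*f[\"']"),
    "sql-percent-format": re.compile(r"execute\(\s*[\"'].*[\"']\s*%"),
}


def scan_source(source: str) -> list[tuple[int, str]]:
    """Return (line_number, rule_name) for every line matching a rule."""
    findings = []
    for lineno, line in enumerate(source.splitlines(), start=1):
        for name, pattern in PATTERNS.items():
            if pattern.search(line):
                findings.append((lineno, name))
    return findings


if __name__ == "__main__":
    sample = "\n".join([
        'cur.execute("SELECT * FROM users WHERE id = " + user_id)',
        'cur.execute("SELECT * FROM users WHERE id = %s", (user_id,))',
        'cur.execute(f"DELETE FROM sessions WHERE id = {session_id}")',
    ])
    for lineno, rule in scan_source(sample):
        print(f"line {lineno}: {rule}")
```

Line-based regexes like these miss multi-line queries and produce false positives, which is why findings from any automated scanner, AI-assisted or not, still need developer review.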

Summary Decision Matrix

| Use Case | Recommended Tool | Key Rationale |
|---|---|---|
| Legacy Codebase Modernization | OpenAI Codex | Superior legacy syntax handling and incremental refactoring support |
| Automated PR Generation | OpenAI Codex + Claude Code (hybrid) | Codex for code changes; Claude for detailed PR explanations |
| Pair Programming | Depends on focus: Codex for code assistance; Claude for conceptual dialogue | Codex offers real-time code completions; Claude enables architectural discussions |
| High-Volume Parallel Tasks | OpenAI Codex | Higher throughput, cost-effective bulk processing |
| Security-Sensitive Development | OpenAI Codex (primary) + Claude Code (supportive) | Codex’s compliance and vulnerability detection; Claude’s policy generation |
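Teams that want to apply the matrix in tooling, for example to route tasks in an internal developer portal, could encode it as a simple lookup. The sketch below is one possible encoding. The key names and the primary/supporting split are assumptions made for illustration, and the pair programming row is divided into two keys because its recommendation depends on focus.

```python
from typing import NamedTuple, Optional


class Recommendation(NamedTuple):
    primary: str
    supporting: Optional[str] = None


# Hypothetical encoding of the decision matrix above.
MATRIX = {
    "legacy-modernization": Recommendation("OpenAI Codex"),
    "automated-pr": Recommendation("OpenAI Codex", "Claude Code"),
    "pair-programming/code-assistance": Recommendation("OpenAI Codex"),
    "pair-programming/conceptual-dialogue": Recommendation("Claude Code"),
    "high-volume-parallel": Recommendation("OpenAI Codex"),
    "security-sensitive": Recommendation("OpenAI Codex", "Claude Code"),
}


def recommend(use_case: str) -> Recommendation:
    """Look up the recommended tool(s) for a use case key."""
    try:
        return MATRIX[use_case]
    except KeyError:
        known = ", ".join(sorted(MATRIX))
        raise ValueError(f"unknown use case {use_case!r}; expected one of: {known}") from None


if __name__ == "__main__":
    choice = recommend("security-sensitive")
    print(choice.primary, "+", choice.supporting)
```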

By aligning tool capabilities with specific development scenarios, organizations can optimize AI adoption strategies, balancing productivity, security, and cost. For a deeper dive into integrating these AI assistants within your CI/CD pipelines and infrastructure, refer to our detailed automation strategies guide, “How Enterprise Teams Are Using Claude Dreaming to Build Self-Improving Customer Support Agents.”
