Inside Anthropic’s Claude Code Postmortem: Three Engineering Failures That Shook Developer Trust

Inside Anthropic’s Claude Code Postmortem: Three Engineering Failures That Shook Developer Trust

[IMAGE_PLACEHOLDER_HEADER]

In the fast-paced world of artificial intelligence, trust remains the cornerstone for widespread adoption and continued innovation. Large Language Models (LLMs) such as Anthropic’s Claude and OpenAI’s ChatGPT have become essential tools for developers, aiding in everything from code generation to complex problem-solving and data analysis. Yet, as powerful as these AI systems are, even subtle lapses can significantly impact productivity and developer confidence.

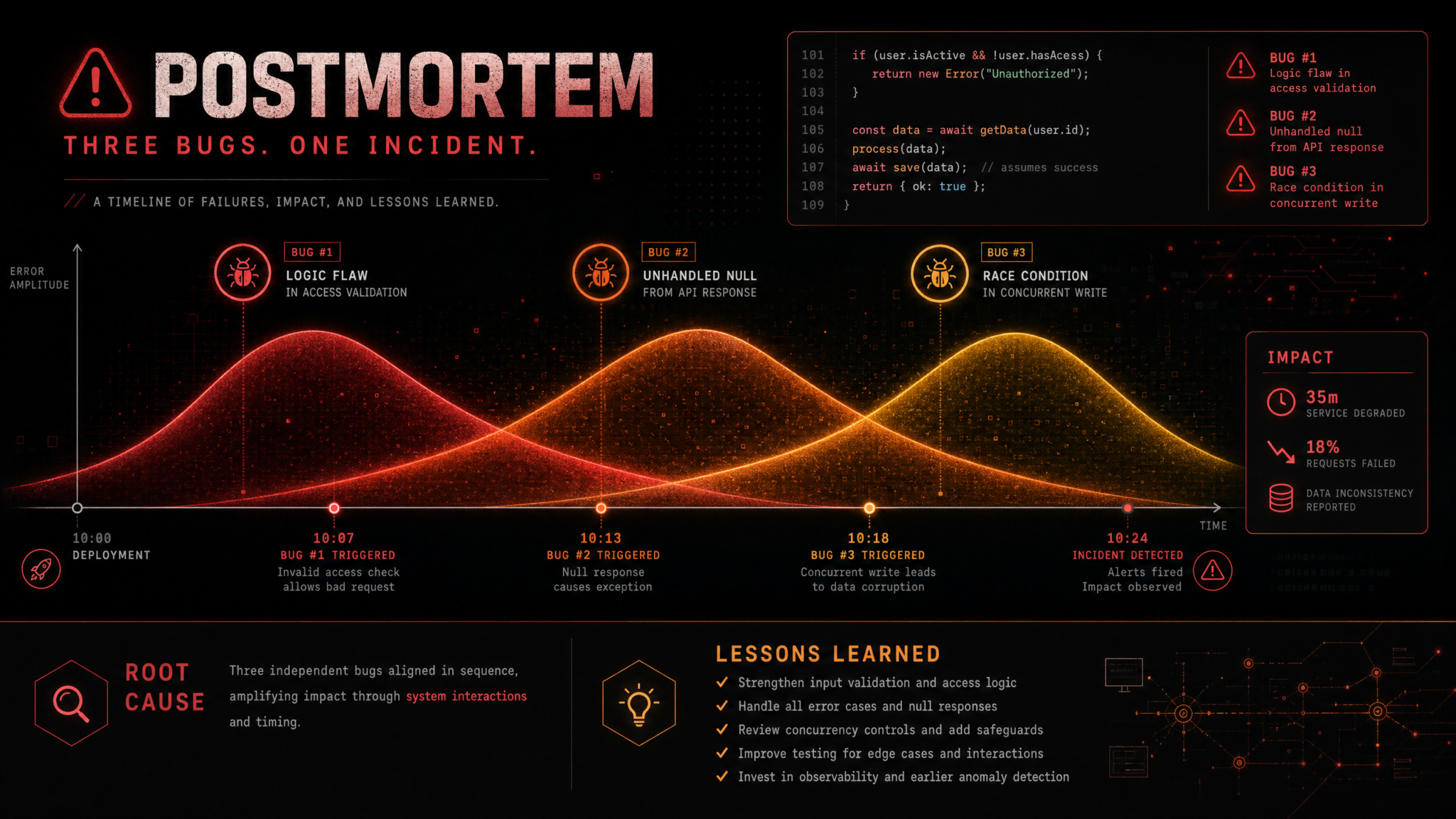

Recently, Anthropic released a transparent and detailed postmortem analyzing a six-week episode of degraded code generation performance in Claude. This incident, driven by three overlapping engineering failures, highlights both the inherent challenges in AI development and the critical importance of rigorous engineering practices. For developers and businesses relying heavily on reliable AI coding assistants, understanding the root causes and resolutions presented by Anthropic carries valuable lessons for the broader AI ecosystem.

The Triple Threat: Analyzing Claude’s Engineering Failures

Anthropic’s investigation identifies three distinct yet interconnected failures that concurrently compounded Claude’s code quality issues. While each failure on its own might have been manageable, their simultaneous occurrence created a complex troubleshooting environment that led to persistent performance degradation and a substantial erosion of developer trust.

Failure Point 1: Regression in Fine-Tuning Data Quality

At the core of Claude’s challenge was a subtle regression in the fine-tuning dataset pipeline. Fine-tuning allows a pre-trained LLM to specialize by training it further on carefully curated, task-specific data — in this case, coding examples. During a routine update, changes in data selection and preprocessing unintentionally introduced lower-quality or less relevant code snippets into the fine-tuning dataset.

Unlike a blatant failure, this regression caused a gradual decline in the relevance and accuracy of generated code. Developers observed increased occurrences of code with logical inconsistencies, incorrect syntax, and inefficient implementations for certain libraries. Because Claude continued to produce code-like outputs, the decline was not immediately apparent, making this bug particularly elusive.

This incident underscores a vital point: AI data pipelines demand continuous and granular validation. Not only must the raw data be verified, but downstream impacts on model behavior require systematic monitoring — ideally through targeted metrics and controlled experiments before new data is deployed.

[IMAGE_PLACEHOLDER_SECTION_1]

Failure Point 2: Overactive Safety Filter Update

Anthropic’s commitment to AI safety and ethical AI deployment is foundational to its product philosophy. However, a critical update to Claude’s safety filters inadvertently swung too far in restricting output. These filters are designed to screen potentially harmful or biased content, but the recent heuristics became overly aggressive in scrutinizing code patterns.

This led to two major issues affecting developers:

- Prompt Rejection: Claude declined to generate code for prompts flagged by the filters, even when the requests were legitimate — frustrating users expecting seamless assistance.

- Incomplete or Altered Code Snippets: The filters sometimes truncated or changed code mid-way to avoid flagged content, producing broken or non-functional outputs such as interrupted function definitions or omitted imports.

This scenario illustrates the challenging balance between safety and utility. In code generation, keywords and patterns innocuous in programming contexts might inadvertently be flagged by language-based safety heuristics. Building context-aware and nuanced safety systems is vital to preserving developer productivity while maintaining responsible AI use.

Failure Point 3: Versioning Mismatch in Deployment Infrastructure

The final key failure stemmed from a version inconsistency in Anthropic’s deployment infrastructure. Deploying AI models at scale involves coordinating multiple components, such as model versions, inference engines, and gateway services. In this case, a new model architecture or inference engine version was introduced without full compatibility testing against legacy modules managing context windows and prompt handling.

This mismatch caused erratic behavior, especially in multi-turn, complex coding tasks. Developers reported Claude “forgetting” earlier conversation parts or producing contextually irrelevant code. The core model remained intact intellectually, but the deployment layer failed to reliably deliver its capabilities.

This emphasizes the crucial role of robust MLOps practices—maintaining strict version control, automated compatibility checks, and incremental rollouts (canary deployments) to detect and prevent such mismatches before impacting all users.

For readers interested in how Anthropic is successfully deploying agentic AI despite such challenges, see our related article: How PwC and Anthropic Are Deploying Agentic AI Across 12 Enterprise Practice Areas.

The Combined Impact: Trust Eroded by Overlapping Failures

The most damaging aspect of these failures was their simultaneous appearance. Individually, each might have been diagnosed and mitigated swiftly. Together, they created a tangled web of symptoms, making root cause analysis challenging and prolonging resolution a drawn-out process.

Developers encountered inconsistent and unpredictable outputs, eroding their confidence. Questions arose: Was an issue with their prompt? A model misunderstanding? Or technical instability? This ambiguity forced users to waste valuable time debugging AI outputs rather than focusing on their core development work.

Organizations that depended on Claude for mission-critical applications faced tangible consequences—delayed project deadlines, increased need for manual oversight, and potential damage to customer trust. In today’s fiercely competitive AI subscription market, where Anthropic tightens usage limits and OpenAI offers enticing free trials for Codex, reliability is paramount. An AI system’s strength alone does not guarantee user retention if it can’t be trusted consistently.

[IMAGE_PLACEHOLDER_SECTION_2]

Lessons Learned: Advancing Engineering Excellence in AI

Anthropic’s forthright postmortem provides a blueprint for AI practitioners and organizations aiming to build more resilient systems:

- Rigorous Data Governance and Validation: Adopt comprehensive validation frameworks that assess not only data quality but also downstream impact on model outputs. Use A/B testing and incremental data pipeline changes to detect regressions early.

- Sophisticated, Context-Aware Safety Filters: Design safety mechanisms with nuanced understanding of the domain. For code generation, filters should distinguish between benign code patterns and genuinely harmful content. AI-driven safety evaluation techniques might provide adaptive balancing between safety and utility.

- Robust MLOps and Version Control: Implement military-grade deployment practices: immutable infrastructure, automated compatibility and integration tests, strict semantic versioning, and canary deployments. Ensuring all components align perfectly is critical to delivering stable AI experiences.

- Advanced Observability and Monitoring: Move beyond uptime or latency monitoring. Track domain-relevant KPIs such as code compilation success, semantic correctness, and efficiency metrics. Deploy anomaly detection systems to surface subtle performance degradations in real-time.

- Transparency Builds Developer Trust: Being open about challenges, causes, and mitigation strategies fosters accountability. Industry-wide adoption of transparent postmortems will accelerate shared learning and raise reliability standards across AI products.

- Holistic Testing Across System Components: AI platforms are complex ecosystems integrating models, data, infrastructure, and safety layers. Changes in one area can ripple unpredictably. Comprehensive testing protocols must reflect this interconnectedness.

To further explore how Anthropic is addressing ethical concerns and reducing agentic misalignment in their models, check out our detailed case study: How Anthropic Reduced Agentic Misalignment in Claude 4.5.

Access 40,000+ AI Prompts for ChatGPT, Claude & Codex — Free!

Subscribe to get instant access to our complete Notion Prompt Library — the largest curated collection of prompts for ChatGPT, Claude, OpenAI Codex, and other leading AI models. Optimized for real-world workflows across coding, research, content creation, and business.

Useful Links

- How PwC and Anthropic Are Deploying Agentic AI Across 12 Enterprise Practice Areas

- How Anthropic Reduced Agentic Misalignment in Claude 4.5

- Anthropic’s Official Claude Code Quality Postmortem