Anthropic vs OpenAI in the Agent Era: Understanding the New AI Subscription Economics

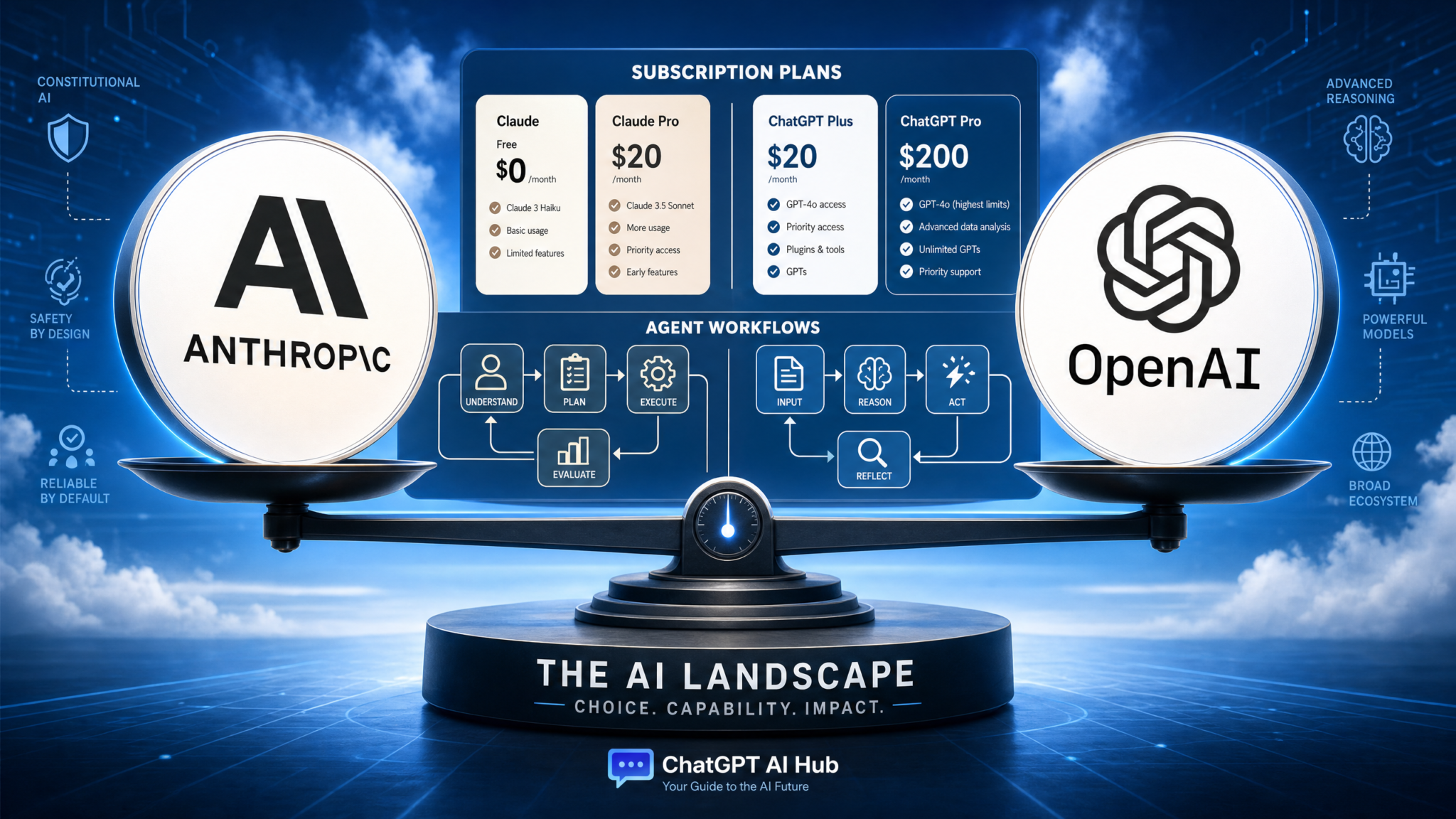

The artificial intelligence landscape is rapidly transforming—not only through groundbreaking technological advancements but also via evolving economic models that govern AI usage. For years, many AI subscription services promoted “unlimited” access or broad usage tiers for paying customers. However, as AI agents become more sophisticated and deeply integrated into critical workflows, computational costs have surged, forcing a fundamental rethink in pricing strategies.

We are entering an era where flat-rate, unlimited AI subscriptions give way to nuanced, metered models. Industry leaders like Anthropic and OpenAI are pioneering new subscription economics that factor in usage intensity, computational overhead, and business value. This article explores the contrasting yet convergent strategies employed by Anthropic and OpenAI—highlighting Anthropic’s credit meters for Claude agent tools and OpenAI’s introduction of free Codex access for enterprise clients. We will unpack why the era of unlimited AI subscriptions is fading and what this shift means for developers, businesses, and the broader AI ecosystem.

The Shift From Unlimited AI Subscriptions to Metered Usage

Many AI platforms initially attracted users with subscription plans that offered generous quotas or unlimited access, especially at premium tiers. This model accelerated adoption, but it has become hard to sustain. Models have grown substantially in size and complexity, which raises the compute needed to train and serve them. The resulting pressure is often called the “compute crunch”: high-performance GPUs and cloud infrastructure are both scarce and expensive.

Similar to how cloud computing evolved from fixed hosting fees to granular pay-as-you-go billing based on compute, storage, and bandwidth, AI subscription pricing is trending toward usage-based metering. Such metering is crucial as AI shifts from straightforward prompt-response interactions to complex, multi-step agentic workflows—capable of autonomous decision-making and iterative reasoning—which consume significantly more computational resources.
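
To make the difference concrete, the sketch below counts billable tokens for a single prompt-response exchange and for a multi-step agent run. It assumes, as a simplification, that every agent step resends the full accumulated context and that no prompt caching applies. The function names and token counts are illustrative and are not taken from any provider's billing documentation.

```python
def single_turn_tokens(prompt_tokens: int, reply_tokens: int) -> int:
    """Tokens billed for one prompt and one reply."""
    return prompt_tokens + reply_tokens


def agent_run_tokens(prompt_tokens: int, reply_tokens: int, steps: int) -> int:
    """Tokens billed for an agent loop that resends its growing context.

    Assumption: each step is billed for the whole context so far plus the
    new output, and that output is appended to the context for the next step.
    """
    total = 0
    context = prompt_tokens
    for _ in range(steps):
        total += context + reply_tokens  # input context + generated output
        context += reply_tokens          # output becomes part of later inputs
    return total


if __name__ == "__main__":
    one_shot = single_turn_tokens(1_000, 500)
    ten_steps = agent_run_tokens(1_000, 500, steps=10)
    print(one_shot, ten_steps, ten_steps / one_shot)
```

Under these assumptions a ten-step run consumes 37,500 tokens against 1,500 for a single exchange, a 25-fold difference, because input tokens grow with every step rather than staying constant.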

Anthropic’s Introduction of Credit Meters for Claude Agents

Anthropic, renowned for its focus on AI safety and research, recently implemented credit meters for its Claude agent tools. This change reflects a departure from the previous flexible or unlimited usage plans and aligns costs more closely with actual computational consumption.

For organizations leveraging Claude’s advanced agentic capabilities—such as extended context handling, autonomous task execution, and multi-turn reasoning—this new pricing structure imposes a direct connection between usage volume and cost. Unlike traditional single-turn API calls, Claude agents may initiate numerous sub-tasks, navigate complex reasoning chains, and engage in sustained dialogues, all demanding extensive GPU processing time.

The adoption of credit meters by Anthropic aims to:

- Promote Sustainable Growth: Ensuring revenue aligns proportionately with the computational resources consumed.

- Encourage Operational Efficiency: Motivating developers to optimize prompts, streamline workflows, and reduce unnecessary credit expenditure.

- Manage Resource Demand: Allowing Anthropic to intelligently throttle or scale user consumption in alignment with infrastructure capacity and pricing tiers.

This adjustment compels businesses to rethink their AI operational costs carefully, highlighting the importance of prompt engineering, agent interaction efficiency, and task complexity. The era of extremely liberal, cost-agnostic AI agent usage is drawing to a close.
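
A credit meter can be pictured as a balance that each agent task draws down until it is exhausted. The sketch below is a hypothetical illustration of that idea only: the class name, the credit amounts, and the refusal behavior are assumptions, and it does not represent Anthropic's actual metering system or API.

```python
from dataclasses import dataclass, field


class CreditsExhausted(Exception):
    """Raised when a task would cost more credits than remain."""


@dataclass
class CreditMeter:
    """Hypothetical credit balance with a simple usage ledger."""
    balance: int
    ledger: list = field(default_factory=list)

    def charge(self, task: str, credits: int) -> int:
        """Deduct credits for a task and return the remaining balance."""
        if credits < 0:
            raise ValueError("credits must be non-negative")
        if credits > self.balance:
            # Refuse the task rather than letting the balance go negative.
            raise CreditsExhausted(
                f"{task!r} needs {credits} credits, {self.balance} left"
            )
        self.balance -= credits
        self.ledger.append((task, credits))
        return self.balance


if __name__ == "__main__":
    meter = CreditMeter(balance=100)
    meter.charge("plan", 30)
    meter.charge("execute", 60)
    try:
        meter.charge("review", 20)
    except CreditsExhausted as exc:
        print("blocked:", exc)
    print(meter.balance, meter.ledger)
```

Recording each charge in a ledger is a design choice that supports the later points about monitoring and cost allocation: the same records that enforce the limit also show where the credits went.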

For practical insights into corporate deployments of Claude agents, including collaborations with partners like PwC and SAP, explore our in-depth case study: How PwC and Anthropic Are Deploying Agentic AI Across 12 Enterprise Practice Areas.

OpenAI’s Strategic Move: Offering Free Codex Access to Business Customers

In contrast to Anthropic’s move toward metered billing, OpenAI has launched a promotion that gives business customers two months of free access to Codex. Announced soon after Codex was integrated into the ChatGPT mobile app, the offer aims to accelerate enterprise adoption of AI-assisted coding tools.

Codex, which shares its name with the earlier OpenAI model that originally powered GitHub Copilot, generates and interprets code across multiple languages and frameworks. By introducing a complimentary trial period, OpenAI seeks to:

- Drive Market Penetration: Lowering barriers for businesses to embed AI coding assistance into development workflows without immediate subscription costs.

- Gather Enterprise Feedback: Collecting critical usage data and user insights to refine Codex and related developer-focused offerings.

- Gain Competitive Advantage: Challenging rival AI development tools by offering a compelling, cost-free entry point.

- Foster Ecosystem Lock-in: Encouraging businesses to experience enhanced productivity, leading to longer-term paid subscriptions and expanded OpenAI service usage.

This approach aligns with OpenAI’s broader organizational strategy to deepen AI adoption in enterprise environments, recently underscored by the launch of a dedicated business-focused AI subsidiary. Beyond merely providing an advanced tool, OpenAI is inviting businesses to explore a transformative coding paradigm empowered by AI.

For developers and security teams exploring AI’s impact on code quality and security, we recommend our detailed guide: Complete Guide to Claude Security: AI-Powered Vulnerability Scanning for Enterprise Codebases, which explores Anthropic’s AI-driven vulnerability detection capabilities and its implications for maintaining robust, secure codebases.

Why Unlimited AI Subscriptions Are Becoming Obsolete

The decline of unlimited AI subscriptions stems from intertwined technological and economic pressures, including:

- Rising Computational Expenses: Running and training today’s large language models (LLMs) and agent systems demands vast quantities of costly GPU resources.

- Limited Infrastructure Availability: The global shortage of high-end GPUs and cloud compute capacity—referred to as the “compute crunch”—constrains providers’ ability to scale unlimited plans sustainably.

- Exploding User Base: With platforms like ChatGPT accumulating over 800 million weekly users, even marginal per-user compute costs aggregate to significant financial outlay.

- Complexity of AI Agents: Unlike single-turn requests, agentic workflows involve resource-intensive multi-step and sometimes multi-agent orchestration, which calls for more granular cost accounting.

- Shift to Value-Aligned Pricing: Providers are moving toward pricing that aligns with the economic value created, making flat-fee unlimited subscriptions financially untenable.

These evolving subscription economics mirror traditional cloud service billing models, ensuring that users pay proportionally for compute, storage, and networking consumed. This approach drives industry-wide sustainability while encouraging consumers to optimize usage.
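
The point about scale is simple arithmetic, and a short calculation shows how quickly small numbers add up. The user count below is the 800 million weekly figure cited above; the per-user compute costs are purely hypothetical values chosen for illustration, not reported data.

```python
WEEKLY_USERS = 800_000_000  # weekly user figure cited in this article


def weekly_compute_cost_usd(users: int, cents_per_user: int) -> float:
    """Aggregate weekly cost, given a hypothetical per-user cost in cents."""
    return users * cents_per_user / 100


if __name__ == "__main__":
    for cents in (1, 5):  # hypothetical marginal cost per user per week
        weekly = weekly_compute_cost_usd(WEEKLY_USERS, cents)
        print(f"{cents} cent(s)/user: ${weekly:,.0f} per week, "
              f"${weekly * 52:,.0f} per year")
```

Even at an assumed one cent per user per week, the total comes to $8 million weekly, or $416 million over 52 weeks, which illustrates why providers scrutinize flat-rate plans as usage per user rises.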

Key Implications for Developers and Businesses

For AI Developers:

- Efficiency and Optimization: Mastering prompt engineering and agent workflow design becomes essential to reduce token usage and computational overhead.

- Cost-Conscious Development: Evaluating trade-offs between model size, API selection, and architecture impacts both development budgets and user experience.

- Monitoring and Analytics: Leveraging real-time dashboards and analytics is critical to track credit consumption and identify optimization opportunities.

- Hybrid Model Strategies: Combining smaller fine-tuned models for routine tasks with larger models for complex reasoning helps manage costs effectively.
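
The hybrid strategy in the last point can be sketched as a small routing function that sends routine tasks to a cheaper model and reserves the larger one for complex reasoning. Everything in this example is an assumption made for illustration: the tier names, the credit rates, the token threshold, and the `needs_reasoning` flag are hypothetical and do not correspond to any vendor's models or prices.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTier:
    name: str
    credits_per_1k_tokens: int  # hypothetical rate


SMALL = ModelTier("small-tuned-model", 1)
LARGE = ModelTier("large-reasoning-model", 10)


def pick_tier(tokens: int, needs_reasoning: bool, threshold: int = 2_000) -> ModelTier:
    """Route long or reasoning-heavy tasks to the large tier, the rest to small."""
    if needs_reasoning or tokens > threshold:
        return LARGE
    return SMALL


def estimate_credits(tasks, route=pick_tier) -> float:
    """Sum estimated credits for (tokens, needs_reasoning) task tuples."""
    total = 0.0
    for tokens, needs_reasoning in tasks:
        tier = route(tokens, needs_reasoning)
        total += tier.credits_per_1k_tokens * tokens / 1000
    return total


if __name__ == "__main__":
    tasks = [(1_000, False), (1_000, False), (3_000, True)]
    routed = estimate_credits(tasks)
    all_large = estimate_credits(tasks, route=lambda tokens, flag: LARGE)
    print(routed, all_large)
```

With these made-up rates, routing costs 32 credits against 50 for sending everything to the large tier. In practice the hard part is deciding which tasks need the larger model, and a misrouted task may cost more in retries than it saves.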

For Businesses:

- Advanced Budgeting: Moving beyond flat subscription fees to dynamic usage forecasts based on transactional AI consumption.

- Clear ROI Measurement: Justifying AI spend through demonstrable business outcomes linked to metered usage.

- Thoughtful Vendor Selection: Prioritizing providers offering transparent pricing, scalability, and value-added features—such as OpenAI’s free Codex trial—when choosing AI partners.

- Internal Cost Allocation: Implementing chargeback mechanisms to distribute AI expenses across departments or projects, promoting accountability.

- Strategic Partnerships: Building robust collaborations with AI providers, similar to PwC and SAP’s engagement with Anthropic, to negotiate tailored service agreements and optimize AI investment.
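
Internal cost allocation, mentioned in the list above, often comes down to splitting one invoice in proportion to metered usage. The sketch below shows one possible approach, assuming usage is logged as (department, credits) records; the record format, department names, and amounts are hypothetical.

```python
from collections import defaultdict


def allocate_chargeback(usage, invoice_usd: float) -> dict:
    """Split an invoice across departments in proportion to credits used.

    usage: iterable of (department, credits) records.
    Returns a dict mapping department to its share in USD, rounded to cents.
    """
    credits_by_dept = defaultdict(int)
    for dept, credits in usage:
        credits_by_dept[dept] += credits

    total = sum(credits_by_dept.values())
    if total == 0:
        return {}  # nothing was used, so there is nothing to allocate

    return {
        dept: round(invoice_usd * credits / total, 2)
        for dept, credits in credits_by_dept.items()
    }


if __name__ == "__main__":
    usage = [("engineering", 600), ("support", 300), ("engineering", 100)]
    print(allocate_chargeback(usage, invoice_usd=1_000.0))
```

Because each share is rounded independently, the shares may differ from the invoice total by a cent or two in some cases; a production system would need a rule for assigning that remainder.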

Useful Links

- How PwC and Anthropic Are Deploying Agentic AI Across 12 Enterprise Practice Areas

- Complete Guide to Claude Security: AI-Powered Vulnerability Scanning for Enterprise Codebases