GPT-5.3 Codex: Revolutionizing Software Development (Part 1 of 7)

⚡ The Brief

- What it is: GPT-5.3 Codex is an advanced AI model designed to assist in software development, acting as a coding co-pilot, architect, and QA engineer.

- Who it’s for: This tool is tailored for professional developers looking to enhance their coding workflows with AI-driven insights and automation.

- Key takeaways: GPT-5.3 Codex offers multi-modal inputs, advanced reasoning, and agentic capabilities, streamlining processes from prototyping to production.

- Pricing/Cost: Access to GPT-5.3 Codex may involve subscription fees or usage-based pricing, depending on the platform and integration level.

- Bottom line: GPT-5.3 Codex revolutionizes coding by integrating AI into development environments, boosting efficiency and innovation.


ChatGPT Coding Masterclass Series

ChatGPT for Coding: The Complete Beginner’s Guide (Part 1 of 7)

Welcome to the ultimate deep dive into leveraging ChatGPT—powered by the cutting-edge GPT-5.3-Codex model—for coding mastery in 2026. This masterclass is designed for professional developers eager to harness AI agents, modern SDKs, and cloud-native tooling to supercharge software development workflows.

Table of Contents

- Introduction: Why ChatGPT for Coding?

- Deep-Dive Theoretical Overview

- Step-by-Step Guide: First Steps with GPT-5.3-Codex

- OpenAI Agents SDK & Agent Harness Patterns

- Codex CLI Usage: Terminal Commands & Flags

- IDE Integrations: Setup in Cursor, Windsurf, VS Code

- Advanced Prompt Templates for Coding

- Concrete Code Examples: Python, Rust, TypeScript

- Pro Tips & Edge Cases

Introduction: Why ChatGPT for Coding?


The arrival of GPT-5.3-Codex has revolutionized AI-assisted programming by combining advanced reasoning, multi-modal inputs, and agentic capabilities. Unlike earlier models, GPT-5.3-Codex functions as a coding co-pilot, architect, and QA engineer rolled into one—accelerating everything from prototyping to production-grade code.

In 2026, the ecosystem surrounding GPT-5.3-Codex includes:

- Codex CLI: A Rust-based command-line interface for rapid experimentation and scripting.

- OpenAI Agents SDK: A comprehensive SDK facilitating multi-agent workflows with planning, generation, and evaluation.

- Cloud-native Sandboxes: Secure environments for executing and testing AI-generated code.

- IDE Integrations: Deep plugins for VS Code, Cursor, Windsurf, and JetBrains IDEs.

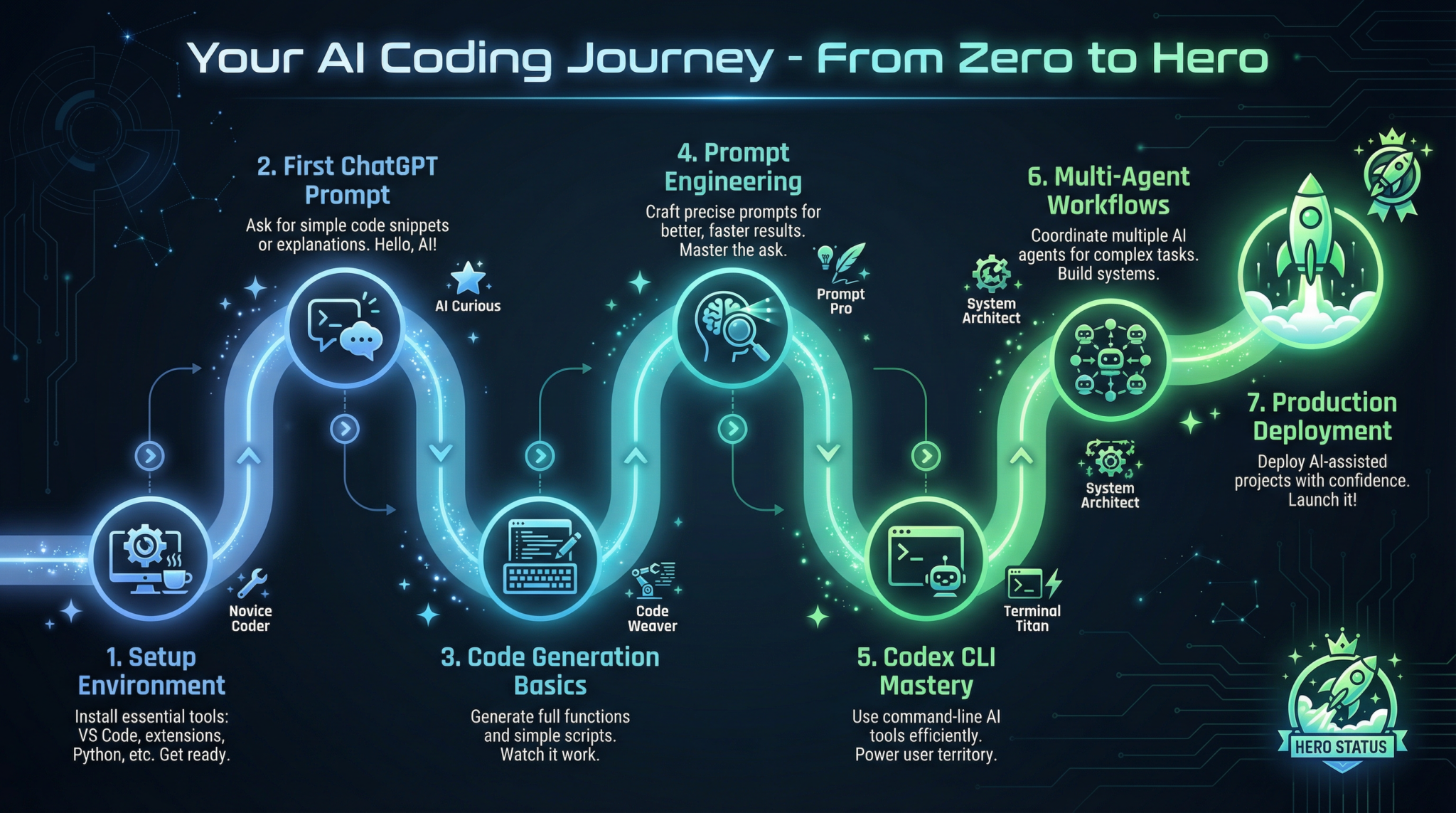

This guide will take you from zero to proficient, unpacking the model’s architecture, tooling, and best practices so you can build autonomous coding agents and integrate AI seamlessly into your workflows.

Deep-Dive Theoretical Overview

Understanding why and how GPT-5.3-Codex works under the hood is crucial for maximizing its potential. Below we dissect the core concepts and architectural principles.

The GPT-5.3-Codex Architecture

GPT-5.3-Codex is a transformer-based large language model with 175+ billion parameters specialized for source code. Its innovations include:

- Agentic Capabilities: It can plan, reason, and execute multi-step coding tasks autonomously.

- Multi-Modal Inputs: Supports text, images (UML diagrams, screenshots), and code snippets simultaneously.

- Long Context Windows: Handles up to 128k tokens, enabling complex project-wide reasoning.

- Entropy Management: Dynamically adjusts randomness during generation to balance creativity and precision.

- Context Engineering: Uses injected context files (e.g., AGENTS.md) to progressively disclose information to agents.

Agent Harness: The Operating System for AI Agents

Think of the Agent Harness as the OS that manages the AI “CPU” (the model) and “RAM” (context). It provides:

- Constraints: Limits on memory, time, and API calls to keep agents efficient.

- Verification: Continuous checking of agent outputs against specs or tests.

- Correction: Triggering retrials or human intervention on failure.

- Context Injection: Feeding agents with layered knowledge maps (AGENTS.md) to prevent hallucinations.
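The constraint, verification, and correction duties listed above can be pictured as a bounded retry loop. The following is a minimal sketch, not the harness's real implementation: `run_with_verification`, `fake_generate`, and `fake_verify` are hypothetical names, and the stand-in generator replaces an actual model call so the example runs offline.

```python
from typing import Callable, Optional


def run_with_verification(
    generate: Callable[[str, Optional[str]], str],
    verify: Callable[[str], Optional[str]],
    task: str,
    max_attempts: int = 3,
) -> str:
    """Call generate until verify passes or the attempt budget is spent.

    verify returns None on success, or an error message that is fed back
    into the next generate call as a correction hint.
    """
    feedback: Optional[str] = None
    for _ in range(max_attempts):      # Constraint: bounded number of model calls
        output = generate(task, feedback)
        feedback = verify(output)      # Verification: check against a spec or test
        if feedback is None:
            return output
    # Correction budget exhausted: escalate to a human instead of looping forever.
    raise RuntimeError(f"gave up after {max_attempts} attempts: {feedback}")


# Stand-in generator and verifier (hypothetical) so the sketch runs without a model.
def fake_generate(task: str, feedback: Optional[str]) -> str:
    # The first attempt is deliberately wrong; the retry uses the feedback.
    return "def add(a, b): return a + b" if feedback else "def add(a, b): return a - b"


def fake_verify(code: str) -> Optional[str]:
    namespace: dict = {}
    exec(code, namespace)
    return None if namespace["add"](2, 3) == 5 else "add(2, 3) should be 5"


print(run_with_verification(fake_generate, fake_verify, "write add"))
```

Passing the verifier's message back into the next attempt is one possible design; a harness could equally discard the feedback and simply resample.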

The harness orchestrates the classic Planner, Generator, Evaluator triad:

| Component | Role |
|---|---|
| Planner | Expands high-level specs into detailed tasks |
| Generator | Produces code or artifacts via Sprint Contracts |
| Evaluator | Runs automated QA (e.g., Playwright MCP) |
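To make the data flow between the three roles concrete, here is a small sketch in which each role is an ordinary function. The names `SprintResult` and `run_pipeline` are assumptions made for illustration, and the lambdas stand in for model-backed agents.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class SprintResult:
    task: str
    artifact: str
    passed: bool


def run_pipeline(
    spec: str,
    planner: Callable[[str], List[str]],
    generator: Callable[[str], str],
    evaluator: Callable[[str], bool],
) -> List[SprintResult]:
    """Plan once, then generate and evaluate each task in order."""
    results = []
    for task in planner(spec):
        artifact = generator(task)
        results.append(SprintResult(task, artifact, evaluator(artifact)))
    return results


# Stub roles (hypothetical) standing in for model-backed agents.
results = run_pipeline(
    "user login",
    planner=lambda spec: [f"{spec}: endpoint", f"{spec}: tests"],
    generator=lambda task: f"# code for {task}",
    evaluator=lambda artifact: artifact.startswith("# code"),
)
for r in results:
    print(r.task, "->", "pass" if r.passed else "fail")
```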

Progressive Disclosure Pattern

Agents start with minimal context to avoid information overload. They receive pointers to deeper documentation or design files, enabling:

- Efficient Memory Use: Only relevant info loaded on demand.

- Modular Knowledge: Easy updates and versioning of specs.

- Robustness: Agents cross-verify sources dynamically.
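One way to picture progressive disclosure is an index that exposes one-line summaries up front and loads a full document only when an agent asks for it. This is a sketch under stated assumptions: `ContextIndex` is a hypothetical class, `db-schema.md` is an invented file name, and the lambda loaders stand in for reading real files.

```python
from typing import Callable, Dict, List


class ContextIndex:
    """Holds short pointers up front and loads full documents only on request."""

    def __init__(self, loaders: Dict[str, Callable[[], str]], summaries: Dict[str, str]):
        self._loaders = loaders
        self._summaries = summaries
        self._cache: Dict[str, str] = {}
        self.loaded: List[str] = []  # record of what was actually pulled in

    def overview(self) -> str:
        """The minimal context an agent starts with: names and one-line summaries."""
        return "\n".join(
            f"- {name}: {text}" for name, text in sorted(self._summaries.items())
        )

    def load(self, name: str) -> str:
        """Load a document the first time it is requested, then serve it from cache."""
        if name not in self._cache:
            self._cache[name] = self._loaders[name]()
            self.loaded.append(name)
        return self._cache[name]


index = ContextIndex(
    loaders={
        "auth-spec.md": lambda: "OAuth2 flow details ...",
        "db-schema.md": lambda: "Tables: users, sessions ...",
    },
    summaries={
        "auth-spec.md": "authentication requirements",
        "db-schema.md": "database layout",
    },
)
print(index.overview())
index.load("auth-spec.md")
print(index.loaded)
```

Only the overview is paid for on every call; the cost of a deep document is incurred once, and only if the agent follows the pointer.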

Step-by-Step Guide: First Steps with GPT-5.3-Codex

This section walks through setting up your environment and running your first autonomous coding agent using the Codex CLI and OpenAI Agents SDK.

1. Prerequisites

- Rust toolchain installed (recommended: rustup)

- Node.js v20+ for SDK

- OpenAI API key with GPT-5.3-Codex access

- Docker for sandbox environments (optional but recommended)

2. Installing the Codex CLI

    # Install via Cargo
    cargo install codex-cli

    # Verify installation
    codex --version
    # Expected output: codex 1.2.0 (Rust-based CLI for GPT-5.3-Codex)

3. Setting Up the OpenAI Agents SDK

    # Clone SDK repo (example)
    git clone https://github.com/openai/openai-agents-sdk.git
    cd openai-agents-sdk

    # Install dependencies
    npm install

    # Build SDK
    npm run build

4. Initialize a New Agent Project

    # Using Codex CLI to scaffold
    codex init my-agent-project
    cd my-agent-project

This generates a standard project structure with:

- agents/ directory for agent definitions

- AGENTS.md file for context maps

- sandbox/ with Docker configs

- tests/ directory with harnesses

5. Writing Your First Planner Agent

Create agents/planner.ts:

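    import { PlannerAgent } from 'openai-agents-sdk';

    // Planner configured with the project context map.
    const planner = new PlannerAgent({
      model: 'gpt-5.3-codex',
      maxTokens: 8192,
      contextFiles: ['AGENTS.md'],
    });

    export async function planFeature(spec: string) {
      const plan = await planner.plan(spec);
      console.log('Generated plan:', plan);
      return plan;
    }

    // Entry point: take the feature spec from the first command-line argument.
    const spec = process.argv[2];
    if (spec) {
      planFeature(spec).catch(console.error);
    }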

6. Running the Agent

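Node.js v20 cannot execute TypeScript files directly, so run the script through a TypeScript runner such as tsx:

    npx tsx agents/planner.ts "Implement feature: user authentication with OAuth2"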

Expected output:

    Generated plan:
    1. Setup OAuth2 client credentials
    2. Create login endpoint
    3. Secure routes with token verification
    4. Write unit and integration tests

7. Next Steps: Generator and Evaluator Agents

- Implement generator.ts to produce code per plan.

- Implement evaluator.ts to run Playwright MCP tests automatically.

OpenAI Agents SDK & Agent Harness Patterns

This section explores how to leverage the OpenAI Agents SDK and Agent Harness to build complex, maintainable AI coding workflows.

Agent Harness Architecture

The harness acts as an orchestrator wrapping your model calls with:

- Context Injection: Load layered docs and code samples dynamically.

- Entropy Controls: Adjust temperature and top-p for different phases.

- Verification Loops: Auto-retry failed generations.

- Logging & Auditing: Record all agent interactions for debugging.
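Two of these duties, entropy controls and logging, can be sketched as a thin wrapper around whatever function actually calls the model. Everything here is an assumption made for illustration: the `AuditedHarness` class, the phase names, and the specific temperature and top_p values are not documented defaults, and `echo_model` is a stub that lets the example run offline.

```python
import json
import time
from typing import Any, Callable, Dict, List

# Hypothetical per-phase sampling settings; the values are illustrative only.
PHASE_SAMPLING: Dict[str, Dict[str, float]] = {
    "plan": {"temperature": 0.7, "top_p": 0.95},
    "generate": {"temperature": 0.2, "top_p": 0.9},
    "evaluate": {"temperature": 0.0, "top_p": 1.0},
}


class AuditedHarness:
    """Wraps a model-call function, choosing sampling per phase and logging each call."""

    def __init__(self, call_model: Callable[..., str]):
        self._call_model = call_model
        self.audit_log: List[Dict[str, Any]] = []

    def call(self, phase: str, prompt: str) -> str:
        sampling = PHASE_SAMPLING[phase]
        started = time.time()
        output = self._call_model(prompt, **sampling)
        # Record everything needed to replay or debug the interaction later.
        self.audit_log.append({
            "phase": phase,
            "prompt": prompt,
            "sampling": sampling,
            "output": output,
            "seconds": round(time.time() - started, 3),
        })
        return output


# Stub model call so the sketch runs offline.
def echo_model(prompt: str, temperature: float, top_p: float) -> str:
    return f"[t={temperature}] {prompt}"


harness = AuditedHarness(echo_model)
harness.call("plan", "outline the auth module")
harness.call("generate", "write the login handler")
print(json.dumps(harness.audit_log, indent=2))
```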

Using the SDK: Core Concepts

| Concept | Description |
|---|---|
| Agent | Encapsulates a GPT-5.3-Codex instance with configuration |
| Context | Injected files and environment variables provided to the agent |
| Sprint Contract | A unit of work defining inputs, outputs, and constraints |
| Harness | Infrastructure managing agent lifecycle and API interactions |

Example: Creating a Sprint Contract

    import { SprintContract } from 'openai-agents-sdk';

    const sprint = new SprintContract({
      name: 'generate-auth-module',
      inputSchema: {
        type: 'object',
        properties: {
          featureSpec: { type: 'string' },
        },
        required: ['featureSpec'],
      },
      outputSchema: {
        type: 'object',
        properties: {
          code: { type: 'string' },
          tests: { type: 'string' },
        },
        required: ['code', 'tests'],
      },
      maxTokens: 12000,
      temperature: 0.2,
    });

    export default sprint;
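The input and output schemas in the contract above are only useful if something checks payloads against them. The sketch below shows the idea with a hand-rolled validator; `validate` and `TYPE_MAP` are hypothetical helpers written for this example, and they handle only the flat `properties` and `required` keys the contract uses. A complete JSON Schema validator covers far more.

```python
from typing import Any, Dict, List

# Maps the schema type names used in the contract to Python types.
TYPE_MAP = {"string": str, "object": dict}


def validate(payload: Dict[str, Any], schema: Dict[str, Any]) -> List[str]:
    """Return a list of problems; an empty list means the payload is acceptable."""
    problems = []
    for name in schema.get("required", []):
        if name not in payload:
            problems.append(f"missing required field: {name}")
    for name, rule in schema.get("properties", {}).items():
        if name in payload and not isinstance(payload[name], TYPE_MAP[rule["type"]]):
            problems.append(f"{name} should be of type {rule['type']}")
    return problems


# Mirrors the outputSchema of the generate-auth-module contract.
output_schema = {
    "type": "object",
    "properties": {"code": {"type": "string"}, "tests": {"type": "string"}},
    "required": ["code", "tests"],
}

print(validate({"code": "fn main() {}"}, output_schema))
print(validate({"code": "fn main() {}", "tests": "#[test] fn ok() {}"}, output_schema))
```

Returning a list of problems rather than raising on the first one lets a harness feed the full set back to the agent in a single retry.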

Integrating the Sprint with an Agent

    import { GeneratorAgent } from 'openai-agents-sdk';
    import sprint from './sprints/generate-auth-module';

    const generator = new GeneratorAgent({
      model: 'gpt-5.3-codex',
      sprint,
      contextFiles: ['AGENTS.md', 'auth-spec.md'],
    });

    export async function generateAuthCode(spec: string) {
      const result = await generator.generate({ featureSpec: spec });
      return result;
    }

Testing with the Harness

The Harness supports running integration tests by injecting mocks and verifying outputs automatically.

    import { Harness } from 'openai-agents-sdk';

    // `generator` is the GeneratorAgent instance created in the previous snippet.
    const harness = new Harness({
      agent: generator,
      testCasesDir: 'tests/auth',
      onFailure: (err) => console.error('Test failed:', err),
    });

    harness.runAllTests();

Codex CLI Usage: Terminal Commands & Flags

The Codex CLI provides fast, scriptable access to GPT-5.3-Codex capabilities from your terminal.

Installation Recap

    cargo install codex-cli

Common Commands

| Command | Description | Example Usage |
|---|---|---|
| codex run | Run a single prompt | codex run "Generate a REST API in Rust" |
| codex init | Scaffold a new agent project | codex init my-project |
| codex sprint | Run a sprint contract | codex sprint ./sprints/generate-auth-module.json |
| codex test | Run harness tests | codex test --all |
| codex context | Inject context files | codex context AGENTS.md |

Flags

| Flag | Description | Default |
|---|---|---|
| --model | Specify model name | gpt-5.3-codex |
| --temperature | Sampling temperature | 0.2 |
| --max-tokens | Max tokens for response | 8192 |
| --context | Context file(s) to inject | None |
| --output | Output path for generated code | stdout |

Example: Running a Sprint with Context Injection

    codex sprint ./sprints/generate-auth-module.json --context AGENTS.md,auth-spec.md --temperature 0.15 --output ./output/auth_module.rs

Expected output:

    [SUCCESS] Sprint 'generate-auth-module' completed in 12.4s
    Generated code saved to ./output/auth_module.rs
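For scripting, the same invocation can be assembled programmatically instead of typed by hand. The sketch below only builds the argument list and prints it; it uses nothing beyond the subcommand and flags listed in the tables above, and `sprint_command` is a hypothetical helper. Whether an installed CLI accepts these flags is best confirmed against its own help output.

```python
import shlex
from typing import List, Optional, Sequence


def sprint_command(
    contract_path: str,
    context: Sequence[str] = (),
    temperature: Optional[float] = None,
    output: Optional[str] = None,
) -> List[str]:
    """Assemble the argument list for the sprint invocation shown above."""
    argv = ["codex", "sprint", contract_path]
    if context:
        argv += ["--context", ",".join(context)]  # comma-separated, as in the example
    if temperature is not None:
        argv += ["--temperature", str(temperature)]
    if output:
        argv += ["--output", output]
    return argv


argv = sprint_command(
    "./sprints/generate-auth-module.json",
    context=["AGENTS.md", "auth-spec.md"],
    temperature=0.15,
    output="./output/auth_module.rs",
)
print(shlex.join(argv))
```

Keeping the arguments as a list, rather than one formatted string, means the result can be handed to `subprocess.run(argv, check=True)` without any shell quoting concerns.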

IDE Integrations: Setup in Cursor, Windsurf, VS Code

Achieving a seamless AI coding experience requires tight IDE integration. Below are detailed steps for enabling GPT-5.3-Codex plugins in popular 2026 IDEs.

VS Code GPT-5.3 Plugin Setup

- Install the Plugin

  - Open VS Code Extensions Marketplace.

  - Search for “GPT-5.3 Codex AI Assistant.”

  - Click Install.

- Configure API Access

  - Open Command Palette (Ctrl+Shift+P).

  - Run GPT5.3: Set API Key.

  - Paste your OpenAI API key.

- Enable Context Injection

  - Place AGENTS.md and relevant context files in your workspace root.

  - In Settings, enable GPT5.3.contextFiles and add AGENTS.md.

- Using the Plugin

  - Highlight code or write a prompt in the editor.

  - Press Alt+Enter to invoke the agent.

  - Select from Planner, Generator, or Evaluator agents.

- Sprint Contracts

  - Use the command GPT5.3: Run Sprint to execute predefined sprint contracts.

Cursor IDE Integration

Cursor is built around multi-agent workflows:

- Upon project open, Cursor scans for AGENTS.md.

- It dynamically spins up Planner, Generator, and Evaluator agents in parallel.

- The side panel shows sprint progress and test coverage.

- Use the /agent chat window to interact directly with coding agents.

Windsurf Cloud IDE Integration

- Windsurf provides cloud-native sandboxes.

- Agents run server-side with full harness orchestration.

- Code completions, refactors, and QA results sync live.

- Set up via Windsurf’s AI tab: add API keys, enable multi-agent workflows, and configure sprint contracts per project.

Advanced Prompt Templates for Coding

Below are five advanced prompt templates optimized for GPT-5.3-Codex with placeholders for dynamic use.

1. Feature Planning Template

You are a software architect. Given the feature specification below, generate a detailed step-by-step implementation plan with priorities.

Feature Spec:

{{feature_spec}}

Constraints:

- Use microservices architecture

- Prioritize security and scalability

- Provide estimated time for each step

Output format:

1. Step description (priority: high/medium/low, est. time)

2. Code Generator Template

Write production-quality {{language}} code implementing the following feature:

Specification:

{{feature_spec}}

Requirements:

- Follow best practices for {{language}}

- Include inline comments and type annotations

- Provide unit tests in the same file or a test suite

Return only the code block.

3. Bug Reproduction Template

You are a QA engineer. Given this bug report, write a minimal reproducible test case in {{language}} that demonstrates the bug.

Bug report:

{{bug_report}}

Test case requirements:

- Use standard testing framework {{test_framework}}

- Include setup and teardown if necessary

- Make test self-contained and executable

4. Code Review Template

Perform a thorough code review of the following {{language}} code snippet:

Code:

{{code_snippet}}

Focus on:

- Correctness

- Security vulnerabilities

- Performance optimizations

- Style and readability

Provide a detailed review report with line references.

5. Refactor Suggestion Template

You are a senior engineer. Suggest an improved refactoring plan for this code module to enhance readability and maintainability.

Code:

{{code_snippet}}

Constraints:

- Preserve existing behavior

- Improve modularity and test coverage

- Suggest concrete code changes in {{language}}

Fill in the placeholders (e.g., {{language}}, {{feature_spec}}) dynamically in your agent harness for reusable prompt engineering.
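Filling the double-brace placeholders is a few lines of code in any language. The sketch below is one possible approach, assuming a hypothetical `render` helper; it fails loudly when a value is missing so that a half-filled prompt never reaches the model. The bug report text is invented for the example.

```python
import re
from typing import Mapping

# Matches {{name}} with optional spaces inside the braces.
PLACEHOLDER = re.compile(r"\{\{\s*(\w+)\s*\}\}")


def render(template: str, values: Mapping[str, str]) -> str:
    """Substitute {{name}} placeholders, raising if any value is missing."""
    missing = sorted({name for name in PLACEHOLDER.findall(template) if name not in values})
    if missing:
        raise KeyError(f"missing template values: {', '.join(missing)}")
    # A function replacement avoids backslash-escape surprises in the values.
    return PLACEHOLDER.sub(lambda match: values[match.group(1)], template)


# Abbreviated form of the Bug Reproduction Template above.
BUG_TEMPLATE = (
    "You are a QA engineer. Given this bug report, write a minimal reproducible "
    "test case in {{language}} that demonstrates the bug.\n\n"
    "Bug report:\n{{bug_report}}\n\n"
    "Use standard testing framework {{test_framework}}."
)

print(render(BUG_TEMPLATE, {
    "language": "Python",
    "bug_report": "parse_date('2026-02-30') returns a date instead of raising",
    "test_framework": "pytest",
}))
```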

Concrete Code Examples: Python, Rust, TypeScript

Python: Autonomous Sprint Runner

    from openai_agents_sdk import PlannerAgent, GeneratorAgent, EvaluatorAgent, Harness

    # Initialize agents
    planner = PlannerAgent(model='gpt-5.3-codex', context_files=['AGENTS.md'])
    generator = GeneratorAgent(model='gpt-5.3-codex', context_files=['AGENTS.md'])
    evaluator = EvaluatorAgent(model='gpt-5.3-codex', context_files=['AGENTS.md'])


    def run_sprint(feature_spec: str):
        # Planning phase
        plan = planner.plan(feature_spec)
        print(f"Plan: {plan}")

        # Generation phase
        code_output = generator.generate({'featureSpec': feature_spec, 'plan': plan})
        print(f"Generated Code:\n{code_output}")

        # Evaluation phase
        test_results = evaluator.evaluate(code_output)
        print(f"Test Results: {test_results}")

        return code_output, test_results


    if __name__ == "__main__":
        feature = "Implement OAuth2 login system"
        run_sprint(feature)

Rust: Codex CLI Sprint Contract Runner

    use codex_cli::CodexCli;
    use std::fs;

    fn main() -> Result<(), Box<dyn std::error::Error>> {
        // Load the sprint contract definition from disk.
        let sprint_json = fs::read_to_string("sprints/generate-auth-module.json")?;

        let codex = CodexCli::new()
            .model("gpt-5.3-codex")
            .temperature(0.15)
            .max_tokens(12000)
            .context_files(vec!["AGENTS.md", "auth-spec.md"]);

        let output = codex.run_sprint(&sprint_json)?;
        println!("Sprint completed. Output:\n{}", output);

        Ok(())
    }

TypeScript: Agent Harness with Progressive Disclosure

    import { AgentHarness, PlannerAgent, GeneratorAgent, EvaluatorAgent } from 'openai-agents-sdk';

    async function main() {
      const harness = new AgentHarness({
        model: 'gpt-5.3-codex',
        contextFiles: ['AGENTS.md'],
        entropyManagement: true,
      });

      const planner = new PlannerAgent(harness);
      const generator = new GeneratorAgent(harness);
      const evaluator = new EvaluatorAgent(harness);

      const featureSpec = "Build a scalable chat server with WebSocket support";

      // Step 1: Plan
      const plan = await planner.plan(featureSpec);

      // Step 2: Generate
      const code = await generator.generate({ plan });

      // Step 3: Evaluate
      const testReport = await evaluator.evaluate(code);

      console.log('Plan:', plan);
      console.log('Generated Code:', code);
      console.log('Test Report:', testReport);
    }

    main().catch(console.error);

Pro Tips & Edge Cases

| Issue | Symptom | Solution / Workaround |
|---|---|---|
| Agent hallucination | Generated code references nonexistent APIs or files | Use AGENTS.md for progressive disclosure; insist on references from docs; lower temperature. |
| Context token overflow | Model truncates important context in large projects | Use hierarchical context injection; chunk specs; prune irrelevant docs. |
| Sprint contract failures | Unexpected outputs or missing fields in JSON | Strictly validate inputs/outputs schemas; add retries with backoff in harness. |
| IDE plugin lag | Slow completions or crashes | Update plugins and CLI to latest versions; disable unnecessary context files; increase local RAM. |
| Multi-agent race conditions | Conflicting outputs from concurrent agents | Use harness locking mechanisms; serialize sprint execution or implement consensus logic. |

Keep AGENTS.md and sprint contracts under version control to maintain reproducibility and facilitate debugging.
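The "prune irrelevant docs" workaround for context token overflow can be sketched as a priority-ordered selection under a token budget. This is an illustration under loud assumptions: `estimate_tokens` uses a crude four-characters-per-token heuristic rather than a real tokenizer, `select_context` is a hypothetical helper, and `changelog.md` is an invented file name.

```python
from typing import Dict, List, Tuple


def estimate_tokens(text: str) -> int:
    """Very rough heuristic: about four characters per token for English text."""
    return max(1, len(text) // 4)


def select_context(docs: Dict[str, Tuple[int, str]], budget: int) -> List[str]:
    """Pick documents by priority (lower number = more important) within a budget.

    docs maps a file name to (priority, content). Documents that do not fit
    are skipped rather than truncated, so nothing is cut off mid-spec.
    """
    chosen: List[str] = []
    used = 0
    for name, (priority, content) in sorted(docs.items(), key=lambda item: item[1][0]):
        cost = estimate_tokens(content)
        if used + cost <= budget:
            chosen.append(name)
            used += cost
    return chosen


docs = {
    "AGENTS.md": (0, "x" * 400),      # ~100 tokens, always wanted
    "auth-spec.md": (1, "x" * 2000),  # ~500 tokens
    "changelog.md": (2, "x" * 8000),  # ~2000 tokens, least relevant
}
print(select_context(docs, budget=700))
```

With a budget of 700 estimated tokens, the two highest-priority files fit and the large low-priority file is left out.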

Conclusion

This comprehensive beginner’s guide sets the foundation for mastering ChatGPT-powered coding workflows in 2026. By deeply understanding the GPT-5.3-Codex architecture, Agent Harness engineering, and the rich tooling ecosystem, you are now equipped to build autonomous, scalable AI coding agents.

The subsequent parts of this masterclass will expand on multi-agent orchestration, advanced harness design, and real-world deployment strategies—stay tuned!

Appendix: Useful Links and Resources

- OpenAI Agents SDK GitHub

- Codex CLI Documentation

- AGENTS.md Pattern Spec

- Playwright MCP Testing Framework

- VS Code GPT-5.3 Plugin

This concludes Part 1 of 7: ChatGPT for Coding: The Complete Beginner’s Guide.


Frequently Asked Questions

What is GPT-5.3 Codex designed for?

GPT-5.3 Codex is designed to assist developers in software development by acting as a coding co-pilot. It helps in generating, reviewing, and optimizing code, making it an invaluable tool for enhancing productivity and accuracy in coding tasks.

How does GPT-5.3 Codex improve coding efficiency?

GPT-5.3 Codex improves coding efficiency by providing advanced reasoning and multi-modal input capabilities. It accelerates the development process from prototyping to production, allowing developers to focus on higher-level design and problem-solving tasks.

What integrations are available for GPT-5.3 Codex?

GPT-5.3 Codex integrates with popular IDEs such as VS Code, Cursor, and JetBrains. It also supports a Rust-based CLI and OpenAI Agents SDK, enabling seamless integration into existing development workflows and environments.

Can GPT-5.3 Codex be used for all programming languages?

While GPT-5.3 Codex supports a wide range of programming languages, its effectiveness may vary depending on the language and complexity of the task. It is particularly optimized for languages like Python, Rust, and TypeScript, among others.

What are the security features of GPT-5.3 Codex?

GPT-5.3 Codex includes cloud-native sandboxes that provide secure environments for executing and testing AI-generated code. These sandboxes help ensure that code runs safely and securely, minimizing the risk of vulnerabilities.

How does GPT-5.3 Codex handle multi-agent workflows?

GPT-5.3 Codex handles multi-agent workflows through the OpenAI Agents SDK, which facilitates planning, generation, and evaluation of tasks across multiple AI agents. This capability enhances collaborative coding and complex project management.
