The Dawn of the Trillion-Dollar Compute Era

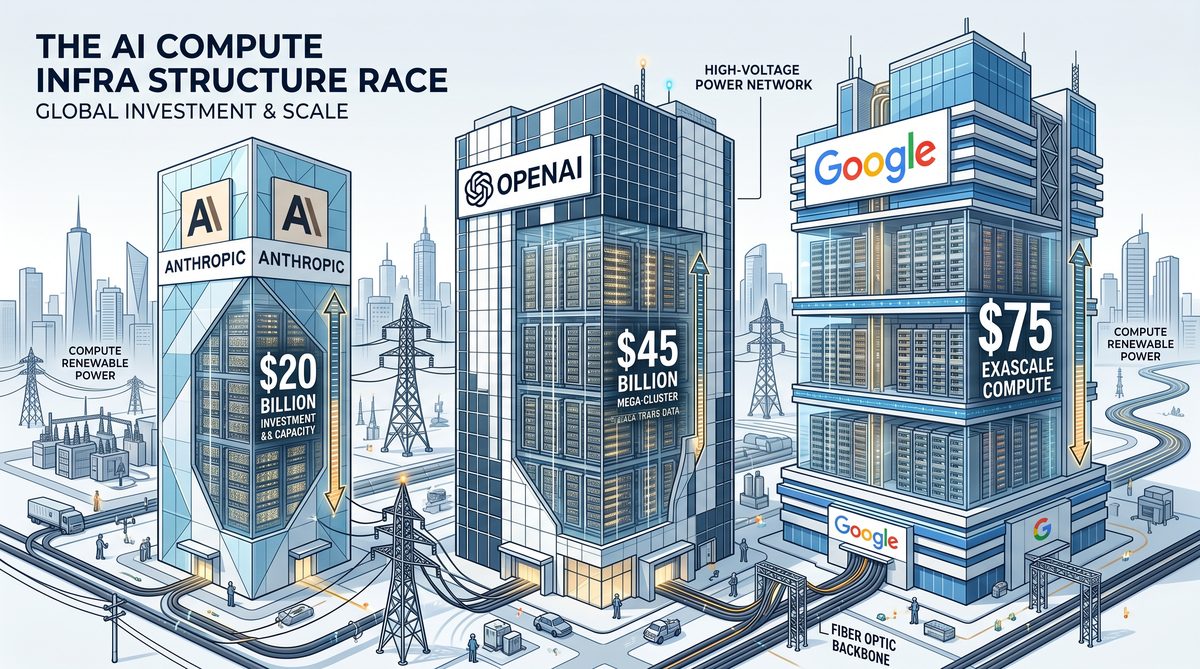

The race to develop ever more powerful artificial intelligence models has entered an unprecedented phase, characterized not just by breakthroughs in algorithms and architecture, but by a fierce competition for compute infrastructure. Leading AI pioneers—Anthropic, OpenAI, and Google—are each racing to secure billions of dollars in hardware, data center capacity, and specialized chips to power the next generation of AI systems by 2027. This escalating arms race in AI compute is not merely a financial contest; it is a strategic battle that will shape the future landscape of technology, innovation, and global economic power. The scale of investment and the complexity of the infrastructure involved have ushered in what many experts now call the “trillion-dollar compute era,” underscoring how foundational compute resources have become to AI’s evolving capabilities and commercial viability.

The competitive dynamics among these three titans reveal distinct philosophies and technical approaches to securing and optimizing compute resources. OpenAI, for instance, has historically pursued a model that tightly integrates cutting-edge hardware partnerships with cloud providers, focusing on scalability and flexibility. Their collaboration with Microsoft Azure has allowed them to rapidly scale up GPU-based compute clusters tailored for training massive transformer-based language models. Meanwhile, Anthropic, a relatively newer player founded by former OpenAI researchers, emphasizes safety and alignment in AI development, which is reflected in its compute strategy. Anthropic is investing heavily in custom infrastructure designed to optimize not only raw performance but also model interpretability and robustness. Their compute plans include aggressive procurement of next-generation GPUs and specialized AI accelerators that balance throughput with energy efficiency, aiming to support their unique research agenda.

Google, with its vast internal resources and pioneering AI hardware division, arguably leads the pack in integrating vertically optimized compute infrastructure. The company’s Tensor Processing Units (TPUs) represent a paradigm shift in AI hardware design, purpose-built to accelerate the training and inference of deep learning models at scale. Google’s strategy is deeply entwined with its global data center network, enabling it to deploy AI workloads more efficiently and cost-effectively than competitors who rely primarily on third-party cloud services. By controlling the entire stack—from silicon design to software frameworks—Google can tailor infrastructure to the evolving demands of its AI research and commercial applications, including large language models, vision systems, and multi-modal AI.

Understanding these divergent compute strategies requires a close examination of how each company balances scalability, cost control, energy consumption, and AI safety. The evolution of AI models over the past decade, from relatively modest architectures to today’s networks with hundreds of billions to trillions of parameters, has driven exponential growth in the demand for specialized compute. This escalation has forced companies to rethink their infrastructure investments, moving from commodity GPUs to custom ASICs and integrated software-hardware solutions that can sustain continuous innovation. These investments are not isolated; they influence global supply chains for semiconductors, data center construction, and even geopolitical considerations around technology sovereignty and access.

Moreover, the compute arms race extends beyond raw hardware acquisition. It involves innovations in software tooling, distributed training algorithms, and workload orchestration that maximize the utilization of these expensive resources. For example, techniques such as model parallelism, pipeline parallelism, and mixed precision training have become crucial in enabling these companies to push the boundaries of what current hardware can achieve. Each player tailors its stack to optimize throughput and reduce latency, ensuring that the massive investments in compute translate into tangible improvements in AI model performance and deployment speed.
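To make the pipeline parallelism point concrete, here is a minimal sketch of the idle time ("bubble") in a simple GPipe-style schedule. The function name and the example stage and micro-batch counts are illustrative assumptions, not figures from any of the three companies.

```python
def pipeline_bubble_fraction(num_stages: int, num_microbatches: int) -> float:
    """Idle share of a simple GPipe-style pipeline schedule.

    With p stages and m micro-batches, each stage is busy for m time slots
    out of m + p - 1, so the idle ("bubble") share is (p - 1) / (m + p - 1).
    """
    if num_stages < 1 or num_microbatches < 1:
        raise ValueError("stages and micro-batches must be positive")
    return (num_stages - 1) / (num_microbatches + num_stages - 1)


if __name__ == "__main__":
    # Hypothetical 8-stage pipeline with increasing micro-batch counts.
    for m in (1, 8, 32, 128):
        frac = pipeline_bubble_fraction(num_stages=8, num_microbatches=m)
        print(f"8 stages, {m:>3} micro-batches: {frac:.1%} idle")
```

The takeaway is that splitting each batch into more micro-batches shrinks the idle share, which is one reason orchestration software matters as much as raw hardware.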

As we approach 2027, the strategic decisions made by Anthropic, OpenAI, and Google in securing and deploying AI compute infrastructure will have lasting implications. These choices will determine not only who leads in AI capabilities but also who sets the standards for ethical AI deployment, energy sustainability, and integration across industries. This article delves deeply into the comparative analysis of their compute strategies, exploring the technical, economic, and strategic dimensions that define the trillion-dollar arms race in AI infrastructure.

The Compute Imperative: Why 2027 is the Target Year

The race to harness ever-greater computational power for artificial intelligence systems is accelerating rapidly, and industry experts have converged on 2027 as a pivotal target year. Understanding why this specific year holds such significance requires an exploration of the exponential growth in AI compute requirements, the technological and economic factors converging around this timeline, and the strategic plans of leading AI research organizations. This section delves into the multifaceted rationale behind the 2027 compute imperative, providing detailed examples and scenarios that highlight the urgency and complexity of meeting these computational demands.

Exponential Growth in AI Compute Requirements

Since the early 2010s, AI compute requirements have grown at an unprecedented exponential rate. This phenomenon can be traced through several key milestones in AI model scale and training complexity:

- 2012: AlexNet, with approximately 60 million parameters, revolutionized computer vision using GPUs, consuming on the order of 0.01 petaflop/s-days of compute.

- 2019: OpenAI’s GPT-2 scaled language modeling to 1.5 billion parameters, requiring roughly 1,000 times the compute of AlexNet to train.

- 2023: GPT-4 and other state-of-the-art models reportedly feature hundreds of billions to trillions of parameters (exact figures are undisclosed), demanding exaflop-scale compute resources.

This growth is not linear but exponential: OpenAI’s “AI and Compute” analysis found that the compute used in the largest training runs doubled roughly every 3.4 months between 2012 and 2018. If that trajectory continues, training state-of-the-art AI models in 2027 will require orders of magnitude more compute than today’s largest models.

For example, if GPT-4’s training consumed approximately 1 exaflop-day (10^18 floating-point operations per second sustained for one day, or about 8.64 × 10^22 operations in total), a model in 2027 could require on the order of 1,000 exaflop-days or more. This aligns with the growing ambitions of AI developers aiming to create artificial general intelligence (AGI) or highly capable multimodal models that integrate language, vision, and reasoning at unprecedented scales.
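The unit conversion and the extrapolation above can be sketched in a few lines. This is a rough illustration only: the 1 exaflop-day baseline is the article's hypothetical, and the doubling periods tried here are assumptions chosen to show how sensitive the projection is.

```python
SECONDS_PER_DAY = 86_400


def exaflop_days_to_flop(exaflop_days: float) -> float:
    """Total floating-point operations in a given number of exaflop-days."""
    return exaflop_days * 1e18 * SECONDS_PER_DAY


def projected_compute(base: float, months: float, doubling_months: float) -> float:
    """Extrapolate compute assuming a fixed doubling period (an assumption)."""
    return base * 2 ** (months / doubling_months)


if __name__ == "__main__":
    print(f"1 exaflop-day = {exaflop_days_to_flop(1):.3e} operations")
    # Four years (48 months) of growth from a 1 exaflop-day baseline.
    for doubling in (3.4, 6.0, 12.0):
        factor = projected_compute(1.0, months=48, doubling_months=doubling)
        print(f"doubling every {doubling} months -> {factor:,.0f}x after 4 years")
```

A 3.4-month doubling period sustained for four years implies growth of more than ten thousandfold, so the 1,000 exaflop-day figure corresponds to a somewhat slower doubling rate.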

Technological Drivers Anchoring 2027

Several technological trends and milestones suggest that 2027 is not an arbitrary target but a natural inflection point for AI compute:

- Advancements in Semiconductor Fabrication: The semiconductor industry roadmap predicts the maturity and widespread adoption of sub-3nm process nodes by 2025-2026, enabling significantly faster and more energy-efficient chips by 2027. This leap is critical because AI workloads are heavily constrained by power and thermal limits.

- Emergence of Specialized AI Accelerators: Custom AI chips, built on architectures such as systolic arrays (already used in TPUs), in-memory compute, or photonic processors, are expected to reach commercial viability and scale around this timeframe. These accelerators would enable training of massive models within manageable power envelopes.

- Quantum and Neuromorphic Computing Research: While still experimental, these emerging paradigms may start contributing to hybrid AI training pipelines by 2027, increasing effective compute capacity beyond classical limits.

Without these technological advancements, the energy costs and physical infrastructure requirements for training next-generation AI models would become prohibitive. Thus, the compute imperative is tightly coupled with the maturation of new hardware technologies anticipated by 2027.

Economic and Strategic Considerations

In addition to technological drivers, economic and strategic factors also converge to make 2027 the focal point for AI compute expansion:

- Investment Cycles: Major tech companies and governments have initiated multi-billion dollar investments in AI infrastructure, often with 5-year horizons starting from the early 2020s. These timelines place peak deployment and utilization phases around 2027.

- Competitive Pressure: The global AI arms race compels organizations to hit critical compute milestones quickly to avoid losing leadership in AI innovation. Missing the 2027 target could mean being outpaced by rivals deploying more capable models.

- Regulatory and Ethical Frameworks: By 2027, many countries aim to have clearer AI regulations and governance in place. Organizations anticipate that meeting compute demands by then will enable compliance and responsible AI deployment at scale.

For instance, a hypothetical AI research lab aiming to develop a next-generation multimodal AGI system plans its compute infrastructure roadmap to align with these cycles. They project that by 2027, they need access to at least 500 exaflop-days of training compute per model iteration to maintain competitive parity. To achieve this, they must secure partnerships with semiconductor manufacturers, invest in custom AI accelerators, and establish energy-efficient data centers.

Projected Compute Needs of AI Generations: A Comparative Table

| AI Generation | Approximate Year | Model Size (Parameters) | Training Compute (Exaflop-Days) | Primary Use Cases | Key Technology Drivers |
|---|---|---|---|---|---|
| Early Deep Learning | 2012 | 60 million | 0.00001 | Image classification, basic NLP | GPUs, general-purpose compute |
| Transformer Revolution | 2019 | 1.5 billion | 0.01 | Language modeling, text generation | TPUs, optimized deep learning frameworks |
| Large-scale Foundation Models | 2023 | 100+ billion | 1 | Multimodal reasoning, advanced NLP | Custom AI accelerators, distributed training |
| Next-Gen AGI Precursors | 2027 (Projected) | 1+ trillion | 1,000+ | General intelligence, complex problem solving | Sub-3nm chips, photonic and neuromorphic co-processors |
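As a sanity check on the table, the sketch below computes the doubling period implied by its first, third, and last rows. The helper name is hypothetical and the inputs are the table's own approximate figures, so the results are only as good as those estimates.

```python
import math


def implied_doubling_months(compute_start: float, compute_end: float, years: float) -> float:
    """Doubling period, in months, implied by growth between two data points."""
    doublings = math.log2(compute_end / compute_start)
    return 12 * years / doublings


if __name__ == "__main__":
    # (label, start exaflop-days, end exaflop-days, years elapsed), from the table.
    spans = [
        ("2012 -> 2023", 0.00001, 1.0, 11),
        ("2023 -> 2027 (projected)", 1.0, 1000.0, 4),
    ]
    for label, start, end, years in spans:
        months = implied_doubling_months(start, end, years)
        print(f"{label}: doubling every {months:.1f} months")
```

Under these figures the implied doubling period is about 8 months for 2012 to 2023 and just under 5 months for the 2027 projection, so the table describes somewhat slower growth than a 3 to 4 month doubling rate.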

Scenario: Building a 2027 Compute Infrastructure

Consider a hypothetical scenario where a global AI consortium aims to develop an AGI precursor model by late 2027. Their compute needs are estimated at approximately 1,200 exaflop-days per training run, with iterative training cycles planned every 6 months. To meet this demand, they must carefully architect their compute infrastructure with the following components:

- Hardware Procurement: Commit to purchasing the latest sub-3nm AI accelerators from multiple vendors to ensure supply chain resilience and peak performance.

- Data Center Design: Build hyper-efficient data centers optimized for cooling and power delivery, potentially co-located near renewable energy sources to offset enormous electricity needs.

- Distributed Training Systems: Develop advanced software stacks capable of orchestrating training across thousands of specialized nodes, minimizing communication bottlenecks and maximizing parallelism.

- Hybrid Compute Integration: Incorporate experimental quantum and neuromorphic modules as accelerators for specific training tasks, aiming to squeeze incremental performance gains.

This strategic infrastructure will likely require multi-billion dollar investments, years of development, and close collaboration between hardware manufacturers, cloud providers, and AI research teams. Missing the 2027 window could delay breakthroughs by multiple years, emphasizing why this year has become the focal point for the compute arms race.
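To get a feel for the hardware procurement implied by this scenario, the sketch below estimates how many accelerators a 1,200 exaflop-day run would need. The run length, per-chip peak throughput, and utilization are all hypothetical assumptions chosen for illustration; none come from a vendor specification.

```python
import math


def accelerators_needed(
    exaflop_days: float,
    run_days: float,
    peak_tflops_per_chip: float,
    utilization: float,
) -> int:
    """Chips required to finish a training run within run_days."""
    required_flops = exaflop_days * 1e18 / run_days  # sustained FLOP/s
    per_chip_flops = peak_tflops_per_chip * 1e12 * utilization
    return math.ceil(required_flops / per_chip_flops)


if __name__ == "__main__":
    # Assumed: 90-day run, 1,000 TFLOPS peak per chip, 40% sustained utilization.
    chips = accelerators_needed(1200, run_days=90, peak_tflops_per_chip=1000, utilization=0.4)
    print(f"Approximate accelerators required: {chips:,}")
```

Halving the utilization or the run length doubles the chip count, which is why the distributed training software in the list above matters as much as the procurement itself.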

Anthropic’s Strategic Maneuvers: The SpaceX Deal and Beyond

Anthropic’s Compute Strategy: Balancing Scale, Safety, and Sustainability

Anthropic has positioned itself uniquely within the AI compute arms race by emphasizing a compute strategy that is not solely about raw scale but also about integrating safety, efficiency, and sustainability. Founded by former OpenAI researchers, Anthropic has been vocal about the critical importance of AI alignment and the risks associated with unbridled compute escalation without corresponding safety frameworks. Their approach to compute infrastructure underscores this philosophy by pursuing partnerships and technology choices that optimize for both performance and responsible energy consumption.

Unlike some competitors that aggressively pursue the largest possible training runs regardless of environmental impact, Anthropic’s compute strategy involves meticulous optimization of training workloads. This includes experimenting with novel architectures and training paradigms designed to reduce the number of floating point operations (FLOPs) required to achieve state-of-the-art results. By concentrating on algorithmic efficiency and model robustness, Anthropic aims to achieve superior performance with comparatively lower compute expenditure— an approach that could redefine how compute is valued in the AI development lifecycle.

Moreover, Anthropic invests heavily in custom tooling and monitoring systems that provide granular visibility into power usage and computational bottlenecks. This data-driven approach enables iterative improvements and cost-effective scaling of their models. Their commitment to sustainability is also reflected in internal policies prioritizing renewable energy sources and offsetting carbon footprints associated with large-scale training runs.

Forging Strategic Partnerships: The Role of Industry Alliances in Anthropic’s Growth

Anthropic’s compute ambitions rely on strategic partnerships that extend beyond conventional cloud providers. While leveraging leading cloud infrastructure platforms remains part of their operational backbone, Anthropic has progressively sought deeper collaborations with specialized hardware vendors and energy providers. These partnerships aim to secure preferential access to cutting-edge accelerators such as next-generation GPUs and AI-focused silicon, ensuring that Anthropic remains competitive in both speed and cost-efficiency.

One notable dimension of Anthropic’s partnership strategy is their focus on securing long-term compute capacity guarantees. Rather than episodic cloud bursts, Anthropic negotiates dedicated infrastructure deals that allow for predictable scaling aligned with their research timelines. This approach not only stabilizes costs but also fosters collaborative innovation, where hardware providers can tailor solutions to Anthropic’s unique software stack and research objectives.

Anthropic also collaborates with academic institutions and open research consortia, facilitating cross-pollination of ideas around AI safety and scalable alignment techniques. These alliances enhance Anthropic’s internal R&D while contributing to the broader AI community’s understanding of responsible compute scaling. For more on how AI safety considerations shape compute scaling strategies industry-wide, see “How Anthropic’s SpaceX Compute Deal Changes the AI Landscape: Scaling Claude for Enterprise.”

The Rumored SpaceX Deal: A Game-Changer in AI Compute and Energy Synergies

Perhaps the most intriguing development in Anthropic’s strategic maneuvers is the rumored partnership with SpaceX, Elon Musk’s aerospace company. While details remain confidential, industry insiders suggest that this collaboration could represent a paradigm shift by pairing SpaceX’s infrastructure with Anthropic’s AI compute demands.

SpaceX is best known for its rocket technology and for Starlink, its global satellite internet network, rather than for energy generation. The speculation is that Anthropic aims to leverage energy infrastructure that SpaceX could bring to the partnership, particularly on-site solar power arrays and battery storage systems, to power large-scale AI training facilities with clean, reliable energy. If realized, this integration could dramatically reduce the carbon footprint of AI training runs while providing Anthropic with a stable and potentially cost-advantaged energy supply.

From a compute perspective, SpaceX’s operational expertise in scaling complex, high-demand systems dovetails with Anthropic’s need for scalable, high-throughput computing environments. There is conjecture that SpaceX’s proprietary networking and edge computing technologies could be harnessed to optimize data flow and reduce latency in distributed AI training setups. Such advancements would enable Anthropic to train larger, more complex models more efficiently, while maintaining tight control over energy consumption and operational costs.

This potential deal also symbolizes a broader trend in the AI compute arms race—where energy infrastructure and compute hardware are becoming inseparable strategic assets. Companies are recognizing that raw compute power alone is insufficient; the sustainability and economics of powering that compute at scale are equally crucial. By aligning with SpaceX, Anthropic may be positioning itself at the forefront of this integrated compute-energy model, setting a precedent for future collaborations across the tech and energy sectors.

Implications and Future Outlook

If the rumors of the Anthropic-SpaceX collaboration hold true, the implications extend beyond just these two companies. It signals a maturation of the AI compute arms race where ecosystem partnerships become critical levers of competitive advantage. Access to proprietary energy infrastructure and custom hardware could redefine how AI firms approach scaling, potentially leading to a new class of hyper-efficient training hubs combining green energy with next-gen compute.

Furthermore, this strategy aligns with Anthropic’s broader mission to develop AI that is not only powerful but also safe and aligned with human values. Efficient compute powered by sustainable energy sources reduces environmental impact, an ethical consideration increasingly important to stakeholders and regulators alike. Integrating energy and compute also enables more predictable scaling, which is vital for iterative safety testing and alignment research.

In conclusion, Anthropic’s strategic maneuvers—including their innovative compute strategy, selective partnerships, and the rumored landmark deal with SpaceX—highlight an evolving paradigm in AI development. This paradigm balances the relentless pursuit of scale with responsibility, sustainability, and collaboration, setting the stage for the next phase of the AI compute arms race.

OpenAI’s Stargate: The $100 Billion Supercomputer Vision

Introduction to the Stargate Project

OpenAI, in partnership with Microsoft, has embarked on an ambitious endeavor known as the Stargate project—an initiative aimed at building one of the most powerful supercomputers ever conceived to accelerate the development of advanced artificial intelligence systems. This project represents a significant leap in computational scale and sophistication, with a proposed investment approaching $100 billion over the next decade. Stargate is envisioned not merely as a hardware upgrade but as a transformational infrastructure platform designed to handle the exponentially growing demands of large-scale AI model training, inference, and deployment.

The project’s name, “Stargate,” symbolizes a gateway to unprecedented AI capabilities, enabling research and applications that push beyond the current boundaries of machine learning. This endeavor is a direct response to the rapid increase in AI model complexity and size—where existing compute infrastructures are becoming bottlenecks rather than enablers. Stargate aims to provide a robust foundation for training next-generation models with trillions of parameters, supporting not only OpenAI’s internal research but also powering enterprise-grade AI services through Microsoft Azure integration.

Massive Capital Requirements and Investment Scale

The financial commitment to Stargate is staggering, reflecting the scale and ambition of the project. Industry estimates place the total capital expenditure for the buildout, deployment, and operational costs of this supercomputer at around $100 billion. This figure encompasses a wide range of investment areas:

- Hardware Acquisition: Procuring cutting-edge GPUs, TPUs, and custom AI accelerators in quantities that dwarf current supercomputing efforts.

- Data Center Construction: Building new hyperscale data centers or retrofitting existing ones to support the power, cooling, and networking requirements of the massive compute clusters.

- Energy Infrastructure: Designing and implementing sustainable energy solutions to handle the enormous power draw, including renewable energy integration and advanced cooling systems that minimize carbon footprint.

- Networking and Storage: Deploying ultra-high bandwidth, low-latency networking fabrics and vast, high-speed storage arrays optimized for AI workloads.

- Research and Development: Investing in custom chip design, system software, AI model optimization, and operational tooling to ensure efficient utilization of the hardware.

Such a colossal investment highlights the strategic importance placed on AI leadership by OpenAI and Microsoft, acknowledging that compute power is the cornerstone of future AI breakthroughs. It also signals a shift in the AI arms race from purely algorithmic innovation towards infrastructure innovation and economies of scale.

Infrastructure Challenges in Building Stargate

Constructing a supercomputer of Stargate’s magnitude is a feat of engineering that poses multiple interrelated challenges. These challenges span physical infrastructure, technical design, operational efficiency, and sustainability considerations.

Power and Cooling Requirements

One of the primary challenges is managing the immense power consumption and heat dissipation generated by hundreds of thousands of AI accelerators running in parallel. Current state-of-the-art GPUs used in AI training consume several hundred watts each, so hundreds of thousands of them draw hundreds of megawatts before counting host servers, networking, and cooling; with that overhead included, total facility power approaches the gigawatt range. To put this into perspective, Stargate’s power draw could rival that of a small city.
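The power arithmetic can be sketched as follows. The per-accelerator wattage, the server overhead multiplier, and the power usage effectiveness (PUE) are assumptions picked for illustration, not published Stargate figures.

```python
def facility_power_mw(
    num_accelerators: int,
    watts_per_accelerator: float,
    server_overhead: float,
    pue: float,
) -> float:
    """Estimated facility power in megawatts.

    server_overhead scales accelerator power up to whole-server power
    (CPUs, memory, networking); pue adds cooling and power-delivery losses.
    """
    it_power_watts = num_accelerators * watts_per_accelerator * server_overhead
    return it_power_watts * pue / 1e6


if __name__ == "__main__":
    # Assumed: 300,000 accelerators at 700 W, 1.5x server overhead, PUE of 1.2.
    chips_only = facility_power_mw(300_000, 700, server_overhead=1.0, pue=1.0)
    facility = facility_power_mw(300_000, 700, server_overhead=1.5, pue=1.2)
    print(f"Accelerators alone: {chips_only:.0f} MW")
    print(f"Whole facility:     {facility:.0f} MW")
```

Under these assumptions the accelerators alone account for roughly 210 MW, so a gigawatt-class facility implies either more chips, higher per-chip power, or substantial supporting infrastructure.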

Effective cooling solutions are critical to prevent hardware failure and maintain operational efficiency. Traditional air cooling systems become impractical at this scale, pushing the need for advanced liquid cooling, immersion cooling, or even innovative thermal management techniques that recycle heat energy. Additionally, the data centers housing Stargate must be designed to handle these cooling systems and the physical footprint of the hardware.

Networking and Data Throughput

AI training workloads involve massive data movement between processing units and storage. Ensuring ultra-low latency and high bandwidth networking is essential to prevent bottlenecks. Stargate’s architecture relies on custom interconnects that provide terabytes per second of internal bandwidth, supporting model parallelism and distributed training across thousands of nodes.

Integrating these networking fabrics with storage systems optimized for streaming petabytes of training data requires novel storage hierarchies and caching strategies. This infrastructure must also seamlessly interface with Microsoft Azure’s global cloud network to support hybrid cloud scenarios and real-time AI inference services.
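To illustrate why interconnect bandwidth becomes the bottleneck, here is a minimal bandwidth-only cost model for a ring all-reduce, a common way to synchronize gradients in data-parallel training. It ignores latency and overlap with computation, and the gradient size and link speed are hypothetical; nothing here describes Stargate's actual interconnect.

```python
def ring_allreduce_seconds(
    gradient_bytes: float,
    num_nodes: int,
    link_bytes_per_second: float,
) -> float:
    """Bandwidth-only time for one ring all-reduce.

    Each node sends (and receives) 2 * (N - 1) / N times the gradient size,
    split across a reduce-scatter phase and an all-gather phase.
    """
    if num_nodes < 2:
        return 0.0  # nothing to synchronize on a single node
    bytes_per_node = 2 * (num_nodes - 1) / num_nodes * gradient_bytes
    return bytes_per_node / link_bytes_per_second


if __name__ == "__main__":
    # Assumed: 200 GB of gradients, 1,024 nodes, 50 GB/s or 400 GB/s per link.
    for link_gb in (50, 400):
        t = ring_allreduce_seconds(200e9, 1024, link_gb * 1e9)
        print(f"{link_gb} GB/s links: {t:.2f} s per synchronization")
```

Because the per-node traffic approaches twice the gradient size regardless of node count, the synchronization time is governed almost entirely by link bandwidth.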

Scalability and Fault Tolerance

At this unprecedented scale, hardware failures become a common occurrence rather than an exception. Stargate’s system architecture must be resilient, employing fault-tolerant designs that allow training jobs to continue uninterrupted despite node failures. This involves sophisticated distributed software frameworks that handle checkpointing, job scheduling, and resource allocation dynamically.

Moreover, the system must scale efficiently not only in raw performance but also in manageability, enabling engineers to monitor, diagnose, and optimize thousands of compute nodes continuously.
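The fault-tolerance argument can be quantified with a rough sketch. It assumes independent node failures and uses Young's classic approximation for the checkpoint interval; the node reliability and checkpoint cost below are hypothetical values, not measurements from any real cluster.

```python
import math


def cluster_mtbf_hours(node_mtbf_hours: float, num_nodes: int) -> float:
    """Mean time between failures for the whole cluster.

    Assumes independent failures at a constant rate, so rates add up.
    """
    return node_mtbf_hours / num_nodes


def young_checkpoint_interval_hours(checkpoint_cost_hours: float, mtbf_hours: float) -> float:
    """Young's approximation: interval = sqrt(2 * checkpoint cost * MTBF)."""
    return math.sqrt(2 * checkpoint_cost_hours * mtbf_hours)


if __name__ == "__main__":
    # Assumed: each node fails once per 50,000 hours; a checkpoint takes 5 minutes.
    mtbf = cluster_mtbf_hours(node_mtbf_hours=50_000, num_nodes=10_000)
    interval = young_checkpoint_interval_hours(5 / 60, mtbf)
    print(f"Cluster-wide MTBF: {mtbf:.1f} hours")
    print(f"Suggested checkpoint interval: {interval * 60:.0f} minutes")
```

Even with very reliable individual nodes, a 10,000-node cluster under these assumptions sees a failure every few hours, which is why checkpointing and automatic job recovery are core design requirements.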

Comparing Stargate to Current Supercomputers

To fully appreciate the magnitude of the Stargate project, it is helpful to compare its proposed specifications against the world’s leading supercomputers today. The table below outlines key metrics including peak performance (measured in FLOPS), power consumption, and GPU counts.

| Supercomputer | Performance (EFLOPS) | Processor / Accelerator Count | Power Consumption (MW) | Primary Purpose |
|---|---|---|---|---|
| Fugaku (Japan) | 0.442 | ~159,000 CPUs (no GPUs) | 28 | Scientific Simulation |
| Frontier (USA) | 1.102 | ~37,600 AMD GPUs | 21 | Scientific Research |
| Perlmutter (USA) | 0.065 | ~6,100 NVIDIA GPUs | 7.5 | Scientific Computing & AI |
| Google TPU v4 Pod | ~1.1 (bf16 peak) | ~4,096 TPUs | 4 | AI Training |
| OpenAI Stargate (Proposed) | 100+ | ~300,000+ Custom AI Accelerators | ~1,000 (est.) | Next-Gen AI Research & Deployment |

Interpretation of the Comparison

The Stargate supercomputer dwarfs existing systems in both scale and specialization. Its peak performance target surpasses 100 exaflops, roughly a hundredfold increase over Frontier’s approximately 1.1 exaflops. Unlike traditional supercomputers optimized for scientific simulations, Stargate is tailored exclusively for AI workloads, utilizing custom-designed accelerators that maximize throughput for training massive neural networks.

The accelerator count is also at least an order of magnitude larger than that of any existing system in the table, reflecting the necessity of parallelism at an unprecedented scale. This expansion necessitates a power envelope nearing 1 gigawatt, which introduces unique logistical and environmental challenges that are being addressed through innovative data center designs and sustainable energy sourcing.
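The comparison can be reduced to two derived figures: throughput per accelerator and performance per watt. The sketch below reads the performance column in exaFLOPS, which is an assumption based on Frontier's published result of about 1.1 exaFLOPS. The Stargate inputs are the article's proposed estimates, and the two systems are not measured at the same numeric precision.

```python
def per_accelerator_tflops(system_eflops: float, num_accelerators: int) -> float:
    """Average throughput per accelerator in TFLOPS (1 EFLOPS = 1e6 TFLOPS)."""
    return system_eflops * 1e6 / num_accelerators


def gflops_per_watt(system_eflops: float, power_mw: float) -> float:
    """Energy efficiency in GFLOPS per watt (1 EFLOPS = 1e9 GFLOPS)."""
    return system_eflops * 1e9 / (power_mw * 1e6)


if __name__ == "__main__":
    # Stargate figures are the proposed estimates quoted in the article.
    print(f"Stargate per accelerator: {per_accelerator_tflops(100, 300_000):.0f} TFLOPS")
    print(f"Stargate efficiency:      {gflops_per_watt(100, 1000):.0f} GFLOPS/W")
    print(f"Frontier efficiency:      {gflops_per_watt(1.102, 21):.1f} GFLOPS/W")
```

On these numbers the proposed system would need roughly twice Frontier's energy efficiency, which is plausible only because AI accelerators work at lower numeric precision than the double-precision benchmarks used to rank scientific supercomputers.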

Strategic Implications

OpenAI and Microsoft’s investment in Stargate is a clear signal of the future direction of AI development: massive, specialized compute infrastructures are essential to maintain competitive advantage in AI capabilities. By integrating Stargate with Azure’s cloud ecosystem, Microsoft amplifies its ability to offer AI-as-a-service at scale, democratizing access to the most powerful AI models.

Furthermore, the infrastructure innovations pioneered in Stargate will likely cascade into broader technology sectors, influencing data center design, energy management, and distributed computing paradigms. This project exemplifies the convergence of massive capital investment, engineering ingenuity, and strategic vision driving the AI compute arms race forward at breakneck speed.

Google’s Infrastructure Dominance: TPUs and Global Data Centers

Custom Silicon Innovation: The Rise of TPUs

Google has positioned itself at the forefront of the AI compute arms race largely through its pioneering development of custom silicon tailored explicitly for machine learning workloads: the Tensor Processing Units (TPUs). Unlike traditional CPUs or even GPUs, TPUs are designed from the ground up to accelerate the specific mathematical operations critical to deep learning, such as matrix multiplications and convolutions, at unprecedented speeds and energy efficiency.

Google began deploying its first-generation TPU in its data centers in 2015 and announced it publicly in 2016. The chip is a highly specialized ASIC (Application-Specific Integrated Circuit) optimized for inference tasks in production environments like Google Search and Google Photos. Since then, Google has rapidly evolved the TPU architecture through multiple generations, with each iteration delivering substantial improvements in computational throughput, memory bandwidth, and power efficiency.

TPU v4 chips, deployed extensively within Google’s data centers, are capable of delivering up to 275 teraflops of mixed-precision (bfloat16) compute per chip. These chips are organized into large-scale pods of up to 4,096 chips interconnected via custom high-speed networks, enabling them to collectively tackle some of the most demanding AI training workloads in the world. This scalability allows Google to train massive language models, such as PaLM, at speeds and scales that are difficult to match with off-the-shelf GPUs alone.
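The per-chip figure translates into pod-level capacity with simple arithmetic. The sketch below uses the 275 teraflops per chip quoted above and a 4,096-chip pod, then estimates training time with the common 6 × parameters × tokens approximation for training FLOPs. The model size, token count, and 50% utilization are hypothetical assumptions.

```python
SECONDS_PER_DAY = 86_400


def pod_peak_eflops(num_chips: int, tflops_per_chip: float) -> float:
    """Aggregate peak throughput of a pod in exaFLOPS (1 EFLOPS = 1e6 TFLOPS)."""
    return num_chips * tflops_per_chip / 1e6


def training_days(params: float, tokens: float, pod_eflops: float, utilization: float) -> float:
    """Days to train, using the rough 6 * N * D estimate of total training FLOPs."""
    total_flop = 6 * params * tokens
    sustained_flops = pod_eflops * 1e18 * utilization
    return total_flop / sustained_flops / SECONDS_PER_DAY


if __name__ == "__main__":
    pod = pod_peak_eflops(4096, 275)
    print(f"Pod peak: {pod:.2f} EFLOPS")
    # Hypothetical model: 500 billion parameters trained on 1 trillion tokens.
    days = training_days(500e9, 1e12, pod, utilization=0.5)
    print(f"Estimated training time on one pod: {days:.0f} days")
```

A single pod peaks at about 1.1 exaFLOPS under these inputs, so trillion-parameter models would likely need several pods or considerably longer runs.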

Google’s TPU architecture also integrates tightly with its software stack, including TensorFlow, JAX, and proprietary compiler technologies. This synergy ensures that developers can optimize their models to squeeze maximum performance from the hardware without needing to manage low-level details. The result is a seamless AI development ecosystem where innovation in both hardware and software fuels rapid advancements in model complexity and capability.

Expansive Global Data Center Footprint

Complementing its silicon innovation, Google’s global data center infrastructure represents one of the most extensive and sophisticated compute backbones in the world. As of 2024, Google operates over 35 data center campuses across North America, South America, Europe, and Asia, with dozens of additional edge locations and network points of presence. This vast geographic distribution enables Google to deliver low-latency AI services to billions of users worldwide while maintaining redundancy and fault tolerance.

Each data center is purpose-built with a focus on sustainability and operational efficiency. Google has consistently invested in renewable energy sources, advanced cooling techniques, and hardware lifecycle management to reduce the environmental impact of its compute operations. These efforts not only lower costs but also ensure that Google can scale its AI infrastructure sustainably as demand continues to surge.

Inside these data centers, thousands of racks are populated with TPU pods interconnected via custom-built high-bandwidth networking fabrics. This close integration allows Google to manage AI workloads at an unprecedented scale, seamlessly distributing training jobs across pods and dynamically allocating compute resources based on real-time demand. The sheer scale of these facilities means Google can iterate rapidly on AI research projects, deploying larger models and experimenting with new architectures without the typical bottlenecks faced by smaller operators.

Furthermore, Google’s expertise in data center networking and security ensures that sensitive AI training data and model checkpoints are protected at every stage. This security posture is crucial for maintaining trust with enterprise customers and adhering to strict compliance requirements across global markets.

Scaling Towards 2027: The Future of AI Compute at Google

Looking ahead to 2027, Google is aggressively scaling its AI compute capacity to maintain and extend its leadership position in the AI arms race. Industry insiders anticipate that Google will deploy next-generation TPU designs (TPU v5 and beyond), featuring even greater transistor density, enhanced AI-specific instructions, and memory innovations such as higher-bandwidth on-package HBM (High Bandwidth Memory) or advanced packaging technologies.

These hardware advancements will be complemented by architectural innovations in pod design, enabling more efficient inter-chip communication and fault tolerance. Google’s roadmap includes expanding TPU pod sizes from the current thousands of chips to potentially tens of thousands, facilitating the training of AI models with hundreds of billions or even trillions of parameters.

In parallel, Google continues to invest heavily in expanding its data center footprint, targeting new regions to reduce latency and address geopolitical considerations. This expansion is not simply about adding more physical locations but involves integrating cutting-edge infrastructure components such as liquid cooling systems, AI-powered operational monitoring, and quantum-safe networking protocols.

Moreover, Google is exploring hybrid cloud strategies to combine its private data centers with public cloud environments, offering customers unprecedented flexibility in how they access AI compute resources. This approach is critical as AI workloads diversify, ranging from massive model training to real-time inference at the edge.

A crucial aspect of this scaling effort involves competition and collaboration with other AI hardware providers. For example, Google’s TPU strategy contrasts with the GPU-centric approaches of companies like NVIDIA and AMD. The trade-offs between custom AI accelerators and general-purpose GPUs remain actively debated in the industry, influencing procurement, development cycles, and ecosystem partnerships. For a comprehensive analysis of these trade-offs, including performance benchmarks and cost considerations, see our article Inside Stargate: How OpenAI Is Building the $500 Billion Compute Foundation for AGI.

Ultimately, Google’s combined strategy of advancing custom silicon innovation, leveraging its expansive and efficient global data centers, and scaling aggressively towards 2027 positions it uniquely to support the next generation of AI breakthroughs. This infrastructure backbone not only enables Google’s internal AI research but also powers a vast ecosystem of developers, enterprises, and applications that rely on the company’s AI cloud services to accelerate their own innovation journeys.

Conclusion: The Winner Takes All

The AI compute arms race is not merely a competition over hardware resources or algorithmic innovations; it is a defining battle that will shape the trajectory of technological progress and economic power in the 21st century. At its core, this race is about scaling the computational capacity required to build ever more sophisticated, capable, and impactful artificial intelligence systems. As we have seen, the exponential growth in AI model size, complexity, and training data demands unprecedented advances in both hardware and software infrastructure. The companies and nations that master this compute frontier stand to unlock transformative capabilities that could redefine industries, governance, and everyday human experiences.

The stakes of this arms race are immense. Organizations with access to the most powerful AI training infrastructure gain a strategic advantage in developing models that push the boundaries of natural language understanding, computer vision, robotics, and decision-making. This advantage translates into market dominance, superior products, and control over critical AI ecosystems. It also fuels a feedback loop: more compute enables better models, which in turn justify investments in even greater compute resources. This dynamic risks creating a winner-takes-all scenario where a handful of entities consolidate their influence, raising important questions about access, fairness, and ethical governance.

Looking ahead, the trajectory of AI compute is likely to be shaped by several key factors. First, the physical limits of semiconductor manufacturing and chip design will drive innovation in AI-focused hardware, such as GPUs, TPUs, and other custom ASICs, alongside advances in photonic and quantum computing research. These innovations will be critical to sustaining growth in compute efficiency and cost-effectiveness. Second, software frameworks and algorithmic breakthroughs in training efficiency, sparsity, and model architecture will help reduce raw compute demands, enabling more organizations to participate in the race.

Moreover, geopolitical competition will heavily influence investment patterns and collaboration models. Governments recognize the strategic importance of AI compute capability and are increasingly funneling resources into national supercomputing infrastructure and AI research initiatives. This could lead to a bifurcation of AI development along geopolitical lines if open collaboration is compromised. At the same time, cloud providers and AI startups are democratizing access to high-performance compute through scalable on-demand platforms, potentially balancing centralization tendencies.

In sum, the AI compute arms race is a complex, high-stakes contest that blends technology, economics, and geopolitics. While the winner will gain unparalleled opportunities to shape the future of AI and its societal impact, the broader community must also grapple with ensuring that this power is wielded responsibly and inclusively. The next decade will be pivotal, not only in determining who leads the AI compute frontier but also in defining how the fruits of this race are shared across humanity.
