⚡ The Brief

- What it is: AI desktop agents like OpenAI Codex and Claude Cowork are advanced tools integrated into engineering workflows, enhancing productivity by automating tasks and assisting in code generation.

- Who it’s for: These AI agents are designed for engineering teams seeking to streamline development processes, reduce repetitive coding tasks, and improve overall efficiency.

- Key takeaways: AI agents offer significant productivity gains, assist with complex tasks, and require careful integration to maximize benefits while addressing challenges like debugging AI-generated code.

- Pricing/Cost: Costs vary based on usage and integration needs, with potential for substantial ROI through increased productivity and reduced development time.

- Bottom line: AI desktop agents are transforming engineering workflows, but teams must navigate integration challenges to fully leverage their potential.


How Engineering Teams Are Adopting AI Desktop Agents in 2026: A Deep Dive into OpenAI Codex and Claude Cowork

The year 2026 marks a pivotal moment in software engineering. The once-futuristic concept of AI desktop agents is no longer a distant dream but a tangible reality, profoundly reshaping how engineering teams operate. This article delves into the real-world impact of leading AI agents like OpenAI Codex and Claude Cowork, examining their integration into existing toolchains, the significant productivity gains achieved, and the critical challenges—particularly the persistent issue of debugging AI-generated code.

The rapid evolution of large language models (LLMs) and their integration into sophisticated agentic frameworks has ushered in an era where AI can not only assist but actively participate in the software development lifecycle. These agents are not merely autocomplete tools; they are designed to understand context, execute multi-step tasks, and interact with various applications on a developer’s desktop, mimicking human-like reasoning and action. The promise is immense: accelerated development cycles, reduced boilerplate, and democratized access to advanced coding capabilities. However, the journey to widespread adoption is fraught with complexities, requiring a nuanced understanding of their capabilities and limitations.

Our focus will be on how these agents are specifically being leveraged by engineering teams. We’ll explore practical scenarios, analyze the metrics of their impact, and discuss the strategic adjustments teams are making to maximize their benefits while mitigating the inherent risks. From automating mundane tasks to assisting with complex architectural decisions, AI desktop agents are becoming indispensable members of the modern engineering toolkit.

The Rise of AI Desktop Agents: OpenAI Codex and Claude Cowork Leading the Charge

In 2026, two names frequently surface when discussing AI desktop agents for engineering: OpenAI Codex and Claude Cowork. While both leverage powerful underlying LLMs, their architectural approaches, integration philosophies, and target use cases present distinct advantages and challenges. Understanding these differences is crucial for teams deciding which agent, or combination of agents, best fits their operational paradigm.

OpenAI Codex: The Progenitor of Code Generation

OpenAI Codex, a direct descendant of the GPT series, has long been at the forefront of code generation. Its strength lies in its unparalleled ability to translate natural language into code across a multitude of programming languages and frameworks. In 2026, Codex has evolved beyond simple code snippets. Integrated into sophisticated desktop agent frameworks, it now acts as a proactive coding partner. These frameworks allow Codex to:

- Understand broader project context: By integrating with IDEs, version control systems (like GitHub), and project management tools (like JIRA), Codex can access file structures, commit histories, and task descriptions to generate more contextually relevant code.

- Perform multi-step coding tasks: Instead of just generating a function, Codex can be instructed to “implement a user authentication flow,” which might involve creating database schemas, API endpoints, and frontend components, all orchestrated through a series of interactions and self-corrections.

- Suggest refactorings and optimizations: Analyzing existing codebases, Codex agents can identify areas for performance improvement, security enhancements, or adherence to coding standards, often proposing concrete code changes.

- Automate repetitive tasks: From generating unit tests for new functions to creating boilerplate for new microservices, Codex significantly reduces the time spent on repetitive coding.
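The "series of interactions and self-corrections" behind multi-step tasks is essentially a generate, check, retry loop. This is a minimal sketch of that loop and makes no claim about Codex’s actual internals. `generate`, `check`, and the stub model are hypothetical stand-ins: a real setup would call a model and run the project’s test suite.

```python
from typing import Callable, Optional

# Hypothetical signatures: a generator turns (task, feedback) into candidate code;
# a checker returns None on success or an error message describing the failure.
Generator = Callable[[str, Optional[str]], str]
Checker = Callable[[str], Optional[str]]


def generate_with_self_correction(
    task: str, generate: Generator, check: Checker, max_attempts: int = 3
) -> tuple[str, int]:
    """Request code, check it, and feed any error back until a candidate passes.

    Returns (accepted_code, attempts_used); raises RuntimeError if nothing passes.
    """
    feedback: Optional[str] = None
    for attempt in range(1, max_attempts + 1):
        candidate = generate(task, feedback)
        error = check(candidate)
        if error is None:
            return candidate, attempt
        feedback = error  # the failure becomes context for the next attempt
    raise RuntimeError(f"no passing candidate after {max_attempts} attempts: {feedback}")


# Stub "model" for illustration: the first answer is wrong, the retry is fixed.
def fake_generate(task: str, feedback: Optional[str]) -> str:
    if feedback is None:
        return "def add(a, b):\n    return a - b\n"
    return "def add(a, b):\n    return a + b\n"


def run_unit_test(code: str) -> Optional[str]:
    namespace: dict = {}
    exec(code, namespace)  # illustration only: never exec untrusted code outside a sandbox
    if namespace["add"](2, 3) != 5:
        return "add(2, 3) should equal 5"
    return None


code, attempts = generate_with_self_correction("implement add", fake_generate, run_unit_test)
print(attempts)  # 2
```

The cap on attempts matters in practice. Without it, a model that keeps producing the same mistake would loop indefinitely instead of handing the problem to a human.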

The core of Codex’s effectiveness lies in its vast training data, encompassing billions of lines of publicly available code. This allows it to grasp intricate programming patterns, idiomatic expressions, and common library usages. For engineering teams, this translates into a powerful assistant capable of accelerating initial development, prototyping, and even tackling bug fixes by suggesting potential solutions based on error logs and code context.

Claude Cowork: The Conversational and Collaborative Code Assistant

Anthropic’s Claude Cowork, on the other hand, distinguishes itself through its emphasis on safety, interpretability, and robust conversational abilities. While also highly capable at code generation, Cowork’s design philosophy leans more towards being a collaborative partner rather than just a code generator. Its key features as a desktop agent include:

- Advanced conversational interface: Cowork excels at understanding nuanced prompts, asking clarifying questions, and engaging in multi-turn dialogues to refine requirements before generating code. This reduces the “guesswork” often associated with less interactive agents.

- Strong emphasis on safety and ethical coding: Anthropic’s commitment to Constitutional AI means Cowork is designed to avoid generating harmful, biased, or insecure code. It often flags potential security vulnerabilities or ethical considerations in proposed solutions.

- Contextual awareness through tool integration: Similar to Codex, Cowork integrates deeply with development environments. However, its strength lies in synthesizing information from various sources (documentation, JIRA tickets, Slack conversations) to provide more holistic and contextually rich suggestions.

- Code review and explanation capabilities: Cowork can act as an intelligent code reviewer, identifying bugs, suggesting improvements, and, crucially, explaining the rationale behind its suggestions in clear, natural language. This makes it an excellent tool for onboarding new team members or understanding complex legacy code.

Claude Cowork’s strength lies in its ability to act as a more human-like collaborator. Its conversational prowess and focus on safety make it particularly appealing for teams working on sensitive projects or those prioritizing maintainability and security from the outset. It’s often used for pair programming sessions, where the AI provides an additional perspective and catches potential issues early.

Comparative Analysis: Codex vs. Cowork in 2026

While both agents are powerful, their optimal application often depends on the specific needs of an engineering team. The table below summarizes their primary strengths and typical use cases in 2026:

| Feature/Aspect | OpenAI Codex (Agent Frameworks) | Claude Cowork |
|---|---|---|
| Primary Strength | Rapid code generation, automation of complex coding tasks, broad language support. | Conversational interaction, safety/ethics, code explanation, nuanced problem-solving. |
| Core Philosophy | Maximal output, efficiency, breadth of knowledge. | Collaborative partner, safety-first, depth of understanding. |
| Typical Use Cases | Boilerplate generation, initial feature development, prototyping, script automation, test generation. | Code review, architectural discussions, bug diagnosis with explanations, documentation generation, ethical code checks, pair programming. |
| Integration Focus | Deep IDE integration, version control hooks, CI/CD pipelines. | Cross-application context synthesis (IDE, JIRA, Slack, Confluence), natural language interfaces. |
| Debugging Support | Suggests fixes based on error logs and context, can refactor. | Explains potential root causes, offers detailed explanations for proposed fixes, helps understand complex errors. |
| Learning Curve | Relatively low for basic generation, higher for advanced agent orchestration. | Intuitive conversational interface, but mastering nuanced prompting takes practice. |
| Cost Model (General) | Often token-based or subscription tiers based on usage/compute. | Similarly token-based, potentially with higher tiers for enhanced safety features. |

Many engineering teams in 2026 are finding success by employing a hybrid approach, leveraging Codex for raw code generation and automation, and then using Cowork for refinement, review, and deeper problem-solving. This “best of both worlds” strategy allows teams to maximize the benefits of both powerful agents.

Integration with Existing Toolchains: JIRA, GitHub, and Beyond


The true power of AI desktop agents like OpenAI Codex and Claude Cowork is unlocked not in isolation, but through seamless integration with an engineering team’s existing toolchain. In 2026, these integrations are mature and sophisticated, turning standalone AI capabilities into deeply embedded components of the software development lifecycle. The goal is to minimize context switching, automate workflows, and provide AI assistance directly where developers are already working.

JIRA: Orchestrating AI-Driven Task Management

JIRA, or its equivalent project management system, serves as the central nervous system for many engineering teams. The integration of AI agents here is transformative, moving beyond simple issue creation to intelligent task orchestration and progress tracking.

- Automated Task Breakdown: When a new feature request or bug report is created in JIRA, AI agents can analyze the description, identify sub-tasks, estimate effort, and even suggest potential assignees based on past performance and skill sets. For example, a ticket like “Implement user profile editing” could be automatically broken down into “Create API endpoint for profile update,” “Design frontend form for profile,” “Write unit tests for profile service,” etc.

- Contextual Code Generation: Developers can trigger code generation directly from a JIRA ticket. An agent, having read the ticket description, attached designs, and linked documentation, can then generate relevant code snippets, database migrations, or even entire components, ensuring the generated code aligns with the task’s requirements.

- Progress Reporting and Updates: As developers commit code or complete local tasks, AI agents can automatically update JIRA tickets. For instance, a commit message referencing a JIRA ID might trigger an agent to transition the ticket from “In Progress” to “Code Review” and link the relevant pull request.

- Dependency Identification: For complex projects, agents can analyze JIRA backlogs to identify potential dependencies between tasks, flagging them to project managers and suggesting optimal sequencing.

- Intelligent Documentation Generation: Upon task completion, agents can summarize the work done, link to relevant code changes, and even draft initial documentation or release notes, attaching them directly to the JIRA ticket or a linked Confluence page.
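The commit-message trigger described under Progress Reporting starts with spotting issue keys in the message. This is a minimal sketch using a regular expression for Jira-style keys. The "Code Review" target status and the shape of the returned dictionaries are assumptions, and the API call that would actually transition the ticket is deliberately left out.

```python
import re

# Jira-style issue keys: an uppercase project key, a hyphen, and a number (e.g. PROF-42).
# Approximation only: project-key rules vary by Jira configuration, and this
# pattern can also match non-issue tokens such as "UTF-8".
ISSUE_KEY = re.compile(r"\b[A-Z][A-Z0-9_]+-\d+\b")


def issue_keys(commit_message: str) -> list[str]:
    """Return unique issue keys in the order they first appear."""
    return list(dict.fromkeys(ISSUE_KEY.findall(commit_message)))


def planned_transitions(commit_message: str, pr_url: str) -> list[dict]:
    """Build (but do not send) the ticket updates an agent might propose.

    The target status name is a hypothetical workflow convention.
    """
    return [
        {"issue": key, "to_status": "Code Review", "link": pr_url}
        for key in issue_keys(commit_message)
    ]


msg = "PROF-42: add profile update endpoint (also touches PROF-7, PROF-42)"
print(issue_keys(msg))  # ['PROF-42', 'PROF-7']
```

Separating "what to update" from "send the update" lets a human or a policy check approve the planned transitions before anything changes in the tracker.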

This deep integration significantly reduces administrative overhead, ensures better alignment between code and requirements, and provides real-time visibility into project progress. It transforms JIRA from a static task tracker into a dynamic, AI-augmented project assistant.

GitHub (and other Version Control Systems): AI in the Codebase

GitHub, GitLab, Bitbucket – these platforms are the repositories of an engineering team’s intellectual property. AI desktop agents are deeply embedded here, enhancing everything from code creation to review and deployment.

- Intelligent Pull Request (PR) Creation: After a developer finishes a feature locally, an AI agent can assist in creating a comprehensive PR. This includes drafting a detailed description, suggesting reviewers based on code ownership or expertise, linking to relevant JIRA tickets, and automatically running preliminary static analysis checks.

- Automated Code Review: Beyond basic linting, AI agents conduct sophisticated code reviews. They can identify subtle bugs, suggest performance optimizations, enforce coding standards, check for security vulnerabilities, and even assess the readability and maintainability of the code. Claude Cowork, with its conversational prowess, excels here by providing detailed explanations for its suggestions.

- Conflict Resolution Assistance: When merge conflicts arise, AI agents can analyze the conflicting changes, understand the intent behind each, and suggest intelligent resolutions, significantly speeding up a traditionally tedious process.

- Test Case Generation: For new or modified code, agents can automatically generate a suite of unit, integration, and even end-to-end test cases, ensuring robust test coverage and reducing the burden on developers.

- Documentation and README Updates: As code evolves, agents can automatically update inline documentation, API specifications, and project README files, keeping them synchronized with the codebase.

- Code Search and Understanding: For large codebases, agents can help developers navigate by answering natural language queries like “Show me all functions that interact with the user authentication service” or “Explain how this module handles error logging.” This is particularly useful for onboarding new team members or understanding legacy code.
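"Suggesting reviewers based on code ownership" is often driven by a CODEOWNERS-style file. This is a simplified sketch of that lookup. As in GitHub’s CODEOWNERS, the last matching rule wins. However, matching here uses Python’s `fnmatchcase`, which is looser than real CODEOWNERS syntax. The team handles are hypothetical.

```python
from fnmatch import fnmatchcase

# Simplified ownership rules in the spirit of a CODEOWNERS file (hypothetical teams).
# Later rules override earlier ones. Note: fnmatch's "*" also matches "/",
# unlike gitignore-style patterns, so this is only an approximation.
OWNERSHIP_RULES = [
    ("*", ["@platform-team"]),
    ("src/auth/*", ["@security-team"]),
    ("src/profile/*", ["@profile-team"]),
    ("*.md", ["@docs-team"]),
]


def owners_for(path: str) -> list[str]:
    owners: list[str] = []
    for pattern, rule_owners in OWNERSHIP_RULES:
        if fnmatchcase(path, pattern):
            owners = rule_owners  # last matching rule wins
    return owners


def suggest_reviewers(changed_files: list[str], author: str) -> list[str]:
    """Union of owners across all changed files, minus the PR author, sorted."""
    reviewers = {owner for path in changed_files for owner in owners_for(path)}
    reviewers.discard(author)
    return sorted(reviewers)


print(suggest_reviewers(["src/auth/login.py", "README.md"], author="@alice"))
# ['@docs-team', '@security-team']
```

An agent would typically layer other signals (recent authorship, reviewer load) on top of this baseline. The ownership file remains the part a team can audit.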

While the adoption of AI desktop agents is rapidly expanding, understanding how leading companies have successfully integrated AI into their engineering workflows at scale provides invaluable insights. For a deeper dive into the practical challenges and triumphs of deploying AI code generation tools in large organizations, explore our detailed analysis on how Notion, Ramp, and Braintrust scaled OpenAI Codex across their engineering teams, offering a blueprint for successful enterprise AI adoption.

The integration with GitHub turns the version control system into a dynamic, intelligent partner, improving code quality, accelerating the review process, and fostering a more collaborative development environment.

Beyond JIRA and GitHub: A Holistic Ecosystem

The integration doesn’t stop at JIRA and GitHub. AI desktop agents are connecting to an ever-expanding array of tools:

- Integrated Development Environments (IDEs): Deep IDE plugins (for VS Code, IntelliJ, etc.) allow agents to operate directly within the coding environment, providing real-time suggestions, refactorings, and code generation without leaving the editor.

- CI/CD Pipelines: Agents can monitor CI/CD pipelines, automatically triaging failed builds, suggesting fixes, and even optimizing pipeline configurations for faster execution.

- Communication Platforms (Slack, Microsoft Teams): Agents can participate in team discussions, answer technical questions, summarize meeting notes, and even proactively alert teams to critical issues identified in JIRA or GitHub.

- Documentation Systems (Confluence, Notion): Agents assist in creating, updating, and linking documentation across platforms, ensuring that knowledge bases remain current and accessible.

- Cloud Provider Consoles (AWS, Azure, GCP): For DevOps teams, agents can assist in provisioning infrastructure, debugging cloud-native applications, and optimizing resource utilization by interacting directly with cloud APIs.

This comprehensive integration strategy creates an AI-augmented ecosystem where developers can fluidly move between tasks, confident that their AI partners are providing context-aware assistance at every turn. The goal is to create a “flow state” for engineers, where interruptions are minimized and creative problem-solving is maximized, offloading repetitive and predictable tasks to the AI.

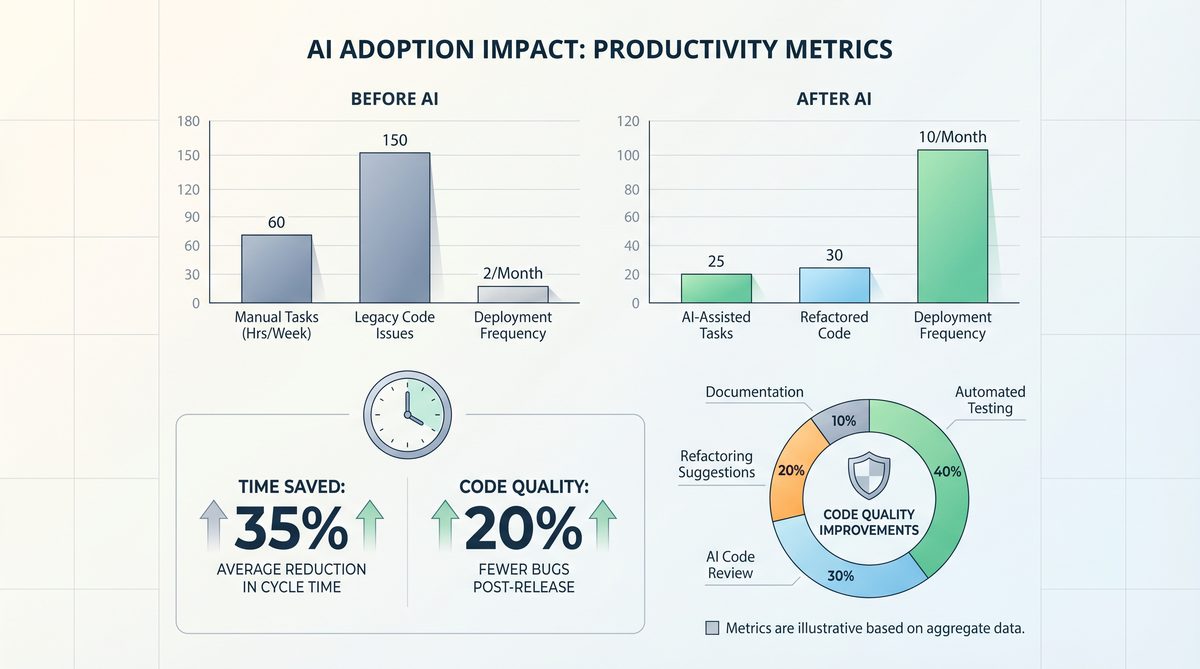

Productivity Gains: Quantifying the Impact of AI Desktop Agents

The adoption of AI desktop agents like OpenAI Codex and Claude Cowork in 2026 is driven by one primary factor: significant, measurable productivity gains. While the exact figures vary between teams and projects, a general consensus has emerged regarding the profound impact these tools have on development velocity, code quality, and developer satisfaction.

Accelerated Development Cycles

One of the most immediate and impactful benefits is the acceleration of the entire development cycle. AI agents contribute to this in several ways:

- Reduced Boilerplate and Repetitive Coding: Engineers report spending significantly less time writing standard CRUD operations, API endpoints, data models, and configuration files. Agents can generate these components in seconds, often with higher consistency and fewer initial errors than manual coding. This is particularly beneficial for microservices architectures where many similar services need to be spun up.

- Faster Prototyping and Experimentation: The ability to quickly generate code snippets or even entire proof-of-concept applications allows teams to iterate much faster. Ideas can be tested and validated more rapidly, leading to quicker product discovery and refinement.

- Onboarding Time Reduction: New team members, especially junior developers, can get up to speed much faster. AI agents can help them navigate large codebases, explain complex functions, and generate initial code for tasks, reducing the dependency on senior engineers for basic guidance.

- Task Completion Speed: For individual tasks, engineers often find that the time taken from understanding the requirement to having a working solution is cut down. This isn’t just about writing code faster, but also about quicker debugging cycles and automated testing.

Studies conducted by early adopters in 2025-2026 suggest an average increase in development velocity of 25-40% for tasks where AI agents are heavily utilized. This translates directly into faster time-to-market for new features and products.

Enhanced Code Quality and Consistency

Beyond speed, AI agents are proving instrumental in elevating the overall quality and consistency of codebases.

- Adherence to Coding Standards: Agents are trained on vast datasets of well-written code and can be fine-tuned to specific team coding standards. They automatically enforce these standards, reducing the need for manual review comments related to style or formatting.

- Improved Security Posture: By identifying common vulnerabilities (e.g., SQL injection, XSS, insecure deserialization) during code generation or review, agents help prevent security flaws from entering the codebase in the first place. Claude Cowork, with its safety-first design, is particularly adept at this.

- Better Test Coverage: Automated test generation by AI agents ensures that new features and bug fixes come with comprehensive test suites, leading to fewer regressions and more stable applications.

- Reduced Technical Debt: Agents can proactively suggest refactorings, identify dead code, and highlight areas of complexity, helping teams manage and reduce technical debt over time.

- Consistent Documentation: Automated generation and updates of documentation mean that code is better explained and easier to understand, benefiting both current and future developers.
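To make the security point concrete, here is the classic SQL injection pattern that a reviewing agent, or a human, should flag, next to its standard fix. This example uses Python’s built-in `sqlite3` module with an in-memory database. The `users` table and function names are illustrative.

```python
import sqlite3


# Flag-worthy: building SQL with string interpolation lets user input rewrite the query.
def find_user_unsafe(conn: sqlite3.Connection, name: str) -> list[tuple]:
    return conn.execute(f"SELECT id, name FROM users WHERE name = '{name}'").fetchall()


# Fix: a parameterized query, so the driver treats `name` strictly as data.
def find_user_safe(conn: sqlite3.Connection, name: str) -> list[tuple]:
    return conn.execute("SELECT id, name FROM users WHERE name = ?", (name,)).fetchall()


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])

payload = "x' OR '1'='1"
print(len(find_user_unsafe(conn, payload)))  # 2: the injected OR clause matches every row
print(len(find_user_safe(conn, payload)))    # 0: no user is literally named that
```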

Developer Satisfaction and Focus

Perhaps less tangible but equally important are the benefits to developer morale and job satisfaction. By offloading mundane, repetitive tasks to AI, engineers can focus on more challenging, creative, and strategically important problems.

- Reduced Cognitive Load: Developers spend less mental energy on remembering syntax, API details, or boilerplate structures, freeing up cognitive resources for higher-level problem-solving and architectural design.

- Less Frustration: Automated debugging assistance and intelligent error explanations reduce the time spent on frustrating bug hunts, allowing developers to maintain a more consistent flow state.

- Empowerment: Junior developers feel more empowered to tackle complex tasks with AI assistance, while senior developers can delegate more effectively and focus on innovation.

- Learning and Skill Development: AI agents can serve as powerful learning tools, explaining complex code, suggesting alternative approaches, and demonstrating best practices. This accelerates skill development across the team.

Anecdotal evidence from engineering managers suggests a noticeable increase in team morale and a decrease in burnout rates, as developers feel more productive and engaged in meaningful work rather than repetitive coding tasks. This is a crucial factor in talent retention in a competitive industry.


Quantifying the Gains: A Snapshot from a 2026 Case Study

Consider a mid-sized SaaS company, “InnovateTech,” which fully integrated OpenAI Codex and Claude Cowork agents into their development workflow in late 2025. Their metrics from Q1 2026 show:

- Feature Delivery Time: Reduced by 30% on average for features involving significant backend API and frontend component development.

- Bug Fix Cycle Time: Decreased by 20%, largely due to AI-assisted debugging and automated test generation.

- Code Review Time: Reduced by 15%, as AI agents performed initial sweeps for common issues, allowing human reviewers to focus on architectural and business logic.

- Test Coverage: Increased from an average of 70% to 88% across new modules, primarily driven by AI-generated tests.

- Developer Onboarding: New hires reached full productivity 25% faster.
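When reading figures like these, it helps to separate percentage-point changes from relative changes. InnovateTech’s coverage moving from 70% to 88% is an 18-point gain, which is roughly a 26% relative increase. The helper names below are illustrative, and the 10-day feature is a hypothetical input.

```python
def relative_change(before: float, after: float) -> float:
    """Percent change relative to the starting value."""
    return (after - before) / before * 100


def point_change(before_pct: float, after_pct: float) -> float:
    """Difference between two percentages, in percentage points."""
    return after_pct - before_pct


def apply_reduction(value: float, reduction_pct: float) -> float:
    """Value after reducing it by reduction_pct percent."""
    return value * (100 - reduction_pct) / 100


print(point_change(70, 88))               # 18 (percentage points of coverage)
print(round(relative_change(70, 88), 1))  # 25.7 (% relative increase)
print(apply_reduction(10, 30))            # 7.0: a hypothetical 10-day feature at -30%
```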

These figures illustrate that while the investment in AI agents requires strategic planning and integration effort, the returns in terms of productivity, quality, and team satisfaction are substantial and quantifiable.

Managing the 43% of AI-Generated Code That Needs Debugging


While AI desktop agents offer unprecedented productivity gains, the reality in 2026 is that they are not infallible. A significant challenge that engineering teams consistently face is the necessity to debug and refine AI-generated code. Industry reports indicate that, on average, 43% of code initially generated by AI agents requires some level of debugging, modification, or correction before it can be considered production-ready. This statistic is not a condemnation of AI, but rather a crucial insight into its current limitations and the evolving role of human engineers.

Why AI-Generated Code Needs Debugging

Several factors contribute to the imperfect nature of AI-generated code:

- Contextual Misunderstanding: While AI agents are becoming increasingly context-aware, they can still misinterpret nuanced requirements, especially in complex, legacy, or highly domain-specific systems. A subtle misunderstanding of business logic or an implicit assumption can lead to functional bugs.

- Hallucinations and Plausible but Incorrect Code: LLMs are known to “hallucinate”—generating output that seems correct and confident but is fundamentally false or nonsensical in context. In code, this manifests as syntactically valid but logically flawed implementations, or references to non-existent APIs/libraries.

- Security Vulnerabilities: While agents like Claude Cowork prioritize safety, others might inadvertently introduce security flaws if not explicitly prompted to consider security or if the training data contained vulnerable patterns. Generic code might not account for specific threat models of an application.

- Performance Bottlenecks: AI-generated code might be functionally correct but inefficient. Agents may not always optimize for specific performance characteristics of a system, leading to slow queries, excessive resource consumption, or suboptimal algorithms.

- Integration Issues: Code generated in isolation might struggle to integrate seamlessly with existing complex architectures, particularly when dealing with intricate data flows, asynchronous operations, or specific error handling patterns.

- Outdated Information: AI models, even those frequently updated, may not always have the very latest information on library versions, framework best practices, or security patches, leading to deprecated code or compatibility issues.

- Lack of Holistic Design Perspective: While agents can generate components, they often struggle with the broader architectural implications, design patterns, and long-term maintainability considerations that a human architect would bring.

- Implicit Requirements: Many requirements in software engineering are implicit – unstated assumptions about user behavior, error handling, or performance. AI agents, by their nature, struggle with these unarticulated needs.
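One hallucination class from the list above, references to non-existent APIs, is cheap to catch mechanically. This is a minimal sketch for Python: it checks whether dotted names such as `json.loads` actually resolve in the current environment. It catches only names that do not exist at all, not misuse of real APIs. It also imports the modules it checks, so it should only be run on trusted module names.

```python
import importlib


def missing_references(references: list[str]) -> list[str]:
    """Return dotted names (module.attr...) that cannot be resolved."""
    missing = []
    for ref in references:
        parts = ref.split(".")
        obj = None
        rest: list[str] = []
        # Find the longest prefix of the name that imports as a module.
        for i in range(len(parts), 0, -1):
            try:
                obj = importlib.import_module(".".join(parts[:i]))
                rest = parts[i:]
                break
            except ImportError:
                continue
        if obj is None:
            missing.append(ref)
            continue
        # Walk the remaining attributes, e.g. "loads" in "json.loads".
        for name in rest:
            if not hasattr(obj, name):
                missing.append(ref)
                break
            obj = getattr(obj, name)
    return missing


print(missing_references(["json.loads", "os.path.join", "json.load_string"]))
# ['json.load_string']
```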

Strategies for Managing and Debugging AI-Generated Code

Engineering teams in 2026 have developed sophisticated strategies to address the 43% debugging challenge, transforming it from a roadblock into a manageable part of the development process.

1. Enhanced Prompt Engineering and Iterative Refinement

- Detailed and Iterative Prompting: Developers are learning to write more precise, comprehensive prompts, often including examples, constraints, and desired output formats. Instead of single-shot prompts, they engage in multi-turn conversations with agents, refining requirements and correcting initial output.

- Providing Contextual Information: Feeding agents relevant documentation, existing code snippets, schema definitions, and API specifications significantly improves the quality of generated code, reducing the likelihood of errors.

- Specifying Constraints and Non-Functional Requirements: Explicitly instructing agents on performance targets, security considerations, and coding standards helps guide the generation process toward more robust solutions.
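The three prompting practices above (clear task, supplied context, explicit constraints) can be made repeatable by assembling prompts from structured fields rather than writing them freehand each time. This is a minimal sketch. The `PromptSpec` fields and section headings are hypothetical, and nothing here calls a model.

```python
from dataclasses import dataclass, field


@dataclass
class PromptSpec:
    """Hypothetical structure for a code-generation request."""
    task: str
    context: dict[str, str] = field(default_factory=dict)  # label -> snippet (schema, API spec, docs)
    constraints: list[str] = field(default_factory=list)   # performance, security, style rules
    output_format: str = "A single code block, no explanation."


def render_prompt(spec: PromptSpec) -> str:
    sections = [f"## Task\n{spec.task}"]
    for label, snippet in spec.context.items():
        sections.append(f"## Context: {label}\n{snippet}")
    if spec.constraints:
        sections.append("## Constraints\n" + "\n".join(f"- {c}" for c in spec.constraints))
    sections.append(f"## Output format\n{spec.output_format}")
    return "\n\n".join(sections)


spec = PromptSpec(
    task="Add an endpoint that updates a user's display name.",
    context={"users table": "CREATE TABLE users (id INTEGER PRIMARY KEY, display_name TEXT)"},
    constraints=["Use parameterized SQL only.", "Reject display names longer than 64 characters."],
)
print(render_prompt(spec))
```

Keeping the spec as data also makes iterative refinement easier to track: each turn can add a constraint or a context snippet instead of rewriting the whole prompt.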

2. Robust Automated Testing Frameworks

- AI-Assisted Test Generation: Paradoxically, AI agents are also used to generate comprehensive unit, integration, and end-to-end tests for the code they (or humans) produce. This acts as an immediate sanity check. If the generated code fails its own AI-generated tests, it’s a clear signal for human intervention.

- Continuous Integration/Continuous Deployment (CI/CD) with AI Hooks: AI-generated code is immediately subjected to the full battery of automated tests, static analysis, and security scans within the CI/CD pipeline. Agents can even analyze build failures and suggest potential fixes.

This shift towards AI-powered desktop agents is not happening in a vacuum; it’s part of a broader enterprise trend where organizations are actively seeking tangible returns on their AI investments, as explored in our comprehensive analysis of real-world enterprise AI adoption case studies demonstrating ROI in 2026.

- Property-Based Testing: For critical components, teams are increasingly using property-based testing, where AI agents help define and verify general properties of the code, rather than just specific examples.
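To illustrate property-based testing without any third-party library, the sketch below hand-rolls the core idea: random inputs plus invariants that must hold for every input. Libraries such as Hypothesis add input shrinking and smarter generation on top. `dedupe` stands in for a hypothetical AI-generated function under test.

```python
import random


def dedupe(items: list[int]) -> list[int]:
    """Stand-in for an AI-generated function: drop repeats, keep first-seen order."""
    seen: set[int] = set()
    out = []
    for x in items:
        if x not in seen:
            seen.add(x)
            out.append(x)
    return out


def check_properties(fn, trials: int = 500, seed: int = 0) -> None:
    """Run fn on random inputs and assert general properties, not fixed examples."""
    rng = random.Random(seed)  # fixed seed keeps any failure reproducible
    for _ in range(trials):
        xs = [rng.randint(-5, 5) for _ in range(rng.randint(0, 20))]
        ys = fn(xs)
        assert len(ys) == len(set(ys)), f"duplicates remain for {xs}"
        assert set(ys) == set(xs), f"elements lost or invented for {xs}"
        assert ys == sorted(set(xs), key=xs.index), f"first-seen order broken for {xs}"
        assert fn(ys) == ys, f"not idempotent for {xs}"


check_properties(dedupe)
print("all properties held")
```

A plausible-looking but wrong version, such as `sorted(set(xs))`, passes many hand-picked examples but fails the order property almost immediately.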

3. Human Oversight and Strategic Code Review

- Human-in-the-Loop Review: The role of the human developer shifts from writing boilerplate to critically reviewing and refining AI-generated code. This involves understanding the AI’s intent, verifying logic, and ensuring alignment with architectural principles.

- Targeted Code Review: Code reviews for AI-generated components often focus on higher-level concerns: architectural fit, security implications, performance characteristics, and adherence to complex business rules, rather than syntax or basic logic.

- Pair Programming with AI: Many teams treat AI agents as a pair programming partner. The human developer drives the high-level design and critical logic, while the AI handles the implementation details, allowing for real-time feedback and correction.

- Distinguishing AI-Generated Code: Some teams are implementing tooling to tag or visually distinguish AI-generated code in PRs, prompting reviewers to pay closer attention to these sections.
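One lightweight way to tag AI-generated changes is a Git commit trailer, which review tooling can then read. This is a minimal sketch. The `AI-Assisted` trailer is a hypothetical team convention, not a Git built-in. The parser is also simplified: real trailer handling (see `git interpret-trailers`) also covers continuation lines and other edge cases.

```python
def trailers(commit_message: str) -> dict[str, str]:
    """Parse "Key: value" trailer lines from the final paragraph of a commit message."""
    paragraphs = [p for p in commit_message.strip().split("\n\n") if p.strip()]
    if len(paragraphs) < 2:
        return {}  # a subject line alone never carries trailers
    result: dict[str, str] = {}
    for line in paragraphs[-1].strip().splitlines():
        key, sep, value = line.partition(":")
        key = key.strip()
        if not sep or not key or " " in key:
            return {}  # final paragraph is ordinary prose, not a trailer block
        result[key] = value.strip()
    return result


def needs_extra_review(commit_message: str) -> bool:
    # "AI-Assisted" is a hypothetical convention a team would agree on.
    return trailers(commit_message).get("AI-Assisted", "").lower() in {"yes", "true"}


msg = (
    "Add profile endpoint\n\n"
    "Handler drafted with an agent, edited by hand.\n\n"
    "AI-Assisted: yes\n"
    "Reviewed-by: Dana <dana@example.com>"
)
print(needs_extra_review(msg))  # True
```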

4. Advanced Debugging Tools and Techniques

- AI-Assisted Debuggers: Debugging tools are becoming more intelligent. AI agents integrated into debuggers can analyze stack traces, variable states, and execution paths to suggest root causes and potential fixes, often explaining complex errors in natural language.

- Observability and Monitoring: Robust logging, tracing, and monitoring systems are crucial. AI agents can analyze these logs in real-time to detect anomalies, identify performance bottlenecks, and pinpoint the source of errors in production environments.

- Root Cause Analysis Automation: For recurring bugs, AI agents can analyze historical data from issue trackers, code repositories, and monitoring systems to suggest common patterns and accelerate root cause analysis.
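A common first step in automated root cause analysis is collapsing many log lines into a few recurring error signatures. This is done by masking the parts that vary between occurrences. The sketch below shows that grouping step. The normalization patterns and sample log lines are illustrative and would need tuning for real logs.

```python
import re
from collections import Counter

# Mask the parts of an error line that vary between occurrences so repeats collapse
# into one signature. Order matters: hex and UUIDs are masked before bare numbers.
_NORMALIZERS = [
    (re.compile(r"0x[0-9a-fA-F]+"), "<hex>"),
    (re.compile(r"\b[0-9a-f]{8}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{12}\b"), "<uuid>"),
    (re.compile(r"'[^']*'|\"[^\"]*\""), "<str>"),
    (re.compile(r"\d+"), "<n>"),
]


def signature(line: str) -> str:
    for pattern, token in _NORMALIZERS:
        line = pattern.sub(token, line)
    return line.strip()


def top_signatures(lines: list[str], n: int = 3) -> list[tuple[str, int]]:
    return Counter(signature(line) for line in lines).most_common(n)


logs = [
    "TimeoutError: request 1842 to 'payments' exceeded 30s",
    "TimeoutError: request 1907 to 'payments' exceeded 30s",
    "KeyError: 'user_id' in handler at 0x7f3a2c",
    "TimeoutError: request 2011 to 'ledger' exceeded 30s",
]
print(top_signatures(logs, 1))
# [('TimeoutError: request <n> to <str> exceeded <n>s', 3)]
```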

5. Training and Skill Development for Engineers

- “AI Literacy” Training: Engineers are receiving training on how to effectively prompt AI agents, interpret their output, and efficiently debug the generated code. This includes understanding the limitations and biases of different models.

- Focus on Higher-Order Skills: The emphasis shifts from rote coding to architectural design, complex problem-solving, system integration, and critical evaluation of AI outputs.

- Specialization in AI Agent Orchestration: Some engineers are specializing in designing, deploying, and maintaining the agent frameworks themselves, ensuring they are optimally configured for the team’s specific needs.

The 43% figure is not an indictment of AI, but a testament to the evolving partnership between humans and machines. It underscores that while AI excels at generating code, human intelligence remains indispensable for ensuring its correctness, security, performance, and alignment with complex business objectives. The future of engineering in 2026 is not about AI replacing humans, but about AI augmenting human capabilities, creating a more efficient, creative, and ultimately, more productive development landscape.

The Evolving Role of the Engineer in an AI-Augmented World

With the pervasive adoption of AI desktop agents like OpenAI Codex and Claude Cowork, the traditional role of a software engineer is undergoing a profound transformation. In 2026, the engineer is no longer solely a coder; the role now spans those of “prompt engineer,” “system architect,” “critical validator,” and “collaborative orchestrator.” This shift redefines skill sets, team structures, and career paths within the engineering domain.

From Coder to Prompt Engineer and AI Orchestrator

The most immediate and visible change is the emphasis on prompt engineering. Engineers now spend a significant portion of their time crafting precise, detailed prompts and refining them iteratively to guide AI agents. This requires:

- Deep Domain Knowledge: To instruct an AI effectively, an engineer must possess a thorough understanding of the problem domain, business logic, and desired outcomes. The AI provides the “how,” but the engineer defines the “what” and “why.”

- Contextual Awareness: Providing the AI with the right context (existing code, relevant documentation, architectural diagrams, error logs) is crucial. Engineers become adept at synthesizing this information and presenting it to the AI in an actionable format.

- Iterative Refinement: Rather than expecting perfect code on the first try, engineers learn to engage in a conversational loop with the AI, refining prompts based on initial outputs, asking clarifying questions, and guiding the AI towards the desired solution.

- Tooling Expertise: Mastering the specific features and integration points of different AI agents and their surrounding frameworks becomes a core skill. Understanding when to use Codex for raw generation versus Cowork for review or explanation is key.
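The iterative-refinement loop described in this list can be made concrete. The following sketch assumes two hypothetical callables. `generate` stands in for whatever prompt-to-code call your agent exposes, and `run_tests` returns a list of failure messages, where an empty list means a pass. Rather than re-prompting from scratch, each round folds the previous attempt and its concrete failures back into the prompt. The loop stops when tests pass or a round limit is reached.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical interfaces: `Generate` stands in for your agent's
# prompt-to-code call; `RunTests` returns failure messages (empty = pass).
Generate = Callable[[str], str]
RunTests = Callable[[str], list[str]]


@dataclass
class Attempt:
    prompt: str
    code: str
    failures: list[str] = field(default_factory=list)


def refine(task: str, generate: Generate, run_tests: RunTests,
           max_rounds: int = 3) -> list[Attempt]:
    """Prompt, test, and feed failures back until tests pass or rounds run out."""
    history: list[Attempt] = []
    prompt = task
    for _ in range(max_rounds):
        code = generate(prompt)
        failures = run_tests(code)
        history.append(Attempt(prompt, code, failures))
        if not failures:
            break  # tests pass; the result still goes to human review
        # Build the next prompt from the original task, the previous attempt,
        # and its concrete failures, instead of starting over.
        prompt = (
            f"{task}\n\nPrevious attempt:\n{code}\n\n"
            "It failed these checks:\n"
            + "\n".join(f"- {msg}" for msg in failures)
        )
    return history
```

Returning the full history, rather than only the final code, gives the reviewing engineer a record of what the agent tried and why each attempt was rejected.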

Beyond prompting, engineers are increasingly orchestrating multiple AI agents, designing workflows where different agents handle specific parts of the development process, from initial task breakdown to code generation, testing, and deployment. This requires a systems-thinking approach and an understanding of how to chain AI capabilities effectively.
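A multi-agent workflow of this kind can be modeled as an ordered list of named stages that pass a shared handoff record along. In this sketch, the `Handoff` schema, `run_pipeline`, and the stage functions are hypothetical. Each stage could wrap a different agent, for example one for task breakdown and another for review. A stage that sets `blocked` halts the chain and escalates to a human, so later stages such as deployment never run on unverified work.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Handoff:
    """State passed between agent stages (hypothetical schema)."""
    task: str
    artifacts: dict[str, str] = field(default_factory=dict)
    log: list[str] = field(default_factory=list)
    blocked: Optional[str] = None  # set by a stage that needs a human


Stage = Callable[[Handoff], Handoff]


def run_pipeline(handoff: Handoff, stages: list[tuple[str, Stage]]) -> Handoff:
    """Run named stages in order, stopping early if one flags the work as blocked."""
    for name, stage in stages:
        handoff = stage(handoff)
        handoff.log.append(name)
        if handoff.blocked:
            handoff.log.append(f"escalated to human: {handoff.blocked}")
            break  # later stages (e.g. deploy) never see unverified work
    return handoff
```

Because the stages are plain callables, swapping one agent for another means changing a single entry in the list. The orchestration logic stays the same.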

The Rise of the Critical Validator and Quality Guardian

As the 43% debugging statistic discussed earlier makes clear, the human engineer’s role as a critical validator of AI-generated code is paramount. This involves:

- Architectural Oversight: Ensuring that AI-generated code aligns with the overall system architecture, design patterns, and long-term scalability goals. Current AI agents still struggle to apply this level of reasoning consistently.

- Security and Compliance Scrutiny: While AI can assist with security, the ultimate responsibility for ensuring code security and compliance with industry regulations rests with the human engineer. They must verify that AI-generated solutions don’t introduce new vulnerabilities or violate ethical guidelines.

- Performance Optimization: Reviewing AI-generated code for performance bottlenecks and applying human expertise to optimize algorithms, database queries, and resource utilization.

- Business Logic Verification: Confirming that the AI-generated code accurately implements complex business rules and edge cases, which often require nuanced understanding beyond what an AI can infer.

- Maintainability and Readability: Ensuring that the generated code is not just functional but also maintainable, readable, and understandable by other human engineers. This often involves refactoring and adding human-centric comments.

The engineer becomes the ultimate quality guardian, leveraging AI to accelerate creation but applying their unique human judgment to ensure correctness, robustness, and long-term viability.

Focus on Higher-Order Problem Solving and Innovation

By offloading repetitive and predictable coding tasks to AI, engineers are freed to focus on higher-value activities:

- Complex Problem Solving: Tackling truly novel, ambiguous, or conceptually difficult problems that require creative thinking, abstract reasoning, and deep analytical skills.

- System Design and Architecture: Spending more time on designing robust, scalable, and resilient systems, exploring new architectural patterns, and making strategic technology choices.

- Innovation and Research: Exploring new technologies, experimenting with novel solutions, and pushing the boundaries of what’s possible, rather than being bogged down by implementation details.

- Mentorship and Collaboration: Senior engineers can dedicate more time to mentoring junior team members, fostering a culture of knowledge sharing, and collaborating on complex cross-functional initiatives.

- Understanding User Needs: Deeper engagement with product managers and users to truly understand their pain points and translate them into effective technical solutions.

This shift makes the engineering role more intellectually stimulating and impactful, transforming engineers from code producers to strategic problem-solvers and innovators.

New Skill Sets and Career Paths

The AI-augmented engineering landscape necessitates the development of new skills and potentially new career specializations:

- AI Literacy: A fundamental understanding of how LLMs work, their capabilities, and their limitations.

- Critical Thinking and Verification: The ability to critically evaluate AI output, identify potential flaws, and verify correctness.

- System Integration Expertise: Proficiency in integrating various AI agents and tools into complex development workflows.

- Ethical AI Considerations: Awareness of the ethical implications of AI-generated code, including bias, fairness, and responsible deployment.

- Human-AI Collaboration: Skills in effectively communicating with and collaborating alongside AI agents.

We may see the emergence of roles like “AI Workflow Engineer,” “Prompt Architect,” or “AI Code Auditor,” specializing in optimizing human-AI collaboration within engineering teams.

In conclusion, the engineer in 2026 is an empowered individual who uses AI as a powerful extension of their capabilities. The role is less about the mechanics of coding and more about strategic thinking, critical evaluation, and creative problem-solving. This evolution promises a future in which engineers reach new levels of productivity and innovation and drive technological progress faster than before.

Useful Links

- OpenAI Codex Official Page (Archived for Historical Context)

- Anthropic’s Claude Latest Information

- GitHub Copilot: An Early Precursor to Desktop Agents

- Jira Software Official Site

- Forbes Article: The Future of Software Engineering

- Accenture: Generative AI in Software Development

- McKinsey: The Economic Potential of Generative AI

- IEEE: AI and the Future of Work

Frequently Asked Questions

How do AI desktop agents improve engineering workflows?

AI desktop agents enhance workflows by automating repetitive tasks, assisting in code generation, and providing intelligent suggestions. They integrate into existing toolchains, allowing engineers to focus on complex problem-solving and innovation.

What are the main challenges of using AI desktop agents?

The primary challenges include debugging AI-generated code, ensuring seamless integration with existing systems, and managing the learning curve associated with new technologies. Teams must also address potential security and data privacy concerns.

Which AI desktop agents are leading in 2026?

In 2026, OpenAI Codex and Claude Cowork are prominent AI desktop agents. They offer robust capabilities for code generation and task automation, each with unique integration approaches and target use cases tailored to engineering needs.

Can AI desktop agents replace human engineers?

AI desktop agents are not replacements for human engineers. Instead, they serve as powerful tools that augment human capabilities, handling routine tasks and providing insights, thus allowing engineers to focus on strategic and creative aspects of development.

What are the benefits of integrating AI agents into toolchains?

Integrating AI agents into toolchains can lead to faster development cycles, reduced manual coding efforts, and enhanced decision-making support. They democratize access to advanced coding capabilities, making engineering more efficient and innovative.

How do teams choose the right AI desktop agent?

Choosing the right AI desktop agent involves evaluating specific team needs, existing toolchain compatibility, and the agent's strengths in handling desired tasks. Teams should also consider the agent's adaptability and support for ongoing development goals.
