Google’s New TPU 8t and Inference Chips: What Developers Need to Know About the Nvidia Challenge

At the Google Cloud Next 2026 conference, Google unveiled the TPU 8t, its latest AI accelerator, along with a new line of inference chips. Both are aimed at improving computational efficiency and at challenging Nvidia’s dominance in the AI hardware market. This article covers what was announced and what it means for developers and businesses.

The TPU 8t: A Leap Forward in AI Processing

Google’s TPU (Tensor Processing Unit) series has long been central to the company’s AI hardware, and the TPU 8t is the newest generation. It is designed to handle demanding machine learning workloads, particularly large-scale training, with greater speed and efficiency than its predecessors.

Key Features of TPU 8t

- Increased Computational Power: The TPU 8t offers a substantial increase in peak throughput (measured in teraflops) over earlier TPUs, which helps with complex model training and real-time data processing.

- Energy Efficiency: An improved architecture reduces energy consumption per unit of work, which matters most in large-scale data centers.

- Scalability: Integrates into existing systems, allowing businesses to scale operations without major infrastructure changes.

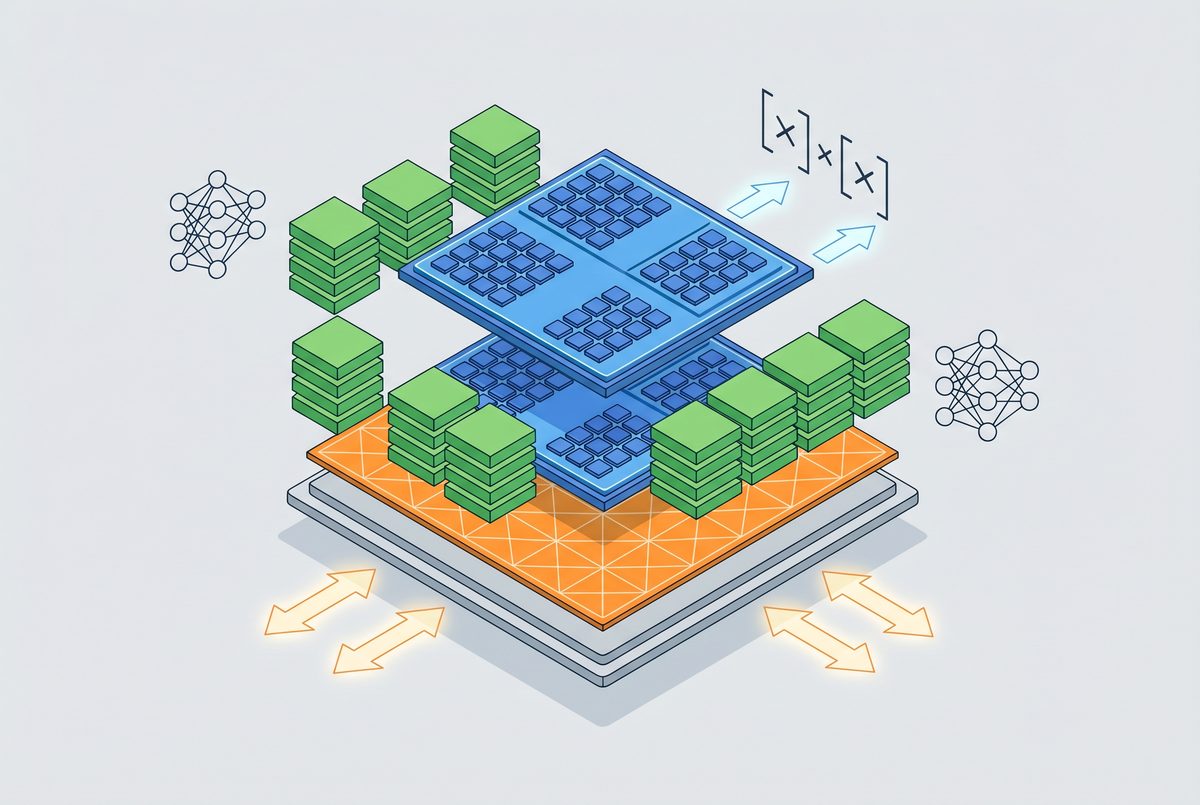

The TPU 8t’s architecture builds on the matrix-multiply hardware of previous TPU generations (the TPU counterpart to Nvidia’s tensor cores) to improve speed and performance. For developers, the practical effect is more headroom for training and running larger models.
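To put a teraflops figure in context, a common rule of thumb estimates the compute needed to train a transformer at roughly 6 × parameters × training tokens floating-point operations. The sketch below turns that into a rough wall-clock estimate. The function name `training_days` and every number in the example call (peak teraflops per chip, chip count, utilization) are illustrative placeholders, not published TPU 8t specifications.

```python
def training_days(params, tokens, peak_tflops_per_chip, chips, utilization=0.4):
    """Rough training-time estimate from the ~6 * N * D rule of thumb.

    params: number of model parameters (N)
    tokens: number of training tokens (D)
    peak_tflops_per_chip: vendor peak throughput per chip, in teraflops
    chips: number of accelerators used in parallel
    utilization: fraction of peak actually sustained (an assumption)
    """
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    total_flops = 6 * params * tokens
    # Sustained cluster throughput in FLOP/s (1 teraflop = 1e12 FLOP/s).
    sustained_flops_per_s = peak_tflops_per_chip * 1e12 * chips * utilization
    seconds = total_flops / sustained_flops_per_s
    return seconds / 86_400  # seconds per day


if __name__ == "__main__":
    # Placeholder inputs: a 7B-parameter model trained on 1T tokens.
    days = training_days(
        params=7e9,
        tokens=1e12,
        peak_tflops_per_chip=1000,  # hypothetical, not a real spec
        chips=256,
        utilization=0.4,
    )
    print(f"Estimated training time: {days:.1f} days")
```

Under this model, doubling either the per-chip throughput or the chip count halves the estimate, and sustained utilization matters as much as the headline peak number.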

New Inference Chips: Optimized for Efficient AI Deployment

In addition to the TPU 8t, Google has introduced a new line of inference chips designed specifically to optimize AI model deployment. These chips are aimed at improving the efficiency of AI inference tasks, which are critical for real-time applications such as autonomous vehicles and smart devices.

Advantages of Google’s Inference Chips

- Low Latency: Designed to process data quickly, reducing the time between a request arriving and the model returning its output.

- High Throughput: Capable of serving many inference requests concurrently, which is essential for high-traffic and multi-model workloads.

- Cost-Effective: Offers a balance between performance and cost, making it accessible for a wide range of applications.

These inference chips reflect Google’s focus on scalable hardware for AI deployment. By targeting the latency and cost challenges of real-time inference, Google positions itself as a serious competitor in the AI hardware market.
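Latency and throughput are separate measurements, and it helps to capture both when comparing inference hardware. The following is a minimal sketch of such a measurement, not a vendor benchmark. The `fake_model` function is a hypothetical stand-in that only sleeps; in practice it would be replaced with a real inference call on the hardware under test.

```python
import statistics
import time


def fake_model(batch):
    """Hypothetical stand-in for an inference call; it only simulates work."""
    time.sleep(0.001)
    return [x * 2 for x in batch]


def benchmark(infer, batch, runs=50, warmup=5):
    """Time repeated calls to `infer` and summarize latency and throughput."""
    # Warm-up calls are excluded so one-time setup cost does not skew results.
    for _ in range(warmup):
        infer(batch)

    latencies = []
    for _ in range(runs):
        start = time.perf_counter()
        infer(batch)
        latencies.append(time.perf_counter() - start)

    latencies.sort()
    # Approximate 99th percentile by picking a rank in the sorted samples.
    p99_index = min(len(latencies) - 1, int(0.99 * len(latencies)))
    return {
        "p50_ms": statistics.median(latencies) * 1e3,
        "p99_ms": latencies[p99_index] * 1e3,
        # Items processed per second of measured inference time.
        "items_per_s": runs * len(batch) / sum(latencies),
    }


if __name__ == "__main__":
    print(benchmark(fake_model, batch=[1, 2, 3, 4]))
```

Larger batches usually raise throughput at the expense of per-request latency, so the two numbers should be read together rather than optimized in isolation.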

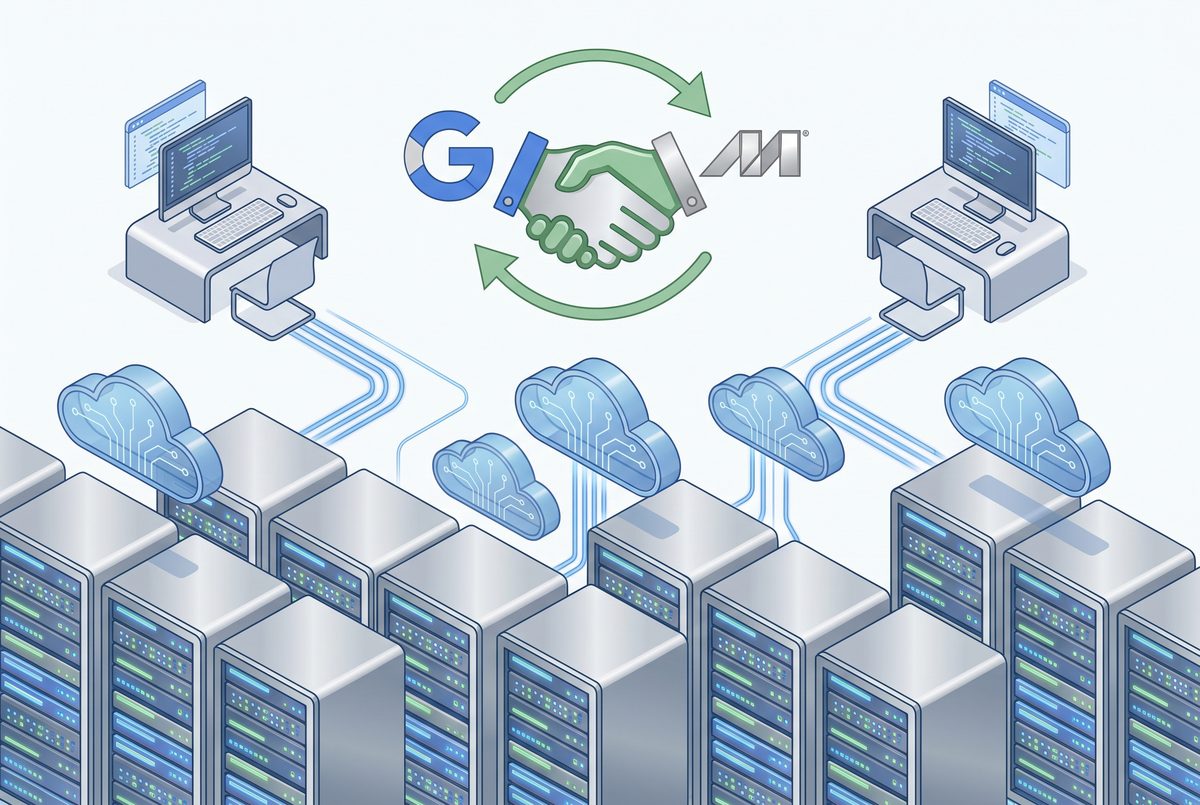

Google’s Strategic Moves: Partnering with Marvell and Others

To bolster its hardware offerings, Google announced a strategic partnership with Marvell, a leader in data infrastructure semiconductor solutions. This collaboration is part of a larger strategy involving a four-partner supply chain, designed to ensure reliability and innovation in Google’s hardware production.

“Our partnerships are central to delivering the most advanced AI hardware solutions,” said a Google spokesperson at the conference.

The multi-partner arrangement is expected to expand manufacturing capacity and help Google’s chips integrate into a wider range of systems.

The Competitive Landscape: Challenging Nvidia

Google’s announcements at the 2026 conference signal a direct challenge to Nvidia, a long-standing leader in the AI hardware market. Nvidia’s GPUs have been the default choice for many AI developers, and Google is positioning the TPU 8t and its inference chips as alternatives on both performance and cost.

Developers now have more options to choose from, which could lead to increased innovation and competition in AI applications.

Implications for Developers and Businesses

For developers, the introduction of the TPU 8t and inference chips opens up new possibilities for designing and deploying AI models. These chips promise to reduce the time and cost associated with AI development, allowing for more rapid iteration and deployment of AI solutions.

Businesses stand to benefit from these advancements by gaining access to more powerful AI tools that can enhance their operational efficiencies and open new avenues for innovation. The scalability and cost-effectiveness of the new hardware make it a viable option for companies of all sizes looking to leverage AI technology.

Conclusion: A New Era in AI Hardware

Google’s latest hardware announcements give developers and businesses another serious option for AI processing. By challenging Nvidia’s dominance, Google is fostering a competitive environment that will likely spur further innovation in the industry. As these technologies mature, they are likely to play an important role in shaping the future of AI.

Stay tuned to chatgptaihub.com for more updates and in-depth analyses of the latest trends in AI technology.