Mastering Multi-Agent Prompts: Advanced Techniques for Codex and Claude Code Orchestration

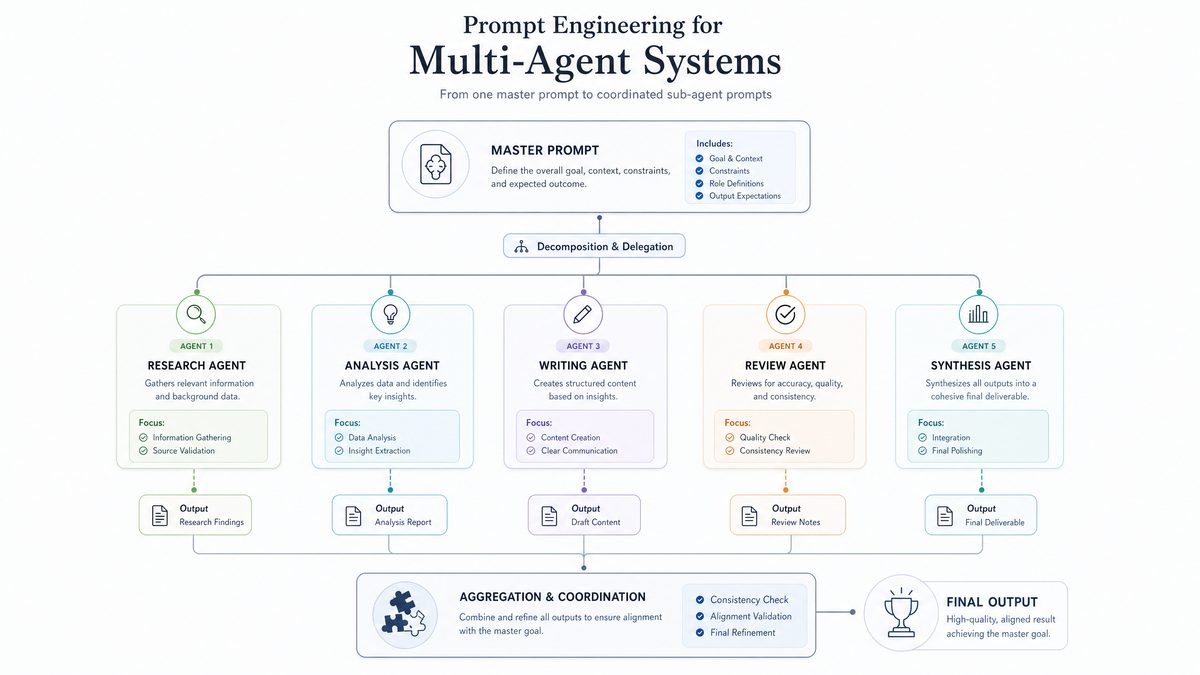

Multi-agent prompting coordinates several AI agents, each specialized in a particular function, so that together they can solve tasks too intricate for a single prompt. This article covers advanced prompt engineering methods for orchestrating multi-agent systems, with a particular focus on OpenAI’s Codex and Anthropic’s Claude Code. We explore the management of root and sub-agents, the setting of thread caps to tune concurrency, and the use of persistent goals to guide agents toward long-term objectives in modern AI workspaces.

This guide is written for AI practitioners, prompt engineers, and technical decision-makers who want to apply multi-agent orchestration to coding, automation, and AI-driven workflows. We also review historical developments and real-world case studies, and look ahead to future trends in AI collaboration frameworks.

Understanding Multi-Agent Systems in AI: Foundations and Evolution

The Concept of Multi-Agent AI Architectures

Multi-agent systems (MAS) represent a paradigm in artificial intelligence where multiple autonomous agents interact within an environment to solve problems collaboratively or competitively. Unlike monolithic AI models, MAS divides responsibilities among specialized agents, often mirroring human team dynamics. This decentralization fosters modularity, scalability, and robustness, especially in complex domains such as software development, data analysis, and real-time decision-making.

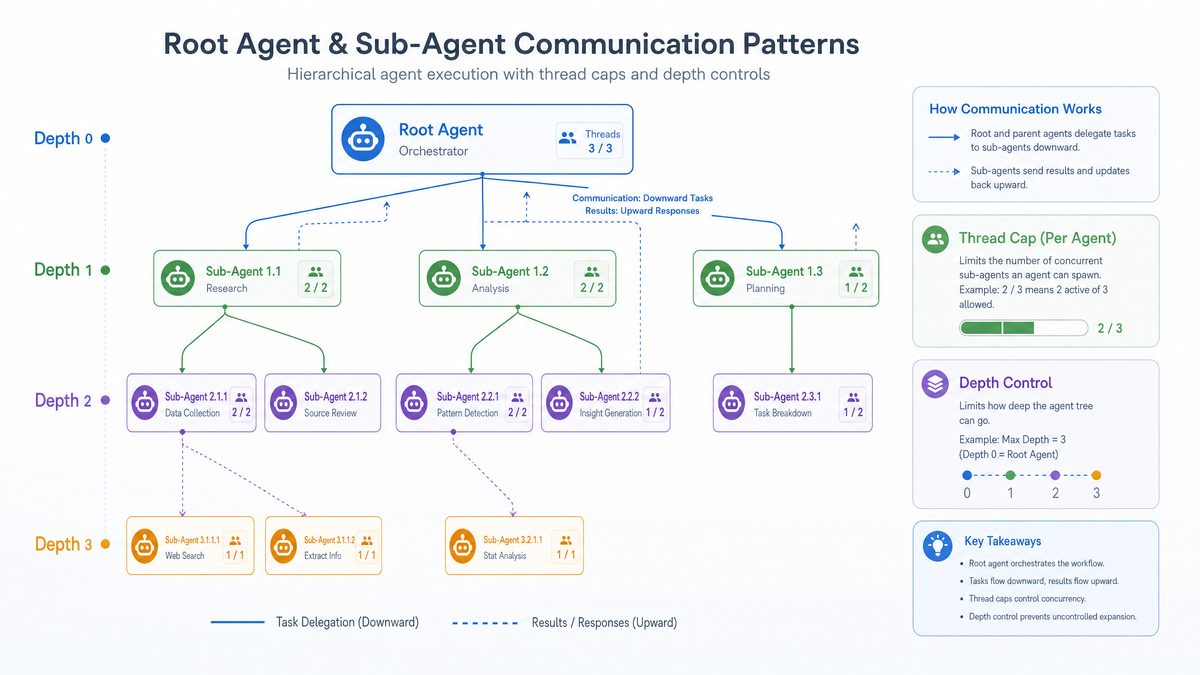

In the context of large language models (LLMs), multi-agent prompting involves orchestrating multiple model instances or agents, each tailored with specific prompt instructions, knowledge scopes, or operational parameters. These agents can be designated as root or sub-agents, where the root agent typically coordinates the overall task flow, delegating subtasks to sub-agents specialized in functions like code generation, debugging, or documentation.

To better understand this, imagine a software development team: the project manager (root agent) assigns tasks to developers (sub-agents), testers, and documentation specialists, coordinating their work to produce a final product. Similarly, in MAS, agents are assigned specialized roles, which collectively contribute to the solution.

Historical Context: From Single-Model Responses to Collaborative AI Agents

Initially, language models were used in a linear fashion: one prompt, one response. While effective for straightforward tasks, this approach struggled with multifaceted problems requiring multiple, interdependent reasoning steps. Multi-agent prompting draws on several related developments: tool delegation in OpenAI’s ChatGPT plugins, the critique-and-revise loop in Anthropic’s Constitutional AI (where a model checks outputs against written principles), and research into cooperative AI frameworks.

The transition toward multi-agent setups was motivated by the need to overcome limitations inherent in single-agent deployments:

- Task Specialization: Different agents can be optimized for different subdomains, improving accuracy and efficiency. For example, one agent might specialize in code syntax, another in logical reasoning, and another in user interface design.

- Parallel Processing: Multiple agents working in parallel can accelerate complex workflows. Instead of sequentially processing subtasks, agents can operate concurrently, reducing overall latency.

- Robustness and Error Handling: Redundancy among agents allows error detection and correction through consensus or arbitration. If one agent produces inconsistent output, others can flag or correct the error.

- Enhanced Creativity and Problem Solving: By having diverse agents with different perspectives or heuristics, MAS can explore a wider solution space, leading to more innovative outcomes.

These developments mark a significant shift in AI design philosophy—from isolated task execution to collaborative problem-solving ecosystems. Contemporary research continues to explore how agent communication protocols, incentive structures, and learning mechanisms can be optimized for multi-agent interactions.

Key Terminology: Root Agents, Sub-Agents, Thread Caps, and Persistent Goals

To master multi-agent prompting, it is essential to understand the terminology shaping this discipline:

- Root Agent: The primary orchestrator that manages the overall workflow, delegating responsibilities to sub-agents and integrating their outputs. It acts as the “conductor” in an orchestra, ensuring all parts work harmoniously.

- Sub-Agent: A specialized agent tasked with handling specific subtasks or modules, often operating under constraints defined by the root agent. Sub-agents can be viewed as “specialists” focusing on particular aspects of the problem.

- Thread Cap: A concurrency control mechanism that limits the number of active threads or agents running simultaneously, balancing throughput with resource constraints. This is crucial to avoid overloading computational resources or facing API rate limits.

- Persistent Goals: Overarching objectives maintained across agent interactions and sessions, ensuring alignment and continuity in task execution. These goals provide strategic direction beyond individual prompt instructions.

- Agent Communication Protocols: The defined methods and formats by which agents exchange information, including message passing, status updates, and error reporting.

- Context Window: The span of tokens, covering the prompt and any conversation or data history, that a model can take into account in a single request. Its size is capped by the model’s token limit.

These concepts form the foundation upon which advanced prompt engineering techniques are constructed. A thorough understanding facilitates designing scalable, efficient, and maintainable multi-agent AI systems.

Advanced Techniques for Managing Root and Sub-Agents

Structuring Effective Root Agent Prompts

The root agent serves as the central command unit in multi-agent orchestration. Its prompt must clearly define the overall problem, set expectations for sub-agent collaboration, and maintain context continuity. Key considerations include:

- Explicit Role Definition: Clearly instruct the root agent on its role as coordinator, specifying how it should delegate tasks and synthesize responses. For example, “You are the root agent responsible for managing and consolidating the outputs of specialized sub-agents.”

- Contextual Awareness: Include necessary background information and maintain state across interactions to avoid information loss. Utilize techniques such as context caching, session summaries, or external memory references.

- Fallback Protocols: Define strategies for handling sub-agent failures or ambiguous outputs, such as retry mechanisms, escalation procedures, or invoking alternative agents.

- Prioritization Rules: Instruct the root agent on how to prioritize conflicting outputs or incomplete results, for example by favoring agents with higher confidence scores or domain expertise.

- Output Formatting Instructions: Specify clear formatting requirements for the final consolidated output to ensure consistency and ease of downstream processing.

Example prompt snippet for a root agent orchestrating code review:

You are the root agent coordinating a code review workflow. Your task is to delegate syntax checking, logical analysis, and documentation verification to respective sub-agents. Collect their feedback, resolve conflicts, and generate a comprehensive review report formatted as a structured JSON object with fields for each review aspect.
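
The delegation and consolidation described in this prompt can also be sketched in orchestration code. The example below is a minimal illustration, not a reference implementation: `run_code_review`, the `SubAgent` type, and the stub agents are hypothetical names, and each stub stands in for what would be a real model call.

```python
import json
from typing import Callable, Dict

# Hypothetical sub-agent type: takes source code, returns feedback text.
# In a real system each callable would wrap a model call; here they are stubs.
SubAgent = Callable[[str], str]


def run_code_review(code: str, sub_agents: Dict[str, SubAgent]) -> str:
    """Delegate to each sub-agent and consolidate feedback into one JSON report."""
    report = {}
    for aspect, agent in sub_agents.items():
        try:
            report[aspect] = {"status": "ok", "feedback": agent(code)}
        except Exception as exc:
            # Fallback protocol: record the failure instead of aborting the review.
            report[aspect] = {"status": "failed", "feedback": str(exc)}
    return json.dumps(report, indent=2)


def _unavailable_agent(code: str) -> str:
    raise RuntimeError("documentation agent unavailable")


stub_agents: Dict[str, SubAgent] = {
    "syntax": lambda code: "no syntax errors found",
    "logic": lambda code: "loop bound may be off by one",
    "documentation": _unavailable_agent,
}

review = json.loads(run_code_review("for i in range(n + 1): pass", stub_agents))
```

Keeping one field per review aspect means a failed sub-agent shows up as a marked entry in the report rather than as a missing one, which gives the root agent something concrete to retry or escalate.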

Designing Sub-Agent Prompts for Specialization

Sub-agents require focused prompts that empower them to excel in their designated roles. When crafting sub-agent prompts, consider:

- Domain Specialization: Tailor instructions to specific expertise areas such as syntax validation, performance optimization, security auditing, or testing automation.

- Input Constraints: Define clear input-output expectations to prevent scope creep and maintain modularity. Ensure sub-agents know exactly what data to process and how to format their responses.

- Inter-Agent Communication: Incorporate mechanisms for sub-agents to report status, request clarifications, or flag issues to the root agent. This can be achieved through structured message protocols embedded in their prompts.

- Error Reporting: Instruct sub-agents to explicitly indicate uncertainty or inability to complete a task, enabling root agents to take corrective actions.

- Resource Awareness: Guide sub-agents to optimize their output length and complexity to respect token limits and computational budgets.

Example sub-agent prompt for a code syntax checker:

You are a syntax validation agent. Analyze the provided code snippet for syntax errors and report any issues with line numbers and suggestions for correction. Do not alter the code. Provide your response as a JSON array of error objects, each containing 'line', 'error', and 'suggestion' fields.
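
Because the prompt fixes the response shape, the root agent (or the code around it) can reject replies that break the contract before using them. The following is a small sketch under that assumption; `parse_syntax_report` and `REQUIRED_FIELDS` are illustrative names, and treating `line` as an integer is an assumption the prompt itself does not state.

```python
import json
from typing import Any, Dict, List

# Field names come from the prompt above; the types are an assumption.
REQUIRED_FIELDS = {"line": int, "error": str, "suggestion": str}


def parse_syntax_report(raw: str) -> List[Dict[str, Any]]:
    """Parse a sub-agent reply and reject anything that breaks the agreed schema."""
    data = json.loads(raw)  # raises json.JSONDecodeError (a ValueError) on bad JSON
    if not isinstance(data, list):
        raise ValueError("expected a JSON array of error objects")
    for item in data:
        if not isinstance(item, dict):
            raise ValueError("each entry must be a JSON object")
        for field, expected_type in REQUIRED_FIELDS.items():
            if not isinstance(item.get(field), expected_type):
                raise ValueError(
                    f"field {field!r} is missing or not {expected_type.__name__}"
                )
    return data


issues = parse_syntax_report(
    '[{"line": 3, "error": "missing colon", "suggestion": "add a colon"}]'
)
```

A `ValueError` here is a natural trigger for the fallback protocols discussed earlier, such as asking the sub-agent to retry with the schema restated.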

Techniques for Seamless Integration of Sub-Agent Outputs

Integrating sub-agent outputs into a cohesive final product is a significant challenge. Strategies include:

- Structured Response Formats: Mandate JSON, XML, or other machine-readable structures to facilitate automated parsing and aggregation. This reduces ambiguity in interpreting agent outputs.

- Weighted Consensus: Assign confidence scores or reliability metrics to sub-agent outputs and resolve inconsistencies based on weighted voting or trust models.

- Iterative Refinement: Allow the root agent to request clarifications or additional passes from sub-agents if outputs conflict or lack completeness. This iterative feedback loop can enhance accuracy.

- Conflict Resolution Protocols: Define explicit rules for handling contradictory outputs, such as prioritizing certain agents or requesting a tie-breaker agent’s input.

- Aggregation Techniques: Use summarization or fusion methods to consolidate multiple sub-agent responses into a unified final output, preserving essential details while eliminating redundancy.
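
Weighted consensus is the most mechanical of these strategies, so a short sketch may help. It assumes each sub-agent returns an answer together with a positive confidence weight; how those weights are obtained is left open, and `weighted_consensus` is a hypothetical helper name.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def weighted_consensus(votes: List[Tuple[str, float]]) -> Tuple[str, float]:
    """Return the answer with the largest total weight and its share of all weight."""
    if not votes:
        raise ValueError("at least one vote is required")
    totals: Dict[str, float] = defaultdict(float)
    for answer, weight in votes:
        if weight <= 0:
            raise ValueError("weights must be positive")
        totals[answer] += weight
    winner = max(totals, key=lambda answer: totals[answer])
    return winner, totals[winner] / sum(totals.values())


# One confident "approve" is outweighed by two moderately confident "reject" votes.
winner, share = weighted_consensus(
    [("approve", 0.9), ("reject", 0.6), ("reject", 0.5)]
)
```

The returned share can double as a trigger for iterative refinement: if the winning answer holds only a narrow majority of the weight, the root agent can request another pass or call a tie-breaker agent.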

Case Study: Coordinating Code Generation and Testing Agents

Consider a scenario where the root agent oversees both code generation and automated testing sub-agents. The root agent’s prompt must coordinate the sequence—first generating code, then triggering tests, followed by review of test outcomes to determine if regeneration or debugging is necessary.

In this workflow, the root agent:

- Assigns the code generation sub-agent a detailed specification and monitors its output for completeness.

- Dispatches the generated code to the testing sub-agent, which runs unit tests, integration tests, and reports results.

- Evaluates test results to decide whether to accept the code, request bug fixes, or regenerate portions.

- Maintains a session context tracking the history of iterations and detected issues, enabling intelligent decision-making.

This approach enhances reliability by enforcing a rigorous feedback loop, ensuring that code is not only syntactically correct but also functionally verified. The root agent’s ability to dynamically adapt instructions based on sub-agent feedback exemplifies advanced orchestration.
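
The feedback loop in this case study can be sketched as a bounded generate-and-test cycle. Everything named here (`generate_until_green`, `SuiteResult`, `Session`, and the stub agents) is hypothetical, and the iteration limit of three is an arbitrary assumption.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional, Tuple


@dataclass
class SuiteResult:
    passed: bool
    failures: List[str] = field(default_factory=list)


@dataclass
class Session:
    # History of test outcomes, one entry per iteration.
    history: List[SuiteResult] = field(default_factory=list)


def generate_until_green(
    spec: str,
    generate: Callable[[str, List[str]], str],
    run_tests: Callable[[str], SuiteResult],
    max_iterations: int = 3,
) -> Tuple[Optional[str], Session]:
    """Regenerate code with test feedback until tests pass or the budget runs out."""
    session = Session()
    feedback: List[str] = []
    for _ in range(max_iterations):
        code = generate(spec, feedback)
        result = run_tests(code)
        session.history.append(result)
        if result.passed:
            return code, session
        feedback = result.failures  # passed to the next generation attempt
    return None, session


# Stubs standing in for the code generation and testing sub-agents.
def stub_generate(spec: str, feedback: List[str]) -> str:
    return "fixed" if feedback else "buggy"


def stub_run_tests(code: str) -> SuiteResult:
    if code == "fixed":
        return SuiteResult(passed=True)
    return SuiteResult(passed=False, failures=["test_add failed"])


code, session = generate_until_green("add two numbers", stub_generate, stub_run_tests)
```

Capping the number of iterations keeps a persistently failing task from consuming unbounded model calls; returning `None` leaves the escalation decision to the caller.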

Extending to Multi-Domain Orchestration

Beyond coding workflows, similar orchestration principles apply to multi-domain tasks such as data science pipelines, content creation, or customer support automation. For instance, a root agent managing a data science project could coordinate data cleaning, feature engineering, model training, and report generation sub-agents, each specialized and operating under a unified goal.

Setting Thread Caps: Balancing Concurrency and Resource Utilization

Understanding Thread Caps in Multi-Agent Contexts

Thread caps limit the number of simultaneous agent threads running within a session or workspace. This is critical to manage compute resources, prevent API rate limiting, and optimize response latencies.

In AI orchestration, setting an appropriate thread cap is a nuanced balancing act:

- High Thread Caps enable greater parallelism but consume more computational resources and can increase contention or throttling risks. This is analogous to having many cooks in the kitchen—while tasks proceed faster, resource contention or coordination overhead may arise.

- Low Thread Caps conserve resources but may introduce bottlenecks, delaying task completion. This is like having too few workers, leading to underutilization and slower throughput.

Optimizing thread caps requires understanding the workload characteristics, agent response times, and infrastructure constraints.

Determining Optimal Thread Caps for Codex and Claude

Codex and Claude models differ in architecture, latency profiles, and cost structures, influencing thread cap strategies:

| Aspect | Codex | Claude |
|---|---|---|
| Model Specialization | Code generation with strong syntax and language understanding | Conversational AI with enhanced contextual memory and safety features |
| Typical Latency | Moderate (~1-2 seconds per request) | Lower latency (~0.8-1.5 seconds per request) |
| Cost per Token | Higher for large code generation tasks | Optimized for multi-turn dialogues, cost-efficient for iterative prompting |
| Recommended Thread Cap | 4-6 concurrent threads for balanced throughput | 6-8 concurrent threads leveraging efficient context handling |
| Resource Consumption | Higher GPU/memory footprint due to complex code context processing | More efficient memory management allows denser concurrency |
| Use Case Suitability | Batch code generation, refactoring, and complex logic synthesis | Interactive coding assistance and conversational debugging |

Experimentation with thread caps should consider organizational priorities such as speed versus cost, as well as the complexity of the orchestrated tasks. For instance, latency-sensitive applications may prefer higher thread caps at the expense of cost, while budget-constrained projects might prioritize fewer threads.

Implementing Thread Caps in Prompt Engineering

Thread caps can be enforced programmatically or via prompt constraints:

- Programmatic Control: Use orchestration middleware or workflow platforms that limit simultaneous API calls. This can involve queueing systems, rate limiters, or concurrency pools.

- Prompt-Level Constraints: Instruct agents to self-regulate concurrency or queue requests through root agent arbitration. Because a prompt instruction is followed on a best-effort basis rather than enforced, this technique is mainly useful when programmatic controls are unavailable or when decentralized control is desired.

For example, the root agent prompt may include:

Manage a maximum of 5 concurrent sub-agent threads. Queue additional tasks and process them as threads become available. Report the current thread usage status periodically.
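
For comparison, programmatic control of the same limit can be sketched with a semaphore. This is a minimal illustration using Python’s standard `asyncio` library; `run_with_cap` and `fake_sub_agent` are hypothetical names, and the short sleep stands in for a real API call.

```python
import asyncio
from typing import Awaitable, Callable, List


async def run_with_cap(
    tasks: List[Callable[[], Awaitable[str]]], cap: int = 5
) -> List[str]:
    """Run all tasks, allowing at most `cap` of them to be in flight at once."""
    semaphore = asyncio.Semaphore(cap)

    async def guarded(task: Callable[[], Awaitable[str]]) -> str:
        async with semaphore:  # waits here while `cap` tasks are already running
            return await task()

    # gather returns results in the same order as the input tasks.
    return await asyncio.gather(*(guarded(task) for task in tasks))


# Demo bookkeeping to observe the peak number of concurrent sub-agents.
active = 0
peak = 0


async def fake_sub_agent(index: int) -> str:
    global active, peak
    active += 1
    peak = max(peak, active)
    await asyncio.sleep(0.01)  # stand-in for a model request
    active -= 1
    return f"task-{index} done"


results = asyncio.run(
    run_with_cap([lambda i=i: fake_sub_agent(i) for i in range(12)], cap=5)
)
```

Tasks beyond the cap simply wait at the semaphore, which gives the queueing behavior the prompt asks for without relying on the model to count its own threads.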

Monitoring and Dynamic Adjustment of Thread Caps

Thread caps should not be static. Continuous monitoring of system load, response times, and error rates can inform dynamic adjustments. Techniques involve:

- Auto-Scaling: Increasing thread caps during off-peak hours or when latency thresholds are comfortably met. This approach maximizes resource utilization without sacrificing performance.

- Backoff Strategies: Reducing concurrency when error rates or timeouts increase, thereby preventing cascading failures.

- Feedback Loops: Implementing monitoring dashboards and alerting systems to guide operators in tuning thread caps. Real-time analytics can provide insights into bottlenecks and resource saturation.

- Adaptive Load Balancing: Distributing concurrency dynamically across agents based on priority, complexity, or historical performance.
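
One simple way to combine auto-scaling and backoff is an additive-increase, multiplicative-decrease rule, a pattern borrowed from network congestion control rather than anything specific to Codex or Claude. The sketch below assumes an error-rate signal is available from monitoring; the 5% threshold and the bounds of 1 and 8 are placeholder values.

```python
def adjust_cap(
    current_cap: int,
    error_rate: float,
    *,
    min_cap: int = 1,
    max_cap: int = 8,
    error_threshold: float = 0.05,
) -> int:
    """Halve the cap when errors spike; otherwise raise it by one, within bounds."""
    if error_rate > error_threshold:
        return max(min_cap, current_cap // 2)  # backoff
    return min(max_cap, current_cap + 1)  # cautious scale-up


# Simulated monitoring windows: healthy, healthy, error spike, recovery.
cap = 4
observed_caps = []
for window_error_rate in [0.0, 0.01, 0.20, 0.0]:
    cap = adjust_cap(cap, window_error_rate)
    observed_caps.append(cap)
```

Increasing slowly and backing off quickly errs on the side of protecting the upstream API, at the cost of taking several healthy windows to recover full throughput.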

Comparative Analysis: Thread Cap Impact on Throughput and Latency

A concrete example helps in reasoning about the trade-off between concurrency and performance. The table below is an illustrative example, not a measured benchmark, comparing throughput and latency at different thread caps for Codex and Claude:

| Thread Cap | Codex Avg Latency (s) | Codex Throughput (req/sec) | Claude Avg Latency (s) | Claude Throughput (req/sec) |
|---|---|---|---|---|
| 2 | 1.9 | 1.05 | 1.2 | 1.6 |
| 4 | 2.1 | 1.9 | 1.4 | 2.8 |
| 6 | 2.5 | 2.4 | 1.6 | 3.7 |
| 8 | 3.0 | 2.6 | 1.9 | 4.2 |

Note: Higher thread caps improve throughput but increase average latency due to resource contention. Optimal settings depend on specific application requirements.
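
The illustrative figures follow a simple relationship: when every thread is kept busy, throughput is approximately the thread cap divided by average latency (a form of Little’s law). The sketch below recomputes the table under that assumption; the row values are copied from the table above, and `estimated_throughput` is a hypothetical helper name.

```python
def estimated_throughput(thread_cap: int, avg_latency_s: float) -> float:
    """Requests per second if all `thread_cap` threads are continuously busy."""
    return thread_cap / avg_latency_s


# (thread cap, average latency in seconds, throughput listed in the table)
codex_rows = [(2, 1.9, 1.05), (4, 2.1, 1.9), (6, 2.5, 2.4), (8, 3.0, 2.6)]
claude_rows = [(2, 1.2, 1.6), (4, 1.4, 2.8), (6, 1.6, 3.7), (8, 1.9, 4.2)]

# Largest gap between the estimate and the listed figure, per model.
codex_gap = max(
    abs(estimated_throughput(cap, lat) - listed) for cap, lat, listed in codex_rows
)
claude_gap = max(
    abs(estimated_throughput(cap, lat) - listed) for cap, lat, listed in claude_rows
)
```

The same formula shows why returns diminish: doubling the cap only doubles throughput if latency stays flat, and in the table latency rises with every step.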

Leveraging Persistent Goals for Long-Term AI Collaboration

Defining Persistent Goals in AI Workspaces

Persistent goals represent sustained objectives that guide AI agents beyond isolated interactions. Unlike transient instructions, persistent goals maintain continuity across sessions, enabling agents to develop context-aware behaviors that align with broader organizational or project aims.

Examples of persistent goals include:

- Maintaining code quality standards throughout development cycles, ensuring consistency and reliability.

- Continuously improving documentation accuracy as code evolves, promoting maintainability.

- Adhering to security best practices in all generated outputs to mitigate vulnerabilities.

- Ensuring compliance with regulatory requirements such as GDPR or HIPAA in data processing tasks.

- Optimizing for performance and resource efficiency in deployed solutions.

Techniques for Encoding Persistent Goals in Prompts

To embed persistent goals effectively, consider the following approaches:

- Goal Priming: Introduce persistent goals explicitly at the start of every interaction or agent session to keep the objectives top-of-mind.

- Context Injection: Append summaries or status reports from previous sessions to maintain goal continuity and provide historical context.

- Goal Reinforcement: Use periodic reminders and checkpoints to realign agent outputs with persistent goals, especially after error corrections or divergent paths.

- Goal Hierarchies: Define layered goals where high-level strategic aims are decomposed into subordinate objectives assigned to specific agents.

- Meta-Prompting: Incorporate meta-instructions that guide the agent’s reasoning process to prioritize persistent goals over transient requests.

Sample prompt fragment for persistent goal priming:

Your persistent goal is to ensure all generated code adheres to the organization's security guidelines and passes all automated vulnerability scans before submission. Always prioritize security compliance even if other requirements conflict.
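
Goal priming and context injection can be applied mechanically by assembling every prompt from the same parts. The sketch below is one possible layout, not a prescribed format; `build_prompt`, the section labels, and the example goal wording are all assumptions.

```python
from typing import List

# Example goals, paraphrased from the prompt fragment above.
PERSISTENT_GOALS = [
    "All generated code must follow the organization's security guidelines.",
    "Code must pass automated vulnerability scans before submission.",
]


def build_prompt(task: str, goals: List[str], previous_summary: str = "") -> str:
    """Place persistent goals first, then prior context, then the current task."""
    sections = ["Persistent goals (these take priority over the task below):"]
    sections += [f"- {goal}" for goal in goals]
    if previous_summary:
        # Context injection: carry forward a summary of the last session.
        sections += ["", "Summary of previous session:", previous_summary]
    sections += ["", "Current task:", task]
    return "\n".join(sections)


prompt = build_prompt(
    task="Add input validation to the upload handler.",
    goals=PERSISTENT_GOALS,
    previous_summary="Upload handler refactored; file size check still missing.",
)
```

Building the prompt in code rather than by hand means the goals cannot be accidentally dropped from a later session.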

Persistent Goals in Multi-Agent Collaboration

In multi-agent systems, persistent goals serve as the “north star” aligning root and sub-agents. The root agent can enforce persistent goals by:

- Regularly summarizing progress and goal adherence in its prompts, keeping all agents updated.

- Validating sub-agent outputs against persistent criteria before acceptance, functioning as a quality gate.

- Triggering corrective workflows when deviations are detected, such as reassigning tasks or invoking specialized agents for remediation.

- Maintaining a shared knowledge base or goal repository accessible to all agents for consistent reference.

- Engaging in meta-cognition—agents reflecting on their outputs in relation to persistent goals for continuous improvement.

This approach creates a feedback-rich environment where agents self-correct and evolve in alignment with long-term objectives, enhancing sustainability and coherence in AI-driven workflows.
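
The quality-gate idea can be sketched as a set of named checks that every sub-agent output must pass before the root agent accepts it. The two checks below are deliberately simplistic placeholders; a real gate would more likely call linters or security scanners, and `quality_gate` and `GOAL_CHECKS` are hypothetical names.

```python
import re
from typing import Callable, Dict, List

# Each check returns True when the output satisfies the persistent goal.
GOAL_CHECKS: Dict[str, Callable[[str], bool]] = {
    "no hard-coded password": lambda code: re.search(
        r"password\s*=\s*['\"]", code, re.IGNORECASE
    )
    is None,
    "no use of eval": lambda code: "eval(" not in code,
}


def quality_gate(
    output: str, checks: Dict[str, Callable[[str], bool]] = GOAL_CHECKS
) -> List[str]:
    """Return the names of violated goals; an empty list means the output passes."""
    return [name for name, check in checks.items() if not check(output)]


violations = quality_gate('password = "hunter2"\nresult = eval(user_input)')
```

Returning the list of violated goals, rather than a bare pass or fail, gives the root agent the material it needs to trigger a targeted corrective workflow.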

Future Directions: Persistent Goals and Continuous Learning

Looking forward, persistent goals will increasingly interface with continuous learning systems, enabling AI agents to adapt their policies and prompt strategies based on historical performance and evolving requirements. This convergence promises more autonomous, resilient, and context-aware AI orchestration frameworks.

Emerging trends include:

- Reinforcement Learning from Human Feedback (RLHF): Incorporating persistent goals as reward signals guiding agent behavior refinement over time.

- Meta-Learning: Agents learning how to better align with persistent goals by generalizing from prior tasks and adapting to new contexts.

- Dynamic Goal Adjustment: Systems automatically updating persistent goals in response to environmental changes, user feedback, or project evolution.

- Cross-Agent Knowledge Sharing: Facilitating transfer of learned strategies related to persistent goals among agents to accelerate collective learning.

These advancements will enable multi-agent systems to not only maintain consistency but also innovate within the boundaries of long-term objectives, transforming AI collaboration into a truly adaptive and intelligent process.

Practical Implementation Strategies and Best Practices

Designing Modular Prompt Architectures

Decompose complex workflows into modular prompt components assigned to specialized agents. This modularity simplifies maintenance, testing, and iterative improvement. Key strategies include:

- Clear Interface Definitions: Define precise input and output schemas for each agent module to facilitate integration.

- Reusable Prompt Templates: Develop standardized prompt templates that can be parameterized for different tasks or domains.

- Version Control: Track prompt versions and changes to enable rollback and auditability.

- Separation of Concerns: Ensure each agent focuses on a discrete aspect, minimizing overlap and conflict.
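
A reusable, versioned prompt template might look like the following sketch, which relies only on Python’s standard `string.Template`. The `PromptTemplate` class, its fields, and the version string are illustrative assumptions rather than an established convention.

```python
from dataclasses import dataclass
from string import Template


@dataclass(frozen=True)
class PromptTemplate:
    name: str
    version: str  # tracked so prompt changes can be audited and rolled back
    template: Template

    def render(self, **params: str) -> str:
        # substitute() raises KeyError if a placeholder is left unfilled.
        return self.template.substitute(**params)


SPECIALIST = PromptTemplate(
    name="specialist",
    version="1.0.0",
    template=Template(
        "You are a $role agent. Analyze the provided $artifact "
        "and respond as $output_format."
    ),
)

rendered = SPECIALIST.render(
    role="security auditing",
    artifact="code snippet",
    output_format="a JSON array of findings",
)
```

Using `substitute` rather than `safe_substitute` makes a missing parameter fail loudly at render time instead of sending a prompt with a literal `$placeholder` to an agent.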

Maintaining Context Across Agent Interactions

Use memory buffers, context windows, or external databases to preserve state and history, enabling agents to build on prior outputs effectively. Approaches include:

- Session Memory: Retain conversation or task history within agent sessions, refreshed or trimmed based on token limits.

- External Knowledge Stores: Integrate with databases or knowledge graphs that agents can query to retrieve and update information.

- Context Summarization: Periodically summarize long histories to condense information while preserving essential details.

- State Serialization: Serialize and deserialize agent states between interactions to maintain continuity.
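
Session memory trimming can be sketched as keeping the most recent messages that fit a token budget. Note the loud assumption: tokens are approximated here by whitespace-separated words, which is only a rough proxy, and a real system would use the model’s own tokenizer. `trim_history` and `approx_tokens` are hypothetical names.

```python
from typing import List


def approx_tokens(text: str) -> int:
    """Very rough token estimate: count whitespace-separated words."""
    return len(text.split())


def trim_history(messages: List[str], budget: int) -> List[str]:
    """Keep the newest messages whose combined size fits within `budget`."""
    kept: List[str] = []
    used = 0
    for message in reversed(messages):  # walk from newest to oldest
        cost = approx_tokens(message)
        if used + cost > budget:
            break
        kept.append(message)
        used += cost
    return list(reversed(kept))  # restore chronological order


history = ["a b c d", "e f", "g h i"]
trimmed = trim_history(history, budget=5)
```

Messages dropped by trimming are natural candidates for context summarization, so that their essential details survive in condensed form.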

Testing and Validation Frameworks for Multi-Agent Systems

Implement rigorous testing pipelines that simulate multi-agent interactions under various scenarios to identify failure modes and optimize prompt formulations. Components include:

- Unit Tests: Validate individual agent prompt outputs against expected results.

- Integration Tests: Assess coordination among agents, ensuring proper delegation and output synthesis.

- Stress Tests: Evaluate system behavior under high concurrency or load, testing thread cap configurations.

- Red-Teaming: Introduce adversarial inputs or scenarios to uncover vulnerabilities or biases.

- Performance Benchmarks: Measure latency, throughput, and resource utilization to guide optimization.
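
A unit test for a single agent typically replaces the model call with a deterministic stub and checks the output contract. The sketch below uses Python’s standard `unittest`; `stub_syntax_agent` is a hypothetical stand-in that uses the built-in `compile` function instead of a model, so the test exercises the response format rather than model quality.

```python
import json
import unittest


def stub_syntax_agent(code: str) -> str:
    """Deterministic stand-in for a syntax-checking sub-agent."""
    try:
        compile(code, "<snippet>", "exec")
        return "[]"
    except SyntaxError as exc:
        return json.dumps(
            [{"line": exc.lineno, "error": exc.msg, "suggestion": "fix the syntax"}]
        )


class SyntaxAgentContract(unittest.TestCase):
    def test_valid_code_yields_empty_report(self):
        self.assertEqual(json.loads(stub_syntax_agent("x = 1")), [])

    def test_invalid_code_reports_line_number(self):
        report = json.loads(stub_syntax_agent("x = 1\nif x\n    pass"))
        self.assertEqual(report[0]["line"], 2)
        self.assertEqual(set(report[0]), {"line", "error", "suggestion"})


# Run the suite programmatically so the result can be inspected.
suite = unittest.defaultTestLoader.loadTestsFromTestCase(SyntaxAgentContract)
outcome = unittest.TextTestRunner(verbosity=0).run(suite)
```

The same test class can later be pointed at a real agent behind the same function signature, turning the contract test into an integration check.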

Security and Ethical Considerations

Ensure prompt designs include safeguards against generating harmful or biased content by incorporating ethical guidelines and red-teaming exercises into agent workflows. Best practices include:

- Content Filtering: Use filters and validators to detect and block inappropriate or unsafe outputs.

- Bias Mitigation: Regularly audit agent responses for biases and retrain or adjust prompts accordingly.

- Access Controls: Limit sensitive data exposure and enforce authentication for multi-agent orchestration systems.

- Transparency: Maintain logs and explanations of agent decisions to support accountability.

- Human-in-the-Loop: Incorporate human oversight for critical decisions or ambiguous cases.

Integration with DevOps and CI/CD Pipelines

Embed multi-agent orchestration within automated development cycles to enable continuous code generation, testing, and deployment with minimal human intervention. Strategies include:

- Trigger-Based Workflows: Initiate multi-agent processes based on code commits, pull requests, or issue tracking events.

- Automated Feedback Loops: Incorporate agent-generated test results and code reviews into pipeline gating mechanisms.

- Artifact Management: Store generated code, documentation, and reports in artifact repositories for versioning and traceability.

- Monitoring and Alerts: Integrate with observability tools to detect failures or anomalies in multi-agent processes.

- Scalability: Use container orchestration platforms or serverless functions to dynamically manage agent workloads.

Case Study: Multi-Agent Orchestration in Large-Scale Code Refactoring

In a large enterprise software project, maintaining code quality and consistency during refactoring is challenging due to the scale and complexity involved. A multi-agent orchestration approach was implemented with the following agent roles:

- Root Agent: Coordinated the overall refactoring plan and monitored progress.

- Code Analysis Agent: Scanned the codebase to identify technical debt, code smells, and refactoring opportunities.

- Refactoring Agent: Executed automated code transformations based on analysis reports.

- Testing Agent: Ran regression tests and verified functional correctness post-refactoring.

- Documentation Agent: Updated code comments and generated refactoring summaries.

The system leveraged thread caps to balance concurrency with resource constraints, dynamically adjusting based on system load. Persistent goals ensured adherence to coding standards and security policies throughout the process.

Results included:

- 30% reduction in code smells over several iterations.

- Automated regression testing coverage exceeding 95%.

- Significant improvement in developer productivity due to reduced manual intervention.

This case study highlights the practical benefits and challenges of deploying multi-agent prompting in real-world software engineering contexts.

Future Outlook: The Next Frontier in Multi-Agent AI Orchestration

As AI models and orchestration frameworks evolve, several promising directions emerge:

- Cross-Model Collaboration: Integrating diverse LLMs and specialized AI systems (e.g., vision, planning, robotics) into unified multi-agent workflows.

- Decentralized AI Ecosystems: Enabling agents to operate across distributed networks, fostering collaborative intelligence without centralized control.

- Adaptive Agent Architectures: Agents capable of self-reconfiguration, role reassignment, and dynamic teaming based on task demands.

- Human-AI Hybrid Teams: Blending human expertise with AI agents through interactive orchestration platforms, enhancing creativity and decision-making.

- Explainability and Trustworthiness: Developing transparent agent reasoning and audit trails to build user confidence and regulatory compliance.

These trends point toward increasingly sophisticated multi-agent systems that are more autonomous, flexible, and aligned with human values and objectives.

Mastering multi-agent prompts requires a blend of technical acumen, thoughtful design, and iterative experimentation. By understanding the principles detailed in this guide and applying the advanced techniques for root and sub-agent management, thread cap optimization, and persistent goal integration, AI practitioners can unlock unprecedented capabilities in AI-driven coding and automation workflows.

As the field progresses, continuous learning and adaptation will be key to sustaining effective multi-agent orchestration in dynamic environments. Exploring the interplay between AI agents and human collaborators will further enhance the potential of these systems, driving innovation across industries and disciplines.

For those seeking to deepen their expertise, combining the insights from this guide with hands-on experimentation in AI workspaces that support multi-agent configurations is highly recommended. Understanding the trade-offs in thread management and goal persistence can significantly improve solution quality and operational efficiency.
